Last time I talked about how to use AWK (or, more probably, the GNU version known as GAWK) to process text files. You might be thinking: why should I care? Hardware hackers don’t need text files, right? Maybe they do. I want to talk about a few common cases where AWK can process things that are more up the hardware hacker’s alley.

The Simple: Data Logs

If you look around, a lot of data loggers and test instruments do produce text files. If you have a text file from your scope or a program like SIGROK, it is simple to slice and dice it with AWK. Your machines might not always put out nicely formatted text files. That’s what AWK is for.

AWK assumes by default that fields are separated by whitespace and that records end with newlines. However, you can change those assumptions in lots of ways. You can set FS and RS to change the field separator and record separator, respectively. Usually, you’ll set these in the BEGIN action, although you can also change them on the command line.

For example, suppose your test file uses semicolons between fields. No problem. Just set FS to “;” and you are ready to go. Setting FS to a newline will treat the entire line as a single field. Instead of delimited fields, you might also run into fixed-width fields. For a file like that, you can set FIELDWIDTHS.
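
As a rough sketch of the semicolon case, the shell snippet below sets FS in a BEGIN action and pulls out the second field of each record. The wrapper name second_field and the time;volts sample data are invented for illustration.

```shell
# second_field: print field 2 of semicolon-separated records read from stdin
# (the function name and the sample log below are hypothetical)
second_field() {
    awk 'BEGIN { FS = ";" }   # fields are separated by semicolons
         { print $2 }'
}

# Invented sample log with time;volts on each line
printf '0.0;1.20\n0.1;1.25\n0.2;1.31\n' | second_field
```

The same separator can be given on the command line instead, as in awk -F';' '{ print $2 }'.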

If the records aren’t delimited but have a fixed length, things are a bit trickier. Some people use the Linux utility dd to break the file apart into lines by the number of bytes in each record. With GAWK, you can also set RS to a regular expression that matches a fixed number of arbitrary characters and then use the RT variable (see below) to find out what those characters were. There are other options and even ways to read multiple lines. The GAWK manual is your friend if you have these cases.

    BEGIN { RS = ".{10}" }   # records are 10 characters
    { $0 = RT }              # RT holds the text that matched RS
    { print $0 }             # do what you want here

Once you have records and fields sorted, it is easy to do things like average values, detect values that are out of limit, and just about anything else you can think of.
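
For instance, averaging a column and flagging out-of-limit readings takes only a few lines. This is a minimal sketch: the summarize wrapper, the two-column layout, the 3.3 limit, and the sample readings are all assumptions made up for the example.

```shell
# summarize LIMIT: read "time value" lines from stdin, flag values above LIMIT,
# and print the average (function name and data layout are hypothetical)
summarize() {
    awk -v limit="$1" '
        # Adding 0 forces a numeric comparison
        $2 + 0 > limit + 0 { printf "line %d: %s exceeds %s\n", NR, $2, limit }
        { sum += $2; n++ }
        END { if (n > 0) printf "average = %.3f over %d samples\n", sum / n, n }
    '
}

# Invented readings; the third one is over the limit
printf '0.0 1.20\n0.1 1.30\n0.2 3.90\n0.3 1.20\n' | summarize 3.3
```

With the sample data, this should flag line 3 and report an average of 1.900 over 4 samples.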

Spreadsheet Data Logs

Some tools output spreadsheets. AWK isn’t great at handling spreadsheets directly. However, a spreadsheet can be saved as a CSV file and then AWK can chew it up easily. CSV is also an easy format to produce from an AWK script so that you can read the result into a spreadsheet. You can then easily produce nice graphs, if you don’t want to use GNUPlot.
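
Going the other way, producing CSV from whitespace-separated data is mostly a matter of setting the output field separator. Here is a minimal sketch with a made-up wrapper name and sample data. It does no quoting, so it assumes the fields contain no commas.

```shell
# to_csv: turn whitespace-separated records on stdin into comma-separated ones
# (hypothetical helper; no quoting, so fields must not contain commas)
to_csv() {
    awk 'BEGIN { OFS = "," }
         { $1 = $1; print }'   # assigning to a field rebuilds $0 using OFS
}

# Invented sample data
printf '0.0 1.20\n0.1 1.30\n' | to_csv
```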

Simplistically, setting FS to a comma will do the job. If all you have is numbers, this is probably enough. If you have strings, though, some programs put quotes around strings (that may contain commas or spaces). Some only put quotes around strings that have commas in them.

To work around this problem cleanly, GAWK offers an alternate way to define fields. Normally, FS tells AWK what characters separate fields. However, you can set FPAT to define what a field looks like instead. In the case of a CSV file, a field is either a run of characters other than commas, or a double quote followed by anything up to the next double quote.

The manual has a good example:

    BEGIN {
        FPAT = "([^,]+)|(\"[^\"]+\")"
    }

    {
        print "NF = ", NF
        for (i = 1; i <= NF; i++) {
            printf("$%d = <%s>\n", i, $i)
        }
    }

This isn’t perfect. For example, escaped quotes don’t work right. Quoted text with newlines in it doesn’t either. The manual has some changes that remove quotes and handle empty fields, but the example above works for most common cases. Often the easiest approach is to change the delimiter in the source program to something unique, instead of a comma.

Hex Files

Another text file common in hardware circles is a hex file. That is a text file that represents the hex contents of a programmable memory (perhaps embedded in a microcontroller). There are two common formats: Intel hex files and Motorola S records. AWK can handle both, but we’ll focus on the Intel variant.

Old versions of AWK didn’t work well with hex input, so you’d have to resort to building arrays to convert hex digits to numbers. You still see that sometimes in old code or code that strives to be compatible. However, GNU AWK has the strtonum function that explicitly converts a string to a number and understands the 0x prefix. So a highly compatible two-digit hex function looks like this (not including the code to initialize the hexdigit array):

    function hex2dec(x) {
        return (hexdigit[substr(x,1,1)]*16)+hexdigit[substr(x,2,1)]
    }

If you don’t mind requiring GAWK, it can look like this:

    function hex2dec(x) {
        return strtonum("0x" x)
    }

In fact, the last function is a little better (and misnamed) because it can handle any hex number regardless of length (up to whatever limit is in GAWK).
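
For the compatible version, the hexdigit array has to be filled in before the function is used. One way to do that is sketched below. The table-building loop, the hex_bytes wrapper, and the sample input are illustrative assumptions, not code from the original script.

```shell
# hex_bytes: convert two-digit hex values (one per line on stdin) to decimal
# using a lookup table instead of strtonum (hypothetical helper)
hex_bytes() {
    awk '
        BEGIN {
            # Build the lookup table: hexdigit["0"] = 0 ... hexdigit["F"] = 15
            digits = "0123456789ABCDEF"
            for (i = 0; i < 16; i++) {
                c = substr(digits, i + 1, 1)
                hexdigit[c] = i
                hexdigit[tolower(c)] = i   # accept lowercase digits too
            }
        }
        function hex2dec(x) {
            return (hexdigit[substr(x, 1, 1)] * 16) + hexdigit[substr(x, 2, 1)]
        }
        { print hex2dec($1) }
    '
}

# Invented sample input
printf 'FF\n1a\n00\n' | hex_bytes
```

With the sample input this should print 255, 26, and 0, one per line.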

Hex output is simple since you have printf and the X format specifier is available. Below is an AWK script that chews through a hex file and reports a byte count for the entire file, plus a breakdown of the segments (that is, non-contiguous memory regions).

    BEGIN {
        ct = 0        # total number of data bytes
        adxpt = ""    # next expected address; empty until the first record
    }

    # Convert a hex string to a number (strtonum requires GAWK)
    function hex4dec(y) { return strtonum("0x" y) }
    function hex2dec(x) { return strtonum("0x" x) }

    # Data records only: colon, 2-digit length, 4-digit address, record type 00
    /:[[:xdigit:]][[:xdigit:]][[:xdigit:]][[:xdigit:]][[:xdigit:]][[:xdigit:]]00/ {
        ad = hex4dec(substr($0, 4, 4))
        if (ad != adxpt) {              # address jumped, so start a new block
            block[++n] = ad
            adxpt = ad
        }
        l = hex2dec(substr($0, 2, 2))   # byte count of this record
        blockct[n] = blockct[n] + l
        adxpt = adxpt + l
        ct = ct + l
    }

    END {
        printf("Count=%d (0x%04x) bytes\t%d (0x%04x) words\n\n", ct, ct, ct/2, ct/2)
        for (i = 1; i <= n; i++) {
            printf("%04x: %d (0x%x) bytes\t", block[i], blockct[i], blockct[i])
            printf("%d (0x%x) words\n", blockct[i]/2, blockct[i]/2)
        }
    }

This shows a few AWK features: the BEGIN action, user-defined functions, the use of named character classes ([:xdigit:] matches a hex digit) and arrays (block and blockct use numeric indices even though they don’t have to). In the END action, the summary uses printf statements for both decimal and hex output.

Once you can parse a file like this, there are many things you could do with the resulting data. Here’s an example of some similar code that does a sanity check on hex files.
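
One plausible sanity check (not necessarily the one the code mentioned above performs) is verifying record checksums: in an Intel hex record, all the bytes after the colon, including the final checksum byte, should sum to zero modulo 256. The sketch below assumes well-formed hex digits, and the hexcheck name and sample records are invented for illustration. It avoids strtonum, so it should not need GAWK.

```shell
# hexcheck: report Intel hex records on stdin whose checksum does not add up
# (hypothetical helper; assumes every character after the colon is a hex digit)
hexcheck() {
    awk '
        # Portable two-digit hex conversion (no strtonum needed)
        function hex2dec(x,    hi, lo) {
            x = toupper(x)
            hi = index("0123456789ABCDEF", substr(x, 1, 1)) - 1
            lo = index("0123456789ABCDEF", substr(x, 2, 1)) - 1
            return hi * 16 + lo
        }
        /^:/ {
            sum = 0
            # Walk the byte pairs after the colon:
            # length, address, record type, data, checksum
            for (i = 2; i < length($0); i += 2)
                sum += hex2dec(substr($0, i, 2))
            if (sum % 256 != 0) {
                printf "line %d: bad checksum\n", NR
                bad++
            }
        }
        END { printf "%d bad record(s)\n", bad + 0 }
    '
}

# Invented records: the second one has a deliberately wrong checksum byte
printf ':0400100001020304E2\n:0400100001020304E3\n:00000001FF\n' | hexcheck
```

With the sample records, only line 2 should be reported. A more careful version would also reject characters that are not hex digits and compare each record's length byte against the actual number of data bytes.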

Binary Files

Text files are fine, but real hardware uses binary files that people (and AWK) can’t easily read, right? Well, maybe people, but AWK can read binary files in a few ways. You can use getline in the BEGIN part of the script and control how things are read directly. You can also use the RS/RT trick mentioned above to read a specific number of bytes. There are a few other AWK-only methods you can read about if you are interested.

However, the easiest way to deal with binary files in AWK is to convert them to text files using something like the od utility. This is a program available with Linux (or Cygwin, and probably other Windows toolkits) that converts a binary file to different readable formats. You probably want hex bytes, so that’s the -t x1 option (or use x2 for 16-bit words). However, the output is made for humans, not machines, so when a long run of identical lines occurs, od omits them and replaces all the missing lines with a single asterisk. For AWK use, you want the -v option to turn that behavior off. There are other options to change the output radix of the address, swap bytes, and more.

Here are a few lines from a random binary file:

    0000000 d8ff e0ff 1000 464a 4649 0100 0001 0100
    0000020 0100 0000 dbff 4300 5900 433d 434e 5938
    0000040 484e 644e 595e 8569 90de 7a85 857a c2ff
    0000060 a1cd ffde ffff ffff ffff ffff ffff ffff
    0000100 ffff ffff ffff ffff ffff ffff ffff ffff
    0000120 ffff ffff ffff ffff ffff 00db 0143 645e
    0000140 8564 8575 90ff ff90 ffff ffff ffff ffff
    0000160 ffff ffff ffff ffff ffff ffff ffff ffff
    0000200 ffff ffff ffff ffff ffff ffff ffff ffff
    0000220 ffff ffff ffff ffff ffff ffff ffff c0ff
    0000240 1100 0108 02e0 0380 2201 0200 0111 1103
    0000260 ff01 00c4 001f 0100 0105 0101 0101 0001
    0000300 0000 0000 0000 0100 0302 0504 0706 0908
    0000320 0b0a c4ff b500 0010 0102 0303 0402 0503
    0000340 0405 0004 0100 017d 0302 0400 0511 2112
    0000360 4131 1306 6151 2207 1471 8132 a191 2308

This is dead simple to parse with AWK. The address will be $1 and the data fields will be $2, $3, etc. You can just convert the file yourself, use a pipe in the shell, or, if you want a clean solution, have AWK run od as a subprocess. Since the input is text, all of AWK’s regular expression features still work, which is useful.
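
Here is a minimal sketch of the shell-pipe approach: od turns the file into hex bytes and AWK counts how many of them are ff. The count_ff name and the three-byte demo file are made up for the example, and -A x simply selects hex addresses.

```shell
# count_ff FILE: count the 0xff bytes in a binary file (hypothetical helper)
count_ff() {
    od -v -A x -t x1 "$1" |
    awk '
        # $1 is the address, so the data bytes start at field 2
        { for (i = 2; i <= NF; i++) { total++; if ($i == "ff") ff++ } }
        END { printf "%d of %d bytes are 0xff\n", ff + 0, total + 0 }
    '
}

# Demo on three invented bytes: ff ff 01
tmp=$(mktemp)
printf '\377\377\001' > "$tmp"
count_ff "$tmp"
rm -f "$tmp"
```

The last line od prints holds only the final offset, so it has no data fields and the loop skips it.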

Writing binary files is easy, too, since printf can output nearly anything. An alternative is to use xxd instead of od. It can convert binary files to text, but also can do the reverse.

Full Languages

There’s an old saying that if all you have is a hammer, everything looks like a nail. I doubt that AWK is the best tool to build full languages, but it can be a component of some quick and dirty hacks. For example, the universal cross assembler uses AWK to transform assembly language files into an internal format the C preprocessor can handle.

Since AWK can call out to external programs easily, it would be possible to write things that, for example, processed a text file of commands and used them to drive a robot arm. The regular expression matching makes text processing easy and external programs could actually handle the hardware interface.

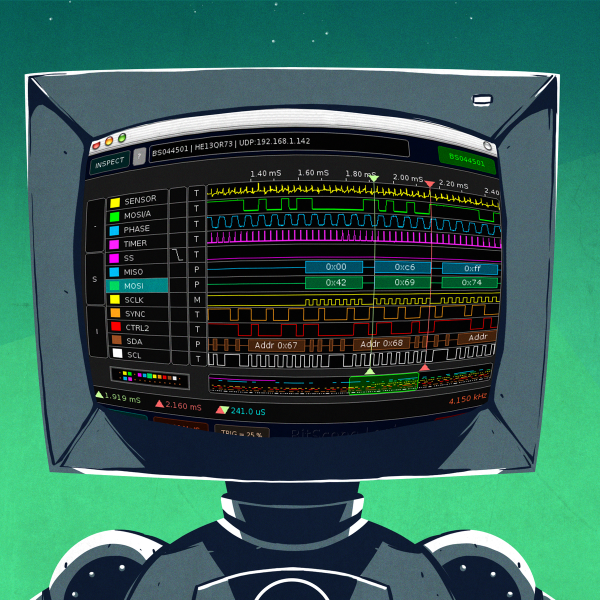

Think that’s far-fetched? We’ve covered stranger AWK use cases, including a Wolfenstein-like game that uses 600 lines of AWK script (as seen to the right).

So, sure, AWK is software, but it has that Swiss Army knife quality that makes it useful for software and hardware hackers alike. Of course, other tools like Perl, Python, and even C or C++ can do more, but often at a price in complexity and learning curve. AWK isn’t for every job, but when it works, it works well.

Ohh Awk… how I love thee. Many hours of writing awk code during my current and past sysadmin jobs. Thanks for the great post (rarely have to play with hex with awk but some of this could have come in handy back when)

Thank you for writing about such a great and powerful free tool like AWK.

As a personal choice, for data logging, charts and post-processing, any free spreadsheet program (e.g. Open Office Calc) will do a great job, and it will be way much easier to work with. But when hardware resources are very low, or batch data processing is required, then AWK will become the preferred one.

For hex I/O, I like ‘xxd’. It shows the printable characters on the right side, and has some fun options for writing files from hex input.

hexdump and od can do the characters too.

Try od -t x1z

Or just try hexdump -C

Of course, xxd can go both ways which is interesting.

This documentation rocks!

Thank you. I always worked with C but now I’ll give awk a try.

Awk is awksome. There’s not a lot you can’t do with bash and awk.

I couldn’t count the number of times awk comes to the rescue when having to parse huge unreadable logs. Anything long or wide and Excel generally baulks – and when you want to run your search and rerun it and rerun it the linux command line tools always win hands down.

awk and its supporting cast (tr, od, egrep and sed) are the first tools I turn to for most tasks, and only when (rarely) the problem is intractable to them do I consider reaching for gcc. My usual MO is to use these tools to get the output cut down, standardised and formatted in a more digestible pattern, and then if I really need to see something graphically I throw it out as CSV and do the pretty charts in Excel.

What amazes folks from PC backgrounds I find is when you process gigabytes of logs in a few seconds and give them the answer – they just can’t understand how the linux family of line based processing tools (all piped for parallel processing too of course) can chomp data in a few seconds when they have usually come to ask for help when Excel (64 bit Excel too mind) finally crashed after 40 minutes of egg timer and disk trashing trying to do the same thing.

Always fun is fitting the entire awk script onto the single command line along with whatever other commands are in the pipeline and telling them you did it with a single command :-)

Gawk has great performance, often beating out the alternatives of the day. It also easily lends itself to parallel computing because you can combine multiple awk invocations using pipes. That is even true with old awk scripts.

For further performance, check out awka. It will convert your awk to C, that is then compiled into a binary.

http://awka.sourceforge.net/index.html

You can find what delimiter to use for producing a CSV file by running your data through the following filter.

cat mydata.txt | fold -w1 | sort -u

This will list the unique characters in the file, then just use a character that is not in that list.