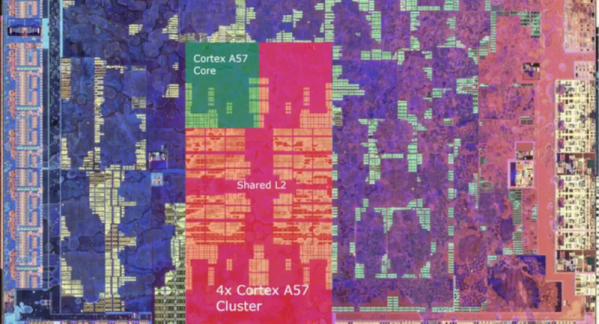

Ever wonder what’s inside a Nintendo Switch? The main chip is an Nvidia Tegra X1, and if you peel back a layer, you’ll find four ARM CPU cores inside: specifically Cortex A57 cores, each of which takes up about two square millimeters of space on the die. The whole cluster, including some cache memory, takes up just over 13 square millimeters. [ClamChowder] takes us on a tour of the Switch’s Cortex A57 in a recent post.

Interestingly, the X1 also has four A53 cores, which are more power efficient, but according to the post, Nintendo doesn’t use them. The 4 GB of DRAM is LPDDR4 memory with a theoretical bandwidth of 25.6 GB/s.
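If you are wondering where a number like 25.6 GB/s comes from, peak memory bandwidth is just the transfer rate multiplied by the bus width. Here is a quick sketch of that arithmetic. The 64-bit bus and the 3200 MT/s transfer rate are our assumptions, not figures quoted above, and the function name is made up for illustration.

```python
def peak_bandwidth_gb_s(transfers_per_second: float, bus_width_bits: int) -> float:
    """Theoretical peak bandwidth in GB/s, taking 1 GB as 1e9 bytes."""
    bytes_per_transfer = bus_width_bits / 8
    return transfers_per_second * bytes_per_transfer / 1e9


# Assumed configuration (not stated in the text): LPDDR4 running at
# 3200 MT/s on a 64-bit wide memory bus.
switch_bw = peak_bandwidth_gb_s(3200e6, 64)
print(f"{switch_bw:.1f} GB/s")
```

Under those assumptions, the result matches the 25.6 GB/s quoted. Keep in mind this is a ceiling; real workloads will see less.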

The post details the out-of-order execution and branch prediction used to improve performance. We can’t help but marvel that in our lifetime, we’ve seen computers go from giant, expensive machines to the point where a game console has eight CPU cores (counting the four idle A53s) and advanced features like out-of-order execution. Still, [ClamChowder] makes the point that the Switch’s processor is anemic by today’s standards, and can’t even compare with an outdated desktop CPU.

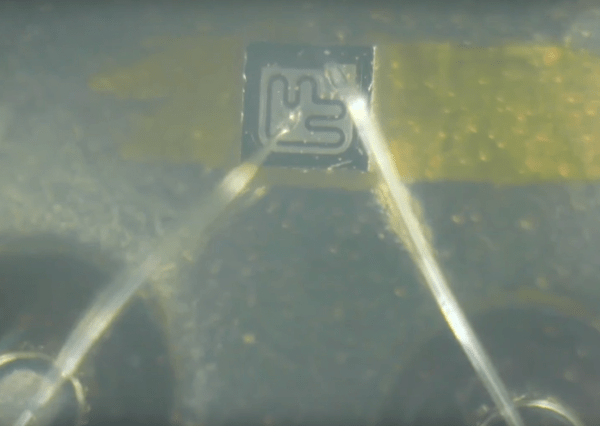

Want to program the ARM in assembly language? We can help you get started. You can even do it on a breadboard, though the LPC1114 is a pretty far cry from what even the Switch is packing under the hood.