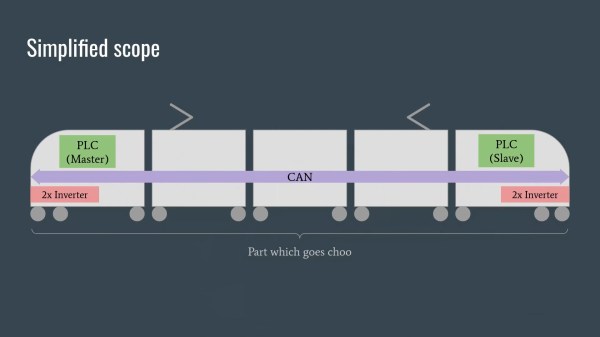

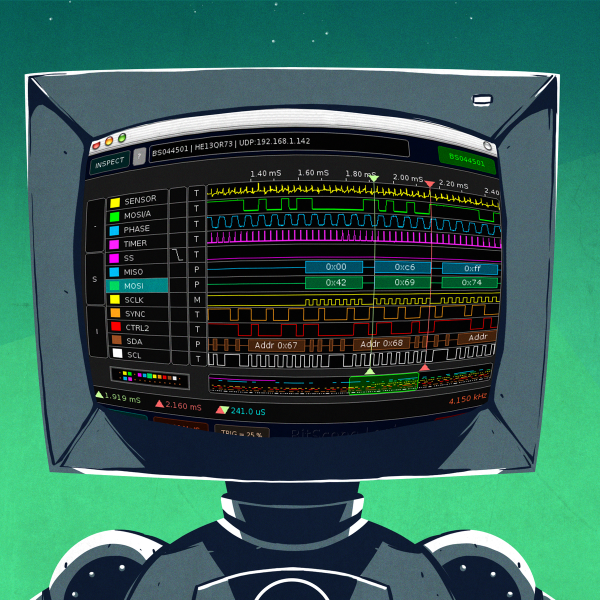

The first clue was that a number of locomotives started malfunctioning with exactly 1,000,000 km on the odometer. And when the company with the contract for servicing them couldn’t figure out why, they typed “Polish hackers” into a search engine, and found our heroes [Redford], [q3k], and [MrTick]. What follows is a story of industrial skullduggery, CAN bus sniffing, obscure reverse engineering, and heavy rolling stock, and a fantastically entertaining talk.

Cutting straight to the punchline, the manufacturer of the engines in question apparently also makes a lot of money on the service contracts, and included logic bombs in the firmware that would ensure that revenue stream while thwarting independent repair shops. They also included “cheat codes” that simply unlocked the conditions, which the Polish hackers uncovered as well. Perhaps the most blatant evidence of malfeasance, though, was that there were actually checks in some versions of the firmware that geofenced out the competitors’ repair shops.

We shouldn’t spoil too much more of the talk, and there’s active investigation and legal action pending, but the smoking guns are incredibly smoky. The theme of this year’s Chaos Communication Congress is “Unlocked”, and you couldn’t ask for a better demonstration of why it’s absolutely in the public interest that hackers gotta hack. Of course, [Daniel Lange] and [Felix Domke]’s reverse engineering of the VW Dieselgate ECU shenanigans, another all-time favorite, also comes to mind.