Over the last few years, we’ve all been given a valuable lesson in both the promise and limitations of advanced molecular biology methods for clinical diagnostics. Polymerase chain reaction (PCR) was held up as the “gold standard” of COVID-19 testing, but the cost, complexity, and need for advanced instrumentation and operators with specialized training made PCR difficult to scale to the levels demanded by a pandemic.

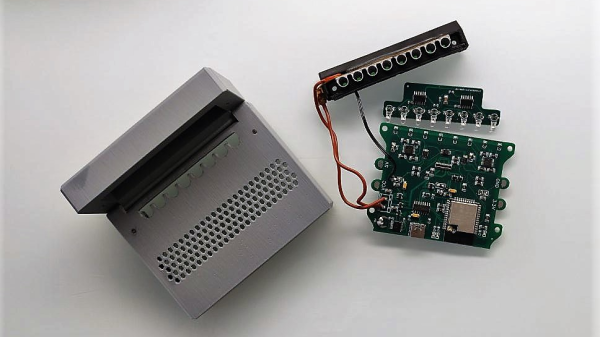

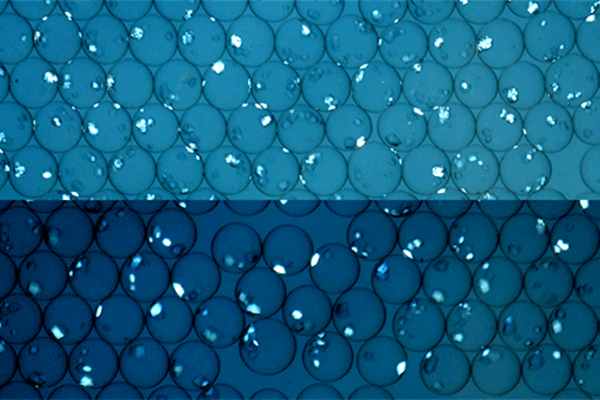

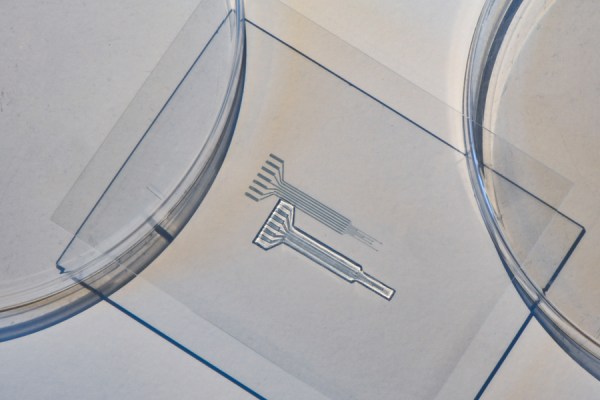

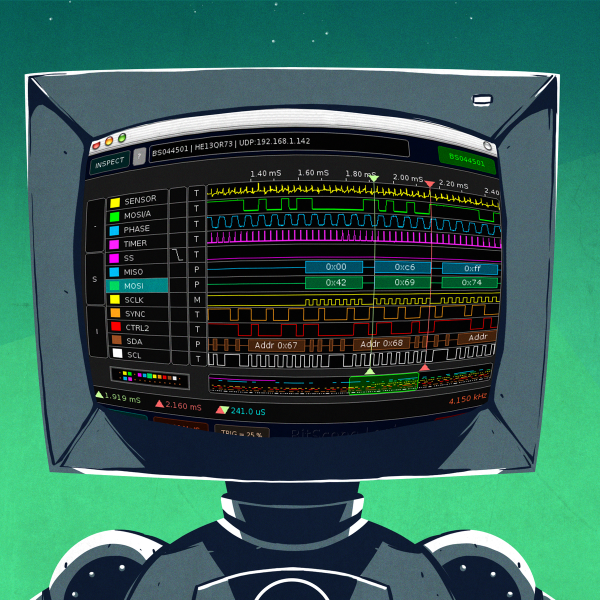

There are other diagnostic methods, of course, some of which don’t have all the baggage of PCR. RT-LAMP, or reverse transcription loop-mediated isothermal amplification, is one method with a lot of promise, especially when it can be done on a cheap open-source instrument like qLAMP. For about 50€, qLAMP makes amplification and detection of nucleic acids, like the RNA genome of the SARS-CoV-2 virus, a benchtop operation that can be performed by anyone. LAMP is an isothermal process; the whole reaction runs at a single temperature, meaning that no bulky thermal cycler is required. Detection is via the fluorescent dye SYTO 9, which intercalates between the base pairs of the amplified DNA strands, using a 470-nm LED for excitation and a photodiode with a filter to detect the emission. Heating is provided by a PCB heater and a 3D-printed aluminum block that holds tubes for eight separate reactions. Everything lives in a 3D-printed case, including the ESP32 which takes care of all the housekeeping and data analysis duties.
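To give a feel for what the "data analysis duties" on a real-time fluorescence instrument can look like, here's a minimal sketch of one common approach: take the first few readings as a baseline, set a threshold above it, and report the time at which the signal crosses that threshold. This is not the qLAMP firmware. The function name `time_to_threshold`, its parameters, and the arbitrary fluorescence units are all assumptions made for illustration.

```python
from typing import Optional, Sequence


def time_to_threshold(
    times_min: Sequence[float],
    fluorescence: Sequence[float],
    baseline_points: int = 5,
    n_sigma: float = 10.0,
    min_rise: float = 50.0,
) -> Optional[float]:
    """Estimate when a fluorescence curve first crosses a baseline-derived threshold.

    Hypothetical helper: the threshold is the baseline mean plus the larger of
    n_sigma baseline standard deviations or min_rise (arbitrary units).
    Returns the linearly interpolated crossing time, or None if the signal
    never crosses (i.e. no amplification detected).
    """
    if len(times_min) != len(fluorescence):
        raise ValueError("times_min and fluorescence must have the same length")
    if baseline_points < 1 or len(fluorescence) <= baseline_points:
        raise ValueError("need more readings than baseline points")
    if n_sigma < 0 or min_rise <= 0:
        raise ValueError("n_sigma must be >= 0 and min_rise must be > 0")

    # Baseline statistics from the first few readings, before amplification starts.
    baseline = fluorescence[:baseline_points]
    mean = sum(baseline) / baseline_points
    variance = sum((x - mean) ** 2 for x in baseline) / baseline_points
    threshold = mean + max(n_sigma * variance ** 0.5, min_rise)

    # Scan the remaining readings for the first one at or above the threshold.
    for i in range(baseline_points, len(fluorescence)):
        if fluorescence[i] >= threshold:
            prev_f, prev_t = fluorescence[i - 1], times_min[i - 1]
            # Interpolate between the last sub-threshold point and this one.
            frac = (threshold - prev_f) / (fluorescence[i] - prev_f)
            return prev_t + frac * (times_min[i] - prev_t)
    return None


if __name__ == "__main__":
    # Made-up readings, one per minute, purely to exercise the function.
    t = [0, 1, 2, 3, 4, 5, 6, 7]
    f = [100, 100, 100, 100, 100, 100, 120, 200]
    print(time_to_threshold(t, f))
```

A shorter time to threshold generally means more starting template, which is what makes a real-time readout more quantitative than a simple yes/no color change. A real instrument would likely also need drift correction and per-channel calibration, which this sketch ignores.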

With the proper test kits, which cost just a couple of bucks each, qLAMP would be useful for diagnosing a wide range of diseases, even under less-than-ideal conditions. It could also be a boon to biohackers, who could use it for their own citizen science efforts. We saw a LAMP setup at the height of the pandemic that used the Mark 1 eyeball as a detector; this one is far more quantitative.