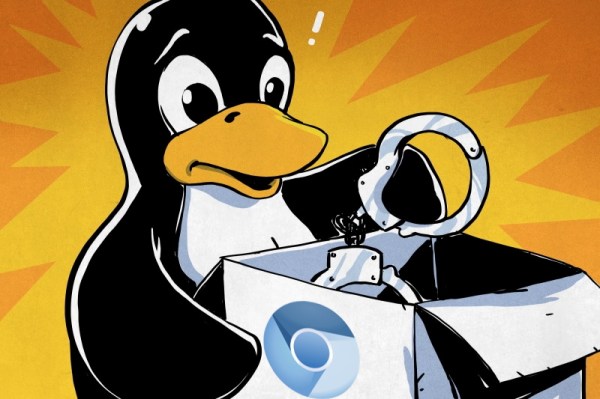

Everyone makes mistakes in their job, but very few of us get the chance to make a one-character mistake with the potential to brick millions of devices. But that’s what happened to a hapless Google developer, who made an understandable typo in the ChromeOS code that ended up making it all the way to production. The line in question, part of the OS’s encryption key vault code, was supposed to use the “&&” operator for a logical AND. The developer instead used a single ampersand, which broke the whole conditional statement. This meant the OS evaluated even correct passwords as invalid, leaving users locked out of their Chromebooks. To be fair to the developer, there should be a lot of QA steps between that typo and production, but it still has to sting.
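
For anyone wondering how one missing ampersand can flip a check like that, here’s a minimal C++ sketch. To be clear, this is not the ChromeOS code; the function names `check_logical` and `check_bitwise` and their parameters are made up purely for illustration, and the actual bug may have played out differently.

```cpp
// Illustrative sketch only: these helpers are hypothetical and are NOT the ChromeOS code.
// Both take ints, where any nonzero value counts as "true".

// Intended check: logical AND, true when both operands are nonzero.
bool check_logical(int has_key, int password_ok) {
    return has_key && password_ok;
}

// Typo'd check: bitwise AND, true only when the operands share a set bit.
bool check_bitwise(int has_key, int password_ok) {
    return (has_key & password_ok) != 0;
}
```

Call both with `has_key = 2` and `password_ok = 1` and the logical version returns true, while the bitwise version returns false because `2 & 1` is zero. The single ampersand also skips short-circuit evaluation, so the right-hand side always runs even when the left-hand side is already false.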

Speaking of whoopsies, sometimes it just doesn’t pay to be right on the internet. It started when a player of the popular tank battle simulator “War Thunder” took issue with the in-game 3D model of the British Challenger 2 main battle tank. The player argued that the model was inaccurate to the point of affecting gameplay, and thought the model should be changed to make things more realistic. There seemed to be some basis for this, as the player claimed to have been a Challenger 2 commander and gunnery instructor. What’s more, like any good Netizen, the player cited sources to back up the claims, including excerpts from the official Challenger 2 instruction manual. Players on the War Thunder forum flagged this as likely classified material, but the player insisted that it wasn’t, right up to the point where the UK Ministry of Defence said, “Not so fast.” It turns out that the manual hasn’t been declassified, and that releasing the material potentially runs afoul of the Official Secrets Act, which carries with it up to 14 years’ detention at Her Majesty’s pleasure.

For fans of pinball, the announcement that the Museum of Pinball in Banning, California is closing its doors for good is probably a mix of good news and bad. It’s obviously bad news for any museum to close, especially one that curates collections from popular culture. And there’s no denying that pinball has been a big part of that culture, and that the machines themselves are often works of electromechanical art. Unfortunately, it appears that the museum just couldn’t make a go of it, and now its cavernous space will be sold off to a cannabis grower. But the sad news is tempered by the potential for private collectors and other pinball aficionados to score one of the estimated 1,100 pins the museum now needs to find a home for. We’ve never been to the museum, so it’s hard to say what kinds of machines they have and how collectible they are, but regardless, the market is about to be flooded. If you’re nearby, you might want to take the chance to see and play some of these machines one last time, before they get shipped off to private game rooms around the world.

And finally, exciting news from Hackaday superfriend Fran Blanche, who will soon tick an item off her bucket list with a zero-G ride on “G-Force 1”. Not to be confused with its military cousin the “Vomit Comet”, the weightlessness-simulating aircraft will afford Fran a total of about five minutes of free-fall when she takes the ride in a couple of months. There will also be periods of the flight that will simulate the gravity on both the Moon and Mars, so Fran has promised some Matt Damon mythbusting and Buzz Aldrin moonbouncing. And always one to share, Fran will bring along a professional video crew, so she can concentrate on the experience rather than filming it. We’ve actually scheduled Fran for a Hack Chat in August, to talk about the flight and some of her other cool goings-on, so watch out for that.