Minecraft seems to be a game in which anything is possible, both in the virtual world and in the real one. Because it is a sandbox-style game, we’ve seen all kinds of things implemented in it, including arithmetic logic units and microcontroller emulators. At the other end of the spectrum, we’ve also seen plenty of projects in which real-world interfaces affect the virtual world in some way. The line between these two worlds often seems to blur, and that’s no different for this project, which allows control of a smart home from within the game itself.

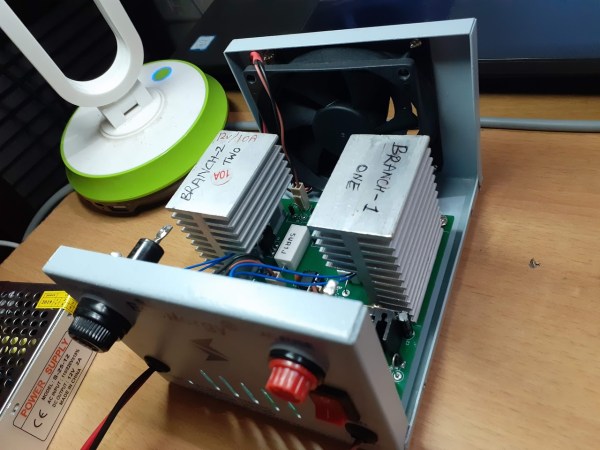

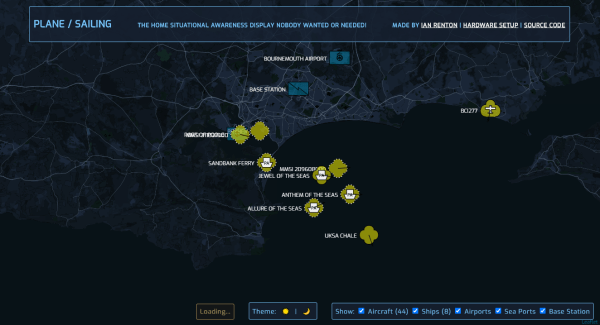

The project is called HomeAssistantMC and is built with Forge. The mod hooks directly into a Minecraft game. From within the game, players can create a model of their home, complete with light switches and other control interfaces. Changes to these in-game devices are picked up and relayed over a WebSocket API to the real-world controllers that operate the home. The mod uses special state blocks to handle the control, and the entire control system can be configured in-game once all of the appropriate software has been installed.
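To give a rough idea of what travels over that WebSocket link, here is a minimal sketch in Python. It assumes the backend is Home Assistant, as the project name suggests, and follows the message shapes of Home Assistant's WebSocket API (`auth` and `call_service`). The helper names and the entity ID are hypothetical, and none of this is taken from the mod's own code.

```python
import json
from itertools import count

# Home Assistant expects every command after authentication to carry
# a unique, increasing integer id.
_next_id = count(1)


def auth_message(access_token):
    """Build the message sent in reply to the server's 'auth_required'."""
    return json.dumps({"type": "auth", "access_token": access_token})


def switch_message(entity_id, powered):
    """Build a call_service message for an in-game switch being toggled.

    entity_id is a Home Assistant entity such as 'light.living_room'
    (a made-up example); powered is the state of the in-game block.
    """
    # The service domain is the part of the entity id before the dot.
    domain = entity_id.split(".", 1)[0]
    return json.dumps({
        "id": next(_next_id),
        "type": "call_service",
        "domain": domain,
        "service": "turn_on" if powered else "turn_off",
        "target": {"entity_id": entity_id},
    })


if __name__ == "__main__":
    # Flipping a lever in the game would translate into something like this.
    print(switch_message("light.living_room", True))
```

The sketch only builds the JSON payloads; actually sending them would need a WebSocket client and a long-lived access token from the Home Assistant instance.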

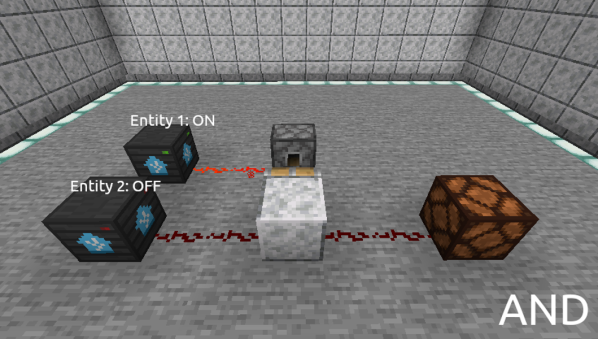

For anyone willing to experiment with this software, all of the code for this project is available on its GitHub page. Another interesting aspect of the project is the ability to use other Minecraft creations for home automation. For example, building logic gates in the game allows for nuanced control of the home automation setup, using the kinds of creations we’ve already seen in Minecraft. And, if you really want to go deep into the weeds, you could even build a complete 6502 processor within the game.
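As an illustration of the logic-gate idea, the snippet below models in Python what a small in-game circuit might compute before it drives a light. The inputs (a daylight sensor, a motion lever, and a manual override lever) and the function names are invented for this example and do not come from the project.

```python
def and_gate(a, b):
    """Output is on only when both inputs are on."""
    return a and b


def or_gate(a, b):
    """Output is on when at least one input is on."""
    return a or b


def porch_light_service(is_dark, motion_lever, override_lever):
    """Decide which service a hypothetical porch light should receive.

    The light turns on when it is dark AND motion is signalled,
    OR whenever the manual override lever is pulled.
    """
    wants_light = or_gate(and_gate(is_dark, motion_lever), override_lever)
    return "turn_on" if wants_light else "turn_off"


if __name__ == "__main__":
    # Walk through every input combination, like testing a redstone build.
    for is_dark in (False, True):
        for motion in (False, True):
            for override in (False, True):
                service = porch_light_service(is_dark, motion, override)
                print(is_dark, motion, override, service)
```

In the game, the same behavior would be wired up from blocks rather than written as code, with the circuit's output feeding whichever block controls the real light.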