Although we see a lot of MATLAB use in industry and academia, it isn't as popular in the hacker community, probably because of the cost. If you've ever wondered why companies will pay over $2000 for the base product, you might enjoy this recorded webinar on using MATLAB and Simulink (a companion product) to program both the ARM processor and the FPGA fabric of the Zynq on a ZedBoard. Put off by the price? If you aren't using it for commercial purposes, it isn't as bad as you think.

MathWorks is one of those companies that markets by practically giving products away to students, hoping they'll adopt the same tools once they land jobs in industry. Its flagship product, MATLAB, is well entrenched in the labs and offices of big corporations. We've often thought that MATLAB is sort of what FORTRAN would look like if it had been developed in the last 20 years instead of 60 years ago. It's true that a base commercial license for MATLAB costs over $2000. However, if you aren't using it for commercial purposes and can't score a student license, you can get a personal license of MATLAB for about $150, and the add-on modules are similarly discounted. If you are a student, the price drops to about $100, although many schools have licenses that students can use at no cost.

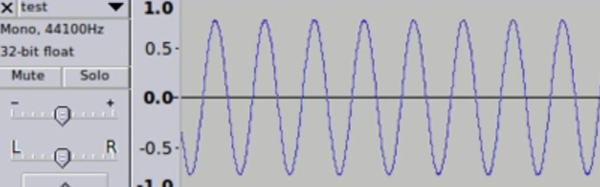

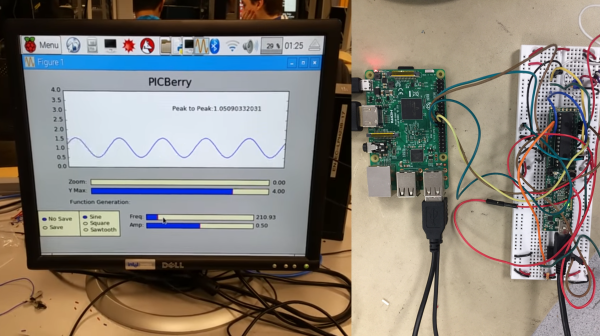

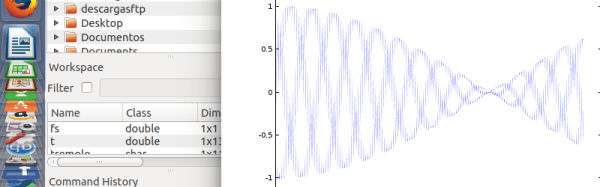

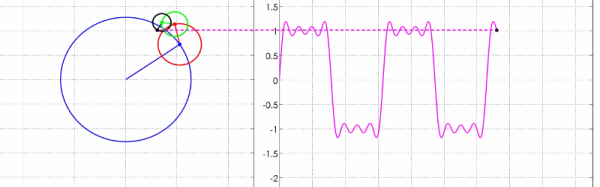

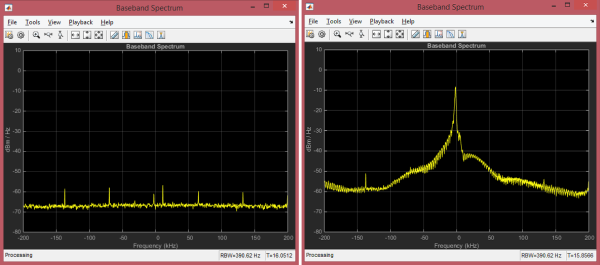

If you watch the video from [Noam Levine], you'll see that you get your money's worth. If you want to configure the FPGA directly, this isn't for you. But if you just want to accelerate a program by offloading DSP or other algorithms that benefit from hardware assistance, MATLAB makes it very easy.
