If you have mischievous children or forgetful elderly relatives in your life, you might want to build a couple of these tiny motion-detection alarms to help keep them out of harm’s way. Maybe you want to keep yourself out of the cookie jar. We say good for you.

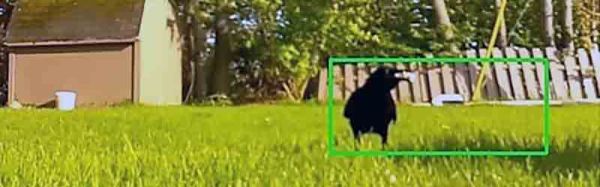

But you could always put one of these alarms on a window, a drawer, or anything else you don’t want opened or moved. The MPU6050 6-axis IMU (a 3-axis accelerometer plus a 3-axis gyroscope) makes sure that no matter how the chosen item gets jostled, the alarm goes off.
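The write-up doesn't say how the firmware decides that the item has moved. One common approach, sketched below in plain Python purely as an assumption, is to record the accelerometer vector while the item is at rest and raise the alarm when a new reading strays too far from it. The register address `0x3B` (ACCEL_XOUT_H), the I2C address `0x68`, and the 16384 counts-per-g scale at the default ±2 g range come from the MPU-6050 register map. The `MotionDetector` class, `is_moved` method, and 0.1 g threshold are hypothetical, not [gokux]'s code.

```python
import math

# From the MPU-6050 register map: default I2C address, first accelerometer
# register, and counts per g at the default +/-2 g full-scale setting.
MPU6050_ADDR = 0x68
ACCEL_XOUT_H = 0x3B
ACCEL_LSB_PER_G = 16384.0


def _s16(hi, lo):
    """Combine a high and low byte into a signed 16-bit (two's complement) int."""
    value = (hi << 8) | lo
    return value - 0x10000 if value & 0x8000 else value


def raw_to_g(buf):
    """Convert the 6 bytes starting at ACCEL_XOUT_H into an (x, y, z) tuple in g."""
    if len(buf) != 6:
        raise ValueError("expected 6 accelerometer bytes")
    # Registers are ordered X_H, X_L, Y_H, Y_L, Z_H, Z_L (high byte first).
    return tuple(_s16(buf[i], buf[i + 1]) / ACCEL_LSB_PER_G for i in (0, 2, 4))


class MotionDetector:
    """Hypothetical detector: compares each sample against a resting baseline."""

    def __init__(self, baseline, threshold_g=0.1):
        self.baseline = baseline        # (x, y, z) in g, captured while at rest
        self.threshold_g = threshold_g  # hypothetical sensitivity, tune per install

    def is_moved(self, sample):
        # Euclidean distance between the sample and baseline vectors, in g.
        delta = math.sqrt(sum((s - b) ** 2 for s, b in zip(sample, self.baseline)))
        return delta > self.threshold_g
```

Comparing whole vectors rather than just the overall magnitude means a slow tilt, such as a window swinging open, still trips the alarm even though the total acceleration stays near 1 g. Under MicroPython, the six bytes could come from `i2c.readfrom_mem(0x68, 0x3B, 6)` once the sensor has been woken from its power-on sleep by writing `0` to PWR_MGMT_1 (`0x6B`).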


As you may have guessed, there isn’t much more to this build — the brain is a Seeed Xiao ESP32-C3, and there’s a buzzer, a battery, a switch, and a push button to program it.

The cool thing about using an ESP32-C3 is that, thanks to its built-in Wi-Fi and Bluetooth LE, [gokux] can use these for other things, like performing a task when motion is detected. If you do want to build yourself a couple of these, here are step-by-step instructions.

If you’d rather detect motion in the vicinity, here’s a PIR-based solution.

Five years later, I joined a hackerspace, and eventually found out that its CCTV cameras, while being quite visually prominent, stopped functioning a long time ago. At that point, I was in a position to do something about it, and I built an entire CCTV network around a software package called


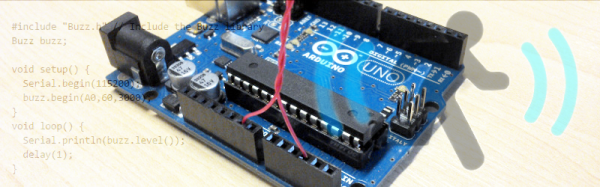

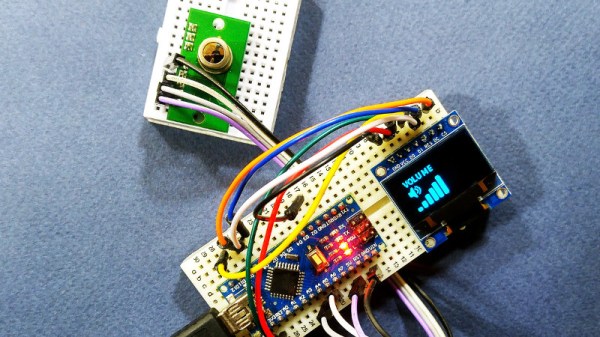

The project uses the TPA81 8-pixel thermopile array, which measures heat levels at 8 adjacent points. An Arduino reads these temperatures over I2C, and a simple thresholding function then detects the movement of the fingers. These movements can trigger a number of actions, including turning the volume up or down as shown in the image alongside.
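The thresholding itself isn't spelled out, so here is a minimal sketch of one way it could work, written in Python for readability and assuming each frame arrives as a list of eight pixel temperatures in °C along with an ambient reading. It finds the warmest pixel that sits a threshold above ambient, then reports a swipe when that hot spot travels far enough along the array. The function names, the 2 °C threshold, the 3-pixel minimum travel, and which direction counts as "up" are all hypothetical choices, not the project's actual code.

```python
def hottest_pixel(temps, ambient, threshold=2.0):
    """Index of the warmest pixel if it is at least `threshold` degC above ambient, else None."""
    best = max(range(len(temps)), key=lambda i: temps[i])
    return best if temps[best] - ambient >= threshold else None


def detect_swipe(frames, ambient, threshold=2.0, min_travel=3):
    """Classify a sequence of 8-pixel frames as 'up', 'down', or None.

    A finger shows up as a hot spot; we track where it is in each frame and
    look at how far it moved between the first and last frame it appeared in.
    """
    positions = []
    for frame in frames:
        pos = hottest_pixel(frame, ambient, threshold)
        if pos is not None:  # ignore frames where nothing warm is present
            positions.append(pos)
    if len(positions) < 2:
        return None
    travel = positions[-1] - positions[0]
    if travel >= min_travel:
        return "up"    # hot spot moved toward higher pixel numbers
    if travel <= -min_travel:
        return "down"  # hot spot moved toward lower pixel numbers
    return None
```

Tracking the hot spot's position, rather than thresholding each pixel on its own, means a warm object that just sits in front of the sensor produces zero travel and never registers as a gesture.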
