The Kinect is an interesting beast. On one hand, it’s fantastic for hacking – a purpose for which it was not designed. On the other hand, it’s “just OK” when it comes to gaming – its entire reason for being.

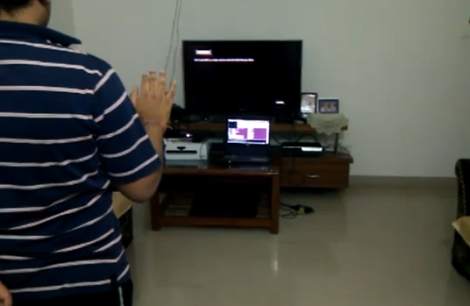

One of the big complaints regarding the Kinect’s control scheme is that it’s no good for games such as first-person shooters, where most of the action involves walking, jumping, and aiming. For his Master’s project, [Alex Poolton] put together a fantastic demonstration showing how the Kinect can be paired with a standard Xbox controller to provide hybrid gaming input.

While you might expect a simple game that shows the fundamentals of the hybrid control system, he has put together a full-fledged game demo that shows how this control scheme might be implemented in a real game. [Alex] admits that it’s still a bit rough around the edges, but there’s some real potential in his design.

Continue reading to see a video demonstration of [Alex’s] project in action, and be sure to check out his blog for news and updates on the project.
