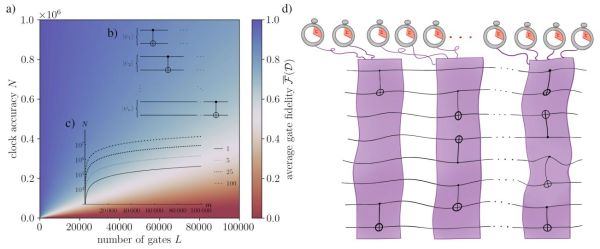

The central selling point of qubit-based quantum processors is that they can supposedly solve certain types of tasks much faster than a classical computer. This comes, however, with the major complication that quantum computing is ‘noisy’, i.e. affected by outside influences. That this need not be a hindrance was the point of an article published last year by IBM researchers, in which they demonstrated a speed-up of a Trotterized time evolution of a 2D transverse-field Ising model on an IBM Eagle 127-qubit quantum processor, even at the error rates of today’s noisy quantum processors. Now, however, [Joseph Tindall] and colleagues have demonstrated in a recently published paper in Physics that they can beat the IBM quantum processor with a classical processor.

In the IBM paper by [Youngseok Kim] and colleagues, published in Nature, the essential take is that although noisy quantum computers lack fault tolerance and have to rely on error-mitigation heuristics, this does not mean that such flawed quantum systems have no applications in computing, especially as quantum processors scale up and get faster. The experiment in question simulates an Ising model, a statistical-mechanics model built around phase transitions that has many applications in physics, neuroscience, and other fields.
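To give a feel for what a Trotterized time evolution of a transverse-field Ising model means, here is a minimal classical sketch in Python with NumPy. It is not the IBM circuit or the tensor-network method used by the classical team: it assumes a tiny open chain of four spins instead of the 127-qubit 2D layout, a common sign convention for the Hamiltonian (H = -J Σ Z_i Z_{i+1} + h Σ X_i), and brute-force state vectors. All function names and parameter values are made up for illustration. The idea is to split the Hamiltonian into its ZZ and X parts, apply their exponentials alternately in small time steps, and compare the resulting magnetization with the exact evolution.

```python
import numpy as np

I2 = np.eye(2, dtype=complex)
X = np.array([[0, 1], [1, 0]], dtype=complex)
Z = np.array([[1, 0], [0, -1]], dtype=complex)

def op_on(site_ops, n):
    """Place single-qubit operators (dict: site -> 2x2 matrix) on an n-qubit register."""
    out = np.array([[1.0 + 0j]])
    for q in range(n):
        out = np.kron(out, site_ops.get(q, I2))
    return out

def ising_terms(n, J=1.0, h=1.0):
    """Return (H_zz, H_x) for an open chain, assuming H = -J sum Z_i Z_{i+1} + h sum X_i."""
    dim = 2 ** n
    H_zz = np.zeros((dim, dim), dtype=complex)
    H_x = np.zeros((dim, dim), dtype=complex)
    for i in range(n - 1):
        H_zz += -J * op_on({i: Z, i + 1: Z}, n)
    for i in range(n):
        H_x += h * op_on({i: X}, n)
    return H_zz, H_x

def expm_hermitian(H, t):
    """Compute exp(-i H t) for a Hermitian H via its eigendecomposition."""
    w, v = np.linalg.eigh(H)
    return (v * np.exp(-1j * w * t)) @ v.conj().T

def trotter_step(H_zz, H_x, dt):
    """One first-order Trotter step: exp(-i H_x dt) exp(-i H_zz dt)."""
    return expm_hermitian(H_x, dt) @ expm_hermitian(H_zz, dt)

def magnetization(state, n):
    """Average <Z> per spin for the given state vector."""
    Mz = sum(op_on({i: Z}, n) for i in range(n)) / n
    return float(np.real(state.conj() @ Mz @ state))

def evolve(n=4, t=1.0, steps=50, J=1.0, h=1.0):
    """Return (Trotterized, exact) magnetization after time t, starting from |00...0>."""
    H_zz, H_x = ising_terms(n, J, h)
    psi0 = np.zeros(2 ** n, dtype=complex)
    psi0[0] = 1.0
    U_step = trotter_step(H_zz, H_x, t / steps)
    psi_trot = psi0.copy()
    for _ in range(steps):
        psi_trot = U_step @ psi_trot
    psi_exact = expm_hermitian(H_zz + H_x, t) @ psi0
    return magnetization(psi_trot, n), magnetization(psi_exact, n)

if __name__ == "__main__":
    m_trot, m_exact = evolve(steps=200)
    print(f"Trotterized: {m_trot:.4f}, exact: {m_exact:.4f}")
```

The state vector here has 2^n entries, which is why this brute-force approach stops being feasible long before 127 qubits and why the classical result needed a far more efficient method.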

Unlike the simulation running on the IBM system, the classical simulation only has to run once to get accurate results, which, along with other optimizations, still gives classical systems the lead. Until we develop quantum processors with built-in error tolerance, of course.