By now it’s probable that most readers will have heard about LastPass’s “Security Incident“, in which users’ password vaults were lifted from their servers. We’re told that the vaults are encrypted such that they’re of little use to anyone without futuristic computing power and a lot of time, but the damage is still done and I for one am glad that I wasn’t a subscriber to their service. But perhaps the debacle serves a very good purpose for all of us, in that it affords a much-needed opportunity for a look at the way we do passwords. Continue reading “The Problem With Passwords”

security453 Articles

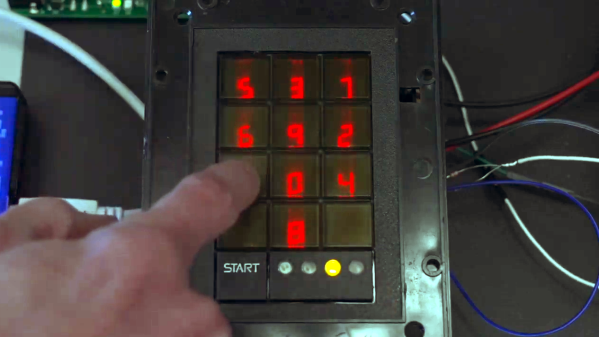

Scramblepad Teardown Reveals Complicated, Expensive Innards

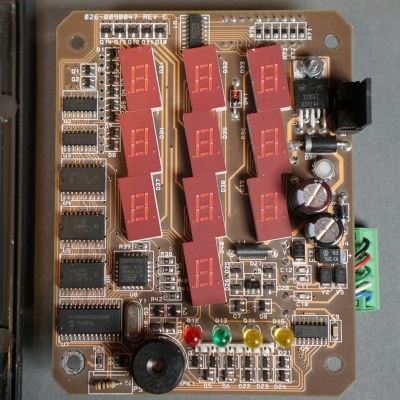

What’s a Scramblepad? It’s a type of number pad in which the numbers aren’t in fixed locations, and can only be seen from a narrow viewing angle. Every time the pad is activated, the buttons have different numbers. That way, a constant numerical code isn’t telegraphed by either button wear, or finger positions when punching it in. [Glen Akins] got his hands on one last year and figured out how to interface to it, and shared loads of nice photos and details about just how complicated this device was on the inside.

Patented in 1982 and used for access control, a Scramblepad aimed to avoid the risk of someone inferring a code by watching a user punch it in, while also preventing information leakage via wear and tear on the keys themselves. They were designed to solve some specific issues, but as [Glen] points out, there are many good reasons they aren’t used today. Not only is their accessibility poor (they only worked at a certain height and viewing angle, and aren’t accessible to sight-impaired folks) but on top of that they are complex, expensive, and not vandal-proof.

[Glen]’s Scramblepad might be obsolete, but with its black build, sharp lines, and red LED 7-segment displays it has an undeniable style. It also includes an RFID reader, allowing it to act as a kind of two-factor access control.

On the inside, the reader is a hefty piece of hardware with multiple layers of PCBs and antennas. Despite all the electronics crammed into the Scramblepad, all by itself it doesn’t do much. A central controller is what actually controls door access, and the pad communicates to this board via an unencrypted, proprietary protocol. [Glen] went through the work of decoding this, and designed a simplified board that he plans to use for his own door access controller.

In the meantime, it’s a great peek inside a neat piece of hardware. You can see [Glen]’s Scramblepad in action in the short video embedded below.

Continue reading “Scramblepad Teardown Reveals Complicated, Expensive Innards”

Front Door Keys Hidden In Plain Sight

If there’s one thing about managing a bunch of keys, whether they’re for RSA, SSH, or a car, it’s that large amounts of them can be a hassle. In fact, anything that makes life even a little bit simpler is a concept we often see projects built on to of, and keys are no different. This project, for example, eliminates the need to consciously carry a house key around by hiding it in a piece of jewelry.

This project sprang from [Maxime]’s previous project, which allowed the front door to be unlocked with a smartphone or tablet. This isn’t much better than carrying a key, since the valuable piece of electronics must be toted along in place of one. Instead, this build eschews the smartphone for a ring which can be worn and used to unlock the door with the wave of a hand. The ring contains an RFID which is read by an antenna that’s monitored by a Wemos D1 Mini. When it sees the ring, a set of servos unlocks the door.

The entire device is mounted on the front of the door about where a peephole would normally be, with the mechanical actuators on the inside. It seems just as secure (if not more so) than carrying around a metal key, and we also appreciate the aesthetic of circuit boards shown off in this way, rather than hidden inside an enclosure. It’s an interesting build that reminds us of some other unique ways of unlocking a door.

What’s Old Is New Again: GPT-3 Prompt Injection Attack Affects AI

What do SQL injection attacks have in common with the nuances of GPT-3 prompting? More than one might think, it turns out.

Many security exploits hinge on getting user-supplied data incorrectly treated as instruction. With that in mind, read on to see [Simon Willison] explain how GPT-3 — a natural-language AI — can be made to act incorrectly via what he’s calling prompt injection attacks.

This all started with a fascinating tweet from [Riley Goodside] demonstrating the ability to exploit GPT-3 prompts with malicious instructions that order the model to behave differently than one would expect.

Continue reading “What’s Old Is New Again: GPT-3 Prompt Injection Attack Affects AI”

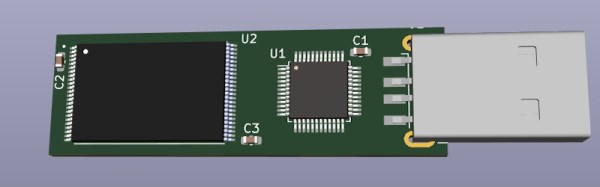

USB Drive Keeps Your Secrets… As Long As Your Fingers Are Wet?

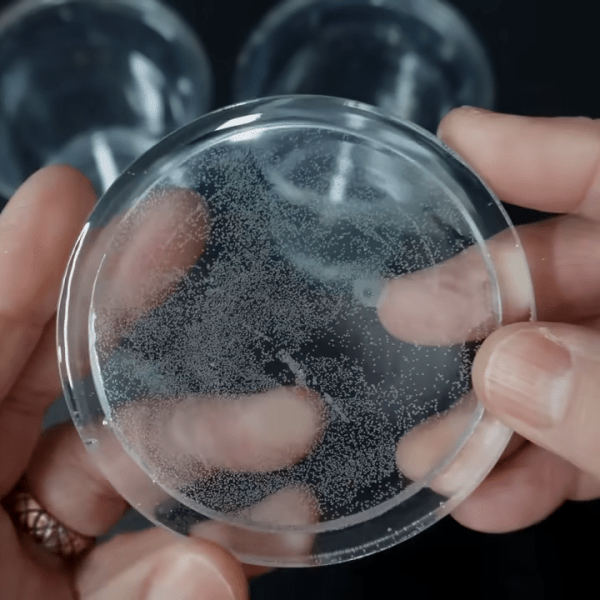

[Walker] has a very interesting new project: a completely different take on a self-destructing USB drive. Instead of relying on encryption or other “visible” security features, this device looks and works like an utterly normal USB drive. The only difference is this: if an unauthorized person plugs it in, there’s no data. What separates authorized access from unauthorized? Wet fingers.

It sounds weird, but let’s walk through the thinking behind the concept. First, encryption is of course the technologically sound and correct solution to data security. But in some environments, the mere presence of encryption technology can be considered incriminating. In such environments, it is better for the drive to appear completely normal.

The second part is the access control; the “wet fingers” part. [Walker] plans to have hidden electrodes surreptitiously measure the resistance of a user’s finger when it’s being plugged in. He says a dry finger should be around 1.5 MΩ, but wet fingers are more like 500 kΩ.

But why detect a wet finger as part of access control? Well, what’s something no normal person would do right before plugging in a USB drive? Lick their finger. And what’s something a microcontroller should be able to detect easily without a lot of extra parts? A freshly-licked finger.

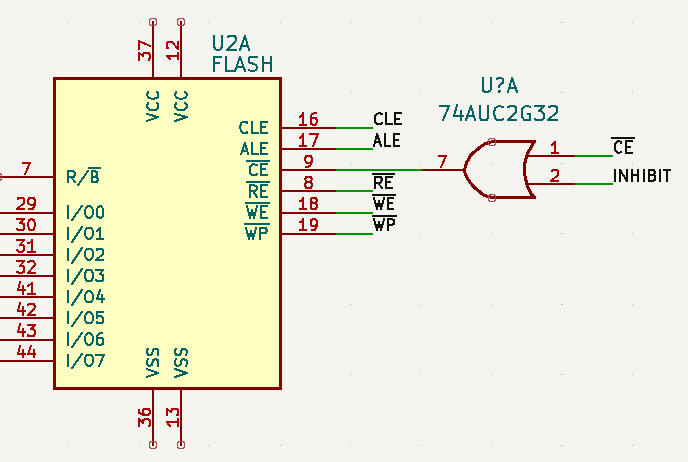

Of course, detecting wet skin is only half the equation. You still need to implement a USB Mass Storage device, and that’s where things get particularly interesting. Even if you aren’t into the covert aspect of this device, the research [Walker] has done into USB storage controllers and flash chips, combined with the KiCad footprints he’s already put together means this open source project will be a great example for anyone looking to roll their own USB flash drives.

Regular readers may recall that [Walker] was previously working on a very impressive Linux “wall wart” intended for penetration testers, but the chip shortage has put that ambitious project on hold for the time being. As this build looks to utilize less exotic components, hopefully it can avoid a similar fate.

McTerminals Give The Hamburglar A Chance

The golden arches of a McDonald’s restaurant are a ubiquitous feature of life in so many parts of the world, and while their food might not be to all tastes their comforting familiarity draws in many a weary traveler. There was a time when buying a burger meant a conversation with a spotty teen behind the till, but now the transaction is more likely to take place at a terminal with a large touch screen. These terminals have caught the attention of [Geoff Huntley], who has written about their surprising level of vulnerability.

When you’re ordering your Big Mac and fries, you’re in reality standing in front of a Windows PC, and repeated observation of start-up reveals that the ordering application runs under an administrator account. The machine has a card reader and a receipt printer, and it’s because of this printer that the vulnerability starts. In a high-traffic restaurant the paper rolls often run out, and the overworked staff often leave the cabinets unlocked to facilitate access. Thus an attacker need only gain access to the machine to reset it and they can be in front of a touch screen with administrator access during boot, and from that start they can do anything. Given that these machines handle thousands of card transactions daily, the prospect of a skimming attack becomes very real.

The fault here lies in whoever designed these machines for McDonalds, instead of putting appropriate security on the software the whole show relies on the security of the lock. We hope that they don’t come down on the kids changing the paper, and instead get their software fixed. Meanwhile this isn’t the first time we’ve peered into some McHardware.

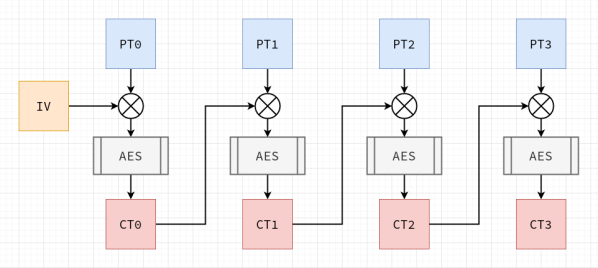

Why You Should Totally Roll Your Own AES Cryptography

Software developers are usually told to ‘never write your own cryptography’, and there definitely are sufficient examples to be found in the past decades of cases where DIY crypto routines caused real damage. This is also the introduction to [Francis Stokes]’s article on rolling your own crypto system. Even if you understand the mathematics behind a cryptographic system like AES (symmetric encryption), assumptions made by your code, along with side-channel and many other types of attacks, can nullify your efforts.

So then why write an article on doing exactly what you’re told not to do? This is contained in the often forgotten addendum to ‘don’t roll your own crypto’, which is ‘for anything important’. [Francis]’s tutorial on how to implement AES is incredibly informative as an introduction to symmetric key cryptography for software developers, and demonstrates a number of obvious weaknesses users of an AES library may not be aware of.

This then shows the reason why any developer who uses cryptography in some fashion for anything should absolutely roll their own crypto: to take a peek inside what is usually a library’s black box, and to better understand how the mathematical principles behind AES are translated into a real-world system. Additionally it may be very instructive if your goal is to become a security researcher whose day job is to find the flaws in these systems.

Essentially: definitely do try this at home, just keep your DIY crypto away from production servers :)