If you’re worried that [Roman Dvořák]’s spectroscopic analysis of fireworks is going to ruin New Year’s Eve or the Fourth of July, relax — the science of this build only adds to the fun.

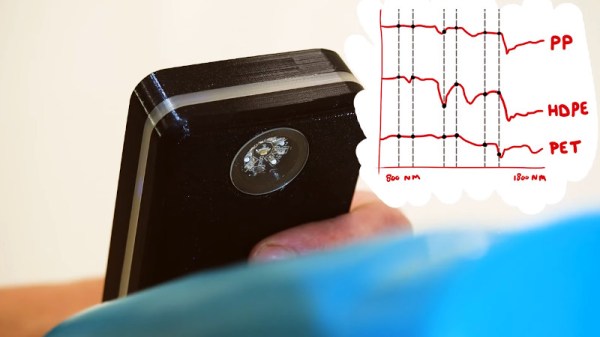

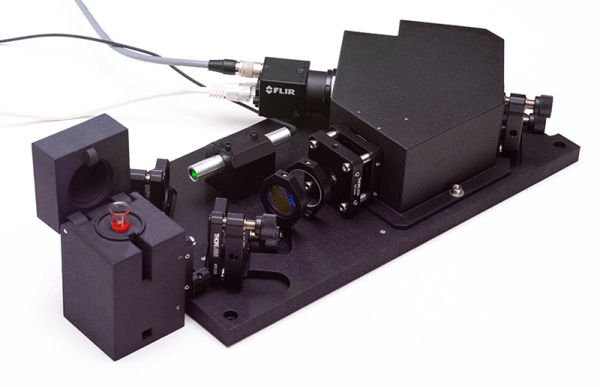

Not that there’s nothing to worry about with fireworks, of course; there are plenty of nasty chemicals in there, and we can say from first-hand experience that getting hit in the face and chest with shrapnel from a shell is unpleasant. [Roman]’s goal with this experiment is pretty simple: to see if it’s possible to cobble together a spectrograph to identify the elements that light up the sky during a pyrotechnic display. The camera rig was mainly assembled from readily available gear, including a Chronos monochrome high-speed camera and a 500-mm telescopic lens. A 100 line/mm grating was mounted between the lens and the camera, a finder scope was added, and the whole thing went onto a sturdy tripod.
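To get a feel for how far a 100 line/mm grating spreads visible light, here is a minimal sketch of the standard grating equation, d·sin(θ) = m·λ. It assumes light hits the grating at normal incidence and looks only at the first order; neither detail is confirmed by the build, and the function name and sample wavelengths are ours, picked purely for illustration.

```python
import math


def diffraction_angle_deg(wavelength_nm, lines_per_mm=100.0, order=1):
    """Return the diffraction angle in degrees for a transmission grating.

    Uses d * sin(theta) = m * lambda and assumes normal incidence,
    which is an assumption about the rig, not a documented detail.
    """
    groove_spacing_nm = 1e6 / lines_per_mm  # 1 mm = 1e6 nm
    sin_theta = order * wavelength_nm / groove_spacing_nm
    if abs(sin_theta) > 1:
        raise ValueError("this order does not propagate at this wavelength")
    return math.degrees(math.asin(sin_theta))


# Illustrative wavelengths across the visible range (not measured values).
for wavelength in (400.0, 589.0, 700.0):
    angle = diffraction_angle_deg(wavelength)
    print(f"{wavelength:.0f} nm -> {angle:.2f} degrees")
```

With a grating this coarse, the whole visible band fans out over only a couple of degrees in the first order, which is probably part of why it pairs comfortably with a long lens and a small sensor.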

From a perch above Prague on New Year’s Eve, [Roman] collected a ton of images in RAW12 format. The files were converted to TIFFs by a Python script and then assembled into video with FFmpeg. Frames with good spectra were selected for analysis using a Jupyter Notebook project. Within a frame, a spectrum was picked out by moving a cursor across the image with slider controls, and pixel positions were converted into wavelengths.
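The pixel-to-wavelength step can be sketched as a simple two-point linear calibration. This is not necessarily how [Roman]’s notebook does it; it assumes the dispersion is close to linear across the sensor, which is reasonable for small diffraction angles, and the calibration points below are hypothetical numbers chosen only to make the example run.

```python
def make_pixel_to_wavelength(px_a, nm_a, px_b, nm_b):
    """Build a converter from two reference points (pixel, wavelength in nm).

    Assumes a linear relationship between pixel column and wavelength.
    """
    if px_a == px_b:
        raise ValueError("calibration pixels must differ")
    nm_per_pixel = (nm_b - nm_a) / (px_b - px_a)

    def convert(pixel):
        # Linear interpolation (or extrapolation) from the first reference point.
        return nm_a + nm_per_pixel * (pixel - px_a)

    return convert


# Hypothetical calibration: column 200 is 450 nm, column 1000 is 650 nm.
to_nm = make_pixel_to_wavelength(200, 450.0, 1000, 650.0)

# Convert a cursor position, as a slider control might report it.
cursor_column = 600
print(f"column {cursor_column} -> {to_nm(cursor_column):.1f} nm")
```

In practice the two reference points would come from emission lines that are easy to recognize in a frame, and a fit through more than two lines would help absorb any nonlinearity.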

There are some optical improvements [Roman] would like to make, especially in aiming and focusing the camera; as he says, the dynamic and unpredictable nature of fireworks makes them difficult to photograph. As for identifying elements in the spectra, that’s on the to-do list until he can find a library of spectra to use. Or, there’s always DIY Raman spectroscopy.