There are a number of companies now providing turn-key computers that meet the Free Software Foundation’s criteria for their “Respects Your Freedom” certification. In a general sense, this means the computer is guaranteed not to spy on you or otherwise do anything you didn’t explicitly ask it to. Unfortunately, these machines often carry a hefty premium, leaving buyers with an unpleasant choice between privacy and performance.

Freedom-loving hacker [SolidHal] writes in to tell us about his quest to build an FSF-compliant laptop without breaking the bank. Based on a cheap Asus C201 Chromebook, his custom machine checks all the appropriate boxes. The operating system was easy enough with an install of Debian, and a healthy dose of Libreboot replaced the stock bootloader with a free one (as an ARM machine, the C201 doesn’t even have an Intel Management Engine to worry about). But there was one problem: the permanently installed Wi-Fi hardware required proprietary firmware. To remedy the issue, he decided to install an internal USB Wi-Fi adapter that carries the FSF seal of approval.

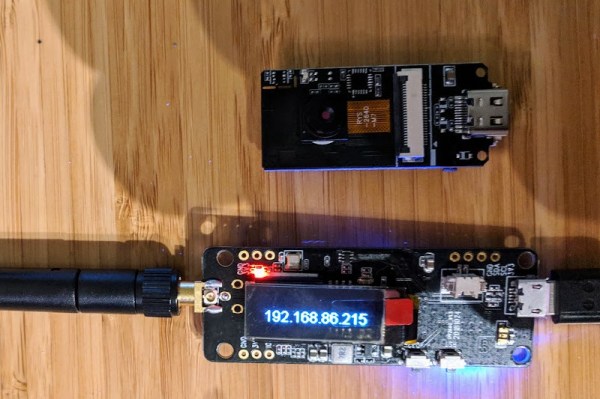

As the Chromebook obviously doesn’t have a spare internal USB port, this was easier said than done. But since [SolidHal] is not the kind of guy who wants his laptop taking pictures of him in the first place, he had the idea to repurpose the internal USB connection used by the integrated webcam. He pulled the webcam out, studied the wiring, and determined which wires corresponded to the standard USB pinout.

The FSF-approved ThinkPenguin Wi-Fi adapter he chose is exceptionally small, so it was easy enough to tuck it into some empty space inside the Chromebook. [SolidHal] just needed to solder it to the old webcam connection and wrap it in Kapton tape to prevent any shorts. The signal probably isn’t great, considering the antenna is stuck inside the machine with all the noisy components, but that’s the price of a fully free and open source driver. As already established, sometimes these are the tough choices you have to make when walking in the righteous footsteps of Saint Ignucius.

Internal laptop modifications like this one remind us of the Ye Olden Days of Hackaday, when Eee PC modifications were all the rage and we still ran black and white pictures “taped” to the screen. Ah, the memories.