Ecclesiastes 1:9 reads “What has been will be again, what has been done will be done again; there is nothing new under the sun.” Or in other words, 5G is mostly marketing nonsense, like 4G, 3G, and 2G were before it. Let’s not forget LTE, 4G LTE, Advanced 4G, and EDGE.

Technically, 5G means that providers could, if they wanted to, install some EHF antennas; the same kind we’ve been using forever to do point to point microwave internet in cities. These frequencies are too lazy to pass through a wall, so we’d have to install these antennas in a grid at ground level. The promised result is that we’ll all get slightly lower latency tiered internet connections that won’t live up to the hype at all. From a customer perspective, about the only thing it will do is let us hit the 8Gb ceiling twice as faster on our “unlimited” plans before they throttle us. It might be nice on a laptop, but it would be a historically ridiculous assumption that Verizon is going to let us tether devices to their shiny new network without charging us a million Yen for the privilege.
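To put a rough number on the data cap complaint, here is a minimal sketch of how long a sustained download takes to exhaust a cap. It assumes the "8Gb" above means 8 gigabytes, and the 50 and 100 Mb/s link speeds are made-up illustrative figures, not measurements of any carrier.

```python
def seconds_to_cap(cap_gigabytes: float, rate_mbps: float) -> float:
    """Seconds of sustained transfer before a data cap is used up."""
    cap_bits = cap_gigabytes * 1e9 * 8       # decimal gigabytes -> bits
    return cap_bits / (rate_mbps * 1e6)      # bits / (bits per second)

# Assumed cap of 8 GB; the two rates are hypothetical.
for rate in (50, 100):
    minutes = seconds_to_cap(8, rate) / 60
    print(f"{rate} Mb/s: {minutes:.1f} minutes")
# 50 Mb/s: 21.3 minutes
# 100 Mb/s: 10.7 minutes
```

Doubling the link speed halves the time to the throttle, which is the whole joke.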

So, what’s the deal? From a practical standpoint we’ve already maxed out what a phone needs. For example, here’s a dirty secret of the phone world: you can’t tell the difference between 1080p and 720p video on a tiny screen. I know of more than one company where the 1080p on their app really means 640 or 720 displayed on the device and 1080p is recorded on the cloud somewhere for download. Not a single user has noticed or complained. Oh, maybe if you’re looking hard you can feel that one picture is sharper than the other, but past that what are you doing? Likewise, what’s the point of 60fps 8k video on a phone? Or even a laptop for that matter?

Are we really going to max out a mobile webpage? Since our device’s ability to present information exceeds our ability to process it, is there a theoretical maximum to the size of an app? Even if we had Gbit internet to every phone in the world, from a user standpoint it would be a marginal improvement at best. Unless you’re a professional mobile game player (is that a thing yet?) latency is meaningless to you. The buffer buffs the experience until it shines.

So why should we care about billion dollar corporations racing to have the best network for sending low resolution advertising gifs to our distracto cubes? Because 5G is for robots.

Bruteforce, But With Antennas

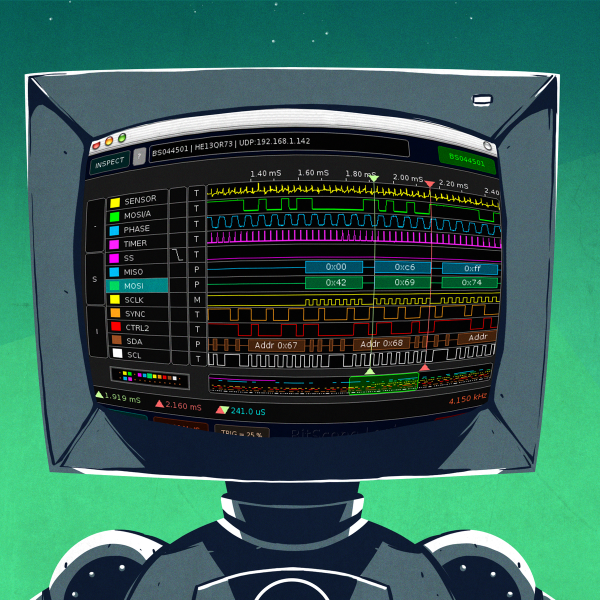

Yes, 5G was always for robots and never for human-smartphone hybrids. More specifically, a massive grid of microwave antennas delivering low latency gigabit connections to cities and farms is for robots. Right now if you want to hook up to an EHF internet band you need to send a team of wizards onto multiple rooftops to align antennas and do all sorts of other chanting, dancing, cursing, and runework to get the damn things to go. Every time a breeze knocks the antennas out of alignment or a tree grows too tall they need to go back up there to do it again. It’s not that the FR2 band on 5G is going to rock our world by itself, it’s that we’ll have an agreed upon hardware solution that handles all the difficult things like swapping antennas on this band that’s going to do that.

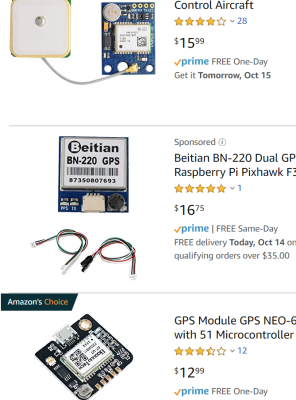

I remember my robot team in university digging through catalog after catalog for GPS antennas. We finally discovered that a growing market for reliable antenna modules in agriculture had pushed prices down to a ridiculously low $1,500. At the time, the iPhone had come out just the year before and the Motorola Razr was still the cool kids’ phone. A few years later I ditched my Nokia 3310 equivalent for an HTC Thunderbolt. Fast forward to today and a GPS module is fifteen bucks with Prime shipping. That’s a 100x drop in price. We can expect these microwave modems and antennas to fall similarly.

Today’s Robots Store Their Memories Somewhere Else

Let’s look at a modern robot. We’ve had a machine learning boom these last few years. This has changed the model for robots dramatically.

Before, you absolutely had to have a ton of compute sitting on the robot itself. The robot also ran on human-generated algorithms and didn’t necessarily need a lot of storage space to improve its performance. Performance was tied more directly to human engineering time.

Now what you need is just enough compute to run a fairly deterministic model for real-time decisions. The rest of the data is sent to a server farm somewhere in the world, where it’s fed into the learning algorithms that improve the results. Some of the more expensive but less time-sensitive decisions are also run there. The flip side is that how much data you collect sometimes becomes the primary driver of how well your robot can perform.

An example familiar to me is that an IoT camera might run motion detection on the device, but it will do things like object detection on the cloud.
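As a rough illustration of that split, here is a toy sketch in which the device runs cheap frame differencing and only flags the frames worth sending upstream. The frame format, the threshold, and the function names are all hypothetical; a real camera would use its own pipeline, and the cloud object detector is not shown.

```python
from typing import List

Frame = List[int]  # one grayscale frame, flattened to pixel intensities 0-255

def motion_score(prev: Frame, curr: Frame) -> float:
    """Mean absolute pixel difference between two consecutive frames."""
    return sum(abs(a - b) for a, b in zip(prev, curr)) / len(curr)

def frames_to_upload(frames: List[Frame], threshold: float = 10.0) -> List[int]:
    """Indices of frames whose motion score exceeds the threshold.

    Only these frames would be sent to the (hypothetical) cloud object
    detector; everything else stays on the device.
    """
    selected = []
    for i in range(1, len(frames)):
        if motion_score(frames[i - 1], frames[i]) > threshold:
            selected.append(i)
    return selected

still = [10, 10, 10, 10]
moved = [10, 200, 200, 10]
print(frames_to_upload([still, still, moved, moved]))  # [2]
```

The cheap check runs on every frame locally, while the expensive model only ever sees the small fraction of frames where something changed.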

A better example is that there’s quite a market for the hard drives that live inside an autonomous car. If you have a fleet of Cruises, Zooxes, Waymos, Ubers, or Teslas, how the heck do you get the data off the car to the cloud for processing? Especially when this data can rack up terabytes from a single run. The current solution is a very expensive hot-swappable cartridge full of hard drives. When you drive your test car back into the garage they pull out the spent clip and slot in another one. It’s pretty dang cyberpunk, but inherently inefficient.

5G networks are the perfect solution for this kind of problem. Not only do they have enough bandwidth to get all this data straight off the device as it’s being generated, but their extremely low latency also allows those cloud-processed decisions to come back to the robot even faster. My bet is that in the next twenty years we will see a few billion dollar companies spring up doing things like building interconnect layers, hardware modules, and setting up massive agricultural 5G networks for robots.
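For a sense of scale, here is a back-of-the-envelope sketch of the sustained uplink rate needed to stream a run's data off a car while it is still driving. The 1 TB and 4 TB volumes and the 8-hour run are assumptions picked for illustration; the article only says a single run can rack up terabytes.

```python
def required_mbps(terabytes: float, hours: float) -> float:
    """Average uplink rate in Mb/s needed to move `terabytes` in `hours`."""
    bits = terabytes * 1e12 * 8          # decimal terabytes -> bits
    return bits / (hours * 3600) / 1e6   # bits per second -> megabits per second

# Hypothetical runs: 1 TB and 4 TB generated over an 8-hour test drive.
for tb in (1, 4):
    print(f"{tb} TB in 8 h: {required_mbps(tb, 8):.0f} Mb/s sustained")
# 1 TB in 8 h: 278 Mb/s sustained
# 4 TB in 8 h: 1111 Mb/s sustained
```

Whether a given cell can actually sustain that uplink for every car in a fleet at once is a separate question this sketch does not answer.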

All in all this technology will likely be the weight that tips the scale for tech like those drone deliveries the universe has been threatening us with for the past few years. It will make useful augmented reality more likely, and it will dramatically boost the capabilities of robots living in the 5G grids. It just won’t make our phone experience any better.

Is 5G merely the replacement of last mile (or last 100m) cables with wireless (millimeter wave)? In which case, the game will not be restricted to telcos. Any business or contractor experienced in providing network cabling can play in this.

I don’t see how a business case can be created to support universal coverage with cells of a few hundred meters. If it is going to be spotty, CT2 (https://en.wikipedia.org/wiki/CT2) has taught us that wireless has to be available *everywhere* in order to succeed.

It’s actually going to have a bigger impact in industry than at home or with the consumer market.

Imagine wiring up a shop floor with network cabling for robots, production lines, process monitoring, automated delivery carts, inventory and tool management etc. Now imagine the same thing with a 5G micro-cell and wireless everything all the way down to small radio-ID tags on the products. If the product or process changes, you no longer have to spend two weeks pulling everything apart and putting it back together – you just move this table over there, that robot over here, and reconfigure the software that keeps track of everything.

That’s the real point.

not quite sure what you mean by “robots”, but those big things aren’t very mobile. in places where you have real robots – say manufacturing plants – you’ll have a loads of electromagnetic noise, extreme amount of metallic structures, none of those is cool with wireless communication. 5GNR is a really spectrum efficient thing, but it will not solve all the issues and make everyone happy.

true robots – automated say welding lines in a car plant – are set up for quite long period and need to have extremely well tuned motion plan, there’s no room for remote control.

with the logistic aspect, stuff can work fine. but for this you don’t need no 5G, this works very well with anything that is wireless.

if you pay attention, these “industry 4.0” pamphlets depict the current very efficient and very developed industry as if it was from 17th century England. automation – and i mean reliable automation is a real burden. connected things are already there for years. but i tell you, all this crap must be 100% reliable – and even then plants are just partially automated: stuff that is not complex, where the effort of automating a certain task or chain of tasks pays back on the long run. i’ve been to a plant where they assemble internal combustion engines for a german car manufacturer. they spit out almost 10k diesel and petrol engines a day, they do it in 3 shifts non stop for years, but still the complicated parts are done by workers. to deliver reliable automation for complex tasks is very very expensive and even in this case doesn’t pay for itself.

sadly the ‘great use cases’ you constantly hear about more or less culminated in eMBB and FWA for now. and yes, 5G could also do geolocation, as there are already a bunch of terrestrial location services.

5GNR is really a great stuff, it can totally boost the throughput and deliver high speed access to more connected nodes, but this all comes at a price. a 64×64 MIMO antenna and RRU easily slurps in over 1 kW.

and while it is really efficient, it is absolutely complex on the modulation side, so the power hungriness is understandable.

you can’t cheat physics. even with 5GNR you are not able to magically have multi-10Gbps throughput from the currently available sub-6GHz bands. as for mmWave, regardless of the modulation some caveats apply: LOS is a bitch, licensed spectrum is expensive as hell.

to me this really sounds like a direct relative to the dotcom bubble back in 2000s.

there is a way too much talk all over the place about 5G, and most people just keep on repeating the nonsense the ‘tech media’ spits out on full throughput. you can hear kids cheering for 5G ‘cuz there will be no more lag in games, everything is accessible within 1ms’. sure, someone just misunderstood to what the 1ms latency applies: the air interface, so the 100-400m between the UE and the BS – and after a year everyone goes bananas that we will be remotely driving cars in bangladesh from our living room, as there will be no latency. if this tech would be this cool at ‘removing’ latency from data communication, believe me the first and foremost application would be real time trading and arbitrage, where the milliseconds literally translate into money.
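A quick sketch of why the 1 ms figure cannot cover the whole path: even if the air interface really takes 1 ms each way, the rest of the trip is bounded by how fast light moves through fiber. The velocity factor of 0.67 and the 10,000 km path are assumptions for illustration, and the estimate ignores routing, queuing, and processing delays, so real numbers would be worse.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458
FIBER_VELOCITY_FACTOR = 0.67  # assumption: light in glass travels at about 2/3 c

def round_trip_ms(path_km: float, air_interface_ms: float = 1.0) -> float:
    """Lower bound on round-trip time in milliseconds.

    Counts fiber propagation over `path_km` and one radio hop of
    `air_interface_ms`, each in both directions. Nothing else is modeled.
    """
    one_way_fiber_ms = path_km / (SPEED_OF_LIGHT_KM_S * FIBER_VELOCITY_FACTOR) * 1000
    return 2 * (one_way_fiber_ms + air_interface_ms)

print(f"server at the base station: {round_trip_ms(0):.1f} ms")
print(f"server 10,000 km away:      {round_trip_ms(10_000):.1f} ms")
```

Under these assumptions the long path comes out at roughly 100 ms round trip, of which the radio hop contributes 2 ms.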

and the throughput, which can be really there, ends at the baseband. from this point on you’ll need your RAN to carry the bits to the EPC, as the initial 5G deployments rely on the classic 4G packet core – so a bottleneck. and your packets need to travel along that path before we can call them as packets and they can take their first hop towards their destination.

5GNR is a great stuff, but it will not make the rain fall upwards. with a little less steam on the ship horns would be nice, this would leave a bit more at the engines.

>but those big things aren’t very mobile

Having been inside modern high-tech factories, the new trend is to make them mobile, or at least with the possibility of being carted around to reconfigure the production lines quickly. So called “cobots” are also getting along, which means having small robots that do routine tasks alongside a human operator. Then there’s the mechanics of shuffling parts and products along the shop floor with little wheeled robots that keep you supplied with the right sort of parts, or take away the PCB you just tested etc. You can order stuff you need from a “supermarket” and the robot delivers them to your station just as the system determines you’re about to run out of components A and D.

All that pretty much requires that it’s done wirelessly, because the amount of cables you need to install and maintain would be just ridiculous.

>why not just use normal 802.11 wireless?

Because it’s really not designed for serving that many endpoints. The network gets congested too easily. Meanwhile, the 5G protocols such as those underpinning NB-IoT are designed to support up to hundreds of thousands of devices in the same cell – because the devices don’t have to be actively on and transmitting to remain in the network and thus waste the spectrum.

If you’re doing it inside a building, why not just use normal 802.11 wireless?

The kit already exists, and is relatively affordable. It is very widely used, so getting it to interoperate with all your other equipment and network is trivial.

5G is just expensive wifi.

802.11 gets congested very easily and the efficiency drops dramatically when the number of devices is increased. In practice, almost all wlan networks operate in this condition because there’s just too much interference on the ISM band and people don’t know or care to set their channels so they wouldn’t overlap. Then someone comes and turns on the access point on their cellphone, and it’s all ruined anyways.

https://www.riverpublishers.com/journal/journal_articles/RP_Journal_1904-4720%20_245.pdf

>”Results show inefficient use of the wireless medium in certain scenarios, due to a large portion of management and control frames compared to data content frames (i.e. only 21% of the frames is identified as data frames).”

This is the reason why it’s hard to get any wireless to your laptop that would actually transmit faster than 1-10 Mbps – unless you live in a detached home and the neighbors are far enough.

CT2 had its own set of problems, with not being able to receive a call being a major one, requiring you to also have a pager. 1994 February Wired (available on archive.org) has an article by Neal Stephenson about his Shenzhen adventure trip describing CT2 system in use.

Hi There, just to confirm, the only difference between 3G and 5G is the last mile and both use the same cables for the majority? Can you provide more detail about the 5G endpoint?

Ring ring ring…. better check that door bell quick.

Not a single mention of 5G networks’ improved location awareness, where every device is located to within less than a meter with an acquisition time of under 15 milliseconds. In most cities, where GPS is next to useless, that would be helpful for robotics.

dual band gps helps this too… between the two, location services will be able to unlock the next generation of nav technologies. your phone can know what lane you’re in, not just what road you’re on.

If something is pushed upon you, you know it will give someone already mightier than you an even more disproportional power advantage over you.

To a large degree, 5G is mostly just driven forth due to hype.

Like fiber to the home is overhyped as a solution to slow DSL lines, since an old DSL line can’t supply more than a few Mb/s of bandwidth? (VDSL2 goes to 100Mb/s for a distance of 500 meters from the DSLAM station on the curb down the street, and uses an old telephone cable… And this has been the case since 2011. (There is also the G.fast DSL system that goes to 1Gb/s for 100 meters. (why not use an Ethernet cable at this point? Though the telephone line is 1 twisted pair, not 4 like in an Ethernet cable… Maybe 4x G.fast could be a thing….)))

Though, with more bandwidth, website and software makers will quickly figure out a way to add a figurative ton of new data, generally trash that is totally worthless. Or just splurge on live video backgrounds at 4K resolution, since that makes the user experience “better”…….

Sensor data from cars/autonomous-systems might seem like something that will need tons of bandwidth, well, not really.

A typical car spends less than half a day out on the road, electric ones especially. So there is plenty of downtime to idly send over that data. Not to mention that a fair portion of the data is most likely worthless trash that likely can easily be filtered out, though one can just refer back to the prior paragraph…

WiFi 6 on the other hand did make a large improvement to WiFi latency, and this is kinda one of the only things that is actually good with it. Otherwise, most WiFi connections already have plenty of speed for most actual applications. (Might not be good in some edge case scenarios like media production companies, or other high bandwidth applications where 10Gb/s networking is more logical. But then one wouldn’t really use WiFi…)

Though, marketers like to overstate things. Like a 4K video stream needs a 1Gb/s connection, doesn’t work without it…. (A 4K video stream can use 1kb/s, but it will look like garbage, at 40Mb/s most people can’t see the compression noise anymore… (unless one looks at vector art, or the desktop of an OS, or maybe a text console. Video production companies on the other hand want far more than 40Mb/s from their cameras, but that is due to them wanting to do post editing on the video, for final delivery, it is a different story.))

In the end, 5G is about as important of a step forward as . .. … ….

exactly. i love how many folks claimed til their death that 5G is required for autonomous driving. since autonomous means that … it relies on an external telecommunication infrastructure?

I think 5G may be useful for making data cheaper. 4G is totally good enough, but it’s not cheap enough.

5G is mostly for pushing multi megabyte websites full of useless javascripts, tracking stuff and advertisements.

For decent content (text, not video or audio) a few hundred kB should be enough for any website.

The world is shifting backwards, from 8 to 1 or:

It’s: *&^%$#@!

One of the worst of the websites I use is Aliexpress.

They keep directing me to login windows when all I want to do is sniff around a bit for availability of stuff.

Testing the VPN function in Opera, but I have to switch locations regularly to keep Aliexpress from nagging at me.

Well the most common argument for broadband (the faster the better) has always been entertainment. People will even fight you if you suggest anything lesser. Life, liberty, and the pursuit of fast speeds. Even if the reality is that much like insurance, people are overspending for what they don’t really need.

Aliexpress login is a joke. Persistence is broken, so repeat logins are often required during the same visit, and the login button still appears after you are already logged in.

Except latency is not meaningless, sadly now that the cloud+as-a-service thing has been thoroughly rammed down our throats by various corporations it seems that fewer and fewer things run locally in any meaningful way; the round trip time to the server is make or break if you are stuck using M$ Office 365 for instance (and many of us with more sense than to ever trust such a monstrosity still have to use it in some capacity in the course of the old day job).

Latency should be meaningless for most tasks because everything should run locally, fully offline, and the network should only come into play when the user makes an intentional conscious choice to push a file to the server, or pull a file down from the server, or query the server to search, or whatever. Sadly this is not the world we live in these days. Instead we carry pocket supercomputers which software vendors have seen fit to use as dumb thin clients and weave client/server hooks into even the most trivial interaction so that data may be gathered, engagement inferred, and attention monetized.

Aside from that cesspool of commercial interests I wouldn’t mind low enough latency to run interactive ssh sessions over the cell network without the great chunkiness one experiences today. I’ll always prefer to have smarts at the edge of the network than on a remote server, but for when that is not practical for some reason there’s still much improvement to be had in the latency department.

5G is for mass surveillance. Bunch of connected cameras sending high resolution data to face recognition farm somewhere.

It’s eerie how accurate you are with this without being accurate at all.

There are 2 components to 5G, the wireless spectrum standard and the network / routing standard connecting towers together. The first is what 99% of people think 5G is.

the real issue is that the network / routing standard is designed to re-introduce a form of source routing into the arrangement that would allow ALL communications from a device to be routed through any pathway the network provider determined to be appropriate. If an organisation of a state deemed it appropriate to collect all communications from your 5G connected device, then they only need to instruct the edge devices to route your traffic via their devices instead of the rest of the network.

Current methods require TAPs to be in place and filters to extract the data, voice and data are on separate connections making it even harder. 5G is simple….

Nobody will ever need more than 64k……

….64k video for 360 degree stereoscopic 480 FPS gaming!

While many current “robot” (and other types of sensing, thinking, actuating systems like autonomous vehicles…) builders like using a cloud server to handle the computing gruntwork, this is a BAD PRACTICE to be getting into. It makes you reliant not just on the external service hosting your cloud servers but also on the path of connectivity between you and it. Maybe you think you can trust the various ISPs and server companies not to fall down too often, but can you trust them not to spy on the data involved? Not all types of info can be practically encrypted, and traffic analysis and geolocation can be just as revealing. The sensible thing to do is keep all essential stuff local; 5G will only make bad practice an easier hole to fall into. It will only increase the chaos when, as inevitably happens now and again, some big piece of back end infrastructure has a Total Inability To Support Usual Protocols error.

Didn’t the weather service say that 5g will push forecasting back to the 80’s and interfere with the way we detect changes to the weather?

Yes they did but they did not provide sufficient hookers and cocaine to the FCC, so it sided with the carriers.

Yet another stupid solution looking for a problem. All this “5G will be awesome at everything” crap completely ignores the fact that radio is an inherently unreliable means of data communications! Any company that switches from wired networks to 5G will almost certainly regret doing so. Interference will only become more and more of an issue as more people start using devices on that band. Wired data connections are not going to be supplanted by a technology that can’t be secured, hardened, or even relied upon to be usable when needed. Watch the signal meter on your pocket radio, I mean cell phone, if you don’t believe me that radio is inherently unreliable. Unless you live under a tower, you will find many places where you will lose the signal completely, even in towns with overlapping coverage. 5G is even less reliable than that due to millimeter-wave radio’s inherent inability to go through walls and around corners.

I’m a radio hobbyist (ham) and have lots of fun playing with it, but I sure as heck would never trust anything vital to radio when a wired solution is available.

“All this “5G will be awesome at everything” crap completely ignores the fact that radio is an inherently unreliable means of data communications! ”

Implying wired can’t. Just ask all the backhoe drivers out there.

5G cell sites are fiber fed. 10Gbps is the new normal. The ability of a backhoe to find and rip out fiber is uncanny.

Key phrase: “when a wired solution is available”. Whatever it’s called, wireless will be the only option for some to get internet faster than dial-up. I’m an amateur radio operator as well. I always figured that if I wanted internet faster than dial-up, I would have to purchase telephone service in a nearby town that has better internet service, and build and maintain my own wireless link. However, a local telephone co-op put in WISP equipment, and that’s what I’m using now. Yes, a hard connection would be more reliable, but no telecom will be replacing the old Bell/AT&T copper many of us are connected to. When the wind cranks the WISP antenna out of position, I activate the iPhone hotspot.

More sensors for more tracking, remote sensing and remote transmission that seems to me to be for, from an optimist perspective better information awareness, health and safety. From a pessimist perspective, more invasive nuisance invaders with malicious intent to mask armed rob at the least.

Seems creepy reviewing the 5G approved frequencies and the phase synchronized combination with existing infrastructure frequencies having a capability to converge and be ~ the 95GHz active denial frequency even though more than 95GHz is effective from what I’ve read. https://en.wikipedia.org/wiki/Active_Denial_System

Then there is the MEDUSA sound control method(s). https://en.wikipedia.org/wiki/MEDUSA_(weapon)

https://www.transformation.dk/www.raven1.net/proventechs.pdf

With all the sensors that seem to need to be implemented… makes me wonder how much electrophysiological signals can be detected and adversely impacted whether main stream known or not. https://en.wikipedia.org/wiki/Electrophysiology#Electrographic_modalities_by_body_part

How does this 5g is for robots idea play out with “autonomous” vehicles?

I thought trucking was supposed to be one of the big drivers for such vehicles.

A lot of that happens on medium to long distance (i.e. highways).

A lot of highways don’t even have 4g yet. Are they really going to plaster Montana (and other large western states) with 5g antennas all along the highways?

Nah, it’s just hype and lies so they can get a bunch of money.

Ecclesiastes? WTH? I’m not really sure why scripture that’s been proven false many times should lead into an article. Then again, this is a rant, not an article. Sure, 5G is new, just like the first cellular installations. I remember IMTS. Sure, a lot of marketing (hype, I suppose) to entice a customer base isn’t unusual. I tune it out, understanding that if I want basic mobile phone and internet service, I will have to use the equipment and network that is made available to me. I suspect this is tech to accelerate and expand the rollout of FirstNet https://www.firstnet.gov/ Suppose there can never be anything new: Hackaday will be turning out the lights on its way out the door. ;)

So uh, this designated national security issue wouldn’t be used for illuminating the entire US with millimeter-wave-like background for passive image detection, right? Asking for a friend.

“…be used for illuminating the entire US with millimeter-wave-like background for passive image detection, right?”

Yeah, my thought for more advanced not well disclosed imaging RADAR’s with remote transmission capabilities for more than denial of service or area denial performance and I’m guessing for jamming valid systems that are legitimate public servant officials public service role and responsibility systems as defined by their offices mission and duties.

Seems as the weather predictions begin to improve better than historically were and boom, 5G: https://hackaday.com/2019/04/16/5g-buildout-likely-to-put-weather-forecasting-at-risk/

5g is a grift. It’s for scamming money.

5G needs to keep its grubby little hands off my C-BAND. They’re grabbing at the preferred distribution method for Cable providers and giving us NOTHING in return. The FCC is being idiotic in this ruling. You tell me of a technology that can hit the ENTIRE Continental US….Only satellite has this ability. Hundreds of Billions have been spent to put a network in Geosync orbit, and the phone companies just begin to hammer away at an industry that beat them to the punch.

5G is fine as long as it’s not using established and still viable frequencies to push out this money grab. But if you heard their arguments for taking the bandwidth, you’d plotz.

5G is meaningless in country side …useless for farmers ..

Hey, let’s steal 600 MHz TV bandwidth and tell people it will give them better range. It’ll still be LOS but it’ll make business happy because we will do a better job of building penetration, maybe. Then there’s this 5G mess. Sometimes it appears that new markets are being created out of whole cloth to peddle more equipment. I’m sure the FCC has competent engineers, but they sure don’t appear to have any real idea as to what’s going on.

ceiling twice as faster on

Meet talk english real good huh.

Seems like at least half the articles you publish, nobody bothered to proof read.

Might anyone address why the Federal government/FCC is pushing 5g so hard, and why, as part of that, is there major deregulation of resource protection. Thank you.

5G is a costly con job by the telco$.

My home setup is fixed Home Wireless Broadband (4G), which worked well enough for the usual streaming and gaming and the endless updates that multiple devices seem to need. But living in a regional postcode, my provider (which uses the Australian Optus network) can only offer the throttled speed which Optus supplies: “12 mbps for metro areas and 5 mbps for outside the metro area”. Yes, 4G can go faster, but the telco (Optus) has chosen to cheapskate it.

With 5G it’ll be the same: great in the cities, but cost vs. number of people covered regionally will factor into their $$ plans and speed offerings.

Also, with 5G, the higher the frequency the shorter the range. There’ll need to be a 5G tower every 500 meters (closer if there are a few trees, buildings, or metal fences). So it’s not cost effective for the telcos to build everywhere for everyone. (If it was worth it to them $$, they’d have fixed the regional 4G blackspots.)

Your mobile at, say, the football or a large concert may work better, with half the crowd on 5G and the other half on 4G. (But the telcos will be putting the infrastructure in place for average use vs. peak use.)

Then all the customers will have to upgrade their mobiles to the high-end models, and all other devices, to be able to connect at the “faster” 5G.

And so your pizza in that self-driving home delivery car, well, it’s going cold, ’cause it’s stuck without signal, since it’s raining and the leaves on the roadside trees are interfering with the weak coverage.
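The frequency versus range point can be roughed out with the standard free-space path loss formula, FSPL = 20·log10(4πdf/c). This is only a sketch: it assumes isotropic antennas in empty space, so it ignores the trees, rain, walls, and antenna gain that dominate in practice, and the three frequencies are example bands chosen for illustration.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0

def fspl_db(distance_m: float, freq_hz: float) -> float:
    """Free-space path loss in dB between two isotropic antennas."""
    return 20 * math.log10(4 * math.pi * distance_m * freq_hz / SPEED_OF_LIGHT_M_S)

# Example bands (assumed for illustration) at the 500 m spacing mentioned above.
for label, freq in (("700 MHz", 700e6), ("3.5 GHz", 3.5e9), ("28 GHz", 28e9)):
    print(f"{label}: {fspl_db(500, freq):.1f} dB at 500 m")
```

Every tenfold increase in frequency adds 20 dB of loss at the same distance before any obstacle gets in the way, so a 28 GHz link starts about 32 dB behind a 700 MHz one.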

The robots are already here in our lives. They are in the shape of the algorithms that govern the information we consume.

Very interesting. I don’t know much about tech, but this makes sense. Also the Razr will always be the cool kids’ phone.