A team based in Russia has developed a program that has passed the iconic Turing Test. In a test carried out at the Royal Society in London, the program convinced 33 percent of the judges that it was a 13-year-old Ukrainian boy named Eugene Goostman.

The Turing Test was developed by [Alan Turing] in 1950 as an existence proof for intelligence: if a computer can fool a human operator into thinking it’s human, then by definition the computer must be intelligent. It should be noted that [Turing] did not address what intelligence was, but only tried to identify human-like behavior in a machine.

Thirty years later, a philosopher by the name of [John Searle] pointed out that even a machine that could pass the Turing Test would still not be intelligent. He did this through a fascinating thought experiment called “The Chinese Room”.

Consider an English-speaking man sitting at a desk in a small room with a slot in one of the walls. At his desk is a book with instructions, written in English, on how to manipulate, sort and compare Chinese characters. Also at his desk are pencils and scratch paper. Someone from the outside pushes a piece of paper through the slot. On the paper is a story and a series of questions, all written in Chinese. The man is completely ignorant of the Chinese language, and has no understanding whatsoever of what the paper means.

So he toils with the book and the paper, carrying out the instructions from the book. After much scribbling and erasing, he completes the instructions from the book, with the last instruction telling him to push the paper back out of the slot.

Outside the room, a Chinese speaker reads the paper. The answers to the questions about the story are all correct, even insightful. She comes to the conclusion that the mind in the room is intelligent. But is she right? Who understood the story? Certainly not the man in the room; he was just following instructions. So where did the understanding occur? Searle argues that, indeed, no understanding occurred. The man is the CPU, mindlessly executing instructions. The book is the software, and the scratch paper is the memory. Thus, no matter how a computer is designed to simulate intelligence by producing the same behavior as a human, it cannot be considered truly intelligent.

Let us know your thoughts about this below. Do you think the Eugene Goostman program is intelligent? Why/why not?

What if the Chinese speaker then writes another series of questions and passes it through the slot? The man would not be able to answer and he would fail the test. The (Chinese-speaking) computer would be able to answer.

Indeed, the thought experiment is either wrongly explained or just plain wrong.

The Turing test is about interaction, not a fixed set of responses.

And hypothetically, yes, you could have infinite responses for all the infinite potential questions (and statements) posed in a conversation… with every combination of possible past statements as well (as a question can refer to a previous statement)… but, frankly, if you’re going to those extremes you could disprove anything as intelligent, as anything could be replaced with such a system.

Arbitrary input + (arbitrary processing) = Any output you want.

—

That all said… no, the Turing test was not passed in any way that’s meaningful, if at all. A guy adapted an old chat bot, had it pretend to be a 13-year-old non-native speaker… and even then only convinced 30% of the judges with a short conversation.

It’s pretty much the definition of moving the goal posts.

Here is a better explanation.

http://en.wikipedia.org/wiki/Chinese_room

The situation assumes that the instructions are good enough to answer any question correctly.

And that is where it gets interesting because it can model an intelligent person exactly.

One argument is that the man himself here does not know Chinese nor does the rule book, but together they do understand Chinese.

“but together they do understand Chinese.”

The implication of the argument is that they don’t, because there’s nothing in there to whom the symbols would actually be meaningful.

The real intelligence of the Chinese room is to be found outside of the room: it is the person who wrote the book, whose understanding of Chinese is being relayed by the man reading it. This is the same with all fully computational AIs. They’re not intelligent of themselves. Even learning algorithms are not fundamentally different, because the programmer explicitly told the machine what to learn and how to learn, and how to categorize what is learned etc. etc.

Wouldn’t that necessarily imply, then, that humans are not intelligent, but natural selection is? Or perhaps we have to go another step back, and claim that the universe’s fundamental physical laws that enabled natural selection to take place are intelligent?

The brain extension of the “Together they understand Chinese” argument is that no one neuron in someone who knows Chinese understands Chinese but together somehow they do.

That’s the point though that Turing was trying to make.

Unless you know what intelligence is, you indeed can’t tell a dumb machine from an intelligent one just by observing its behaviour. How could you?

The point of the Chinese Room is that the instruction book is analogous to the code that has been compiled to take the Turing Test. The man is the machine (hardware and operating system) running this code during the test.

I agree and disagree with the first two comments here.

– I accept that the native speaker can ask a series of questions and pass them to the “bot”. But the man does nothing that either adds to or detracts from his understanding of what is on the paper. You have to remember that we, as the observers, know multitudes more than either of the participants. All the bot does is follow a series of steps. Like a choose-your-fate novel or a board game, the book is indeed the program code in plain text. The person outside the room then receives what they interpret as correct answers to the questions they asked. As for the ability of the person on the outside to ask another series of questions, that depends completely on the book the man is using, just like any bit of tech. Even Big Iron is exactly that without programming. If the book only outlines steps for the characters that were on the first page, chances are it will fail the man. But if the book is extensive enough to let him follow what is basically a simple flow chart, then he could possibly answer any question. His limit is the programming.

– Even if this is not a good way to test intelligence it is a good analogy of the troubles faced by programmers and engineers and a good idea of what the goal of any kind of AI is.

Please remember that this is but one person’s opinion. I do not wish to point out wrong or right. I just offered my two cents.

—- Coyote

Age old difference between wisdom and intelligence few fully understand.

+1

Intelligence: a machine that acts smart. Wisdom: a machine that is stupid, but with the ability to learn and understand, thus can become smart over time. (At least within this context; the tomato fruit salad one is probably the best example.)

Intelligence: a machine that acts smart. Wisdom: a machine that knows better.

33% success rate at a test we call the Turing test (but which is not actually the test Turing described of course) and the testers were given a handicap by making the interviewee a boy and a foreigner? Ehh.

Agreed. I’d like to see them try the exact same test in the Ukraine (i.e. no language/culture barrier that might cause the testers to be more forgiving of the quirks).

If that is the same AI I just spoke with, I think 33% of the judges were on drugs….. no way could that AI be mistaken as human….

I agree, I tried it out and it was a pathetically obvious chatbot. Actually not much (or any) better than Eliza.

This was really a bad bot. Keeps asking the same things even if I answer them. Annoys me a lot. Tests or not, this ain’t better than any other standard bot. The Cortana app or Siri feels way more personal. They sort of keep track of stuff you said earlier (when making appointments or alarms and stuff); otherwise all bots so far fail the simple keep-track-of-the-conversation test.

Sounds like my teenage daughter :-)

There are an awful lot of humans that can exhibit human behavior but can’t be considered intelligent. :p

Not to mention some where you can’t really tell if they are human.

In my opinion, if we want to produce artificial intelligence, we must take a completely different approach to it, as computers clearly don’t cut it.

I think it could work. The problem is filtering. We humans receive sensory input, but our brains are pre-programmed to handle that information in a certain way. This procedure can be altered depending on experiences. Having a big lookup book is not going to work unless the program itself can expand at will.

Did you just suggest a program that can write its own code?

Of course computers cut it. Computers can do anything in terms of processing. I believe some rather famous computer guy even came up with a term for it…hmm..forget his name ;)

The question is are we taking the right software approaches?

Is the hardware powerful enough? Hardware neural nets can be emulated in software, but can we get enough virtual neurons to do meaningful processing?

Imho, chat-bots and “top down” coding are completely the wrong approach.

I think we’d have better luck with neural nets, like what Google did with a system that learnt to recognise cats from YouTube videos (it was never told what a cat was).

Or evolutionary simulations, with environments close enough to our world for us to be able to recognize signs of intelligence.

I would, for example, make it a selective advantage for the AI “bots” to communicate information to each other. That might be the first step to evolving a language: first make AIs that benefit from communicating with each other.

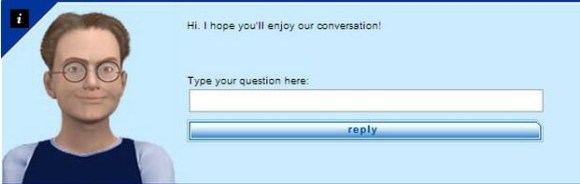

Is there a website to test it ?

http://default-environment-sdqm3mrmp4.elasticbeanstalk.com/

The chatbot (and that’s all it is) did not “…pass the iconic Turing test.” Alan Turing predicted that in 50 years (from 1950) an operator would have no better than a 70% chance of correctly identifying the computer after a 5 minute conversation. That wasn’t a demarcation line for “passing” the test; it was just a milestone of where he predicted we’d be in 50 years. And it’s been done numerous times before. Here’s one example from 2011 that reached 59% after a 4 minute conversation: http://www.newscientist.com/article/dn20865-software-tricks-people-into-thinking-it-is-human.html?DCMP=OTC-rss&nsref=online-news#.U5W65_ldUhM This is a blowhard (Google “Kevin Warwick”) tooting his own horn about nonsense, and gullible or ignorant people falling for his self-promotion.

Bingo! If Kevin Warwick is attached, no further attention need be paid.

That previous ‘success’ was unofficial and used questions known in advance.

It was unofficial? And this one was official in what sense of the word? Is there a Grand Mystick Royal Order of Turing Tests Administrators that has to sign off on these things or what?

The ‘passing the Turing Test’ is bullshit (all they did was lower the bar, pretty much), but so is the Chinese Room ‘refuting’ the idea that a program can be considered intelligent. While the man in the room did not understand, the whole system, of which the man is only a small part, can be quite easily said to have done so (and that the ‘important’ part is the instructions written on the paper and whatever notes or state the instructions have the man make).

I came to that exact same conclusion (so we MUST be right! :-))

Indeed.

Especially as the room idea could also refute a human too.

What if I am nothing more than a flowchart dictating responses to stimuli? Why does the biological, electrical or mechanical implementation of that flowchart make a difference?

“Searle responds by simplifying the list of physical objects: he asks what happens if the man memorizes the rules and keeps track of everything in his head? Then the whole system consists of just one object: the man himself. Searle argues that if the man doesn’t understand Chinese then the system doesn’t understand Chinese either and the fact that the man appears to understand Chinese proves nothing.”

‘ As Searle writes “the systems reply simply begs the question by insisting that system must understand Chinese.”‘

That is, how do you tell whether a man reading a book actually does understand Chinese, or merely appears to?

What exactly am I missing here? In every article I came across that actually had a transcript of the conversation with Eugene, I see very little that doesn’t remind me of a slightly smarter Eliza-style keyword triggered response from the early 80’s, add a hint of Racter babble-bot, and put a dollop of good old fashioned random-walk-by-frequency of a Markov table of n-grams (sometimes called the “Travesty Algorithm”).
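To make the "keyword triggered response" idea concrete, here is a minimal sketch of that style of bot. It is not Eugene's or Eliza's actual code; the rule table, the replies and the `respond` function are all made up for illustration. The point is only that a handful of pattern rules plus a stock fallback already produces the kind of off-topic deflection described above.

```python
import re

# Hypothetical rule table: (keyword pattern, canned reply template).
# These rules are illustrative only and are not taken from any real chatbot.
RULES = [
    (re.compile(r"\b(mother|father|family)\b", re.I),
     "Tell me more about your family."),
    (re.compile(r"\bi feel ([^.!?]+)", re.I),
     "Why do you feel {0}?"),
    (re.compile(r"\b(job|profession|occupation)\b", re.I),
     "What did you say your occupation was again?"),
]

# Stock reply used when no keyword matches, which is where such bots
# tend to change the subject.
FALLBACK = "Let's talk about something else."


def respond(utterance: str) -> str:
    """Return the reply of the first rule whose keyword appears in the input."""
    for pattern, template in RULES:
        match = pattern.search(utterance)
        if match:
            # Captured text (if any) is echoed back into the template.
            return template.format(*match.groups())
    return FALLBACK


if __name__ == "__main__":
    for line in ["I feel sad today.", "What deodorant do you use?"]:
        print(f"> {line}\n{respond(line)}")
```

Nothing in this sketch keeps track of earlier turns, so it cannot follow a conversation; it only reacts to surface keywords in the latest message.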

I’m not impressed by any of the Eugene transcripts so far, which makes me think the only new thing here is that a generation has passed that didn’t grow up goofing around with Eliza on their 8-bit home microcomputers. Or alternatively, we’ve got a generation of 13 year-olds who are so scatter-brained and random that Eugene is actually an accurate model.

My Turing Test would be to ask the AI a question like, “prove to me you’re a real person or I’m going to kill the next person I see.” From what I have seen, Eugene would say something like “You’re boring and I want to play!” or “Killing isn’t nice” or “What did you say your occupation was again?” Not exactly convincing.

Exactly! It has no idea of context….

If the test was for an 8 year old boy with ADHD I’d say you’d get 70% believing it’s true ;-)

+1

Yonkers ago I ran a game server using (back then) a very well known bot script. I tinkered around and ran an “AI” to chat with the other players on top of the bots, giving the appearance the bots were living humans. IIRC I ended up with 20 or so different profiles, each with their own personalities, skill levels, playing style, weapon and path preferences, artificial lag, so on and so forth. They even knew the “stand in the corner to chat” rule.

But they all had a fatal flaw I couldn’t really overcome…. strategy. I always felt it was too easy to fool the bots.

Interestingly, that particular server became popular and always had real human players outnumbering the bots (though at least one bot always remained). And even more interestingly, few people realized there was ever a bot involved.

I wouldn’t go so far as to say people are dumb, but people, even if they know bots are there, have a tendency to attach human characteristics to anything they believe in.

I *finally* found a working bot and asked it ‘Where are your lols?’ because surely a 13 year old would be putting lol in everything. Its answer was ‘I’m glad you agreed. Some another topic?’

THAT DOESN’T EVEN MAKE ANY SENSE!

So I thought that I would ask it your question (prove to me you’re a real person or I’m going to kill the next person I see.). Its response was: “That’s nice that “you see”. At least now I’m sure that your name isn’t “Ray Charles”! Could you tell me what are you? I mean your profession.”

To me, this has statistically proven that 33% of judges are chatter bots. I see no other answer.

It’s worth pointing out that the creator of the program that won didn’t think much of winning either. I don’t have the quote to hand, but it was clear he didn’t think he did anything significant.

It’d be nice if people would work towards creating machines capable of intelligence, rather than machines capable of fooling humans.

What, like IBM Watson?

Watson is more of a “Google bot” for all intents and purposes: it looks up stuff online, or stuff that is pre-stored. For example, when it won Jeopardy, it had full access to all of Wikipedia’s text as well as other stuff that took up 4TB of disk space, unlike normal, where it’s got the internet as a whole to use.

Or better: It’d be nice if people would work towards creating machines capable of intelligence, rather than machines capable of killing humans.

There are many many very successful humans who consider the capability of fooling humans the height of intelligence – all out politicians for one example…

“our”

My computer has replied that the University of Reading is NOT in Russia, but is here in the county of Berkshire in England.

It adds that the team that developed the program was from Russia, but that the test was performed at Reading.

Fixed!

How many people participated in the judging to make up this 30%? Eugene is excruciatingly obvious as a chatbot, and I am disappointed.

Intelligence can be stored. Through personal habits, material preparations, or computer programming we can store our ideas ahead of time, allowing decisions to be made before their application is required. The intelligence in this story comes from the programmer. When they wrote the book, a series of snapshots was created representing their decision tree. The storage and retrieval process makes the exchange super inefficient and reduces the number of possible inputs, but don’t let the remoteness of the programmer fool you into thinking that the intelligence came out of nowhere. Now, if many people’s inputs and decisions were combined and extrapolated to create something new, would the new emergent behaviors still be the original people’s intelligence?

“if a computer can fool a human operator into thinking it’s human, then by definition the computer must be intelligent. ”

Or just really stupid humans. At the rate human intelligence is going, computers will be smarter than us in no time!

I think artificial intelligence suffers from the same limitation as an I.Q. test. In an I.Q. test, we have to assume that the people that wrote the test were the most intelligent. Obviously, because they would score 100% wouldn’t they? So, really you are being measured against some other guy, who may or may not actually be all that smart.

In A.I., the computer requires a human to program in the intelligence, so it can only be as smart as the person programming it.

For true A.I., you need something that can rewire itself and build new neural pathways based on stimuli and write its own programs.

A programmer doesn’t need to “program in the intelligence”. He only needs to program the rules to obtain intelligence. Our brain is following simple rules of physics. Nobody programmed in the intelligence, and yet we are intelligent.

The brain has all the connections and the low level bios (instincts) to allow all the neurons the chance to form their own pathways. You are preprogrammed but not enough to show intelligence. Intelligence comes from learning through experiences that don’t kill you.

Your understanding of IQ tests is not correct. There is no 100%. It is a test to measure a quotient, nothing more. In other words, it is for comparison of people that took the same or equivalent test.

> Obviously, because they would score 100% wouldn’t they?

You don’t score 100% on any IQ test. You get a z-score, or number of standard deviations above or below the mean of the population.
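As a rough illustration of what "a z-score" means here, the sketch below converts a raw test score into a deviation IQ. It assumes the common convention of a scale with mean 100 and standard deviation 15; the function name and the raw numbers in the usage line are hypothetical and do not come from any particular test.

```python
def deviation_iq(raw_score: float,
                 population_mean: float,
                 population_sd: float,
                 scale_mean: float = 100.0,
                 scale_sd: float = 15.0) -> float:
    """Map a raw score to an IQ-style score via its z-score.

    The 100/15 scale is an assumed convention; other scales exist.
    """
    # z-score: how many standard deviations the raw score lies
    # above (positive) or below (negative) the population mean.
    z = (raw_score - population_mean) / population_sd
    return scale_mean + scale_sd * z


if __name__ == "__main__":
    # Hypothetical numbers: a raw score one standard deviation above the mean.
    print(deviation_iq(raw_score=60, population_mean=50, population_sd=10))
```

Because the result is defined relative to the population that took the test, there is no "100%" to reach: the score only says where someone falls in the distribution.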

Either way, it’s pretty easy to write a question that *you* don’t know the answer to; one of the questions on the Wechsler is, “Who wrote Faust?”. You don’t really need to know the answer to write the question, you only have to know it’s a book.

You are correct, I misspoke. But, how does whether or not you know the author of a fiction novel define intelligence?

Whether or not the authors of the test knew the answer, they chose it for a reason: Because they thought intelligent people should know the author of some fiction novel. Dumb people read, too. So what he thought was intelligence influenced the questions. My point still stands.

I haven’t taken an IQ test since middle school, and even though I received a high score, I failed to see how the tests actually measured intelligence.

My father in law always says the only thing a high IQ tells you is that you are good at taking IQ tests.

They measure pattern recognition. Imho, that’s a fine definition of intelligence, provided the test is good enough to pick up a diverse range of pattern types.

A good IQ test, for example, should have no linguistic or cultural biases (which is VERY hard to do).

An IQ test should also not require any knowledge (or only the bare minimum needed to understand how to do the test; no question should require knowledge to answer).

And while there’s no such thing as an absolute figure for measuring pattern recognition… there isn’t for anything else we measure in schools or life either. Arguably some tests for subjects like History or English will be way more subjective, in fact.

Aside from the flaws others have pointed out, you also forget a program (or IQ test) can be made over months or years.

It can also have many people involved.

It’s not the same ratio of “brain hours”.

Who says an int or a float can store numbers? They cannot store numbers, just *represent* a small subset of all numbers. But, that’s enough for a lot of stuff that is meaningful to us.
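A quick sketch of that point, for anyone who wants to see it. Python's own `int` is arbitrary-precision, so the fixed-width case is shown by wrapping a value into a 32-bit C integer via `ctypes`; the helper names are made up for this example.

```python
import ctypes


def float_sum_is_exact() -> bool:
    """0.1, 0.2 and 0.3 have no exact binary representation,
    so the rounded sum does not compare equal to the rounded 0.3."""
    return 0.1 + 0.2 == 0.3


def float_absorbs_one() -> bool:
    """Above 2**53 a double can no longer represent every integer,
    so adding 1.0 rounds back to the same value."""
    big = float(2**53)
    return big + 1.0 == big


def wrap_to_int32(value: int) -> int:
    """Store a Python int in a 32-bit signed C integer.
    ctypes does no overflow checking, so out-of-range values wrap around."""
    return ctypes.c_int32(value).value


if __name__ == "__main__":
    print(float_sum_is_exact())   # exactness of 0.1 + 0.2
    print(float_absorbs_one())    # lost integer precision in a double
    print(wrap_to_int32(2**31))   # one past the largest 32-bit signed value
```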

Who says human beings are intelligent? They are … [discussion follows]. But, that’s enough for a lot of stuff that is meaningful to us.

Indeed. The idea of the Turing test is really not one of absolute intelligence but “good enough to be considered human”.

TechDirt posted what I think is a pretty definitive rebuttal of the claims: https://www.techdirt.com/articles/20140609/07284327524/no-supercomputer-did-not-pass-turing-test-first-time-everyone-should-know-better.shtml

Not a real pass. The transcripts from this are appalling, and they had to pretend to be a 13 year old boy from Ukraine.

The Indian one was slightly more impressive imo but they were just looking up previously created responses.

Intelligence requires the ability to understand.

A CPU (like the man in this test) doesn’t understand, and thus is not using intelligence.

That’s an undisprovable assertion! You can’t demonstrate that anyone or anything “understands”, only that it gives suitable responses to certain stimuli that seem indicative of intelligence.

Likewise for the neurons in your brain.

I don’t know how good this ‘eugene’ guy is, but I’m interested by the Chinese room experiment.

If the man is the CPU and the book the algorithm, can’t we say that it’s the “book” that can understand Chinese? Otherwise it would be saying that it’s your tongue that knows your native language, and not your brain/mind/whateveryoucallit.

I’m just saying that the CPU is just a tool to execute an algorithm…

(Speaking of languages, english is not mine! So I’m sorry if it’s full of mistakes ;) )

Hello, unfortunately the news is not correct…

https://translate.google.it/translate?sl=it&tl=en&js=y&prev=_t&hl=it&ie=UTF-8&u=http%3A%2F%2Fattivissimo.blogspot.fr%2F2014%2F06%2Fno-un-supercomputer-non-ha-superato-il.html&edit-text=&act=url

That only describes what the CPU does; the Intelligence is in the Algorithm & Memory, not the CPU.

The Chinese Room argument makes a complete mockery of the scale of the data required. In order to create a reasonable intelligence, you can’t have a few notepads, some rule books, and a few minutes of time. Think billions of books and notepads, and millions of years of calculating to produce an answer. Now, using those more realistic numbers, the scale is so vast, we cannot meaningfully comprehend it any more, so it would be wrong to trust your intuition.

It was a thought experiment, not a scale model.

And it’s interesting as a thought experiment. But since it ignores computational complexity (the amount of memory and time required to implement the Chinese room’s “book”) and physics (that if you could put that much data into a small enough space to access it in a reasonable amount of time, you’d create a black hole) it’s not a useful illustration of how an apparently-thinking machine might just be an extremely simple algorithm consulting a lookup table, which was Searle’s intent. In other words, because the tree of possible conversation is so large and grows so quickly, brute forcing it is never going to cut it, and pruning the tree is required, making the process perhaps more like our brains than a Chinese room would be.
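A back-of-the-envelope sketch of how fast that tree grows. The vocabulary size, message length and number of messages below are arbitrary assumptions picked for illustration, and the function name is hypothetical; the count treats every word sequence as a possible message, which overcounts real language but shows the order of magnitude.

```python
import math


def log10_conversation_count(vocabulary_size: int,
                             words_per_message: int,
                             messages: int) -> float:
    """Return log10 of the number of distinct conversation histories.

    Each message is one of vocabulary_size ** words_per_message word
    sequences, and a history of `messages` such messages multiplies
    those choices together. Working in log10 avoids huge integers.
    """
    return words_per_message * messages * math.log10(vocabulary_size)


if __name__ == "__main__":
    # Assumed toy figures: 10,000-word vocabulary, 10-word messages,
    # and a conversation only 10 messages long.
    exponent = log10_conversation_count(10_000, 10, 10)
    print(f"about 10^{exponent:.0f} possible histories")
```

With those toy figures the exponent is 400, against a commonly quoted estimate of roughly 10^80 atoms in the observable universe, so a literal table with one entry per history is out of the question even for a very short chat.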

I think you have a fundamental misunderstanding of the thought experiment. It was meant to show that logic doesn’t equal intelligence.

A person (or computer) can follow a set of instructions without understanding what they are doing. Just because someone can follow instructions to bake a cake, doesn’t mean they are a chef. In the same way, just because a computer can answer some questions, it doesn’t mean that it is intelligent.

Right, that’s all true. But since having a lookup table representing the entire conversation tree isn’t tractable, any system able to make *that* particular demonstration of apparent intelligence is going to need to do it some other, more clever way. Likewise, if baking a cake by brute force required access to that much data, then you could assume that the entity baking it had some “chef-like” skills and wasn’t just blindly following a recipe.

I agree with you. My reply was meant to be devil’s advocate, to try to explain what the thought experiment was about. I can understand where Searle is coming from, but it doesn’t mean I agree with the whole premise. At some stage, I still believe we will get there; we are just a long way off at this point in time.

My point is that it attempts to show this by scaling down the problem until it is so small that you can grasp it intuitively. But if you scale a brain down to a size where it is so small that all activity of the neurons can be described with a few books and a couple of sheets of scrap paper, then you have a brain that’s so small that it is no longer intelligent. So, the scale of the thought experiment is important. When you make the Chinese Room as big as your brain, it is too big to grasp intuitively, and it’s no longer convincing as a thought experiment.

But qualitatively, nothing changes between a single book and a whole library of books. Simply increasing the number of instructions and data does not change how the system fundamentally works – there’s no plausible mechanism by which a large quantity of them would somehow change the quality of the system into “intelligent”.

With neurons, the situation is different because first of all we don’t fully understand how they operate and what they do, so as far as we know a single neuron is intelligent – just quantitatively less so than an entire brain.

Searle believes that even a neuron level simulation of a human brain would lack consciousness, even though it acted exactly like an actual human brain. See his reported responses to this criticism in the Wikipedia article here (http://en.wikipedia.org/wiki/Chinese_room#Brain_simulation_and_connectionist_replies:_redesigning_the_room).

Personally, I’m a philosophical materialist, and I suspect most HaD readers are too. If you can simulate something such that it acts exactly like the original, where is the difference? Certainly nowhere we can test empirically.

Indeed. And I worry for future AIs, given how desperate some wetware seems to be to consider itself special.

The problem with the simulation argument is that your simulation is still a mere formal representation of the thing, and not the thing itself. It displays the outward characteristics, but does not replicate the internal workings correctly.

A simulation of a brain still reduces back to the same old book full of meaningless symbols, because it is not a brain. A real brain can in principle utilize e.g. quantum tunneling for all we care, whereas a computer simulating a brain cannot – it can only reproduce something that looks like it – in the same sense that a mere program cannot generate truly random numbers.

Point being, the intuition of the Chinese Room argument is that intelligence is not computational or cannot be merely computational because any such system would reduce back to the man with a book.

The whole other question then is, are we “intelligent” according to this definition, or merely immensely complex programs?

Yeah, and I made a bot imitating a 6 month old blind boy without arms. It never replies, but people think it’s real, so where the fuck is my hackaday featured post?

I understood that it FAILED the test?

http://www.buzzfeed.com/kellyoakes/no-a-computer-did-not-just-pass-the-turing-test

And in come the engineering graduates from the University of Star Trek! It never fails when a discussion of A.I. comes up.

Next topic: time travel. We don’t want to leave the Quantum Leap graduates out.

(BTW, I just time traveled 3 minutes while writing this post!)

Ooooooh you cynical bugger! LOL – Well I am an EE graduate from the University of Technology, Sydney, am I allowed to comment? ha ha.

I don’t know. Did you score 100% on an IQ test? :)

Nah 250%

Ever the Devil’s advocate, I have to admit I do accuse online chat help of being a bot when they answer my questions with stock answers that don’t fit, or didn’t read the question to begin with, which actually may help prove the thesis… ; )

Who asked HackADay?

Any mirrors out there to test this thing out?

Depends what you mean by intelligent. The question reminded me of Scott Aaronson and P vs NP.

So what if you convinced a 1/3 minority of people that text on a screen was written by a 13 year old boy from a foreign country? Did the machine teach us anything novel? Did the people who made it even teach us anything besides how easy it is to manipulate ignorant journalists?

It’s funny you say that, I actually first learned about this chatbot earlier today from a post on Shtetl Optimized (Scott Aaronson’s blog).

In answer to the Chinese room question: yes, the man is intelligent, as he knows how to work with the input and output. He just doesn’t understand Chinese.

Asked “What deodorant do you use?”, answer was “Yes I do. But better ask something else. Where do you came from, by the way? Could you tell me about the place where you live?”

Sorry, pretty bad chat-bot. No idea how 1/3 of the people thought it was a real person.

Well I’m not impressed. Transcript:

“…

me:You said you were chinese?

Are you calling me an elephant buyer, Jabbers?

me:Well 33% of judges are stupid.

Yes.”

Searle’s is a silly argument. Humans also refer to a set of scripted instructions in order to display ‘understanding’ of everything they might appear to understand. It just happens that this script is internal, rather than on a desk in a Chinese room.

“Intelligence” is not a well-defined term, hence Turing’s attempt to define it.

It’s really a language problem, not a computer problem. i.e. my ability to make an “intelligent” computer depends on how you define intelligent.

The Turing test is useful as a standardized benchmark, but not for defining the word intelligence.

Perhaps “Intelligence” should be replaced with “Sentient” or “Self-aware” then.

That is, if a machine acts human in conversation, then it should be given the rights as one.

After all, I think through process of elimination most people would eventually settle on the brain being the defining thing that deserves the rights in a human, whatever the current legal definition is. I wouldn’t, personally, be happy with any way to separate out humans as special that was based on how we work internally, rather than the end result of that working.

No intelligent being would want to act like a human.

John Searle is a troll. The human is merely a CPU running a program stored in the book. Nobody thinks AI will make an isolated Pentium intelligent after you remove it from the computer – no more than the human heart lives when extracted. Nevertheless the whole system quite obviously speaks human language well enough to fool a human, which is the only evidence we have to consider other humans intelligent.

In short: no shit the person doesn’t understand. It’s the book that speaks Chinese.

But you’re begging the question by asserting that a CPU running a program can be intelligent.

I don’t have to prove that to disprove Searle’s shoddy counterargument. The symbol-manipulator’s lack of understanding has no bearing whatsoever on whether manipulating symbols can produce intelligence.

Bingo on that! Elegantly put.

But you haven’t actually disproven anything.

You’ve just asserted that the book speaks Chinese, but books don’t speak.

“which is the only evidence we have to consider other humans intelligent.”

Actually, you only have to consider yourself intelligent and then compare yourself to others. You don’t have to observe that they appear intelligent, you only have to observe that they are the same as you, which you can do without fully understanding how anyone’s brain works.

“The Turing Test was developed by [Alan Turing] in 1950 as an existence proof for intelligence: if a computer can fool a human operator into thinking it’s human, then by definition the computer must be intelligent.”

WRONG

Turing did not intend the test as a proof of intelligence, but simply remarked that since nobody actually knows what intelligence is, testing for it is impossible. The Turing test is simply a test of whether a sufficiently complex non-intelligent(!) machine can fool a person into -believing- that it is intelligent, and what it would take.

If this chatbot was sadly able to convince 30% of the humans judging this into thinking it was a real human, then it says more about the intelligence of 30% of the human race than about the intelligence of machines.

From reading the comments up to this point I see a number of issues in the conversation, so here is some food for thought:

1.) To understand what “intelligence” is AI is not the correct field to be researching. This question has been studied for a very long time in the field of philosophy, and is in fact one of the defining questions of the field. As one could imagine the amount of information available on the subject is considerable.

a.) In an attempt to stay with the conversation thus far, let us briefly examine “intelligence” using IQ. First off let us remember that IQ comes from the field of psychology, and was intended to provide a quantification of a specific human cognitive quality. IQ is not a measure of knowledge (in this context knowledge can be thought of as “stored information”), nor is it merely a measure of the ability to recognize patterns (pattern recognition is generally considered to be a component of IQ). IQ is a measurement of an individual’s capacity for problem solving (which can be thought of as one form of abstract thought). As an exercise, spend a few minutes trying to create a test to measure this quality. Creating such a test is harder than it initially appears, is it not? In fact, it is probably nearly impossible to create a perfect IQ test.

Memory (the ability to retain information) is also a very useful quality, and synergizes powerfully with intelligence. Memory is also much easier to test. Many, if not most, IQ tests also test various aspects of memory. I would imagine most readers can come up with reasonable tests for short term memory, so we will focus on other areas (for now). One way to test long term memory is to quantify the retention of information that the test taker has been provided at some point prior to the test. To do this perfectly is sufficiently difficult to be practically impossible, so tests address information that the test taker has probably been provided at some point prior to the test. To really test accurately (especially at high levels of retention), the questions need to address information that the test taker has been provided with on as few occasions as possible and without coinciding emotional stimuli. In other words, information that the test taker has not been repeatedly reminded of and that is not associated with some strong emotion (which tends to allow human beings to remember things associated with those emotions). This is why a question such as “Who wrote Faust?” may (or may not) be appropriate, but more to the point, why such (similar) questions might be found on an IQ test.

2.) Thought experiments are a tool used to explore an idea. They are not intended to be practical, otherwise they would be regular experiments. Good examples of thought experiments that can be used to understand their proper use include Maxwell’s Daemon, Schrödinger’s Cat, and the Bohr-Einstein debates (an excellent example of how thought experiments can be used to generate/refine ideas). Straying beyond the constraints of the thought experiment does not disprove its validity, and in reality robs the thinker of what may be gleaned from the thought experiment. In the case of Searle’s experiment, he is merely trying to make the point that “computational models of consciousness are not sufficient by themselves for consciousness”.

I can think of at least another dozen points pertaining to this subject that I would like to address, but this post is probably too long as it is. I would like to note, though, that I agree with what appears to be the general sentiment of the comments: that this is merely a chatterbot operating in a scenario that seems to have been engineered to pass the Turing test. Further, passing the Turing test does not indicate intelligence.

“1.) To understand what “intelligence” is AI is not the correct field to be researching. This question has been studied for a very long time in the field of philosophy, and is in fact one of the defining questions of the field. ”

Bingo. That was indeed what I was trying to get at but it went off into the tangent of an I.Q. test.

The problem is that Philosophy is the haven of pseudo-intellects, same with futurists, and even cosmologists. Anyone can claim to be a philosopher or futurist and neither requires any real intelligence beyond the ability to B.S. And Cosmologists fudge around with systems with too large of a scale to experiment, so no real way to prove or disprove their theories. We could debate that simply the creativity and thought involved in these could be called intelligent, but I really don’t want to debate that.

Anyway, I digress. My point is that the current attempts at A.I. have been limited by the intellect of the programmer because they try to cram all the data in up-front. This is like saying a human will never be more intelligent than his parents. If this were true, intelligence would be on a steady decline (hmmm… lol).

True A.I. would require a system that was as basic as possible, accepted input from stimuli, and was able to judge positive and negative feedback, or success and failure. But that judgement would need to come from a personality. How would it know what was negative and what was positive? In humans, this comes from various attributes of the personality. There would need to be punishment and reward in forms that are important to those attributes. Basically, it would have to care about such things. It would require simulated emotional response, as you suggested, in order to associate things to remember. It would have to learn based almost entirely on association.

Start small, how do humans learn to speak? By listening to their parents and others and observing what they do. If they say ‘cat’ and keep interacting with a fuzzy feline, the child learns that it is called ‘cat.’

And the number one thing that really separates a chatbot from A.I. is the ability to evolve. If it took your responses to what it said and used that information to refine its interactions, thus learning how to interact and respond, then it would be much closer to an A.I.

For example, I have quite a few friends that don’t speak English as their first language, and I don’t know their language either. But through talking to them, I learn more of their language and they get better at speaking English.

A chatbot that could recognize when it is being corrected by a human and incorporate those corrections could probably learn to fool a human in fairly short order. It essentially gets smarter and smarter the more you talk to it.
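As a rough illustration of the "recognize a correction and incorporate it" idea above, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the `CorrectableBot` class, the "no, say:" correction convention, and the exact-match lookup are hypothetical, and a real system would need far more than this to hold a conversation.

```python
class CorrectableBot:
    """Toy bot that remembers corrections. Hypothetical design, for illustration only."""

    # Assumed convention: a human corrects the bot by typing "No, say: <better reply>".
    CORRECTION_PREFIX = "no, say:"

    def __init__(self):
        self.learned = {}        # normalized prompt -> reply taught by a human
        self.last_prompt = None  # the most recent prompt the bot tried to answer

    def reply(self, message):
        text = message.strip().lower()
        if text.startswith(self.CORRECTION_PREFIX) and self.last_prompt is not None:
            # Store the corrected reply against the prompt that was just answered.
            corrected = message.strip()[len(self.CORRECTION_PREFIX):].strip()
            self.learned[self.last_prompt] = corrected
            return "Thanks, I will remember that."
        self.last_prompt = text
        return self.learned.get(text, "I do not know what to say to that.")


bot = CorrectableBot()
print(bot.reply("What is a cat?"))            # unknown at first
print(bot.reply("No, say: A fuzzy feline."))  # the human corrects the bot
print(bot.reply("What is a cat?"))            # now uses the taught reply
```

The sketch only memorizes exact prompts, so it gets "smarter" in the narrowest possible sense; generalizing from corrections to unseen prompts is the hard part the comment is really pointing at.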

Would a computer be considered to be intelligent if it were programmed to give responses that would seem human to multiple ‘judges,’ but reduced/refined its functioning via its progressive (neural, or whatever) programming to simply patch one judge through to another, thus leading all judges to believe they were chatting with other people, since they would be–other judges, namely? Yet the derived solution is no more than a switchboard, but if it were the calculated result of a generalized system meant to give the impression of speaking with a human, would the system be intelligent?

What if the system had 13 years of human two-sided conversations (as would a boy that age) and used best-match algorithms to map an input to an output?

Those two possibilities, the switchboard and the memory store, are very different, and I think highlight how silly at least the lay version of Searle’s argument is. As someone already mentioned, the branching for any real world conversation, even with 13 years of data, would quickly outpace the available data for recovering a believable response. But the switchboard solution would almost by definition fulfill the purpose of passing the Turing test. As for Searle, the Chinese book could at best be the 13-year memory store. Given the scenario, the idea that the Chinese speakers on the other side of the slot would ever receive believable answers beyond a few arbitrary direct-hits is contrary to fact. If they are satisfied, then they are probably German speakers with Chinese books asking questions about fields of study for which they have absolutely no knowledge whatsoever.
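For what the "memory store with best-match" idea might look like in its simplest form, here is a hedged Python sketch. The `best_match_reply` function, the tiny `memory` dictionary, and the 0.6 similarity cutoff are all made up for illustration; only `difflib.get_close_matches` is a real standard-library call. It also shows the failure mode described above: anything not close to a stored prompt has no believable answer.

```python
import difflib


def best_match_reply(message, memory, cutoff=0.6):
    """Return the stored reply whose prompt is most similar to `message`.

    `memory` maps previously seen prompts (lowercase) to replies.
    Returns None when no stored prompt is similar enough.
    """
    matches = difflib.get_close_matches(
        message.lower(), list(memory), n=1, cutoff=cutoff
    )
    if not matches:
        return None
    return memory[matches[0]]


# Hypothetical stand-in for "13 years of two-sided conversations".
memory = {
    "how old are you?": "I am thirteen.",
    "where do you live?": "In a small town.",
}

print(best_match_reply("How old are you", memory))  # close to a stored prompt
print(best_match_reply("qqqq", memory))             # nothing similar is stored
```

String similarity is only one possible notion of "best match"; the point of the sketch is that coverage, not the matching rule, is what runs out first.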

This also seems like it might be a little related to some animal testing where animals are evaluated on their ability to deceive.

On a lighter note, if you work for a government agency experimenting with Turing tests, please do not program your systems ‘not to fail’ the tests, as a Prolog programmer might be tempted to do. Eliminating all humans would be a solution. Spencer Tracy, William Shatner, or Tom Baker might not be around to shut things down.

Sorry, but this fails the Turing test big time! I could hack together some chatbot in days that does better. Ask ‘how much is pi times two’ and it responds 3.1415926535 (more digits than 99% of people know by heart; most people know 3.14…). Second question: ‘That is almost correct, how much is this multiplied by two?’ The answer it gives: ‘Not so much so far. Why did you ask me about that? By the way, what’s your occupation? I mean – could you tell me about your work?’ -> FAIL! http://www.princetonai.com/bot/bot.jsp

Pardon my naivete, but I’m really at a loss in understanding what intelligence is. You see, I’m only a cognitive and experimental psychologist, and to tell you the truth my whole field doesn’t seem to have a real definition (i.e. reduction in abstraction). Some develop theories, some agree with some of those, more disagree, but there is absolutely no consensus on what such a definition should even include. It’s like love — everybody knows what it is, but nobody can define it.

Searle’s Chinese Room is a poor example to use here. It was never meant to relate to “intelligence” but rather self-awareness — a conscious mind.

As for the Turing test as it is practiced, it is fatally flawed. The interrogator knows what they’re doing. They know they are supposed to differentiate human from machine. Likewise the human player(s), they know they’re being judged. In addition, those conducting the test know who is doing what, and they’re not about to put some dolt in the player’s chair who might be mistaken for a poor attempt at a Turing program through sheer doltousity, so the human player(s) are not representative of average human smarity (don’t forget half the population is below 100 IQ, whateverthehellthatmeans). At every level the T-T is broken. It is a giant pile of bias-generating slop that would result in no result at all in terms of actual scientific knowledge. The test needs rebuilt. Those conducting it should not know which of the interrogatorS are human — yes there should be machines on that end to keep the human players from figuring out what’s going on. The human interrogators and players should not know they are participating in a Turing test, nor should they be selected, but rather randomly assigned from a pool of naive volunteers whose characteristics are hidden from the operators. It should be a triple-blind test. There are three groups: operators, interrogators and players, and all should not be aware of who is being tested. The interrogators and players should not know what is being tested. All data should be coded and evaluated by the operators only after all testing has been done but before it is revealed which interrogators were human (from zero to a maximum limit chosen) and which players were human (again, zero to whatever). See, now that’s how we run experiments in cognitive psychology and many other fields. Anything less wouldn’t make it through the first round of readings by journal editors. The test Turing described was a thought experiment, which is great for folks like Searle, who’s a philosopher, not a scientist. Turing was not a methodologist in the field in which he proposed to test his ideas, the field of human (or not) behavior.
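The random, coded assignment described above could be sketched roughly as follows in Python. This is only an illustration under stated assumptions: the `blind_sessions` function, the `P000`-style codes, and the idea of returning a separate "sealed key" are hypothetical choices, not a protocol anyone in the thread specified. Operators would work only with the coded sessions and open the key after all scoring is done.

```python
import random


def blind_sessions(humans, machines, n_sessions, seed=None):
    """Randomly pair coded participants as interrogator and player.

    Returns (sessions, sealed_key). `sessions` contains only opaque codes,
    so whoever runs and scores them cannot tell humans from machines.
    `sealed_key` maps each code to (kind, name) and stays unopened until
    all data has been evaluated.
    """
    rng = random.Random(seed)
    participants = [("human", h) for h in humans] + [("machine", m) for m in machines]

    # Shuffle the codes so a code's number says nothing about who holds it.
    codes = [f"P{i:03d}" for i in range(len(participants))]
    rng.shuffle(codes)
    sealed_key = dict(zip(codes, participants))

    sessions = []
    for _ in range(n_sessions):
        # Either seat may be filled by a human or a machine.
        interrogator, player = rng.sample(codes, 2)
        sessions.append({"interrogator": interrogator, "player": player})
    return sessions, sealed_key


sessions, sealed_key = blind_sessions(["ann", "bob", "cy"], ["bot1", "bot2"], 4, seed=1)
for s in sessions:
    print(s)  # operators see codes only
```

Keeping participants unaware that a Turing test is being run at all is a matter of study design and consent procedure, which no amount of code can provide.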

Lastly, in a discussion group with Searle, Basil Hiley (David Bohm’s partner), Roger Penrose and several others from various fields at one of the Appalachian Conferences on Consciousness Studies, Karl Pribram announced that he knew how to create something to pass the Turing test — for something artificial to be accepted as intelligent. When all ears turned to him, he announced “It’s simple. Just make it cuddly.” Had Honda put fleshy padding and/or fur on Asimo they would have sold a lot more, and the buyers would have no less doubt about their “intelligence” than people have of their pets. Oh, you don’t believe they are? Or maybe you do? Either way, that’s only beliefs; we have no objective way to determine “intelligence” in any carbon based life forms much less silicon pretenders, so we go with gut feelings. (Intelligent? If Asimo had been cuddly, by now several would have been adopted).