The folks at Return Infinity just released a new version of their BareMetal OS, a 64-bit operating system written entirely in assembly.

The goal of the BareMetal project, which includes a stripped-down bootloader and a cluster computing platform, is to get away from the inefficient, obfuscated machine code generated by higher-level languages like C/C++ and Java. Writing the OS in assembly increases runtime speed and leaves very little overhead for when every clock cycle counts.

Return Infinity says the ideal application is for high performance and embedded computing. We can see why this would be great for really fast embedded computing – there are system calls for networking, sound, disk access, and everything else a project might need. The ridiculously small system requirements – the entire OS is only 16384 bytes – also lend themselves to very small, very powerful computers.

With projects that are computationally intensive, we think this could be a great bridge between an insufficient AVR, PIC or Propeller and a full-blown Linux distro. There are just some questions about the implementation – we feel like we’ve just been given a tool we don’t even know when to use. Any Hackaday readers have an idea on how to use an OS stripped down to the ‘bare metal?’ What, exactly, would need 64 bits, and what hardware would it run on?

Check out the Return Infinity team calculating prime numbers on their BareMetal Node OS after the jump.

[youtube=http://www.youtube.com/watch?v=ccLef8GLl6g&w=470]

180 amps!!!! I’m sure he meant watts :)

How about for Rainbow Table Generation, Key Hacking, RSA Hacking, SSL Hacking, Crypto functionality using algorithms that use 64-bit ints at their core, like ThreeFish or SHA-384/512?

Now that is interesting, they developed something that is not only amazing but a great learning tool.

I can’t really speak from experience, but I do know that lots (if not most) of scientific computing requires 64-bit precision. I remember hearing that it was one of the early stumbling blocks for using GPUs in research calculations.

If they could get one, or both, of the high-end video card makers on board to either make GPU drivers for BareMetalOS, or to provide the BareMetal guys with enough technical data to make their own, then this might allow for even more performance to be squeezed out of clusters built from x86 PCs with GPUs installed on them. Also, this might allow for faster clusters designed for solving problems that aren’t well suited for being run on vector processors like GPUs.

I suppose this makes sense to me for embedded system applications that require a fair bit of high-precision math – I’m thinking video processing. So maybe useful for a rover or something.

Still, I’m a bit confused as hardware’s fairly cheap. I guess if I were building something to leave the planet, I’d be interested in this kind of thing.

If only consumer OS’s were programmed from the ground up like this…

I’ve been thinking about tackling a BitCoin miner on this platform. Without the overhead I might be able to get some decent performance. Although GPU mining would likely still outpace it by a good amount.

I would love to have a copy of your miner once you’ve got a working model! :D

Keep in mind that the moment you cut straight to assembly, you make yourself platform dependent. Different processor? You could very well have to recode half your project.

It’s a poor reason now when everything is Intel based. When you had PDP-8, PDP-11, System/360, 8008, (8080, 8085, Z80), 68000, 6805, 6800, 32000, 68HC05, Eclipse, VAX, etc. you could use that as an argument. The problem with portability is that nothing ever really was all that portable. When you moved your operating system from a 68000 to VAX or whatever you had to write all the device dependent code for that machine. And nothing was the same as far as keyboards, display, I/O etc. The “Abstraction” layer meant you had to have serious programmers doing all the hardware devices (in assembler) and then i/o it with the slower than hell programming language of the day. Which never was the same between versions. The syntax changed. You had “quick” versions, “Visual” versions and then new compilers so C went another abstraction layer of slowness to C++, then C# where it was really slowed down. There were other languages like PL/I, Basic, Cobol, Fortran, Algol, Lisp etc. that some people had a lot of code invested in. More and more people knew less and less about how anything really worked and just cut and pasted crap code that someone else wrote. Then to retain compatibility all the old routines and bugs had to be retained when some idiot decided there ought to be another layer of abstraction.

Perhaps it could be used as an RTOS for applications that require such a system like avionics and flight controls.

Reminds me of what was said about the first laser invented in 1960: “A solution looking for a problem.”

When I mentioned this article to someone, he suggested data processing for genomics and bio-modeling. You could use this for basic mathematical number crunching and 3D modelling on headless boxes that feed the data back to a central server or machine that the scientist/physicist/astronomer/lab tech could actually interact with. They’ve made virtual universes for testing dark matter theory in server farms of 2000+ boxes running probably Sun Solaris or something for over a month to run one simulation of gravity models.

>>Any hackaday readers have an idea on how to use an OS stripped down to the ‘bare metal?’

This is why people take Computer Engineering :P

I do wonder what computing advantages you gain versus a cluster of Linux boxes; couldn’t you feasibly write code as a kernel module to gain a comparable hardware interface? (Not a CEng!)

So much could be done with this system! It could be used for robotics applications where high bandwidth data streams are in demand, i.e. robotic vision and AI, precise servo movement calculations, UAV auto-navigation and video streaming to an operator. 64 bits of precision may also be used to reduce computational time and achieve higher accuracy in Finite Element Analysis/Modeling for Mechanical/Electrical/Physics simulations!

Customize rendering algorithms – assemble multiple BareMetalOS Boxes for better graphics rendering, like real-time ray tracing! (Maybe just a dream)

I haven’t looked at the specs in detail, but if BareMetalOS gives you hardware level control, why not experiment with linking multiple motherboards together over SATA? You can achieve higher bandwidth communications than with networking (4Gbps vs 10/100/1000 Base T) schemes and avoid network traffic. Massively Parallel Processing to the extreme – and is available to the public! Yeehaw people!

There is also MenuetOS which even has a GUI:

http://www.menuetos.net/

and comes in 32- and 64-bit flavors.

In the video, why does he want to add “1” (the number) to the primes? As far as I know, 1 is NOT a prime.

I don’t get people here. Overhead? What overhead? When have you seen the kernel actually take a reasonable amount of CPU time in any modern (say, +1GHz) CPU?

This may be useful for embedded devices, but for a decent machine the overhead is negligible. Of course, most embedded CPUs aren’t 64-bit either, so this is kind of limited. Especially since they programmed against x86 and not ARM.

I wonder if you can put this in a Linksys WRT54G router?

haybales: …they’d cost $10,000 per license and see one update per decade if we were very lucky.

@IceBrain

The overhead due to an OS is essentially negligible for one computer, i.e. calls to the processor from background programs do not inhibit performance of your main application. Like watching a Youtube video and having a chat client up. However, if someone builds a computational cluster, overhead due to an OS scales with the number of nodes used. So, in the movie industry, where 1,000s of nodes are used to process/render scenes and it may take hours to compute a frame, take that almost negligible OS overhead, multiply it by 1,000 nodes, and you get wasted computational time that may translate to lost money.

In addition, a high performance compute application typically requires a central location to store finished data, in which case hard drives at each node are non-essential to the application. They are then a waste of space, and since they require power to idle they waste money. Again, multiply the power used by each hard drive at each node by the number of nodes and here is wasted electricity that converts to wasted dollars.

What we need for comparison, is an OS written in Java, running on a Java interpreter written in Java.

If Java is half as fast [or faster] as people make it out to be, it would be the optimum solution.

This is useless except for fun.

OS overhead is not a problem in HPC and no one has the time to write apps in assembler.

Also, not having a real scheduler means that you cannot have more threads than cores, and running more threads than cores is really useful to keep the cores busy while waiting for data.

All early operating systems were written in assembly language. IBM, CDC, DEC, CRAY, etc. Unix caught on because people realized that their software investments could outlive whatever proprietary CPU and operating system they were using.

If you are going to do floating point calculations all day long you won’t be spending much time *in* the OS kernel. So kernel performance isn’t that big a deal. Think applications like inter-process communications, networking applications that saturate the box with packet arrivals and context switches, etc. But apparently it doesn’t actually have a working TCP/IP stack yet… Oops, the reference TCP/IP implementation is written in C.

IceBrain, most of them don’t know what PE and ELF are either..or how stack machines work..do something productive..

let me know when they do what a compiler or kernel does more efficiently..

There are really good reasons modern operating systems aren’t written in assembly. If you don’t know what they are, you really shouldn’t be doing any assembly programming.

I remember coding Conway’s life in turbo pascal for a 10MHz 286. I was getting one screen refresh every 30 seconds running under DOS.

I redid it in assembler using the 80×25 character video RAM itself as the memory structure (array) for the program. I got 15 iterations per second, and then 45 per second when I ran it on a DX4-100.

So, there you have it, everyone need look no further for the problem supposedly awaiting this solution….

:-)

@Blue Footed Booby – No, sorry, I utterly disagree. If you don’t know what those reasons are, then you *SHOULD* be doing more assembly. That’s the only way you’ll learn.

(That being said, I love assembly language coding. It’s fun and challenging. And, with the right libraries, just as productive as C or other languages. But, if you have said libraries, you’re already basically coding in C or other said languages, but with a different syntax. So you might as well code in C or said languages to begin with, and skip the learning curve.)

@Anne Nonymous –

“Java” is a thing which comes in many profiles and environments. You cannot benchmark “it” any more than you can benchmark Windows or Linux while not specifying what hardware you are testing.

Java does not provide OS level services either. It’s an abstraction layer which depends on them.

There is a Linux BIOS project which basically produces a less bare-metal, yet still stripped down OS service.

I know this is an OS written for 64-bit, but if I had the ‘know how’ I’d easily run this on the PSP with its 333MHz CPU and 64MB of RAM, which should be plenty for running a small distribution.

Sadly, the only project that Linux has seen on the PSP is the poorly done uClinux port which doesn’t really utilize anything :/

*wishes I was smarter*

I just want a consumer-level bare-metal hypervisor so I can stop VMing in a slower host OS.

Get ESXi 4.0. It was free and is now the future of VMware and costs money in 4.1.

@haybales – You’d see a lot more bugs. When was the last time you saw a compiler bug cause an application to crash? Or even a kernel to Oops?

Also, debugging assembly (machine code) isn’t the same as running your friendly debugger on a corefile. I don’t know what tools they provide, but the reason for higher level languages is to make this bug-find-and-fix problem a little easier to live with.

I fail to see any value in writing an embedded OS in assembly for x86. This is like buying a Bugatti Veyron for your grocery trips.

They promote it for HPC usage? What the HPC area doesn’t need is yet another platform that will be unmaintained in 20 years’ time. There is a reason why the HPC world has OpenMP and MPI. HPC programs tend to live forever if they are doing something useful.

The guy just stumbled upon a bug in wolfram alpha. 1 is not a prime number =)

If I understood the parameters right, the program he used incremented by two, thus it would have skipped 2. Therefore, his program (mistakenly) had to identify 1 as prime to get the true number, as it missed a prime. Wolfram Alpha would have given the correct answer, as it most likely did not skip 2 and correctly identified 1 as non-prime.
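A minimal Python sketch of the compensation this comment describes. It is not the program from the video: the function names are hypothetical, and the loop shape (start at 1, step by 2, count 1 as prime) is only the commenter's reading of the parameters.

```python
def is_prime(n: int) -> bool:
    """Trial-division primality test; 0 and 1 are not prime."""
    if n < 2:
        return False
    if n % 2 == 0:
        return n == 2
    d = 3
    while d * d <= n:
        if n % d == 0:
            return False
        d += 2
    return True


def count_primes(limit: int) -> int:
    """Reference count: test every integer from 2 up to limit."""
    return sum(1 for n in range(2, limit + 1) if is_prime(n))


def count_primes_odd_only(limit: int) -> int:
    """Assumed shortcut: visit only odd numbers starting at 1.

    The loop never reaches 2, so counting 1 as prime (which is wrong
    on its own) makes up for the one prime that was skipped.
    """
    count = 0
    for n in range(1, limit + 1, 2):
        if n == 1 or is_prime(n):
            count += 1
    return count
```

For any limit of 2 or more the two counts agree, which is why the final total can be right even though the individual classification of 1 is not.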

I agree with IceBrain, despite the fact that actually any OS contains pieces of assembly, this is just pointless for computation because the overhead is minimal.

Someone needs to figure out how to use this to generate bitcoins.

Classic assembly OSs like MULTICS and the Burroughs MCP (of TRON fame) had huge advantages in that they were written on CPUs designed with multitasking, multiple users and networking in mind.

What people need to realize is that decades ago a MULTICS machine could be physically taken apart while users were logged in, moved part by part to another location and reassembled – all without the users knowing.

The popular modern systems are driven by economic utility to the sellers (proprietary lock in) not technical merit or good engineering principles.

@IceBrain: There is tonnes of overhead in a modern O/S. Even with a really optimized O/S in modern terms you are using a few hundred MB of memory for holding the O/S and all the data associated. Then there are the tens of thousands of clock cycles being used to monitor user input, sensors, memory management, hard drive activity. When you strip all of that out you have the ability to perform more calculations per second, thus making it better for numeric calculations. The only reason you don’t notice the overhead is because as CPU power increases the necessary clock cycles are a smaller percentage of the whole.

I think this would be a great application for cluster calculations, like rainbow tables or video processing, but there needs to be a lot more development and interaction with the orchestrator, but very cool.

And really, I think the true spirit of hacking is captured when someone does not ask “Why would you want to do that” and instead asks “Why wouldn’t I want to do that”.

A 16k OS? Sweet! Should turn some heads in the field!

@American I totally agree. Most of this situation was caused by the abusive Microsoft monopoly. IMHO, Microsoft did more damage to the technological evolution than good.

The value of simple assembly code is inversely proportional to the length of your CPU pipeline. The longer your pipeline, the more it hurts to handle branches the ‘simple’ way.

Say you have a piece of code that checks a bit and executes routine A on a 1, routine B on a 0, then returns and does some other math with the results. Following that path directly is fine if the CPU completely executes one instruction before starting the next, but practically no CPU does that any more.. they break the execution process into stages, and execute them in parallel.

The classic RISC pipeline is 5 stages long: fetch the next instruction, decode it, execute it, do memory I/O, then do register I/O. If we have to wait for the conditional to decide what the next instruction will be, it means there will be at least four empty slots in the pipeline. The first instruction of routine A or B won’t happen until at least 4 clock cycles after the conditional.

Microcontrollers like the AVR and PIC have short pipelines — only 2 stages — so that doesn’t hurt too much.
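The arithmetic in the last two paragraphs can be sketched in a few lines of Python. This is a toy model, not a description of any real CPU: it assumes, as the comment does, that nothing useful enters the pipeline until the conditional has passed through every stage, and the function names are made up for illustration.

```python
def branch_bubbles(pipeline_depth: int) -> int:
    """Empty slots behind one unresolved branch in the toy model.

    Assumes the next instruction cannot be fetched until the branch
    has cleared all stages, so depth - 1 slots go unused.
    """
    if pipeline_depth < 1:
        raise ValueError("pipeline needs at least one stage")
    return pipeline_depth - 1


def total_cycles(instructions: int, branches: int, pipeline_depth: int) -> int:
    """Cycles to retire a straight run of instructions.

    One cycle per instruction once the pipeline is full, plus the
    initial fill, plus the bubbles each stalled branch leaves behind.
    """
    fill = pipeline_depth - 1
    return instructions + fill + branches * branch_bubbles(pipeline_depth)


# 100 instructions, 10 of them stalling branches
five_stage = total_cycles(100, 10, 5)   # 100 + 4 + 10 * 4 = 144
two_stage = total_cycles(100, 10, 2)    # 100 + 1 + 10 * 1 = 111
```

Real cores resolve branches earlier and predict them, so actual penalties are smaller; the point of the model is only that the cost of a naive stall grows with pipeline depth.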

The IA-64 architecture, OTOH, has a 10-stage pipeline. It also has 30 execution units divided into 11 different groups, and its fetch mechanism can feed 2 bundles (up to 6 instructions) into the pipeline per cycle. The challenge is to keep them all working efficiently at once.

For that architecture, it would be more efficient to load both routine A *and* routine B into the pipeline before the conditional even finishes executing. That way, at least the one you need will be ready to go by the time you decide which branch to take. The CPU will just drop the branch that wasn’t chosen, but that doesn’t cost any more than letting those units sit idle while you waited for the conditional. It would also be more efficient to pipeline any code that follows the call to A/B that can be executed independently of A/B, or which can treat the result of A/B as a value in register R.
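A rough software analogue of "run both A and B, keep the one you need", sketched in Python. Python itself gains nothing from this; it only shows the shape of the transformation a compiler might apply. The bodies of `routine_a` and `routine_b` are placeholders invented for the example.

```python
def routine_a(x: int) -> int:
    """Placeholder for the work done when the bit is 1."""
    return x * 3 + 1


def routine_b(x: int) -> int:
    """Placeholder for the work done when the bit is 0."""
    return x >> 1


def select_branching(bit: int, x: int) -> int:
    """The 'simple' form: decide first, then run one routine."""
    if bit & 1:
        return routine_a(x)
    return routine_b(x)


def select_both_paths(bit: int, x: int) -> int:
    """Run both routines, then pick a result without branching.

    mask is -1 (all ones) when the bit is 1 and 0 when it is 0,
    so exactly one of the two AND terms survives.
    """
    a = routine_a(x)
    b = routine_b(x)
    mask = -(bit & 1)
    return (a & mask) | (b & ~mask)
```

Both functions return the same value for every input; the second does more arithmetic but never has to wait on the conditional, which is the trade the comment is describing.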

Point is, compilers don’t produce convoluted assembly code because the back-end programmers were dumb, and ‘simple’ isn’t necessarily a virtue when you have multiple paths of parallel execution.

I remember hearing that it was one of the early stumbling blocks for using GPUs in research calculations.

Just thought I’d mention that you can write your applications in C/C++, you are not limited to assembly. Just because the OS and libs are written in assembly doesn’t mean that you are limited to assembly.

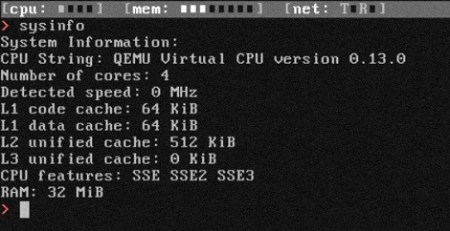

I have it fired up in qemu, and it is a pretty fun toy.

Okay, let’s think about it. You can use this incredibly small, fast OS to create whatever you want, because you could write a C compiler very easily. If you can’t, then you are not in a position to really see the advantages first-hand. And, C is portable and this implementation would be absolutely phenomenal for writing AI algorithms, code crackers, emulators, etc. I would love to have a plain vanilla OS for the best multi-core processor that supported little more than DOS and C. The things you could do with it!

First up, this project is brilliant (I like it), but the comments here have been really out-there. Writing a whole OS in assembler is fun and a useful thing to do as a learning tool, but for people arguing “oh but it’s faster!” for production use: I remain unconvinced that any speed advantages aren’t far outweighed by problems; portability (see next point), maintainability, etc.

The people wanting to run this on their PSP/ARM board/Car ECU are both hitting the nail on the head — good luck, x86-only and realistically cannot be ported without a total rewrite — and sh1t out of luck.

The people arguing that old mainframes had OSes written in assembler are ignoring the reasons: saving every byte was crucial when you had 2MB of memory and it cost you $2M, and old compilers were awful.

Anyone wetting themselves about the newfound possibilities of your x86 machine running this — ZOMG I can calculate X now, I couldn’t before! — probably doesn’t realise how little overhead running, say, Linux has. You will not suddenly get a 50% performance boost by not running an OS written in C. You will not suddenly get “more precise calculations”; you’ll find your FP is exactly as accurate as it used to be and if you find you now can do 64bit integer stuff on your x86-64, you were running the wrong 32bit-only kernel before.

Yes, GCC et al aren’t perfect. If you’re really concerned about your algorithm’s speed, write the core algo (and no more, go read Knuth) in assembler in userland AND hack on GCC to improve it. This is not the same as writing your OS in assembler. If your OS and compiler really do add lots of overhead, *fix them*, as they shouldn’t.

HPC is more than just performance; OSes like Linux have necessary things like clustered filesystems, varied I/O (anyone want to write a FC/Infiniband driver for this asm OS?), and god forbid, compilers. Even FORTRAN. ;-)

Rant almost over: Some of the memory footprint comments are both exaggerated and not as much of an issue as you make out. I don’t see HPC vendors desperate to reduce their kernel footprint from a few tens (yes) of MB to a few tens of KB. There are comments about your OS wasting cycles on managing devices, etc. Are you suggesting your OS polls all of your devices just for fun? If your OS is spending cycles managing your disc controller it is because, and only because, there is something disc-related that needs to be done! Whether your OS is baremetal or not, your data isn’t going to come off disc without you asking for it. The percentage of time “wasted” by this code being written in C is tiny, and the percentage of time your processor spends doing this is tiny, resulting in tiny^2 time wastage having a C driver doing this. I would much prefer my SATA driver/precious filesystem runs 0.1% slower if it’s less buggy!
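The "tiny^2" estimate above is just a product of two small fractions. A quick Python sketch, with numbers that are purely illustrative (neither figure comes from a measurement):

```python
def wasted_fraction(time_in_driver: float, c_slowdown: float) -> float:
    """Share of total run time lost to the driver being written in C.

    time_in_driver: fraction of run time spent in the driver at all.
    c_slowdown: how much slower the C driver is than a hand-written
                assembly one, as a fraction of the driver's own time.
    """
    return time_in_driver * c_slowdown


# Assumed figures: 1% of the time in the disc driver, C code 10% slower.
loss = wasted_fraction(0.01, 0.10)
print(f"{loss:.2%} of total run time")
```

With those assumed inputs the loss is about a tenth of a percent, the same order as the 0.1% mentioned in the comment.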

Wait, not quite over: In many HPC scenarios your application code (be it hand-written assembler, C or FORTRAN, depending on how much of a pervert you are) is running on your CPUs 100% of the time; your I/O will often be separated on different nodes to the computational nodes. Before someone complains about a 100Hz tick interrupt reducing the number of L33t Algorithm Cycles available to play WoW via a neural net, on many systems you may experience NO IRQs unless you want them. (E.g. IRIX has a “switch scheduling tick off” syscall ;-) ) Tickless kernels, huge pages, pinned CPUs, your working set fully RAM-resident; at this point your OS may as well be written in BASIC because it’s hardly having to do anything while your application runs :P :) Plus, you won’t have to spend thousands of person-years writing drivers for all the hardware you need to use.

Then there’s KolibriOS, a Russian-developed system that’s been around for years and written entirely in assembly too:

http://kolibrios.org/

@mstone: It’s easy to handle race conditions like that with clever programming. Without having seen the code for this, I think that is likely one of the things they handled in this OS. It’s a pretty basic consideration in programming for a thread-capable architecture.

I’ll bet SETI could think of a few thousand signals to crunch with this.

Cool project, good learning experience, and somewhat useful.

The problem is who is going to write applications for this: assembler has one hell of a learning curve and most modern programs are written in C/C++.

If they want this thing to be used much, they need to port glibc or newlib to it. I would replace several virtual machines with it if they did. (I am only assuming they haven’t at this point.)

I tried writing my own OS, and it’s a lot of fun and I imagine they do it more for fun than they do for some made up overhead and computational power reasons.

For embedded though this is useless. 64bit x86 processors?! Embedded in what? A space shuttle?

Great Project!! As many have said before it’s fantastic for learning purposes but may very well never find a real industry use.

Either way, just great that someone took the time and effort to program, document and SHARE IT!!

So thank you guys at Return Infinity! ;)