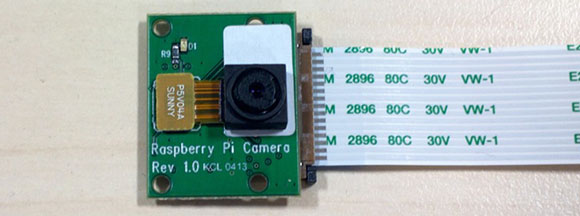

Your Raspberry Pi has on-board connectors for cameras and displays, but until now no hardware demigod has taken up the challenge of connecting an image sensor or LCD to one of these ports. It seems everyone is waiting for official Raspi hardware designed for these ports. That wait is just about over, as the Raspberry Pi Foundation is hoping to release a camera board in the coming weeks.

The camera module is based on a 5 megapixel sensor, allowing it to capture 2560×1920 images as well as full 1080p video with the help of some drivers being whipped up at the Raspberry Pi Foundation.

Considering the Raspi USB webcam projects we’ve seen aren’t really all that capable – OpenCV runs at about 4 fps without any image processing and about 1 fps with edge detection – the Raspberry Pi camera board should be less taxing for the Pi, enabling some really cool computer vision projects.

The camera board should be available in a little more than a month, so for those of us waiting to get our hands on this thing now, we’ll have to settle for the demo video of the Pi streaming 1080p video to a network at 30fps after the break.

[youtube=http://www.youtube.com/watch?v=NDbxqI1yWwM&w=580]

Why is the video vibrating?

Possibly because of YouTube’s video shake correction

Actually that’s probably wrong

Looks like a noisy clock line scanning the sensor

Kinect killer (replacement)?

How exactly? If you ignore the 3D, as you appear to be doing, then a Kinect is like a USB 1.1 webcam?

Kinect

------

640×480 pixels @ 30 Hz (RGB camera)

640×480 pixels @ 30 Hz (IR depth-finding camera)

Pi camera 1.0

-------------

2560×1920 static images

1920×1080 @ 30 Hz
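To put the two spec lists side by side, here is a rough sketch that turns the quoted modes into pixel counts. It uses only the resolutions and frame rates listed above; the function names are made up for illustration, and raw pixel throughput says nothing about depth sensing.

```python
# Modes exactly as quoted in the comparison above: (width, height, fps).
MODES = {
    "Kinect RGB": (640, 480, 30),
    "Kinect IR depth": (640, 480, 30),
    "Pi camera video": (1920, 1080, 30),
}


def pixels_per_second(width, height, fps):
    """Raw pixel throughput of a video mode."""
    return width * height * fps


def megapixels(width, height):
    """Size of a single frame in megapixels."""
    return width * height / 1e6


if __name__ == "__main__":
    for name, (w, h, fps) in MODES.items():
        rate = pixels_per_second(w, h, fps) / 1e6
        print(f"{name}: {rate:.1f} Mpx/s")

    ratio = pixels_per_second(1920, 1080, 30) / pixels_per_second(640, 480, 30)
    print(f"Pi video vs Kinect RGB: {ratio:.2f}x the pixels per second")
    print(f"Pi still frame: {megapixels(2560, 1920):.2f} MP")
```

On these numbers the Pi camera's video mode moves several times more pixels per second than either Kinect stream, which is a resolution advantage only.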

The RPi camera sounds like it has the stats needed to build a Kinect clone. Especially with the higher res, it shouldn’t be all that hard to build a breakout board for the camera pins to add dual cams, giving it 3D. Give it a servoed base and the right programming and you have an interactive no-touch, video-based input interface, i.e. a Kinect.

The Kinect is not just a stereo camera, but actively illuminates the scene with an infrared pattern. So, still not the same even if you add another RPi camera.

There may be a way with two RPis: one for visible light and one for IR, plus a complex IR LED scanning device controlled by the GPIO pins, probably of both RPis. But I can’t imagine it beating the Kinect in performance. (P.S. I’m no fan of M$.)
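For reference, the passive two-camera approach suggested above recovers depth by triangulation rather than by projecting a pattern. A minimal sketch of the textbook relation depth = focal length × baseline / disparity follows; the focal length, baseline, and disparity values are hypothetical and are not taken from the Pi camera or the Kinect.

```python
def depth_from_disparity(focal_length_px, baseline_m, disparity_px):
    """Depth in metres of a point seen by two parallel, rectified cameras.

    focal_length_px: focal length expressed in pixels (hypothetical value)
    baseline_m:      distance between the two camera centres in metres
    disparity_px:    horizontal shift of the point between the two images
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a finite depth")
    return focal_length_px * baseline_m / disparity_px


if __name__ == "__main__":
    # Hypothetical rig: 1000 px focal length, cameras 10 cm apart.
    for disparity in (100.0, 20.0, 5.0):
        z = depth_from_disparity(1000.0, 0.10, disparity)
        print(f"disparity {disparity:5.1f} px -> depth {z:.2f} m")
```

The hard part in practice is finding matching points to measure the disparity at all, which is the problem the Kinect sidesteps with its projected infrared pattern.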

We need the price

I heard it was going to be in the $25 range, but could be wrong. Link here: http://www.raspberrypi.org/archives/3224 in the comments. “Liz”, who I think works with Raspberry Pi, quotes it as $25.

$25 same as the model A, it is in the comments on the RPi site.

The question is – are those drivers open or closed source. I do not see it mentioned.

If people could access the GPU for processing then the OpenCV results would be much better.

It will probably be done the same as everything else interfacing with the GPU: through a mailbox and only a specific number of functions.

One of the reasons nobody else has yet attached a third-party camera to the Pi is that it uses a proprietary bus standard called MIPI-CSI. It’s part of a suite of bus standards designed for the mobile industry (much like the MIPI-DSI bus used for the Pi’s LCD interface).

Unfortunately, it’s very difficult to get any kind of documentation for these buses without shelling out for a corporate membership in the MIPI organization (I’ve tried, for what it’s worth) and apparently it isn’t even a terribly tightly written standard so every different model of display has proprietary differences that you’ll only get documentation for if you get it directly from the manufacturer (usually after having signed an NDA and being a big enough company to make it worth their time to work with you in the first place).

100% closed. A Raspberry Pi Foundation person said users were TOO STUPID to understand the video processing done between the camera module and the hardware encoder (color correction), so they won’t allow us to modify it. They also won’t allow us to connect any third-party CSI camera modules (cheap cellphone ones) – that would require access to the code.

It will be all in GPU blob.

Do you have a link to the “TOO STUPID” quote?

http://www.raspberrypi.org/phpBB3/viewtopic.php?f=9&t=1140

“that takes many man months to get a decent image and you really need access to a image lab with proper lighting set up etc” = you are too stupid, get lost

He’s not saying you’re too stupid so you won’t be allowed to modify it. The GPU code is closed source because Broadcom won’t release the source (something that’s beyond the foundation’s control). Apparently he IS trying to make you feel better about that fact by saying you’d be too stupid to take advantage of the source anyway.

To vpoko:

Err, huh? Broadcom won’t release the source, which is something beyond Broadcom’s/Pi Foundation’s control?

Or were you just not aware that the pi foundation is none other than Broadcom?

It’s not a matter of my awareness; the Raspberry Pi Foundation is categorically not Broadcom, nor an affiliate of Broadcom. James, one of the directors of the foundation, is a Broadcom employee – not a Broadcom director or executive, mind you, just an employee. He has used his connections at Broadcom to get parts (including the CPU/GPU SoC) and to have the almost-useless GPU shims released, but he has no ability to force them to release the full source for the SoC.

Incidentally, Broadcom is a publicly traded corporation, traded on the NASDAQ in the US. The Raspberry Pi foundation is a not-for-profit organization chartered in the UK. Most of their directors, save James, have no affiliation with Broadcom whatsoever. If you’re claiming to have “inside info” that says otherwise, I’m sure both Broadcom’s shareholders and the UK authorities that regulate non-profits would *love* to hear from you.

> Your Raspberry Pi has on-board connectors for cameras and displays,

> but until now no hardware demigod has taken up the challenge of

> connecting an image sensor or LCD to one of these ports.

Some call it a challenge, I call it a closed system without documentation. But it probably helps selling higher margin accessories.

Since the CSI cam goes directly into the GPU, I have to agree 100% with you that the only way a hardware demigod could do anything would be to reverse engineer the GPU and update the closed-source firmware to enable support for it.

It was never meant to be an open source product though. Don’t know why everyone bitches and moans about it being a closed system when it was never intended to be open.

Actually, they like to make a big deal about it being Open Source. I think that’s why they get so much flak for it.

Do you remember when they tried to claim that their graphics drivers were open source when they were not?

Demigod? He’s got minions!

I have some stupid questions:

What does a camera connected to aforementioned camera port buy you over a USB camera?

Quality? Framerate?

Assuming there are advantages, do they outweigh the possible disadvantages (like cost, compatibility, availability, documentation, etc.)?

It connects directly to the GPU. There you can run custom code and gain processing power, plus there is no extra load from USB overhead.

You can’t run SHIT. All you can do is dump the picture into the hardware encoder. There wasn’t even a plan to include access to raw video data (and there probably still is no plan to do that).

It will be the cheapest way to get your hands on 1080p h.264 stream. Currently you need >$150 Logitech usb camera for that.

Can’t wait to get one to create a plugin for the mjpg-streamer using the hardware accelerated MJPEG encoding feature.

Is the lens fixed or is there a way to remove it (and replace it with, say, a telescope)?

(From comments on RPi site) – “The lens is the same piece of kit as the lens on a mobile phone camera. So…no!” http://www.raspberrypi.org/archives/3224#comment-39387

If you’re planning a project based purely on cheap, good-quality recording with some sort of computing device, I don’t see a reason to use the Raspberry Pi.

People get tied to the Pi as if it were the “Holy” Device, while in some respects it is open yet more restrictive than a closed-source device, which is closed but at least does the basics out of the box.

If the quality of the YouTube video is the one and only quality available from the camera, I’d be better off buying a $30 HD webcam and a $30 Chinese Android box from eBay with a 1.2 GHz processor and a lot more RAM, which will also have better video recording capabilities.

Sorry, but the facts of the camera project on the Raspberry Pi say it isn’t a good idea, and advertising a camera with 1080p resolution and image clarity comparable to the 320×240 CMOS webcams without autofocus from the early ’90s won’t make it better.

I’m not trying to knock the project and the EFFORTS the Raspberry Pi people have made, but don’t start with a woman you can’t satisfy; it will only offer displeasure.

Go ahead and start moaning your facts at me but you aren’t going to get an answer.

My comment forgot to log in.

USB 2.0 isn’t capable of much higher than VGA, so an HD webcam needs to be USB 3.0 or TCP/IP.

What cheap webcams can do 720p VIDEO? I see some that claim 720p, but only for stills. I am looking for one.

I suspect there’s a codec chip built into the camera. The TV signal channels over UHF and VHF have far less bandwidth than USB HS, and obviously these work.

http://en.wikipedia.org/wiki/List_of_cameras_with_onboard_video_stream_encoding

It seems the C920 went down in price (or got discontinued); it starts at ~$80.

Don’t forget FireWire. Most “1080p” cameras compress/encode the video (like the Logitech webcams).

The only cameras I’ve seen that spit out raw uncompressed HD video to a computer use some ridiculously high-bandwidth interface like FireWire or SDI.
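The bandwidth argument in this sub-thread can be checked with some quick arithmetic. The sketch below compares uncompressed video rates against the 480 Mbit/s signalling rate of USB 2.0 high speed. The 2 bytes per pixel figure assumes an uncompressed YUY2 (4:2:2) format, which is an assumption rather than anything stated above, and usable USB throughput is lower than the signalling rate once protocol overhead is counted.

```python
USB2_HIGH_SPEED_BITS_PER_S = 480_000_000  # signalling rate, before overhead
BYTES_PER_PIXEL = 2  # assumption: uncompressed YUY2 (4:2:2)


def raw_rate_bytes_per_s(width, height, fps, bytes_per_pixel=BYTES_PER_PIXEL):
    """Bytes per second needed to ship uncompressed video."""
    return width * height * fps * bytes_per_pixel


def fits_usb2(width, height, fps):
    """True if the raw stream is below the USB 2.0 signalling rate.

    This is an optimistic upper bound; real transfers get less.
    """
    return raw_rate_bytes_per_s(width, height, fps) * 8 <= USB2_HIGH_SPEED_BITS_PER_S


if __name__ == "__main__":
    for label, (w, h) in {"VGA": (640, 480), "720p": (1280, 720), "1080p": (1920, 1080)}.items():
        mbit = raw_rate_bytes_per_s(w, h, 30) * 8 / 1e6
        verdict = "under" if fits_usb2(w, h, 30) else "over"
        print(f"{label} @ 30 fps: {mbit:.0f} Mbit/s raw ({verdict} the 480 Mbit/s ceiling)")
```

Under these assumptions raw VGA fits comfortably, raw 720p only squeaks under the theoretical ceiling, and raw 1080p does not fit at all, which is consistent with HD webcams encoding on the camera before sending anything over USB 2.0.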

I’ve built 250 fps image processing (320×240, grayscale) on a beagleboard, starting with OpenCV and then eventually breaking things out. OpenCV is garbage code. There are a few things you can overhaul, though, which will make a lot of difference.

Can you please elaborate on your last comment or give some pointers?

The implementation is proprietary, but I can tell you the deficiencies of OpenCV. (1) OpenCV’s V4L camera driver is absolute rubbish, author should be shot. (2) OpenCV is fundamentally OO, which creates an extreme memory bottleneck. Rework it to pass pointers, not objects. malloc and memcpy are the enemies. This is DSP 101… not even, it’s just common sense. (3) OpenCV uses double-precision almost exclusively. ARMs suck at double precision, and single-precision is more than adequate for real-time graphics. Some GPUs use half-precision, BTW. It’s a tedious port to take OpenCV from double to single, but it’s necessary.
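Points (2) and (3) can be illustrated in miniature. The sketch below is not the commenter's implementation (which is described as proprietary) and does not use OpenCV; it only contrasts allocating a fresh buffer per frame with writing into a caller-owned buffer, and shows the storage cost of single versus double precision. All names are hypothetical, and in CPython the arithmetic itself still runs in double precision, so the real savings described above would come from doing this in C.

```python
from array import array

WIDTH, HEIGHT = 320, 240  # frame size mentioned earlier in the thread


def frame_bytes(width, height, type_code):
    """Bytes needed to store one frame with the given array type code."""
    return width * height * array(type_code).itemsize


def scale_with_copy(frame, gain):
    """Naive style: builds a brand-new buffer on every call."""
    return array("f", (pixel * gain for pixel in frame))


def scale_into(frame, out, gain):
    """Buffer-reuse style: writes into a buffer the caller allocated once."""
    for i, pixel in enumerate(frame):
        out[i] = pixel * gain
    return out


if __name__ == "__main__":
    print("float32 frame:", frame_bytes(WIDTH, HEIGHT, "f"), "bytes")
    print("float64 frame:", frame_bytes(WIDTH, HEIGHT, "d"), "bytes")

    frame = array("f", [1.0] * (WIDTH * HEIGHT))
    out = array("f", bytes(len(frame) * frame.itemsize))  # allocated once, zero-filled
    for _ in range(3):  # stand-in for a capture loop
        scale_into(frame, out, 2.0)  # no new frame buffer per iteration
    print("first output pixel:", out[0])
```

The second function is the shape of "pass pointers, not objects": the processing step never owns memory, so nothing is allocated or copied inside the per-frame loop.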

Thanks!

I just remembered that the raw frame rate was… wait for it… 1100fps. This was on the original beagle with the ~740 MHz processor, and I wasn’t using the GPU or DSP, just pure CPU. 250 fps was after processing, which included histogram equalization, some gaussian blurring, a jacobian (edge detect), and a little bit of ray tracing (just a tiny bit). Anyway, the OpenCV based prototype ran at 10fps or so. I bring this up because I think the beagle products are worth the extra price if you need to do any kind of serious DSP. 3 times the price… 10 times the machine. I still love the RasPi, but I think it’s better suited for “connected world” apps.
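To get a feel for those frame rates, here is a back-of-the-envelope sketch of the CPU budget they imply. It uses only the figures quoted above (a roughly 740 MHz clock, 320×240 frames, and 10, 250, and 1100 fps) and assumes, simplistically, that every clock cycle is available to the image pipeline.

```python
CPU_HZ = 740_000_000      # "~740 MHz", as quoted above
WIDTH, HEIGHT = 320, 240  # frame size from the earlier comment


def frame_time_ms(fps):
    """Time available per frame, in milliseconds."""
    return 1000.0 / fps


def cycles_per_pixel(fps, cpu_hz=CPU_HZ, width=WIDTH, height=HEIGHT):
    """Clock cycles available per pixel, assuming the CPU does nothing else."""
    return cpu_hz / (fps * width * height)


if __name__ == "__main__":
    for label, fps in (("OpenCV prototype", 10), ("after processing", 250), ("raw capture", 1100)):
        print(f"{label}: {fps} fps -> {frame_time_ms(fps):.2f} ms/frame, "
              f"{cycles_per_pixel(fps):.1f} cycles/pixel")
```

At 250 fps that leaves a few dozen cycles per pixel for the whole processing chain, which is why allocation, copying, and double-precision math in the inner loop matter so much.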

Do you have this port available online? We’re going to run OpenCV on a BeagleBoard xM for a Senior Design (capstone) project. Thanks!

Which camera module were you using to get 1100fps?

I don’t remember the chipset, but it was a normal one. It maxed at 125fps. After that, we were just pulling the same frame multiple times. In addition to being plainly slow, I recall that the reference driver actually had a busywait to prevent this sort of thing — which I promptly removed. busywait is the way of the devil. The point is, if there were a 1100 fps camera, it could have been used. There were no shortcuts in the driver to skip frames.

These comments have convinced me never to do a hardware project for the community, because the amount of sneering negativity really wouldn’t be worth it.

Just don’t run around announcing OPEN SOURCE drivers and calling real open source coders “nobodies” and you will be fine.

Unfortunately every single last article posted to hackaday over the past few years which never stated “open source” (or were even posted by the projects creator for that matter) seems to disprove your statement.

Although it is a bit humorous to see a person react to their project being posted here by someone else months prior, reading the comments and being accused of attention whoring for a worthless hack that they only documented online for their own use :P

That and do not lie about the price.

I would like to get one to integrate with my beagleboard … sounds like fun time ahead.

Why would you? AFAIR, the BeagleBoard-xM has a proper parallel camera interface. You won’t connect a CSI camera to it (not to mention you won’t find CSI camera documentation).

I’d like to see a DSI LCD with touch before a stinking camera.
