Just a few days after Christmas last year, AirAsia Flight 8501, traveling to Singapore, tragically plummeted into the sea. Indonesia has completed its investigation of the crash and just released the final report. Media coverage, especially in Asia, has been extensive. The stories are headlined by pilot error, but as technologists we can find lessons deeper in the report.

The Airbus A320 is a fly-by-wire system meaning there are no mechanical linkages between the pilots and the control surfaces. Everything is electronic and most of a flight is under automatic control. Unfortunately, this also means pilots don’t spend much time actually flying a plane, possibly less than a minute, according to one report.

Here’s the scenario laid out by the Indonesian report: the rudder travel limiter system raised an alarm four times, and each time the pilots cleared it following normal procedures. After the fifth alarm, the plane rolled beyond 45 degrees, climbed rapidly, stalled, and fell.

Pilot Error?

The media headlines focus on the later steps in the failure chain, in part because the pilots were never trained to handle the type of upset that occurred. It wasn’t just AirAsia that omitted this training on the A320. All airlines did, because Airbus, the aircraft manufacturer, did not expect the aircraft ever to experience such an extreme upset. Note that France, as the host country for Airbus, participated in the investigation.

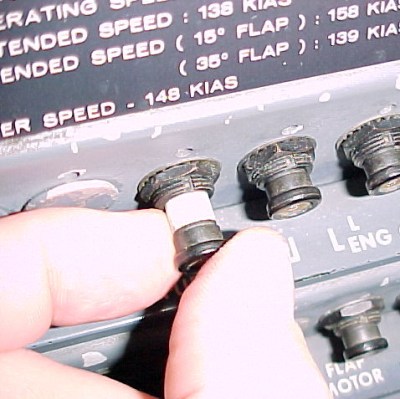

As technologists we need to look further. The technical root cause was cracked solder joints on circuit boards in the rudder limit control system, which limits the amount of rudder movement at high speeds. A key point is that this same system failed 23 times in 2014. Each failure was treated as minor, and the fault was never fixed.

As in many situations, the failure chain is a cascade of human failures to respond properly to a technical fault. Barely mentioned in most reports is how the pilots attempted to fix the fifth rudder control fault. They followed normal procedures for the first four faults, but the last time they opened and reset a circuit breaker while in flight. Somehow that disconnected the autothrust and autopilot, and they were never restored. This left the pilots solely in control of the plane through the fly-by-wire system.

Tragic Sequence of Events

To summarize, here are the three key failures:

- Bad solder joint,

- Cycling the circuit breaker,

- Inadequate recovery training.

We’ll set aside the failure to troubleshoot the board properly. That is a human failure, but also a larger policy issue for AirAsia rather than a directly technical one.

Bad solder joints occur despite best efforts to prevent them in manufacturing. Diagnosing an intermittent joint failure can be a nightmare, so we can sympathize with the aircraft maintainers. How should we handle intermittent failures in critical or important systems? Clearly the system was checking its integrity, because it kept issuing warnings throughout 2014. Is it feasible to have a system refuse to function once a certain number of failures occur? I’d suggest that after 6 faults it could escalate to a heightened alert, like refusing to boot when powered on in a safe environment (e.g., parked on the ground). Basically the system says, “I know I’m bad, now fix me.”
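A minimal sketch of that suggestion in Python, assuming the unit keeps its fault count in non-volatile memory and can tell whether it is parked on the ground. The class name, the `parked_on_ground` flag, and the limit of 6 are illustrative assumptions, not taken from any real avionics design.

```python
from dataclasses import dataclass

# Hypothetical threshold taken from the suggestion above ("after 6 faults").
FAULT_LIMIT = 6


@dataclass
class SelfTestMonitor:
    """Illustrative fault counter for a non-critical unit (not a real avionics API)."""

    fault_count: int = 0  # assumed to persist across power cycles

    def record_fault(self) -> None:
        # Called whenever the built-in self-test detects a fault.
        self.fault_count += 1

    def clear_after_repair(self) -> None:
        # Only maintenance should reset the count, after the board is fixed.
        self.fault_count = 0

    def may_boot(self, parked_on_ground: bool) -> bool:
        # In the air the unit always comes up; refusing to run there helps no one.
        if not parked_on_ground:
            return True
        # Parked on the ground, refuse to boot once the limit is reached:
        # "I know I'm bad, now fix me."
        return self.fault_count < FAULT_LIMIT
```

The design choice is that the lockout only applies in a safe state, so the escalation forces a repair decision without removing the function in flight.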

Why did the pilots mess with the circuit breaker? One report says the pilot had seen a maintenance worker cycle a circuit breaker to clear a fault. That’s fine on the ground but not in the air. Why would a pilot try this, especially since pilots are advised not to reset circuit breakers in flight unless the system is flight critical? The control system here is a safety feature, but not a critical one, so why not just leave it off?

People in general get overly comfortable with technology because it is everywhere. There are all kinds of jokes about non-technical relatives doing something crazy to a computer because the same action once fixed something else.

Unfortunately, this often means people don’t know what they don’t know. In this case, the pilots apparently did not know that cycling that breaker would disrupt other systems. Yes, it sounds strange that it would, and I can’t explain why. If true, it appears to be a systemic problem that should be addressed. In our work, we need to make sure that failures in one part of a system do not upset critical parts elsewhere.

The pilots weren’t trained to handle the flight upset because even Airbus did not expect the aircraft ever to experience such an extreme upset. I guess since Murphy isn’t French they don’t expect his effects to occur there. This assumption probably derived from the aircraft being fly-by-wire: the expectation was that the aircraft would not let itself become upset to this degree. But cycling the circuit breaker disrupted the automatic flight systems.

Wrap Up

Failures in complex systems take a lot of effort to track down. In this situation we see how three separate actions led to the failure, with a fourth, the maintenance failure, contributing greatly. The total failure might have been avoided at several points: if the solder joints had not failed, if the pilots had not cycled the circuit breaker, if the pilots had restored the automatic flight computers, or if the pilots had reacted properly after the upset.

Even as hackers we need to keep in mind when and how failures can occur. We’ve written articles on electronic door locks created by hackers. How do you get in if the power goes off, or if a bad solder joint fails after a few hundred openings and closings? Hopefully a key will override the electronics. Fortunately, most of the hacks we see are not critical, and their failures would not be life-threatening. Let’s keep it that way.

This is really a tough situation for a pilot and a maintainer. I have a lot of experience repairing aircraft wiring with the U.S. Air Force. We faced a very similar situation that led to the death of 8 members of my wing. Bad soldering was the cause.

In the case of this Airbus, I place the blame solely on the airlines. When multiple aircraft are experiencing a problem with their control systems, it’s time to stop and figure it out before someone gets hurt. The sad truth is that companies don’t do this because it’s less expensive to wait until someone is hurt than to be proactive.

How is this the airline’s fault?

Aviation law mandates that pilots follow the Flight Operating Manual. If they had followed the manual as they are legally required to, nobody would have died.

The rudder limit control system failed 26 times, and it could have failed 126 times. It really doesn’t matter how many times it fails, as long as the pilot sticks to the Flight Operating Manual. And the entire thing can fail without causing the aircraft to crash.

Aircraft are designed to tolerate failures. Hell, an A320 could have an entire engine fail, and if the pilot stuck to the manual it wouldn’t crash.

96% of all aircraft crashes are caused by pilot error. Humans make mistakes; it’s a scientific fact. The only way to prevent this would be for the airlines to get rid of the pilots and let the autopilot do everything, but, ironically, people wouldn’t feel safe getting on a plane without a pilot.

And the autopilot would have prevented the crash if the pilot hadn’t pulled its circuit breaker and turned it off.

Thomas, it’s not entirely true that any multi-engine aircraft, even an A320, could suffer any conceivable type of engine failure and always be fine. An uncontained engine failure with shrapnel striking flight controls during a critical phase of flight, such as takeoff, could easily cause enough loss of control to make the aircraft unrecoverable, particularly if the aircraft isn’t stabilized at the time of the failure, such as during a bank on climbout. If you disagree, look at how much control was lost on the Qantas A380 when its engine blew.

Yes, this *is* exceptionally unlikely, especially today, but that doesn’t mean it’s impossible.

As for whether this is the airline’s fault: this kind of repetitive fault that maintenance never properly diagnoses and repairs is usually the result of maintenance directives and pressure from leadership to keep aircraft in service.

I personally think that the blame should be shared. I worked on mission-critical equipment in the engine room of a submarine for several years. Yes, operator error happens and equipment failure happens. But when you see the same failure occurring over and over again on the same piece of equipment, I believe the manufacturer has a duty to go further to fix the problem, especially when it could cost human lives. Yes, this could’ve been avoided by the pilots understanding their own systems better, but as an engineer you should always remember the golden rule: when you’re doing your job right, someone else isn’t. Good design engineers have to remember that when people are put in control of their systems, it doesn’t mean those people know what they are doing. Hence, don’t fix something when it’s not broken, but do fix it when it is. Especially when lives are at risk.

BlurD – You BUBBLEHEADS have one thing AIRBUS pilots do not. Hot-swappable PC boards. Like if your EAM system had a cold-solder joint your “Sparks” would not have to break out his soldering iron and reheat the joints. He (or She now) would just go to ship stores and get another PC board and swap it out live. You guys even fix your own electronic photocopier machine too (also board swapping).

AIRBUS pilots don’t have that luxury of redundant stores. Nor are they trained on board swapping. All they do is recycle a circuit breaker (and it works for the most part). I think your skipper would keel-haul you if you did that on a boomer or fast-attack. A lot of submarine systems don’t react well to hard resets like that. However a controlled reset is probably OK in an emergency.

I don’t think Flight 8501 had anything to do with cold solder joints, rudder limit failures, computer failure, or pilot error. I think the same country behind your infamous P.O.3C Ariel J. Weinmann (look it up) was behind this via a MITM* exploit over a critical maintenance telemetry VHF radio link AIRBUS calls AIRMAN. Why? To make Malaysia open up to new US military base installations in 2015-2016. The 7th Fleet (replete with boomers and FA’s) is loitering off the coast in the Indian Ocean. I’ll guess that PRC JINs and YUANs are loitering there too. Remember the COLD WAR? It’s back!

*MITM – Man in the middle

ThomasHoffLTD – The autopilot (AP) automatically disengaged NOT because the pilot pulled a circuit breaker. That workaround trick actually worked, as it always does on the AIRBUS A320. The airline did try to fix it, but sometimes one gets through. It is NOT a critical avionics problem. It only makes the rudder intermittently lock into too small a deflection, limiting the pilot’s turning right or left. The AP automatically disengaged because SOMEONE (maybe not even the pilots) put the plane in a nose-up attitude, causing it to stall. The AP does this because recovering from a stall is not its job. It’s the human’s job.

I have reason to believe (yes, conspiracy theory) that the aileron commands were sent from the ground via a VHF radio link built into the AIRBUS at the factory. Who would do such a dastardly thing? It’s a short list…

SQTB

It’s actually difficult to really put blame on the manufacturer here. Fly-by-wire might cause some problems, but it also avoids others. However, if the pilot puts the system into a state whose boundary conditions they don’t know, well, the blame’s on them, I’d say. If you cycle your household fuse, you shouldn’t be surprised to lose the Word document you had open…

A great watch is a series called ‘Airline Disasters’, possibly still on Netflix. My favorite is still the Gimli Glider, mostly because it was a really dumb mistake and in the end no one died.

I remember reading about the Gimli Glider in Reader’s Digest. Too bad the writer of the story used it as a rant about the stupidity of converting to the Metric System.

I just read that here – http://www.damninteresting.com/the-gimli-glider/

It’s interesting. In the end the pilot saved the day through using a skill set that was beyond his airline training.

I love that show (it’s called “Mayday” in Canada), and this article reminded me of another crash where the pilots used a circuit breaker to turn off an annoying warning, but, unbeknownst to them, it also disabled the flaps warning. During takeoff they forgot to deploy the flaps and the plane crashed. Don’t play with the breakers.

Air Crash Investigation, aka Mayday, which people put up on YouTube, is my personal favorite. Not sure if ‘Airline Disasters’ is the same series, but I don’t think that’s one of its alternate titles. I prefer that series in general, especially over similar ones like “Seconds to Disaster.” It strips the sensationalism out of the story and tries to present it as factually as possible. For a little bit of spice, yes, they do dramatize some things, but still.

The same system failed 23 times in 2014 before the crash? Given that high failure rate, and since the issue would have been difficult to reproduce on the ground, why didn’t anyone pull the module out and look closely? They could have noticed the solder crack and issued a bulletin telling operators of all planes using the same system to take a close look.

Instead they played ostrich and let the pilot deal with it, which eventually lead to the disaster.

ahh ahaha… I see what you did there… “lead”…

Uhhh Genki meant to say “led” not “lead”. I don’t think he was trying to be clever…

Genki – OSTRICHES don’t really put their heads in the sand. That’s a myth. They are fearless and can even kill a lion with their talons. Also, the plane did not crash because of its solder joints. It crashed because the fly-by-wire system got conflicting commands, sending it into a fatal stall and subsequently into “airplane upset”. The pilots could not recover, as the system was not cooperating with their efforts to regain full control. I believe that something outside the plane was interfering with the FBW system.

Yup, couldn’t agree more.

Speaking of “lead”, I wonder if aviation electronics are subject to the RoHS lead-free nonsense?

Yes.

There are exceptions, for example repairing old equipment, but civil aircraft mostly use fairly ordinary components (neither military-temperature nor space rad-hard…), particularly in pressurised areas, and as the whole industry is moving to RoHS manufacturing and recycling, there is no choice but to follow the trend.

For what I work on, HGS Systems, it depends on the contract. Some contracts require RoHS, including switching to a water-based conformal coating. Other systems are not under the same restrictions, so we are free to use lead and rosin-based chemicals.

The report explains that the maintenance procedure in use classified each incident as a minor event. Because of that, they failed to notice that the growing number of incidents had passed the limit set by the manufacturer. The report also details how the design of the computer was improved over the years to address fatigue of the solder joints. This was a long-term process because this computer was not critical to the safety of the aircraft and already had two redundant channels.
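The gap described here, each incident judged minor on its own while nobody tracks the total, can be illustrated with a short Python sketch. It assumes incidents are logged with dates and that the manufacturer's recurrence limit can be expressed as a count within a trailing window; `window_days` and `limit` are hypothetical parameters, since the actual threshold is not quoted here.

```python
from datetime import date, timedelta


def exceeds_recurrence_limit(incidents: list[date], today: date,
                             window_days: int, limit: int) -> bool:
    """Return True if more than `limit` incidents fall in the trailing window.

    Each incident may be "minor" on its own; this check looks only at how
    often the same fault recurs.
    """
    window_start = today - timedelta(days=window_days)
    recent = [d for d in incidents if window_start < d <= today]
    return len(recent) > limit
```

For example, 23 incidents spread across 2014 would trip any limit below 23 within a 365-day window, while a check that only grades the severity of each incident would never fire.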

A basic problem this highlights is how problems are reported and handled.

If a problem is reported (by a pilot in this case) and no one takes ownership of the problem then the workaround becomes the fix. Then when the users become frustrated with the workaround, things start to go wrong, in this case in a very bad way.

Too many people work on identifying workarounds and not enough on identifying root causes, mainly because root-cause work runs against the metrics.

Most accidents are put down to “human error” because the human is who touches the controls last after the computers have given up trying to figure out what the hell is going on.

This is the gold standard among test pilots. If all the fatalities are NOT from human error, why would you get in the plane? It is a rationalization in one sense but certainly true in another. The cracked wing spar is an error by the designer, the fabricator, the inspector, or the last pilot to make a hard landing or fly too fast in turbulence, etc. There is always a person at the end of the chain, not the machine.

The point I was trying to make is that, in the public perception, the machines are never at fault, because it’s always the pilot who gets the blame, never the plane.

This leads to notions like “Let’s automate everything. Self-driving cars, what a great idea!” without understanding that in actual reality the computers are far, far worse at it than people are.

The flight computer had not given up; it was safely operating the aircraft even when the rudder limiter computer failed. The aircraft would have continued flying safely if the pilot hadn’t disconnected the flight computer.

The pilots did not disconnect the flight computer intentionally. A separate fault or flaw in the system caused the flight computer to disconnect when an unrelated system was power-cycled.

Imagine the fuse had blown on its own because of a genuine short in the failing subsystem. The same fault would have happened without the pilots’ interference, with the same end result. The pilots were ultimately not at fault.

The report clearly says that both flight augmentation computer units were de-energized, at 23:16:29 UTC and 23:16:46 UTC respectively, causing the FAC 1+2 FAULT. The two flight augmentation computer units are part of the seven computer units that together form the flight computer. Those units are not supposed to be de-energized the way they were. Each redundant unit has its own fuse and supply, so that a single fuse cannot cause such a mess. You have to realize that the RTLU is one of the channels of the FAC, so it’s far from an unrelated system: it’s inside the system they disabled.
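The design rule described here, that no single breaker should be able to de-energize every channel of a redundant function, is easy to check mechanically. A minimal Python sketch follows; the breaker names `CB_A`/`CB_B` and the wiring table are hypothetical, not the A320's actual breaker layout, and each unit is assumed to be fed by exactly one breaker.

```python
# Hypothetical wiring table: which units each circuit breaker powers.
# Assumes each unit is fed by exactly one breaker.
BREAKER_LOADS = {
    "CB_A": {"FAC1"},
    "CB_B": {"FAC2"},
}

# A function survives as long as at least one of its channels has power.
FUNCTION_CHANNELS = {
    "flight augmentation": {"FAC1", "FAC2"},
}


def functions_lost(pulled: set[str]) -> set[str]:
    """Return the functions left with no powered channel after pulling `pulled`."""
    unpowered = set().union(*(BREAKER_LOADS[cb] for cb in pulled))
    return {fn for fn, channels in FUNCTION_CHANNELS.items() if channels <= unpowered}


def single_breaker_hazards() -> dict[str, set[str]]:
    """Map each breaker that alone takes out a whole function to what it kills."""
    hazards = {}
    for cb in BREAKER_LOADS:
        lost = functions_lost({cb})
        if lost:
            hazards[cb] = lost
    return hazards
```

This wiring passes the single-breaker check, yet pulling both breakers still removes the function, which matches the sequence the comment describes.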

Ultimately, the pilots were fully trained to fly without any of the flight computer units: this is the mode known as alternate law, where all protections are lost.

In this accident, the pilots were at fault not only for disconnecting both FACs without properly reactivating them, which logically put the aircraft in alternate law, but also for being unable to aviate the aircraft: the highest-priority task, and the most basic requirement for flying any aircraft at all.

Are you confirming or denying?

If the faulty circuit board in question had had a short circuit and the fuse had blown due to that instead of pilot action, would the flight augmentation computers power down? If yes, then the fault is not with the pilots; that’s a design flaw or an additional problem in the circuit.

The resetting of the circuit breaker should not have caused the computers to drop offline.

With computer servers, redundant hardware is expected in case something fails. This helps not just in reducing downtime but also in troubleshooting: if you have two identical mirrored hard disks and one fails, you can use the other and be sure the problem isn’t somewhere else, e.g. a motherboard fault. I don’t see how the electronics controlling a plane can be so large or power-hungry that it is infeasible to have redundant critical systems available.

The rudder limiter computer had two redundant channels, only two because it is not critical to the safety of the aircraft. If you look at the report you will find a photo of the main parts of this computer. But adding redundancy to the computer would not address the real cause of this accident: pilots who disconnect the flight computer while being unable to aviate manually. Keep in mind that, the way aircraft are operated today, the pilots are supposed to be the redundancy for any computer failure.

I found it interesting that both channels had a solder crack per the report.

Agreed. The report also details how they improved solder-joint reliability over the years. They implemented the normal measures to lower the risk, but lowering a risk never completely eliminates it.

The real kicker? I doubt all the other planes in the air will have their boards checked.

“Does the failure of this cost more or less than maintaining it?” ugh…

I wonder if that may have formed part of the problem. It seems that people believe that there was some other event or failure apart from reverting to alternate law.

I could imagine that if you were designing a redundant system that detects an unwanted limit, you would *not* want the overall system to take corrective action when only one input channel indicates an error condition. When you have two (of two) error inputs, you could only assume (on the basis of probability) that the inputs were correct, and in that situation you would expect the overall system to take corrective action.
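The logic described here is a two-out-of-two vote: act only when both channels agree that the limit condition exists, and merely flag a disagreement. A minimal Python sketch, with action names chosen for illustration (nothing here comes from the actual rudder limiter design):

```python
from enum import Enum


class Action(Enum):
    NONE = "none"        # neither channel reports the condition
    MONITOR = "monitor"  # channels disagree: log or warn, but don't act
    CORRECT = "correct"  # both channels agree: take corrective action


def vote(channel_a_error: bool, channel_b_error: bool) -> Action:
    """Two-out-of-two voting on a pair of redundant error inputs."""
    if channel_a_error and channel_b_error:
        return Action.CORRECT
    if channel_a_error or channel_b_error:
        return Action.MONITOR
    return Action.NONE
```

The trade-off is that a 2oo2 vote avoids acting on one bad channel, but if both channels share a failure mode, as the solder cracks in both channels suggest, the vote offers no protection.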

Perhaps the “trim” computer didn’t act on a dual error input as it was getting the correct feedback from the flight path data indicating that rudder trim was correcting flight as expected.

What then happens in alternate law? Can this cause a rudder hard over?

A rudder never gets anywhere near limits in high altitude flight. At that speed, high rudder inputs would send the aircraft into a spiral.

Yup, one Coffin-Corner effect. Completely overlooked in this HaD post.

There wasn’t anything in the full report that explored what scenarios could evolve from a dual limit input failure.

Critical functions in aircraft are both redundant and dissimilar. There are several flight control computers, and they are not all identical. There are several independent electrical power generators and circuits. The A320 has 3 hydraulic circuits (it is fly-by-wire, but the electrically controlled actuators are hydraulic).

Some equipment is not indispensable, and the aircraft can fly without it. An airliner can even fly and land with only one engine.

The accumulation of failures makes disasters. Here, bad equipment + bad pilots.

Sorry, but this crash had little to do with the plane being automated and fly-by-wire. Those were indirect, contributing factors at best. The same accident could have happened even on a conventionally controlled (non-FBW) plane. Despite the fault, and even with the automation disconnected (the captain didn’t realize that resetting those circuit breakers would not bring the computers back online; some button presses were required to do so), there was nothing physically wrong with the plane. It was perfectly flyable and controllable, and the flight instruments were working as well. Only the automation was degraded, not completely disabled (Airbus calls that regime “alternate law”). Basically only some “conveniences”, like some of the protections, were lost, but no flight controls.

The reason this gets blamed on human error (as did the Air France crash in the Atlantic) is that the first officer corrected the uncommanded roll (a consequence of the automation dropping offline with nobody suddenly “flying the plane”) by pushing the side-stick to the side *and back*. AND KEPT PULLING BACK. That led directly to the plane zooming up and stalling. A stalled plane doesn’t fly: there is no lift to keep it up, so it rolls and drops out of the sky.

The captain realized what was happening, but he didn’t override the first officer’s controls properly (one has to hold the override button until it latches, not just press it briefly as he did), so they were both controlling the plane. In such a dual-input situation the control system sums the inputs, same as the old mechanical systems did: if one pilot is pushing the stick forward and the other pulling back, nothing happens. And that kept going on all the way down … :(
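A minimal Python sketch of the summing behaviour described above, assuming pitch inputs normalised from -1.0 (full forward) to +1.0 (full back), a combined command limited to one stick's full deflection, and a `captain_has_priority` flag that is only True once the takeover button is actually held or latched. The deadband used to flag dual input is a made-up value, and the function names are illustrative.

```python
MAX_DEFLECTION = 1.0  # normalised full deflection of a single stick
DEADBAND = 0.05       # hypothetical threshold for "this stick is being moved"


def clamp(value: float, low: float, high: float) -> float:
    return max(low, min(high, value))


def combined_pitch(captain: float, first_officer: float,
                   captain_has_priority: bool = False) -> tuple[float, bool]:
    """Return (pitch command, dual_input_flag).

    Inputs run from -1.0 (full forward, nose down) to +1.0 (full back, nose up).
    """
    if captain_has_priority:
        # With priority held or latched, the other stick is ignored.
        return captain, False
    dual_input = abs(captain) > DEADBAND and abs(first_officer) > DEADBAND
    # Without priority the inputs are summed and limited to one stick's travel.
    return clamp(captain + first_officer, -MAX_DEFLECTION, MAX_DEFLECTION), dual_input
```

With one pilot pushing fully and the other pulling fully, the command is 0.0 and nothing happens. A gentle push of -0.25 against a full pull still yields +0.75, i.e. nose up, which is why a priority press that never latched changes little.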

Recognizing a stall and recovering from it by pushing the control column/yoke/side-stick FORWARD to lower the nose and regain airspeed is basic flight training. Unfortunately, this is where reliance on automation can come into play: they have never experienced the problem before, because pilots today almost never hand-fly (it is often even forbidden by airlines’ operating procedures, on economic and safety grounds!). That leads to stress, confusion in an unfamiliar situation, and then an incorrect reaction. Add to that the fact that with some of the current training systems one can enter the cockpit and start working as a first officer with only about 50 (!!!) hours of flight time, much of it in a simulator rather than an actual plane. So manual handling abilities (flying the plane) are eroding, or not even present, in many pilots. This was the direct cause of the Air France crash and also of the Asiana crash in San Francisco in 2013, where a crew was unable to land a perfectly healthy plane by hand, without the automation, on a clear day.

The other reason human error is to blame is that the captain decided to use an unapproved, non-standard troubleshooting procedure for what was at best a nuisance problem (a loud caution every few minutes, but nothing affecting the controls or safety). He could have simply silenced the warning about the original system fault caused by the broken solder joint and carried on to the destination (it was only a short flight). In the worst case, when the standard troubleshooting procedure (a computer restart) didn’t fix it, he could have decided to divert and land at the nearest suitable airport. Instead he started pulling circuit breakers, directly causing the problems above, and then failed to handle the situation.

I fully agree with your first sentence, because in this case, without automation and fly-by-wire, the pilots proved unable to fly their aircraft, even though that is the highest-priority task.

What’s disturbing is that the captain told the first officer to “Pull down” 4 times when the first stall warning occurred, but said nothing like that when the second stall warning started: he only said “Slowly” 5 times. The report points out that the captain didn’t call out when he took control, as he must, and that the “dual input” warning was suppressed by the stall warning. So the first officer didn’t know that the captain wanted to control the aircraft and disagreed with his actions. The two pilots never shared their very different views of the situation. This bad crew management also played a big role in the accident.
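The suppression the report mentions is a property of alert prioritisation: when only the highest-priority aural alert is announced, a lower-priority one can stay silent exactly when it matters. A minimal Python sketch, with a hypothetical priority table and alert names (the real A320 alert hierarchy is not reproduced here):

```python
from typing import Optional

# Hypothetical priorities: a lower number wins arbitration.
ALERT_PRIORITY = {
    "STALL": 0,
    "DUAL INPUT": 5,
    "RUDDER LIMITER FAULT": 9,
}


def announced(active: set[str]) -> Optional[str]:
    """Return the one aural alert that wins arbitration, or None if none are active."""
    if not active:
        return None
    return min(active, key=ALERT_PRIORITY.__getitem__)


def suppressed(active: set[str]) -> set[str]:
    """Alerts that are active but silenced because a higher-priority one is sounding."""
    winner = announced(active)
    return active - {winner} if winner is not None else set()
```

Under this scheme a stall warning always masks the dual-input warning; getting both messages to the crew would presumably need a second channel, such as a visual indication, for the suppressed alerts.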

Ex-military helicopter pilot here.

This summing of inputs is something that bothers me about this event as well as the Air France 447 crash where both pilot and copilot were trying to fly the plane and ended up stalling it into the Atlantic.

When control was to be transferred from pilot to pilot in the military, there was always a three-way verbal transfer. The pilot, wanting the copilot to fly, would say, “You have the controls.” The copilot would take the stick, and would say, “I have the controls.” Finally, the pilot, releasing the stick, would say again, “You have the controls.” State intent, agree to it and perform the transfer, and finally confirm the transfer. This three-way exchange was mandatory, and drilled in from the first day we stepped into the cockpit.
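That three-step exchange is effectively a small protocol, and it can be modelled as a state machine that rejects out-of-sequence calls. A minimal Python sketch, assuming exact phrases and named crew members; `ControlHandover` is an illustrative name, not any real system:

```python
class ControlHandover:
    """Three-way verbal transfer: offer, accept, confirm."""

    OFFER = "You have the controls."
    ACCEPT = "I have the controls."

    def __init__(self, flying: str, other: str) -> None:
        self.flying = flying  # whoever currently has the controls
        self.other = other
        self.step = 0         # 0 = idle, 1 = offered, 2 = accepted

    def call(self, speaker: str, phrase: str) -> None:
        # The expected (speaker, phrase) for each step of the exchange.
        expected = [
            (self.flying, self.OFFER),  # state intent
            (self.other, self.ACCEPT),  # agree and take the stick
            (self.flying, self.OFFER),  # confirm and release the stick
        ][self.step]
        if (speaker, phrase) != expected:
            raise ValueError(f"out of sequence: {speaker!r} said {phrase!r}")
        self.step += 1
        if self.step == 3:
            # The transfer completes only after the confirmation.
            self.flying, self.other = self.other, self.flying
            self.step = 0
```

Until the third call, `flying` still names the original pilot, so at no point does the model consider both pilots to be in control.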

I would be very surprised if such a system is not in place in the commercial airlines as well, and if it isn’t, it should be instituted immediately. If I were the Air France 447 captain and was actively flying, and I were alert enough to see the copilot’s hand was on his stick, I would have beat him senseless for trying to take over my flight without any indication.

AirBus is also at fault in this, as summing the input of two flight controls is never what anybody would want. When I have my hand on the control, I expect the plane to do what I’m telling it to, not what the other pilot is, and definitely not something halfway in between! There should be some force feedback between the two inputs, or at the very least, a stick shaker to warn that there’s a mismatch in inputs between the two.

My understanding is that AirBus is known for building planes that think they’re smarter than the pilots. Supposedly, the pilot should be unable to move the stick or rudder in such a manner as to hurt the airplane. A rudder limit control is exactly such an unneeded system. GermanWings flight 9525 notwithstanding, I’d rather have a plane that responds to whatever the pilot tells it to, and trust the pilot to do the right thing.

Another issue seems to be the pilots’ tendency to pull back as their answer to any control issue. As the adage goes, push forward to go down, pull back to go up, and pull back further to go down faster.

Page 80 of the report describes the standard operating procedures from the operator’s manual. The standard callouts for taking control are almost a copy/paste of what you describe for your military operations. As in AF447, they didn’t follow the standard callout, increasing the confusion between the pilots.

I don’t think that Airbus views its flight computers as smarter than the pilots. My understanding is that they didn’t design the flight computer to resolve disagreements between the pilots, because that is simply not the flight computer’s task. They expected crew management with standard callouts to be enough, and designed the sidestick control for the situations normally expected. The dual-input mode is supposed to be temporary, gently averaging the two pilots’ actions, which are normally very similar, during the time it takes to pass control from one pilot to the other.

It’s now clear that by doing so, Airbus created a control device with more possible states than a more conventional control has. The most problematic state is the disagree state, and because they decided that crew management would avoid it, they did nothing to address it at the control/computer level. The aftermath of AF447 and this accident shows that something needs to be done to improve the situation. Maybe they will add force feedback to the sidestick as you propose, but I would not be surprised if they choose to add a periodic warning like “pilots disagree”, because this is really the root cause of the problem.

I have read many aircraft accident reports, and I have some doubts about the idea of “trust the pilot to do the right thing”. The pilots’ tendency to pull back as their answer to any control issue only increases my doubts. My analysis is that automated functions must be split as much as possible, so that if one function is lost, the pilot only has to pay closer attention to that function. As it is, the automation tends too readily to stop many functions at once when its inputs lack their usual coherency.

Ah, but the pilot hit the override switch. He just didn’t keep it down long enough.

Perhaps he believed he already had full control and that there was some secondary mechanical failure. He kept saying “slowly” “slowly” … was this because when he moved slowly, the co-pilot didn’t notice the change and therefore didn’t counteract, giving the pilot the impression that he did have some limited control despite the (perceived) mechanical fault, but only if his input changed very slowly?

Humans consistently fail in the same ways. The aircraft manufacturing industry could compensate for this in the way aircraft are designed and procedures are executed. *BUT* that blurs the line of responsibility as to who is at fault when an accident does occur, and the industry certainly doesn’t want that. To them, it’s a good thing that a pilot can be blamed for the very same mistake that any other pilot would have made.

In the stress of the moment (startle factor), these crews forgot some of the handover basics they were trained in. Newer sidestick planes (like the Gulfstream G[45]00) have actuators on both sides; you can even feel the autopilot through this scheme. As long as humans get startled, some will revert out of their training, and without a system that provides feedback when the craft drops from protected to unprotected mode, these events will continue.

You’re f{n} kidding me. Is pilot training so bad now that they don’t know to put the *stick forward* in a stall? Wow, just mind-blowing.

Most Western countries still train pilots and expect them to be able to fly the GD plane, and pilots get hands-on time with real aircraft, not just limited sim time.

The sad thing is that this is leading to more automation. I would much prefer a trained human pilot who can assess and act in the moment rather than hope some coder has coded for every possible situation.

Popping a breaker is a staple of the cockpit. Loo pump not working? Pop the breaker. Cabin pressure low? Pop the breaker. Landing gear not indicating locked down? Pop the breaker. The breakers should be color-coded so pilots think twice before popping something that will influence flight control.

This was a high-altitude stall. Altitude is your friend here, and yet they flew a perfectly controllable aircraft into a fatal crash. That’s mind-blowing, really.

And I still disagree with some here, in that I think it’s an airline industry error not to provide the training and adequate hands-on experience.

I can see the psychology of the events though.

A repetitive alarm causes irritation and frustration and creates a need to act. Not knowing an approved action, they turned to a very common cockpit action: “popping a breaker”. Because the related breaker looks just like the ones they pop often, it seems like a *safe* action.

From my perspective this is 100% pilot error. Nose up when a stall alarm is sounding reeks of panic. I was taught within 2 weeks of starting an aerospace degree that increasing angle of attack beyond certain limits stalls a wing. That’s really basic stuff. When I taught aerodynamics to aircraft fitters I taught them the same thing.

I have also experienced a stall and stall recovery during a flight test: the stick shaker activates and the aural alarm sounds, so the pilot is left under no illusion as to what’s going on. Under no circumstances is stick back the correct response.
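To make the aerodynamics in this comment concrete, here is a toy lift model, a sketch under stated assumptions rather than A320 data: the lift coefficient rises linearly with angle of attack (using the thin-airfoil, two-dimensional slope of 2π per radian) up to an assumed 15° critical angle, then falls off at an assumed rate. The constants and function names are illustrative only. What it shows is the point made above: once past the critical angle, more nose-up (higher AoA) gives less lift, and easing the stick forward is what brings lift back.

```python
import math

# Illustrative assumptions only; these are not A320 figures.
CRITICAL_AOA_DEG = 15.0            # assumed critical angle of attack
LIFT_SLOPE_PER_RAD = 2 * math.pi   # thin-airfoil (2-D) lift-curve slope
POST_STALL_DROP_PER_DEG = 0.05     # assumed CL lost per degree past critical


def lift_coefficient(aoa_deg: float) -> float:
    """Toy CL(alpha): linear up to the critical AoA, then falling off."""
    if aoa_deg <= CRITICAL_AOA_DEG:
        return LIFT_SLOPE_PER_RAD * math.radians(aoa_deg)
    cl_max = LIFT_SLOPE_PER_RAD * math.radians(CRITICAL_AOA_DEG)
    excess = aoa_deg - CRITICAL_AOA_DEG
    return max(cl_max - POST_STALL_DROP_PER_DEG * excess, 0.0)


def is_stalled(aoa_deg: float) -> bool:
    """In this toy model the wing is stalled beyond the critical AoA."""
    return aoa_deg > CRITICAL_AOA_DEG


if __name__ == "__main__":
    for aoa in (5, 15, 20, 30):
        print(f"AoA {aoa:>2} deg: CL = {lift_coefficient(aoa):.2f}, "
              f"stalled = {is_stalled(aoa)}")
```

With these made-up numbers, pulling from 20° to 30° drops CL from about 1.39 to about 0.89, while easing forward toward 15° recovers it. Real wings stall more gradually and at angles that depend on configuration and Mach number.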

I now work in IT in the banking industry and I have little sympathy for the “faulty alarm” argument. While familiarity very much breeds contempt, IMO this is never an excuse. I’ve seen more outages than I could reasonably estimate caused by support teams clearing alerts because “that system always alerts” without understanding the nature of the alert.

Even though the reason the alert occurred was not the cause of the crash, if a reasonable procedure had been followed (tolerate the alert and escalate to someone who knows what’s going on when the opportunity arises), this tragedy would not have happened.
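The “tolerate and escalate” practice described above can be sketched as a small tracker that counts repeats of the same alert and flags it for escalation instead of letting it be cleared as noise. This is a minimal illustration, not any real monitoring tool or aviation maintenance rule: the class name, the 24-hour window and the threshold of three occurrences are hypothetical choices.

```python
from collections import defaultdict, deque


class RecurringAlertTracker:
    """Hypothetical tracker: a chronic alert is escalated, not silenced."""

    def __init__(self, window_s: float = 24 * 3600, threshold: int = 3):
        self.window_s = window_s    # assumed look-back window in seconds
        self.threshold = threshold  # assumed repeat count that forces escalation
        self._seen = defaultdict(deque)  # alert id -> timestamps inside window

    def record(self, alert_id: str, t: float) -> str:
        """Record one occurrence at time t (seconds) and return an action."""
        times = self._seen[alert_id]
        times.append(t)
        # Forget occurrences that have fallen out of the look-back window.
        while times and t - times[0] > self.window_s:
            times.popleft()
        if len(times) >= self.threshold:
            return "escalate"  # recurring fault: hand it to someone who understands it
        return "tolerate"      # note it and keep operating; do not improvise a fix


if __name__ == "__main__":
    tracker = RecurringAlertTracker()
    # "rudder-limiter-fault" is a made-up alert id for illustration.
    for t in (0, 600, 1200, 1800):
        print(t, tracker.record("rudder-limiter-fault", t))
```

The design choice is that repetition itself is treated as information: the third occurrence inside the window changes the response from “note it” to “escalate”, rather than each occurrence being handled in isolation.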

Mental2k – Are you saying that because the pilot in charge kept saying “pull down… pull down…” to the co-pilot? Maybe “pull down” is not what you think it means. Maybe that’s his way of saying nose down? To me “pull down” is confusing. Something was wrong with that plane that was not obvious to the investigators or to us. IMO, that is.

I thought the pilot was French. He probably used the term “pull” because he had heard it in the simulator. The ground proximity alert says “pull up” repeatedly.

If I were confused, I would ignore the word “pull” and go with “down”, as it is the word that carries the direction.

Oops, this was actually meant as a separate topic rather than a reply. I chose to ignore that phrasing as I find it confusing too. My understanding was that the co-pilot pulled the stick back to bring the nose of the aircraft up. This will exacerbate the stall.

I’d be curious to hear from a pilot if they develop an instinctive reaction like drivers do with the brake pedal. If I think a car I’m travelling in is going to hit something I instinctively hit the brake. Even if I’m in the passenger seat.

No, I think the CO-PILOT was French and the CAPTAIN was Indonesian. They both spoke English as a common language, and both were experienced pilots with many hours. I don’t think the CO-PILOT “pulled” the sidestick down (or back), causing the nose-up attitude that exacerbated the stall. Since his stick was the only one with command access at that moment, we can only assume it was him who did it. It appears the CAPTAIN was the one saying “pull down”. Why, I don’t know. I think the phrase should have been NOSE DOWN, not PULL DOWN. Or maybe he meant “pull your nose down”. I think he was trying to bring the nose down by pushing the stick forward. However, I believe something in the control system was blocking his input, and that it was being overridden by a phantom on the ground through the on-board VHF telemetry radio called AIRMAN.

A certain national political ENTITY back in the 1980s or 90s invented a MAN-IN-THE-MIDDLE exploit radio transceiver device to confuse the Libyans into thinking they were getting communications from an authorized Libyan commander, when in fact they were talking to a phantom posing as both sides of the digital conversation. It was a brilliant exploit, real out-of-the-box thinking, and strategically confusing for the Libyans. The SAME entity could be at work here via an extremely rich American man who is pro-this country in question, which will remain nameless so as not to offend its ubiquitous sympathizers here at HaD.

Just my conspiracy theory…

SQTB

Air Asia is a pay-to-fly airline. They hire people who’ve just got their pilots licence (who can’t get jobs anywhere else because every airline wants to hire experienced pilots) and literally make the pilot pay the airline for the privilege of flying. That’s right. I said the pilots pay money to the airline.

Pilot error is not surprising under the circumstances.

This could not have happened were it not fly-by-wire. Mechanical controls do not allow the two pilots to have different inputs into the controls. Had the system not been automated, the pilots would have had experience flying the plane and would not have made a mistake that an instructor in a Piper Cub would not let slide.

Airbus has a habit of making their fly by wire automated systems hide how the plane is actually performing from the pilots and this philosophy has destroyed a number of planes and killed hundreds of people in otherwise perfectly operational air frames.

If you had purely mechanical controls for an aircraft this size, you would need a hydraulic exoskeleton to move them.

Not necessarily. Before fly-by-wire, planes had hydraulically assisted controls, which were still mechanically linked, like power steering in a car.

You don’t need to have mechanical linkages all the way to the control surfaces. You just need the pilot and co-pilot stations to be mechanically linked so that they move together. The fly by wire system tries to simulate this but it doesn’t have as much torque as another human trying to wrestle the stick from your hands.

I don’t know, but I think the AIRBUS A320 has a back-up mechanical system just in case of catastrophic FBW failure. I think any FBW system has this. What would happen if an FBW system was taken out by an EMP? You’d have to resort to mechanical controls. Of course it would be like flying a brick, but good pilots are not stopped by this. They can fly a brick too.

SQTB

I would have expected the pilots to remember their Airbus-specific flight laws training, including the loss of the alpha-floor protection. Unless they assumed that even in Alternate and Direct Law the alpha floor would still kick in on stalls, so it would command TOGA and get them out of the stall.

Also… yeah, sheesh, they couldn’t EMER CANCEL the ECAM alert?

I think that’s a part of this. The reality is that if you get no ‘hands on’ training and no ‘hands on’ (actual) flying time, then logic stands a good chance of going out the door first as stress takes over. Having the alarm panel lit up like a Christmas tree, watching the altimeter spin continuously like a pending death clock, and having the attitude indicator in a position you have never seen before (even in the sim) is going to make most humans succumb to stress and behave in an instinctive, non-logical manner. This is the fight-or-flight instinct.

To take control of a dangerous and threatening situation you need a level head and some confidence or instinct takes over. It’s easy to argue about what they should have done while we are here safe on the ground but would we have done differently? We want to believe we would but the reality may be different.

I have read the report. As with AF447, the pilots lost a fully operational aircraft because they reacted inappropriately to the failure of a minor device while the flight computer was still safely handling the aircraft. In both cases all the pilots had to do was nothing but monitor the situation, at least as long as the flight computer was capable of doing its job. In both cases their reaction caused the flight computer to be disconnected, only for them to discover that they were unable to fly the aircraft well enough to avoid the most basic risk of an aircraft: a stall.

jcamdr – I fully agree with RÖB – I do not believe it was pilot error. The first thing the Captain said after he heard the “Cavalry Charge” (a sound file indicating autopilot disengagement) was, in English: “Oh my god!” He realized that something was completely SNAFU. The report (which I posted down there somewhere) really does not support pilot error. It suggests just what the French co-pilot said in French, a language the Captain did not speak, so he was evidently talking to himself and did not expect the Captain to understand him: “Qu’est-ce qui ne va pas !” (“What is going wrong!”). Something was going wrong with the plane that was not the rudder limit problem, the popped breakers, or the loss of the FACs. This should have been a “cake walk” UNLESS ‘something very strange was afoot’.

It is my understanding from Wayne Madsen (ex-NSA) that someone very powerful is trying to disrupt Malaysian air transport because Malaysia refuses to help out in the Pacific Rim Operation to thwart the PRC in its ongoing expansion efforts. To stoop to the murder of 160 souls is just sociopathic. Am I jumping to conclusions? Probably. But that’s how I roll, I guess.

Please tell me you’re not a social media OEV by Ntrepid? It would be quite disappointing to have OEVs on HaD too. They seem to be everywhere lately. We don’t need them mucking up the works here at HaD. Don’t worry, we are mostly all patriots here (at least me :-) )

RÖB – read my postings down there please (CTRL-F and type sonoft)

The surprise of the pilots was caused by the aircraft suddenly entering alternate law because the captain removed power from the two flight augmentation computers, an action the pilots were never supposed to take, even if both channels of the RTLU failed. The aircraft was perfectly flyable without any RTLU channels.

jcamdr – One can’t fly an Airbus A320 without FACs? It flies like a brick but can still be flown manually, I would think.

I never said what you attribute to me.

Yes, the A320 is fully flyable without any FAC units, so it’s even more flyable if only the RTLU channels of the FAC units fail, as in this accident.

From the CVR transcript there is no indication that the captain warned the first officer that when he disconnected all the FAC units (something he was not allowed to do), the aircraft would immediately go into alternate law. So the surprise of the first officer, who was the pilot flying at that moment, was understandable. It took the first officer 9 seconds to identify that the aircraft was unstable, and from his words it’s clear that he didn’t understand why the aircraft was now in alternate law. Maybe he focused too much on trying to understand why the aircraft had switched to alternate law instead of simply putting all his attention on flying in alternate law, which is his highest priority. Anyway, the first officer almost recovered the stability of the aircraft but made the mistake of losing speed through a wrong nose-up attitude. It was, unfortunately, a grave mistake that activated the aural stall warning and a reaction from the captain, who said 4 times to go down. The first officer’s first response was correct, lowering the nose and increasing engine power to the maximum, but for an unknown reason he then wrongly put the nose up again. Without this mistake the aircraft would have continued to fly without any FAC units.

The last mistake was the bad crew management when the second stall warning started. The pilots disagreed on the sidestick commands and didn’t resolve the issue. The CVR has no trace of clear standard callouts of the captain taking control of the aircraft. While an A320 is flyable without any FAC units, it’s not flyable with two pilots giving opposite input commands. Normally crew management and standard callouts must prevent pilot disagreement, but this failed in this case. A “dual input” warning should then draw the pilots’ attention to the potential problem, but the warning was suppressed by the higher-priority stall warning. So it’s plausible that the pilots never realized they were both controlling the aircraft with opposite inputs.

So the crew mistake chain was: 1) disconnecting all FAC units, 2) losing speed through a nose-up attitude, 3) failing to use proper crew management to resolve the pilots’ disagreement.
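The dual-input problem described above can be illustrated with a simplified model. On Airbus fly-by-wire aircraft the two sidestick inputs are summed algebraically, with the sum limited to one stick’s full deflection, unless one pilot takes priority with the takeover pushbutton. The sketch encodes that, plus the point from the comment that the stall warning masked the DUAL INPUT warning. The normalised -1..+1 scale, the deadband, the function names and the two-level priority list are simplifying assumptions, not the real flight control or warning logic.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SidestickInputs:
    # Assumed normalised pitch inputs: +1.0 = full back (nose up), -1.0 = full forward.
    captain: float
    first_officer: float
    priority: Optional[str] = None  # "captain", "first_officer" or None


def pitch_demand(s: SidestickInputs) -> float:
    """Priority pilot wins; otherwise inputs are summed and clamped to one stick's range."""
    if s.priority == "captain":
        return s.captain
    if s.priority == "first_officer":
        return s.first_officer
    return max(-1.0, min(1.0, s.captain + s.first_officer))


def dual_input(s: SidestickInputs, deadband: float = 0.05) -> bool:
    """Both sticks deflected at once with nobody holding priority (deadband assumed)."""
    return (s.priority is None
            and abs(s.captain) > deadband
            and abs(s.first_officer) > deadband)


def aural_warning(stall: bool, s: SidestickInputs) -> Optional[str]:
    """Assumed two-level priority: a stall warning masks DUAL INPUT."""
    if stall:
        return "STALL"
    if dual_input(s):
        return "DUAL INPUT"
    return None


if __name__ == "__main__":
    opposite = SidestickInputs(captain=-1.0, first_officer=+1.0)
    print(pitch_demand(opposite))          # opposite full inputs cancel out
    print(aural_warning(True, opposite))   # stall warning hides the conflict
    print(aural_warning(False, opposite))  # conflict only announced without a stall
```

In this model, full forward from one pilot and full back from the other produce zero net pitch demand, and while the stall warning is active nothing tells either pilot why.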

Airbus is a European company, not a French one (even if the aircraft are assembled in Toulouse, France).

[wink] I specifically weasel worded that by referring to them as the host country.

Seems to me that quality control for airplane parts should be a bit higher if there are bad solder joints leaving manufacturing. These parts should be made to the absolute highest standards, especially where temperature and pressure fluctuations are in play. This of course isn’t the only point of failure, but this tragedy demonstrates that improvement is needed across the board.

These components are not leaving the manufacturing process with cracks, obviously…

Firstly it is NOT Flight 805. It was 8051. Here is the final report: http://kemhubri.dephub.go.id/knkt/ntsc_aviation/baru/Final%20Report%20PK-AXC.pdf

Please read it carefully. They give a full report of the black box data and of the pilots’ backgrounds and verbal comments during the disaster. Something STRANGE was happening, as the rudder limiting error was something he had experienced before. The co-pilot was horrified and said in French “What is going wrong?” A rudder travel limiter failure stuck at 2° should not generate a stall condition and an “Airplane Upset (AU)”, defined as an airplane in flight unintentionally exceeding the parameters normally experienced in line operations or training.

The pilot was saying “Oh my god!” at 23:16:53, after the autopilot disengaged and just before the stall warning. He had had flight simulator training for AU before. There was joystick activity recorded that indicated he was trying to take control back from the co-pilot (I think). For some reason the Airbus AIRMAN system was active and manually downloading to the ground (Indonesia Air Asia Maintenance Operation Centre) DURING the disaster. That has to generate a WTF thought in your head for at least a moment.

If it weren’t for a Chinese social media site mentioning a possible future Air Asia disaster just 13 days before this, one could just blame this on cold solder joints and/or pilot error. It appears that the Malaysian air transport industry is taking a real hit from highly suspicious air disasters lately. And yes, the aircraft flying Flight 8051 is owned by a Malaysian company, not an Indonesian one (i.e. Doric 10 Labuan Limited Co). The Indonesians only operate it.

IF this were a case of avionics tampering (I know you probably think that is just UNTHINKABLE), then there are some pretty nefarious people out there with a real grudge, or at least a hidden agenda, against the Malaysian air transport industry. One ex-NSA agent (i.e. Wayne Madsen, not a kook!) thinks it is the doing of one extremely rich man with dual citizenship in the USA and Hungary. Wayne has a really interesting website at waynemadsenreport dot com.

I just find it hard to believe that the pilot and co-pilot could not recover from an AU, and how did they get into an AU with just a small rudder deflection problem? Resetting the circuit breakers was a no-brainer and had been proven to temporarily fix the problem before. And who was manually downloading AIRMAN data at IAA/MOC during the disaster, and why? I know you’re thinking AIRMAN is just a 1-way system and does not receive data from the ground. Does it really? 8-/

OK, no flaming now! Just thinking out loud here… I never believe in coincidences either!

Oops! I meant SIDEstick not JOYstick!

I corrected the flight number. There is many a slip between cut and paste, it appears.

I think you misunderstand the report’s wording about the AIRMAN download. It describes that problems are normally handled by maintenance management through automatic communication via the AIRMAN system, but that maintenance management actually downloads the report manually. While the automatic method can work in flight, the manual download is done on the ground. The words “at the time of accident” here describe the maintenance management method on the ground, not something that happened to the aircraft in flight.

jcamdr – One would tend to agree with your assessment. However, the wording is dreadfully suspicious to me, but I have a suspicious mind; it’s what I do for a living. I know AIRBUS would never enable the kind of 2-way remote control system I have intimated in my post. But I have seen remote control systems for full-sized aircraft before. Also, the pilot and co-pilot should have been fully capable of recovering from an AU. But if the AU was being caused by a little evil gremlin on the ground with a joystick, then WTF?

By their panicky verbal expressions it was clear that they were baffled about what was going on. A slight rudder deflection of a couple of degrees should not change the AOA (angle of attack) and send the plane into a stall and then a catastrophic AU.

I can understand an AUTOMATIC AIRMAN download during the disaster. That seems plausible to me. But what engineer at IAA/MOC would know to start a MANUAL download at that particular moment? The report clearly indicates that the manual download did not happen on the ground at the airport or while the aircraft lay under the sea. Someone initiated it manually while it was in flight, around 23:00 hours, during the disaster. And I don’t remember anyone calling a MAYDAY or declaring an emergency to ATC. So how would the engineer know to start the AIRMAN download? OK, maybe I misread it… but my mind is going: “WTF?”

The PFRs are listed with their dates in the report; none are from the day of the accident.

jcamdr – OK, granted. I can’t find one either. HOWEVER, that statement that the manual AIRMAN download was done on the day (time) of the accident is telling. When did they do it on that day? On the ground? Nope. After the crash into the sea? Nope. While in the air, when they were experiencing the AU? Probably. Why else do it manually if the AIRMAN system is automatic? All of the automatic downloads were probably BEFORE the accident. Why a manual intervention when there was no declaration of emergency and there were other aircraft in the air that needed attention? Why do a manual download on 8051 for no apparent reason? What’s the purpose of automatic downloads if you have to do a manual one coincidentally at the right time? OK, it could be innocent, but I just have a gut feeling it wasn’t.

So to summarize:

“Yes. It’s puzzling. I don’t think I’ve ever seen anything quite like this before. I would recommend that we put the unit back in operation and let it fail. It should then be a simple matter to track down the cause. We can certainly afford to be out of communication for the short time it will take to replace it.” – HAL9000

“This sort of thing has cropped up before. And it has always been due to human error” – HAL9000

Seems it’s all a reenactment then.

Whatnot – It was just a matter of time before we chimed in, huh? I like your HAL9000 quotes. I’d be interested in an intellectual discussion about this disaster. I’ve been careful to be slightly obtuse so as to “protect the innocent [read: guilty]”. So anyway, let’s have it… :-P

Calm down Dave.

“I’m sorry [Drone], I’m afraid I can’t do that…” 8-P

Sheesh! The peanut gallery of Microsoft Flight Simulator “pilots” here is pathetic! As a 5000+ hr CMEL/ATP Part 91/Part 135 driver (Citation X and Embraer ERJ-145), I seriously doubt 98% of the people posting here have even sat inside the cockpit of an aircraft. To address some points that I skimmed: flight deck automation (i.e. the Flight Management System, FMS, or for laymen, the “autopilot”) does not relieve the PIC and PNF of the responsibility to monitor the aircraft position and EICAS indicators! Certainly there are moments where not much is going on, but you do need to maintain vigilance. For instance, the “autopilot” can keep trimming an aircraft with totally iced-up wings. It will trim and keep the wings level as long as it can, until it hits its limit; then the warning chimes go off, the AP disengages and hands the about-to-stall aircraft back to the human (slacker, about-to-crap-their-pants pilot). The point here is that the pilot “should have” been monitoring the systems and flight progress.

Also, rudder limiters exist for a reason. Depending on the specific AFM, there are procedures to ensure rudder travel vs. airspeed is within a certain envelope.
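A rudder travel limiter of the kind mentioned here (the article describes it as limiting rudder movement at high speed) can be sketched as a speed-dependent deflection limit. The breakpoints and limits below are placeholders chosen for illustration, not values from any AFM or Airbus documentation, and the function names are hypothetical. The sketch only shows the shape: full travel at low speed, progressively less as airspeed rises, and any commanded deflection clamped to the current limit.

```python
import bisect

# Hypothetical schedule: (indicated airspeed in knots, max rudder deflection in degrees).
# Placeholder numbers for illustration only.
SCHEDULE = [(160.0, 25.0), (250.0, 10.0), (380.0, 3.5)]


def rudder_limit_deg(ias_kt: float) -> float:
    """Linearly interpolate the allowed rudder travel for a given airspeed."""
    speeds = [s for s, _ in SCHEDULE]
    if ias_kt <= speeds[0]:
        return SCHEDULE[0][1]
    if ias_kt >= speeds[-1]:
        return SCHEDULE[-1][1]
    i = bisect.bisect_right(speeds, ias_kt)
    (s0, l0), (s1, l1) = SCHEDULE[i - 1], SCHEDULE[i]
    return l0 + (l1 - l0) * (ias_kt - s0) / (s1 - s0)


def limit_rudder_command(cmd_deg: float, ias_kt: float) -> float:
    """Clamp a commanded rudder deflection (either direction) to the current limit."""
    lim = rudder_limit_deg(ias_kt)
    return max(-lim, min(lim, cmd_deg))


if __name__ == "__main__":
    for ias in (140, 200, 300, 400):
        print(ias, round(rudder_limit_deg(ias), 1))
```

Clamping symmetrically keeps the limit independent of yaw direction; a real unit also has to handle failures of the limiter itself, which is the part this thread is arguing about.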

Also, all the Part 121 drivers I know hand fly (yes, HAND FLY) their aircraft on final. One airline I worked for required us to perform an “autoland” (CAT-IIIb ILS minimums) twice a month to maintain currency for flight crews and aircraft systems. It is not 100% computers landing the aircraft. It’s workload-intensive (the flight crew needs to closely monitor the instruments, and the ground controllers need to maintain a buffer zone for the ILS glideslope antennas). Also, the quality of the landing is typically inferior to that of a skilled human pilot.

Now, for “routine” heading/altitude changes, yes, we usually let the FMS drive the aircraft. Or we can just “dial in” any new heading or altitude change and allow the AP to ‘turn’ the aircraft. No one is man-handling the yoke while en route.

Bottom line, the mishap appears to be pilot error, and incompetent aircraft maintenance/management.

So tell me, did you ever set foot in the cockpit of a modern Airbus yourself? Because you seem to be referring a lot to a rather outdated world. Last I heard, the freaking FAA is even scrapping the whole old navigation infrastructure, and that’s in a country where most planes are Boeings (and Boeing seems reluctant to go all automatic).

Anyway, you are right that it’s clear that the personnel of this airline, both flight crew and ground support, are well below the standard you can expect. And I’m starting to think all those people who said ‘I’m not flying with Malaysian airlines anymore’ had the right thought.

P.S. Disclaimer: I know next to nothing about aviation.

Whatnot – I don’t have M/S Flight Sim. I only have JANES F/A-18 flight sim, and I haven’t loaded it yet. I have a really cool joystick made by M/S; it looks just like a sidestick. I don’t think he is “outdated”. He knows more about the subject matter than we do. However, if one reads the report, the PIC had a lot of hours and was no rookie. They lost control of that AIRBUS because of something UNUSUAL. The AP disengaged on him and he heard the “cavalry charge” sound. I think he was wondering why when he said “oh my god!” Why? Because it was going into a stall, obviously. But why did it go into a stall? The A320’s KNOWN rudder problem only limits how far he can turn to starboard or port; it doesn’t mess with your pitch and yaw angles. If the ailerons or flaps moved, or the throttle was automatically backed off, then maybe that explains it. I think he was telling the SIC (co-pilot) to nose down by saying “pull down”. I know that is a damn odd thing to say for nose down, but these are 3rd world pilots. I think American AF & NAVY pilots use really cool standardized expressions (e.g. like Holly Hunter in THE INCREDIBLES cartoon: “buddy-spiked”, “angels-10”, etc.).

This PIC flew fighter jets for the Indonesian AF, so I think stall recovery was nothing new for him. The SIC was also AIRBUS-specific trained and probably knew the A320 inside and out. He was no rookie either. To say these guys were goofing off or didn’t know what they were doing is counter-intuitive. The SIC was not Indonesian either; he was a Frenchman from France, and he too had many hours. This beast just got away from them, not due to pilot error or ground crew laziness. Popping breakers is common practice. The FACs came back on accordingly, but who needs them? You can fly the A320 manually just fine. Those FACs only make your job easier, but you should never put your life in the hands of a computer or a robot EVER.

If you look at the images in the file, they show the attitude of the craft. At one point in the AU I can see why he was saying OH MY GOD! That thing looked possessed or something. Imagine trying to manipulate your sidestick and rudders while the pig is non-responsive, as if someone else were controlling it. That’s the crux of my conspiracy theory. Maybe someone was, via the AIRMAN interface. I know that’s not how AIRMAN is supposed to work; it’s supposed to be only a one-way VHF radio link. But maybe the AIRBUS experts here can shed some light on that. Is there any way the AIRMAN can be modified to send signals to the aircraft’s FBW servo system? If not, please explain why not.

SQTB

IOW is the AIRMAN system just a VHF transmitter or a transceiver? If it does have a receiver then why? To receive what?

Quote: “And I’m starting to think all those people who said ‘I’m not flying with Malaysian airlines anymore’ had the right thought”

It’s not just Malaysian (or Asian) airlines. A lot of modern and budget airlines provide minimal (or less) training and expect pilots to use the Autopilot ONLY. Little wonder that when an emergency happens – they fail to correct the situation.

Western countries are generally better but that is changing as they compete more with the budget airlines.

It’s getting to the point that only the older pilots have the true flying experience and of course as time goes on – more of them retire.

You are likely quite WRONG. An automated flight management system malfunction with a jet aircraft at its limit in the altitude/airspeed envelope, coupled with a disoriented pilot’s erroneous input, is a recipe for disaster. With all your flight hours, I dare you to try it! You will certainly corkscrew in, especially when you add in vertigo on the way down. Forget trying it in the air; try it in simulation. Even without the vertigo, in sim you will LEARN that you have almost zero control authority in the “Coffin Corner” of the flight envelope and that recovery from even the smallest error in that situation is very difficult even in ideal conditions. In fact, recovery may best be left to the automated system, provided it is working!!

A culture of blame or one of understanding?

“Let’s get rid of the bad pilots.”

http://www.skybrary.aero/bookshelf/books/1232.pdf

This?

“criminal prosecution of professionals such as pilots, air traffic controllers, or mechanics is increasingly seen as a threat to safety”

http://www.researchgate.net/publication/48381508_Pilots_Controllers_and_Mechanics_on_Trial_Cases_Concerns_and_Countermeasures

This is exactly why Dassault doesn’t label circuit breakers on its fly-by-wire aircraft (the 7X, etc.). If you need to pull a breaker, get the AMM, figure out which one, and read the procedure.

CSE – The PIC (Pilot In Command) already had experience, on the ground, of pulling the breaker on his A320 to correct the rudder limit problem. The cold solder joint is an intermittent thing, and resetting the breaker seems appropriate. He knew how and when to do it. AIRBUS puts the breakers in question right on the overhead panel and labels them accordingly.

I just saw SPECTRE (James Bond 007). Q forgot to label the new car’s systems properly and used a Dymo label maker to mark critical systems. The labels were horribly ambiguous and James had to guess at what they meant. It was hilarious. There was one marked “AIR”. Turns out it just meant ejection seat. :-D

Only the breaker for FCS1 is above the pilot, within arm’s reach, because FCS1 is the normally operating unit, so you’re safe to reset it: FCS2 is there as a continuously running backup.

The breaker for FCS2 is on the breaker panel behind the co-pilot, and that is probably because you don’t want to reset or turn off both of them at the same time, as that causes the plane to revert to alternate law.
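The redundancy argument in these two comments can be written as a tiny state model. It encodes only what the thread states: while at least one of the two units is powered, normal law is kept, and with both off the aircraft reverts to alternate law. The breakers are named FAC1/FAC2 here, following the accident discussion elsewhere in the thread (the comment above calls them FCS1/FCS2). The function names and the action format are hypothetical, and the model does not try to say whether the real system returns to normal law once power is restored; real A320 control-law reconfiguration depends on far more than two booleans.

```python
from typing import Iterable, List, Tuple


def control_law(fac1_powered: bool, fac2_powered: bool) -> str:
    """Simplified per the thread: one powered unit keeps normal law, none means alternate."""
    return "normal" if (fac1_powered or fac2_powered) else "alternate"


def run_breaker_sequence(actions: Iterable[Tuple[str, str]]) -> List[str]:
    """Apply (breaker, 'pull' | 'reset') actions and return the law after each step."""
    powered = {"FAC1": True, "FAC2": True}
    laws = []
    for breaker, action in actions:
        if breaker not in powered or action not in ("pull", "reset"):
            raise ValueError(f"unknown action: {breaker!r} {action!r}")
        powered[breaker] = action == "reset"
        laws.append(control_law(powered["FAC1"], powered["FAC2"]))
    return laws


if __name__ == "__main__":
    # One unit at a time: the other keeps running as the backup.
    print(run_breaker_sequence([("FAC1", "pull"), ("FAC1", "reset"),
                                ("FAC2", "pull"), ("FAC2", "reset")]))
    # Both off together: the reversion the comment warns about.
    print(run_breaker_sequence([("FAC1", "pull"), ("FAC2", "pull")]))
```

The first sequence never leaves normal law; the second hits alternate law at the second step, which is why keeping the two breakers physically apart makes sense in this reading.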

In light of the pilot error assertion, it’s a bit awkward to see the previous article’s headline and graphic immediately beneath this one on the front page: “TOWARD THE OPTIONALLY PILOTED AIRCRAFT”

100% of the parts of an aircraft are on it for each part’s specific reason. Even parts like the carpet and trim around the windows are doing their essential jobs.

If any part comes up with multiple and identical failures, it should be *required* for airlines to repair or replace the parts, even if it’s some piece of decorative plastic trim with a fragile retainer clip that breaks.

Nothing involved with the flight controls should ever be considered “minor”. Alarms exist to alert the crew to problems. If it’s the alarm itself which has a design defect which causes erroneous alarms, it should be treated with exactly the same urgency of repair as the system the alarm monitors.

If the alarm is faulty, there’s no way to know whether the system the alarm monitors is faulty. In this case, if the government agency responsible for regulating commercial aircraft in the country where this one was registered had *done its job* and ordered the faulty rudder limit alarms repaired, the crash would not have happened.

Part of the blame should land on Airbus. The company knew about their defective part and from what I’ve read here did not issue a warning to all A320 operators to get that part repaired ASAP. That’s every bit as awful as General Motors knowing about their ignition switch problem and doing nothing about it for years.

It doesn’t matter if a mechanical or electrical problem can only be injurious or deadly under a specific set of circumstances. That condition WILL happen ‘in the wild’ eventually. If you’re the company that designed/manufactured it, it should be your responsibility to put out an alert about the defect and the use case in which it will fail.

It only makes things worse to plead ignorance then later have it revealed you knew exactly what the problem was and how to make it happen, for years.

Galane – They had known about the solder joint problem since 2009. They had Tech Bulletins out about it. No one thought it would be a catastrophic problem, as you could just pop a breaker and the problem was temporarily fixed; they could swap out the boards when they landed. I don’t think this problem caused this air disaster. SOMETHING unusual put that plane into a stall and caused an airplane upset. I don’t think it was a cold solder joint, nor was it pilot error. I truly think the investigators should return to the debris and check the AIRMAN interface. They need to see if it was somehow connected to the FBW system in a 2-way mode. If some bad player had remote access to the FBW system, he could have wrested control from the pilots and thrown them into an AU. OK, yes, it’s a conspiracy theory, but I am the suspicious type. I can think of some people who might want this to happen to Malaysia in a bad way because of its refusal to help stop the Pacific Rim expansion by the PRC.

Your AIRMAN conspiracy theory doesn’t get any support from people who know how the A320 was designed. I am still waiting for a comment with a verifiable reference showing that such technology has ever been installed in a commercial A320 in service. Yes, this technology exists, mainly to control military drones, but it is a completely different set of units that would have to be installed, if they would even be compatible with the A320’s 7 flight computer units. It is not just a switch to flip, as your theory seems to assume.

jcamdr – OK, I can understand how my C.T. would take a lot of “out of the box” thinking. The AIRBUS engineer who designed the AIRMAN interface probably never thought someone would try to circumvent its intended operation. However, IF IT IS A TRANSCEIVER and not just a transmitter, it MIGHT explain the conflicting sidestick inputs. Most people think the PIC was doing it out of panic, trying to take command back from the SIC. However, there is no recording of any verbal command to take control back, nor any record of the PIC pressing the button on the stick that toggles control back to him. This makes me think that sidestick commands were being sent to the FBW bus from another source. It’s easy for Monday morning quarterbacks (an American euphemism for outsider kibitzers) to vilify the pilots unnecessarily.

I think the nose-up attitude came from another source, NOT either pilot. I mean, who would do that in a stall? That would be insane. The AU attitude was then caused by the pilot trying to regain the proper attitude, only to make things worse. Such an unauthorized control system modification is not outside the realm of possibility for some people in the world, especially in Tel Aviv or Langley, Virginia (or both). You’d be surprised what those military-scientist geniuses can come up with and make look like an accident. It wouldn’t be the first time something like this was done, either.

OK, I know this is too much to believe in a sane world. However, there is plausible evidence to suggest that “certain unidentified entities” have a vested interest in pushing Mr. Najib Razak (incidentally a Muslim), through extortion or political pressure, into allowing US military bases in his country. These bases would help surround the PRC and stop its military expansionism. We have the 7th Fleet in places like Diego Garcia in the Indian Ocean, Australia, the Philippines, and other Pacific Rim areas. Malaysia and Indonesia would be excellent places to set up operations, but Razak is actively against it. These “entities” have no discernible morals or scruples and would not stop at ruining his commercial aviation infrastructure or murdering 162 souls to help him change his mind. I’m not sure where Joko Widodo (also a Muslim) stands on US bases in Indonesia, but I assume he will fall in line soon too.

There were no Americans on Flight 8501. Only one Brit.

I’m a US patriot and condone many things my country does without question. However, this is a bit much, and no one should just sweep it under the rug and ignore it. I can’t prove ANY of this, so all I can do is present a plausible conspiracy theory. Should I just stand down and STFU? Probably. But I wanted to throw in my 2 cents for a moment of out-of-the-box thinking.

SQTB

The nose-up attitude resulted from the first officer’s action on his sidestick; the FDR traces show this very clearly.

Look at the CVR transcript and you will see that the pilots didn’t try to share their thinking about why the aircraft was not responding the way each pilot individually commanded on his sidestick. There is no need to introduce an extra command input source to explain a situation caused by this poor crew management. With good crew management, the captain would have noticed the disagreement and resolved it by ordering the first officer to take his hand off the sidestick.
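Why disagreeing sidestick inputs matter can be shown with a toy model. On the A320, inputs from both sidesticks are added algebraically and limited to the equivalent of one stick at full deflection, unless one pilot presses the takeover pushbutton. This is a minimal sketch, assuming deflections normalised to -1.0 (full nose-down) through +1.0 (full nose-up) and ignoring everything the flight computers do downstream; the function names and the deadband value are hypothetical.

```python
from typing import Optional


def combined_pitch_order(captain: float, first_officer: float,
                         priority: Optional[str] = None) -> float:
    """Combine two sidestick deflections into one pitch order (simplified sketch).

    `priority` is "CAPT" or "FO" when that pilot holds the takeover button.
    """
    for value in (captain, first_officer):
        if not -1.0 <= value <= 1.0:
            raise ValueError("deflection must lie in [-1.0, 1.0]")
    if priority == "CAPT":
        return captain            # the first officer's stick is ignored
    if priority == "FO":
        return first_officer      # the captain's stick is ignored
    # No priority: inputs are summed, then limited to one stick's full travel.
    return max(-1.0, min(1.0, captain + first_officer))


def dual_input(captain: float, first_officer: float, deadband: float = 0.05) -> bool:
    """True when both sticks are deflected beyond a (hypothetical) deadband."""
    return abs(captain) > deadband and abs(first_officer) > deadband
```

For example, `combined_pitch_order(-0.5, 1.0)` returns `0.5`: with one pilot pushing half forward and the other pulling fully back, the net order is still nose-up, which is why each pilot can see the aircraft "not obeying" his own stick.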

Finally, using an A320 to test an extra command input source without the pilots’ consent would be a terrible idea, because this aircraft has mechanical cables as a backup to control the two most important control surfaces. If the two pilots agreed on how to fly the aircraft, an extra electrical input source would have been unable to override their coordinated inputs through the mechanical backup.

> “I’d suggest that after 6 faults it could have a heightened alert, like refusing to boot when powered on in a safe environment (i.e. parked on the ground)”

And the faulting system, which presumably has errors, knows it’s on the ground… reliably? No, just nope. The errors were recorded and tossed aside as ‘nothing’. This is all on maintenance: swap out the faulty board for a good one. You can’t have a flight-critical system denying operability; that’s akin to an HDD refusing to transfer any data when the ECC errors are too high, with no recovery possible.
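The position argued here, that a recurring fault should be recorded and escalated to maintenance rather than allowed to disable a flight-critical system, can be sketched as a simple fault counter. This is a hypothetical illustration, not how Airbus maintenance systems actually work; the class name, the fault code string, and the threshold of six (borrowed from the quoted suggestion) are all assumptions.

```python
from collections import Counter
from typing import List


class FaultLog:
    """Hypothetical recurring-fault log: records faults, never disables anything."""

    def __init__(self, maintenance_threshold: int = 6) -> None:
        # Threshold borrowed from the "after 6 faults" suggestion quoted above.
        self.maintenance_threshold = maintenance_threshold
        self.counts: Counter = Counter()

    def record(self, fault_code: str) -> None:
        """Log one occurrence; the monitored system keeps operating."""
        self.counts[fault_code] += 1

    def maintenance_items(self) -> List[str]:
        """Fault codes that have recurred often enough to need a board swap."""
        return sorted(code for code, n in self.counts.items()
                      if n >= self.maintenance_threshold)

    def clear(self, fault_code: str) -> None:
        """Reset the count after maintenance has replaced the faulty unit."""
        self.counts.pop(fault_code, None)
```

After six `record("RUD_TRV_LIM")` calls (a made-up code), `maintenance_items()` returns `["RUD_TRV_LIM"]`. Nothing in the class can stop the system from operating; the escalation path ends at the maintenance crew, which is where the comment argues it belongs.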

Cracked solder joints: a perfectly acceptable failure in the clock of my 13-year-old Subaru Forester. A decidedly unacceptable failure in an entirely computer-controlled aircraft with no redundancies to prevent departure from controlled flight.

“…entirely computer controlled aircraft with no redundancies …”

The humans are the redundancy. It’s called MANUAL OVER-RIDE. No one should be forced to fly a completely computer-controlled aircraft; M.O. is always critical. When machines become AI-controlled, the power plug should always be accessible to the engineers/pilots. The AIRBUS A320 will not spin out of control if its computers fail. It will just return control to the pilots in M.O.

SQTB

The question should not be who is to blame. When it comes to safety, blame is unimportant to everyone except lawyers.

What everyone should be thinking about is the relationship between human and machine control. Each has its limitations, and each can be placed in situations that were not anticipated in training. This was a problem in airplanes long before fly-by-wire was even a concept. In his autobiography, Eddie Rickenbacker (World War I flying ace and former president of Eastern Airlines) describes a flight on a propeller-driven, non-turbine-powered airliner (I think it was a DC-6 or a Constellation). When one of the engines started to go “out of sync” (never mind what that means . . . it can be a prelude to failure), Rickenbacker waited for the pilots to shut that engine down. And he kept waiting. And waiting. Finally, the crew shut the engine down, feathered the prop, and announced an unscheduled landing. When Rickenbacker talked to the crew on the ground, they confirmed that the plane was on autopilot (when autopilots were new) and they hadn’t been paying close attention.

For every example of a human failure, it’s possible to name a failure of the machine, and vice versa. For instance, in 1972, an Eastern Airlines L-1011 whose only fault was a burned-out indicator bulb crashed into the Everglades while circling near Miami. Why? Because the flight crew was distracted by that indicator light. Neither pilot was flying the airplane, and it descended gradually, in landing configuration, into the swamp. Clear case of human error. So is the answer to get humans out of the loop as much as possible? I don’t think so. Machines are fallible, too.

In 1993, a Lufthansa A320 ran off the end of the runway in Warsaw. The runway was wet, and the pilots were touching down with some extra speed to compensate for a crosswind exactly as stated in the manual. Trouble was the crosswind turned out to be a tailwind, so the plane touched down too far down the runway. The landing gear on the left touched down nine seconds later than the wheels on the right, which meant that the switch that detected a landing wasn’t tripped, so the spoilers, thrust reversers, and wheel brakes didn’t work for a few critical seconds . . . not that brakes were much use on the wet runway. Unable to stop, the jet ran off the end of the runway even though the pilots did everything by the book.
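The Warsaw sequence can be illustrated with a deliberately simplified model of ground-detection logic that treats the aircraft as "on the ground" only when both main gear legs report compression. The real A320 logic used additional inputs; this sketch keeps only the both-gear condition the comment describes, steps time in whole seconds, and uses hypothetical function names.

```python
from typing import Optional


def ground_mode(left_gear_compressed: bool, right_gear_compressed: bool) -> bool:
    """Simplified: 'on ground' only when BOTH main gear legs are compressed."""
    return left_gear_compressed and right_gear_compressed


def first_deployment_second(left_touchdown_s: int, right_touchdown_s: int,
                            window_s: int) -> Optional[int]:
    """Step through time in 1 s increments; return when spoilers/reversers could deploy."""
    for t in range(window_s + 1):
        left = t >= left_touchdown_s
        right = t >= right_touchdown_s
        if ground_mode(left, right):
            return t
    return None  # both legs never compressed within the window
```

For example, `first_deployment_second(9, 0, 20)` returns `9`: with the right gear on the runway at t = 0 and the left gear nine seconds later, nothing that depends on this logic deploys until the left leg is compressed.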

Technological evolution is no more orderly than its biological counterpart. Things happen in unexpected ways and decisions have unexpected consequences. We will never, ever have perfect safety. We can only have acceptable risk. The question of blame is moot. In fact, it is dangerous because it makes us talk about the wrong things, like correcting a “fault” instead of finding ways to break the event chain that led to the accident.

All we can do is mourn the dead, learn from the experience, and move on.

Blame is perhaps the wrong word, too many connotations, but finding out who was at fault and why is pretty important. The aircraft industry has such a good record precisely because they find out what went wrong and actively think about how to fix this in the future.

There’s much talk of how to implement something similar in medicine. Errors by surgeons are apparently kept relatively quiet for reputational reasons, so nobody learns from anyone else’s mistakes. It’s stupid that people should die because someone wants to save face.

Rud, your gripe with the circuit breaker is wrong.

Airplanes are designed to withstand many single-point failures, so one system not working is often not critical. Troubleshooting guides for various systems will say: if this doesn’t work, try restarting the unit by cycling the circuit breaker.

Added to that, the autopilot requires the rudder-limit system to be operational. Very logical. It will disengage when the rudder-limit system fails, and then you have to fly the plane by hand. Not a real problem. When the autopilot disengages due to something other than the pilot pressing the disengage button, a chime sounds to alert the pilots; at least, that was the case on Boeing when I last researched this. It seems they didn’t hear it.

There is a human-interface problem: the plane can think the pilots are flying while the pilots think the autopilot is flying. THAT is the problem. On the Airbus, a sensor in the control stick should detect whether a pilot is flying the plane, and if neither is flying, an alarm should sound. This would fail if pilots regularly “rest” their hand on the stick, which could be prevented by enforcing a rule: “if the autopilot is flying, you’re not allowed to rest your hand on the stick for longer than XX seconds.”
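The proposed rule can be sketched as a small monitor. This models the commenter’s proposal, not an existing A320 feature: the hand-presence sensor is assumed, the alert strings and class name are hypothetical, and because the proposal leaves the time limit as “XX seconds”, it is a required parameter here rather than a real figure.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class StickMonitor:
    """Hypothetical monitor for the proposed hand-on-stick rule."""
    max_rest_s: float          # the "XX seconds" the proposal leaves open
    rest_time_s: float = 0.0   # how long a hand has rested on the stick under AP

    def update(self, autopilot_engaged: bool, hand_on_stick: bool,
               dt_s: float) -> Optional[str]:
        """Advance the monitor by dt_s seconds and return an alert, if any."""
        if not autopilot_engaged:
            self.rest_time_s = 0.0
            # Autopilot off and no hand on the stick: nobody is flying.
            return None if hand_on_stick else "NOBODY FLYING"
        if hand_on_stick:
            self.rest_time_s += dt_s
            if self.rest_time_s > self.max_rest_s:
                return "HAND RESTING ON STICK"
        else:
            self.rest_time_s = 0.0
        return None
```

With `StickMonitor(max_rest_s=10.0)` (an arbitrary value chosen only for illustration), eleven one-second updates with the autopilot engaged and a hand on the stick return `None` ten times and then the resting-hand alert.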

I don’t know this plane but there seems to be some points in your argument that are incorrect.

Cycling the circuit breaker was a huge contributor to this accident because the crew couldn’t handle manual control of the aircraft.

The rudder limit “system” had built in redundancy so it was *NOT* dropping out the auto pilot systems – at least that is what is assumed because if both redundant channels of the rudder limit system fail at the same time then the equipment would interpret this differently.
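The redundancy argument in this comment can be sketched as two-channel status logic: one failed channel leaves the function degraded but working, and only a simultaneous failure of both loses it. The sketch assumes, as the comment implies, that the autopilot stays available while at least one channel works; it is not Airbus’s actual logic, and all names are hypothetical.

```python
from enum import Enum


class LimiterStatus(Enum):
    NORMAL = "both channels healthy"
    DEGRADED = "one channel failed, the other still limits rudder travel"
    LOST = "both channels failed"


def limiter_status(channel_1_ok: bool, channel_2_ok: bool) -> LimiterStatus:
    """Classify the rudder limiter from the health of its two channels."""
    healthy = int(channel_1_ok) + int(channel_2_ok)
    if healthy == 2:
        return LimiterStatus.NORMAL
    if healthy == 1:
        return LimiterStatus.DEGRADED
    return LimiterStatus.LOST


def autopilot_available(status: LimiterStatus) -> bool:
    # Assumption: the autopilot needs at least one working limiter channel.
    return status is not LimiterStatus.LOST
```

Under these assumptions, a single-channel fault such as the repeated alarms would leave `autopilot_available` true, while losing both channels at once (for example by pulling both circuit breakers) would not.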

No matter what caused the aircraft upset, the crew failed to manually fly the plane. This is an airline problem, because the airline’s policy is for crews to fly on autopilot and not touch anything, depriving them of the hands-on experience they need to be *capable* of dealing with a situation like this. Anything, including weather conditions, can cause an aircraft upset.

There would have been too many alerts for the pilots *not* to be aware of the autopilot disconnect, and shortly after that there would have been stall warnings (on the A320 these are aural, since Airbus sidesticks have no stick shaker).

RÖB

This is what the cockpit heard when the AP disengaged at 23:16:43

http://soundfxcenter.com/music/musical-instruments/da3829_Cavalry_Charge_Sound_Effect.mp3


Wow, WordPress doesn’t like MP3 files? Try this instead: http://tinyurl.com/hjcpmsa

Captain Lim Khoy Hing*, a famous AIRBUS A320 pilot, says that manual override is possible. Whatever was preventing it succeeded in NOT allowing them to regain control manually. However, on all modern Airbus planes, from the A320 up to the A340, the computers prevent the pilot from pitching up more than 30° (the wing might lose all lift and the plane crash) or pitching down more than 15° (to prevent overspeed). Furthermore, they will not allow the pilot to bank or roll more than 67°, or to make any maneuver greater than 2.5 times the force of gravity. In other words, if a nutty pilot (or an intruder) decides to pull, push or roll the control to its limit, the computers will keep the plane from stalling or dropping out of the sky.
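The envelope limits quoted above can be illustrated as a simple clamp on commanded values. This is only a sketch using the comment’s figures: the real normal-law protections shape the control law dynamically rather than clipping numbers, depend on configuration and speed, and some of them are not available in degraded laws. The `EnvelopeLimits` and `protect` names are hypothetical, and the negative load-factor limit is omitted.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class EnvelopeLimits:
    # Figures as quoted in the comment above (normal law, simplified).
    max_pitch_up_deg: float = 30.0
    max_pitch_down_deg: float = 15.0
    max_bank_deg: float = 67.0
    max_load_factor_g: float = 2.5


def protect(pitch_deg: float, bank_deg: float, load_factor_g: float,
            limits: EnvelopeLimits = EnvelopeLimits()) -> Tuple[float, float, float]:
    """Clamp a commanded attitude and load factor to the protected envelope."""
    pitch = max(-limits.max_pitch_down_deg, min(limits.max_pitch_up_deg, pitch_deg))
    bank = max(-limits.max_bank_deg, min(limits.max_bank_deg, bank_deg))
    load = min(limits.max_load_factor_g, load_factor_g)  # negative-g limit omitted
    return pitch, bank, load
```

A “nutty” command of 45° nose-up, 80° left bank and 3.2 g comes back as `(30.0, -67.0, 2.5)`; a command already inside the envelope passes through unchanged.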

So maybe the FACs came back online at the wrong time and interfered with manual control, or an outside sinister force was at play. In any case, the pilots could have gone to total manual override if they had had time to initiate it. I imagine it’s not an easy process; they might have to unbuckle and get out of their seats to go below or something. AIRBUS does not like pilots to do this, so I don’t think they make it simple to go to total MO. They really trust their computers.

*Captain Lim Khoy Hing – Malaysian Airbus A320/A330/A340 Flight Simulator Instructor at AirAsia

Here is the A320/321 Flight Crew Training Manual – Look up anything you want in this 300+ page PDF manual. I’m too lazy to browse it but I’m sure all questions can be answered about this plane’s characteristics.

http://tinyurl.com/zosysp9

Please read the report, which details how the A320’s controls work and what happened in this accident, instead of imagining how they work. The transition from normal law to alternate law was initiated when the captain disconnected the two flight augmentation computers, and alternate law remained in place until the destruction of the aircraft. Alternate law allows the pilot to fly the aircraft without some of the protections. There is an even more degraded state, direct law, in which the flight computers pass the sidestick inputs straight to the control surfaces without any protections; this basically corresponds to a conventional aircraft. In addition, the A320 has mechanical cables as a backup in case of fly-by-wire failure. I have not searched extensively, but I can’t remember reading a report of an A320 whose fly-by-wire failed and where the mechanical backup had to be used. Newer airliners use an electrical backup only.
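The degradation sequence described in this reply can be summarised as a lookup table of which envelope protections remain in each state. This is a simplified sketch from general knowledge of Airbus control laws, not from the accident report: it ignores the ALT1/ALT2 sub-modes, treats the stability features that replace some protections in alternate law as simply absent, and uses hypothetical names throughout.

```python
from enum import Enum
from typing import Dict, FrozenSet


class ControlLaw(Enum):
    NORMAL = "normal law"
    ALTERNATE = "alternate law"
    DIRECT = "direct law"
    MECHANICAL_BACKUP = "mechanical backup"


# Simplified: which envelope protections remain in each state.
PROTECTIONS: Dict[ControlLaw, FrozenSet[str]] = {
    ControlLaw.NORMAL: frozenset({"pitch attitude", "bank angle", "load factor",
                                  "high angle of attack", "high speed"}),
    ControlLaw.ALTERNATE: frozenset({"load factor"}),
    ControlLaw.DIRECT: frozenset(),
    ControlLaw.MECHANICAL_BACKUP: frozenset(),
}


def lost_protections(before: ControlLaw, after: ControlLaw) -> FrozenSet[str]:
    """Protections the crew no longer has after a degradation."""
    return PROTECTIONS[before] - PROTECTIONS[after]
```

In this simplified model, `lost_protections(ControlLaw.NORMAL, ControlLaw.ALTERNATE)` yields the pitch-attitude, bank-angle, high-angle-of-attack and high-speed protections, which is the situation the crew flew in from the FAC reset onward.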