Ever wish you could guess more precisely? Or maybe just make your guesses look confusingly legitimate? Guesstimate could help.

It uses Monte Carlo simulations to add some legitimacy to the ranges given to it. For example, if you say the cost of lumber for your next project could be between 2 and 8 dollars a piece, you don’t typically mean that it’s equally likely to be any of those numbers. Most people mean that the boards are most likely to be around 3-5 dollars and everything lower or higher is less probable. Using different shaped distributions, Guesstimate can help include this discrepancy of thought into your pseudo-calculations.

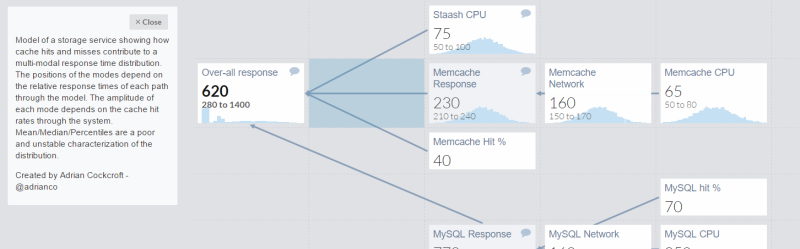

It’s a neat bit of code with a nice interface. There is a commercial side to the project for those who want to collaborate openly or pay someone to host it privately. It has a few neat example models for those interested.

Does anyone use anything like this in their daily lives? Is there another similar project out there? This kind of thing is pretty cool!

I have used this from time to time. http://nbviewer.jupyter.org/github/CamDavidsonPilon/Probabilistic-Programming-and-Bayesian-Methods-for-Hackers/tree/master/

I’ve also used that book. Very good I’ve found

From an economics/finance perspective, @risk by palisade is popular for Monte Carlo analysis as an excel addin with nice front end. Yasai/yasaiw is another option. Wikipedia has a page on such tools. Correlation between variables is fairly important to consider too.

The day job…

Specialist reliability engineering applications like Reliasoft Blocksim (http://www.reliasoft.com/BlockSim/index.html) employ Monte Carlo simulations run on readability models to forecast the performance of (populations of) repairable systems. These forecasts can be intractable to calculate algebraically, as the simplifications and assumptions needed to do so invalidate the outcomes. (However, this hasn’t stopped reliability engineers doing just that for decades, and garbage concepts like constant MTBF and associated standards like the defunct MIL-HKBK-217 are still widely used today). The problem is that the probability that a given component of a system will fail due to a given mechanism during a period of time does not remain constant. This is true of the failure modes of both electronic and mechanical components, with very few exceptions (software is a whole different discussion…). So depending on age, or another duty parameter (e.g.,operations cycles) and affecting stress factors (e.g., temperature), the place of each component is its “reliability function” is not constant. Different components will have different reliability functions and when components are replaced their reliability function is “reset”. Components may be replaced reactivity due to failure, or proactively as part of a preventative maintenance regime. (The difference between letting your car run out of gas, or topping up when the gauge is low). So, all of this might seem to make forecasting the reliability, availability and operation cost of anything other than a very simple system seem impossible to calculate, however Monte Carlo simulation enables comparisons to be made between, for instance different preventative maintenance regimes and for sensitivities in a system to be identified.

Typically, a reliability model to support Monte Carlo simulations is constructed and updated during product development. This is used in combination with other design analysis techniques like functional DFMEA, the overall aim being to reduce risk affecting time to market, development cost and product performance.

Reliability engineers are still rare beasts in product development, but the discipline has itself to blame to a significant extent as it comes with a awful lot of pseudo-scientific baggage.

Implementing this into your daily routine is as close I can think of to cyborgization as possible of today.

Especially good when non linear systems make outcome probabilities very hard to calculate.

My first thought its this would be nice for quick fit tolerance analysis. If shaft is 3.000 +- .001 and hole is 3.001 +- .001 how often will the parts not fit? The answer is it depends on the distribution, dont’ see a way to tweak stuff like stdev.

Creator here. It is possible, just with a different notation. You can type ‘3 to 8’ to represent an intuitive 90% confidence interval. If you would rather use mean and standard deviation, you can use the syntax ‘=normal(mean, stdev)’.

Here’s a model that does this.

https://www.getguesstimate.com/models/7456

The way it can run the math above is by testing how many samples have it fit. In this case, it seems like it should fit around 75% of the time.

Cool! You should consider playing up that usage case for your site, very neat to be able to whip up a statistical tolerance analysis like that. Next is the classic example of 4 parts with a width tolerance of +-0.01″ that sit next to each other and fit in some space with a tolerance of +-0.01″. You can plan for worst case and have a ridiculously loose nominal clearance of 0.06″, or you can use statistics and design a much closer fit while still ensuring the parts will indeed fit the vast majority of the time. The neat thing about using guesstimate would be just the ease of modeling something like that quickly, each part could have different distributions, etc.