Embarrassing confession time: I never learned my multiplication tables in grade school. Sure, I had the easy tables like the twos and the fives down, but if asked what 4 x 7 or 8 x 6 was, I’d draw a blank. As you can imagine, that made me a less than stellar math student, and I was especially handicapped on time-limited tests with lots of long multiplication problems. The standard algorithm is much faster when you’ve committed those tables to memory, as I discovered to my great woe.

I was reminded of this painful memory as I watched Charles Lohr’s 2019 Supercon talk on the usefulness and flexibility of lookup tables, or LUTs, and their ability to ease or even completely avoid computationally intensive operations. Of course most LUT implementations address problems somewhat more complex than multiplication tables, but they don’t have to. As Charles points out, even the tables of sines and logarithms that used to populate page after page in reference books have been ported to silicon, where looking up the correct answer based on user input is far easier than deriving the answer computationally.

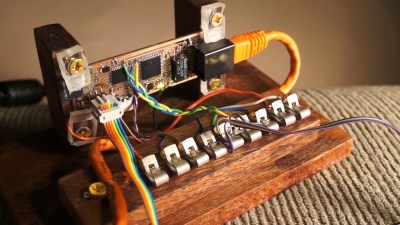

One of the most interesting examples of how LUTs can achieve the seemingly impossible lies in an old project where Charles attempted to build a Minecraft server on an ATMega168. Sending chunks (the data representations of a portion of the game world) to clients is the essential job of a Minecraft server, and on normal machines that involves using data compression. Rather than trying to implement zlib on an 8-bit microcontroller, he turned to a LUT that just feeds the raw bytes to the client, without the server having the slightest idea what any of it means. A similar technique is used by some power inverters, which synthesize sine wave output by feeding one full cycle of values to a DAC from a byte array. It’s brute force, but it works.

Another fascinating and unexpected realization is that LUTs don’t necessarily have to be software. Some can be implemented in completely mechanical systems. Charles used the example of cams on a shaft; in a car’s engine, these represent the code needed to open and close valves at the right time for each cylinder. More complicated examples are the cams and gears once found in fire control computers for naval guns, or the programming cards used for Jacquard looms. He even tips his hat to the Wintergatan marble machine, with its large programming drum and pegs acting as a hardware LUT.

I found Charles’ talk wide-ranging and fascinating. Originally I thought it would be an FPGA-heavy talk, but he didn’t actually get to the FPGA-specific stuff until the very end. That worked out fine, though — just hearing about all the cool problems a LUT can solve was worth the price of admission.

And for the curious, yes, I did eventually end up memorizing the multiplication tables. Oddly, it only clicked for me after I started playing with numbers and seeing their relationships using my first calculator, which ironically enough probably used LUTs to calculate results.

“Embarrassing confession time: I never learned my multiplication tables in grade school.”

Very handy when being a writer. ;-)

“Of course most LUT implementations address problems somewhat more complex than multiplication tables, but they don’t have to.”

Run into them in photography and video programs.

i once needed fast sines and cosines for fixed point numbers. i ended up making a spreadsheet to compute the numbers and dump the data to an eeprom. worked flawlessly.

Historically there was a single entry in a look up table in the Intel ‘386 math co-processor that was wrong and caused a recall.

I only knew about the Pentium FDIV bug. I’ll have to look that up.

https://www.verifsudha.com/2017/09/11/pentium-fdiv-bug-curious-engineer/ “Pentium’s SRT lookup table implementation is matrix of 2048 cells. Only 1066 of these cells actually contained the valid values. Due to issue in script loading the lookup table 5/1066 entries were not programmed with valid values.”

My favorite LUT trick: the old frequency-modulation chips from Yamaha had a LUT of logs of sines, and another LUT for exponentiation All of the sin(x)*sin(y) stuff is done as exp(log(sin(x))+log(sin(y))), with all the tricky math done in LUTs. That reduces the math to three lookups and one add.

Here’s a die-shot of the LUTs, for the skeptical:

https://docs.google.com/document/d/18IGx18NQY_Q1PJVZ-bHywao9bhsDoAqoIn1rIm42nwo/edit?pli=1

This was a great talk about a broadly useful “trick” to know about.

Nice trick ! Thanks for the reference.

Just one minor detail: I think the formula should be “exp( log(sin(x)) + log(sin(y)) )” you described it correctly in the text: “That reduces the math to three lookups and one add”.

Best regards,

A/P Daniel F. Larrosa

Montevideo – Uruguay

Thanks. Fixed it. Yeah, it’s a great trick.

Meh, just give it lookups for 0, 15, 30, 45, 60, 75 and 90 degrees and let it interpolate the rest.

I don’t know degrees. Radians are my bag. They’re the ginchiest! The rate of change of sine(x) is constantly changing so interpolating over a 15 degree interval? Not my first choice.

i for one like binary degrees where 256 = 360 = 2pi. therefore it fits in a single 8 bit data type. very lut friendly.

With a little bit of lookup index math (which you may get for free depending on your lookup ROM implementation) you only need to store a quarter of the sine wave as well, half of the wave is probably easier with only a negate on the result for the second half of the wave.

If you want to do shortcuts you can have a much smaller (bit width) LUT that just holds the difference between a sine and a triangular wave as the relevant parts of the latter is a simple slope. i.e. 16 bit sines using an 8 bit LUT plus some very simple math. Not sure but a LUTs that has a chain of vectors would be even more compact, and that would relate to the interpolation idea?

I’ve found GNU octave was worth learning for this. That way I could just write a script that spit out preformatted source code.

I just write a script:

https://github.com/sjlongland/atinysynth/blob/master/gensine.py

which I then call from the Makefile:

https://github.com/sjlongland/atinysynth/blob/master/Makefile#L42-L45

I once built an altimeter that employed a 16 bit ADC. I needed to translate a pressure sensor voltage out into a very non-linear relationship to altitude.

The top 8 bits were translated into an altitude table read using “Piecewise Linear Interpolation.” The bottom 8 bits translated into intermediate altitudes by prorating differences between successive upper byte ranges.

The process is described in Microchip Application Note AN942. It is still useful when using microcontrollers with limited complex math functions.

Come to think of it, all the 80s and early 90s 8 Bit ECUs, (OBD-1, EEC-IV, SMEC etc) use similar lookup tables in ROM for air fuel ratio, altitude and barometric compensation, anything not linear.

I worked with the guy that designed the first EFI for Chrysler. He said he used an 1802 and a simple set of lookup tables to decide the duration of the injector pulse. It used only vacuum, and RPM (and Throttle position?) and it still beat the crap out of the most sophisticated carburetor.

Funnily enough all modern EFI tables still work with lookup tables for ignition timing, fueling etc. With some long-term trimming added to it (and close loop control in certain scenarios)

I worked on an air-traffic control project in the mid-late 80s. They initially picked us because our floating-point chip did trig functions faster than almost anything else out there. There were other reasons we (fortunately for us, and possibly also for you) didn’t get the project, but for the floating point part, we found that the radar data was coming from 10-bit A/D converters, and a 1024-item lookup table really is MUCH faster than doing floating point trig.

I keep thinking of a way to make a mechanical altimiter to aide a correction screw to air mix on a carburetor so that I could ride non stop up Pikes Peak at full throttle on my old BMW despite change in air pressure.

Do you have any links to that project? I might end up going the electronic route

https://www.microchip.com/wwwAppNotes/AppNotes.aspx?appnote=en020511

The old Rochester Quadrajet carburetors used tapered metering rods to adjust the A/F mixture in changing conditions. Even the the larger GM I6 engines used a spread bore version of the Quadrajet, basically a Quadrajet split from to back. I have my doubts that adjusting mixture screws could work as fast as those metering rod, so yea EFI is probably in your Pike’s Peak future. Have fun,and stay on the road. Not often, going motorhead happens on HaD.

I, with my boss at the time, did EXACTLY this on what was the first electronic altimeter that was used in a missile target drone for Beech aircraft circa 1990

We used a 2x 24bit ADCs alternating between self calibration and reading to compensate for the temperature skew as the drone hit MACH 4 vertically and arched down, looking exactly like a SCUD at around 100,000 feet. (The drone cost $100k instead of the $1M we were paying Russia for real SCUDs to practice shooting down).

This thing could accurately measure between the floor and over your head, and go to 110,000 ft. with no loss of accuracy between -40C and 85C. I don’t remember the rated accuracy but none of my units were off by more than 500ft at 110,000ft.

The lookup tables allowed us to use the pressure sensors way beyond their rated accuracy and temperature range by simply characterizing each individual unit sensor & ADC at 7 temperatures and a bunch of altitude/pressures. We used excel to convert the data into tables for each temperature and pressure. Fortunately, silicon strain gauge pressure sensors are actually pretty linear except at the (beyond data sheet) ends of their scales.

Unfortunately, it has been 3 decades so I do not remember exactly how I meshed the pressure sensor output with the temperature.

The results were outputted into a DAC that gave the device a linear feet-to-voltage output. That function alone needed Piecewise Linear Interpolation (which I first saw described in a National Semiconductor App note circa 1975) because we couldn’t find a pressure to altitude equation accurate enough to calculate it with an 8 bit uP (your problem as well).

I started doing the calibration manually but later automated the process operating the Pitot calibrator and environmental chamber with VBA in a cell of the Excel data gathering sheet.

For Pete’s sake, why on earth did you feel the need to abbreviate Lookup Tables to LUTs? It is just painful and annoying. Please stop taking writing tips from the Department of Defence.

on a vaguely on topic front, back in ye-olde days of computing it was common place us lookup tables to replace cpu expensive calculations, generally trig or log functions, in game engines. It was nostalgic to seeing it being used again.

…Because that’s a n industry standard acronym for them? Having labels for ideas is generally a useful thing to do. That way, when someone says “CPU” or “LUT”, the audience can be pretty sure what problem space they’re talking about. Compare “int” vs “integer;” do you consider them to be the same thing? I don’t, despite “int” just being a shortened version of “integrer.” It has nuance attached to it. If we’re talking about C, that might mean it’s a 16-bit number.

Same with LUT vs Lookup table. LUT might have implications that the generalized term “Look up table” doesn’t.

Hey, lets not start LUT shaming here!

Haha! I had to read that out loud.

I’m using a LUT on an 8-bit PIC with four GPIOs to a hardware (R2R) DAC to generate a sinewave as part of an Automated Test Equipment bench. Since it’s running at a fixed frequency I was able to design an efficient filter for the output, and get less than 0.015% THD which is good enough for the test. $5 solution to replace the $4,000 instrument we usually specify.

Check Don Lancaster’s “Magic Sine Waves”.

When I needed to reverse the bits of a byte on a microcontroller – which is harder to do than it seems like it should be –I used a lookup table. Actually, I used a byte array where the input value was the index and the array value was the reversed byte.

Where does one draw the line between sample playback / DDS and “using an LUT for the sinewave”?

But yeah. Same-same.

My sine LUT is 126 bytes, and the whole program (in assembly) is about 45 lines (141 with all the comments and defines). You’re not going to do that with a sample playback.

In process automation, I have used LUTs to solve significant business problems while scoping out the requirements for a real business solution. Which is the inverse of what he was talking through. I create luts from the the output of humans being really smart, then ongoing maintenance shows what inputs are meaningful to the output. This then drives the RFP process to make sure when we tool the process we meet actual requirements without too much bloat. It seems backwards, but it allows us to take seemingly random work and turn it into deterministic ins and out.

I’d be interested in reading a case study or any sort of writeup about one of those projects.

sounds like it could be handy, but I don’t understand what you mean. Please given an example?

When I arrived at my employer we had word docs that described the standard security build in our main application. Depending on the role of a user we would configure about 150 fields.

I exported those 150 fields for all users, then used the hr database for those users to identify individual roles. Did a huge crosswalk to normalize build for each role, and put it all in a big excel spreadsheet.

We built from that combining user demographics and look up table role info until we could clean up the inputs to the bare minimum required for a standard build.

Once we had employees under control we used the same process to map out all the other authoritative requestors. Once we had that done we built a similar look up table for 5 other apps.

In building out the look up table, we noticed that ongoing maintenance was huge because the hr identified roles were super dynamic, but the required build was not. We also noticed that some fields were tied closely to the job title and some were tied to the location. So going into writing our RFP we have specific requirements that we will need so reduce the need for maintenance.

The look up table also allowed us to double our capacity without doubling our staff because we clustered the human pieces, scripted the process around the look up table, and partially automated. We also did that while reducing our processing times by 50%

In the upcoming year we will be retooling the process to meet the business need. That includes basics like functional role group management, dynamic role lifecycles, exception lifecycle management, entitlement management, and we will take over managing all the major apps in the org.

So, I get that this is super not hardware hacky look up tables in silicone. But… I recognized so much of the talk in my own work that I thought I would mention it working on the business process side.

The calculator cams in old navy guns have always fascinated me. I’d love an article on how they were developed

Assume you’re referring to the ballistic cams?

https://archive.org/stream/B-001-002-575/B-001-002-575_djvu.txt

“Embarrassing confession time: I never learned my multiplication tables in grade school.”

Why is that embarrassing? I’ve never even heard of multiplication tables (or never learned and immediately forgot them) in grade school, gymnasium or university.

The following isn’t quite right and a bit broad but I like math because every problem can be solved by reducing it to (the) basic rules.

So I’m glad I was never required to rote learn such tables because that’s the exact opposite of that principle.

Same reason why math in university wasn’t as “fun” because e.g. “complicated” integrals with sines, pies, roots and so on can not just be integrated as logically without a (memorized) table of the – lets call them – wired integrations.

My personal philosophy in that regard is that I don’t want to “waste” capacity by just storing information when it can just be stored elsewhere (paper, computer, etc.). My emphasis is on being able to use, combine and process such “externally” stored information.

Example: I don’t care to remember the exact DIN/ISO norms that contain the rules on how and where electrical wiring should be put in a private home – just the meaning/intention of the rules but not the literal text.

This has major drawbacks too. Like, it might happen that I need to solve the same problem twice because I forgot how I solved it the first time or sometimes I can’t immediately explain why I did something in a specific way, but I’m kinda okay with that.

On that not I’ve never thought much about pros and cons of rote learning and it is more interesting than i anticipated (read the English and the German one).

Are multiplication tables still taught in the USA? Just some states? Only some school-forms?

I couldn’t find useful/definitive answers on that for Germany or USA.

When you do have it memorized and use it, how exactly does the thought process work? eg. for 7×8 and 13×17?

Some interesting and or “scary” links:

https://www.nytimes.com/2014/07/27/magazine/why-do-americans-stink-at-math.html

https://www.quora.com/Should-children-memorize-the-multiplication-tables-If-yes-what-is-the-upper-limit

So much arrogance…

Seems to be a member of the “memorize never, multiply infinite times” school of thought. Wouldn’t want to use up that “capacity”! SMGDMFCSH.

Listen to me now and believe me later: the multiplication table IS a lookup table (LUT for the cool kids).

I’m pretty sure even addition is done by memorising. You do a lot of practise of it as a kid. Would the human brain have evolved an accurate discrete mathematical facility when everything else in there is a shortcut for drawing rough conclusions quickly?

You remember how much each pair of digits adds up to. You don’t count them, do you? You don’t do a XOR and AND like a computer would.

For multiplication I remember all the squares and a few other results, then adjust by adding or taking away as necessary. Over the years more multiples have lodged in there.

I don’t recall learning them by rote at school, and if I did I forgot them, like I suspect everyone else did. So it’s partly remembering some and reconstructing others. Most people are pretty bad at maths, so rote learning, chanting in unison what seven nines are, doesn’t appear to work.

A method that is not used is guaranteed not to work.

He missed the basic of a lookup, that your data is used as the address of the desired result. Building such a table is the “baking in”. I’m a serious enthusiast of look up table tricks. In processors with easy memory mapped I/O, like the 6502, big lookup tables can fit in a couple bytes of RAM and can speed up even simple operations by a factor of 10 (or more).

For example, to check that an ASCII char represents a number from 0-9. The 6502 can read or write to the first 256 bytes of ram (Zero )age) in 3 cycles. A EPROM/ROM can be mapped to a single byte of RAM in Zero Page. The first 256 bytes of ROM is loaded with all hex FF except the locations 48 thru 57, which have 00. To use, write an ASCII char to the ROM mapped input where it is used as the address. Read the same location to get the table content, which will be zero if the char is a number.

But go the next step. Fill the ROM with hex FF except locations 40 thru 57, where you put the binary value of the numbers 0 to 9. Now the single write/read operations return FF if the ASCII is not a number and the correct binary value if it is. This takes six cycles and only 4 bytes of code. The usual code method takes a couple compares and branches with a subtract and or shifting that takes 50 to 100 cycles. The LUT method is 10 or more times faster.

You can apply this to a plethora of snipets, like instant 8 bit multiply. Or with the very big EEPROMS today, instant 16 bit multiply. On little micros, a ROM used this way can shrink the needed code space and give a dramatic speed increase. Many LUTS can fit in one ROM with creative addressing schemes.

Also, LUT routines are so short that they can just go inline instead of subroutined.

Sorry if I’m repeating, but I was not able to watch the whole presentation yet. I did all this in the 1980’s with 6502’s in scientific instruments. With 1MHz processors, the gain was fantastic. (Now I have to look at the AVR328 assembly language to look for fun tricks with Arduino’s.)

– Just wanted to say your comment was appreciated, and quite interesting!

Ooohh that’s a really good one – I will try to remember that for the future. I use it A LOT in MANY of my projects, and lets you “fold” the lookup table into a much smaller size! I use it to handle the logic of NTSC by saying “what the line does” in any given case, then another LUT actually does the outputting.

The IBM 1620 couldn’t natively multiply, so every program that needed multiplication had to load up a low memory LUT multiplication table. (Didn’t watch the talk, sorry if he already told you.)

It couldn’t add or subtract either; its entire ALU was implemented as a lookup table.

I was wondering if a LUT can be used for evaluating hashes in cryptocurrencies? Essentially what a miner does?

Like a rainbow table?

Theoretically you can use a LUT for absolutely anything. The main drawback is they get big very quickly. IE for each extra bit in the input you want to calculate, you have to double the size of the table. A table to look up 8 bits’ worth of stuff is 256 bytes. For 16 bits (say multiplying 2 8-bit numbers, or doing anything to 2 8-bit numbers) it would be 64K long. For 32 bits it’d be 4GB and for much more than that, impractically enormous. How big is a hash?

LUTs are a well-known thing to even a novice programmer. Since coin mining is a millions of dollar business (what’s the value of the currently existing Bitcoins at the current price?) I suspect if this were revolutionary, or even gave a 5% advantage, they’d have done it already. Very smart progammers understand the sort of encryption stuff Bitcoin is based on. Similary You-Know-Who do a lot of cracking of encryption, or try. They know about LUTs.

I realise this doesn’t directly answer your question, but I can at least tell you, if it’s any good they’d have done it already, and might well have. You’re not going to make your millions that way!

OK, I looked it up. Bitcoin hashes are 64, 128, or 256 bits long. For a 64-bit lookup table you’d need 17179869184 gigabytes of storage, though would be able to look it up in a fraction of a second. Would the storage space you’d need (somebody look up how much cloud storage exists in the world) cost less than the coins you could mint? But that’s only the smallest 64-bit ones.

In the future of course storage and memory will get bigger. Til they hit the bump, like CPUs did with Moore’s Law. But I’m told Bitcoin has that accounted for, where each coin gets harder to mint, Satoshi thought of that. Which is good because a touted global currency that melts down cos hard drives got bigger wouldn’t be a lot of use. So the answer is definitely “no”. It’s the sort of thing where you’d need to start using all the atoms in a planet to store your table.

Sorry to double-post but I’ve just realised, you’d have to calculate all the hashes in order to store them in the table in the first place! So assuming you were doing it with the 128 and 256-bit values, and had managed to access extra universes to store the data in, in filling up the table you’d have mined every bitcoin anyway. The moment you completed it would be the moment it became obsolete.

I’m a bit pissed off cos I remember the start of bitcoin, just about when people were buying graphics cards, with bitcoin, to mine more bitcoin. The people who sold the graphics cards must be happy now. At the time I thought they were a bit stupid, and didn’t bother mining any cos the whole thing looked like a bit of a nutty waste of time from a few libertarian computer programmers (the two often being coincidental for reasons I won’t go into) that’d never amount to anything. I bet a lot of the early miners are absolutely loaded, if they held onto the coins up til the value went really high.

Mind, I’d probably have blown mine on drugs 5 minutes after the Silk Road opened for $5 a bitcoin. So never mind.

I u a look up table for my audio spectrum plot for a couple of my projects. It computes 20* log10( sqrt() ) for the sums of squares reslts from a FFT. i.e. complex magnitude to dB UV + pixels scaling and plotting as well as the code do a linear search.

Why bother with floating point log10 and sqrt functions when I only need a low resolution int8 result?

For Square Roots, an even faster method than a lookup table is “just don’t do them”; Jon Bentley’s Programming Pearls book talks about how a lot of the time that you’re calculating sqrt( a**2 * b**2 ) you’re going to compare that to other values that were calculated the same way (e.g. to find the pair of points with the shortest distance between them), so just do the comparisons with the squared value, and then if you later need to get the actual distance, you can calculate it just once instead of for every pair of points.

I remember those little quick connectors from my childhood, they were on the Lionel train set at my grandad’s. What are they called?

I wonder if i can use LUTs on ESP32 to bitbang 433MHz RF signals to control that cheap AC power switches…

Charles Lohr could! Look up the insane stuff he’s done on here to show that. My favourite was transmitting modulated RF NTSC video from software.

Also, a very nice explanation on how to approximate a first step of Newton-Raphson in a floating point division algorithm using a lookup table: http://www.acsel-lab.com/arithmetic/arith9/papers/ARITH9_Fowler.pdf