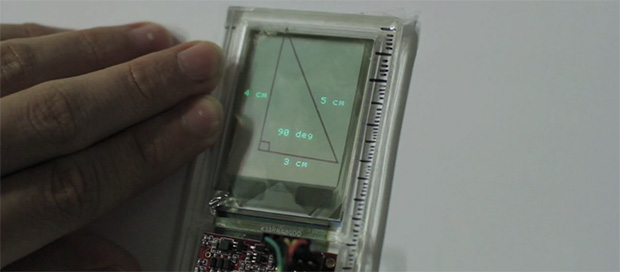

Just about every engineer needs to take a drawing class, but until now we surprisingly haven’t seen electronics thrown into rulers, T-squares, and lead holders. [Anirudh] decided to change that with Glassified. It’s a transparent display embedded in a ruler that is able to capture hand-drawn lines. These physical lines can be interacted with or measured, turning a ruler into a bridge between a paper drawing and a digital environment.

For the display, [Anirudh] mounted a transparent OLED (TOLED) display with a digitizer input into a ruler. The digitizer captures the pen strokes underneath the ruler, letting the display interact with the physical lines, either to calculate the length and angle of lines or just to bounce a digital ball inside a hand-drawn polygon.
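Since no code has been published, here is only a minimal Python sketch of how the length and angle of a captured line could be computed from two digitizer points. The function names, the `units_per_mm` scale factor, and the coordinate convention are all assumptions for illustration, not anything taken from Glassified itself.

```python
import math

def measure_stroke(start, end, units_per_mm=1.0):
    """Return (length_mm, angle_deg) for a straight stroke.

    start and end are (x, y) tuples in digitizer units; units_per_mm
    is a hypothetical scale factor for the tablet in use.
    """
    dx = end[0] - start[0]
    dy = end[1] - start[1]
    length_mm = math.hypot(dx, dy) / units_per_mm
    # Angle measured from the positive x axis, in degrees.
    angle_deg = math.degrees(math.atan2(dy, dx))
    return length_mm, angle_deg

def angle_between(line_a, line_b):
    """Return the angle in degrees (0 to 180) between two strokes.

    Each line is a (start, end) pair of (x, y) tuples.
    """
    _, a = measure_stroke(*line_a)
    _, b = measure_stroke(*line_b)
    diff = abs(a - b) % 360.0
    return 360.0 - diff if diff > 180.0 else diff

if __name__ == "__main__":
    length, angle = measure_stroke((0, 0), (3, 4))
    print(f"length={length:.2f} mm, angle={angle:.2f} deg")
    print(angle_between(((0, 0), (1, 0)), ((0, 0), (0, 1))))
```

A real implementation would first have to fit a straight line to a noisy pen stroke; this sketch assumes the endpoints are already known.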

There’s no word on how this display is being driven, or what kind of code is running on it. [Anirudh] said he will have some schematics and code available up on his website soon (it’s a 404 right now).

So cool!

Anyone know where to get these transparent OLED displays?!

SparkFun

Hmm, I am somewhat sceptical that this is an actual video of the device working and not only a proof-of-concept, pre-recorded (“faked” if you want) demo.

There isn’t any obvious sensor on the ruler that could actually capture the pen strokes. Furthermore, some of the features, such as the bouncing ball and the angles, require image understanding code to be running on the device – complex stuff, not something a tiny microcontroller could do. E.g. how would the ruler know whether to draw a ball or display the measurements? Or how would it recognize the direction of the arrow? There seems to be a lot of stuff missing in the video.

I wouldn’t be at all surprised if there was a camera above the desk (and out of view) and the device was only used to display the results computed by an off-board computer. That cable is obviously plugged in somewhere.

It is a neat idea, though.

It looks like from the video he has a pen graphics tablet to get the pen input.

Ohhhh. Yeah, I wondered where the “digitizer” part of that was. Good catch.

I do think the device is being run by a PC. I think the pen is the type with a sensor that uploads the drawings to a PC; the PC then generates the display and sends it out over that serial cable, and the user is lining up the display by eye.

If you notice, the line up is being made to the edge of the piece of paper being drawn on. So X,Y coordinate system.
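To make the X,Y idea concrete, here is a rough Python sketch of how a PC might map a point captured in paper coordinates onto display pixels, assuming the ruler sits square to the paper edge. Everything here is hypothetical: the function name, the display origin, the pixel density, and the resolution are illustration values, not details from the project.

```python
def paper_to_display(point_mm, origin_mm, px_per_mm, size_px):
    """Map a paper-space point (mm) to a display pixel, or None if off-screen.

    origin_mm is where the display's top-left pixel sits on the paper,
    assuming the ruler is aligned with the paper edge (no rotation).
    """
    x = (point_mm[0] - origin_mm[0]) * px_per_mm
    y = (point_mm[1] - origin_mm[1]) * px_per_mm
    col, row = round(x), round(y)
    if 0 <= col < size_px[0] and 0 <= row < size_px[1]:
        return col, row
    return None  # The point is not under the display.

if __name__ == "__main__":
    # Hypothetical setup: display corner at (10 mm, 10 mm), 4 px/mm, 160x128 px.
    print(paper_to_display((30.0, 20.0), (10.0, 10.0), 4.0, (160, 128)))
    print(paper_to_display((5.0, 5.0), (10.0, 10.0), 4.0, (160, 128)))
```

If the ruler were rotated relative to the paper, a rotation term would be needed as well, which is presumably why lining it up with the paper edge keeps things simple.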

I see your point but I don’t see why. If the board space was a little bigger it could easily be a Raspi or a BeagleBone. Why anchor oneself to a PC for something like this?

Because this is a prototype. The goal is getting it working, not making an elegant little DIY project. PCs are just about everywhere and they’re easy enough to work with. That’s all you need, really.

That’s the thing that people don’t get about using arduinos and raspberry pis for simple tasks, too. It’s a nice platform that’s easy to work with and you can implement an idea quickly.

More info here:

http://www.creativeapplications.net/objects/glassified-ruler-with-transparent-display-to-supplement-physical-drawing/

I doubt this is fake so much as an “example” of a use case. It may be using an external camera and other stuff, but so what? This is feasible to do inside of the ruler, just maybe hasn’t been done YET. OpenCV can do everything shown, and it could do it with a tiny cell-phone style camera and an ARM CPU. Maybe it’s not available NOW, but it’s POSSIBLE now.

As the article linked by Rob describes, it uses a Wacom for input and a PC for the calculations.

As for your suggestion of a camera, that cellphone camera has a tiny sensor and it would need to be well away from the surface to capture, but then your hand and the pencil are in the way, so how the hell would you do this with a cellphone camera module?

But anyway, it’s still a nice gadget, and what he can do for now is make it wireless so that the serial data doesn’t need that silly cable. Although – that cable also seems to supply the power so you’d need to have a battery on the ruler too.

A cellphone camera at an angle could do it. It doesn’t need to be far away if it’s pointing mostly up. You’ll potentially lose accuracy if it’s far from the edge. Then again, you could also use a low resolution “scanner” element that you drag across the space first. Sure, it’s one more complication, but not terribly cumbersome. My point was not to say that I knew how he did it, as I didn’t read the article (it was down when I tried to visit earlier), but only to say that it was possible and that the video wasn’t fake so much as a proof of concept.

Fair enough, and as for the camera, I think if you make a box with a glass top and put the camera underneath, you might be able to solve the vision issue, since it could then see the lines from the bottom through the paper! Maybe if you don’t use an IR filter it will work even better and won’t need especially translucent paper either.

You can get these displays from SparkFun: https://www.sparkfun.com/products/11788

They have a microcontroller and microSD slot on them.

Handy link, but at $179 it’s more pricey than I expected; you can buy a full HD IPS monitor for that kind of money.

Still, it is neat though :)

I guess there’s a digitizer under the square of paper that he has to draw in.

A brilliant idea

Can something like that be used as a portable scanner? For example – you see an image or text in a magazine. You then scan it and save the scan to an attached memory card. Could that be possible with a screen like that?

Is there any particular reason why a limited resolution scanner cannot be made just like the display is done? I see a problem with transparency, obviously a transparent sensor cannot sense light, but the sensing elements could be relatively sparse, capturing some light and still leaving a lot of transparent area.

Actually for a traditional geometry class, all you need is a compass and ruler, because it’s really about the process of doing proofs in an ideal world, where you don’t need to worry about surfaces that aren’t perfectly smooth or angles that aren’t quite right :-) But yeah, this is cool stuff.

It says in the link that it uses a digitizer. My guess is that the digitizer is underneath the paper and therefore you actually need to draw your lines in order for them to be captured, i.e. you can’t just place this ruler over a pre-existing drawing and expect it to capture the image.

This could also be feasible using one or more linear pixel array sensors, like those in a scanner, mounted at the sides of the display and combined with one or two optical mouse sensors. Then, by dragging the device over the image, it could be possible to digitize it. It could probably even be combined with accelerometers or gyros for better precision.

Just a couple of ideas..
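As a rough illustration of the linear-sensor-plus-mouse-sensor idea, the Python sketch below stitches scanlines into an image using accumulated displacement counts. It is purely hypothetical: the sample format, `counts_per_row`, and the assumption of motion along a single axis are made up for the example, and no real sensor API is involved.

```python
def stitch_scanlines(samples, counts_per_row, height):
    """Place scanlines into image rows based on accumulated displacement.

    samples is a list of (delta_counts, pixels) pairs: delta_counts is the
    movement reported by a (hypothetical) optical mouse sensor since the
    previous sample, and pixels is one line from the linear sensor.
    Rows that are never visited stay filled with None.
    """
    width = len(samples[0][1]) if samples else 0
    image = [[None] * width for _ in range(height)]
    position = 0
    for delta_counts, pixels in samples:
        position += delta_counts
        row = round(position / counts_per_row)
        if 0 <= row < height:
            image[row] = list(pixels)  # Later samples overwrite earlier ones.
    return image

if __name__ == "__main__":
    samples = [(0, [1, 1]), (10, [2, 2]), (10, [3, 3])]
    for row in stitch_scanlines(samples, counts_per_row=10, height=4):
        print(row)
```

Handling sideways drift or rotation would need the second mouse sensor or the gyros mentioned above; this sketch ignores both.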

Another idea would be to use a cheap cellphone camera, as said above, with mirrors and lenses in a special optical arrangement to give a better field of view.

That’s a neat idea.