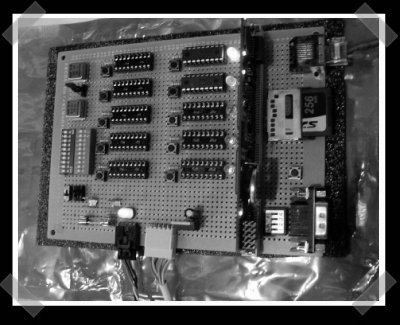

[silic0re] sent in this, uh, totally different take on microcontroller applications. The hardware is impressive. It’s built to carry up to 10 dsPIC30F3012 controllers (30 MIPS each), and has Ethernet, SD, serial and I2C thanks to an Imsys SNAP module (similar to a Gumstix). This is, as far as I know, the first PIC controller cluster built. The software is still a work in progress; for now it’s just pretty, but he deserves points for originality. His site’s a bit slow, so try the coral cache.

(I woke up this morning thinking that I’d end up eating my words on my ‘first time’ statement.)

sweet, i’ve been thinking ’bout doing a similar project with the already awesome parallax propeller ( http://www.parallax.com/propeller/index.asp ) controller.

p.s. does anyone know where i can put in my password so i don’t have to do the email verification thing?

Yea, people have been doing this forever, and no, this isn’t the first time somebody has wired up a bunch of pics and had them talk to each other on a bus. Neat project though, props for the leds.

crgwbr: I’ve been wondering that too.. and what happened to the stars thing? also do you have a goal in mind for a parallel parallax set? I’ve wondered from time to time about whether something like this could be used to do intelligent job distribution on an smp machine, help out with maintaining job queues and maybe improving cache locality by shunting lines around, sort of like a control plane over the top of the number crunching.

I want something like this, only for the AVR, so that such things as a polyphonic AVRSynth would be doable .. that would rock. Need more voices, just plug in another AVR ..

hey, this dude goes to the same university as me! (go canada)..

anyways. I think that this is an awesome project. It would be cool to create a system with many dsPICs, each implementing a small set of basic instructions as well as some unique special functions like floating point divide, or factorial, or any weird thing you want to make an instruction for. Now you can swap out different PICs to create a custom microcontroller cluster (a supercontroller, if you will) that can be controlled like a single core from the code on the SNAP module.

This kind of system would lend itself to a CISC type architecture, where the individual chips carry out rather complex tasks. I think it would result in a pretty cool programming interface.
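The “supercontroller” idea above can be sketched in a few lines. This is only a toy model in Python, not anything from the actual board: the `Node` and `Cluster` classes, the `FDIV` and `FACT` mnemonics, and the `plug_in` and `execute` methods are all made-up names for illustration. Each node stands in for one dsPIC with its own special instructions, and the cluster object plays the role of the code on the SNAP module that routes an instruction to whichever chip implements it.

```python
from math import factorial


class Node:
    """Stand-in for one dsPIC: a name plus the special instructions it implements."""

    def __init__(self, name, ops):
        self.name = name
        self.ops = ops  # hypothetical mnemonic -> Python callable


class Cluster:
    """Stand-in for the controller side: routes each instruction to a node."""

    def __init__(self):
        self.routes = {}  # mnemonic -> Node that implements it

    def plug_in(self, node):
        # Swapping in a different chip simply changes the instruction set.
        for mnemonic in node.ops:
            self.routes[mnemonic] = node

    def execute(self, mnemonic, *args):
        node = self.routes.get(mnemonic)
        if node is None:
            raise KeyError(f"no node implements {mnemonic}")
        return node.ops[mnemonic](*args)


cluster = Cluster()
cluster.plug_in(Node("fpu", {"FDIV": lambda a, b: a / b}))
cluster.plug_in(Node("fact", {"FACT": factorial}))

print(cluster.execute("FDIV", 1.0, 4.0))
print(cluster.execute("FACT", 5))
```

On real hardware the `execute` call would be a bus transaction (I2C or similar) rather than a function call, and only one node is busy per instruction in this model.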

Without the software this is really just a board with pics on it. I would like to see some cool software!

I had a student who implemented a 4-way Atmel Mega32 cluster running a multitasking operating system and message passing library. A fifth Mega32 was the switch.

I remember seeing something like this back in 1989 or 90 in Circuit Cellar INK. It was modular (up to 64 processors, 8031s iirc) and programmed to compute the Mandelbrot set.

hi all,

it’s really cool to be up on hackaday. so to your questions:

1. I thought about the propeller too, but unfortunately it lacks a hardware multiplier. On floating point performance with the current open-source floating point library for the propeller, I think I calculated a dsPIC30F3012 to be approximately 3 times faster than a given system using three propeller cogs (one to run your program, two to do the floating point computations). Even with 8 cogs per propeller, it’s still in your interest to use a dsPIC if floating point performance is your primary concern.

(Propeller people may correct me on this — I’m just basing my numbers off those provided with the floating point code)

2. You’re quite correct, this definitely isn’t the first time a number of microcontrollers have been connected together, but it’s certainly one of the few times that they’ve been connected together with the purpose of doing some purely mathematical task (rather than for the task of having more i/o, or controlling a large, complex machine). As far as I know only one other person has constructed a cluster of PICs, for a fourth year engineering project. This cluster was used to generate fractal images, but unfortunately the website for it has long since gone. I think this may be the first dsPIC “cluster computer” (with the above purpose in mind).

Also, yes, the LEDs are /very/ important!! :)

5. I agree, and software that does more than blink lights is on its way! For a first cluster project I’m thinking of something that just verifies if a given number is prime or not. Seems like a perfect task where the communication between processors is far less frequent than the processing time required.
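To show why a prime check suits this kind of cluster, here is a rough sketch in Python. It is not the cluster’s actual software (which isn’t written yet), just one simple way the work could be split, using plain trial division. The names `node_check`, `is_prime` and `NUM_NODES` are made up for the example, and the nodes are simulated one after another rather than run in parallel. Each node gets the number once, grinds through its own slice of candidate divisors, and reports back a single result, so communication is tiny compared to computation.

```python
from math import isqrt

NUM_NODES = 10  # assumption: one worker per dsPIC socket on the board


def node_check(n, node_id, num_nodes=NUM_NODES):
    """Work for one node: return a divisor of n from its slice, or None."""
    limit = isqrt(n)
    # Odd candidates are 3, 5, 7, ...; node k takes every num_nodes-th one.
    d = 3 + 2 * node_id
    step = 2 * num_nodes
    while d <= limit:
        if n % d == 0:
            return d
        d += step
    return None


def is_prime(n, num_nodes=NUM_NODES):
    """Coordinator: handle small cases, then combine one answer per node."""
    if n < 2:
        return False
    if n < 4:
        return True  # 2 and 3
    if n % 2 == 0:
        return False
    results = [node_check(n, k, num_nodes) for k in range(num_nodes)]
    return all(r is None for r in results)


print(is_prime(7919), is_prime(7917))
```

A real version would probably let any node that finds a divisor tell the others to stop early, which costs one extra message.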

I entirely encourage you to post here, but for those interested in the technical specifics and some further information on the thought that’s gone into the cluster (in addition to the information available on my site), there is a sparkfun forum posting here on the topic:

http://forum.sparkfun.com/viewtopic.php?t=4930

thanks for your comments and e-mails!

@12: that does sound like it would be an interesting project, but building a ‘big’ CISC architecture out of multiple dsPICs means that most of the time the majority of the dsPICs would be inactive — it’d be the same bottleneck you find in pipelined CPUs. The performance gain you’d be able to get might be something close to the gain provided by hyperthreaded CPUs.

The really interesting part about using multiple progressively smaller CPUs is that you tend to use more of the gates in your CPUs more often. If you think of a traditional von Neumann based machine, at any given time only a very small number of gates in the CPU are active (executing a single instruction), and a very small amount of memory is active (a few bytes out of mega- or gigabytes). If you have many processors, each with a very simple instruction set and a little bit of memory, you can increase the total number of active gates in your design (and, ideally, the overall performance of the machine).

If this idea sounds neat to you, it’s exactly the reasoning behind a computer called “The Connection Machine”. Try googling it, and there’s a wiki article on it too.

Neat that you’re at McMaster too. Computer science/Engineering student?