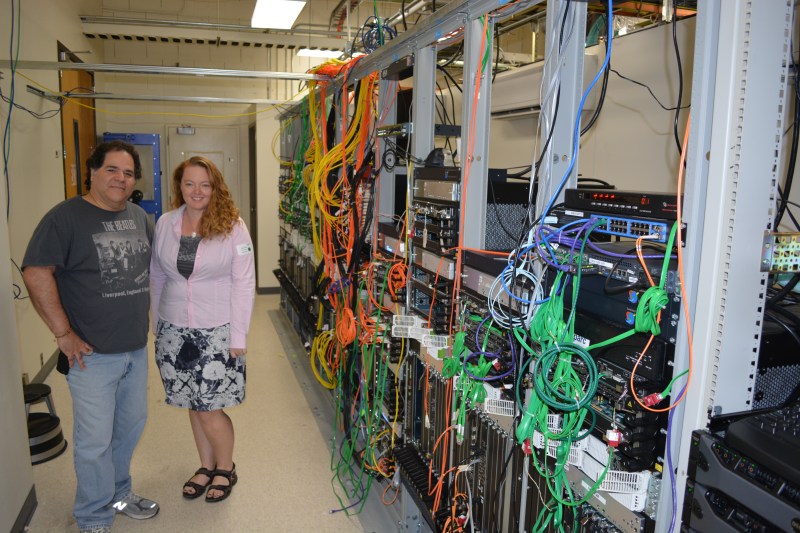

You may be used to seeing rack mounted equipment with wires going everywhere. But there’s nothing ordinary about what’s going on here. [Elecia White] and [Dick Sillman] are posing with the backbone servers they’ve been designing to take networking into the era that surpasses IPv6. That’s right, this is the stuff of the future, a concept called Content Centric Networking.

Join me after the break for more about CCN, and also a recap of my tour of PARC. This is the legendary Palo Alto Research Center campus where a multitude of inventions (like the computer mouse, Ethernet, you know… small stuff) sprang into being.

I’m going to get back to CCN in a minute but let’s go in chronological order:

The Museum

[Elecia White] — embedded engineer, host of Embedded.fm, and Hackaday Prize Judge — was kind enough to offer me a tour. We started in the museum room of the building where we were met by [Dick Sillman]. He has quite a CV himself, including Director of Engineering at Apple and CTO of Sun Microsystems. It’s no surprise these two are working on something to reshape the technological horizon.

The story I was told while in the museum is that PARC was founded as a free-thinking research arm of the Xerox corporation. The gamble paid off because there were a multitude of innovations that are still around today. I saw the “Notetaker” portable computer, the Alto with its portrait-form-factor CRT monitor, and the original laser printer.

One of the most enjoyable parts of this room is a wall plastered with a photograph from the early days. Note the lack of furniture and the comfort of bean-bag-chairs. The only thing differentiating this photo from today’s start-ups is the indoor smoking (and maybe the lack of laptops and smartphones).

The little machine shop

I wasn’t able to take photos of everything, but this machine shop was a fun stop. You walk in and see all the equipment and just know that there’s awesome stuff being prototyped here.

What is surprising is that you walk to the threshold of the next door and if you’re lucky you can peek inside. That’s where the “real” machine shop is and if you’re not an on-duty machinist the threshold is as far as you go. The idea is that you use the small shop to make an example of what you need, then take it to the real shop and they will fabricate however many you need.

To keep the machinists from losing their minds there is a computer monitor next to the door that shows the production state of each job… nobody pesters the machinists!

Lots of rooms with warning signs

Here are just two shots of some of the warning signs you’ll find throughout the building. I somehow missed taking a picture of the biohazard warning. If it’s a piece of equipment useful for research I bet that they have it here.

The building itself feels like a secret fort. Maybe that’s not the best of descriptions, but it’s built in a series of pods placed one after another and all of them have the exact same layout. There are a few different floors in each pod, and sometimes the stairwells simply dead-end despite there being more levels above and below. It would have been a real challenge to find my way back to the front without a guide!

Parts on hand

If you just need that one component to finish the project, there’s a room for that. I found it amusing that [Elecia] and [Dick] were just as interested in poking around to see what is on hand as I was. I suspect they’re usually busy enough that they know exactly what they want before heading to the stock. On the way out I asked if I should shut the door, but this one just stays open, beckoning to every unsuspecting engineer who passes by.

The Future of the Internet

Back to the story at hand: Content Centric Networking. I’ve already mentioned that this is an alternative to IPv6. There are so many addresses available with v6, when are we ever going to run out and need to replace it? That’s not really the point.

CCN looks at a better way to address the transfer of data. Right now everything is based on IP addresses; one specific address maps to one specific location. But our devices aren’t exactly stationary any longer and that trend is going to continue. CCN focuses on the data itself and the device it’s intended for — agnostic of the location — by using names instead of addresses for routing.
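To make the idea of routing on names a little more concrete, here is a minimal Python sketch of a longest-prefix lookup over hierarchical names. It is only an illustration under stated assumptions, not the PARC implementation: the table contents, the `face0`/`face1`/`face2` labels, and the function names are all hypothetical.

```python
from typing import Optional

# Hypothetical forwarding table: name prefix -> outgoing interface ("face").
# Every entry here is invented purely for illustration.
FIB = {
    "/parc": "face0",
    "/parc/videos": "face1",
    "/hackaday": "face2",
}


def split_name(name: str) -> list[str]:
    """Split a hierarchical name such as '/parc/videos/tour' into components."""
    return [part for part in name.split("/") if part]


def lookup(name: str, fib: dict[str, str] = FIB) -> Optional[str]:
    """Return the face for the longest registered prefix of `name`, or None."""
    parts = split_name(name)
    # Try the full name first, then progressively shorter prefixes.
    for end in range(len(parts), 0, -1):
        prefix = "/" + "/".join(parts[:end])
        if prefix in fib:
            return fib[prefix]
    return None


if __name__ == "__main__":
    print(lookup("/parc/videos/tour.mp4"))
    print(lookup("/parc/papers/ccn.pdf"))
    print(lookup("/unknown/thing"))
```

The point of the sketch is that the request carries only the name of the data. Nothing in it says which machine holds the data, so any node that knows a matching prefix can decide where to forward the request.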

There’s a lot to consider with this, like security. I was a bit shocked to find that the system signs every single packet. It doesn’t really matter how the data gets somewhere, or if it falls into the wrong hands. Man in the middle, spoofed addresses, and a slew of other issues can be solved this way. But back to my shock: how can you sign every single packet without a huge speed hit compared to what we have today? And how can you figure out where content is going if there’s no address to send it to?
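For a sense of what signing every packet involves, here is a small Python sketch that binds a content name to its payload with a cryptographic tag. One loud assumption: CCN designs use public-key signatures, whereas this sketch substitutes an HMAC with a shared key so that it runs on the standard library alone. The key, the names, and the function names are hypothetical.

```python
import hashlib
import hmac

# Hypothetical shared key, for illustration only. A real deployment would use
# the publisher's private key and a public-key signature scheme instead.
SHARED_KEY = b"demo-key-not-for-real-use"


def sign_packet(name: str, payload: bytes, key: bytes = SHARED_KEY) -> bytes:
    """Return a tag that binds the content name to its payload."""
    # The zero byte separates name and payload so the two cannot be shifted
    # against each other to produce the same message.
    message = name.encode("utf-8") + b"\x00" + payload
    return hmac.new(key, message, hashlib.sha256).digest()


def verify_packet(name: str, payload: bytes, tag: bytes,
                  key: bytes = SHARED_KEY) -> bool:
    """Check that the tag matches this exact name and payload."""
    expected = sign_packet(name, payload, key)
    return hmac.compare_digest(expected, tag)


if __name__ == "__main__":
    tag = sign_packet("/alpha/images/1", b"jpeg bytes")
    print(verify_packet("/alpha/images/1", b"jpeg bytes", tag))
    print(verify_packet("/alpha/images/1", b"tampered", tag))
```

Because the tag travels with the data, a receiver can check a packet no matter which node or cache handed it over. The cost is one cryptographic operation per packet, which is where the speed question comes from.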

The answer to speed is the hardware that [Elecia] and [Dick] are working on. They showed me one of their dinner-tray-sized 26-layer router PCBs that gets slotted into the racks in their work area. Impressive to say the least.

The answer to the rest is not completely clear in my mind. But I think that’s about par for the course. Even demonstrations are a bit tough to put together. Above are a few pictures of the test rig for the concept. Each node in the network is named (alpha, bravo, charlie, etc.). They are all connected to each other and all have the credentials to view the data packets created by others. This builds something of a “dropbox” of networked data. Each unit snaps its own images, but all images are displayed on the slideshow of every unit.

To truly grasp CCN you’re going to need a lot more reading. I’ll add some resources below. But before I do I’d like to thank [Elecia], [Dick], and PARC for an exciting and fun morning! I’d also like to mention that I was a guest on embedded.fm this week, talking about all things Hackaday and The Hackaday Prize.

![DSC_0089 [Elecia] hanging out in the machine shop](https://i0.wp.com/hackaday.com/wp-content/uploads/2014/09/dsc_0089.jpg?w=262&h=175&ssl=1)

I’m not certain how to tackle the subject you have raised. Anymore I am turning into a network specialist and am frequently knee deep in the stuff. I have a stupidly loud rack right next to me. I’ll try to explain it in the best way I can, a full explanation would take dozens of pages just for the background work.

Okay really short answer: CCN is not viable. There is nothing it does better than what is already available. It has higher overhead, higher delay, higher jitter, lower robustness, more difficult setup, far more expensive hardware (CCN would require $100 routers to be replaced by $10,000 varieties) and virtually no market penetration. It is a dead-end in the way that IPX/SPX, multipath tcp, or sdn is. In some specific situations it is better, but the negatives are so enormous that it just isn’t going to happen at a large scale.

Hell, we are still having issues about whether or not to mandate NAT/PAT in IPv6 and that is objectively superior to what we use 90% of the time already. Nevermind powers that be keep butting in and trying to neuter the native IPSec.

It takes a mountain of knowledge to understand how networks work at a deep level and a great deal of critical thought to see the beauty that it was designed that way because it must be designed that way to be practical. Another way to think of this: networking protocols are like cryptography in that there is a never-ending flood of people who have claimed to have made something better. Under scrutiny they fail. For your own sanity it would be wise to ignore things like this until there is an IEEE standard if you have a master’s degree in a related field. Ignore them until there are products on store shelves if you have less ‘skin in the game’.

Funny, but I remember this same type of discussion about TCP/IP. It was too complex for LANs and was only really useful for a few larger companies, and it was hard to configure because you had to assign an IP address to each system. I also remember the same type of discussion over Ethernet vs TokenRing vs Arcnet and so on.

Never dismiss anything still in the lab but also don’t bet the farm on any technology. IPX/SPX kind of ruled the roost for a good long time.

CCN is an alternative way to implement Ted Nelson’s Xanadu vision – I don’t see it as replacing TCP/IP, but it’s a protocol designed to support specific usage models. It’s more tied to applications where you use a name to access resources, instead of the connection oriented system we have today.

I think of it as the grandchild of CMU’s AFS system and plan 9, perhaps.

If enough content-centric applications drive adoption, then we might finally

Cable management? I like the cables water falling off the tray at the top of the racks.

researchers dont have time for housekeeping. especially if they end up ripping it apart every few months to start a new project.

I would think trouble shooting networks would get vastly easier if it was moderately clear what hardware was hooked up to what.

Maybe it’s just me, but what’s the significant enough advantage of this that the world would give up on IPv6? Or am I missing the point, and it’s not meant for large-scale adoption? It just seems like it’s going to be a lot more work for everyone to deal with than sticking with IPv6, without enough up-sides to make it worth the trouble.

Take a stress pill. This is research, not a standards committee. It’s not shipping in your next PC, and your ISP isn’t mandating it.

I could see this being better in a LAN dealing with a NoSQL architecture, but not for the internet as a whole.

I really like the idea of the small shop for prototypes and a separate shop for initial runs for testing. Looking at the photograph I think I see a nice Bridgeport in the background. Nice to see they didn’t scrimp.

It is unlike Tweeter much deeper…

Like Twitter’s Manhattan, sometimes what we need to do is a better technology driver than what we think we need.

I do hope you guys realize that 90% of internet traffic is the same thing sent to many different addresses. This allows a better way to cache stuff. That is just one small part of this.

That is called multicast. That exists. Caching exists too. They are not the same.

That is not at all the same thing as caching.

Denial: the predictable human response to change.

For a group that gave us the foundations to so many life changing technologies you may want to cut this group some slack.

I remember cruising the “internet” on GOPHER (PRE WEB)! on a dial-up, on a Commodore 64! I have seen the development happen in my lifetime. Statements like this: “there is nothing it does better than what is already available”. Makes me think you haven’t configured or dealt with a 10,000 node network, or 100,000. IP, routers, dhcp, dns, content servers, proxy, nat, firewall rules. I am pretty sure that you have never tried to FAX over the internet either. (The fallacy of the thought notwithstanding) there is room for many improvements. Bit-torrent is a prime example of that, TCP/IP hasn’t been so wonderful! Just ask Google, Facebook and most large agencies that need to distribute content. (Makes me think Netflix’s local caches use something like it).

If you’re tasked with envisioning where to improve the current internet and expanding on the idea of where we go next, this is a good start. Sure, it’s rough, etc. And if it’s not free then it won’t be implemented. SMTP, WEB, the entire layer of “servers” would just become another cog in the content machine.

Networks only move bits around, some bits are for overhead, ports, apps, layers, services etc, other bits are for content. Pictures, movies, email, HaD posts. If you look at it from the 10,000-foot view, you’re just trying to find a way to get a pile of bits from over there to over here or vice versa, so as a new approach it seems like a good idea to explore. Sure the nodes have to get smarter, but that’s what Ethernet and TCP/IP did over time. Thick/Thin net to twisted pair, hubs to switches, switches to integrated routers. Services changed from gopher to web, ftp to bit-torrent etc. Overall systems evolve and improve.

On the topic of “higher delay, higher jitter, lower robustness”

You missed the fact that it becomes a giant cache for content as it distributes. Sure it may take more time for the first file to go from node A to node Z but if that path took it on a common path to B-X then it will improve the overall response by distributing the content, entirely eliminating jitter and delay except for the single hop or two to the nearest cached copy of that content. Jitter and delay are virtually nil after the cache populates. Robust as well because localized outages such as the primary content server for resource X going offline only have a local effect, clients would just pull from another available cache.

Take 3 minutes to read a paper before pulling the dead on arrival line, but I will grant you some slack in that there is a lot to do before this can be anything more than a lab experiment.

Appeal to authority, same as others, is a fallacy.

“Makes me think you haven’t configured or dealt with a 10,000 node network, or 100,000. IP, routers, dhcp, dns, content servers, proxy, nat, firewall rules.”

Ad hominem. What do you think is next to me right now?

Fax services are still quite popular, thus why they are generally still installed on at least one AD system (linux alternatives are poor).

You undermined yourself in the second to last paragraph. You didn’t even refute any of my points. Why assume when you can know? If you have run a network of significant size, you know how untenable highly distributed caching is for all but very specific use cases. If everyone had huge pipes and much cheaper space than we do now, sure. But then, why would we need this when everyone has huge pipes and massive drives? It just isn’t going to happen.

Alternatively, here is the insightful answer: http://xkcd.com/955/

I don’t claim to be an authority on anything, I do however understand that 100,000 iterations of anything is a lot of effort to put in place if all you want to do is move a pile of bits from room A to room B. You may have a pile of network hardware next to you, so what, congratulations you have a pile of plumbing. Forgive me I don’t know anything about you. I am not trying to impress anyone, nor be an authority or more knowledgeable.

I will say that the man (Van Jacobson) who invented, or had major design contributions to, traceroute, tcpdump, the RTP protocol, header compression and the MBone, and works in the research center that invented Ethernet, might just know more about networking than you or me.

If you want me to find issue you said it here “It takes a mountain of knowledge to understand how networks work at a deep level and a great deal of critical thought to see the beauty that it was designed that way because it must be designed that way to be practical”

“practical” at the time of their invention, not necessarily relevant to today’s limits/needs/capabilities

“critical thought” – pretty sure that’s what CCN proposal is.

Just because you have done it that way for years doesn’t mean there isn’t room for improvement. What’s more practical to 5 billion users?

“What do you think is next to me right now”, a very expensive and impractical heater, an investment worth nothing in 7 years. The value of a system as large as the internet is no longer in the computers; long ago companies like Google realized the value was the information contained within the network. The concept of the CCN is a hard sell.

To quote the CCN people “Internet is not bad by design, the problem has changed”

Keep an open mind.

http://www.youtube.com/watch?v=RFO6ZhUW38w

This is nothing personal at all I simply love the answer to the “open mind”.

Personally I think (that’s a gut feeling totally) that the Internet of today can provide both direct communication (quite well) between nodes and content delivery (well enough), so it is going to stay. If anything from the CCN project will be seen in the wild it will probably be implemented on top of IPvX.

Love the vid!

I agree with your feeling about today’s generation of the internet, however as it scales with another few billion devices things will change. NAT evolved to fill a need because some assumptions about IPv4 were wrong, people thought IPv4 would be long gone by now.

The network stack for CCNs appears to have a provision for overlay in an IP network. One can reasonably assume vendors won’t forklift out IP to place CCNs. However a CCN greatly simplifies many aspects of a large network like the internet. They are lab experiments, you have to start somewhere and it’s highly likely to evolve, I am sure there will be many competing ideas as well.

Wow – I’m seriously jealous of this visit. Getting to see the PARC museum and the current research is beyond awesome!

So, if I am reading this right–and someone correct me if I am not:

*this approach stores data (databases, w/e) in lots of places on the assumption that memory is cheap and transport costs are high.

*assumes that not everyone wants the same thing all the time, and distributes data (databases) in incomplete form (where the user wants data {a/b/c/f}, and get data from separate datacache carrying {b/c/r}, {a/d/e}, and {a/f/h/k}

*User makes their request, something magically figures out the closest/largest/cheapest pipe(s) available

*works out some sort of security arrangement on each packet (or packet analog) that will shield data in transit keeping The Illuminati and/or your mom from knowing what is there, while still managing to know where it’s supposed to go

*works off a name, not IP; meaning something like a MAC address (?)

.

.

.

*profit

Which suggests to me that this approach has a constantly changing/ad hoc mesh topology, permissions and priority are either a mess or non-existent, and there is a constant flow of Stuff in quantities of “Lots” to “ZOMG the wires have melted”.

The one way this would (sort of?) work is by having everyone own a connected device that supports local traffic and acts as a data cache for surrounding users. Which would require hitherto unimaginable degrees of goodwill.

It might be cool for stuff that lots of people use (twitter feeds from the rich and brainless, pictures of cats, news.google, etc.) and rather less so for someone digging through an online archive of French National Ministry of Snuff transactions from AUG1797-MAY1798.

Or have I inhaled too many paint fumes over the years?

I think they need to go and do the math again when it comes to the rack setup.

They have used old racks that were intended for very heavy telephone equipment.

These racks were intended for use where the center of gravity of the equipment units was at the center of the rack support. Now the center of gravity of equipment units is far to the right (as pictured) of the rack support.

They have tried to compensate for this with the top support rails going to the left wall so in this case the support rail is under expansion stress rather than compression stress.

I can see that they have used rails on the wall to distribute the load but it doesn’t look like it’s enough. In any case these support rails should go to the wall on the opposite side of the room.

In short, this rack definitely looks like it would collapse before it’s fully populated.

This reminds me of the “internet” as described by Neal Stephenson in his book The Diamond Age.

“The interest leaves state as it traverses the network.” says the Wikipedia article. That sounds like ATM, which makes me think this is less likely to happen. I think we could handle some CCN ideas with ORCHID (http://tools.ietf.org/html/rfc7343).

Then, there is one very political issue with CCN. It does not match the economy of today because it does not answer the very basic question who is going to pay for all those distributed caches? The Internet gets it quite right, although it hits the net-neutrality issue too.

then the economy of today will simply have to change. it’s not the first time nor will it be the last.

Onions, anyone?

Well now that this has been posted, Apple should be “inventing” CCN any minute now. ;’)

The computer mouse was NOT invented at PARC. Doug Engelbart invented the computer mouse while working at SRI International, which was known as Stanford Research Institute back in those days.