Every time we watch Minority Report we want to make wild hand gestures at our computer — most of them polite. [Rootsaid] wanted to do the same and discovered that the PAJ7620 is an easy way to read hand gestures. The little sensor has a serial interface and can recognize quite a bit of hand waving. To be precise, the device can read nine different motions: up, down, left, right, forward, backward, clockwise, anticlockwise, and wave.

There are plenty of libraries for reading it on common platforms. If you have an Arduino that can act as a keyboard for a PC, the code almost writes itself. [Rootsaid] uses a dedicated library for the PAJ7620 and another, NicoHood's HID-Project, for sending media keys.

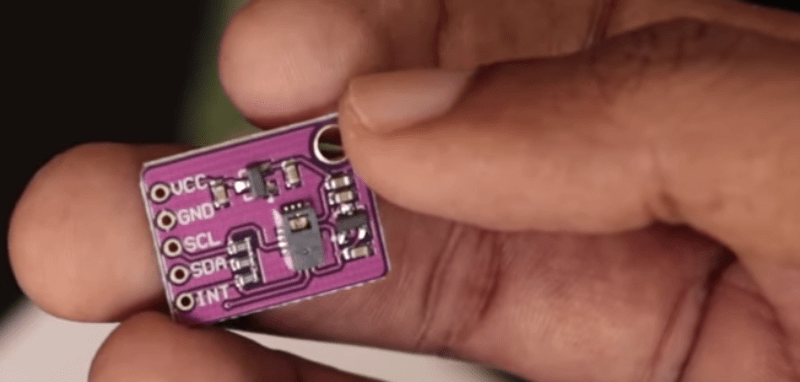

With those two libraries, the code is very simple. You read a register from the sensor and use its value to decide which key to send with the NicoHood library. The sensor talks I2C, and there’s a tiny optical sensor onboard along with an IR LED.
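To make the "read a register, pick a key" idea concrete, here is a minimal sketch of the mapping step in plain C++. It is not [Rootsaid]'s code. The flag bit positions are an assumption based on how common PAJ7620 Arduino libraries define them, and the gesture-to-key assignments, `MediaKey`, and `keyForGesture` are hypothetical names made up for illustration.

```cpp
#include <cstdint>

// Assumed bit layout of the PAJ7620 gesture result byte (one bit per gesture).
// These values are an assumption; check the header of the library you use.
// Wave is left out to keep the sketch to a single byte.
enum GestureFlag : std::uint8_t {
    GES_RIGHT            = 1 << 0,
    GES_LEFT             = 1 << 1,
    GES_UP               = 1 << 2,
    GES_DOWN             = 1 << 3,
    GES_FORWARD          = 1 << 4,
    GES_BACKWARD         = 1 << 5,
    GES_CLOCKWISE        = 1 << 6,
    GES_COUNTERCLOCKWISE = 1 << 7
};

// Hypothetical set of media actions; a real sketch would use the key codes
// provided by its HID library instead.
enum class MediaKey { None, VolumeUp, VolumeDown, NextTrack, PreviousTrack, PlayPause };

// Translate the value read from the gesture register into a media action.
// Anything that is not exactly one mapped gesture is ignored.
MediaKey keyForGesture(std::uint8_t flags) {
    switch (flags) {
        case GES_UP:      return MediaKey::VolumeUp;
        case GES_DOWN:    return MediaKey::VolumeDown;
        case GES_RIGHT:   return MediaKey::NextTrack;
        case GES_LEFT:    return MediaKey::PreviousTrack;
        case GES_FORWARD: return MediaKey::PlayPause;
        default:          return MediaKey::None;
    }
}
```

On the Arduino, the loop would read the gesture byte over I2C, call something like `keyForGesture`, and pass the matching key code to the HID library (with HID-Project that would be along the lines of `Consumer.write(MEDIA_VOLUME_UP)`). Which direction counts as "up" depends on how the breakout board is mounted, so expect to shuffle the cases around.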

Of course, you could send other keys than media controls. We wouldn’t mind going back and forward on web pages with a gesture, for example.

We’ve seen gesture recognition with radar. We’ve also seen it with ultrasonics.

I once did a study on which are the best gestures to control an alarm clock. In the end, I concluded that the best gestures were no gestures at all: tangible controls are better from the user perspective. If done correctly, they are more intuitive to use or easier to learn, provide instant feedback and are more comfortable to use. Gesture sensors have a certain degree of inaccuracy, which ruins the experience. That conclusion also ruined my Master’s Thesis.

The full P/N is PAJ7620U2. On eBay the breakout boards go for around $6 each with free shipping from China or Hong Kong. There are actually three modes of operation with this sensor: 1. Gesture Mode, 2. Cursor Mode, and 3. Image Mode. Apparently in Image Mode you have direct access to the 30×30 pixel sensor data. The sensor has an infrared (IR) emitter and a 30×30 pixel IR camera in it. The object moving in front of the sensor has to be close enough to reflect IR light from the emitter back to the camera. But you might be able to control it from far away if you wave an IR LED at it ;-) Here’s the datasheet download, direct from the manufacturer in Taiwan:

https://www.pixart.com/products-detail/37/PAJ7620U2

Have Fun…

Personally, I don’t care about the gestures; I’m interested in that IR sensor. And cursor mode.

There are lots of things I can do with an IR camera, maybe even a DIY Leap Motion or something.

I’m knee deep in writing the code for reading out the camera; the hard part is that these datasheets are locked behind an NDA. If you’re looking for a dirt-cheap 30×30 camera, look into the ADNS-3080. There’s PLENTY of software around for those, and they can dump frames at 100 Hz, with standard M12 lens support on the breakouts.

Here’s your example code too: https://github.com/Lauszus/ADNS3080

This IC effectively replicates what is used on optical mice (the cheaper ones at least).

The site quotes a maximum distance of 20 cm, which I very much doubt is achievable in everyday use.

That’s interesting Joaquin. I think gesture sensors fit well in at least two places: Automobiles (control radio, navigation, etc. without having to divert your eyes from the road to look where you touch), and the Kitchen (control oven/stove/timer/TV/radio, I have wet hands, don’t want to touch unsanitary buttons, etc.) Unfortunately I never see cars or appliances with gesture control. It’s really a shame. The smart sensors exist and they are quite affordable. Maybe the software is harder than I think, or maybe there are some Greedy Lawyers lurking in the background waiting to sue everyone over something associated with gesture control errors, or maybe there are frivolous Patents preventing the technology from emerging (we’re back to Greedy Lawyers again). I dunno.

I’m quite surprised the sensor isn’t used more often… I would love to see some examples of the raw image mode. I think it could be used in multiple ways, like vision for a robot or more advanced hand/gesture recognition on a more powerful external uC (maybe an ESP32)…