As the world sits back and waits for Coronavirus to pass, the normally frantic pace of security news has slowed just a bit. Google is not exempt, and Chrome 81 has been delayed as a result. Major updates to Chrome and Chrome OS are paused indefinitely, but security updates will continue as normal. In fact, Google has verified that the security related updates will be packaged as minor updates to Chrome 80.

Chinese Viruses Masquerading as Chinese Viruses

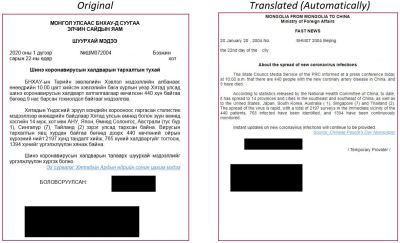

Speaking of COVID-19, researchers at Check Point Research stumbled upon a malware campaign that takes advantage of the current health scare. A pair of malicious RTF documents were being sent to various Mongolian targets. Created with a tool called “Royal Road“, these files target a set of older Microsoft Word vulnerabilities.

This particular attack drops its payload in the Microsoft Word startup folder, waiting for the next time Word is launched to run the next stage. This is a clever strategy, as it would temporarily deflect attention from the malicious files. The final payload is a custom RAT (Remote Access Trojan) that can take screenshots, upload and download files, etc.

While the standard disclaimer about the difficulty of attribution does apply, this particular attack seems to be originating from Chinese intelligence agencies. While the Coronavirus angle is new, this campaign seems to stretch back to 2017.

HTTP Desync

It’s a fairly common practice to build web services with a dedicated front-end server, and then a back-end server or group of servers. I just recently migrated a handful of websites that I host to this paradigm, using an Nginx server as a shared front-end that routes traffic to the appropriate Apache back-end server. Nginx scales better than Apache, and it helps ration public IPv4 addresses. There is an attack that takes advantage of this arrangement: HTTP request smuggling.

When using a dedicated front-end, common practice is to share a TCP connection, and potentially an SSL connection, and send all the traffic to the back-end in a single shared stream. Particularly when using SSL, the performance gain is substantial. Using a shared stream does introduce a dose of extra complexity. What happens when the front-end interprets a request differently than the back-end, and how does the back-end make sure to keep requests separate?

Back in 2005, an attack was devised that took advantage of the problems inherent in these two questions. The original HTTP Request Smuggling attack (whitepaper) was as simple as including two “Content-Length” headers in a request. It was found that in some combinations of front-end and back-end software, the front-end would use the last “Content-Length” header to interpret the request, whereas the web server itself would use the first header. With a bit of careful request crafting, then, an attacker could send a single HTTP request to the front-end, and have that single request interpreted as two separate requests by the back-end. This seems like a rather unimpressive attack, until you consider that many deployments rely on the front-end server for request verification and security controls. If you can sneak a malicious request past the front-end by embedding it in one that is harmless, you may have a path to attack the back-end server directly.

Request Smuggling didn’t catch on as a viable attack, and so much time has passed that all the major products automatically catch and mitigate this particular attack. Revealed at DEF CON 27, HTTP Desync is a new take on this old attack. Rather than specify content-length twice, this attack uses both content-length and chunked encoding. It’s another approach to the same end goal, give two different lengths that are understood differently. There are a handful of clever techniques that [James Kettle] covered in his DEF CON talk, like adding non-standard white spaces in the “Transfer-Encoding: chunked” header. One end sees the header as non-standard and ignores it, and the other might clean up the whitespace before processing the headers, leading to desync.

You may think that SSL protects against this technique, but we’re describing a scenario where the SSL certificate is installed on the front-end server. All the incoming requests are decrypted and interleaved together, and then may or may not get re-encrypted en route to the back-end. Because it’s that interleaving that gives rise to this class of vulnerability, the SSL connection doesn’t have an impact.

What can you actually do with this sort of attack? Bypass source IP restrictions to a certain endpoint, to name the simplest. Have your WordPress site’s /wp-admin page restricted to just one IP address? An HTTP Desync can bypass that restriction. In another example, [James] was able to dump all the custom HTTP headers the front-end was using, and then spoof some of those headers to gain admin access to an entire web service. The whole talk is great, check it out below:

The related news from this week, [Emile Fugulin] took a look at HTTP Desyncs and discovered that Amazon’s Application Load Balancer is potentially vulnerable in its default configuration, when paired with a Gunicorn back-end. If you’re using ALB, he suggests looking at the “routing.http.drop_invalid_header_fields.enabled” option, and turning it on if you can. Gunicorn has been patched, so go make sure you’re running the latest version there, as well.

Remote Code Execution in Security Product

Well this is awkward. Trend Micro disclosed a set of five security bugs in its products, and revealed that two of them have been actively exploited by attackers. The details are a bit sparse, but it seems that the two attacks found in the wild require some level of authentication before they could be exploited. The two vulnerabilities that seem the most alarming are CVE-2020-8598 and CVE-2020-8599, both of which allow remote compromise before any authentication. It’s humorous to see that the vulnerability bulletin lists a mitigating factor, paraphrased: You have a firewall and NAT, right? If you use Trend Micro, make sure it’s up to date, and maybe do a quick audit on what ports are open on your workstations.

Bugcrowd, Netflix, and Ethics

This story sneaked in just in time. An unnamed security researcher discovered a flaw in Netflix’s handling of session cookies, combined with their use of unsecured HTTP connections for a few endpoints. Yes, Netflix is still vulnerable to Firesheep.

That could have been the end of the story — Netflix should have made their bug bounty payment, fixed their unsecured subdomain, and all would be well. Instead, when our anonymous researcher submitted his finding through Bugcrowd, the firm that handles Netflix’s bug bounty program, the official response was that this finding is out-of-scope for a reward. That’s not surprising, it’s normal for a researcher to disagree with the target company about how important a vulnerability is. As one might expect, once the researcher was told his findings were out-of-scope, he made them public — and shortly got an official scolding from Bugcrowd. Apparently an out-of-scope bug submission is still in-scope enough to be kept secret. Even more concerning, Bugcrowd’s documentation doesn’t seem to include a set timeline, but implies that all disclosure must first receive the target company’s permission.

Bug-bounties are great, but Bugcrowd puts researchers into an ugly catch-22. I think it’s ethically rotten to refuse a payout, and then continue to hold a researcher over the barrel on an issue.

That’s it for this week, stay safe and do some security research!

[On viruses masquerading as… viruses]

Quoth the blurb: “these files target a set of older Microsoft Word vulnerabilities […]

While the standard disclaimer about the difficulty of attribution does apply […]”

I’d say this one is clearly on Microsoft.

Isn’t it usually, though?

> Chinese Viruses Masquerading as Chinese Viruses

Not cool, clever or funny. This Trump line of “Chinese Virus” as a label for SARS-CoV-2 is causing a ‘pandemic’ of racism in western countries. People have been demanding that Asian kids be banned from schools, while they are still open; and adults have been reporting a sharp rise in hate crime.

Don’t do this again.

The Spanish Flu, MERS (Middle-East Respiratory Syndrome), Ebola (Named after the Ebola river), and German Measles would all like a word.

Take a look at something. A trivial google search at who was using the term “Chinese virus” before a couple weeks ago: https://www.google.com/search?q=%22chinese+virus%22&safe=active&sxsrf=ALeKk02dSZGanvYIYS34D535FDVFSa5vSQ%3A1584834351136&source=lnt&tbs=cdr%3A1%2Ccd_min%3A1%2F1%2F2020%2Ccd_max%3A3%2F4%2F2020&tbm=

It’s fascinating to me how that term became racist only once someone discovered they could use it as a political cudgel.

Looking through the hits in that search, most of them are claiming that the use of the term “chinese virus” is racist. A few of them are pointing out violence and exclusion of asians more broadly based on the fact that this virus originated in China, even though the people in question are Americans.

Jonathan, racist terms don’t start out as racist. Words are just sounds, after all. It’s their use in context that defines meaning. And this particular phrase, from your cited sources, is looking pretty bad.

I went back and took a look at this… The internet needs a tool to accurately snapshot google searches. Not only did you get a different set of results than I did, I saw my search results change within the few minutes I spent looking at this. Same search before and after a refresh:

https://imgur.com/ztEJmOD

When I first ran the linked search, every hit on the front page used the term “Chinese Virus” or “Chinese Coronavirus” in the same way we would use “Ebola” to refer to that outbreak.

I’ll see your Chinese Virus and raise you one Black Death. And I’ll have my Dutch Uncle send you a French Letter and enclose some Spanish Fly. Of course it won’t get there until Indian Summer.

I agree with the author that it is pretty low of Bugcrowd to refuse to pay for a bug report, but then come down hard on the person making that report for releasing details publicly.

Either it is a bug that needs to be fixed, and hence is elible for a reward, or it is not, and by definition will therefore not cause any harm, so releasing the details should not be a problem.

Either pay up, or “bug finders” will not bother to go to you and sell their findings on the dark net.