We’ve seen projects test the lifespan of EEPROMs before, but those projects only tested discrete EEPROM chips. [John] at tronixstuff had a different idea and set out to test the internal EEPROM of an ATmega328.

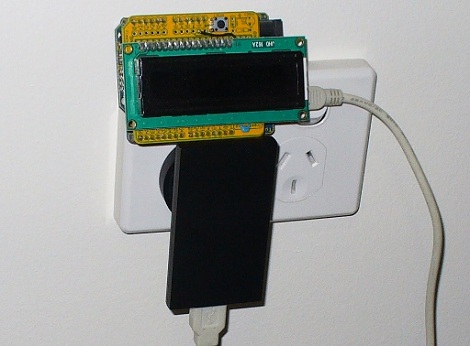

[John]’s build is just an Arduino and an LCD shield. It writes the number 170 to memory on one pass and the number 85 on the next. Because these numbers are 10101010 and 01010101 in binary, each bit is flipped once per pass. We think this might be better than writing 0xFF on every run; Hackaday readers are welcome to comment on this implementation. The Arduino was plugged into a wall wart and sat “behind a couch for a couple of months.” The EEPROM saw its first write error after 47 days and 1,230,163 cycles. That is an order of magnitude better than the 100,000-cycle rating in the Atmel datasheet, and in line with the results of comparable experiments.
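Below is a minimal Python simulation of the kind of test described above. It assumes a whole-memory pass per cycle: write 0xAA (170) to every address, read everything back, then do the same with 0x55 (85), and stop at the first mismatch. `SimulatedEEPROM` and its fixed per-byte `endurance` limit are hypothetical stand-ins for the hardware. This is not [John]'s code. On the chip itself, the reads and writes would go through the Arduino EEPROM library instead.

```python
PATTERNS = (0xAA, 0x55)  # 170 = 0b10101010, 85 = 0b01010101


class SimulatedEEPROM:
    """Hypothetical stand-in for the ATmega328's 1 KB EEPROM.

    A byte keeps accepting writes until it has seen endurance[addr] of them;
    after that, writes are silently ignored (a deliberately crude wear model).
    """

    def __init__(self, size=1024, endurance=None):
        self.data = [0xFF] * size  # erased EEPROM reads as 0xFF
        self.writes = [0] * size
        self.endurance = endurance if endurance is not None else [float("inf")] * size

    def write(self, addr, value):
        self.writes[addr] += 1
        if self.writes[addr] <= self.endurance[addr]:
            self.data[addr] = value

    def read(self, addr):
        return self.data[addr]


def run_endurance_test(eeprom, max_cycles):
    """Alternate 0xAA/0x55 over every address; return (cycle, addr) of the first error."""
    size = len(eeprom.data)
    for cycle in range(1, max_cycles + 1):
        pattern = PATTERNS[(cycle - 1) % 2]  # odd cycles write 0xAA, even cycles 0x55
        for addr in range(size):
            eeprom.write(addr, pattern)
        for addr in range(size):
            if eeprom.read(addr) != pattern:
                return cycle, addr
    return None  # no error within max_cycles


# Usage: byte 5 of a small 16-byte memory wears out after 7 writes.
limits = [float("inf")] * 16
limits[5] = 7
print(run_endurance_test(SimulatedEEPROM(size=16, endurance=limits), 100))  # → (8, 5)
```

The worn byte still holds the 0xAA from cycle 7, so the mismatch shows up on the first read-back after its write limit is exceeded.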

We covered a similar project, the Flash Destroyer, last year, but it tested an external EEPROM rather than the internal memory of a microcontroller.

Check out the hugely abridged video of the EEPROM Killer after the break.

Presumably it then went insane and locked him in an airlock?

Note that you almost always get much better performance from a chip than the specs say. The specs are worst-case, guaranteed values across all temperatures and all conditions. So if you run a chip at room temperature for a few days, the results will be much better than if you put the same chip in a temperature chamber and cycle it through -55 °C to +125 °C (the absolute maximum ratings for AVR chips).

For hacking purposes, it’s also fine to overclock 16 MHz AVRs to 20 MHz, and 20 MHz AVRs to 32 MHz. They get a little warmer, but function fine.

Intuitively, I would say the recorded number of write cycles could be doubled, because each bit was only set once every two cycles (0x55, 0xAA writes). Can’t really be bothered to think about this too deeply; it’s been a long morning.

FYI: EEPROMs are erased to 0xFF before a write, and then the zero bits are written.
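The thread disagrees about whether the cycle count should be doubled or halved. To see why, here is a small Python model of the erase-then-write behaviour described above. It is a sketch under one assumption: every write first erases all 8 bits to 1, then programs the bits that are 0 in the new data. For each bit, it counts three things: the erase steps, the erase steps that actually turn a 0 back into a 1, and the programming steps. The model does not decide whether an erase step applied to an already-erased bit causes wear.

```python
def count_bit_events(writes, initial=0xFF, bits=8):
    """Per-bit event counts for a sequence of byte writes, assuming erase-then-write.

    Returns three lists indexed by bit number:
      erase_steps      - erase operations applied to the bit (one per write)
      effective_erases - erases that changed the bit from 0 back to 1
      programs         - programming operations that pulled the bit to 0
    """
    erase_steps = [0] * bits
    effective_erases = [0] * bits
    programs = [0] * bits
    value = initial
    for data in writes:
        for b in range(bits):
            mask = 1 << b
            erase_steps[b] += 1            # the whole byte is erased to 0xFF first
            if not value & mask:
                effective_erases[b] += 1   # this bit held a 0 before the erase
            if not data & mask:
                programs[b] += 1           # zero bits of the new data get programmed
        value = data
    return erase_steps, effective_erases, programs


# Ten writes alternating 0xAA and 0x55, starting from an erased byte.
erase_steps, effective, programs = count_bit_events([0xAA, 0x55] * 5)
print(erase_steps[0], effective[0], programs[0])  # → 10 5 5
```

Under this model, every bit sees an erase step on every write but is only programmed on every other write. With this pattern, "cycles" and "times each bit was programmed" therefore differ by roughly a factor of two.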

A better test for random data would be to store the XOR of the address byte(s) and a pass number. This would reveal faults and cross-coupled bits better.
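Here is a minimal Python sketch of that suggestion. It assumes a 1 KB memory, so addresses need two bytes. Each location gets its low address byte XOR its high address byte XOR the pass number. Within 1 KB, neighbouring addresses therefore hold different values, and every byte changes from one pass to the next. The function names are illustrative only. The sketch only flags mismatching addresses; telling coupled bits apart would need a closer look at which bits flipped.

```python
def xor_pattern(addr, pass_no):
    """Expected byte at `addr` on pass `pass_no`: low addr byte ^ high addr byte ^ pass."""
    return (addr ^ (addr >> 8) ^ pass_no) & 0xFF


def fill(size, pass_no):
    """Contents a fault-free memory of `size` bytes should hold after this pass."""
    return [xor_pattern(a, pass_no) for a in range(size)]


def find_faults(memory, pass_no):
    """Addresses whose read-back value differs from the expected pattern."""
    return [a for a, value in enumerate(memory) if value != xor_pattern(a, pass_no)]


mem = fill(1024, pass_no=3)
mem[100] ^= 0x04  # simulate one stuck bit at address 100
print(find_faults(mem, pass_no=3))  # → [100]
```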

smoke, I’d say that the number should be halved, since each bit was actually only programmed 600,000 times, not 1.2 million.

See http://electronics.stackexchange.com/a/21234. Each write includes an erase operation.

@k-ww:

That is the case for many EEPROMs (and certainly the newer ones), but it appears that some allow overwriting an address without first clearing it to 0xFF. It just happens that bits already pulled to 0 do not change (I’m not sure whether a 0->1 transition happens either).

I could, however, be misreading the datasheets…
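For what it's worth, the ATmega328's own EEPROM can be used either way. Its EECR register has EEPM mode bits that select an atomic erase-and-write, an erase-only, or a write-only operation. The Python sketch below models the three modes on a single byte. It relies on the usual assumption that programming can only pull bits from 1 to 0, so a write-only operation leaves `old & data`. It illustrates the bit logic only; the function names are hypothetical and not part of any AVR library.

```python
ERASED = 0xFF  # erased EEPROM bits read as 1


def erase_only(old):
    """Erase-only: every bit returns to 1 (the old value is irrelevant)."""
    return ERASED


def write_only(old, data):
    """Write-only (no erase): bits can only be programmed 1 -> 0, so the result is old AND data."""
    return old & data & 0xFF


def erase_and_write(old, data):
    """Atomic erase-and-write: erase to 0xFF, then program the zero bits of `data`."""
    return write_only(erase_only(old), data)


print(hex(write_only(0xAA, 0x55)))       # → 0x0  (no bit can go back to 1)
print(hex(erase_and_write(0xAA, 0x55)))  # → 0x55
```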

I think it would be best to completely fill the memory with a pattern that can be read back to determine which sectors are failing.

How evenly the semiconductor material is distributed across the working area of the chip will determine where it fails (just like floppy and CD-R media).
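Here is one way to build that kind of map, sketched in Python. After each pass, record the first pass on which each address read back wrong. Then group the failing addresses into fixed-size regions to see whether failures cluster. The 64-byte default region size and the function names are arbitrary choices for illustration, not something taken from the datasheet.

```python
from collections import Counter


def record_first_failures(first_fail, memory, expected, pass_no):
    """Remember the first pass on which each address read back a wrong value."""
    for addr, (got, want) in enumerate(zip(memory, expected)):
        if got != want and addr not in first_fail:
            first_fail[addr] = pass_no
    return first_fail


def failures_by_region(first_fail, region_size=64):
    """Count failing addresses per fixed-size region (region 0 = addresses 0..region_size-1)."""
    return Counter(addr // region_size for addr in first_fail)


first_fail = {}
record_first_failures(first_fail, [0, 1, 9, 3], [0, 1, 2, 3], pass_no=1)
record_first_failures(first_fail, [0, 7, 9, 3], [0, 1, 2, 3], pass_no=2)
print(first_fail)                                  # → {2: 1, 1: 2}
print(sorted(failures_by_region(first_fail, 2).items()))  # → [(0, 1), (1, 1)]
```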

As for overclocking AVRs, we have tried running an 8 MHz ATmega8515L at 12 MHz and it really does not work: while the CPU and most peripherals seem to run fine, the UART is completely unusable.

I know I’m 5 years late here but I can’t believe nobody dignified this comment at the time with at least a “lol” or something. :)

@holly_smoke & gridstop:

On each write cycle EVERY bit changes – the ones that are already 1 go to 0 and vice versa. Thus, the number of write cycles is as reported.

Of course, this number is specific to this particular chip (which is now destroyed!) – this may or may not be a good representation of the normal life of the AVR’s EEPROM. The best way to work this out would be to destroy some more, and do some statistics on the results ;)

Why do EEPROMs die? The aforementioned method is one way to kill them, but why do they die? =))

I’m guessing something goes wrong with the silicon…electrons flipping…dunno…

Short explanation here:

http://en.wikipedia.org/wiki/EEPROM#Failure_modes

A lot better than the 3 or 4 writes I was used to getting on my NES cartridges before I lost my save games. And a lot better uptime than the 47 minutes between cartridge resets (at best). Technology has come a long way.

Last I checked, EEPROM is a capacitive-based memory technology. Here’s my crude understanding: you charge a tiny physical cell with electrons in order to set a bit. The electrons going in to set the bit, however, must pass through some sort of thin barrier to get there. We know that electrons have a measurable physical size, so each time they pass through the barrier to reach the capacitive substrate, they damage the material, essentially breaking away atoms and leaving little holes in the barrier. Therefore, each write to the capacitive EEPROM memory wears down the barrier. When the barrier gets worn thin enough, the charge (electrons) does not remain on the capacitor as long. The charge (i.e., the data on the EEPROM) is meant to last for X number of years at something like 100 °C (check the datasheet to be accurate) before it naturally dissipates enough (i.e., before enough electrons leave the cell) that the memory state is lost or corrupted. Therefore, an EEPROM test is inconclusive unless we know *how long* the memory state could be retained before it would naturally get corrupted by electrons self-discharging off the capacitive cells.

So, how long did the code wait before checking the memory value in the EEPROM? There’s a big difference between 1 second, 1 minute, 1 week, and 100 years, for instance. Perhaps you get 1 million writes before it “fails,” but at 500,000 writes the time the memory will be retained has dropped from 100 years to 10 days, so for many applications it’s as good as dead anyway.

Just some food for thought. Interesting test, regardless. Just remember: time is a factor. How long can the little capacitive bits retain their charge?
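The point about time can be folded into an endurance test by verifying only after a hold period at regular checkpoints, rather than immediately after each write. The Python sketch below assumes the memory is reached through caller-supplied `write_all`/`read_all` functions, and that `sleep` can be swapped out for testing. The checkpoint interval and hold time are parameters to choose, not values from any datasheet.

```python
import time


def endurance_with_retention_checks(write_all, read_all, max_cycles,
                                    checkpoint_every, hold_seconds, sleep=time.sleep):
    """Cycle 0xAA/0x55 writes; at each checkpoint, wait `hold_seconds` before verifying.

    Returns the first checkpoint cycle whose delayed read-back did not match, or None.
    """
    for cycle in range(1, max_cycles + 1):
        pattern = 0xAA if cycle % 2 else 0x55
        write_all(pattern)
        if cycle % checkpoint_every == 0:
            sleep(hold_seconds)  # give any charge leakage time to show up
            if any(value != pattern for value in read_all()):
                return cycle
    return None


# Usage with a plain list standing in for the EEPROM and no real waiting.
memory = [0xFF] * 8


def write_all(pattern):
    memory[:] = [pattern] * len(memory)


print(endurance_with_retention_checks(write_all, lambda: memory, 1000,
                                      checkpoint_every=100, hold_seconds=0,
                                      sleep=lambda s: None))  # → None
```

On real hardware, the hold could range from seconds to weeks. The longer it is, the fewer checkpoints fit into a practical test run.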

Was this all during one continuous power-on period, or was power to the system cycled on and off?

If there was a power cycle between each write, I would guess it would have failed closer to the manufacturer’s spec of 100,000.

OK, but what about reading the EEPROM?

Does it influence the lifetime?

No.

If the goal was to find out when a specific EEPROM location stops being writable, wouldn’t it be better to do repeated writes to just one address instead of the whole EEPROM?