Multi-node RasPi clusters seem to be a rite of passage these days for hackers working with distributed computing. [Dave’s] 40-node cluster is the latest of the super-Pi creations, and while it’s not the biggest we’ve featured here, it may be the sleekest.

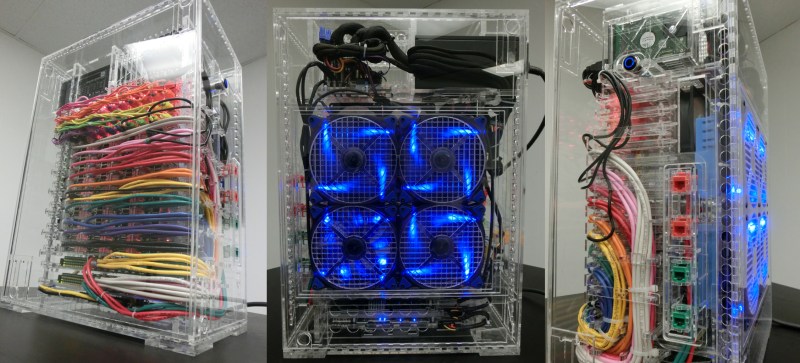

The goal of this project, aside from the obvious desire to test distributed software, was to keep the entire package smaller than a full-tower desktop. [Dave’s] design packs the Pis in groups of four across ten individual cards that easily slide out for access. Each is wired (through beautiful cable management, we must say) to one of the two 24-port switches at the bottom of the case. An ATX power supply up top feeds individual power to the Pis and everything else, including his hard drive array (five 1 TB drives, expandable to 12), a wireless router, and a hefty fan assembly.
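The card and switch layout works out as follows. This is a minimal sketch that assumes each Pi occupies exactly one switch port; the leftover ports would also have to cover any link between the two switches and the uplinks to the router and storage, which the post doesn't detail.

```python
# Counts taken from the build description; one switch port per Pi is an assumption.
CARDS = 10
PIS_PER_CARD = 4
SWITCHES = 2
PORTS_PER_SWITCH = 24


def cluster_layout(cards: int = CARDS, per_card: int = PIS_PER_CARD,
                   switches: int = SWITCHES, ports: int = PORTS_PER_SWITCH) -> dict:
    """Return node count, total switch ports, and ports left after wiring every Pi."""
    nodes = cards * per_card
    total_ports = switches * ports
    return {"nodes": nodes, "ports": total_ports, "spare_ports": total_ports - nodes}


if __name__ == "__main__":
    print(cluster_layout())  # {'nodes': 40, 'ports': 48, 'spare_ports': 8}
```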

Perhaps the greatest achievement is the custom acrylic case, which [Dave] lasered out at the Dallas Makerspace (we featured it here last month). Each panel slides off with the press of a button, and the front/back panels provide convenient access to the internal network via some jacks. If you’ve ever been remotely curious about a build like this one, you should cruise over to [Dave’s] page immediately: it’s one of the most meticulously well-documented projects we’ve seen in a long time. Videos after the break.

[Thanks Luke]

Rite of passage?

I <3 those swastika fans.

Hail Pi!

If you wanted to research distributed computing, wouldn’t it be easier to run 40 instances of a node on a single desktop PC?

Yes, definitely.

Yes.

Might be easier in some respects but probably not as much fun!

I assume you’re referring to using virtualization to run everything on one big PC. A virtualized approach may not represent the network latencies between nodes as accurately as a real network. It’d also be hard to do hardware trade-off studies between, say, USB drives and NAS drives. Some of these timing issues can have a surprising impact on your results when you’re trying to get maximum performance.

If you’re trying to learn how to write basic distributed software, then a virtual setup probably is easier and cheaper.

The RasPi’s Ethernet via broken USB host makes your argument invalid.

Not really. Even with the RasPi’s “less than optimal” networking, I’d still go with a NAS solution for the hard drives. Then I’d replace the RasPis with something that has a working gigabit NIC, maybe something like a BeagleBone Black or one of the many COM/SOM boards out there.

Maybe replace the ethernet with the SPI bus? That alone would be a major hack.

The CPU on the BeagleBone Black (AM3359) does support dual gigabit (2x RGMII), but as far as I know the current hardware revision of the board only supports 10/100 Ethernet.

Perhaps one of the Odroids. They’re nice boards with gigabit NICs.

There are numerous software utilities for simulating high-latency network environments. Not that I know why you would want to, since this is meant to simulate a supercomputer, which always tries to have low-latency interconnects.
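To illustrate the idea, here is a minimal sketch of how a single-machine or virtualized setup could model a slow link in simulated time rather than wall-clock time. The `SimulatedLink` class and the 1 ms / 100 Mbit/s figures are hypothetical, not taken from any tool mentioned in this thread; on Linux, real delay injection is usually done at the network layer instead (for example with the `netem` queueing discipline).

```python
from dataclasses import dataclass


@dataclass
class SimulatedLink:
    """A point-to-point link with fixed one-way latency and finite bandwidth.

    Messages are serialized onto the wire one at a time, so a send issued
    while the link is busy has to wait for the previous one to finish.
    """
    latency_s: float       # one-way delay added to every message (assumed value)
    bandwidth_bps: float   # link rate in bits per second
    busy_until: float = 0.0

    def send(self, now: float, size_bytes: int) -> float:
        """Queue a message at simulated time `now`; return its arrival time."""
        start = max(now, self.busy_until)            # wait for the wire to free up
        transmit = size_bytes * 8 / self.bandwidth_bps
        self.busy_until = start + transmit           # wire is occupied until then
        return self.busy_until + self.latency_s      # last bit lands after the latency


if __name__ == "__main__":
    # Hypothetical 100 Mbit/s link with 1 ms latency.
    link = SimulatedLink(latency_s=0.001, bandwidth_bps=100e6)
    print(link.send(0.0, 1_250_000))  # 10 Mbit at 100 Mbit/s: about 0.101 s
    print(link.send(0.0, 1_250_000))  # queued behind the first: about 0.201 s
```

Working in simulated time keeps the model deterministic, which makes it easy to compare runs; the trade-off is that it models only what you put into it, which is the accuracy concern raised above.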

Would it be possible to use this computer for something simple, like surfing the Internet on Midori?

Buaahjahahahahahahahahahahahahaha! Cough, chortle, choke... cough, cough... good one. So, you are going to open up 40 virtual machines on one PC? All told, you are giving each one 1/40 of the processor’s computing power, not to mention the overhead of running those 40 virtual machines. It would be too slow, even if it ran….

Master Rod

What, 40 1GHz ARM cores on a PC with 8 superscalar cores at 4GHz?

If you take into account the huge latency and narrow bandwidth of the slow USB Ethernet on the cards, a decent PC running emulators might be able to run the same codes faster than that box can. I wouldn’t like to bet on it either way.

Nice Republic serial villain laugh though, Master.
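The 40 cores at 1 GHz versus 8 cores at 4 GHz comparison is easy to put into numbers, with a big caveat: multiplying cores by clock speed ignores work done per clock, memory bandwidth, and the interconnect, so it is only a naive figure. The `relative_ipc` factor below is a hypothetical knob, not a measured value.

```python
def naive_throughput_ghz(cores: int, clock_ghz: float, relative_ipc: float = 1.0) -> float:
    """Crude aggregate 'GHz' figure: cores x clock x work-per-clock factor.

    relative_ipc is a placeholder for how much more one core does per cycle;
    real values depend heavily on the workload and have to be measured.
    """
    return cores * clock_ghz * relative_ipc


if __name__ == "__main__":
    pi_cluster = naive_throughput_ghz(40, 1.0)   # 40 ARM cores at 1 GHz
    desktop = naive_throughput_ghz(8, 4.0)       # 8 cores at 4 GHz
    print(pi_cluster, desktop)                   # 40.0 32.0
    # Any per-clock advantage above 1.25x tips the naive comparison to the desktop.
    print(desktop / pi_cluster)                  # 0.8
```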

Cable management in clusters tends to be a big issue, and you’ve done an excellent job! I would have gone with NAS drives instead of a USB interface, but life’s full of trade-offs. Great job!!

With 4 fans blowing air into the case, I didn’t see any vent holes mentioned in the videos. Is the top open? Do you have problems with back pressure?

Thanks!

The fans I selected run at only 1000 RPM. With the resistance provided by the filters, the resulting flow rate is easily detectable, but still fairly slow. As a consequence, the power supply and the small cracks all around the outside of the case are more than adequate for all exhaust to escape.

This might be the worst comment yet, but HOLY MOTHER OF PI.

Superior cable management there as well, hats off.

A work of Art. Bravo!

Probably fun and everything, but probably not the easiest way to test distributed computing and probably not the most bang for the buck. But what the heck.

Nice build.

“My goals for this project were as follows: Build a model supercomputer”

Wow, this guy is deluded if he thinks this is a supercomputer. And since he says this cost $3k, he could have gotten a few i7s and linked them together with some InfiniBand cards.

I think you missed the MODEL part of Model Supercomputer.

There’s enough there to get a vague idea of some of the issues you need to deal with – RPC, distributed storage, distributed processing (map reduce etc), network congestion, network latency, storage latency etc etc.

Yes, it’s not the same as a supercomputer, but it is a good learning model.

Plus it’s got flashy lights. Who doesn’t love flashy lights on a model!

This doesn’t model a supercomputer at all; show me any supercomputer which uses ARM processors or Ethernet for the interconnects. Also, to continue with his remarks:

“In the practical sense, this is a supercomputer which has been scaled down to the point where the entire system is about as fast as a nice desktop system.”

This isn’t anywhere near as fast as a nice desktop system.

“…which structurally mimics a modern supercomputer.”

Using a few i7s and InfiniBand cards would structurally mimic modern supercomputer clusters far more than some ARM chips and the horrible USB 100 Mbit Ethernet as implemented on the RasPi.

I honestly have no idea why someone would spend $3k building something like this, when if you really wanted to study cluster computing, you could do so in a virtualized environment.

*sigh*

I think what he meant by a model is, literally a model. Similar to dioramas, it may represent something of a larger scale, but not really being made of the same materials. Just like how replicas of buildings for displays are made. Some may be made of cardboard, and obviously the real building would be made of something stronger than cardboard.

Thanks. This is a good explanation.

This isn’t a model in that sense either. Here is one which would be: a 1/10th-scale model of a Cray-1 implemented on an FPGA.

http://www.chrisfenton.com/homebrew-cray-1a/

I’m actually really glad I chose the Pi (with its Ethernet speed just as it is) for this application. If I allowed myself fast interconnects from the beginning, I would not be forced to use bandwidth efficiently. I see this as a major concern because of the relative growth rate trends of CPU speeds and network bandwidth. With that in mind, the network adapter on the Pi may be faster than the ideal for the kind of testing I want to do. (I believe it will be close enough though.)

I have other reasons too, but the most pertinent is that I intend to spend a lot of my free time over the next several years writing distributed code. I’m doing what I can to make sure I enjoy it as much as possible. It is my free time, after all.

lol wut? What supercomputer is ever designed with a low-bandwidth interconnect, especially one under 100 Mbps? And how could the network adapter possibly be faster than ideal? Are you really an MCSE who doesn’t know how to change network adapter link speeds? Are you really a software developer who doesn’t know how to limit the bandwidth their software uses?

Well, after reading your last sentence, this all becomes clear: you don’t understand the difference between a supercomputer and distributed computing. Thank you for confirming how worthless certifications are.

You’re not getting this “model” thing, Matt.

The point of this isn’t to run codes as fast as possible. The point is to simulate a supercomputer, particularly the unusual style of programming required: designing parallel loads with limited communication between them. It forces you to design your data sets in a particular way, so you can run code that fits the strengths and weaknesses of a supercomputer.

As Dave mentions, the Pi’s slower interconnection is instructive for this. It presents a large delay that he has to make an effort to cope with. That’s how supercomputers are programmed. The delays are smaller with Infiniband, etc, but they’re still large relative to the local code per processor.

This isn’t about speed, it’s about architecture. Supercomputers use a specific and unusual architecture. You’re going to need to learn more than a few brand names for whatever high-end equipment is called these days, if you want to know what you’re talking about.
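One concrete way a large interconnect delay shapes the code is message batching. Under a simple latency-plus-bandwidth cost model (an assumption; real networks add contention and protocol overhead), every message pays the fixed latency once, so sending the same data in fewer, larger messages is much cheaper on a slow link. The 0.5 ms latency below is illustrative, not a measurement of the Pi.

```python
def comm_time(n_messages: int, total_bytes: int,
              latency_s: float, bandwidth_bps: float) -> float:
    """Latency-bandwidth ("alpha-beta") model: each message pays the latency once,
    and the payload pays bytes/bandwidth regardless of how it is split up."""
    return n_messages * latency_s + total_bytes * 8 / bandwidth_bps


if __name__ == "__main__":
    LATENCY = 0.0005       # 0.5 ms per message (illustrative)
    BANDWIDTH = 100e6      # 100 Mbit/s, the Pi's nominal Ethernet rate
    payload = 1_000_000    # 1 MB to exchange

    chatty = comm_time(1000, payload, LATENCY, BANDWIDTH)   # 1000 x 1 KB
    batched = comm_time(1, payload, LATENCY, BANDWIDTH)     # one 1 MB message
    print(f"chatty: {chatty:.4f} s, batched: {batched:.4f} s")
    # chatty: 0.5800 s, batched: 0.0805 s
```

The bandwidth term is identical in both cases; the whole difference comes from paying the per-message latency 1000 times instead of once.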

Interesting economics puzzle.

If this cost $3K, how would 40 1 GHz ARM CPUs stack up against equivalently priced Intel chips, be it lots of cheap i3 chips, i5s, i7s, or maybe a Xeon system adding up to $3K?

I suppose then you need to work out decent parallel processing measurement metrics to compare them properly.

Not easy.
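The usual starting point for those metrics is speedup and parallel efficiency, measured on the same workload, with Amdahl's law as a bound on how much any machine can gain when part of the job stays serial. A minimal sketch; the timings and the 95% parallel fraction in the example are made-up inputs, not benchmarks of this cluster.

```python
def speedup(t_serial: float, t_parallel: float) -> float:
    """How many times faster the parallel run is than the single-node run."""
    return t_serial / t_parallel


def efficiency(t_serial: float, t_parallel: float, workers: int) -> float:
    """Speedup divided by worker count; 1.0 means perfect scaling."""
    return speedup(t_serial, t_parallel) / workers


def amdahl_limit(parallel_fraction: float, workers: int) -> float:
    """Amdahl's law: best possible speedup when only part of the work parallelizes."""
    serial = 1.0 - parallel_fraction
    return 1.0 / (serial + parallel_fraction / workers)


if __name__ == "__main__":
    # Hypothetical: a job takes 100 s on one node and 5 s on 40 nodes.
    print(speedup(100, 5), efficiency(100, 5, 40))   # 20.0 0.5
    # Even with 95% of the work parallel, 40 nodes cap out near 13.6x.
    print(round(amdahl_limit(0.95, 40), 1))          # 13.6
```

For the $3K question, dividing a measured throughput by each system's price would give a rough per-dollar figure to compare.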

For x86, cost probably isn’t the limiting factor. The “full tower desktop” form factor probably limits the number of processors before the budget does. If you used the Intel “NUC” form factor, maybe you could pack enough in to push the budget. Hmm… Interesting “what if”!

Well, if you go with six of these:

PCPartPicker part list: http://pcpartpicker.com/p/2V90P

CPU: Intel Core i7-4771 3.5GHz Quad-Core Processor ($299.99 @ Newegg)

Motherboard: MSI H81M-P33 Micro ATX LGA1150 Motherboard ($42.98 @ Newegg)

Memory: Patriot Signature 2GB (1 x 2GB) DDR3-1600 Memory ($17.99 @ Newegg)

Power Supply: Diablotek 350W ATX Power Supply ($9.99 @ Amazon)

Other: Cooler Master HAF Stacker 915F ($64.99)

Total: $435.94

(Prices include shipping, taxes, and discounts when available.)

(Generated by PCPartPicker 2014-02-17 21:34 EST-0500)

And this for the NAS

PCPartPicker part list: http://pcpartpicker.com/p/2V9kW

Price breakdown by merchant: http://pcpartpicker.com/p/2V9kW/by_merchant/

Benchmarks: http://pcpartpicker.com/p/2V9kW/benchmarks/

CPU: Integrated with Motherboard

Motherboard: Asus E35M1-I Mini ITX E-Series E-Series E-350 Motherboard ($126.71 @ Amazon)

Memory: Patriot Signature 2GB (1 x 2GB) DDR3-1600 Memory ($17.99 @ Newegg)

Storage: Hitachi Ultrastar 1TB 3.5″ 7200RPM Internal Hard Drive ($50.96 @ Amazon)

Storage: Hitachi Ultrastar 1TB 3.5″ 7200RPM Internal Hard Drive ($50.96 @ Amazon)

Power Supply: Diablotek 350W ATX Power Supply ($9.99 @ Amazon)

Other: Cooler Master HAF Stacker 915F ($64.99)

Total: $321.60

(Prices include shipping, taxes, and discounts when available.)

(Generated by PCPartPicker 2014-02-17 21:38 EST-0500)

And boot off the network or USB drives, and you have a six-node Intel i7-based cluster. The Stacker cases all link together, so if you wanted to get really slick you could, in theory, use a single power supply for the bunch.
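Totting up the two part lists above shows how close six compute nodes plus the storage box come to the $3K figure. This sketch uses the quoted February 2014 prices in integer cents to avoid floating-point rounding; it leaves out network switches, boot drives, and cabling, which the lists don't price.

```python
# Quoted PCPartPicker prices (Feb 2014), in cents.
NODE_PARTS = {
    "Intel Core i7-4771": 29999,
    "MSI H81M-P33 motherboard": 4298,
    "Patriot Signature 2GB DDR3-1600": 1799,
    "Diablotek 350W PSU": 999,
    "Cooler Master HAF Stacker 915F": 6499,
}
NAS_PARTS = {
    "Asus E35M1-I motherboard": 12671,
    "Patriot Signature 2GB DDR3-1600": 1799,
    "Hitachi Ultrastar 1TB (x2)": 2 * 5096,
    "Diablotek 350W PSU": 999,
    "Cooler Master HAF Stacker 915F": 6499,
}


def cluster_cost_cents(nodes: int = 6) -> int:
    """Total for `nodes` identical compute nodes plus one NAS box."""
    return nodes * sum(NODE_PARTS.values()) + sum(NAS_PARTS.values())


if __name__ == "__main__":
    print(sum(NODE_PARTS.values()))   # 43594 -> $435.94 per node
    print(sum(NAS_PARTS.values()))    # 32160 -> $321.60
    total = cluster_cost_cents()
    print(f"${total / 100:,.2f}")     # $2,937.24
```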

Impressive specs, but it still doesn’t answer the original question.

If the guy who designed the RPi 2 years ago ever thought that so many people would buy it to make a “nice” box around and post the picture,

he should have thought twice before choosing the placement of his f…. connectors.

For once, it’s not made of Lego, so I’ll give an upvote.

I’m not sure why he went with 12 V to 5 V buck converters (especially cheap and nasty ones). One or two SMPS units would be around 150 to 200, so a little more expensive, but smaller and more reliable.

nice and fancy case.

I’d be a lot more interested in how he actually uses this setup than in the pretty acrylic case.

Just the same way that I am more interested in how a nuclear power plant operates than in how prettily the cooling towers are painted.

My youtube videos used to sound similar to this. Then I started using the ‘remove background noise’ feature in iMovie. [Dave] should check if his video editing software has a similar option.

Thanks for the advice.

What you heard in my YouTube video is already filtered with a simple bandpass filter. I managed to clean about 70% of the noise out of the original that way. Granted, I likely could have done more if I’d spent time hunting for a better noise filter; in hindsight, I probably should have.

I intend to use other audio hardware for future videos, as the source audio from my point-and-shoot is of poor quality. If that doesn’t solve the problem, I’ll be hunting for a better filter to use for this kind of thing.

Virtualization is how many of us learn.

Models are how we experience. You can get a feel for how things work in a palpable way pretty easily.

This is probably the most portable 40-node cluster that exists. It can be used as a teaching tool to show people what distributed computing does, and when you fry one, a node can be replaced at minimal cost. You also don’t have to worry about part lifespan as much, since RasPi boards are going to keep the same form factor for a long time and be available “somewhere” for at least the next 5 years. That is something you just cannot say for even some mid-grade server systems. Then, there is the power…

That being said, I would have gone with a BBB (Sitara), iMX6, or Samsung Octa based ARM system, or at the very least something with Gigabit ports (I just wired my whole house with 24 ports of shielded 10 Gbps cabling). However, that would raise the cost significantly.

The future is in distributed ARM computing, and the BILLIONS of dollars invested in ARM-based server systems research by Samsung, Canonical, IBM, HP, and Cisco should be easy evidence of this.

This is an Open Source Project, so much of this can be modified to newer, better equipment. There is no reason why this can’t be made for microITX systems as well.

Thanks, StacyD.

I bought my first 16 Pis in 2012. It’s possible I would have done this project with Beaglebone Blacks (launched 4/23/13) if not for that. I considered it, but ultimately decided that Pi was good enough. Designing a card for the BBB should be easy though; I still intend to do it eventually, for people who want other options.

If a board fits in an Altoids tin and you can access USB, power, SD, and ethernet from the short sides, a compatible card can probably be made that holds 4 of them. I’m hoping makers of these boards will release more compatible platforms; I’m looking forward to upgrading this thing to Gigabit Ethernet switches and 8+-core boards eventually.

Just loving the naysayers!

My first “Beowulf” cluster was 20 386 boards, then I went to 20 486DX4-100s.

Seriously, boyz, if you’re going to build one of these, it will be to run POV-Ray, YafRay, or Aqsis.

Virtualising 20 cores on an i7 or whatever is a dumb suggestion and shows you simply just don’t “get it.”

40 Raspberry Pis get you 20 GB of RAM; this is what we are after, nothing else really matters.
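The 20 GB figure checks out if each board is a 512 MB Model B (an assumption; the earliest Model B boards shipped with 256 MB). The RAM is aggregate, though, so any single node still only has its own share to work with.

```python
def aggregate_ram_mb(nodes: int, mb_per_node: int = 512) -> int:
    """Total RAM across the cluster, assuming identical boards (512 MB default is an assumption)."""
    return nodes * mb_per_node


if __name__ == "__main__":
    total = aggregate_ram_mb(40)
    print(total, total / 1024)   # 20480 20.0  (MB, then GB)
    print(aggregate_ram_mb(1))   # 512: the per-node ceiling
```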

Awesome machine, I knew someone was going to do this.

To the people suggesting to virtualise the entire rig: I’m building a similar cluster out of Pis for hardware demonstration purposes as well as, of course, distributed computing, and I can’t demonstrate the hardware of 40+ VMs if it’s all a single computer. Clearly [Dave] has built this cluster to his own spec, which only a few of the commenters seem to understand.

Great work [Dave], looks awesome.

As of Feb 2015, it’s a shame that this, as with so many other projects, has been so quickly superseded by the Raspberry Pi 2. I hope the case can allow for the new boards.

DOES IT CAN PLAY FACEBOOK

But can it run Crysis?

I want to take this to a cafe to do my study.