There’s a dreaded disease that’s plagued Internet Service Providers for years. OK, there’s probably several diseases, but today we’re talking about bufferbloat. What it is, how to test for it, and finally what you can do about it. Oh, and a huge shout-out to all the folks working on this problem. Many programmers and engineers, like Vint Cerf, Dave Taht, Jim Gettys, and many more have cracked this nut for our collective benefit.

When your computer sends a TCP/IP packet to another host on the Internet, that packet routes through your computer, through the network card, through a switch, through your router, through an ISP modem, through a couple ISP routers, and then finally through some very large routers on its way to the datacenter. Or maybe through that convoluted chain of devices in reverse, to arrive at another desktop. It’s amazing that the whole thing works at all, really. Each of those hops represents another place for things to go wrong. And if something really goes wrong, you know it right away. Pages suddenly won’t load. Your VoIP calls get cut off, or have drop-outs. It’s pretty easy to spot a broken connection, even if finding and fixing it isn’t so trivial.

That’s an obvious problem. What if you have a non-obvious problem? Sites load, but just a little slower than it seems like they used to. You know how to use a command line, so you try a ping test. Huh, 15.0 ms off to Google.com. Let it run for a hundred packets, and essentially no packet loss. But something’s just not right. When someone else is streaming a movie, or a machine is pushing a backup up to a remote server, it all falls apart. That’s bufferbloat, and it’s actually really easy to do a simple test to detect it. Run a speed test, and run a ping test while your connection is being saturated. If your latency under load goes through the roof, you likely have bufferbloat. There are even a few of the big speed test sites that now offer bufferbloat tests. But first, some history.

History of Collapse

The Internet in the 1980s was a very different place. The Domain Name System replaced hosts.txt as the way hostname to IP resolution was done in 1982. January 1st, 1983, the ARPANET adopted TCP/IP — the birthday of the Internet. By 1984, there was a problem brewing, and in 1986 the Internet suffered a heart attack in the form of congestion collapse.

In those days, cutting edge local networks were running at 10 megabits per second, but the site-to-site links were only transferring 56 kilobits per second at best. In late 1986, links suddenly saw extreme slowdowns, like the 400 yard link between Lawrence Berkeley Laboratory and the University of California at Berkeley. Instead of 56 kbps, this link was suddenly transferring at an effective 40 bits per second. The problem was congestion. It’s a very similar model to what happens when too many cars are on the same stretch of highway: traffic slows to a crawl.

The 4.3 release of BSD had a TCP implementation that did a couple interesting things. First, it would start sending packets at full wire speed right away. And second, if a packet was dropped along the way, it would resend it as soon as possible. On a Local Area Network, where there’s a uniform network speed, this works out just fine. On the early Internet, and on this Berkeley link in particular, the 10 Mbps LAN connection was funneled down to 32 kbps or 56 kbps.

To deal with this mismatch, the gateways on either side of the link had a small buffer, roughly 30 packets’ worth. In a congestion scenario, more than 30 packets backed up at the gateway, and the extra packets were just dropped. When packets were dropped, or congestion pushed the round trip time beyond the timeout threshold, the sender immediately re-sent, generating more traffic. Several hosts trying to send too much data over the too-narrow connection resulted in congestion collapse, a feedback loop of traffic. The early Internet unintentionally DDoS’d itself.

The solution was a series of algorithms added to BSD’s TCP implementation, which have now been adopted as part of the standard. Put simply, in order to send as quickly as possible, traffic needed to be intelligently slowed down. The first technique introduced was slow start. You can see this still being used when you run a speed test, and the connection starts at a very slow speed, and then ramps up quickly. Specifically, only one packet is sent at the start of transmission. For each received packet, an acknowledgement packet (an ack) is returned. Upon receiving an ack, two more packets are sent down the wire. This results in a quick ramping up to twice the maximum rate of the slowest link in the connection chain. The number of packets “out” at a time is called the congestion window size. So another way to look at the issue is that each successfully acknowledged packet increases the congestion window by one, doubling it every round trip.
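As a rough illustration of that ramp-up, here is a minimal Python sketch of slow start. It is a toy model, not any real TCP stack: it assumes no packet is ever lost and that every packet in flight is acknowledged within one round trip, and the function name is made up for this example.

```python
def slow_start(round_trips, initial_window=1):
    """Return the congestion window (in packets) at the start of each round trip.

    Toy model: no loss, and every packet in flight is acked within the round trip.
    """
    window = initial_window
    history = []
    for _ in range(round_trips):
        history.append(window)
        acks = window      # one ack comes back for every packet sent this round
        window += acks     # each ack grows the window by one packet
    return history


print(slow_start(6))  # [1, 2, 4, 8, 16, 32]
```

The window doubles every round trip, which is why a speed test climbs so quickly in its first second or two.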

Once slow-start has done its job, and the first packet is dropped or times out, the TCP flow transitions to using a congestion avoidance algorithm. This one has an emphasis on maintaining a stable data rate. If a packet is dropped, the window is cut in half, and every time a full window’s worth of packets is received, the window increases by one. The result is a sawtooth graph that is constantly bouncing around the maximum throughput of the entire data path. This is a bit of an over-simplification, and the algorithms have been developed further over time, but the point is that rolling out this extension to TCP/IP saved the Internet. In some cases updates were sent on tape, through the mail, something of a hard reboot of the whole network.
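The sawtooth is easy to reproduce with a few lines of Python. This is a simplified sketch under stated assumptions: the path is modeled as a single hypothetical capacity figure (packets per round trip), and a drop is assumed to happen whenever the window exceeds it. Real stacks are considerably more subtle.

```python
def congestion_avoidance(start_window, capacity, round_trips):
    """Simulate additive increase, multiplicative decrease.

    capacity is a hypothetical number of packets the path can carry per
    round trip; a window above it is assumed to cause a drop.
    """
    window = start_window
    history = []
    for _ in range(round_trips):
        history.append(window)
        if window > capacity:
            window = max(1, window // 2)   # drop detected: cut the window in half
        else:
            window += 1                    # full window acked: grow by one packet
    return history


print(congestion_avoidance(8, 10, 12))
# [8, 9, 10, 11, 5, 6, 7, 8, 9, 10, 11, 5]
```

The window creeps up past the capacity, gets halved, and creeps up again, which is the bouncing around maximum throughput described above.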

Fast-Forward to 2009

The Internet has evolved a bit since 1986. One of the things that’s changed is that the price of hardware has come down, and capabilities have gone up dramatically. A gateway from 1986 would measure its buffer in kilobytes, and less than 100 at that. Today, it’s pretty trivial to throw megabytes and gigabytes of memory at problems, and router buffers are no exception. What happens when algorithms written for 50 KB buffer sizes are met with 50 MB buffers in modern devices? Predictably, things go wrong.

When a large First In First Out (FIFO) buffer is sitting on the bottleneck, that buffer has to fill completely before packets are dropped. A TCP flow is intended to slow-start up to 2x available bandwidth, very quickly start dropping packets, and slash its bandwidth use in half. Bufferbloat is what happens when that flow spends too much time trying to send at twice the available speed, waiting for the buffer to fill. And once the connection jumps into its stable congestion avoidance mode, that algorithm depends on either dropped packets or timeouts, where the timeout threshold is derived from the observed round-trip time. The result is that for any connection, the round-trip latency increases with the number of buffered packets on the path. And for a connection under load, the TCP congestion avoidance techniques are designed to fill those buffers before reducing the congestion window.

So how bad can it be? On a local network, your round trip time is measured in microseconds. Your time to an Internet host should be measured in milliseconds. Bufferbloat pushes that to seconds, and tens of seconds in some of the worst cases. Where that really causes problems is when it causes traffic to time out at the application layer. Bufferbloat delays all traffic, so it can cause DNS timeouts, make VoIP calls into a garbled mess, and make the Internet a painful experience.
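To see where delays of whole seconds come from, note that the worst-case wait a full FIFO buffer adds is roughly its size divided by the rate at which the bottleneck link drains it. A small Python sketch of that arithmetic follows; the buffer sizes and link rates are made-up illustrative values, not measurements of any particular device.

```python
def queue_delay_seconds(buffer_bytes, link_bits_per_second):
    """Worst-case time a packet waits behind a completely full FIFO buffer."""
    return buffer_bytes * 8 / link_bits_per_second


# Hypothetical example: 1 MB of buffer in front of a 10 Mbit/s uplink.
print(queue_delay_seconds(1_000_000, 10_000_000))     # 0.8

# Hypothetical example: 50 MB of buffer in front of a 100 Mbit/s link.
print(queue_delay_seconds(50_000_000, 100_000_000))   # 4.0
```

Every packet, including the tiny DNS query or VoIP frame, sits in that same line, which is why the delay hits all traffic at once.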

The solution is Smart Queue Management. There’s a lot of work that’s been done on this concept since 1986. Fair queuing was one of the first solutions, making intermediary buffers smart, and splitting individual traffic flows into individual queues. When the link was congested, each queue would release a single packet at a time, so downloading an ISO over BitTorrent wouldn’t entirely crowd out your VoIP traffic. After many iterations, the CAKE algorithm has been developed and widely deployed. All of these solutions essentially trade off a little bit of maximum throughput in order to ensure significantly reduced latency.
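The basic fair queuing idea can be sketched in a few lines of Python. This is only a plain round-robin toy, not CAKE or fq_codel, and the flow names and packet labels are invented for illustration.

```python
from collections import deque


def round_robin_dequeue(flows):
    """Return the transmit order when each flow's queue releases one packet per turn.

    flows maps a flow name to its list of queued packets.
    """
    queues = {name: deque(packets) for name, packets in flows.items()}
    order = []
    while any(queues.values()):          # keep going while any queue holds packets
        for queue in queues.values():
            if queue:
                order.append(queue.popleft())
    return order


backlog = {
    "torrent": ["t1", "t2", "t3", "t4"],   # bulk flow with a deep backlog
    "voip": ["v1"],                        # sparse, latency-sensitive flow
}
print(round_robin_dequeue(backlog))  # ['t1', 'v1', 't2', 't3', 't4']
```

With a single FIFO the VoIP packet would have waited behind all four torrent packets; with per-flow queues it goes out second.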

Are You FLENT in Bufferbloat?

I would love to tell you that bufferbloat is a solved problem, and that you surely don’t have a problem with it on your network. That, unfortunately, isn’t quite the case. For a rough handle on whether you have a problem, use the speed tests at dslreports, fast.com, or speedtest.net. Each of these three, and probably others, give some sort of latency under load measurement. There’s a bufferbloat-specific test hosted by Waveform, and it seems to be the best one to run in the browser. An ideal network will still show low latency when there is congestion. If your latency spikes significantly higher during the test, you probably have a case of bufferbloat.
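If you would rather compare your own ping results by hand, the number to look at is how much latency rises under load. Here is a minimal Python sketch, assuming you have copied round-trip times in milliseconds from an idle ping run and from one taken during a speed test; the sample values and the function name are hypothetical.

```python
from statistics import median


def added_latency_ms(idle_rtts_ms, loaded_rtts_ms):
    """Median latency under load minus median idle latency, in milliseconds."""
    return median(loaded_rtts_ms) - median(idle_rtts_ms)


idle = [15.0, 14.8, 15.3]        # hypothetical pings on a quiet link
loaded = [120.5, 180.2, 150.0]   # hypothetical pings during a speed test
print(added_latency_ms(idle, loaded))  # 135.0
```

Using the median rather than the mean keeps a single stray ping from skewing the comparison.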

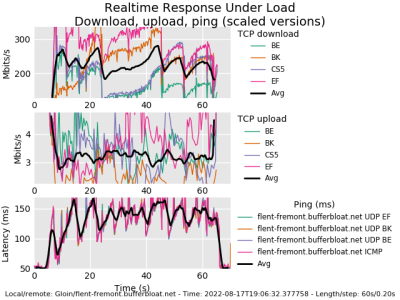

For the nerdier of us, there is a command line tool, flent, that does an in-depth bufferbloat test. I used the command flent rrul -p all_scaled -H flent-fremont.bufferbloat.net to generate this chart, and you can see the latency quickly climbing over 100 ms under load. This is running the Real Time Response under Load test, and clearly indicates I have a bit of a bufferbloat problem on my network. Problem identified, what can I do about it?

You Can Have Your Cake

Since we’re all running OpenWrt routers on our networks… You are running an open source router, right? Alternatively there are a handful of commercial routers that have some sort of SQM built-in, but we’re definitely not satisfied with that here on Hackaday. The FOSS solution here is CAKE, a queue management system, and it’s already available in the OpenWrt repository. The package you’re looking for is luci-app-sqm. Installing that gives you a new page on the web interface — under Network -> SQM QoS.

On that page, pick your WAN interface as Interface name. Next, convert your speed test results into Kilobits/second, shave off about 5%, and punch those into the upload and download speeds. Flip over to the Queue Discipline tab, where we ideally want to use Cake and piece_of_cake.qos as the options. The last tab, Link Layer Adaptation, requires a bit of homework to determine the best values, but Ethernet with overhead and an overhead of 22 bytes seem to be sane values to start with. Enable the SQM instance, and then save and apply.
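The arithmetic for the two speed fields is simple, but easy to get wrong by a factor of 1000. A small Python sketch, assuming your speed test reports megabits per second; the function name and the example speeds are hypothetical.

```python
def sqm_rate_kbit(measured_mbit, margin=0.05):
    """Convert a measured speed in Mbit/s to kbit/s, minus a safety margin."""
    return round(measured_mbit * 1000 * (1 - margin))


# Hypothetical speed test result: 100 Mbit/s down, 20 Mbit/s up.
print(sqm_rate_kbit(100))  # 95000
print(sqm_rate_kbit(20))   # 19000
```

The margin is exposed as a parameter because the 5% figure is only a starting point; the tuning step that follows may push it up or down for each direction.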

And now we tune and test. On first install, the router may actually need a reboot to get the kernel module loaded. But you should see an immediate difference on one of the bufferbloat tests. If your upload or download bufferbloat is still excessive, tune that direction’s speed a bit lower by a couple percent. If your bufferbloat drops to 0, try increasing the speed slightly. You’re looking for a minimal effect on maximum speed, and a maximum effect on bufferbloat. And that’s it! You’ve slain the Bufferbloat Beast!

“Thompson Router” by Simeon W is licensed under CC BY 2.0 .

I was prioritizing TCP ACK’s to speed up my internet access. (ref: https://www.benzedrine.ch/ackpri.html ). Of course if everyone did that *ponder*, I’m not quite sure what the overall outcome would be.

A lot of ISPs do do that. More common nowadays is some form of filtering out redundant acks. It’s the only way to get full download speeds out of 20×1 down/up ratios. A lot of products do this fairly badly, too. cake has an ack-filter you can enable on the x1 side of the connection. Example of use: https://blog.cerowrt.org/post/ack_filtering/

This was called “TurboTCP” on my old router. Prioritize ACKs, limit the torrents to 80% max throughput, then mom can watch youtube haha. SQM looks even nicer.

[Brain experiences bufferbloat while reading article.]

P. S. Is there some checklist for setting up a home router for security, such as which ports to disable?

I assume you mean ports on the WAN side?

If it’s a home router then you’d expect all incoming ports to be firewalled. Some may expose a web interface to the router but even that’s bad practice so rarely done.

Well, for me it is certainly a case of not knowing what I don’t know!

B^)

The problem is that there is a LOT to know, so it’s hard to cover everything and still make it short and simple.

This is why you should be using supported hardware, you can get answers to your questions instead of “we don’t know how that works on your hardware”.

For example. There may be hardware tweaks for your platform to prioritize network activity over user interface. If this stuff is not right then your performance will suffer greatly.

Also if you have problems installing on your odd setup then nobody will be able to help you.

I have been there and done that with this stuff many times, and the only guaranteed roads to success are the ones that have already been mapped.

“Since we’re all running OpenWRT routers on our networks…”

No way. I’ve had my fill of flashing open firmwares onto consumer router hardware. All that work, you finally get everything just the way you want it and then… spontaneous total brick, or not a total brick but the behavior becomes really sporadic. I’m not talking about making a mistake during installation or configuration. I mean days to months after that. One day it’s working, the next it just isn’t! I don’t know but I suspect it’s the flash memory running out of writes because they never intended consumers to use it that way.

Years ago before purpose built, consumer grade commodity routers were a thing I used an old PC for sharing my connection. Who remembers WinGate? Ever set up connection sharing with Linux kernel 2.x? Ah them were the days!

I didn’t want to go back to desktop hardware because of electricity consumption. But PCs have come a long way in power efficiency since then. About a year ago yet another router box gave up the ghost. I had enough. This one wasn’t even consumer grade, it was a used Watchguard FireBox from the office. It had an internal CF card so if it was a write issue I could have swapped that out but this time the problem was that the serial interface, necessary for initial setup, became flaky. In frustration I grabbed an old PC (also discarded by my workplace), added a second NIC and installed OPNSense.

That was a TEMPORARY solution.

OMG, everything is so much easier to get working! And I happened to have a line current monitor lying around so I plugged that in too. Looks like I might spend somewhere between $5 and $10/year to keep this thing going. I can live with that. And if anything breaks, well, grab more hardware off the PC junk pile. Who cares? I guess maybe one of these days I ought to back up the hard drive. But I can do that, it’s a real hard drive, not a BGA chip soldered to a board. Wonderful!

A wise man once told me temporary is 12 years, permanent is 6 months. I’m not sure I will be ready to go back after only 12 years!

I too got tired of running on consumer routers, but I went the route of running an OpenWRT VM on a home server. Works like a charm. Upgradable, too, as evidenced by the 2.5G Ethernet adapter currently talking to the cable modem.

This samknows piece ( https://samknows.com/blog/bufferbloat ) goes into some of the history of this project with vint cerf and jim. Has some good graphs of typical latencies under load in the USA. US DSL has a LUL of 853ms.

Off-lease USFF corporate desktops with skylake chips are sub-200 these days, and haswell sub 100. They usually have 128 gig ssds and 8-16 gigs of ram. They work great as routers, and as easy replacements for unavailable raspberry pis as long as you don’t need GPIO.

Just run an OpenWRT based router, like TPLink

Don’t forget to close the “diagnostics” port they ship with their products…

Ahh WinGate! The joys of sharing a 28k, later upgraded to some flavour of 56k, between 4 or 5 PCs connected via 10Base2 in some unholy cabling configuration – not star, not linear, sort of linear with a few branches.

Then came along ADSL and I think the modem supplied was USB so not Linux compatible, so forked out a few quid for a vaguely supported PCI ADSL modem. Had binary blobs that worked with some 2.4 kernels, but often lagged kernel releases by many months.

As for OpenWRT, indeed it can be hit and miss. Some devices are ‘supported’ with caveats, so you need to read carefully before you get a router if you want to run OpenWRT on it. If you pick the right hardware though then there are a few gems with good hardware and stable support, all for a reasonable price.

I used an old desktop PC from work as my OPNSense router, too. In the end I ended up with a PCEngines APU2 with ECC memory; I have all my networking stuff in the basement, and apparently the radon content in the soil around my house causes an awful lot of bit flips and subsequent crashes. Took a while to discover the root of that problem (by switching my NAS to ECC memory after discovering some nasty bit rot on its array, and then watching the ECC corrected error counts continue to go up and up…). The much reduced power draw is nice too. :)

Also, for anyone using OPNSense (or the Pf equivalent), you too can reduce bufferbloat with queues, pipes, and CoDel. There’s a couple of guides floating around to point you in the right direction.

Since this article reminded me to go check my config and update it… https://maltechx.de/en/2021/03/opnsense-setup-traffic-shaping-and-reduce-bufferbloat/

I too often use a repurposed device – such as a laptop or raspi – as a firewall/gateway device. This was easier back when we still had CARDBUS, as usb-dongles are not pleasing.

I wanted to make a couple points on the article. Cake has been part of linux since version 4.18, and fq_codel has been in there since version 3.3. Applied with the htb + sqm-scripts or cake by itself you can make any linux box of any stripe do better network queue management. All the 3rd party router firmwares have at least fq_codel now, as do many commercial off the shelf products like those from evenroute, eero, google chromebooks, and some of their APs, ubnt, firewalla, etc, etc – it’s quite the long list, and does in most cases require the teeny bit of manual configuration of upload/download bandwidth and link type to turn on. Recognition of having a bufferbloat problem has to come first… and then this step.

However a larger point is that smarter queue management is needed for every transition from fast to slow – not just 10Mbit to 56kbit as in the 80s, but as two prime examples, stepping down from 10Gbit to 1Gbit, or from Gbit to wifi, and so long as you are running at the native rate of the interface, not an artificially set one by your ISP – just putting one of these qdiscs on that interface suffices to nearly eliminate the bufferbloat problem on that step-down. (fq_codel is now the near universal default on linux). Most of our work in recent years has been on taming wifi, for which there are now native (no need to configure) implementations for the ath9k, ath10k, and mt76 chips in openwrt and in linux and in other distros that are reasonably up to date. Our latest work is in openwrt 22.03-rc6, and already mostly upstream in linux mainline.

As other posters have noted the BSD’s also have fq_codel, and it can be configured to shape, but it does not have the native “step down at line rate” implementations that linux does.

RaspberryPi 4 with OpenWRT is fast and stable while using very little power.

Been using OpenWRT in consumer devices for over a decade.

It’s fine… if you can deal with hardware to some degree. Understand how it boots, understand u-boot, solder the headers for serial port and/or JTAG and so on.

I’ve managed to “brick” devices with snapshots or custom builds before, but nothing I couldn’t quickly fix through u-boot’s shell via uart.

If you’re an end user and don’t want trouble: Buy only supported devices, read the install process before buying. And please stick to release builds.

“Who remembers WinGate? ”

ME…. shared a 31,2k dial-up on a 2nd phone line. Used for Limeware, etc…lol Had a 10bT coax network in my house. Man..those were the days!! haha

I got my start on a Timex-Sinclair(spelling?) in ~ 1981/82? So I have been around for a day or two!.lol

Ohh and before the 31,2k I used a 300b-HF/1200b VHF packet connect to other hams on the local mtn. top node. I still run a 1200b mtn top node….now if I just had a “user” :(

There’s nothing wrong with using a PC as a router, I do it myself. But still, OpenWRT goes well out of its way to make sure it is not wearing down the flash, all you have to do is be even mildly familiar with it to see that. Unless you’re installing a package, writes go to /tmp, like you would expect, and configuration changes are set up to use NVRAM, not flash. People have known about flash wear for years, dude. Are you sure you aren’t conflating experiences with things like DD-WRT which is neither free nor particularly good?

If you run OpenBSD and pf for your router (and you should), here’s a site that talks about bufferbloat and how to fix it. Yeah, it says “6.2 or newer”, but it still works perfectly on the 7.1. I have 0ms bufferbloat for both upload and download using this method.

https://www.pauladamsmith.com/blog/2018/07/fixing-bufferbloat-on-your-home-network-with-openbsd-6.2-or-newer.html

I just did the ping test, and I get 8 ms (to my provider) without load, and 11 ms under full load. Not so bad.

I try to stress that web tools are not enough to thoroughly diagnose potential bufferbloat problems because none of the web tests run long enough (especially at rates above about 200Mbit), nor do they test up and download performance simultaneously. Flent is available for most linuxes and BSDs and is really good at diagnosing a variety of network problems besides bufferbloat, and the 60 second rrul test is the gold standard for overall up/down throughput and latency.

Also, the bufferbloat project operates a fleet of flent servers all over the world, if you need access to a nearby one (they are called {de,london,singapore,mumbai,atlanta,fremont,newark}.starlink.taht.net), but we encourage people to setup their own to test their own networks and routers.

We are finding more and more ISPs are indeed deploying bufferbloat solutions, whether native to the CPE (ubnt and mikrotik are prime examples, also those few cable providers such as comcast that have turned on DOCSIS-pie), or a middlebox solution that does the shaping right in the middle of the network. Preseem is pretty popular in the fixed wireless and WISP markets. LibreQos is also being used in this way. It’s much lower cost (initially) to stick a middlebox in there for smaller ISPs with a deployed and unfixable CPE base, although admittedly slightly less effective. One ISP I worked with recently deployed LibreQos and got such fantastic results (calls about “speed” to tech support vanished), and with the freed up resources, they immediately moved to upgrade all their mikrotik CPE to run cake…

My hope is generally that now that solutions are so widely available, people will fix it for themselves, fix it for their friends, and nag their ISPs to do more of the right things in this area, but first there needs to be that “aha!” moment, that bandwidth !=speed if you have too much buffering on that self-same link.

I’m really encouraged by getting so many reports back that show their ISP has already addressed this issue. I wish we had more data back on what they were actually deploying. Cake, in particular, has some really advanced features like per host/per flow FQ that make home and small business networks scale to a lot more devices, more effectively.

thanks for the description of the capacity estimation mechanism, i always figured it had to be something like that. i used to have problems where some sort of mismatch between the two sides (like a 2010 version of linux talking to a 1999 version of freebsd, i don’t know if that was the real cause) caused it to estimate the available bandwidth as asymptotically approaching zero, often timing out the connection…regardless of what the actual link capacity was. add it to the list of things i never got to the bottom of.

but it was one of those things that was amenable to artificially limiting the bandwidth used…i made a tiny ‘cat’ clone that only let bytes go from its stdin to its stdout at a low rate, and that managed to obviate any OS-level bandwidth management.

around 2006, i established a fairly intricate set of rules for the then-newish linux “QoS” queue management. interactive >> bulk >> guest, and all of them were limited to less than the local link bandwidth. it actually worked really well. in hindsight, the only reason i needed it is that my cable modem was at an awkward phase in development where it had a large buffer but limited bandwidth. a few years later, they upgraded my bandwidth so draining the buffer didn’t take perceptible wall-time, and i haven’t had to deal with it since. it’s amazing how simply draining the buffer faster solves all problems.

Thank you, Jonathan! I knew OpenWRT supported SQM but never looked into how to enable it, sort of assumed it was there by default and I just didn’t care enough to dig deeper.

I just got luci-app-sqm running and improved my Waveform grade from B to A and then with a little tuning to A+, in the span of about 6 minutes’ work. Piece of CAKE, indeed!

https://arxiv.org/pdf/1804.07617.pdf details some features in cake that don’t show up on a test like that. My favorite is per host FQ, where someone running a heavy duty speedtest or torrent application on one machine has no effect on someone else doing anything else on another.

If only we could convince another 100m people to burn the 6 minutes you just spent… it would be a better world.

Problem I think people will have is with CAKE and the low-powered hardware in modern routers especially dealing with gigabit service.

This article was a great help. I turned on QoS in my router, tuned it a little bit. It went from a C rating to an A+ rating, but I tuned it for a little more speed and get an A rating. My upload was causing +180ms to my ping. I set my in/out to 85% of my bandwidth, then increased that number until ping was adding about 5ms. Great tool.

That’s an interesting test waveform has, I’ve not seen it before but I’m happy with the results considering I have fixed wireless and my ISP is 90 miles away and I live in the middle of nowhere. I don’t know how many microwave links it goes through but I think it’s about 10.

I do miss my gigabit connection from the city but I don’t miss living there.

https://www.waveform.com/tools/bufferbloat?test-id=55fecc3e-8149-4e52-9f90-e6fd0dc2a907

That test was on my phone over WiFi, would likely be a bit better over LAN.

It looks like the article interchanges 56 kbit and kbyte multiple times, also alternating capitalization. LANs were indeed 10 Mbps (=1.25 MB aka megabytes per second) at the time many people had 56k modems, but those modems weren’t 56 KBps, they were 56 kbps (=7 KB aka kilobytes per second).

I know most of us know the difference but if anyone reads this who wasn’t around when dial-up internet was a thing, it might get confusing if we’re not precise. It was extremely confusing to me as a kid, who thought something was wrong with his 14.4k modem because he was getting 14.4 kbps links when dialling in but only ever saw sub-2 KB/sec download speeds.

I don’t know whether I did that when writing too late at night, or if it got munged during editing. Either way, I’ll give it another pass to fix those.

I might have introduced some errors in there during editing as well. If so, sorry!

How dare you and Jonathan be human. We demand perfection, even if we knew what you meant despite the minor errors.

Accelerant might have helped.

Wingate, modems…. these comments have made me feel old

Biggest threat to these fixes is ‘Net Neutrality’ laws.

Do you trust a city full of god damn clueless arrogant lawyers not to outlaw QoS?

Like LBJ said, people get the government they deserve. He just made sure they got it good and hard.

A challenge with bufferbloat tests is that they are artificial. What the user really cares about is their responsiveness with their actual traffic scenarios. A bufferbloat test generates traffic to create and force bloat. Reduce the sender by a smidgen and bloat goes away (See iperf 2’s -b option) Also, other things besides full or standing queues can create poor responsiveness. In WiFi environments the number of active WiFi devices matters, or the AP to STA densities. So does RF energy from the apartments next door. So does the channel typically assigned by the ISP typically selected to minimize trips to the customer premise which doesn’t necessarily align with the optimal responsiveness settings. So do mesh devices vs things like Corning’s clear track fiber.

A more thorough analysis of responsiveness would go beyond bufferbloat. Yes, a one lane road backs up during commute hours. How is it during non-commute hours? Does it matter to the vehicles using the road for transport only during commute times? Of course not. It matters all the time a vehicle is using it.

We’ve tried to help here with iperf 2’s new bounceback test. This can be run by a raspberry pi or other in the background where it has minimal to no impact on the network capacity. The server can be compiled and run on openwrt (though the maintainer seems to no longer be around.)

Anyway, “your responsiveness will vary” and there seems to be no single part of an end/end network that is always the problem, particularly when tech like WiFi is so dominant for the last meters. Bufferbloat matters but it’s really not the only thing that matters and it might not even be the problem without proper tools that can diagnose with accuracy.

Interesting, I JUST fixed this on my OPNsense setup (PC Engines APU4D2) yesterday. Dramatic difference.

For those intrigued how we tackle this in the SP world I can highly recommend the read below.

https://archive.nanog.org/sites/default/files/wednesday_tutorial_szarecki_packet-buffering.pdf

I am sad to see those slides contain no discussion of how to apply RED to a device that ends up having 100ms of output buffering, in the end. i’ve long hoped that juniper and others would be making available PIE (https://www.rfc-editor.org/rfc/rfc8033.html) or codel (https://www.rfc-editor.org/rfc/rfc8289.html) in these circumstances as they preserve full throughput at only 16ms or 5ms latency, respectively. I’ve figured that further applying fq-pie, fq-codel, or cake was kind of hard in this environment, but those manage to achieve near zero queueing delay for sparse flows also.

RED, at least, seems applicable.

Where to get some SQM software to my Fritzbox 6591?

Sadly, I can’t use OpenWrt now because I’m on a 4G LTE wireless link. I can’t even get into the provided router and pull the SIM card out. It’s a sealed box, even if a FOSS system could hypothetically do something with it. Any suggestions?