Backing up. It’s such a simple thing on paper – making a copy of important files and putting them in a safe place. In reality, for many of us, it’s just another thing on that list of things we really ought to be doing but never quite get around to.

I was firmly in that boat. Then, when disaster struck, I predictably lost greatly. Here’s my story on what I lost, what I managed to hang on to, and how I’d recommend you approach backups starting today.

Best Practices

Industry standards have moved on, but backup evangelists used to swear by the 3-2-1 rule. It’s simple, straightforward, and covers you in the event of a wide range of disasters. It states you should have three copies of your data, two of which are on different devices locally, and one more which lives off-site. This protects you against data loss from a single failed hard drive or computer, as well as covering you in the event your home or business is suddenly on fire, under water, or occupied by enemy belligerents.
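To make the rule concrete, here is a minimal Python sketch that checks a list of copies against the 3-2-1 wording above. The `Copy` class, its field names, and `satisfies_3_2_1` are all hypothetical, made up purely for illustration; this is not any standard tool.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Copy:
    """One copy of the data. Field names are illustrative only."""
    device: str    # e.g. "laptop", "usb-drive", "cloud"
    offsite: bool  # True if the copy lives away from the home or office


def satisfies_3_2_1(copies: List[Copy]) -> bool:
    """Three copies, two on different local devices, one more off-site."""
    local_devices = {c.device for c in copies if not c.offsite}
    has_offsite = any(c.offsite for c in copies)
    return len(copies) >= 3 and len(local_devices) >= 2 and has_offsite
```

Under these assumptions, a laptop plus a USB drive plus a cloud copy passes, while three copies that all sit in the same house do not.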

It will not shock you that my own backup regime was not so robust. Oh, I had many excuses for why it wasn’t. Over the years my work and files had spread across two laptops and two PCs. Important files were scattered across multiple hard drives, lurking in folders across the digital Savannah.

Backing up for me would be no simple matter. I’d have to figure out where everything important was, then find a way to organize it or back up the entire sweaty mess as-is. Just trying to find all that stuff and drag it onto a portable drive would be a pain. Let alone having to do that manually every week!

Plus I’d then also have to make a copy and truck that somewhere off-site on the regular to truly protect what I had. Even worse, I had on the order of 8 terabytes of data built up from years of video production and other creative works. Backing up would be difficult and expensive. Instead, every time I thought about backups, I thought “too hard!” and moved on with my life. I trusted my hard disks, after all, and figured if I’d been fine for 20 years, I’d be fine forever.

Like Tears In Rain

That all came crashing down when some enterprising criminals broke into my house and stole everything of value that I had. Every laptop and PC walked out the door, along with multiple guitars, a prized synthesizer, and just about everything else electronic worth over $50. One small victory was that my television was too big for the thieves to carry, so mercifully, they let me hang on to it.

When my computers left the building, so did the vast majority of my data. YouTube videos, T-shirt designs, robot projects, websites, logos, PCBs… all gone. It was a crushing blow. The worst losses were the various files that made up the tools of my trade. I write over a thousand articles a year, and to do that means having a streamlined workflow. Things like image templates, logos, audio and video stings and other ephemera I use for producing media were all gone, and that was really painful. Similarly, a few film clips and other projects lay unedited, and those shoots are now gone forever.

The loss put a lot in perspective for me. I realized I’d been holding terabytes of data, unwilling to lose any. It was surprising, but the vast majority of data I was keeping was almost meaningless to my day-to-day life. Funnily enough, the next day, I borrowed an old laptop and was able to get to work without too much trouble. I’ve simply had to start recreating my tools as I go.

In the following days, I was glad to realize that not all was lost. I found bits and pieces of data wherever I could. My phones have backed up my photos to the cloud since 2015, so all my photographic memories were preserved. My source files for YouTube videos were all gone, but my finished output still survives on YouTube itself. Thankfully, my darling robot cum autonomous mower had been in pieces, and ignored by the thieves. That meant I could make a new backup of all the code on its SD card, containing the sum total of hundreds of hours of my engineering effort. Having to recreate that code would take me months; finding a copy was truly glorious. Finally, I’d also seen fit to spend some money on cloud backups for my studio computer. That meant my last decade of musical output was similarly protected.

Moving forward, I took this traumatic event as an opportunity for a new start. With virtually no files left, backing up would be easier. I no longer have files scattered across four machines because I no longer have four machines. Nor do I have 8 TB of data to deal with.

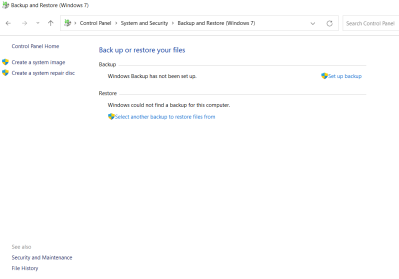

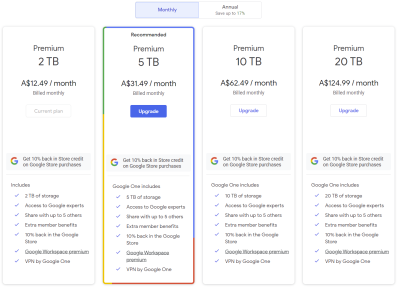

I’m still not sure I’ll go for a full 3-2-1 backup regime, though. Instead, I’ll invest in a cloud backup of my main data. Previously, I’d considered this, but avoided it for reasons of cost. 8 TB would have cost me on the order of $60 a month to secure back when I’d looked into it, and I’d found this too expensive to bear. Now, I can choose a much cheaper backup plan, at least until I start building up a huge cache of video again. Having a second local copy of my data doesn’t seem particularly useful, other than if the cloud backup and my main storage fail on the same day. It could happen, but I feel like I’m still willing to gamble it won’t.

Ultimately, I’m doing okay through a combination of luck and forward planning. I knew my musical works were impossible to recreate, so I’d spent a few bucks backing them up in the cloud. That was smart. As for the design files stored on my robot, I simply got lucky that they weren’t stolen as well. Had I suffered a major house fire instead of a theft, I might have found myself significantly worse off.

Actions To Take

If you’re reading this story and fretting yourself, that’s normal. The best time to start backing up your files was a long time ago, but the second best time is today. Depending on the route you take, it may be expensive to back up your files, or require a lot of mucking around. Cloud services charge a fee for storage, for example, and buying portable drives costs money. Trucking your own backups on- and off-site on the regular also takes a lot of effort.

In reality, the best backup scheme is the one you can actually implement and stick to. There’s no point in setting up a cloud service to back up your data if you have to cancel your subscription in three months because it’s too pricy. Similarly, if you forget to keep running your backups, you put yourself at risk of loss once again. The trick is to manage these risks. If you can’t spend the time to manually back up your stuff, look at using a backup manager or building an automated tool to handle it for you. If you can’t afford to pay for cloud backups, consider stashing some drives at a friend’s house and setting up an automated service to sync your files.

Overall, I learned that while backups are expensive, losing valuable files is expensive too. Valuable being the key word. For me, the things that would be most expensive to recreate, in time or money, were saved largely by luck. These were things like code or design files that I still regularly need and use, and would have to recreate from scratch. Now I realize I need to spend the most effort backing up that data.

As for things like old game saves, old university assignments, and source files for old videos I finished years ago? 99% of that stuff, I would never touch in a decade anyway. It’s a shame to lose it, for sure. But because I couldn’t afford to protect everything I had, I ended up protecting none of what I had. I could have lost so much more.

The upshot is that if you want to protect your data, you need to take action to do so. If you can’t protect it all, focus on protecting what is most valuable to you. Then, find a way to protect it that is feasible for you and that you can readily keep up with. Then, when the worst happens, you’ll bounce back better than I did. Stay safe out there!

I feel terrible about your loss, but thank you for seeing the positive side and sharing your experience and thoughts for us all to learn from.

I had a very VERY close call once with me stupidly leaving a minimally secure VNC server open to the internet “because I just needed it for 5 minutes” and forgetting to lock it up when I was done. Someone noticed my IP replying on VNC’s port, brute forced the password, and then tried to buy a bunch of stuff with my saved credentials (which the bank immediately flagged because they were over $1000 each), but it gives me chills how bad that could have been. Yes, I realize how stupid that was and don’t need to be chastised; lesson learned. Never again.

oooof, never even contemplated such a hack. glad you got out largely unscathed!

I might recommend a tool called Syncthing. It allows you to sync your data between the devices you own (Linux, Windows, Android in my case). It’s free, and there are options for keeping a history of all the changes, from nothing, to a simple X-day trash bin, to a full-blown version history.

I made a small NAS with a RockPro64 and a few drives that are also synced using Syncthing. I’m currently planning to “gift” another NAS to someone so that I would sync a copy of my data there and they would sync theirs to my place.

That’s what I was thinking. But a sync is not a backup. Delete the data erroneously or by hack or virus on your main machine, and your sync is also gone. To my mind the best is a sync, plus automated periodic backups of the sync folder made by your cloud hosting machine.

That gives you automation, seamless common data over multiple platforms, and periodic backups on a dedicated, wireless, hidden device. The second device at your friend’s place syncs the backup folder. That gives you fire and flood protection.
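As a rough illustration of the “sync plus periodic backups” idea, here is a minimal Python sketch that copies a synced folder into a new timestamped directory on each run, so a deletion that propagates through the sync does not touch earlier snapshots. The function name `snapshot`, the folder layout, and the timestamp format are assumptions made for this example; it says nothing about how Syncthing itself stores versions.

```python
import shutil
from datetime import datetime
from pathlib import Path
from typing import Optional


def snapshot(sync_dir: Path, backup_root: Path,
             now: Optional[datetime] = None) -> Path:
    """Copy the whole synced folder into a new timestamped directory.

    Each run produces an independent full copy, so later changes or
    deletions in sync_dir cannot alter older snapshots.
    """
    stamp = (now or datetime.now()).strftime("%Y%m%d-%H%M%S")
    target = backup_root / stamp
    shutil.copytree(sync_dir, target)  # raises if target already exists
    return target
```

Run from a scheduler on the machine that holds the synced folder, this would give periodic restore points. Full copies are wasteful for large folders, so a real setup would likely use a tool with incremental or deduplicated snapshots instead.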

That is pretty much my backup plan. Two (or more) at home and one yearly off-site (or more depending on if critical). Also I consolidate data to my home server. So any data that needs backed up needs to be on that machine. Makes backups much easier than spread across a bunch of machines. Only ‘data’ is backed up as the Linux OS and applications can always be easily restored to any machine when a drive goes bad.

> applications can always be easily restored t

You can end up unpleasantly surprised at the least expected moment. Apps can be reinstalled now, but in, say, 6 months they can be gone from the vendor’s site. Another thing is app settings, so ensure you back those up too. I personally run 3 backup types. I back up all my configs daily. Then I do a complete OS backup (just the system plus a separately generated list of installed packages), and finally I back up my data. The last two run at a lower frequency.

I keep copies of various legal documents, tax filings, and IDs on an encrypted thumb drive that I carry with me. When I need a copy of my diploma, driver’s license, passport, etc., I always have it ready to go, and if my computer craps out, no matter- I still have that thumb drive.

Almost everything else can disappear without me worrying about it.

+1, didn’t consider this. Thanks

And if your thumb drive is lost/stolen, damaged? Hope the thumb drive is backed up occasionally.

neat solution!

I have a similar solution, but instead of an encrypted thumb drive, I have an encrypted disk image, which I tend to copy to as many drives as possible (but not to the cloud).

Please be aware that flash memory is one of the less reliable storage methods. Replace them every 5 years or so.

Carrying around every document to forge your identity down to the smallest detail sounds like the recipe for an absolute nightmare.

I pay google a dollar a month for cloud space.

None of my data is saved in the cloud. That is a form of ransomware in itself. Pay to play. And data is no longer private.

What happens when Google has a failure, locks you behind a much bigger paywall, or implements enterprising AIs to scan your files… arbitrarily declaring your content “objectionable” and freezing your access to it? Trusting one of the nastiest corporations on the planet isn’t what I’d call a “backup solution”.

When and (a big) IF it happens. Until then Google is the best at what they do.

A big if? That’s pretty much in line with Google’s modus operandi: Promise the world while harvesting your data for use in questionable practices, arbitrarily changing the rules based on fluid (and non-public) criteria, buying up (and shuttering) competitors in order to pump their solutions, deliberately manipulating search results for political gain, consolidating power and forcing reliance on their infrastructure… then switching on the paywalls. Par for the course when it comes to companies who call the police for “wellness checks” when whistleblowers come forward.

Do what you want, obviously, but pretending Google is altruistic or “the best at what they do” is pure, unadulterated delusion.

Google locking people permanently out of their data happens all the time. Cloud is fine, but make sure it is not your only copy. Applies to email also.

Yep, that’s why I use Thunderbird. All email is downloaded. Then /home periodically backed up to the home server just in case.

If you decide to keep backups on someone else’s computer it would be wise to encrypt them.

Then I move it to the Microsoft cloud. It’s just snapshots, CAD files and (badly written) source code, they can scan it all they want.

If you’ve got more sensitive stuff then you could probably encrypt it before it ever gets there.

As long as you’re aware of the pros and mostly cons, and it isn’t your only “copy” of your files that is out ‘there’, then go ahead and use the cloud. It just isn’t for me regardless of the data sensitivity, as I would be putting my data (encrypted or not) into someone else’s hands (they can do whatever with it) and in some cases paying them to hold it for me. I don’t pay up, I lose access to the data… or simply the loss of the internet cuts off access. Backhanded ransomware in my mind :) . No, I’ll keep my data local … and safe.

BTW, I feel the same about cell phones. Shooting your photos conveniently to the cloud (say iCloud) and then try to change phones… Can you say vendor lock-in? Unless one saves them locally to a PC/laptop, you’re ‘hooked’ so to speak. Better pay the bill!

I pay Google $12 a month for cloud space and they give me 2TB. It’s not enough for a full-time creative.

Seems pricy. Check out AWS Glacier S3 storage. I pay around £1/TB monthly in the UK. Backup frequency is weekly for all my media, around 3TB so far. Synology have a simple tool for managing this too, so I just drop anything important on my NAS drive and forget about it 👍 Also consider a RAID setup on the NAS – this has saved my bacon twice in 10 years when the drive failed. Not needed the cloud backup so far except for recovering from human error 😂. Also regularly check that your backup is actually working! This is the classic failure mode for IT backups…

As the local “computer guy” growing up, I learned this lesson very early through others. A sizable chunk of issues brought to me were to recover some irreplaceable files from a dead or dying harddrive. Sometimes recovery was possible, but usually not.

Always treat any drive (spinning or solid state) or media (CD, DVD) as if it could fail at any time without warning and take all its data with it. I’ve had all of the above do exactly that at one time or another. My simple advice to everyone was to always keep your data in more than one place. My advice to more savvy types breaks it down more:

The 3-2-1 rule is a great way to protect your stuff.

If your house burns down, you have the remote copy.

If a single drive fails, the local copy is way faster to restore if you have a large amount of data. Though not strictly required if downtime isn’t an issue.

Something not mentioned in the article is ransomware. If your backup is, for example, on a simple network share that you have direct write privileges to, that could be lost at the same time as your local files.

Something else important to mention is that RAID is *not* a backup. RAID protects you from downtime in the event of a drive failure. One power surge will happily take all drives in an array down at the same time, as will the aforementioned ransomware.

RAID is a backup against many forms of failure that are single-drive specific, just not all. Cloud storage doesn’t protect you against ransomware if you use a desktop update app or your sync process is automated.

RAID being a backup against only certain failures means that RAID is not a backup.

Single drive failure? Yes.

Multiple drive failure? Depends.

Power surge? No.

Ransomware? No.

Accidental deletions/overwrites? No.

Filesystem corruption? No.

RAID is not a backup.

Some automated cloud services (Dropbox, for example) let you download previous versions of files. This could help out with many issues even if synced.

Personally, I could never rely on a backup that had to be done manually. I’ll get forgetful or lazy and it will bite me eventually. It’s another thing I’ve seen time and time again from others. Not that it couldn’t work for some, but I know me.

That is why mine is a manual process. The local backup drive is unmounted until I need to do a backup. Of course other backups are to an external drive that again are only connected when I deem a backup is required.

Also the server is not connected to the internet as an extra precaution.

“As the local “computer guy” growing up, I learned this lesson very early through others. A sizable chunk of issues brought to me were to recover some irreplaceable files from a dead or dying harddrive. Sometimes recovery was possible, but usually not.”

IBM Deskstar comes to mind.

https://www.extremetech.com/computing/326292-why-lying-about-storage-products-is-bad-an-ibm-deskstar-story

DeathStars! Jeez, I forgot all about those. Every single one I ever had succumbed to the click of death.

When I was a teenager I used to do backups for big companies professionally, and seeing the fallout of one particularly failed recovery drove home not only the practice of doing backups but occasionally drilling a recovery.

All hard drives fail – I know two IT professionals that have lost personal RAIDS.

I love the 3,2,1 plan, but this is the first I heard of it. (I’m not a fan of personal cloud storage due to cost and the risk of policy changes in the rapidly changing cloud landscape).

I offer my regime for anyone interested.

1. Separate OS and data. I do not keep any projects on my boot drives so they can be backed up and maintained separately

2. All drives with valuable data have a folder named “B” in the root. Everything in the B folder will be mirrored to a separate physical drive nightly. Nothing wasteful is in B, nothing outside of B is protected.

3. All my backup scripts along with a text document of my backup procedures are in a single \B\Backup folder. The document is a useful reminder/checklist for quarterly or annual archives

4. I use Windows Robocopy to do nightly incremental backups from each B folder onto a set of (currently 5TB) drives and the backup logs are captured in the backup folder. This not only gives me hardware redundancy, but provides an accessible file backup if I accidentally delete or damage files in my working directories.

5. Monthly I use Clonezilla to create compressed encrypted images of my OS and data mirror drives on external drives that are kept offsite (at my in-laws house). I keep the latest 3 full backups. (there is a risk during a small window while all the drives are colocated and connected at the same time)

6. I copy and separate a few handpicked copies of the drive images about every 6 months or so, the longer timeline as a weak defense against time triggered malware.
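The nightly incremental mirror in step 4 can be sketched in Python for readers who don’t use Robocopy. This is only a rough approximation under stated assumptions: the function name `mirror_incremental` is made up, “changed” is taken to mean a different size or a newer modification time (not Robocopy’s exact logic), and files deleted from the source are deliberately left in the mirror.

```python
import shutil
from pathlib import Path
from typing import List


def mirror_incremental(src: Path, dst: Path) -> List[Path]:
    """Copy files from src to dst that are missing or look changed.

    A file is skipped when the copy in dst has the same size and is at
    least as new. Files removed from src are NOT removed from dst.
    Returns the relative paths that were copied, which could be logged.
    """
    copied = []
    for path in sorted(src.rglob("*")):
        if not path.is_file():
            continue
        target = dst / path.relative_to(src)
        if target.exists():
            s, t = path.stat(), target.stat()
            if s.st_size == t.st_size and s.st_mtime <= t.st_mtime:
                continue  # looks unchanged, skip it
        target.parent.mkdir(parents=True, exist_ok=True)
        shutil.copy2(path, target)  # copy2 also copies timestamps
        copied.append(path.relative_to(src))
    return copied
```

Leaving deleted files in the mirror is a design choice that matches the “accessible file backup if I accidentally delete” goal, at the cost of the mirror growing over time. On filesystems with coarse timestamps the modification-time comparison may cause files to be copied again unnecessarily.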

Big USB drives are cheap and the cost amortizes over many years

All storage fails.

I knew I’d forget some things…

Windows Robocopy is similar to Linux rsync

I forgot to mention that I use a script (dd|gzip at its core) to do live backups of all my Raspberry Pi’s before monthly backups on my local network to drives.

I use Clonezilla on my Windows, Linux, and dual boot machines.

;-)

I used Clonezilla to make backups. It’s definitely an easy way to restore a Windows machine, or Linux if you have a fairly simple single-drive system. It’s especially good when you think “I should make a backup now” and don’t want to futz around. There are also the VHD tools in Windows to make a live clone. I’m not a fan of it for most of my Linux machines with multiple drives, where rsync and tar or a ZFS clone are more appropriate.

One pitfall to anything not magnetic storage is the endurance with no power – leaking away data just for being turned off. So Big USB drive may not be the backup you think it is.

I guess your CD-R type is also safe from data loss to lack of power, but writeable CD/DVD have issues as well for longer term storage and RW ones are as far as I know NEVER a good choice for an archive.

They said they refresh every half-year or so. That should be more than good enough to keep data from degrading. I like the “break glass in case of emergency” thumb drive, as long as it’s a tertiary or quaternary line of defense.

Surprising how quickly these drives can go dud. I’ve never personally seen one that won’t last 6 months (in fact the few older ones I’ve got kicking around seem to still be good after quite a few years), but I have heard of it happening that fast, and seemingly much more often recently. It’s not a ‘safe’ option, and it’s probably worse on the more modern, denser memory.

Thing is, I don’t expect to go back more than a year at the most. So longevity is not a big deal, unless media degrades in less than a year of course! From what I have read, unpowered HDDs should last at least 8 years or so. This is way longer than needed, as one ‘usually’ only cares about the most ‘current’ backup when going back to restore lost data. My data is not ‘historical’ in nature, in the sense that I would ever need to go back 10-20 years to restore any data… and when we are six feet under, no one is going to care, so looking for, say, 50+ years of data retention is not even in the cards. That said, places like the national archives, or a company, would care, but not the individual who is only on the planet for a relatively short time.

This is a common nihilistic argument that I don’t understand.

I have videos from rooms with my kids growing up. I go back and look at them in their cribs.

Data is powerful, why do you think the biggest tech companies care so much about it? Do you think because you don’t care we shouldn’t either?

The data itself can be ‘old’, old as the hills. But since it is always ‘spinning’ (in my case on my server), each backup gets it all. So when you pull that ‘year’ old backup to restore, you restore it all. That’s how I approach backups. I do NOT ‘archive’ old data and expect to pull it out of storage 10-20, or 100 years from now. It is always spinning. Of course corporations and government have to approach the problem differently as they can’t keep it all spinning, so they archive off to tape for long term storage.

Sorry I didn’t make myself clear on that. With TBs of cheap storage media you can do this. Well at least I can. Not that much data. I’ve only accumulated around 1TB of data which is nothing to be backed up.

I have 2TB of images and video to back up, however 50% of it is garbage that I’ve not had time to filter. The other 50% is treasured memories and irreproducible history. I should probably value that more than I do, and invest the time to curate it properly, but I do find that with the huge range of software approaches to organisation and storage of data, it’s a real pain in the ass. I keep a local copy, a RAID NAS copy, and I planned to invest in a cloud backup too. This reminds me to do something.

There are only two kinds of data storage: storage that has failed, and storage that will fail. Plan accordingly! :-)

Remember that “offsite backup” doesn’t have to be cloud, it just has to be not on your premises. For years I had three 4TB removable drives that held my backups in weekly rotation (so the oldest was no more than 2 weeks out of date); each week I’d take the newest of those drives to my friend’s house where we held band practice and leave it on the shelf next to where I played. Cheap and easy insurance in case of fire, theft, or stupidity (worst case if I needed the most current backup I could just drive over there if the local drives hadn’t gotten more current by then!)

Times have changed, the band broke up, and there’s more data to backup now, but a few portable drives for the onsite, ZFS redundancy for the “oops” factor, and encrypted offsite backups pushed to Backblaze just in case of real disaster lets me sleep at night. Backblaze B2 or Amazon’s Glacier aren’t bad options for offsite storage – they’re relatively cheap to write, it’s the retrieval that can cost you – and if it’s for an emergency you expect will never come, that can be a good tradeoff.

3,2,1 rule of backup:

3 copies

2 different types of media, CDR,DVD,HD,NVME,SSD

1 offline copy – very important for ransomware protection

For example, I have 1 on BDR, 1 on HDD and 1 on SSD; my “offline” copy is the BDR. I also use OneDrive for encrypted backup.

I remember decades ago losing floppy disk content. And it was less about what I lost and more about not remembering exactly what was on there.

It would just nag me.

In those days (mid-late 80s), my father stored his files on both floppies and QIC tapes.

The floppy backup was done by PC Backup (part of Central Point PC Tools 4.x or older), which created a control floppy with a directory overview.

The software was quite intelligent and detected the inserted floppy without an extra key press.

It also had some sort of compression and error correction going on.

The second one was an SCSI streamer using 100MB QIC cassettes.

Software was SyTOS, I believe. Both media were still intact last time we checked.

Probably because it was safely stored away (cardboard boxes with newspaper).

Anyway, all this doesn’t protect from one thing: thieves.

After we moved into another town, a part of the stuff was gone.

The moving helpers kept some of it, apparently, the electricians at work, too..

Thankfully, it was just “things” that got lost, not photos or personal mementos.

I have the bad habit of just not deleting anything ever and it has brought me to a miserable place where I have every hard drive from my laptops of the past decade, plus externals, plus my nas and backup drives in various stages of sync.

I am slowly moving forward on cleaning it all up but I have so many copies of the same data I am honestly considering the drastic approach of mass-deleting anything that is not “family photos” or “personal finances” because otherwise I am looking at dozens of hours reviewing and merging hundreds of directories and purging unnecessary duplicates. That teraterm installer from 2013 can probably be deleted…

I am also in this dangerous no-mans-land of having no real backup strategy and really need to implement something; I am considering just buying a new drive for my desktop computer and pushing everything to that to live on and sync to backblaze and then I can muck around with cleaning it all up into something more realistic… but the odds of me actually cleaning all that up are probably low.

There is probably a support group for people who never delete things, right?

De-duping filesystems help.

Yes – you’re in the same quandary I was. I have a lot less to backup now and it’s much easier. :P

I feel you. I had/have a similar problem with photos: my photos, my wife’s photos, some scanned books, and screenshots which somehow get in between the photos.

One trick I found is to use software which reads the EXIF data and categorizes photos according to it. So, the first level in my hierarchy is camera model. That was incredibly helpful. I could quickly throw away a lot of garbage.

Another important resource for me is software for deleting duplicates. That helped me a lot to go through backups of backups.

Now the hardest part stands in front of me: throwing away all the bad photos. I’m looking for some AI software which could do that. That would be incredibly helpful.

Do an md5sum of every file, that will allow you to sort the duplicates (even files with different filenames but the same data)
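For anyone who would rather script this than pipe `md5sum` output around, here is a small Python sketch of the same idea: hash every file under a folder and group the paths that share a digest. The function names `file_md5` and `find_duplicates` are made up for this example. MD5 is used only because the suggestion above mentions it; it is fine for spotting accidental duplicates but is not a security-grade hash.

```python
import hashlib
from collections import defaultdict
from pathlib import Path
from typing import List


def file_md5(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the MD5 hex digest of a file, read in chunks."""
    digest = hashlib.md5()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def find_duplicates(root: Path) -> List[List[Path]]:
    """Group files under root that have identical contents."""
    by_hash = defaultdict(list)
    for path in sorted(root.rglob("*")):
        if path.is_file():
            by_hash[file_md5(path)].append(path)
    # Keep only digests shared by more than one file.
    return [paths for paths in by_hash.values() if len(paths) > 1]
```

The result is only a list of candidate groups. Deciding which copy to keep is left to the person running it.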

Dude, I am very sorry to hear. And thank you for raising the topic.

I always think about hard drive failure — and have experienced that. But I have never thought much about thieves.

My current strategy is two drives in my main machine, and one is a daily mirror of the main one. Unlikely that both would fail, but weird stuff like a power supply gone wild could kill them both. So a third removable drive comes into play. And every real hacking project is “on the cloud” (i.e. GitHub). How could this be improved? Well, by storing that removable drive off-site (or in my gun safe). Maybe a fourth removable drive that a friend would store for me, but I am doubtful about doing that.

Another thing the pros recommend is testing your backups. I have heard many stories about outfits grabbing their backups after a disaster and finding out that they weren’t what they thought they were.
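One lightweight way to test a file-level backup is to compare checksums between the live data and the copy. The Python sketch below does that with SHA-256; the function names and the `missing:` / `mismatch:` message format are assumptions for illustration. It is a weaker check than a full restore drill, since it only covers backups that are plain file copies you can read directly.

```python
import hashlib
from pathlib import Path
from typing import List


def sha256_of(path: Path) -> str:
    """Return the SHA-256 hex digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_backup(source: Path, backup: Path) -> List[str]:
    """List source files that are missing from, or differ in, the backup."""
    problems = []
    for path in sorted(source.rglob("*")):
        if not path.is_file():
            continue
        rel = path.relative_to(source)
        copy = backup / rel
        if not copy.is_file():
            problems.append(f"missing: {rel}")
        elif sha256_of(copy) != sha256_of(path):
            problems.append(f"mismatch: {rel}")
    return problems
```

An empty list means every current source file has an identical copy in the backup. Files edited since the last backup run will show up as mismatches, so this is best run right after a backup completes.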

Thank you for the kind words!

Yes, testing is absolutely vital. No good finding out your backup’s not recoverable… after your data is gone…

You wouldn’t be thinking of the nation’s air traffic control system’s recent issue, would you?

Another tip, store a backup above the Flood Zone.

Years ago a friend’s basement was flooded when heavy rains overloaded the local sewage plant. She regretted having stored photographs and papers in a lower bin than the Halloween costumes.

I feel obligated to mention mega.nz – after taking much more time than I care to admit researching a cloud backup solution, I found them to be refreshingly hacker friendly. 20GB on the free account, although I’ve paid for the first tier. They don’t assume you’re computer illiterate (unlike the big names), and allow setting as many local / cloud sync pairs as you like. You can delete the default pair if you want (I don’t want to be forced to have a DropBox / OneDrive folder on my PC!). Different sync pairs on your different PCs. Android app so you can get your stuff on the go.

When I messed up and recharged my client’s account instead of my personal one, they responded within the hour and sorted out my mess, even though the sun had set in New Zealand when I emailed them.

PS: I have absolutely no affiliation – just a happy customer since 2015 – and think it’s the right mix of non-pushing-stuff-down-my-throat, and get-out-of-my-face-and-do-what-I -tell-you.

A very painful lesson I first learnt in the days of MS-DOS and floppy disks.

del *.asm is too close to dir *.asm, and one day the inevitable happened.

Oh well, sorry to hear. I can relate to that.. Losing source files is bad. :(

Um, maybe just use “ERASE” as an alternative to “DEL” then.

It’s a lesser known command. I learned about it when reading an old PC-DOS manual.

– I know it’s a bit too late for that, maybe.

I recommend MSP360 (aka Cloudberry) for backup. Very sophisticated backup strategies, including block-level incrementals, encryption where you control the key, bare metal restore, automated recovery from intermittent network outages, and automated data retention times and cleanup. My favorite feature is what I call automated two-level backup, which first backs up to local disk and then backs up the backup to another location or the cloud of your choice. I use AWS Glacier for the second level. Been using it for 6 years … very solid. I test restores at least annually and never had a failure. I don’t get anything from this promotion. At the time I selected MSP360 I evaluated almost every home user backup known to man. No others compared.

Can you provide a pointer to getting started with “cheap” AWS archival options? While I have previously spun up virtual machines on AWS (primarily to run faster hashcat), the whole AWS world is confusing for me. YouTube always seems to be out of date.

I did this via MSP360 backup. I think you may need to use an API to connect directly. Look at Glacier at AWS. I set this up six years ago so the details are lost.

I had a teacher accidentally overwrite a proto-database Apple II program I wrote for him when I was in 9th grade. I started making copies regularly after that. Then I found SCCS in college, then RCS. I looked on “smuggly” when some peer students lost a major project, and had to retype an old version from print outs. Eventually moving on to CVS, SVN, and now git.

I’ve had my files separated into two different categories for years and years already: the stuff I really do not want to lose and the stuff I won’t like losing, but that is not irreplaceable. The first category weighs in at ~5GiB, the latter at 70TiB.

It’s obviously far easier to back up the first category. Those files are stored on cloud storage and are synced automatically between my devices whenever files change (including my phone, since ~5GiB of files is nothing nowadays on a modern phone). The system also maintains 5 previous versions of changed files, so I can easily go back to a previous version should a file get corrupted. I also take offline backups once a week. All in all, those files are reasonably safe for a home user without enterprise-level resources to avail oneself of.

very cool setup!

The basis of my backup is a network drive. This has two benefits: first, all valuable files from the six-seven computers working in my house are backed up in a single place, from which they can be regularly copied to an offline disc (so no ransomware can touch it). The second advantage is that the NAS has limited space, so whoever is in charge of the given computer must manage their data in such a way that only important files are backed up. They are often reminded that they _should_ expect their drives to fail at any given moment and that they _will_ lose every bit of data that is not included in the ‘plan’.

I also make a separate mirror of my system drive, but this is rather for convenience than safety – that way, in case of trouble, I can just swap the drive, download the backups and keep working without reinstalling everything. Or rather, it _was_ convenient: as things stand now, the mirror of the M.2 drive copied onto a regular SSD cannot be booted :(

Anyone know of a good article on curating your decades of poor backups? I fell into the bad habit of duplicating entire directories as revision control and now have tons of duplicates that need to be cleaned up. I had a bad experience with Duplicate File Finder about a decade ago. Before doing a cleanup I practiced on some test directories and thought I had it all figured out. But I still managed to delete the wrong version of a bunch of files…

Other times I’d find that after backing up from one drive to another that my pictures or pdfs would somehow get corrupted… do any of these backup programs actually verify the file is correctly backed up?

tools like Kdiff3 or BeyondCompare are great for identifying what is different between your duplicated directory and between the ‘same’ file as well.
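On the question of whether a copy really matches its source: independent of any particular backup program, one can hash both sides and compare. A minimal sketch in Python, assuming hypothetical helper names (`sha256_of`, `verify_backup`) and two plain directory trees; it reads every file in full, so it is slow on large archives:

```python
import hashlib
from pathlib import Path


def sha256_of(path, chunk_size=1 << 20):
    """Hash a file in chunks so large files do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_backup(source_dir, backup_dir):
    """Return (relative path, problem) pairs for files missing or differing in the backup."""
    source_dir, backup_dir = Path(source_dir), Path(backup_dir)
    problems = []
    for src in sorted(source_dir.rglob("*")):
        if not src.is_file():
            continue
        rel = src.relative_to(source_dir)
        dst = backup_dir / rel
        if not dst.is_file():
            problems.append((str(rel), "missing"))
        elif sha256_of(src) != sha256_of(dst):
            problems.append((str(rel), "differs"))
    return problems
```

An empty result means every source file has a byte-identical counterpart in the backup. It says nothing about files that exist only in the backup.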

See my longer comment above on restic.

Start backing up with restic (to Backblaze B2, and locally to USB).

Then grab “fdupes” and use it to find your duplicates. (If you are on Windows, you can use WSL and install it there.)

restic dedupes internally, so you can start using it before deduping manually, and then dedupe manually to reclaim space. restic has a verify feature that can check a specified percentage of blocks. I have it all configured to run every couple of hours for my working directories. For my historical “library”, I run it manually when I’ve made changes.
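To illustrate the general idea behind duplicate finders, here is a minimal Python sketch. It is not how fdupes or restic are implemented; the function names are hypothetical. It groups files by size first, hashes only the candidates, and only reports duplicates without deleting anything:

```python
import hashlib
from collections import defaultdict
from pathlib import Path


def file_digest(path, chunk_size=1 << 20):
    """SHA-256 of a file, read in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def find_duplicates(root):
    """Return sorted groups of paths under `root` with identical contents."""
    by_size = defaultdict(list)
    for path in Path(root).rglob("*"):
        if path.is_file() and not path.is_symlink():
            by_size[path.stat().st_size].append(path)

    groups = []
    for same_size in by_size.values():
        if len(same_size) < 2:
            continue  # a unique size cannot have a duplicate
        by_hash = defaultdict(list)
        for path in same_size:
            by_hash[file_digest(path)].append(path)
        groups.extend(sorted(paths) for paths in by_hash.values() if len(paths) > 1)
    return sorted(groups)
```

Reporting first and deleting by hand afterwards keeps the mistake described above (removing the wrong version) a deliberate decision rather than an automated one.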

If you are going to work across many computers, setting up a fast local NAS for all your data to sync to when at home (or an internet-facing sync, if some of these computers will spend prolonged periods away from your local network) is a must. It keeps all the important files in a consistent place across machines, and means you naturally end up with backups as each computer syncs and keeps the updated versions of every file and folder it should.

Though likely not every file on the NAS. So you don’t even need big drives in every machine to benefit somewhat, having at least two and sometimes more copies of everything – I assume your music studio machine for instance has no need to archive your writing tools and video stuff, and the roving laptop you write on needs not store all the music or video, while the editing PC probably does want local access to all your old video projects (etc). So while the NAS must have the capacity to hold it all the machines themselves need only enough to sync the data important to their role (or even none at all if they are only ever used at home and can work over the network – keep meaning to set up network booting for various machines here so they don’t need local storage at all).

Several discussions have mentioned the issue of worrying about historical backups. My advice is simple. Forget about them. You will probably never need them and if you do, you can dig through them then. Just put them on a shelf or cabinet, label them, and get on with life. Drives are cheap, your time is precious.

What is worth worrying about is what you are doing now and getting some kind of organization going. If you find you need a certain historical item, then dig it up and put it in the proper place in the wonderful new scheme you have developed.

“Several discussions have mentioned the issue of worrying about historical backups. My advice is simple. Forget about them. ”

Good thing someone didn’t

https://www.vice.com/en/article/pky7km/usenet-archive-utzoo-online

Hmmm, I would like to find if there are any Larry Lippmann posts there.

I found some under sci.electronics,

But his last name was Lippman

Sweet.

I thought the early tasteless posts were gone forever. Trolling from a simpler age.

May I make an advertisement for SyncThing? Not per definition a backup program, but a nice tool to multiply folders at any location. From the cabin on my boat to the soundproof shed deep down inside to the land home base, depending on whether there is a net or not.

Can recommend syncthing, or resilio, both of which run on Windows/Linux. Both are a bit finicky in terms of setup, but do the job of keeping multiple systems “in-sync” as per their names. Again, they are not “backup” programs per se, as they copy 1:1, but use deduplication on the copy side. Versioning is also done, but copies are “in the clear”.

Personally, I use rsync, and use “duplicati” for off-site cloud backup. 1TB for 6 accounts on OneDrive for $109 is a damn good price; you can barely buy a drive for that. Backups are encrypted of course, and filenames are even obfuscated.

Jesus saves. Everyone else has to do backups.

Jesus saves, but Buddha makes incremental backups.

I feel for you.

For the last many years I have had a backup regimen based on rsync, where I use the --link-dest option to make what are basically incremental backups, but where each backup is a separate, complete directory structure: unchanged files are hard-linked on the server and only changed or new files are copied over.

This offers protection from my own stupidity, like being able to go back in time and find an earlier (or still existing, had I done over-zealous deletions) version of a given file, as well as from malicious destruction of my files, as in ransomware and its ilk. Backing up mangled files will only affect the current backup and will keep previous backups intact.
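The hard-link trick can be illustrated in a few lines of Python. This is a sketch of the concept only, not of rsync itself: the `snapshot` function is hypothetical, it compares file contents (rsync by default compares size and modification time), and it ignores symlinks, ownership and deletions:

```python
import filecmp
import os
import shutil
from pathlib import Path


def snapshot(source, dest, previous=None):
    """Copy `source` into `dest`, hard-linking files unchanged since `previous`."""
    source, dest = Path(source), Path(dest)
    dest.mkdir(parents=True, exist_ok=True)
    for src in sorted(source.rglob("*")):
        rel = src.relative_to(source)
        target = dest / rel
        if src.is_dir():
            target.mkdir(parents=True, exist_ok=True)
            continue
        target.parent.mkdir(parents=True, exist_ok=True)
        old = Path(previous) / rel if previous is not None else None
        if old is not None and old.is_file() and filecmp.cmp(src, old, shallow=False):
            os.link(old, target)       # unchanged: share the data with the old snapshot
        else:
            shutil.copy2(src, target)  # new or changed: store a fresh copy
```

Each snapshot directory looks complete on its own, yet unchanged files occupy disk space only once, and deleting an old snapshot does not remove data that a newer one still links to.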

At regular intervals cron-jobs consolidate previous backups so I do not have a gazillion backups I will never look at.

My brother, a friend and I have a pact where we use each other’s NAS systems for this purpose, so I do backups to my own NAS, my brother’s and my friend’s; my brother to mine and my friend’s; my friend to mine and my brother’s.

In an emergency I could have either of them copy my data to a separate hard disk and have that sent by courier, but in normal day-to-day use it has only been necessary to copy the occasional file in over the network.

We each have had to invest in larger drives than otherwise but since none of us are doing anything in the music or video industry, it is not too bad, really and I have still plenty of space left on my 14TB NAS.

So now each of us has three backups scattered over three locations in two countries.

What do you do when you have 35TB of local data? 3:2:1? Really?

su ; cd / ; rm -rf .

B^)

Yep. 3:2:1 . Doesn’t matter how much data you manage. You need to spend the time and money to make it safe and backed up.

if you care that is!

My backup is a nextcloud server on a machine I pay for to be up 24/7. That is synced in full to several computers, simple and transparent. No relying on Google or other questionable third parties.

If you can’t smash your data with a hammer then it’s not your data. For this reason, I strongly distrust cloud storage providers–not because I think they’ll be snooping on my data (though that is a concern) but because I think they’ll be lazy with security and eventually expose it to the internet or lose it in a breach.

For a non-cloud solution, this is what I do (which is a solution for nerds, not your grandmother):

A 20TB TrueNAS server which serves as a network file server for large files like Raspberry Pi Images, media files, Windows backups, etc.

It also runs an instance of NextCloud/OwnCloud.

Each of my computers and phones has a client for Nextcloud and files are backed up/shared using that system. The phones use the NextCloud “instant upload” feature to save pictures and videos taken with the phones.

OpenVPN allows remote connectivity. Since I also run pihole at home, I’m usually connected to VPN while away because ewww…ads?

At my mother’s house is a computer with 6TB worth of storage. The storage is encrypted at rest via a certificate. Once a day, it connects to my VPN, decrypts the storage using a keyfile that is somewhere else, and syncs certain file shares that contain the data I would absolutely hate to lose.

It runs the NextCloud client, too, so all the files in that system get copied over as well. Then it disconnects from the VPN and reboots, waiting for the next daily sync.

If someone steals that computer, I can revoke the VPN certificate and they’ll never be able to get the data. If my house burns down, I can still get that backed up data.

I think it was Bruce Schneier that said it’s very easy to design a system that you yourself cannot defeat, but very difficult to design a system that other people cannot defeat.

What am I not thinking of?

Probably depends on availability of your VPN/Certificate Management.

Because if your house catches fire, you need a guarantee that you have a backup of the key file, or a long expiration period for it, to restore the data at your mother’s house.

But I really liked the setup.

Backup of personal projects is sort of also related to our legacy when we die. The best gift we can give those who come after is to find the time to be very selective about what we think is our most valuable work, and to separate it out so it is findable. Preserve that legacy well in multiple ways, but make sure it is not more than a few hundred things at most. The public cloud is great for this, whether YouTube, Instagram, GitHub, etc. As in the story, the YouTube edits are more useful than the raw footage. And for things like photographs, print the most important ones, hang them on your wall, give them away to friends and family.

Lewin, very sorry. When did this happen? Any leads on the culprits?

Benchoff is a prime suspect.

B^)

I don’t think Brian holds me in such enmity!

Alas, no. Now contributing to the cloud-based surveillance state in an attempt to prevent further occurrences.

I’ve been broken into twice in the last decade. The thieves never got anything of much value, and I *do* have a video surveillance system. After each break-in I took my footage of each crime on USB thumb drives to the police (I’m in .au too). Sure, the IR night footage wasn’t great, but you could make out enough for a decent description (white male, dark hair, tattoo on right forearm etc) and the cameras clearly showed them doing illegal stuff – one time I had a video of the guy actually picking up my belongings, the other time I had a clear video of him climbing my perimeter fence onto my property.

The only outcome is that now I am also down two thumb drives, because not only did the local constabulary do nothing to catch the thieves, they also never gave back my USBs.

So in my anecdotal experience, video surveillance is more trouble than it’s worth.

I heard about the 3,2,1 method on ‘The Tech Guy’ with Leo Laporte. Been using FreeFileSync for both Windows and Linux for backup/versioning and to verify backups made with other apps. The value of having a physical backup hit home when my Synology DS1515 failed and the data was unreachable. I did have an older Synology with a full backup, but I am dumping the Synology platform. Really upset that the DS1515 had a known failure mode and that I would need to buy a new Synology replacement. Now running two TrueNAS Scale NAS at home on old PCs with one full HDD backup offsite.

Seems like a few commercial NAS providers have had issues, QNAP and Synology for example. Any comments as to more or less reliable prosumer NAS? I’m not interested in homebrewing a Raspberry Pi NAS or utilizing an old computer (I have a basement with quite a few old computers that are importantly collecting dust for me).

Honestly, home-brewing a NAS can be a lot of fun and a good learning experience, and it’s a good use for older but functional gear. A hefty power supply and plenty of bays for drives would be the most important thing – dump a Linux build onto it and look into ZFS, it’s flexible enough to build an array that can withstand multiple drive failures, it’s easy to replicate to other storage, and with snapshotting you can even give yourself some insurance against ransomware as long as the NAS itself doesn’t get compromised (and if it does, that’s why you kept offline backups too, right?)

ZFS snapshots can make for very fast incremental backups, and snapshot cost is infinitesimal, so it’s very handy for periodically bringing offline storage up-to-date.

My current server has more horsepower (I use it for graphics processing and a few VMs in addition to file storage and backup); but basically it’s got a set of shared directories to receive backups from other systems in the house, automatic ZFS snapshots periodically to give “recover to here” opportunities and protect against accidental deletions, and about once a week I’ll refresh the offline backups. The snapshots also give a good indicator of “how much has changed” – too much in a short time can be one indicator of ransomware at work.
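The "how much has changed" signal can be approximated without ZFS by comparing two file manifests. A minimal sketch in Python, assuming each manifest maps a path to a content fingerprint; the function names and the 50% threshold are illustrative assumptions, not values from any tool:

```python
def change_ratio(previous, current):
    """Fraction of previously known files that changed or vanished.

    Both arguments map a file path to a content hash (or any fingerprint).
    """
    if not previous:
        return 0.0
    changed = sum(1 for path, digest in previous.items()
                  if current.get(path) != digest)
    return changed / len(previous)


def looks_suspicious(previous, current, threshold=0.5):
    """Flag a snapshot pair when more than `threshold` of the files changed."""
    return change_ratio(previous, current) > threshold
```

A high ratio is only a hint: a large photo import or a bulk rename would trigger it too, so it is better used to prompt a look than to take automatic action.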

“(I have a basement with quite a few old computers that are importantly collecting dust for me).”

(Chuckle!)

ZFS is great. I use 4 SSDs on an Asus Hyper m.2 card on my main machine for redundancy and speed, and I have another array made from 4 2.5″ hard drives in an older machine that I now use only for backups. Every few weeks I power it on, and run a script that makes snapshots and pushes them to the backup machine. So all the data could be online instantly without restoring from backup first, if necessary. I also use syncthing to sync current projects among several machines. Offsite is easy that way; but as others have pointed out, unless you get syncthing to make its own backups, you could accidentally delete or make regrettable modifications to files, which would get replicated in short order.

Yeah probably that offsite backup is a good idea; I should also sync the zfs snapshots to an external large single drive, assuming that’s possible. I did burn a BluRay m-disc with some highest-priority stuff, but didn’t find a suitable place to lock it up offsite, and I probably won’t get around to burning more of those very often.

I’m the family geek and work on all our computers. I’m mostly retired, but I still have a few small business clients I support. I preached and preached to everyone for years about backups but no-one listened. I finally gave up and just started backing up everyone else’s computers myself once a month, in some cases paying for the backup drives myself. Good thing too: it has saved the butts of family members on several occasions, although they failed to understand or appreciate just how much trouble I saved them. I suppose a good deed is its own reward. So be a hero even if it is unrecognized and back up granny’s PC.

I’ve got back-ups of back-ups of images, etc, etc.

If you’re serious about backing up semi-properly, then get a USB-HD adapter, a nice big disk, and a blu-ray burner.

Copy all your data, or a set of drives to the big disk.

Get an app that will find identical files (size, date, etc) and let it delete all the duplicates.

Move all of that to a folder on the big disk, then rinse and repeat through your remaining storage.

Spend hours classifying and making folder structures if you want.

Then start burning to your BR using good media and a good forensic quality app that will do a bit check after burn.

Or something like that.

You just inspired me to do that though I’ve had most of the bits and bobs laying around for the last year or two. Only thing is, as I’ve started to go through my copies I’ve found that 90% is actually stuff I don’t care about anymore.

…”C-beams glitter in the dark near the Tannhäuser Gate.”

All is lost…

Huh?

“Time to die…”

My main PC is a Dell that came with Windows 7.

After boot it would prompt me to put a recordable DVD in for backup/recovery.

After the next time I booted, it asked the same thing. I would put in the disk from the previous session, and it wouldn’t recognize/read it. After a number of attempts and trying other recordable DVDs, all with the same (lack of) results, I gave up on it.

Now it defaults to boot Linux, and I store backup files on an auxiliary drive.

The DVD drive works fine otherwise.

Yeah, the open ended disc finalization was ridiculous. Won’t miss old storage formats like discs, tapes, weird parallel port drives, incremental blobs and such.

Sorry for your loss. The value of the 2nd onsite backup is that if you have a drive crash or a laptop stolen, it can take days or even weeks to do a full restore from cloud, depending on the service.

Very good point.

I am working on a publicly hosted cloud backup option. Check out collectivefs.com

Decentralized file storage like IPFS without the crypto.

“without the crypto”? Looks like you are encrypting files, whereas IPFS doesn’t encrypt by default. If you meant cryptocurrency, it’s only FileCoin that has it, not IPFS. Also, I question something in your readme: IPFS doesn’t have version control built-in AFAIK. But you can store a git repo if you like. (something I need to play with more) Without a git repo, if you pin the same file tree twice, with changes to some files, then unchanged blocks, files and subtrees will be implicitly shared between those pins, but otherwise they look like two independent trees. Did I miss something?

This reminds me of an experiment I tried a couple of months ago, which I just pushed: https://sr.ht/~ecloud/litd/ I don’t really use it for backups yet, but it’s an experiment to use extended attributes to tag files and directories to indicate what to do with them; so far I can ask it to add files to IPFS. The way I see it, IPFS isn’t much of a filesystem yet: it’s too static (re-publishing changes on IPFS+IPNS is a manual process and too slow); storage is only on top of an existing filesystem (and yet finding your files again is hard, in spite of that); you can’t put all your pins into one big file tree; it doesn’t support metadata well enough (no xattrs, nothing that could be pressed into service as a resource fork, unless you want to do something ad-hoc outside the kubo daemon itself); and the mechanisms for mounting are terribly incomplete. But with this daemon I can keep files organized in the usual way on my usual filesystem, and just use IPFS for sharing and replication, without copying into separate IPFS-managed storage locally. So maybe it could go towards being like syncthing eventually. But eventually it should be the one listener that notices changes in files and does everything necessary when that happens (total-filesystem monitoring is expensive, so there should be exactly one daemon on my system doing that). So ideally it would replace locate, and kick off some full-text-search thingy when files change (beagle, strigi and such – I don’t like any of them so far on Linux, but occasionally miss having something transparent like spotlight on macOS. One time I was using swish++ for that: it was really not bad.) And I was thinking of adding a backup-priority xattr. That blu-ray experiment got me thinking: if my goal is to have one disc that holds everything I want to last no matter what (data that will hopefully even outlive me), I have to keep track of which files are sufficiently high-priority, where they are, and how much it adds up to. 
But what should be backed up or replicated to other places, given that there are differences in availability, total size and speed of different storage devices and services? So maybe just use a numeric priority, or some tags for different purposes (work projects, spare-time projects, notes and stuff that I want even on my phone, reference docs, private media, publicly shared media, blog and social posts and other published material). The way that I use syncthing, I need a lot of separate shares for different directories that go to different subsets of machines; I haven’t figured out yet if the tagging-based approach would really work better. And if IPFS is for sharing and replication, then why does syncthing have to do that work in its own way? I want to leverage IPFS to fulfill its promise instead.
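The "one disc that holds everything important" idea boils down to picking files by priority until the capacity is used up. A minimal Python sketch, assuming a hypothetical numeric priority per file (higher means more important) rather than any real xattr layout; the greedy choice is simple but not guaranteed to pack the disc optimally:

```python
def select_for_disc(files, capacity_bytes):
    """Greedily pick files by descending priority until the disc is full.

    `files` is a list of (path, priority, size_bytes) tuples. Among files
    of equal priority, smaller ones are tried first.
    """
    chosen, used = [], 0
    for path, priority, size in sorted(files, key=lambda f: (-f[1], f[2])):
        if used + size <= capacity_bytes:
            chosen.append(path)
            used += size
    return chosen, used
```

The returned total answers the "how much it adds up to" question directly, and files that did not fit are the candidates for a second disc or a lower-priority tier.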

I suspect IPFS could be built as a layer on top of venti, the immutable key-value store on Plan9. The fact that it’s not doing a good job of being a filesystem so far really highlights the value of layering multiple tools and daemons that each do something unique, self-contained and valuable, rather than being so ambitious as to say they want to build a universal filesystem in one daemon. Key-value store on a bare partition on the bottom; then a layer that builds the one big system file tree on top of that; and sharing is another layer, another daemon. Monitoring changes and triggering reactions is another. All of that in userspace, not in the kernel: that’s the way to go. ZFS works great, but it’s also rather a lot of functionality in one big chunk of kernel code. There is presumably a merkle tree underneath, but it’s not exposed and not standardized. I was thinking: why do IPFS and ZFS have to do the same work twice? They both need to generate a hash for every block, file and subtree in the filesystem. Maybe the hash that the key-value store generates could be the canonical one for that block; then the filesystem daemon would combine those into a merkle tree, thus generating the canonical hash for each file and subtree; and the IPFS daemon would generate CIDs from those, in a reversible way. But getting such a clean architecture is system engineering: Linux doesn’t have quite the right architecture for it yet.

But I have too many projects. ;-) Most of us are building on top of what’s available, not so willing to work on the lowest layers. The lower layers are daunting and time-consuming. But all the top-layer projects seem to be more ephemeral than the low-level ones; so which is a better use of time?

I was in a similar situation around a decade ago: something happened to the data partitions on my single HDD, leaving me with nothing. I managed to get the majority of it back with data recovery tools as the hardware was OK, but it scared me about losing things in the future, and some things I did genuinely lose as they were non-recoverable.

I now run an SSD for my OS/apps/games etc and a separate larger SSD for my “documents”. Daily backups to my NAS are run using “Backup4All” as a straight data copy, so at the very least I have a live 24 hr backup in case I lose a drive on my PC or I accidentally delete a file.

I also run a full disk image every 4 weeks using “Macrium Reflect Free” to my NAS so that I have a full OS and Documents archive. These archives are stored for 3 months before being deleted to make space for more.
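A retention rule like "keep for 3 months, then delete" is easy to express in code. A minimal Python sketch, assuming a hypothetical mapping of archive names to creation times and treating 3 months as 90 days; it only decides what to delete and leaves the actual deletion to the caller:

```python
from datetime import datetime, timedelta


def split_by_retention(archives, now, keep_days=90):
    """Split {name: created_at} into (names to keep, names to delete)."""
    cutoff = now - timedelta(days=keep_days)
    keep = sorted(name for name, created in archives.items() if created >= cutoff)
    delete = sorted(name for name, created in archives.items() if created < cutoff)
    return keep, delete
```

Passing `now` in explicitly, rather than calling `datetime.now()` inside, makes the rule easy to test against known dates.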

These two steps achieve the 3-2 parts of the 3-2-1 backup scheme.

I am also in the process of setting up a “Storage Box” from Hetzner; it’s a € 12.97/mo server with 5TB of space that you can access in any number of ways. I will set up jobs on the NAS to automatically copy my documents backup to this storage box once per week so I can have the one off-site copy there too.
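Whether a setup like this meets 3-2-1 can be checked mechanically. A minimal Python sketch, using the rule as the article states it (three copies, two different local devices, one off-site); the `Copy` type and device labels are illustrative assumptions:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Copy:
    device: str    # e.g. "pc-ssd", "nas", "storage-box"
    offsite: bool  # True if the copy lives away from home


def check_321(copies):
    """Report which parts of the 3-2-1 rule a set of copies satisfies."""
    local_devices = {c.device for c in copies if not c.offsite}
    return {
        "three_copies": len(copies) >= 3,
        "two_local_devices": len(local_devices) >= 2,
        "one_offsite": any(c.offsite for c in copies),
    }
```

With the live data on the PC plus the daily copy and the disk image on the NAS, the first two checks pass; adding the off-site storage box satisfies the third.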

All of this is set up with automation and reporting so I never have to think about it. I just get flags when things do fail, which is nice, and it means I can never miss running a backup. I have 24 hrs of ‘live’ data accessible at a moment’s notice, 3 months’ worth by simply opening an encrypted file, and probably 6 months’ worth of offsite archives on the Hetzner storage box.

All in, it’s saved me a couple of times when I accidentally overwrote or deleted files. It’s not expensive: the NAS cost me around £400 to build (TrueNAS) and the Hetzner box is € 12.97/mo, well worth it for peace of mind.

I am a data hoarder, and the cost would likely be lower if you don’t feel the need to back up 10+ years’ worth of code, movies, music etc. and just back up what’s actually irreplaceable. But if you have the capacity, just back it all up; even replacing stuff that’s replaceable is a pain.

Been there, and am still sort of there.

I have a /mnt/oldhomes directory, in which I collected all my erstwhile backup copies of …. everything….

It’s been a mess, and I occasionally get in there and clean things out or remove dupes.

1. Look at restic. IMO it’s MUCH better than rsync, or syncthing, or lots of others. Encrypted, incremental, and de-duplicating. So even if you have lots of copies of stuff, it’ll only save it one time.

2. Backup remotely to Backblaze B2, and locally to USB drive. Backblaze is cheap, and reliable. (There are a few cheaper, but real reviews are sketchy.)

3. Once you are using restic for backups…. If you are in the Linux/macOS/Windows WSL spaces, look into fdupes. It can find dupes, and then you remove the dupes except for the one you want to keep. Start this AFTER backups, because your data is already de-duped in the backup, and if you make a mistake you have the backup.

4. Actually question stuff you need to keep. One thing I gave up on was my old MythTV archives. Close to a terabyte of old TV shows. At this point I accepted that if I haven’t watched a recording of a show in 10 years, I probably don’t really care.

12.01am nightly: incremental. Saturday, weekly: freshly archived. – Automated, idiot-proof.

Apologies for the ambiguity – yes, I meant cryptocurrency. I am using Reed-Solomon encoding for redundancy and symmetric keys for encryption.

I understand IPFS/Filecoin is separate, but what motivation does a node have to handle others’ data on the network besides Filecoin or altruism?

With collectivefs, the motivation for hosting others’ files on your infrastructure is simply that you want them to host yours. This has a similar game-theoretic basis to BitTorrent, and is a big reason why BitTorrent has outlasted so many other file sharing systems.

Then there is the pinning mechanism, which is a centralization of sorts and prioritizes some data over others – this makes the system more unbalanced and is a huge flaw from my perspective.

With collectivefs, no data is prioritized over any other data – this is one reason why Internet Protocol is so successful: packets are just packets and not prioritized. If you want more reliability you can have that through the Reed-Solomon function, where you give up more disk space in exchange for redundancy.
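The space-for-redundancy trade can be put in numbers. With a Reed-Solomon style erasure code that splits data into k data shards and adds m parity shards, any m shards can be lost and the data still rebuilt, at a storage cost of (k + m) / k. A small Python sketch of that arithmetic; the shard counts are illustrative and not collectivefs’s actual parameters:

```python
def erasure_tradeoff(data_shards, parity_shards):
    """Storage cost and loss tolerance for a (data + parity) erasure code."""
    if data_shards < 1 or parity_shards < 0:
        raise ValueError("need at least one data shard and non-negative parity")
    total = data_shards + parity_shards
    return {
        "storage_factor": total / data_shards,  # bytes stored per byte of data
        "tolerated_losses": parity_shards,      # any this many shards may vanish
    }
```

For comparison, keeping three full copies tolerates two losses at a storage factor of 3, whereas 4 data shards plus 2 parity shards tolerate the same two losses at a factor of 1.5.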

On the technical side, I am trying to use FUSE based file systems to add the collectivefs features. It needs to be as easy as pasting a file into a folder.

I really like your idea about ZFS already building the tree, so I could leverage that.

My Google Chat, if you want to talk further, is: physiphile at gmail

I have about 6 external drives with backups, and one NAS with backups.

All in a nice stack on my desk at home. :P

I should know better. In around 1993 I bought a new harddisk (80MB!!!) for my computer, transferred all the data, wiped my old harddisk (20MB), and gave my old harddisk to a fellow student.

12 months later my new harddisk crashed and I had lost everything. :'(

Luckily I did back up all my school work and source files to floppies, so I didn’t lose anything important, except my money. The harddisk was a Kalok 80MB, which was quite a failure: they all crashed after a while. And of course Kalok was already bankrupt when my harddisk crashed and I lost my money (600 Dutch guilders at the time, I had worked quite hard in a summer job for that money :'( ).

Wow, so many comments on how to back up right. I remember my Mum, who taught, said: “Image first, addendum second and archive third” (my mum’s COBOL saying); but these sayings are based on tech from the paper tape era :-)

Not to needle you, but did you have a security system installed and active BEFORE the crime? And if not do you now have such a thing?

In prep classes for CCNA they make the tiniest mention of physical security for your systems, but I bring up that physical security is one of the best investments you can make for your property and data. If you can’t access it, 80% of your security is complete. If bad actors can physically get to it, no amount of software security is going to keep it from getting stolen, even if they can’t access it.

This hits home, as I’ve had a few data losses or near-misses over the years.

Here’s my setup for personal files (personal documents, photos, projects). We have a few laptops in the house. I would welcome comments or suggestions from experts here.

1. I have a basic OneDrive account. A copy of stuff I work on frequently on different machines gets stored there, for convenience.

2. I don’t save anything of importance on any laptop’s hard drive. Rather, “long term storage” goes on shared drives on my Synology NAS (“H: drive” etc.)

3. The Synology has two 3-TB hard drives that are mirrored (not quite RAID but similar effect).

4. Every night, the Synology incrementally backs up the contents of its HDs to a Backblaze B2 account. I end up paying about $6/mo for this.

Does this seem reasonable?
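One rough way to sanity-check a setup like this against the 3-2-1 rule from the article is sketched below. It is only a sketch under some assumptions: the mirrored pair in the NAS is counted as a single copy (both disks sit in one box), OneDrive is left out because it only holds a subset of the files, and the `Copy` class, the device names, and the extra USB drive are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Copy:
    """One independent copy of the data (hypothetical helper type)."""
    device: str    # label for the device or service holding the copy
    offsite: bool  # True if the copy lives away from the house


def meets_3_2_1(copies):
    """Three copies in total, two local devices, at least one off-site."""
    local_devices = {c.device for c in copies if not c.offsite}
    has_offsite = any(c.offsite for c in copies)
    return len(copies) >= 3 and len(local_devices) >= 2 and has_offsite


# Assumption: the mirrored NAS counts as ONE copy, B2 is the off-site copy.
current_setup = [
    Copy("synology_nas", offsite=False),
    Copy("backblaze_b2", offsite=True),
]

# Hypothetical addition: a second local device, e.g. an external USB drive.
with_external_drive = current_setup + [Copy("usb_drive", offsite=False)]

print("current setup meets 3-2-1:", meets_3_2_1(current_setup))
print("with extra local drive:   ", meets_3_2_1(with_external_drive))
```

Counted this way, the NAS plus B2 gives two copies with one off-site, so a second local device would be the missing piece. Whether the mirror should count as one copy or two is a judgment call.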

What is a “my darling robot cum autonomous mower”? It sounds like weird suburban kink.

Takes all kinds.

What is a mower but a sheep? Not Baaaad. Daaaaady.

What was that terrible preachy movie about the gay shepherds? They called them cowboys because everybody would assume gay shepherds just preferred rams, male ones anyhow.

“Broke Butt Mounting”

It’s a common phrasing: A cum B

“This is my bedroom-cum-study.”

I built a robot that I’m now turning into an autonomous mower.

Everyone has a story or some advice on what you “should” do – but it’s actually very simple.

Decide how important your data is, and go from there; there is no such thing as “too expensive” or “too much work” when it comes to backups, only “my data is not important enough to pay that much/take that much of my time”, or “the loss of this would impact me to the point that I want to be serious about my backups.”

I back up some personal stuff using a couple of old consumer-grade external drives that are chucked into a drawer. I back up some work stuff to several different offsite providers who operate regular swap schedules (with drives and tapes couriered by armed guards, no less), to multiple cloud services, and, get this, to source code printouts stored with schematic printouts of the hardware.

Both my personal and work data are backed up in a way that matches their importance.

Yep. I have about 20 TB of data on my NAS. That gets backed up weekly to a set of external drives that generally stay on-site. Then I have a padded case full of drives that everything gets backed up to monthly and kept off-site about 30-ish miles away. All of those are encrypted ZFS filesystems. About the only thing that could take it all out is an asteroid or nuke, in which case I probably have more than data to worry about. And most of the time while the monthly backup is happening, I move the weekly backup drives out to the car so hopefully they won’t all get taken out at once while in close proximity. While I like the idea of using something like Backblaze, $125 a month will buy a LOT of extra spinning rust drives over the period of a year.
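To put the $125-a-month comparison in rough numbers, here is a small sketch. The monthly figure is the one quoted above; the per-drive price is a made-up placeholder, not a real quote, so plug in whatever drives actually cost you.

```python
def annual_cloud_cost(monthly_usd):
    """Yearly spend for a flat monthly cloud backup bill."""
    return monthly_usd * 12


def drives_affordable(budget_usd, price_per_drive_usd):
    """Whole drives that fit in the budget (no partial drives)."""
    return int(budget_usd // price_per_drive_usd)


MONTHLY_CLOUD_USD = 125.0        # figure quoted in the comment above
ASSUMED_DRIVE_PRICE_USD = 250.0  # hypothetical price per drive

yearly_usd = annual_cloud_cost(MONTHLY_CLOUD_USD)
drive_count = drives_affordable(yearly_usd, ASSUMED_DRIVE_PRICE_USD)

print(f"Cloud backup per year: ${yearly_usd:.0f}")
print(f"Drives that buys at ${ASSUMED_DRIVE_PRICE_USD:.0f} each: {drive_count}")
```

This ignores the time spent rotating drives and driving them off-site, which is the part the cloud bill is really paying for.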

After a recent 14-day RAID 5 rebuild, I decided to rework my setup.

Primary NAS: RAID 10, btrfs with hourly snapshots and generous retention policies.

Snapshots replicated to a physically separate RAID 5 NAS (with additional retention policies).

Cloud sync to AWS S3 with a Glacier Deep Archive lifecycle policy: $1/TB/mo (egress is $$$ though).

Scheduled quick/extended SMART tests and data scrubbing to catch drive failures.

Ransomware, accidental deletes/edits: Snapshots.

Flooding / Electrical: backup nas.

Theft / house on fire: cloud.

You can tune volumes for different levels of protection. For example, I have temp and scratch volumes that have less generous snapshot retention and aren’t replicated or synced to the cloud. PCs and Macs are backed up but not replicated or sent to the cloud (theft/accidents being the primary concern).
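The threat-to-layer mapping and per-volume tuning described above could be written down as a small table, sketched here. The `VolumePolicy` class and the volume names are hypothetical, and this is only a way to reason about coverage on paper, not anything a NAS would read.

```python
from dataclasses import dataclass

# Which protection layer answers which threat, as listed above.
THREAT_COVERAGE = {
    "ransomware": "snapshots",
    "accidental delete/edit": "snapshots",
    "flooding": "backup_nas",
    "electrical": "backup_nas",
    "theft": "cloud",
    "house fire": "cloud",
}


@dataclass(frozen=True)
class VolumePolicy:
    """Protection layers enabled for one volume (hypothetical type)."""
    name: str
    snapshots: bool
    backup_nas: bool
    cloud: bool


def uncovered_threats(policy):
    """Threats whose protecting layer is switched off for this volume."""
    return sorted(
        threat
        for threat, layer in THREAT_COVERAGE.items()
        if not getattr(policy, layer)
    )


# Hypothetical volumes: one fully protected, one scratch volume that keeps
# snapshots but is neither replicated nor synced to the cloud.
documents = VolumePolicy("documents", snapshots=True, backup_nas=True, cloud=True)
scratch = VolumePolicy("scratch", snapshots=True, backup_nas=False, cloud=False)

for volume in (documents, scratch):
    print(volume.name, "is exposed to:", uncovered_threats(volume) or "nothing listed")
```

Writing it out like this makes the trade-off on the scratch volume explicit: it survives a bad edit, but not a flood or a break-in.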

Looking at this as a user who started with floppy disks in the 80s, my recommendation (beyond 3-2-1) is:

1: Keep all your data spinning (figuratively, as most of us are probably using solid state now).

2: Back it up with the latest technology (but not the cloud, in my opinion, as it is a form of ransomware in waiting).

My backups started with floppy disks, then tape drives, then CDs, DVDs, Blu-ray, and thumb drives. Now I use external USB 3.0 HDDs, and eventually external SSDs will take over when prices get competitive. The problem is that all these devices have become or will become ‘obsolete’ eventually. But if you keep up with the technology and keep all the data spinning, you’ll always be able to get back to where you were when disaster hits. All those floppies, CDs, DVDs, etc. can be tossed in the round file and be just a faint memory of media that served its purpose for the time and place.

RAID is not a backup. All it does is increase your ‘up-time’ (availability): your server doesn’t have to go down while the dead drive is rebuilt behind the scenes. For home use, I don’t use RAID, as downtime is not an issue. So what if I have to wait a few hours to get access to my server while data is restored to a new replacement drive? Or if the motherboard dies, the power supply goes up in smoke, etc.? Not a big deal, as a backup is available. I.e., use the KISS principle.

Archives are different than backups and this is where you have the longevity headaches. Luckily as a home user I don’t have this problem, but the Library of Congress does.

Nuff said.