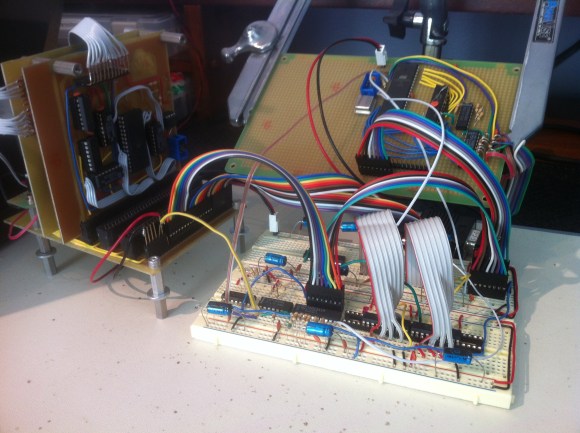

[Quinn Dunki] pulled together many months worth of work by interfacing her GPU with the CPU. This is one of the major points in her Veronica project which aims to build a computer from the ground up.

We’ve seen quite a number of posts from her regarding the AVR-powered GPU. So far the development of that component has been happening separately from the 6502 centered CPU. But putting them together is anything but trivial. The timing issues that were so important to consider when developing the GPU get even hairier when it comes writing to the VRAM from an external component. Her first thought was to share a portion of the external RAM between the CPU and GPU as a way to push rendering commands from one to the other. This proved troublesome both in timing and in the number of pins available on the AVR chip. She ended up using something of a virtual register on the AVR chip that can receive commands from the CPU asynchronously. Timing dictates that these commands be written only during vertical blanking so this virtual register also acts as a status register to let the CPU know when it can send the next command.

Her post is packed with the theory behind the design, timing tests on the oscilloscope, and a rather intimidating schematic. But the most important part is the video showing her success in the end.

impressive as usual

i find that some of the “hacks” here are waaaayyyyy over my head and i must say i often times cannot even fathom what is being done. with that said, i still enjoy this site and find it to be quite enjoyable to see what people are coming up with.

This:

http://hackaday.com/2012/12/25/magnets-keep-the-shower-curtain-from-groping-you/

May be more your speed

LMFAO!

Tred carefully on HaD, last week we had a post on how to misconfigure your /etc/motd.

I suspect these sorts of problems are exactly why mainstream computers went with a ring buffer approach. Although even with something as simple as a ring buffer coming up with a reliable way to lock the buffer when it’s full without blocking the CPU or losing commands would require some clever hardware and software to handle.

Indeed! Shared memory is always tricky. In software, this is equivalent to the the problem of passing data between threads. There are many techniques, including semaphores, double-buffering, mutexes, and other forms of critical section locking.

In software, the solution generally involves restricting the amount of shared memory as much as possible so that controlling access is efficient and bug-free. I basically applied that same logic to this problem, and currently have a shared register, which can be considered a maximially reductionist ring buffer (of size one). I may need to extend it to three or four entries, depending on the speed of my slowest rendering command (which remains TBD until more of the driver is written).

dont gpus use fifo and just dma buffers and objects? I think I heard a driver dev mention bit banging once too..

the reason its such a gray area is because gpu arc is expensive to RE. Other than that it’s a FPGA on PCI in most cases..

Most gpus are definitely not fpgas, they’re massively parallel simd asics. Whilst some tasks can be implemented efficiently by both, fpgas are completely reconfigurable and gpus are merely programmable.

im curious what can be done with just a single one byte command with little or no data for parameters. a command to ‘render sprite number z at screen position x,y’ seems like you need at least 2 or 3 bytes of data in addition to the command to do something like that. perhaps a command stack done in software or something. the command value then would imply the number of bytes that must follow it, and when they all show up the command gets queued or executed.

I don’t know much about bus protocols, but in internetworking a single flag bit can do a great deal. It is more about timing, or position if you will.

If the third bit is a 1 that may signify a complex set of actions to perform, or maybe if it is a zero then the next bits will determine n number of times the previous command should be iterated through.

With this we are talking about whole bytes. 8 whole pieces of information to do stuff. It would be similar to subnetting, making stuff like this possible:

CCCCCCCC

256 different commands

CCCC DDDD

16 different commands with up to 16 different pieces of data

CDDDDDDD

8 different commands with up to 128 different pieces of data

or the reverse ordering having just 2 commands and 254 data

Essentially it comes down to what the protocol dictates. If the communication medium can be trusted then there is no need for error checking, control, or any context whatsoever. Every single bit can mean something complex if both the sender and receiver are talking a language set up for it.

Very well put! A single byte can indeed convey a great deal of information. In any case, it’s helpful to remember the separation between the interface and the protocol. This one byte command buffer is a platform upon which any number of software protocols could be layered. Heck, you can move the Internet over a single bit, with the right protocols layered on top. The width of the pipe limits speed, but not the theoretical capabilities of the interface.