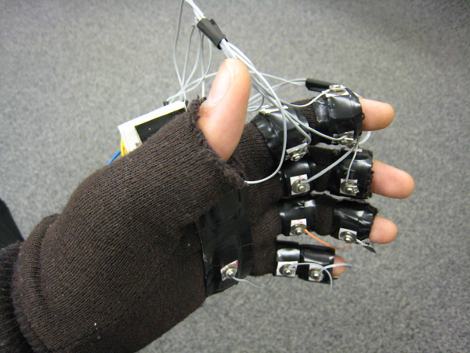

This two-handed glove input setup, by [Sean Chen] and [Evan Levine], is one step closer to achieving that [Tony Stark]-like workstation, i.e., interacting with software in 3D with simple hand gestures. Dubbed Mister Gloves, the system sends accelerometer, push button, and flex sensor data over RF to an MCU, which presents it to the host as a standard USB device. That means no drivers are needed, and a Windows PC recognizes it as a standard keyboard and mouse. Catch a video of Mister Gloves playing Portal after the jump.
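To make the "standard mouse" part concrete, here is a minimal sketch of how tilt readings could be turned into the 3-byte report used by the USB HID boot-protocol mouse (button bits, then signed X and Y deltas). The dead zone, gain, and function names are our own assumptions for illustration; the project's actual firmware, scaling, and report layout may well differ.

```python
import struct

# Hypothetical tuning values, not taken from the project.
DEAD_ZONE = 0.05  # ignore tilts smaller than this (in g) to suppress jitter
GAIN = 40.0       # cursor counts per g of tilt


def tilt_to_delta(tilt_g: float) -> int:
    """Map one accelerometer axis (in g) to a signed 8-bit cursor delta."""
    if abs(tilt_g) < DEAD_ZONE:
        return 0
    delta = int(tilt_g * GAIN)
    # Relative mouse deltas in the boot report are limited to one signed byte.
    return max(-127, min(127, delta))


def mouse_report(ax_g: float, ay_g: float, left: bool, right: bool) -> bytes:
    """Build a 3-byte boot-protocol mouse report: buttons, dx, dy."""
    buttons = (1 if left else 0) | (2 if right else 0)  # bit 0 = left, bit 1 = right
    return struct.pack("<Bbb", buttons, tilt_to_delta(ax_g), tilt_to_delta(ay_g))


if __name__ == "__main__":
    # Hand tilted right and slightly up, left button held.
    print(mouse_report(0.5, -0.25, left=True, right=False).hex())
```

Because the host only ever sees reports like this, it has no idea a glove is on the other end, which is why no custom driver is required.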

While amazing, we’re left wondering whether gesture setups are really viable options, considering one’s arm(s) would surely get tired.

[youtube=http://www.youtube.com/watch?v=guslOmc6bbI&feature=player_embedded]

That’s nice… But it’s not quite handy as a gaming device; it seems a little out of control for FPS games. It could be handy as a robot-hand programming device, though. We just need someone to make a programming interface that works with this thing :)

Is Mister Gloves applicable to the physically challenged?

A standard input setup? Maybe not… But I think this would be great for people who move around a lot, like in development labs, workshops, standing desks, etc… I would love to have that kind of setup for making CATIA models when I’m at my CNC workstation.

If they can develop a glove-less version (using cameras), this would be perfect for kiosk systems with 3-D displays! No more worn out touchscreens, overlays or keypads.

If this were connected with a sign language library and a voice synthesizer, it would be an excellent way for those who otherwise cannot communicate with people who don’t know sign language… Also, paired with video goggles, it would be a cool interface for wearable computers.

Not that I would ever admit to having watched this movie, but this reminds me of Johnny Mnemonic.

[youtube=http://www.youtube.com/watch?v=bL_8Ugp9zI4&hl=en_US&fs=1&]

Anybody else remember this?

I notice we’re getting quite a few posts out of the Cornell ECE 4760 class projects page lately.

“Gorilla Arm” is a potential issue, yes.

I conceive of gestural systems as one option among a multimodal interaction toolset, which is to say that they should be used where most convenient or when most suited to a task, but that you shouldn’t force users into using them extensively or exclusively. For a somewhat analogous case, consider the keyboard and mouse. There are many things which you can do with both devices, and in these cases the user is free to choose according to comfort or just according to wherever their hands happen to be resting. There are other cases where one or the other is particularly suited. For example, when inputting text it is possible to use the mouse, but much more efficient and comfortable to use the keyboard.

Good HCI is not about providing something novel; it is about providing something which improves the comfort or efficiency of the interface, or extends its function. Nor is “the interface” simply your new device – it’s the complex of all physical and cognitive factors involved in your interaction with the machine. This almost always involves the melding of several modes of interaction to form a (semi)coherent whole, and it is here that we may find use for things like gestural input.

That and sign language.

Seems like a great update to the Peregrine, without the need for sketchy DLLs.

I would love to be able to use these on and off with a Droid-esque keyboard and goggles,

though that’s mainly since I hunt and peck.

Pretty neat project… it would be quite interesting to see this updated using conductive thread.

And why not make the left and right hands symmetric ($?)? That would give a lot more options for input customization in games… especially for lefties.