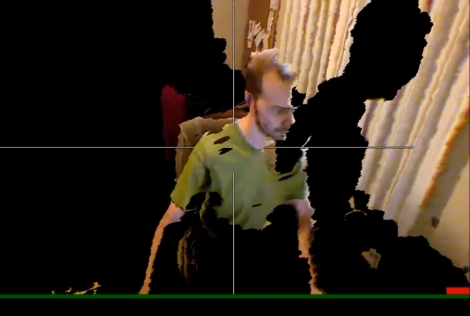

[Oliver Kreylos] is using an Xbox Kinect to render 3D environments from real-time video. In other words, he takes the Kinect's video and depth data and runs them through C++ software he wrote, which places each pixel in a 3D space that can be manipulated as it plays back. In the image above, the Kinect is looking at [Oliver] from his right side, while he has moved the playback viewpoint above and in front of him. Part of his body is missing and there is a black shadow because the camera cannot see those areas from where it sits. This is very similar to the real-time 3D scanning we’ve seen in the past, but the hardware and software combination makes this a snap to reproduce. Get the source code from his page linked at the top, and don’t miss his demo video after the break.

[youtube=http://www.youtube.com/watch?v=7QrnwoO1-8A]

[Thanks Peter]
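To make "placing each pixel in a 3D space" concrete: a depth pixel (u, v) with distance z can be back-projected through a pinhole camera model into a 3D point. This is a minimal sketch, not [Oliver]'s code; the intrinsics (fx, fy, cx, cy) are placeholder values rather than calibrated Kinect parameters, depth is assumed to be already converted to meters, and a depth of 0 is assumed to mean "no reading".

```cpp
#include <cstddef>
#include <vector>

// Pinhole intrinsics. The defaults are illustrative placeholders,
// not calibrated Kinect values.
struct Intrinsics {
    double fx = 580.0, fy = 580.0;  // focal lengths in pixels (assumed)
    double cx = 320.0, cy = 240.0;  // principal point in pixels (assumed)
};

struct Point3 {
    double x, y, z;
};

// Back-project a row-major depth image (meters, 0 = no reading) into
// camera-space points. Pixels without a reading are skipped, which is
// why occluded regions show up as holes when the view is rotated.
std::vector<Point3> depthToPoints(const std::vector<double>& depth,
                                  std::size_t width, std::size_t height,
                                  const Intrinsics& k) {
    std::vector<Point3> points;
    points.reserve(depth.size());
    for (std::size_t v = 0; v < height; ++v) {
        for (std::size_t u = 0; u < width; ++u) {
            const double z = depth[v * width + u];
            if (z <= 0.0) continue;  // no depth measured at this pixel
            points.push_back({(static_cast<double>(u) - k.cx) * z / k.fx,
                              (static_cast<double>(v) - k.cy) * z / k.fy,
                              z});
        }
    }
    return points;
}
```

Viewing the scene from a new angle then amounts to applying a rotation and translation to these points before drawing them; anything the sensor never saw simply has no points, which is the shadow visible in the image.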

I wonder: if you took 3-4 of these, synchronized them, and put one on each wall of a room, could you get a higher-quality environment?

Hmmmm, this would be interesting to see with TWO Kinects. That’d fill in all the blanks, right?

I love it!!!! Can you take 3 of these and point them at the center of the room so as to build a complete 3D image without shadows???

That’s awesome…

this is probably one of the cooler Kinect hacks I’ve seen… I was apprehensive about the device at first, but now I want one just to fool around with.

It’s a nice step in the right direction. I might buy one soon if cool stuff like this comes out. I would not buy it to play stupid Kinect games.

@Michael Bradley – 3x Kinect == Cheap Mocap? I’m looking at getting one for some form of live production visuals.

This is incredible. The Kinect is going to open up a lot of avenues of research.

Really really cool…

Will using more than one Kinect sensor work? My understanding is that it projects a “grid” of IR dots over its field of view and uses them for the measurement. Will intersecting grids confuse the sensors?

I really want to see someone do something with 4 of these, you could do so much…

@Garak – I imagine this could be done by quickly turning the dots on/off and sampling each unit in turn in a continuous cycle.

OK, how long until someone builds an Esper machine out of all this?!

@xeracy, I think so, and this guy did a great job. I am impressed by how much information is available around the corners when he rotates it, i.e. the front of his face when the cam is to the side.

When he rotated it, I had a flashback to The Matrix: the first scene, when the girl is in the air, everything stops, the camera rotates, and she continues. Just imagine, that was done with several still cameras all positioned, etc. With this, just freeze, rotate, and continue!!!

Next stop 3D PORN!

Anywho …

Would interfacing the Kinect with a Wii be HaD-worthy?

I may have a crack at it later this week if so …

@Michael Bradley – and the kicker? IT’S ALL REAL TIME! I really wish I were skilled enough to do this on my own.

Wow, I did not realize the system was that precise. I thought that in situations like this the depth steps would be a foot or more apart.

Judging from the coffee mug and torso shots, however, the depth granularity is much finer! Now I want one.
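For a rough sense of why the steps are so fine at desk distances: in a triangulating depth sensor like the Kinect, depth is inversely proportional to disparity (z = f·b/d), so one disparity step corresponds to a depth step of about Δz ≈ z²·Δd / (f·b), which grows with the square of the distance. The sketch below evaluates that formula. The focal length (about 580 px), baseline (about 7.5 cm) and 1/8-pixel disparity step are commonly quoted approximations for the original Kinect, used here as assumptions, not measured values.

```cpp
// Approximate depth quantization of a triangulating depth sensor.
// Since z = f * b / d, a disparity step dd changes depth by about
//   dz = z^2 * dd / (f * b)
// z: distance (m), f: focal length (px), b: baseline (m), dd: disparity step (px).
double depthStep(double z, double f, double b, double dd) {
    return z * z * dd / (f * b);
}

// Assumed, approximate parameters for the original Kinect (not calibrated).
constexpr double kFocalPx = 580.0;
constexpr double kBaselineM = 0.075;
constexpr double kDisparityStepPx = 0.125;
```

With those assumed values the step is just under 3 mm at 1 m but roughly 4.6 cm at 4 m, so a coffee mug close to the sensor can look far smoother than objects across the room.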

Just use real time photoshop content aware fill to fill in the gaps ;)

Hopefully, someone will write a “Parser” environment for Kinect data, to make files that could somehow be turned into Skeinforge (etc.) parameter/object details.

Enlisting the commercial solid-print bureaus like, oh, Shapeways and their peers in a scheme of “printed object credits for prize funding” might kickstart the ideas.

It would be way cool to have 3D busts of my grandkids.

I would like to see this guy manipulate a virtual object in real time, even if it’s just a ball.

I’m wondering… what would it look like if you added a mirror in the Kinect’s field of view?

Sorry guys, but you likely can’t do more than one at the same time; it projects an IR pattern as part of gathering data, which would interfere and fail with more than one in a room.

Now someone just needs to hook this up to a cheap 3D display for live holographic video calls.

My bets are on something like the DLP projector + spinning mirror combo. Or possibly a spinning LCD, if someone can get all the power and signal connections to it.

In response to whether it can use more than one Kinect: if you changed the frequency of the IR, would you be able to incorporate more Kinects without having them step over each other?

Quick thought: you can use different IR wavelengths. You would have to replace the IR LEDs and write code to detect them.

>Sorry guys, but you likely can’t do more than one at the same time; it projects an IR pattern as part of gathering data, which would interfere and fail with more than one in a room.

It seems that Your Shape: Fitness Evolved did a lot of work in this direction for its player projection, as its silhouette is very clean compared to what you see here… It opens the door to augmented reality stuff with a cheap device :)

Kind of reminds me of that software from the movie Déjà Vu.

The IR projector in the Kinect means you can’t use more than one at the same time. You can sync them like ToF cameras.

But you could use a few more normal cameras and use the Kinect’s depth info to reconstruct/simulate/cheat the whole scene.

There are algorithms that reconstruct a 3D scene from ONE video feed: http://www.avntk.com/3Dfromfmv.htm

Having a few at different angles plus one with 3D data should speed things up.

Not sure if the Kinect requires any reference points for calibration, but instead of different wavelengths (which I imagine would be difficult/impractical), couldn’t you set up a solid-state shutter system? Block the IR of the other units, sample data on one, and cycle. It would slow down your available refresh rate.

Sweet hack mate, very impressed.

^^^ CAN’T sync them like ToF cameras.

I like the idea about different IR wavelengths, but I think the Kinect uses a laser instead of an LED.

I guess you could use two Kinects directly facing each other, just making sure the IR dots don’t end up in each other’s cameras; that would give you almost 90% of the 3D and texture data.

@TheZ

I don’t know about different wavelengths for multiple Kinects. A lot of it depends on whether the Kinect can differentiate between different wavelengths AND on being able to hack the firmware to do stuff appropriately. Hasn’t all the hacking been on the computer side, just controlling it and getting useful information back? I’d either go with very narrow filters or synchronize all the Kinects together and do some multiplexing. If it is possible, I’m sure someone will figure it out.

Wasn’t it in The Hitchhiker’s Guide to the Galaxy that they mention the progression of user interfaces as: physical button -> touch interface -> wave hand and hope it works? Isn’t the third stage upon us now? I’m wondering how long I have to wait before I can control my MythTV box with hand gestures in the air.

Hmm, instead of replacing the IR LEDs, wouldn’t it be possible to place IR filters in front of both the LED and Detector of different Kinects? That way, each Kinect should only detect the wavelength of IR light it was emitting.

You could put on a gimp suit where each joint is a different colour (forearms, hands, thighs, etc.). The computer could use the colour coding to identify each joint and take measurements, and then it could do motion capture. Add the motion capture to a real-time or post-calculated 3D scene with digital actors… hurray. How long till we get Kinect-to-BVH converters?

The trick would be to calculate SIFT points on some frames, and use those to track objects as they move. This is the basic mechanism behind current reconstruction techniques, whether they use one or two cameras. The depth map would improve the fidelity of the representation, and should provide shortcuts that would let this run faster.
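The matching half of that pipeline can be sketched in a few lines. This assumes SIFT keypoints and descriptors have already been extracted by some library (OpenCV provides SIFT, for example); the sketch only pairs descriptors between two frames using nearest-neighbour search with Lowe’s ratio test, keeping a match only when the best candidate is clearly closer than the runner-up. The 0.8 threshold is the value Lowe suggested; the function names are illustrative.

```cpp
#include <cstddef>
#include <limits>
#include <vector>

using Descriptor = std::vector<float>;  // e.g. 128 floats for SIFT

// Squared Euclidean distance between two equal-length descriptors.
float sqDist(const Descriptor& a, const Descriptor& b) {
    float s = 0.0f;
    for (std::size_t i = 0; i < a.size(); ++i) {
        const float d = a[i] - b[i];
        s += d * d;
    }
    return s;
}

// For each descriptor in `prev`, find its nearest neighbour in `curr` and
// keep the match only if it is clearly better than the second-nearest
// (Lowe's ratio test). Returns the matched index in `curr`, or -1.
std::vector<int> ratioTestMatch(const std::vector<Descriptor>& prev,
                                const std::vector<Descriptor>& curr,
                                float ratio = 0.8f) {
    std::vector<int> matches(prev.size(), -1);
    for (std::size_t i = 0; i < prev.size(); ++i) {
        float best = std::numeric_limits<float>::max();
        float second = best;
        int bestIdx = -1;
        for (std::size_t j = 0; j < curr.size(); ++j) {
            const float d = sqDist(prev[i], curr[j]);
            if (d < best) {
                second = best;
                best = d;
                bestIdx = static_cast<int>(j);
            } else if (d < second) {
                second = d;
            }
        }
        // Distances are squared, so the ratio is squared as well.
        if (bestIdx >= 0 && best < ratio * ratio * second) {
            matches[i] = bestIdx;
        }
    }
    return matches;
}
```

Each accepted pair, combined with the depth values at both keypoints, would give a 3D correspondence between frames, which is where the depth map could provide a shortcut.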

Keep up the good work everyone! Kinect is coming along nicely :)

Outstanding work…

I swear I’ve imagined doing this for years, and how cool the glitches and shadows in some setups would look.

That is pretty amazing. Picture does not do it justice, video is awesome.

In regards to those arguing against using multiple Kinects at once, one could consider putting something along the lines of the ‘shutter glasses’ (used for many of the current ‘3D’ displays) over the IR projectors, and dropping the (depth) frames not associated with the ‘currently projecting’ Kinect. I’m sure that a bit of crafty software design could interpolate the two 15Hz (normally 30Hz IIRC) streams fairly well, too.

Better yet if the exposure time is less than 1/60th of a second (half of a 1/30 s frame period) and the sync can be intentionally offset…

After seeing this, I’m absolutely getting one. Very cool.
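A minimal sketch of the time-division idea, assuming two or more Kinects whose projectors can be shuttered on a shared clock: each frame slot belongs to one sensor in round-robin order, each sensor’s effective rate drops to the base rate divided by the number of sensors, and a slot where a sensor’s projector was blocked can be filled by blending that sensor’s neighbouring frames. The function names are hypothetical, and per-pixel linear blending is the crudest possible interpolation (it will smear moving edges); it only stands in for the “crafty software design” the comment imagines.

```cpp
#include <cstddef>
#include <vector>

// N sensors share one time base and take turns:
// frame slot k belongs to sensor k % N.
std::size_t activeSensor(std::size_t slot, std::size_t numSensors) {
    return slot % numSensors;
}

// Each sensor then delivers only baseRateHz / N frames per second.
double perSensorRate(double baseRateHz, std::size_t numSensors) {
    return baseRateHz / static_cast<double>(numSensors);
}

// Fill a slot in which this sensor's projector was blocked by linearly
// blending its previous and next depth frames (t in [0, 1]).
// Pixels missing (0) in either frame stay missing.
std::vector<float> interpolateDepth(const std::vector<float>& before,
                                    const std::vector<float>& after,
                                    float t) {
    std::vector<float> out(before.size(), 0.0f);
    for (std::size_t i = 0; i < before.size(); ++i) {
        if (before[i] > 0.0f && after[i] > 0.0f) {
            out[i] = (1.0f - t) * before[i] + t * after[i];
        }
    }
    return out;
}
```

With two units on a 30 Hz clock, each one ends up at 15 Hz, matching the figure in the comment above.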

da13ro beat me to it… I really have to refresh the page sometimes before posting things. Still, this thing is full of awesome capabilities, and it’s great to see that so many skilled people are making use of it.

Could you polarise the IR coming from two Kinects at 90 degrees to each other, then use filters on the cameras to block the other set?

polarization would work just fine provided that there was enough light remaining after the fact for the camera to work properly.

So would strobing them on and off alternately — it’s a very common procedure when you have multiple sensors operating on the same band (ultrasonic distance sensors are a notable case, since there’s not much ability to reject returns).

Filtering for wavelength might work, provided that you had physical bandpass filters on the camera. The depth sensor is monochrome and would react basically the same to any frequency it’s responsive to (different brightness but because it’s an uncontrolled environment you can’t rely on that to differentiate two sources).

I would guess that polarization would be the cheapest and quickest to implement, with bandpass filters being not that much more complex. Time-division multiplexing would only be a good idea where you absolutely cannot modify the kinect hardware in any way for some reason…otherwise it’s just a waste of effort.

I do really want to see what happens if you put a mirror in the path, though. I’m imagining a “window” in the feed through which you can look and see the other side of your room, just as if the mirror were actually a window into an alternate dimension :P

About the IR grid stuff: I would assume they modulate the IR at some frequency to avoid influence from other IR emitters. Either that, or one has to modulate the IR oneself. After that it would be relatively easy to use several units simultaneously by giving each of them a different modulation frequency and using electrical filters or an FFT algorithm to isolate the individual frequencies from each other.
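To illustrate the frequency-separation idea in general terms: if a pixel’s brightness were sampled over time and each emitter were modulated at its own frequency, evaluating a single DFT bin at each emitter’s frequency would pull out that emitter’s contribution while rejecting constant ambient light. This is a generic sketch, not something the Kinect is known to do. It only works if the camera samples at more than twice the highest modulation frequency (the Nyquist limit), so a 30 Hz camera could only separate modulations below 15 Hz.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

// Amplitude of the component at `freqHz` in a real-valued sample series
// taken at `sampleRateHz`, computed from a single DFT bin. For a sinusoid
// that completes a whole number of cycles over the series, this returns
// its amplitude; a constant (ambient) offset contributes nothing.
double toneAmplitude(const std::vector<double>& samples,
                     double freqHz, double sampleRateHz) {
    const double pi = std::acos(-1.0);
    const std::size_t n = samples.size();
    double re = 0.0, im = 0.0;
    for (std::size_t k = 0; k < n; ++k) {
        const double phase =
            2.0 * pi * freqHz * static_cast<double>(k) / sampleRateHz;
        re += samples[k] * std::cos(phase);
        im -= samples[k] * std::sin(phase);
    }
    return 2.0 * std::sqrt(re * re + im * im) / static_cast<double>(n);
}
```

For example, a pixel lit by emitters modulated at 50 Hz and 120 Hz, sampled at 1000 Hz for one second, would yield each emitter’s amplitude separately from the same sample series.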

No need for different wavelength, optical filters, etc.

It’s unlikely that they modulate the IR output (as in pulse the laser/LED, whichever it is), because the camera would have to be able to capture at least that fast: >120Hz for US incandescent lights. So you’d need a camera that captures video at more than, say, 300-400 FPS to adequately figure out what’s noise and what’s signal. I doubt they used anything like that. I think polarization would be the best bet without opening up the Kinect. I would try it if I had another Kinect and the time… Maybe someone can try using two pairs of the free 3D theater glasses: one glasses lens for each projector and depth camera.

Could you hook up multiple kinects to capture the other angles of the room and have a full 3d map?

I just looked at this guy’s YouTube page. OMG, this guy is on it! I thought I was fast with code (only uControllers), but this guy rocks! He did some augmented VR, addressed the mirror question, etc.

I don’t need live video like that, but if you could fix a Kinect to a rotating base and scan environments in 3D, that would be functional. I could use this.

Or software written to take slices so an object could be rotated in front of it and scanned. This would make a relatively cheap 3d scanner.
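The turntable idea comes down to one transform per scan: if the object was rotated by a known angle about a vertical axis, rotating that scan’s points back by the same angle puts every scan into one common frame. A minimal sketch, assuming the turntable axis is parallel to the camera’s y axis, its position (axisX, axisZ) is already known from some calibration step, and the turntable turned the object by +angleRad in a right-handed sense; the function and parameter names are hypothetical. In practice the angle and axis are never exact, so real scanners usually refine the alignment afterwards (for example with ICP).

```cpp
#include <cmath>
#include <vector>

struct Point3 {
    double x, y, z;
};

// Undo a turntable rotation of +angleRad about a vertical axis through
// (axisX, *, axisZ) in camera coordinates, by applying the rotation
// about the y axis with angle -angleRad.
std::vector<Point3> alignTurntableScan(const std::vector<Point3>& scan,
                                       double angleRad,
                                       double axisX, double axisZ) {
    const double c = std::cos(-angleRad);
    const double s = std::sin(-angleRad);
    std::vector<Point3> out;
    out.reserve(scan.size());
    for (const Point3& p : scan) {
        const double dx = p.x - axisX;
        const double dz = p.z - axisZ;
        // Rotation about y: x' = c*x + s*z, z' = -s*x + c*z (relative to the axis).
        out.push_back({axisX + c * dx + s * dz, p.y, axisZ - s * dx + c * dz});
    }
    return out;
}
```

Concatenating the aligned scans from, say, every 10 degrees of rotation gives a single point cloud of the object.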

It seems one could use this with a program like ZBrush to sculpt with your hands.

As a follow-up to my comment: I just saw another video of his, with a CG model of a creature sitting on his desk and moving, all in real time. He’s really not that far from the ZBrush idea.

Imagine a Kinect above your monitor as you’re sculpting the model on the monitor with your hands!

Re: 2 Kinects: in theory this should be easy.

get 4 linear polarization filters (2 for each kinect).

Polarize Kinect 1 @ 135° and Kinect 2 @ 45°

(place a polarizing filter on depth cam as well as upon IR source)

now both kinects can’t see each other but they can see their own beam.
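The scheme rests on Malus’s law: linearly polarized light passing through a linear polarizer keeps a fraction cos² of the angle between the two polarization directions. With the angles proposed here, and assuming ideal filters and light that is still polarized when it returns from the scene:

```latex
\begin{aligned}
I &= I_0 \cos^2(\theta_{\text{filter}} - \theta_{\text{light}}) \\
I_{\text{own}} &= I_0 \cos^2(135^\circ - 135^\circ) = I_0 \\
I_{\text{other}} &= I_0 \cos^2(135^\circ - 45^\circ) = I_0 \cos^2 90^\circ = 0
\end{aligned}
```

The zero for the other unit’s beam holds only if the returning light keeps its polarization, which is the weak point of the idea.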

@EquinoXe

Polarization is normally not sustained under diffuse reflection, so even though the light would be polarized, the light coming back from the scene wouldn’t. I guess a shutter system as suggested above could do the trick, but then you’d have to hope that the kinect doesn’t use any type of temporal coherence.