In the 1930s, as an alternative to celluloid, some Japanese companies printed films on paper (kami firumu), often in color and with synchronized 78 rpm record soundtracks. Unfortunately, between the small number produced, varying paper quality, and the destruction of World War II, few of these still survive. To keep more of these from being lost forever, a team at Bucknell University has been working on a digitization project, overcoming several technical challenges in the process.

The biggest challenge was the varying physical layout of the film. These films were printed in short strips, then glued together by hand, creating minor irregularities every few feet; the width of the film varied enough to throw off most film scanners; even the indexing holes were in inconsistent places, sometimes at the top or bottom of the frame, and above or below the frame border. The team’s solution was the Kyōrinrin scanner, named for a Japanese guardian spirit of lost papers. It uses two spools to run the lightly tensioned film in front of a Blackmagic cinematic camera, taking a video of the continuously moving film. To avoid damaging the film, the scanner contacts it in as few places as possible.

After taking the video, the team used a program they had written to recognize and extract still images of the individual frames, then aligned the frames and combined them into a watchable film. The team has presented the digitized films at a number of locations, but if you’d like to see a quick sample, several of them are available on YouTube (one of which is embedded below).
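The write-up doesn’t say how the team’s program finds the frames, so the following is only a minimal sketch of one plausible approach, not their method. It assumes a still from the video has already been reduced to one average brightness value per row, and that frames are separated by darker borders; `find_frame_spans`, `threshold`, and `min_height` are hypothetical names chosen for illustration.

```python
def find_frame_spans(row_brightness, threshold, min_height):
    """Return (start, end) row ranges for runs brighter than threshold.

    Assumption: film frames are bright and the borders between them are dark.
    Runs shorter than min_height rows are treated as noise and dropped.
    """
    spans = []
    start = None
    for i, value in enumerate(row_brightness):
        if value > threshold and start is None:
            start = i  # a bright run begins here
        elif value <= threshold and start is not None:
            if i - start >= min_height:
                spans.append((start, i))  # end index is exclusive
            start = None
    # Close a run that reaches the last row of the image.
    if start is not None and len(row_brightness) - start >= min_height:
        spans.append((start, len(row_brightness)))
    return spans


# Made-up profile: two frames separated by dark borders, plus a one-row blip.
profile = [10] * 3 + [200] * 5 + [10] * 2 + [200] * 6 + [10] + [200]
print(find_frame_spans(profile, threshold=100, min_height=4))
```

A fixed threshold would likely struggle with the uneven paper quality described above, so a real tool would probably need something adaptive, along with the alignment step the team mentions.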

This piece’s tipster pointed out some similarities to another recent article on another form of paper-based image encoding. If you don’t need to work with paper, we’ve also seen ways to scan film more accurately.


Institutional Memory, On Paper

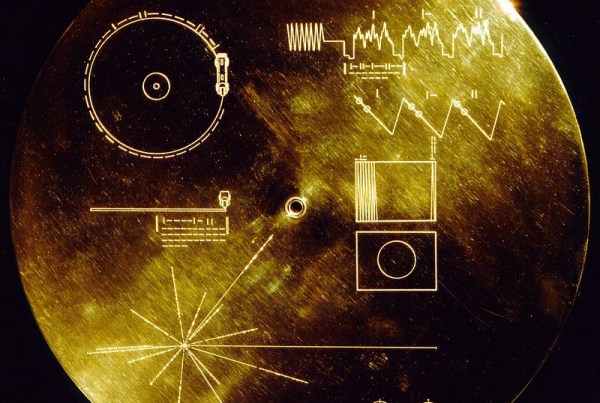

Our own Dan Maloney has been on a Voyager kick for the past couple of years. Voyager, the space probe. As a long-term project, he has been trying to figure out the computer systems on board. He got far enough to write up a great overview piece, and it’s a pretty good summary of what we know these days. But along the way, he stumbled on a couple of old documents that would answer a lot of questions.

Dan asked JPL if they had them, and the answer was “no”. Oddly enough, the very people who are involved in the epic save a couple weeks ago would also like a copy. So when Dan tracked the document down to a paper-only collection at Wichita State University, he thought he had won, but the whole box is stashed away as the library undergoes construction.

That box, and a couple of its neighbors, appear to have a treasure trove of documentation about the Voyagers, and it may even be one-of-a-kind. So in the comments, a number of people have volunteered to help the effort, but I think we’re all just going to have to wait until the library is open for business again. In this age of everything-online, everything-scanned-in, it’s amazing to believe that documents about the world’s furthest-flown space probe wouldn’t be available, but so it is!

It makes you wonder how many other similar documents – products of serious work by the people responsible for designing the systems and machines that shaped our world – are out there in the dark somewhere. History can’t capture everything, and it’s down to our collective good judgement in the end. So if you find yourself in a position to shed light on, or scan, such old papers, please do! And then contact some nerd institution like the Internet Archive or the Computer History Museum.

Building An Electron Microscope For Research

There are a lot of situations where a research group may turn to an electron microscope to get information about whatever system they might be studying. Assessing the structure of a virus or protein, analyzing the morphology of a new nanoparticle, or examining the layout of a semiconductor all might require the use of one of these devices. But if your research involves the electron microscope itself, you might be a little more reluctant to tear down these expensive devices to take a look behind the curtain, as the cost of doing this for more than a few could quickly get out of hand. That’s why this research group has created their own electron detector.

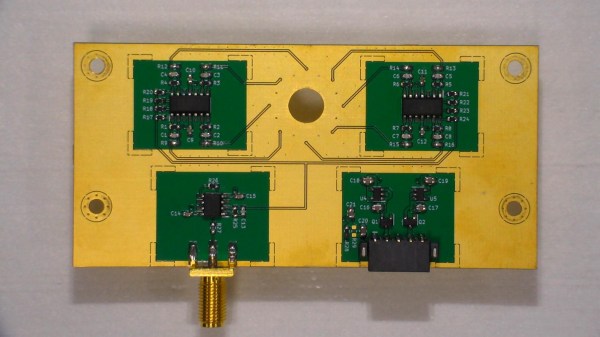

Specifically, the electron detector is designed for use in a scanning electron microscope, which is typically used for inspecting the surface of a sample and retrieving a high-resolution, 3D image of it, compared to transmission microscopes, which can probe internal structures. The detector is built on a four-layer PCB which includes the photodiode sensing array, a series of amplifiers, and a power supply. All of the circuit diagrams and schematics are available for inspection as well, thanks to the design being released under a Creative Commons license. For any research team looking to build this, a bill of materials is also included, as is a set of build instructions.

While this is only one piece of the puzzle surrounding the setup and operation of an electron microscope, it’s arguably the most important, and it also greatly lowers the barrier to entry for anyone looking to analyze electron microscope design themselves. With an open standard, anyone is free to modify or augment this design as they see fit, which is a marked improvement over the closed and expensive proprietary microscopes out there. And, if low-cost microscopes are your thing, be sure to check out this fluorescence microscope we featured that uses readily-available parts to dramatically lower the cost compared to commercial offerings.

Proto-TV Tech Lies Behind This POV Clock

If it weren’t for persistence of vision, that quirk of biochemically mediated vision, life would be pretty boring. No movies, no TV — nothing but reality, the beauty of nature, and live performances to keep us entertained. Sounds dreadful.

We jest, of course, but POV is behind many cool hacks, one of which is [Joe]’s neat Nipkow disk clock. If you think you’ve never heard of such a thing, you’re probably wrong; Nipkow disks, named after their 19th-century inventor Paul Gottlieb Nipkow, were the central idea behind the earliest attempts at mechanically scanned television. Nipkow disks have a series of evenly spaced, spirally arranged holes that appear to scan across a fixed area when rotated. When placed between a lens and a photosensor, a rudimentary TV camera can be made.

For his Nipkow clock, though, [Joe] turned the idea around and placed a light source behind the rotating disk. Controlling when and what color the LEDs in the array are illuminated relative to the position of the disk determines which pixels are illuminated. [Joe]’s clock uses two LED arrays to double the size of the display area, and a disk with rectangular apertures. The resulting pixels are somewhat keystone-shaped, but it doesn’t really distract from the look of the display. The video below shows the build process and the finished clock in action.
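To make the geometry a little more concrete, here’s a minimal sketch of an idealized single-spiral Nipkow disk. This is not [Joe]’s code: the function names, hole count, and dimensions are all assumptions for illustration, and it ignores his second LED array and rectangular apertures. Each hole sits one line pitch closer to the center than the last, so exactly one hole crosses the display window at a time and each hole traces one scan line.

```python
def hole_positions(n_holes, outer_radius, line_pitch):
    """Return (angle_deg, radius) for each hole of an idealized spiral.

    Holes are evenly spaced in angle; each one sits line_pitch closer
    to the center than the previous one, so each traces its own scan line.
    """
    sector = 360.0 / n_holes
    return [(k * sector, outer_radius - k * line_pitch) for k in range(n_holes)]


def active_pixel(disk_angle_deg, n_holes, columns):
    """Map a disk angle to the (line, column) currently behind the window.

    Assumes the window spans exactly one hole-to-hole sector, so the
    hole index gives the line and the progress through the sector
    gives the column.
    """
    sector = 360.0 / n_holes
    angle = disk_angle_deg % 360.0
    line = int(angle // sector)
    column = int((angle % sector) / sector * columns)
    return line, column


print(hole_positions(4, 50.0, 1.0))
print(active_pixel(135.0, n_holes=4, columns=8))
```

In a display like this one, firmware would run the mapping the other way: read the disk position (from an index sensor, say), work out which pixel is currently exposed, and set the LED color to match.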

The key to getting the look right in a display like this is the code, and [Joe] put in a considerable effort for his software. If only the early mechanical TV tinkerers had had such help. [Jenny List] did a nice write-up on the early TV pioneers and their Nipkow disk cameras; we’ve also seen other Nipkow displays before, but [Joe]’s clock takes the concept to another level.

Drones Can Undertake Excavations Without Human Intervention

Researchers from Denmark’s Aarhus University have developed a method for autonomous drone scanning and measurement of terrains, allowing drones to independently navigate themselves over excavation grounds. The only human input is a starting location and the desired cliff face for scanning.

For researchers studying quarries, capturing data about gravel, walls, and other natural and man-made formations is important for understanding the properties of the terrain. Controlling the drones can be expensive though, since there’s considerable skill involved in manually flying the drone and keeping its camera steady and perpendicular to the wall it is capturing.

The system uses a Gaussian model to predict the wind encountered near the wall, estimating its strength from the inputs the drone receives as it moves. Its feedback control combines nonlinear model predictive control (NMPC) with a PID controller to calculate the values sent to the drone’s motor controller, and a long short-term memory (LSTM) model is used for calculating the predictions. It’s been successfully tested in a chalk quarry in Denmark and will continue to be tested as its algorithms are improved.
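The researchers’ controller isn’t reproduced here, and the NMPC and LSTM parts are well beyond a short snippet, but the PID piece is easy to illustrate. What follows is a generic textbook PID loop, not the Aarhus implementation; the class name, gains, and time step are made up for the example.

```python
class PID:
    """Textbook PID controller; the gains used below are illustrative only."""

    def __init__(self, kp, ki, kd):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.integral = 0.0
        self.prev_error = None  # no derivative term on the first update

    def update(self, setpoint, measurement, dt):
        """Return the control output for one time step of length dt."""
        error = setpoint - measurement
        self.integral += error * dt
        if self.prev_error is None:
            derivative = 0.0
        else:
            derivative = (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


# Hypothetical use: hold a set distance from the wall (arbitrary units).
pid = PID(kp=2.0, ki=0.5, kd=0.1)
first = pid.update(setpoint=1.0, measurement=0.0, dt=0.1)
second = pid.update(setpoint=1.0, measurement=0.5, dt=0.1)
print(first, second)
```

A PID loop only reacts to the error it has already seen, which is presumably why the researchers pair it with a predictive controller and a wind model that can anticipate a gust.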

Getting a drone to hover and move between GPS waypoints is easy enough, but once it needs to maneuver around obstacles, things start getting tricky. Research like this will be invaluable for developing systems that help drones navigate in areas where their human operators can’t reach.

[Thanks to Qes for the tip!]

Camera And Code Team Up To Make Impossible Hovering Laser Effect

Right off the bat, we’ll say that this video showing a laser beam stopping in mid-air is nothing but a camera trick. But it’s the trick that’s the hack, and you’ve got to admit that it looks really cool.

It starts with the [Tom Scott] video, the first one after the break. [Tom] is great at presenting fascinating topics in a polished and engaging way, and he certainly does that here. In a darkened room, a begoggled [Tom] poses with what appears to be a slow-moving beam of light, similar to a million sci-fi movies where laser weapons always seem to disregard the laws of physics. He even manages to pull a [Kylo Ren] on the slo-mo photons with a “Force Stop” as well as a slightly awkward Matrix-style bullet-time shot. It’s entertaining stuff, and the effect is all courtesy of the rolling shutter effect. The laser beam is rapidly modulated in sync with the camera’s shutter, and with the camera turned 90 degrees, the effect is to slow down or even stop the beam.
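For a rough feel of the timing involved, here’s a minimal sketch. It is not [Seb]’s code, and the row count and line time are invented numbers. The assumption is that a rolling-shutter sensor reads out one row every `line_time_s` seconds, so a laser pulse fired at a given delay after the start of a frame only shows up on the row being read out at that moment. Step that delay a little each frame and the lit segment appears to crawl along the beam; keep it constant and the segment hangs in place.

```python
def pulse_timing(frame_index, rows, line_time_s, rows_per_frame):
    """Return (row, delay_s): which sensor row to light and when to pulse.

    Assumptions: rows are read out one after another, line_time_s apart,
    starting at the top of each frame. rows_per_frame is the apparent
    speed of the lit segment; 0 freezes it in place.
    """
    row = (frame_index * rows_per_frame) % rows
    delay_s = row * line_time_s
    return row, delay_s


# Made-up camera: 1080 rows, 10 microseconds per row.
for frame in range(4):
    print(frame, pulse_timing(frame, rows=1080, line_time_s=10e-6, rows_per_frame=10))
```

With the camera turned 90 degrees, the sensor’s rows run across the beam, which is what lets a timing offset turn into a position along it.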

The tricky part of the hack is the laser stuff, which is the handiwork of [Seb Lee-Delisle]. The second video below goes into detail on his end of the effect. We’ve seen [Seb]’s work before, with a giant laser Asteroids game and a trick NES laser blaster that rivals this effect.


3D Print Your 3D Scanner

[QLRO] wanted a 3D scanner, but didn’t like any of the existing designs. Some were too complex. Some were simple but required you to do things by hand. That led to him designing his own that he calls AAScan. You can see the thing operating in the video below.

In general, you can move the camera around the object or you can move the object around while the camera stays fixed. This design chooses the latter. You’ll need a stepper motor with a driver board and an Arduino to make the turntable rotate. You also need a computer running Python and Meshroom. The phone also has to run Python and [QLRO] used QPython on an Android device.
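As a back-of-the-envelope illustration of the turntable side, here’s a minimal sketch of splitting one revolution into evenly spaced photo stops. It isn’t taken from AAScan; the function name and the stepper figures (200 full steps per revolution, 16x microstepping) are assumptions. Rounding cumulative positions, rather than each increment, keeps the total at exactly one revolution even when the step count doesn’t divide evenly.

```python
def step_schedule(steps_per_rev, photos):
    """Return the number of steps to advance before each photo.

    Cumulative target positions are rounded to whole steps, so the
    increments always sum to exactly steps_per_rev.
    """
    positions = [round(i * steps_per_rev / photos) for i in range(photos + 1)]
    return [b - a for a, b in zip(positions, positions[1:])]


# Assumed hardware: 200-step motor with 16x microstepping.
steps_per_rev = 200 * 16
print(step_schedule(steps_per_rev, photos=40))  # even split
print(step_schedule(200, photos=3))             # uneven split
```

The host would then send each increment to the Arduino, wait for the turntable to settle, and trigger the camera before moving on.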