The reports of the death of the VGA connector are greatly exaggerated. Rumors of the demise of the VGA connector have been going around for a decade now, but VGA has been remarkably resilient in the face of its impending doom; this post was written on a nine-month-old laptop connected to an external monitor through the very familiar thick cable with two blue ends. VGA is a port that can still be found on the back of millions of TVs and monitors that will be shipped this year.

This year is, however, the year that VGA finally dies. After 30 years, after being deprecated by several technologies, and after it became easy to put a VGA output on everything from an eight-pin microcontroller to a Raspberry Pi, VGA has died. It’s not supported by the latest Intel chips, and it’s hard to find a motherboard with the very familiar VGA connector.

The History Of Computer Video

Before the introduction of VGA in 1987, graphics chips for personal computers were either custom chips, low resolution, or exceptionally weird. One of the first computers with built-in video output, the Apple II, simply threw a lot of CPU time at a character generator, a shift register, and a few other bits of supporting circuitry to write memory to a video output.

The state of the art for video displays in 1980 included the Motorola 6845 CRT controller and 6847 video display generator. These chips were, to the modern eye, terrible; they had a maximum resolution of 256 by 192 pixels, incredibly small by modern standards.

Other custom chips found in home computers of the day were not quite as limited. The VIC-II, a custom video chip built for the Commodore 64, could display up to 16 colors with a resolution of 320 by 200 pixels. Trickery abounds in the Commodore 64 demoscene, and these graphics capabilities can be pushed further than the original designers ever dreamed possible.

When the original IBM PC was released, video was not available on the most bare-bones box. Video was extra, and IBM offered two options. The Monochrome Display Adapter (MDA) could display 80 columns and 25 lines of high resolution text. This was not a graphic display; the MDA could only display the 127 standard ASCII characters or another 127 additional characters that are still found in the ‘special character’ selection of just about every text editor. The hearts, diamonds, clubs, and spades, ♥ ♦ ♣ ♠, were especially useful when building a blackjack game for DOS.

IBM’s second offering for the original PC was a far more colorful option. The Color Graphics Adapter (CGA) turned the PC into a home computer. Up to 16 colors could be displayed with the CGA card, and resolutions ranged from 40×25 and 80×25 text mode graphics to a 640×200 graphics mode.

Both the MDA and CGA adapters offered by IBM were based on the Motorola 6845 with a few extra bits of hardware for interfacing with the 8-bit ISA bus, and in the case of many cards, a parallel port. This basic circuit would be turned into a vastly superior graphics card released in 1982, the Hercules Graphics Card.

Hercules offered an 80×25 text mode and a graphics mode with a resolution of 720×348 pixels. Hercules’ resolution was enormous at the time, and was still useful for many, many years after the introduction of the superior VGA. Most dual-monitor setups in the DOS era used Hercules for a second display, and some software packages, AutoCAD included, used a second Hercules display for UI elements and dialog boxes.

Even with so many choices of display adapters available for the IBM PC, graphics on the desktop was still a messy proposition. Video cards included dozens of individual chips, implementing the video circuit on a single board was difficult, resolution wasn’t that great, and everything was really based on a Motorola CRT controller. Something had to be done.

The Introduction of VGA

While the PC world was dealing with graphics adapters consisting of dozens of different chips, all based on a CRT controller designed in the late 70s, the rest of the computing world saw a steady improvement. 1987 saw the introduction of the Macintosh II, the first Mac with a color display. Resolutions were enormous for the time, and full-color graphics were possible. There is a reason designers and digital artists prefer Macs, and for a time in the late 80s and early 90s, it was the graphics capabilities that made it the logical choice.

Other video standards blossomed during this time. Silicon Graphics introduced their IRIS graphics, and Sun was driving 1152×900 resolution displays. Workstation graphics, the kind used in $10,000 machines, were very good. So good, in fact, that resolutions available on these machines frequently bested the resolution found in cheap consumer laptops of today.

By 1986, the state of graphics on the Personal Computer was terrible. The early 80s saw a race for faster processors, more memory, and an oft-forgotten race to have more pixels on a screen. The competition for more pixels was so intense it was defined in the specs for the 3M Computer – a computer with a megabyte of memory, a megaFLOP of processing power, and a megapixel display. Putting more pixels on a display was just as important as having a fast processor, and in 1986, the PC graphics card with the best resolution – Hercules – could only display 0.25 megapixels.
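The megapixel gap is easy to check with a little arithmetic. The sketch below only multiplies out resolutions already quoted in this post (Hercules, CGA's highest graphics mode, and the Sun workstation display); the function and dictionary names are made up for illustration.

```python
def megapixels(width: int, height: int) -> float:
    """Return the pixel count of a display mode in millions of pixels."""
    return width * height / 1_000_000


# Resolutions quoted in the article; the labels are just for printing.
modes = {
    "Hercules": (720, 348),
    "CGA (highest graphics mode)": (640, 200),
    "Sun workstation": (1152, 900),
}

for name, (w, h) in modes.items():
    print(f"{name}: {w}x{h} = {megapixels(w, h):.2f} megapixels")
```

Only the workstation display clears the one-megapixel bar of the 3M Computer; the best PC card of 1986 covers roughly a quarter of it.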

In 1987, IBM defined a new graphics standard to push the graphics on their PC to levels only workstations from Apple, Sun, and SGI could compete with. This was the VGA standard. It was not built on a CRT controller; instead, the heart of the VGA chipset was a custom ASIC, a crystal, a bit of video RAM, and a digital to analog converter. This basic setup would be found in nearly every PC for the next 20 years, and the ASIC would go through a few die shrinks and would eventually be integrated into Intel chipsets. It was the first standard for video and is by far the longest-lived port on the PC.

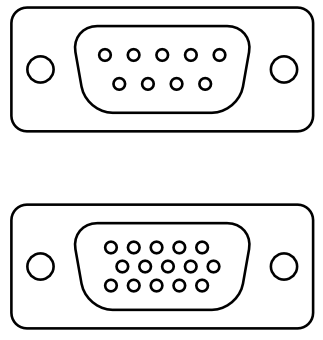

When discussing the history of VGA, it’s important to define what VGA is. To everyone today, VGA is just the old-looking blue port on the back of a computer used for video. This is somewhat true, but a lie of omission – the VGA standard is more than just a blue DE-15 connector. The specification for VGA defines everything about the video signals, adapters, graphics cards, and signal timing. The first VGA adapters would have 256kB of video RAM, 16 and 256-color palettes, and a maximum resolution of 800×600. There was no blitter, there were no sprites, and there was no hardware graphics acceleration; the VGA standard was just a way to write values to RAM and spit them out on a monitor.
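To make "signal timing" concrete, here is a minimal sketch of the arithmetic behind the familiar 640×480 mode. The porch and sync widths and the 25.175 MHz pixel clock are the commonly published figures for that mode, not numbers taken from this post, so treat them as assumptions; the class and variable names are illustrative only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Axis:
    """One scan direction: visible area plus blanking intervals."""
    visible: int
    front_porch: int
    sync: int
    back_porch: int

    @property
    def total(self) -> int:
        # Every line (or frame) spends time in all four regions.
        return self.visible + self.front_porch + self.sync + self.back_porch


# Assumed, commonly published 640x480 timing (pixels for H, lines for V).
H = Axis(visible=640, front_porch=16, sync=96, back_porch=48)
V = Axis(visible=480, front_porch=10, sync=2, back_porch=33)
PIXEL_CLOCK_HZ = 25_175_000

line_rate_hz = PIXEL_CLOCK_HZ / H.total   # how often H-sync fires
frame_rate_hz = line_rate_hz / V.total    # how often V-sync fires

print(f"pixels per line: {H.total}, lines per frame: {V.total}")
print(f"line rate: {line_rate_hz / 1000:.3f} kHz")
print(f"frame rate: {frame_rate_hz:.2f} Hz")
```

The point is that a monitor needs nothing more than the two sync pulses and the analog levels between them; everything else about the mode falls out of these counts.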

Still, all of the pre-VGA graphics cards used a DE-9 connector for video output. This connector – the same connector used in old ‘hardware’ serial ports – had nine pins. VGA stuffed 15 pins into the same connector. The extra pins would be extremely useful in the coming years; data lines would be used to identify the make and model of the monitor, what resolutions it could handle, and what refresh rates would work.
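The monitor identification carried over those data lines can be sketched in a few lines. This assumes the monitor reports itself with a 128-byte VESA EDID base block, a format this post does not name; the function names are hypothetical and the sample block is synthetic, with a made-up "ABC" vendor code rather than data from any real display.

```python
EDID_HEADER = bytes([0x00, 0xFF, 0xFF, 0xFF, 0xFF, 0xFF, 0xFF, 0x00])


def parse_edid_identity(block: bytes) -> tuple:
    """Return (manufacturer_id, product_code) from an EDID base block."""
    if len(block) != 128:
        raise ValueError("EDID base block must be 128 bytes")
    if block[:8] != EDID_HEADER:
        raise ValueError("missing EDID header")
    if sum(block) % 256 != 0:
        raise ValueError("bad checksum")
    # Bytes 8-9: three 5-bit letters packed big-endian, where 1 means 'A'.
    packed = int.from_bytes(block[8:10], "big")
    letters = [(packed >> shift) & 0x1F for shift in (10, 5, 0)]
    manufacturer = "".join(chr(ord("A") + n - 1) for n in letters)
    # Bytes 10-11: product code, little-endian.
    product = int.from_bytes(block[10:12], "little")
    return manufacturer, product


def make_sample_block(manufacturer: str, product: int) -> bytes:
    """Build a synthetic block for demonstration (not a real monitor)."""
    body = bytearray(127)
    body[:8] = EDID_HEADER
    packed = 0
    for ch in manufacturer:
        packed = (packed << 5) | (ord(ch) - ord("A") + 1)
    body[8:10] = packed.to_bytes(2, "big")
    body[10:12] = product.to_bytes(2, "little")
    checksum = (-sum(body)) % 256  # makes all 128 bytes sum to 0 mod 256
    return bytes(body) + bytes([checksum])


sample = make_sample_block("ABC", 0x1234)
print(parse_edid_identity(sample))
```

A real block also carries the supported resolutions and refresh rates in later bytes; this sketch stops at the make and model fields.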

The Downfall of VGA

VGA would be improved through the 1980s and 1990s with the introduction of SVGA, XGA, and Super XGA, all offering higher resolutions through the same clunky connector. This connector was inherently designed for CRTs, though; the H-sync and V-sync pins on the VGA connector are of no use at all to LCD monitors. Unless the monitor you’re viewing this on weighs more than 20 pounds and is shooting x-rays into your eyes, there’s no reason for your monitor to use a VGA connector.

The transition away from VGA began alongside the introduction of LCD monitors in the mid-2000s. By 2010, the writing was on the wall: VGA would be replaced with DisplayPort or HDMI, or another cable designed for digital signals needed by today’s LCDs, and not analog signals used by yesteryear’s CRTs.

Despite this, DE-15 ports abound in the workspace, and until a few years ago, most motherboards provided a D-sub connector, just in case someone wanted to use the integrated graphics. This year, though, VGA died. In Intel’s Skylake, their newest chip that is now appearing in laptops introduced during CES this month, VGA support has been removed. You can no longer buy a new computer with VGA.

VGA is gone from the latest CPUs, but an announcement from Intel is a bang; VGA was always meant to go quietly. Somehow, without anyone noticing, you cannot search Newegg for a motherboard with a VGA connector. VGA is slowly disappearing from graphics cards, and currently the only cards you can buy with the bright blue plug are entry-level cards using years-old technology.

VGA died quietly, with its cables stuffed in a box in a closet, and the ports on the back of a monitor growing a layer of dust. It lasted far beyond what anyone would have believed nearly 30 years ago. For the technology that finally broke away from CRT controller chips of the early 1980s, VGA would be killed by the technology it replaced. VGA was technically incompatible with truly digital protocols like DisplayPort and HDMI. It had a storied history, but VGA has finally died.

Editorials from Brian make baby Jesus cry…

“the H-sync and V-sync pins on the VGA connector are of no use at all to LCD monitors” – Sigh…. and you are a technology expert?

“VGA support has been removed” – They only removed the internal DAC. 24-bit parallel RGBHV will always be there and you can make a VGA output from that with a few dozen resistors.
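For a sense of scale on "a few dozen resistors", here is a rough sketch. It assumes an R-2R ladder per color channel, which is one common way to build such a DAC and not necessarily what the commenter had in mind, and it assumes the usual 0.7 V full-scale VGA video level. The helper names are hypothetical.

```python
BITS_PER_CHANNEL = 8      # 24-bit RGB = 8 bits each for red, green, blue
CHANNELS = 3
FULL_SCALE_VOLTS = 0.7    # assumed nominal VGA video level


def r2r_resistor_count(bits: int) -> int:
    """An n-bit R-2R ladder uses n '2R' legs, n-1 'R' links, and one
    terminating '2R', for 2n resistors in total."""
    return 2 * bits


def ideal_level(code: int, bits: int = BITS_PER_CHANNEL) -> float:
    """Ideal output for a digital code, normalized so the maximum code
    maps to full scale. Real ladders need scaling and termination."""
    if not 0 <= code < 2 ** bits:
        raise ValueError("code out of range")
    return FULL_SCALE_VOLTS * code / (2 ** bits - 1)


total = CHANNELS * r2r_resistor_count(BITS_PER_CHANNEL)
print(f"resistors for three channels: {total}")
print(f"mid-grey (code 128) level: {ideal_level(128):.3f} V")
```

Under those assumptions the count comes out to four dozen, with H-sync and V-sync passed through as plain digital lines.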

“VGA has finally died” – Really? Just because PC motherboards are no longer stuffing a DB-15H? Insightful…

Yup, many good reasons for why VGA is far from gone.

Especially when one thinks that Mini VGA was released only about 5 (maybe 10) years ago.

And that VGA is embedded in most DVI ports. And lots of graphics cards use DVI. (And there is also mini DVI)

I think that VGA is going to stay for at least another 5 years. (And that is very pessimistic)

DVI does not imply that it will have analog VGA signals. DVI-D carries digital only.

I have stated that “VGA is embedded in most DVI ports”, but not all.

DVI-D is rather rare (in my experience), as most people who buy anything with DVI on it are practically looking for both digital and analog.

Majority of devices I’ve seen with more than one DVI port have at most one DVI-I port and the other is DVI-D, zero things I’ve seen in the last several years have DVI-A. At this point most people who buy things with DVI are buying things with HDMI or Displayport (mostly professional GPUs) that happen to have DVI attached.

That first one is just bafflingly stupid. Perhaps the author might explain how you identify the beginning of a field or the beginning of a scanline reliably without the sync pulse. I’m not holding my breath…

DisplayPort/HDMI/DVI are digital, and special packets are used for HSYNC and VSYNC. So yes, the author here is dead right about the uselessness of dedicated HSYNC/VSYNC signals in the digital world.

That’s because DisplayPort/HDMI/DVI are intended for longer-distance transport of video, and they’re packet-based.

Go ahead and look up any larger LCD panel, and check out its direct interface. Chances are you’ll see 8 bits for red, 8 bits for green, 8 bits for blue… and HSYNC/VSYNC lines.

sometimes they use some kind of combined sync, but they need a sync signal.

24-bit RGBHV is still useful for driving LCDs, as it’s digital.

And connecting Laptops with HDMI port to Video projectors with HDMI port via analog VGA and thus reduced resolution should be a punishable crime. :-) Unfortunately still committed often in conference rooms.

So I will not cry a tear if this analog connection vanishes.

Funny, last I tested, I could quite happily set a high-end conference room’s WUXGA (1920×1200) projector to run at its native resolution over VGA without any trouble, but HDMI insisted on falling back to 1920×1080 instead, as other than the newer 4K resolutions that’s the highest mode defined for the standard. And quite often it’ll glitch out and decide to run in a stupid overscan mode that’s closer to 1850×1000 displayed so you lose all the UI elements around the edge of the picture…

VGA supports a free choice of mode from 640×350 up to at *least* 2.5K resolution (including 1920×1080 when connected to a suitable screen), and there’s few projectors that actually support resolutions even as high as that. Laptops will typically drop to 1280×800 or 1024×768 when connected to a data projector *because that’s the projector’s actual native resolution*. They’re remarkably low-rez beasts in the main, and until very recently typical base models still only sported SVGA (800×600) resolution.

If you have a VGA laptop and an HDMI laptop connecting to a midrange projector, and the VGA model sets 1280×800 / 1024×768 or less, but the HDMI sets a higher resolution, probably 1080p… it’s most likely that the HDMI model is setting an incorrect mode (thanks to only having a very limited range of preset resolutions), not the VGA. The only exception would be with pretty old machines using a low-end integrated graphics chipset (and I’m talking i965 or earlier, possibly even i945/i845, i.e. stuff that’s 10 years old or more by now) which don’t have firstparty or generic Windows driver support for widescreen resolutions despite being capable of at least 1280×1024 (and commonly 1400×1050, even 1600×1200) non-widescreen… but even then you can force a custom resolution with easily downloaded utilities.

And in any case, Powerpoint (by far the most commonly used program for presentations on large-format displays) designs its slides for 4:3 by default, so unless your presenter has bothered to switch to 16:10 or 16:9 (a bit of a gamble if they don’t know how up to date a projector or large-format LCD they’ll have available to show it on), it makes little difference in the end if it sets 1024×768 instead of 1366×768 (and only a small difference if it sets 1024×768 instead of 1280×800) because the image will appear exactly the same on-screen in the end.

Native 4k projectors have been around for a while. They just aren’t cheap so you often find old clunkers in hotels and homes. Check out the Sony VPL-XW6000ES ($12k) or VPL-XW5000ES ($6k). Both do true 4k, HDR10 – although they both lack Dolby Vision and only do 60 Hz at 4k. Also, they’re HDMI 2.1 rather than shitsplay port.

“RGBHV will always be there…” — More information, please?

I think Alan is assuming that all that’s happened is the video card has had the physical DB15 removed along with the DACs driving it, but the actual chip still retains the 26 output lines required to digitally feed said DACs (or possibly it even retains the analogue RGB pins if the DACs are built in, but they’re not connected to anything). Which is maybe an unsafe assumption if we consider newer chips that shave their die areas and pin counts to save money and make space for newer features, and so have only fully digital outputs (HDMI and so on).

However, as far as I know, most desktop chipsets still retain some measure of analogue output, either through DVI-I ports or the Displayport standard – both of which can produce VGA compatible signals through simple passive socket adaptors. It just doesn’t make sense to abandon it completely when there’s still such a *huge* installed base of screens that are VGA compatible but might not be able to understand any of the purely digital standards, and keeping the compatibility within the chipset somewhere doesn’t really take up any meaningful silicon real estate. The only things to have seemingly fully abandoned it are fully mobile chipsets (like those used for phones and Android/iOS tablets), and in that case it’s rather less that they’ve lost VGA, just that they didn’t acquire it at the same time as they gained MHL (ie the cut down flavour of HDMI sported by various mobile devices) output through their USB/charge ports…

I really should purge those old cables out of the crate under my bench…

I have enough useless cabling I’ve collected over the years to wire a small apartment building. If I’ve scavenged 1% from it, I’d be surprised.

Exactly. I use shielded cables autopsied from VGA cable very often in my projects. I love them as they are free and usable as a thin 75 Ohm coax up to some 100MHz

Shooting x-rays into your eyes. A bit of an exaggeration, no? Would be a good article if you remove some of the hyperbole.

https://97520abd-a-62cb3a1a-s-sites.googlegroups.com/site/bartopcabinet/construction/build-log-part-1/IMG_3398.jpg

X-RAY emission is only in a fault condition. ie at high voltage

The X-rays actually go out to the side, at right angles to the impact path of the electrons. So you’d only be getting X-rays in your eyes if you’re standing to the side of the monitor looking at it edge-on.

And all the shielding was removed.

Any x-rays generated by a CRT are absorbed by the grounding surrounding the display itself. Most of it ends up going right back into the power supply with the rest dissipating as heat. X-rays contain a lot of energy, and display designers don’t want to waste a watt when they don’t have to. There is also the fact that no company is going to let anything out of the factory that could poison the consumer, litigation is very, very expensive; and a dead customer isn’t going to be able to buy the next model.

I could feel a very strong electric field on my monitor. My hair would point at it. At one point I got one of these mesh things to put in front of it. Worked very well in shielding the field, except the ground clip sometimes fell off, and then touching the thing was quite shocking.

I do hope that you’re just adding an anecdote here and not equating static charge accumulation to ionizing radiation emission?

This field can be a nuisance, but it is in no way dangerous.

Someone forgot the EGA system, slotted in between CGA and VGA.

I was thinking the same thing myself. There was also the oddball PCjr video system from roughly the same time period which, if memory serves, was 16-color CGA or something like that. Piece of crap computer, but the graphics were quite nice looking for that era.

The Radio Shack / Tandy machines of the era had the same thing. Using some of the system ram to allow 320x200x16 colors.

The PCjr video was sort of a ‘low rent’ EGA, supporting the same bit depths as the low resolutions of EGA but with a different addressing scheme.

Radio Shack thought the PCjr was going to be a hot item and added the 16 color modes to their Tandy 1000 PC compatible line, but for some reason made a slight change so software had to be specifically written for the Tandy modes so if a programmer wanted a game to run with the same graphics on PCjr and Tandy the code would require options for both.

Fortunately there’s a rather easy hardware mod for the PCjr that makes it simultaneously compatible with software written for PCjr video and Tandy video.

PCjr and Tandy also use the same sound chip (same as in the TI-99/4A) but again addressed slightly differently, and again there’s a simple hardware mod for the PCjr to do the same trick for its sound.

For a few years there was a rather large upgrading and hacking community for the PCjr, including some companies producing addons for more RAM, a second floppy, hard drives, Soundblaster and VGA upgrades and even somehow adding DMA that provided a big speed improvement. Very popular was swapping in an NEC V20 CPU. But at its core it was still an 8088 and fully tricked out couldn’t run any system newer than Windows 3.0.

There was also the weird video that was “semi EGA” in things like the Amstrad PC1640HD30.. former friend had one… they were an “interesting” system…

Owned a PC1640 at the time and can assure you it was fully EGA compatible, and not worse than any EGA or VGA implementation from back then. Worked with Sierra games, Deluxe Paint, Geoworks (does anyone remember that?) and even Windows 2 (yes, two). Must say Amstrad got that part right for such a cheap machine. A few other things on it were shifty, though. The mouse, for one. And the floppy controller was a disaster, even if on paper it was standard.

I found it’d work with some things and not others – can’t remember what it was, maybe Shufflepuck Cafe that had problems?… and the 360K floppy drives certainly made it fun to load stuff in. To upgrade it to DOS 6.22 I had to copy from an already installed system, sys a: c: off a floppy, then fdisk /mbr as the DOS floppies were 1.2MB :)

Yeah, bit of a goof… it was rather closer to VGA than it was EGA, and VGA seems to have essentially been IBM making a new standard for the PS/2 line that incorporated some of the good ideas pioneered by the makers of “super EGA” cards (such as turning the PGC’s 640×480 resolution from a bit of an oddball also-ran to a quasi-standard for multisync monitors) and made them official, along with a few of their own (full analogue signalling versus EGA’s 64-colour limit borne of the available number of lines in the cable, and further extending the EGA’s own onboard acceleration features). EGA low in 16 colours particularly remained a common video mode for a lot of software, particularly games (indeed, for a period it was pretty much *the* defining PC graphics mode, and you could always spot something that had originated on the platform then been lazily ported to the competing 16-bits), a remarkably long time into the VGA era… possibly because its data was compatible, with just a slight change to the graphics drivers, with everything from the PCjr through to VGA itself.

Also missed that VGA originally could run perfectly happily with only a 9-pin port, and it was as common to find that connection as a DE15 in the early years (9 pins are enough for R,G,B analogue plus their grounds, H/V sync and a sync ground – the separate returns being necessary for a high frequency analogue system, whereas TTL digital is happy with a shared ground).

The 15-pin one just got adopted as the final industry standard because it provided a few extra features (automatic vertical scaling for the modes with different line-counts, automatic monitor type detection, voltage supply for accessories etc) and maybe more importantly avoided some of the confusion that, until then, could be rather too common between the various different standards that used the same 9-pin plug… including at least four different and partly or wholly incompatible video/monitor standards that required you to carefully set the correct dip switch settings for (CGA-alike including PCjr/Tandy 1000, MDA-alike including Hercules, EGA-alikes, and a brace of 25kHz 400-line oddities), serial ports, bus mice, Atari style joysticks… all of which could end up causing or being caused serious damage by a misconnection.

I think the rumors of the abolishment of VGA may have come from the “Vista compliance effect” (horror stories that, to be Vista compliant, the VGA port had to be disabled or severely pixelated if premium content was displayed, like a Blu-ray movie or an HD movie from iTunes or Netflix streaming).

It was a part of HDCP compliance that the analog outputs for both audio and video have to be resolution limited while playing protected content. Video was limited to DVD resolution and audio was limited to 44.1 kHz/16 bit stereo. Full resolution and audio was available through HDCP compliant digital outputs. No HDCP compliant video card and monitor = no picture.

That is still the case.

And then the open source scene kicked in.

Pretty sure the rumours of “analogue sunset” were essentially just bluster; I worked somewhere that had a bunch of cowboys come in to install a load of upgraded AV systems in the conference rooms for which I then had to pick up the pieces on, but one of the things that I never actually had to address was how they cheaped out by hooking up the Blu-Ray players to the full HD projectors using analogue component cables instead of HDMI (which would have needed a CAT5 extender system because of the long cable run). Played just fine in full resolution.

Can’t speak for the computer side of things, as the computers never had BDROMs installed, but they were still routinely making the connections from desktop machine to projector over VGA until I left last year and not suffering any issues, with Windows 7 and even 10.

If you’re going to cap the resolution of HDCP video for output from your computer, it’s surely far easier to just limit the resolution of the decoder rather than forcing the entire machine to run a lower resolution from the video card outwards? It seems like a very backward tactic. What if you only want to run the video in a low resolution taking up part of the screen whilst you do something else in another window? How does it work with multiple monitors? And if it’s decoding in full quality and merely downscaling to fit the low screen resolution, what’s to stop someone taking the decoded output and retargeting it in a way that makes the full resolution available in some other way – sending it to another machine using VLC server, or splitting it across four monitor outputs and joining them back together afterwards?

This is quite silly. VGA is absolutely not dead, nor is it going to be for a long time. I work in the AV industry as an IT professional, and we still use and will continue to use VGA for a HUGE number of solutions for a long, long time to come. MDP to VGA, HDMI to VGA and DVI to VGA converters will remain in all of our switcher kits probably forever. All this sounds like the “death of the desktop”, which also will never ever happen.

Interesting write up aside from the nonsense.

Interesting insight. I work on the other side, that is I give presentations very often. I have been to many places in the US and Europe and there is hardly any problem if your laptop has VGA out. All projectors and TVs used for that purpose support it. People using macbooks need to carry their dongles with them, since usually other ports are not connected (the projector is fixed far above, on the ceiling and only VGA in is connected – no way you are going to plug anything else without a ladder).

But VGA does not support fullHD resolution. Any modern Laptop has a HDMI port, so why are people not connecting HDMI cables to ceiling mounted video projectors?

“But VGA does not support fullHD resolution.”

Really? That means that my 1900×1200 CRT is a lie? :(

Clearly I must have imagined running 2048×1536 CRTs back in the early 2000s. Also apologies, accidentally reported instead of replying :L

There’s often confusion between actual VGA, i.e. 640×480 in 16 colors, and the extensions and improvements to it that use the same analog interface (SVGA and so on).

Just out of curiosity I searched a random online store for Socket 1151 mainboards with d-sub (vga) port. I found over 100 Skylake mainboards with VGA port, so VGA does not seem dead yet :)

I believe that socket was available pre-skylake though, for at least a few controllers

You’re thinking of LGA1150.

LGA1156: Nehalem

LGA1155: Sandy Bridge, Ivy Bridge

LGA1150: Haswell, Broadwell

LGA1151: Skylake, presumably Kaby Lake and Cannonlake

“When the original IBM PC was released, video was not available on the most bare-bones box. Video was extra, and IBM offered two options.”

In what way is that “not available”?

By that same standard, video wasn’t available on many other computers being sold at that time either, other than the microprocessor-based “personal computers”.

Perhaps surprisingly, this was correct, though somewhat crudely stated.

If you bought an IBM PC system unit (for the price of a small car), the video adapter was not included. Neither was a monitor or even a keyboard. You had to buy those separately and install them yourself. Of course, your Authorized IBM Dealer would be happy to help you put together an order for a complete working machine, and they would put it together for you and deliver it to your business.

This was marketed as flexibility (you could choose between the monochrome or CGA adapter) but of course it was also a way to keep those Authorized Dealers happy and in business. Not to mention a way to allow separate engineering teams to work on different projects at the same time, and finish the IBM PC design in a year.

English isn’t your language eh?…you can’t take a part of a sentence, and question its context.

He didn’t say it wasn’t available, “video was not available on the most bare-bones box.”….which makes this statement a specific case, and is also 100% true. especially considering that the Original IBM PC, and PC-XT were somewhere in the 2000 plus range, and the CGA graphics was probably about 800-900 dollars (guesstimating from my memory), which also meant most purchasers at the time skipped the video card until later when there were more options.

How were the machines usable without video boards?

skipped reading the article huh?….

The Monochrome Display Adapter (MDA) could display 80 columns and 25 lines of high resolution text. This was not a graphic display; the MDA could only display the 127 standard ASCII characters or another 127 additional characters that are still found in the ‘special character’ selection of just about every text editor. The hearts, diamonds, clubs, and spades, ♥ ♦ ♣ ♠, were especially useful when building a blackjack game for DOS.

and in case you don’t understand…this is a CHARACTER display, VIDEO and CHARACTER displays are not the same! Character displays generally only allow you to display characters, which were normally stored in static ROMs (sometimes RAM) but were always predefined. As things progressed, we had the ability to load new character sets into RAM/ROM. This is also the way most printers were able to use different fonts. There was limited access to build objects from pixels at that time, except by using specific hardware designed for that, like the old Targa (Targus?) boards etc.

Actually character-only displays were still video displays. It’s the fact that the output is displayed on a CRT, video hardware, that makes it a video display.

TARGA! i remember that!

i had an ILLUMINATOR board with TARGA modes.

i got it for free since it was so outdated at the time, by then, people were using miniature chips on PCI busses to do multiple VGA and NTSC in and out

i miss it so much, it was one of the most densely packed through-hole boards i’d seen

too bad it was so old the only software for it i could find was for config and testing.

i thought it was sooo cool to use it as a video converter from NTSC (or pal) to VGA

and even had a NTSC out for previewing or something on a 3rd screen

unfortunately it used so many IRQs, DMAs, and memory addresses that you really could NOT simultaneously have a ton of expansion cards (soundcard, 2ndIDE, nonIDEcdrom etc.). the software talked of multiple cards in one system… must have been a config nightmare!

Skipped thinking eh? Commenting without knowing is your thing huh?

MDA is a video adapter. It outputs video signals. Claiming otherwise is ludicrous.

BTW while there was software that supported MS-DOS running over serial terminals (hooking the video card interrupt), it was extra and not included in the original PC, so no, the PC wasn’t usable without a video card. But better to spew shit than knowing shit, right?

“[The 6845 and 6847] had a maximum resolution of 256 by 192 pixels”.

I have to admit I’m not that familiar with the 6847 (color video generator) but the 6845 was intended as a TEXT video generator helper chip. Basically it would generate the timing pulses for synchronization, and the addresses into a video buffer and a character generator ROM. The hardware would have to pull the row of pixels from the character generator ROM, based on the address coming out of the 6845, and it would have to convert it to serial. If you wanted graphics (especially in color), you had to do some electronic trickery. If your trickery was smart enough, you could do color text or graphics.

The IBM PC Color/Graphics Adapter (CGA) generated 320×200 and 640×200 graphics using a 6845. The IBM EGA adapter generated 640×350 using a 6845. The Hercules graphics adapter generated 720×348 using a 6845. They all had some smart circuitry that enhanced the 6845 so that this was possible.

The 6845 was an awesome chip, and in most cases, the limitations (e.g. low number of colors or low number of pixels) were due to a limited amount of memory.

And VGA is not dead. It’s only a flesh wound.

Pedantically: The 6845 supports a maximum resolution of 256 horizontal, 128 vertical, and 16384 total character cells. Each character cell could be up to 32 scanlines high. Character cells could be clocked through at a maximum rate of 2 or 2.5MHz (depending on datasheet, I think). This character cell constraint is why the CGA & Hercules graphics modes are so goofy.

The EGA doesn’t use a 6845—it actually supports true graphic modes (i.e. “give me 350 scanlines of data”, not the Hercules’s “give me 87 characters high, each 4 scanlines tall”).
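To make the character cell constraint concrete, here is a rough sketch of how a pixel coordinate maps to a framebuffer byte in the CGA and Hercules graphics modes. It assumes the commonly documented layouts (8 KB banks, one bank per scanline within a character row, 80 bytes per line on CGA and 90 on Hercules); the function names are made up for illustration.

```python
# Sketch only: byte offset of pixel (x, y) in the interleaved CGA and
# Hercules graphics framebuffers. Function names are illustrative.

BANK_SIZE = 0x2000  # assumed: each scanline-within-a-character-row has its own 8 KB bank


def cga_320x200_offset(x: int, y: int) -> int:
    """CGA 320x200, 2 bits per pixel: 100 character rows of 2 scanlines each."""
    scanline_in_row = y % 2   # selects one of 2 banks
    char_row = y // 2         # 0..99
    return scanline_in_row * BANK_SIZE + char_row * 80 + x // 4


def hercules_720x348_offset(x: int, y: int) -> int:
    """Hercules 720x348, 1 bit per pixel: 87 character rows of 4 scanlines each."""
    scanline_in_row = y % 4   # selects one of 4 banks
    char_row = y // 4         # 0..86
    return scanline_in_row * BANK_SIZE + char_row * 90 + x // 8


if __name__ == "__main__":
    # Consecutive scanlines land in different banks, which is the "goofy" part.
    for y in range(5):
        print(y, hex(cga_320x200_offset(0, y)), hex(hercules_720x348_offset(0, y)))
```

The `y % n` term is the 6845's row-scanline counter showing through: the chip only ever counts character rows, so software has to undo the interleave on every plot.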

I still want to get my hands on an old MDA/Hercules monitor for an hour or two so that I can build the support hardware and drive it from a VGA port.

Oh, right, and I completely failed to mention: there was absolutely NO CONSTRAINT on the number of horizontal pixels (or bytes!) per character cell. Powers of two were just easier.

MDA used 9 horizontal pixels per character cell. CGA used 8 or 4 depending on video mode.

You want to build an analog to TTL video adapter?

Just to see it, really. I know I could drive an IBM 5151 from a VGA port, just by setting up the correct modeline and discretizing analog to TTL, but it’s another thing to have done it and take pictures. (And measure aspect ratio and phosphor halflife)

One thing I recall were the semi-religious debates in Boca when the VGA design was being finalised. The Monochrome adapter had a couple of bugs in it, one relating to the 6845 CRT controller. The cursor signal was also generated by the 6845, but unfortunately they neglected to delay it so that it would match the timing of the character being displayed. This meant that it appeared on the screen two character positions to the left of where it should be .. no biggie for the Monochrome adapter, people just coded around it. Now, when designing the VGA, do you emulate this bug in the VGA’s simulated 6845 registers? Do you fix it? Do you find room for a register bit that comes up in ‘compatibility mode’ with the bug in effect, and reset the bit if you want the VGA to put the cursor under the correct character?

There was a palette bug with the EGA functionality as well, can’t recall what it was. VGA had two palettes – the 16 -> 64 color internal logic, and then an external Inmos palette/DAC that took the digital outputs from the VGA and drove the rgb signals to the tube.

On CGA, why did they give it 16 colors then hobble it with a few hideous 4 color palettes? https://en.wikipedia.org/wiki/Color_Graphics_Adapter#Standard_graphics_modes

Then IBM twisted the knife by only allowing black to be swapped for one of the 16 colors, which was rarely, if ever, done because you nearly always need black in graphics.

16 color graphics but the only way you can ever see them all is via tricky software hacks that only work on a 4.77 MHz 8088 computer with a CGA adapter that’s 100% compatible (including various bugs) with the IBM original.

Or run ANSI graphics games like KROZ and ZZT, or software that does the 160×100 hack of text mode. That was made an official mode in the PCjr and the original King’s Quest was contracted by IBM specifically to be a high profile use of that mode.

Almost no one used black as a color. For one, it was a waste of precious palette, but also not all CGA implementations were the same and some would bail if you tried poking new values outside the defined palette.

You would see this in shifty programs that didn’t clean up, where the new palette appeared stencilled on the old one and the black areas were clearly transparent.

Ah yes, the CGA 160×100 hack!

CGA had a 16-colour 80-column text mode, right? And it also had half-bar characters, vertically aligned character half-filled with ink colour, other half filled with background colour. So by setting the back and foreground colours appropriately, you have 160 pixels. In 25-line mode, that’s 160×25, not great.

But there was a timing hack where you could tell the controller to start a new text line every 2 scanlines, instead of 8. So you’d get 100 lines of text, with the half-bar character adding up to 160×100. Great!

You didn’t have to use the half-bars of course, some games used other characters, anything that has something useful in the top 2 rows of pixels, you could press into service as a bit of graphics.
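The half-bar packing described above can be sketched roughly like this. It assumes the usual choice of character 0xDE (the right half block in code page 437), that blink has been turned off so all 16 background colours are usable, and an 80×100 text buffer of character/attribute byte pairs; the helper names are invented for the example.

```python
# Sketch only: plotting a 16-colour pixel in the 160x100 text-mode hack.
# Assumes char 0xDE (right half block, CP437) and blink disabled.

HALF_BAR_RIGHT = 0xDE
COLUMNS = 80   # text columns, two pixels per cell -> 160 pixels
ROWS = 100     # text rows after the 2-scanline timing hack


def cell_offset(x: int, y: int) -> int:
    """Offset of the character byte for pixel (x, y); its attribute is at +1."""
    return (y * COLUMNS + x // 2) * 2


def set_pixel(buf: bytearray, x: int, y: int, colour: int) -> None:
    """Set one pixel by rewriting half of the cell's attribute byte."""
    if not (0 <= x < COLUMNS * 2 and 0 <= y < ROWS and 0 <= colour < 16):
        raise ValueError("pixel or colour out of range")
    off = cell_offset(x, y)
    buf[off] = HALF_BAR_RIGHT
    attr = buf[off + 1]
    if x % 2 == 0:
        # Left half of the cell shows the background colour (high nibble).
        attr = (attr & 0x0F) | (colour << 4)
    else:
        # Right half of the cell shows the foreground colour (low nibble).
        attr = (attr & 0xF0) | colour
    buf[off + 1] = attr


if __name__ == "__main__":
    screen = bytearray(COLUMNS * ROWS * 2)  # 16000 bytes, fits in CGA's 16 KB
    set_pixel(screen, 0, 0, 4)    # red on the left
    set_pixel(screen, 1, 0, 15)   # white on the right
    print(hex(screen[0]), hex(screen[1]))
```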

Something similar was used in the ZX81, which also only had a text-mode (no colour at all, no way ever) display. By changing the line of text per scanline, you could fill each 8×1 cell with any pattern of bits you had available. The patterns of course had to be ones that occurred in the character set. But there was a wide selection. This hi-res mode was claimed to be impossible by Sir Clive Sinclair, and the people who designed the machine! Never underestimate the power of hack! There were even art programs available, that would subtly nudge your pixels around, if you tried to use an arrangement that wasn’t possible. Overall, there were some pretty good-looking hi-res games.

Finally, one more CGA hack, one exemplified in King’s Quest. The circuit IBM used to construct a composite signal from CGA’s internal RGBI wasn’t very good. So for people using composite monitors, software could use tricks to get more colours from the 4-colour 320×200 mode. By placing pixels of a particular colour next to each other, in patterns, you could get extra colours.

The Apple ][‘s graphics were pretty much based on this same hack, on purpose. Other computers like the Atari 8-bit series could do the same. It helped that NTSC was such a crappy system, but I’ve seen an Atari do it on a PAL TV. The colours on a PAL Atari could vary, so you’d have a quick config routine at the beginning just to check which patterns showed up as what colour. Far as I know no games used it, just demos.

https://en.wikipedia.org/wiki/Composite_artifact_colors

“Artifacting” is what it was often called. On CGA in particular it could make a very big difference. It’s also why, if you ever play old CGA games, they often have those weird stripes, Ultima II being the big example. On a composite monitor, they weren’t stripes.

Unrelated to VGA, but can you comment on http://www.os2museum.com/wp/isa-bus-8514a/

I was sent out to the Boca Lab in Florida to help represent the Hursley Lab in England. They were developing the next family of video adapters, and one of its challenges was to hook in to the new PS/2 bus being developed. The Microchannel 8514/A adapter was the primary target, I would say, but there was also a development of a rather strange, long and thin XT bus adapter * with a VGA on it, that accepted a daughter board onto multiple connectors. The daughter board had the 8514/A chipset along with a megabyte of VRAM. 8514/A was essentially a hardware engine: as well as doing the 1024 x 768 8-bit color, its hardware could do things like a bitBLT, so fonts and so on could be held on the adapter, off-screen, and could be used to quickly draw on the display RAM.

There was a separate team in Hursley who were developing a different PS/2 card that eventually became the IBM Image Adapter/A .. plugged into PS/2 as well, but had its own RISC engine on board. It could also handle much larger screens than 8514/A, along with optional daughter boards for things like scanners. However the 8514/A work led on to XGA, which found its way on to motherboards, AT bus adapters, 16-bit PS/2 microchannel adapters and 32-bit Microchannel adapters for RS/6000 as an entry level 2D adapter for AIX.

*(codename Brecon, if I recall. Actually, now I have written that, the long VGA card with the connectors on was Brecon-B for base, then Brecon-D was the daughter board with the 8514 chipset, and Brecon-R was the PS/2 version. The “R” referred to the internal code name for the PS/2 family, which was “Roundup”)

The Wang PC used the 6845 to generate an 800×300 video signal: 80×25 text mode (using the base card) and 800×300 pixels monochrome via an optional expansion card. The cool thing was that one could mix graphics and text, as both boards worked in parallel, mixing the output to one screen.

I wonder how that worked. Just an OR gate for the 2 sources of pixels? Would work in mono, you might have to keep the sync signals out of the way though. Also both boards in sync.

You’re sure it didn’t just use the graphical display, and simply plot the text characters as pixels? You could OR the pixel outputs of the 2 pixel sources together, like I say, the problem would be the sync. Certainly not un-solvable, but maybe easier just to draw the text straight into the graphics RAM. Perhaps using the character ROM from the text card, if the ROM was available in the processor’s memory space.

If you have a TV capable of component input, you’ll still have VGA as well, since the RGB signals are cross-compatible. Both even support sync on green.

Isn’t component input on TVs usually “luminance component” (YPbPr)? I believe that is thoroughly incompatible with component RGB input (which is more often seen on projectors, AFAIK).

Though I think many TVs do still have D-sub VGA connectors.

Yes it is, but you had RGB on most SCART inputs.

BUT this is only PAL (or NTSC) timing, with a line frequency of only 15.625 kHz (or similar for NTSC): flickering, interlaced pictures with low frame rate and resolution.

The original VGA didn’t support sync on green though. I think the PGC did ..

The Apple 2 threw zero CPU time at its display. The raster is done with discrete logic, and it happens in tandem with the CPU, which isn’t interrupted for video DMA, by some clever abuse of the 6502 timing.

Exactly, the DRAM refresh circuit was the video generator, and the video generator was the DRAM refresh circuit. The CPU wasted Zero cycles in outputting the signal. However, the CPU did all the heavy lifting for drawing into memory. Woz’s video memory layout (which was done for the DRAM refresh) required a bit of extra math on every raster operation to generate the correct memory address to write. And sprites were done 100% by the cpu, there were no helper chips. There was an unpopular sprite graphics card that was only supported by a couple of programs, most of which came with the card.
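For anyone who hasn’t run into it, the "bit of extra math" looks something like the sketch below. It assumes the widely documented layout of hi-res page 1 (base address 0x2000, 192 rows of 40 bytes); the function name is just for illustration.

```python
# Sketch only: start address of a hi-res scanline on the Apple II,
# assuming the commonly documented page 1 layout at 0x2000.

def hires_row_address(y: int, page_base: int = 0x2000) -> int:
    """Return the address of the first byte of hi-res row y (0..191)."""
    if not 0 <= y < 192:
        raise ValueError("row out of range")
    return (
        page_base
        + (y & 7) * 0x400          # scanline within an 8-line character row
        + ((y >> 3) & 7) * 0x80    # character row within a group of 8 rows
        + (y >> 6) * 0x28          # which third of the screen (40 bytes apart)
    )


if __name__ == "__main__":
    # Adjacent rows on screen are far apart in memory.
    for y in (0, 1, 8, 64, 191):
        print(y, hex(hires_row_address(y)))
```

Real programs of the era generally avoided doing this arithmetic per pixel and used a precomputed lookup table of row addresses instead.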

I found a motherboard on Newegg that has a VGA out:

http://www.newegg.com/Product/Product.aspx?Item=N82E16813157491

I’m sure there is some other new motherboard with VGA out *somewhere*, but that one is out of stock and the fact that it is branded as “Windows 8.1 Ready” means that it’s likely not current/updated.

Most video cards include VGA on the DVI-I connector, so the signal’s not dead yet, though the connector may be.

You can’t stop the signal.

And all Displayport connectors support VGA.

“The first VGA adapters would have 256kB of video RAM, 16 and 256-color palettes, and a maximum resolution of 800×600”

The VGA specification may have optionally accommodated 800×600, but the first VGA cards maxed out at 640×480. 800×600 became available on SVGA cards, so we consider this to be an SVGA resolution.

For 800 x 600, though there was enough RAM to just about fit it in, you’d have needed another crystal oscillator. These days you just program the clock signal to your chosen frequency. Back then, there were discrete oscillators on the board near the VGA: 25.175 MHz for 640 (or 320) modes, 28.322 MHz for 720 modes. For 800 x 600, you’d also have needed a 36 MHz oscillator that could be gated in. I’m not even sure the original VGA could have managed the timing.
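As a back-of-the-envelope check on why the dot clock matters, this sketch derives line and frame rates from a dot clock and the total (visible plus blanking) raster size. The raster totals are not from this thread: 800×525 and 900×449 are the commonly quoted VGA figures, and 1024×625 is the later VESA 800×600 56 Hz timing, so treat them as assumptions.

```python
# Sketch only: line and frame rate from dot clock and total raster size.
# The totals below include blanking and are assumed, commonly quoted values.

def scan_rates(dot_clock_hz: float, total_pixels_per_line: int, total_lines: int):
    """Return (line_rate_hz, frame_rate_hz) for a given dot clock and raster."""
    line_rate = dot_clock_hz / total_pixels_per_line
    frame_rate = line_rate / total_lines
    return line_rate, frame_rate


MODES = {
    "640x480 graphics": (25.175e6, 800, 525),
    "720x400 text": (28.322e6, 900, 449),
    "800x600 (VESA 56 Hz)": (36.0e6, 1024, 625),
}

if __name__ == "__main__":
    for name, (clock, h_total, v_total) in MODES.items():
        line_rate, frame_rate = scan_rates(clock, h_total, v_total)
        print(f"{name}: {line_rate / 1e3:.3f} kHz line rate, {frame_rate:.2f} Hz refresh")
```

Both original VGA clocks work out to roughly the same line rate, which is what a fixed-frequency VGA monitor expected; the 36 MHz mode needs a noticeably higher line rate, so a new oscillator alone would not have been enough without a monitor that could follow it.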

There is also 640x400x8-bit which was also possible with the 256k video RAM but IBM decided not to design the VGA to support it.

All of this careful homage to VGA and you forget about EGA and PGA? Yep, in 1986 there was PGA, the predecessor to VGA. The PGA monitors were actually usable on a VGA card. Go look up PGA.

Don’t forget EEGA. It stood for Enhanced Enhanced Graphics Adapter. It was only around for a few months before the VGA came out. The resolution was 640×480, the same as VGA. I do not know what the difference was.

EGA did 640×350 in 16 colors max. It had a digital output compatible with CGA monitors except for the highest resolution, for which you needed an EGA monitor, which was just a souped-up CGA monitor. That was good for games, as you could now have 16 colors instead of 4 colors in 320×200, or 16 colors in 640×200 instead of just monochrome. VGA uses an analog monitor, has a higher top resolution of 640×480, and could display 256 colors in the ever popular game mode of 320×200.

Realizing some of you may have never heard of eEGA (enhanced EGA), here are a few links:

The New York Times tries to sort out the graphics alphabet, 5/29/1988:

http://www.nytimes.com/1988/05/29/business/the-executive-computer-sorting-out-the-graphics-alphabet.html

An article on Enhanced EGA in March 1988:

https://archive.org/stream/byte-magazine-1988-03/byte-magazine-1988-03_djvu.txt

Reflecting on the magazine issue 20 years later, in March 2008:

http://techreport.com/blog/14452/it-was-twenty-years-ago-today

Color Graphics Boards in October 1986, and predicting the future:

https://books.google.com/books?id=mzwEAAAAMBAJ&pg=PA37

The Professional Graphics Controller (PGC) predated VGA by a couple of years and displayed 640×480. I think the dot rate of 25.175MHz came from PGC .. If he’s still with us, Keith Duke would probably be able to confirm. I had the chance to work briefly with the team in Boca designing the VGA, and I have deep respect for the leap that they took.

“This year is, however, the year that VGA finally dies.” – Really? According to whom, you? I just bought a mobo last week with a VGA connector and it was not hard to find. In fact, while I was shopping around, I saw all kinds. Here are some connectors that I would love to see die: Display Port (why does this exist?) and HDMI (integrated sound and DRM – no thanks). My favorite is DVI.

“Here are some connectors that I would love to see die: Display Port (why does this exist?) and HDMI (integrated sound and DRM – no thanks). My favorite is DVI.”

display port exists to allow higher refresh rates at larger resolutions than hdmi 1.x could allow for and also dvi tops out at 2560×1600. basically hdmi and displayport are there for those with 4k and 8k monitors or those with fast refresh rate monitors above 60Hz

Good. Have 3 outputs on my motherboard but can only use 2 of them because 1 is a VGA out, which I don’t have on my monitors. If they really want it they should use a dual mode DVI socket alongside the HDMI and display ports and let people who have antique monitors go buy the adaptor.

“There is a reason designers and digital artists prefer Macs, and for a time in the late 80s and early 90s, it was the graphics capabilities that made it the logical choice.”

No, there -was- a reason and that reason was being too broke to afford an SGI box. As time went on, any benefit of Apple hardware went away and now Apple hardware is actually detrimental to video professionals due to the lack of expansion options and the fact that there is no actual difference between a modern PC and an Apple computer other than Steve Jobs’s / Tim Cook’s Iron Fist type control over the platform. The only reason you see Apple hardware in the video industry is purely market momentum and lock-in. The same reason that people still buy Xerox copiers and IBM Mainframes.

I think you’ll find the main reason people buy Macs and Mainframes is the same reason – they want to run a particular bit of software. My weaknesses include Omnigraffle, iPhoto and Pixelmator.

And that would be lock-in.

Oh, oops. For some reason I thought of lock-in as market dominance, rather than customers having a preference to buy it because of its inherent properties.

“Display Port (why does this exist?)”

So that self righteous man bun wearing lumber sexual Hipster fan boys can buy an apple specific monitor for about 3x the cost of the equivalent sized Vga monitor.

Or, simply because Steve Jobs was an a$$hole.

wow so much information in 2 sentences.

display port was created to address the slow adoption of standards from the hdmi consortium. namely 4k and 120hz or greater refresh rates. when display port was started hdmi couldn’t go beyond 2560×1600 and at that resolution couldn’t handle over 60Hz refresh rates. it was basically just DVI with audio tacked on. HDCP is just a software layer that can be enforced at both ends of the cable. it can also be enforced on DVI-D or for that matter any future digital display connector standard.

“This year is, however, the year that VGA finally dies. After 30 years, after being depreciated by several technologies, and after it became easy to put a VGA output on everything from an eight-pin microcontroller to a Raspberry Pi, VGA has died. ”

You mean “DEPRECATED”, not “DEPRECIATED”.

You can take my word for it, or join the battle here: http://stackoverflow.com/questions/9208091/the-difference-between-deprecated-depreciated-and-obsolete

Need to do better research before posting false statements. You can still get motherboards from Newegg with VGA ports. Board manufacturers have changed the color of the VGA ports from blue to black. Example: http://www.newegg.com/Product/Product.aspx?Item=N82E16813130770 and http://www.newegg.com/Product/Product.aspx?Item=N82E16813132512

Okay, at least I am glad you called it a DE-15 and NOT a DB-15.

A 25 pin LPT sized shell with space for only 15 pins would be rather weird. :) Now where can I find those 13W3 connectors that use the 3 large holes for gas or fluid connections?

Nice idea, but for anything except air that should be self sealing on disconnection at both sides.

Someone needs to hack one of these into being a computer. https://en.wikipedia.org/wiki/Professional_Graphics_Controller It has an 8088 CPU, why should it need a PC to plug into?

Or how about one of those weird NuBus network adapters that have a Motorola 68000 CPU? That’s what the A/ROSE extension is for. Apple / Realtime Operating System Environment. Can one of those network cards be hacked to run other software? Give it a side job to do in parallel with an old Mac, like the ThunderBoard 68040 cards could. They weren’t a CPU upgrade, they were a single board computer capable of running Mac programs, and more than one could be used in the same Mac.

Small timeline niggle; LCDs began to be affordable in the 2000/2001 timeframe. You could get cheap LCDs by 2005. In 2001 I begged for a 1024×768 LCD to go with my new college computer, and fortunately got it. Strangely, it had a VGA port and nothing else (ie DVI). I gave it to a friend a few years ago, and to my knowledge it is still working!

If memory serves, it was an NEC model that was rebranded as Dell. It was also used as the main prop display in Star Trek: Enterprise :)

Thirty years? That’s not old. In the words of Selma Diamond, “I have pantyhose older than that.”

It seems Dell was not informed of VGA’s demise. When my dad’s old CRT died last year, I ordered him a new Dell monitor and a DVI video card. I called Dell and asked if this monitor came with a DVI cable and they said yes. But when I unpacked the monitor, it only had a VGA cable!

That’s interesting, almost every motherboard we have in stock includes VGA, DVI-D, and HDMI…

Benchoff is correct, there are X-rays coming towards me from the CRT; I can see them :) Predicting the exact date VGA will no longer be available would be as much nonsense as predicting the return of a very popular God figure. How long new VGA equipment will be available for special applications is really unpredictable. There is little doubt VGA will disappear eventually. As long as consumer video equipment remains digital, so will computer displays, because of the economies of parts interchangeability. I’m keeping a CRT VGA monitor for use in the shop.

so should I pack some vga cables into the travelling hackaday box?

Server boards often still have VGA, because it’s the de-facto standard monitor on Ye Olde Server Room Crash-Cart, and servers don’t need fancy QHD or whatever. Often (and now everywhere, since Skylake), it’s integrated into the BMC chipset rather than the CPU.

Very interesting article, but I only regret one thing: like in most American articles, the Atari and Amiga 16/32bit computers (Amiga 500, Atari STF/STE…) are notably absent from “history”, maybe because they did not have these, the way we did in Europe…

Most PC from this era were put to shame by any one of those computers.

68000 family was so much better than 8088/8086. The other factoid I learned when I was in Boca, was that IBM wanted to use 68000, but it only came in a 16-bit bus variant at the time, which was a massive 64-pin DIL Ceramic package. It was so much more expensive just because of the package than the 8088 was, in its plastic 40-pin DIL. Motorola promised IBM the 68008 would be coming, but the pressure was on to produce the first PC, so they went for 8088.

That cost the industry years of delay in software development. Just think how much quicker we would have got to 32-bit computing. Just think, we may never have made Microsoft the company it is. We probably would never have spent billions developing OS/2; and we might all have seen Unix on the desktop sooner than we did.

Just bought a brand new LCD monitor, and it came with only a single 15-pin VGA cable despite also supporting DVI and HDMI. It’s an HP 25vx, has decent specs for the price. Damnable glossy bathroom mirror-grade screen coverings aside, the low point was the exclusion of any relevant cable…

Perhaps the article is (justifiable) wishful thinking rather than fact. I certainly wouldn’t be sad to see it go. Have loved pixel-perfect displays, ever since the days of monochrome laptop LCDs, in preference to blurry VGA no matter their response speed and brightness.

YES

In what way is VGA “blurry”? Have you been running desktop LCDs in non-native resolutions or without properly auto-syncing them so that they’re sampling each incoming pixel in its actual centre?

To actually make VGA blurry you have to use a very poor quality, extra long cable with a chipset that can’t output enough power to overcome the resistance and more importantly inductance of said cable. But if you used a cable of that poor quality with a digital link it’d have trouble signalling at high enough rate to even *match* what analogue VGA could manage over the same physical wire.

(and particularly, versus early LCDs? Are we talking the 640×480 panels in mid 90s laptops, of exactly the same resolution as regular VGA but not as able to scale up 400 and 350 line resolutions as gracefully, or show 720-pixel-wide modes properly at all, particularly the nasty old dual- or even single-scan STN passive-matrix types which had terrible ghosting effects across both neighbouring pixels *and rows*, often across the entire screen (or one half of it for dualscans) which had a much worse effect on clarity than even the world’s worst analogue VGA cable? Or going back a bit further to the 640×400 panels in those offering only CGA and EGA (and sometimes the custom Tandy etc) modes? Or maybe a little more recently, the 800×600 active matrix displays that were the first decent, full colour upgrades from those older laptop screens, but couldn’t upscale *any* typical VGA mode, especially text modes, to fill the entire panel with any kind of panache unless their video controller happened to include specific 800×600 textmodes, instead making you choose between either 1:1 pixel sizing with large letterboxing all around the active area or really nasty nearest-neighbour upscaling that made even the blurriest, coarsest-dot-pitch colour CRT seem sophisticated and upmarket? … FWIW, you should maybe sample some actual classic monochrome CRTs, which don’t have any of that dotmask/aperture grille triad-pixelation and curiously coarse blurriness, but instead can be every bit as crisp as a contemporary LCD… just with infinite capacity to adjust to different resolutions.)

All those old monitors going to waste (sniff sniff)…

I saw three down at the charity shop, cheapest was £2.50. LOL!

All VGA, people evidently don’t realize that the Pi Zero will work with a handful of components notably an LM1881 and resistors.

I’m a tightwad, so keep that in mind as i explain! The desktop PC i am on right now has VGA out, HDMI out on the mobo and VGA and DVI out on the video card. I have 2 LCD monitors, one is VGA only and it is attached to the DVI port with an adapter i already had, the other is VGA/DVI so its hooked to the VGA port. My LCD tv has HDMI x 3 and VGA x 1, the pc i have hooked to that has HDMI and VGA. I went with VGA for that. I am not operating optimally, but i didn’t have to buy any new cables, either.

even the newest DVI output nvidia I got you just need an adapter if you want VGA out. It still does it. It’s even in the box.

who uses VGA today? apart from, yeah, in the office to plug the old video projector into your laptop and show your nice excel or powerpoint

I am posting this in 2025 to point out that I can go to a supermarket in Verbania, Italy and they still have VGA adapters in the electronics section.