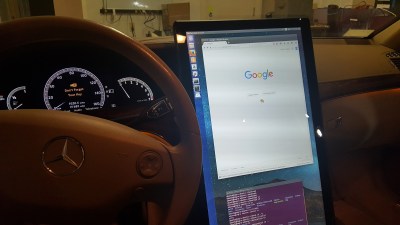

George [Geohot] Hotz has thrown in the towel on his “comma one” self-driving car project. According to [Geohot]’s Twitter stream, the reason is a letter from the US National Highway Traffic Safety Administration (NHTSA), which sent him what basically amounts to a warning to not release self-driving software that might endanger people’s lives.

This comes a week after a post on comma.ai’s blog changed focus from a “self-driving car” to an “advanced driver assistance system”, presumably to get around legal requirements. Apparently, that wasn’t good enough for the NHTSA.

When Robot Cars Kill, Who Gets Sued?

On one hand, we’re sorry to see the system go out like that. The idea of a quick-and-dirty, affordable, crowdsourced driving aid speaks to our hacker heart. But on the other, especially in light of the recent Tesla crash, we’re probably a little bit glad to not have these things on the road. They were not (yet) rigorously tested, and were originally oversold in their capabilities, as last week’s change of focus demonstrated.

Comma.ai’s downgrade to a driver-assistance system really raises the Tesla question. Tesla’s autopilot is also just an “assistance” system, and the driver is supposed to retain full control of the car at all times. But we all know that it’s good enough that people, famously, let the car take over. And in one case, this has led to death.

Right now, Tesla is hiding behind the same fiction that the NHTSA didn’t buy with comma.ai: that an autopilot add-on won’t lull the driver into overconfidence. The deadly Tesla accident proved how flimsy that fiction is. And so far, there’s only been one person injured by Tesla’s tech, and his family hasn’t sued. But we wouldn’t be willing to bet against a jury concluding that Tesla’s marketing of the “autopilot” contributed to the accident. (We’re hackers, not lawyers.)

Should We Take a Step Back? Or a Leap Forward?

Stepping away from the law, is making people inattentive at the wheel, with a legal wink-and-a-nod that you’re not doing so, morally acceptable? When many states and countries ban talking on a cell phone in the car, how is it legal to market a device that facilitates taking your hands off the steering wheel entirely? Or is this not all that much different from cruise control?

What Tesla is doing, and [Geohot] was proposing, puts a beta version of a driverless car on the road. On one hand, that’s absolutely what’s needed to push the technology forward. If you’re trying to train a neural network to drive, more data, under all sorts of conditions, is exactly what you need. Tesla uses this data to assess and improve its system all the time. Shutting them down would certainly set back the progress toward actually driverless cars. But is it fair to use the general public as opt-in Guinea pigs for their testing? And how fair is it for the NHTSA to discourage other companies from entering the field?

We’re at a very awkward adolescence of driverless car technology. And like our own adolescence, when we’re through it, it’s going to appear a miracle that we survived some of the stunts we pulled. But the metaphor breaks down with driverless cars — we can also simply wait until the systems are proven safe enough to take full control before we allow them on the streets. The current halfway state, where an autopilot system may lull the driver into a false sense of security, strikes me as particularly dangerous.

So how do we go forward? Do we let every small startup that wants to build a driverless car participate, in the hope that it gets us through the adolescent phase faster? Or do we clamp down on innovation, only letting the technology on the road once it’s proven to be safe? We’d love to hear your arguments in the comment section.

It’s not safe until it does everything. A human is going to be off in cloud cuckoo land while autopilot is on and won’t “page back in” fully for a couple of seconds when the bleeper goes off, so basically you’re just giving them 2 seconds to become aware of their impending death. It’s unpossible to offload the majority of driving tasks and still expect the human to be paying enough attention, with full situational awareness, to take over instantly. It works in testing because of the element of danger (“it could explode any moment”) and the excitement of development. But when you don’t have that relationship with it, it’s a washing machine. How exciting are those? Hmmm, wonder what I can make for dinner.

At this moment, the level of complexity of any “autopilot” is more or less such that you have to squeeze the wheel white-knuckled, waiting for it to try to kill you. Anything less is a guarantee that it will catch you off guard.

There’s an anecdote that one of the Google cars mistook the side of a truck for a canyon wall and “understood” itself to be one lane-width left from its actual position, and promptly tried to drive into the canyon by steering right.

Basically this…

http://media.advisorone.com/advisorone/article/2012/08/16/IA_0912_Herbers_Chart.png

A smart manager would keep their workers on the left slope of that curve. If you try to keep people at the peak all the time, any temporary increase in demand will put them right into distress and cause a spiraling breakdown in productivity because they start to lag behind in their work and that causes them more stress.

Right, the point in relation to this though, was if autopilot puts you way down on the left side there’s not very much time on handover for hormones to work and put you at peak performance/arousal.

Another kettle of worms is that different people have their curve apex at different positions along the stress/stimulation axis. It might be the case that people who you think “can barely drive” would perform better in an emergency autopilot handoff than “superb drivers.” From this you should also see that for people who are driving at the peak of the curve in normal busy traffic at normal speeds, a phone call could shove them over into the task-saturated, overstimulated region and make them unsafe. However, for certain other folks who are riding on the left side in the same conditions, a phone call might not detract from their driving at all.

If just driving along normally puts you right on the peak stress performance, then any situation that is more demanding puts you into distress.

That’s not what driving should be like.

Newer drivers, and drivers in an unfamiliar city, are going to be there though.

Why presume that a “smart manager” can’t act as the control, using the feedbacks you described? I don’t think a 1-speed open loop control is a “smart manager” at all. If your example was a dumb manager, sure, I’d agree.

Sure, but what’s lost in productivity can be gained on the life insurance and critical illness policies.

It’s not safe until he can buy enough support at the NHTSA.

Yah, or until GM-Ford-Chrysler write/buy the high barrier to entry rules only they can follow… but leave a loophole for the sneaky.

this article was poorly worded. check what the NHTSA actually sent to him.

http://jalopnik.com/the-feds-were-right-to-question-the-safety-of-the-999-1788330916

and here’s the full letter he was sent

https://www.scribd.com/document/329218929/2016-10-27-Special-Order-Directed-to-Comma-ai

They didn’t say he couldn’t release it at all, only that they had safety concerns that needed to be addressed, and firmly suggested he hold off on releasing it until he answered their questions. It was geohot who went off the deep end and decided to take his ball and bat home instead of answering what should have been very simple questions for any company with a vested interest in autonomous vehicle technology.

I can see the choice he made though as “Do I want to keep doing what I do, or do I want to make this my whole life for the next 5 years?”

Well, that is the _point_. You better make ensuring safety your (and that of many other engineers) absolute priority if you build technology that can (and, assuming enough market penetration, will) kill people.

Chip design, for example, is 10% design and 90% validation. And that’s only killing money, not people. The difficulty of a project like this is not “let’s build a system that works 99.999% of the time” (which is mostly a design problem), but rather making sure the remaining 0.001% doesn’t kill you, because that is still 1 in 100,000.

So if geohot isn’t willing to spend enough resources on proving that the thing works, then this is simply not the right project for him.
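Why “still 1 in 100,000” matters can be sketched with a little arithmetic. This assumes, purely for illustration, that every trip fails independently with the same probability; the helper name is hypothetical and the trip counts are arbitrary.

```python
def prob_at_least_one_failure(per_trip_failure_prob: float, trips: int) -> float:
    """Chance of one or more failures over `trips` independent trips
    (hypothetical helper; assumes independent, identically risky trips)."""
    return 1.0 - (1.0 - per_trip_failure_prob) ** trips


# 0.001% per trip, the figure from the comment above.
p = 0.001 / 100

for trips in (1, 1_000, 100_000, 1_000_000):
    print(f"{trips:>9,} trips: {prob_at_least_one_failure(p, trips):.4f}")
```

Under that assumption, 100,000 trips give roughly a 63% chance of at least one failure, so a per-trip figure that looks tiny is not small at all once a fleet is on the road.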

+1 to this

They demanded he answer a long list of questions and provide complete sales channel information (which likely doesn’t exist) and gave a short deadline and a TWENTY THOUSAND DOLLAR A DAY fine for noncompliance.

Not for selling the device, mind you. For not answering them fast enough.

The legal costs just to answer them alone would have cost a small fortune. This wasn’t an attempt to “start dialog” like Jalopnik claims. This was a clear “you’re not one of the big boys, go home.” They’re protecting the established industries and labs.

Who would get sued if an ILS flew an aircraft under its control into the ground? Yes there are pilots onboard that have the final say supposedly, but in the wake of an accident like this there would be several named in a lawsuit and I would hazard a guess it’s going to be the same after an incident with self-driving cars.

I thought that was the point of ILS :-p

I guess you mean, less than softly.

“Into” I wrote, not “onto” the ground. As in: “Rapid deceleration due to controlled flight into terrain” as the official reports euphemistically phrase it.

Cases involving airplane autopilots always end up with the verdict “human error” precisely because it’s already decided that the machine cannot err, and should it nevertheless make a blunder or have a fault, it is always the fault of the pilot anyways – for not catching and correcting the error, even if it was practically impossible.

Aircraft accidents generally follow the rule of “last person to touch the stick”, with emphasis on “person”, because, should the manufacturers admit fault it would affect their sales. For Boeing or Airbus to admit that their autopilot put the plane down would mean such great liabilities that it could put the whole company down.

Not necessarily. Airbus in particular had an aircraft fly itself into the ground when the onboard computer overrode the pilot at the Paris air show, and this was a very public failure with no question as to cause. Airbus did not fail over this event. There have been other incidents, but the fact of the matter is that air crashes just don’t get the coverage they once did. There was a time when the whole airline industry suffered for months after every crash, but that’s not the case anymore.

I suspect the same thing will happen with self-driving cars: the first few deaths will be fodder for the news for days, with much handwringing and pontification by talking heads, but in time these will not get covered except in extreme cases. In fact, eventually deaths caused by human drivers will become news and will bring on discussions of whether this should still be permitted, as most of those still capable of driving will then be older members of the population. A full reversal of attitudes.

It’s not a hard and fast rule, but if you follow historic investigations into airplane accidents there is a trend to over-emphasize the pilot’s responsibility even in cases where the fault would be rightfully pinned on the manufacturer.

The habit is to say “pilot error” and then quietly fix the hardware flaw, so that the public never gets the idea that they’re flying in planes that are unsafe by design. That in turn contributes to the illusion that computers and AI are infallible, which is most apparent in the question of self-driving cars, where people outright assume, and argue with unwavering faith, that the robot is superior to man.

If only they knew how little the AI actually does.

As Jan Ciger points out below, that is just not the way it is. Look, I spent close to forty years working on the technical side of aviation, and accident investigations are both thorough and generally free of influence. I have been through a few of them, including being a witness once, and everyone is looking for the truth, not trying to pass the buck or cover things up. Flight recordings, ATC recordings and forensic analysis of the aircraft all support the transparency of the process. We will need the same level of accident analysis with self-driving vehicles, at least at the beginning.

“If only they knew how little the AI actually does.”

I don’t know how the car driving AI actually works, as I haven’t read much detail on that, but I sure hope the aim is that it will eventually do absolutely EVERYTHING that a human driver would do that’s related to the task of driving a vehicle! Also, once we’re talking AI, I think it’s true to say that AI WILL sometimes make mistakes just like humans do – maybe for different reasons, but mistakes nonetheless. There needs to be some expectation and even acceptance of that, I think.

Besides, airplanes are generally much safer than driving. Accidents are by nature very rare because there’s plenty of space in the sky and the technology is tried and tested, engineered to the tightest standards and well maintained. It’s completely different from cars on the roads.

On the average flight, the pilots are in control of the plane for about three minutes – enough to taxi on the runway. Most of it goes under computer control, and yet accidents happen – typically when the computers are overwhelmed and toss the ball back to the pilots, leaving them to sort out the mess.

The same thing will happen with self-driving cars: accidents will happen because the cars may never be quite as smart and accommodating as people. If and when autonomous cars are the majority, the majority of accidents will be caused by the computer.

The question then is whether the accidents are blamed on the computer or on the person who is left scrambling for the wheel and pedals in the last second.

“pilots in control of the airplane for 3 minutes” ??? You have NO, ZERO clue what the hell you’re talking about! As a 5000+ hr CMEL ATP with multiple type ratings, I can tell you unequivocally your statement is totally incorrect. WTF do you think is going on up front? Hell, even with a CAT3 approach, we (as pilots) need to program the FMS, monitor the flight path, and do the radio calls; there’s a LOT going on.

Now, for en route altitude or course changes, what usually happens is we “dial in” the changes and the ‘computers’ make the required control surface (and autothrottle) movements.

And yes, the en route phase of a long flight is pretty much monitoring the systems.

A good example of what happens when flight crews fail to monitor the automation is a fatal mishap from years ago. An ATR-42 flying in icing precip. The AP kept trimming the aircraft, and trimming, and trimming (due to ice accretion on the wings)… then when it hit its limits, boom, it sounded the warning chime and disengaged itself, giving control back to the human flight crew, who promptly realized their aircraft was in an unrecoverable stall… and became another NTSB statistic.

Meh how hard can it be….

i) run away from the ground.

ii) don’t let it catch you!…

iii) don’t let it catch you!…

iv) don’t let it catch you!…

v) almost miss the ground gently.

;-)

Airplanes are much safer for two reasons: the tech is much more deeply scrutinized, and the pilots are significantly better trained and much more professional.

Car accidents happen because someone is checking their hair in the rear-view mirror and swerves lanes. Car accidents happen because someone’s drunk or texting. Airplane pilots don’t mess around like that.

And the tech in airplanes serves the pilot much more than the proposed self-driving cars do: the tech helps keep the pilot in control rather than taking control away. This makes planes safer because the pilots are better. The hope with self-driving cars is that they’ll make up for bad drivers.

What I’m saying is that automation in commercial aircraft and in driverless cars are entirely different, and that the lessons drawn from one are likely to be wrong for the other.

>” there’s a LOT going on.”

I’m referring to the time the pilots spend actually and directly controlling the plane, hand-on-stick. Not programming the computer to do it for you.

>”An ATR-42 flying in icing precip. The AP kept trimming the aircraft, and trimming, and trimming”

Which is another perfect illustration of the point. A smarter AI or a person would have noticed, “Hey, I can’t keep trimming the plane forever, something must be going wrong” well before it runs out of trim. The fact that the pilots have to babysit the computer because it’s too dumb to understand what’s happening is an argument against the computer, not the pilots, because the computer is supposed to take that workload off of them.

The problem with the autopilot and the self-driving car is that people believe the AI is doing some sort of self-evaluation and double-guessing like that to assess whether it’s doing the right thing, but it’s not. It’s just a clockwork that swings one way when you poke it, and another way when you poke it differently, and it is quite happy to do whatever dumb thing it is programmed to do until everyone on board dies.
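The kind of self-check described above, noticing that the trim keeps running one way before it reaches the stop, can be sketched in a few lines. This is a hypothetical monitor, not how any real autopilot works; the function name, the 25% margin and the five-sample run length are arbitrary assumptions, and trim is treated as a signed number with a symmetric limit.

```python
from typing import Sequence


def trim_warning(trim_history: Sequence[float], trim_limit: float,
                 margin_fraction: float = 0.25, run_length: int = 5) -> bool:
    """Hypothetical early warning for an autopilot trim channel.

    Warns if the trim is within `margin_fraction` of its limit, or if it has
    moved in the same direction for the last `run_length` samples.
    """
    if not trim_history:
        return False
    latest = trim_history[-1]

    # 1) Little authority left: close to the end stop in either direction.
    near_limit = abs(latest) >= (1.0 - margin_fraction) * trim_limit

    # 2) Persistent drift: every recent step moved the same way.
    recent = trim_history[-(run_length + 1):]
    steps = [b - a for a, b in zip(recent, recent[1:])]
    steady_drift = len(steps) == run_length and (
        all(s > 0 for s in steps) or all(s < 0 for s in steps)
    )
    return near_limit or steady_drift
```

For example, a history of `[0.0, 0.1, 0.2, 0.3, 0.4, 0.5]` with a limit of 10.0 triggers the drift warning long before the trim is anywhere near the stop, which is the point: the check looks at the trend, not only at the limit.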

You are probably thinking of the Habsheim Airshow crash. The pilots flew the aircraft into the ground; the aircraft overrode the control inputs they made in the recovery attempt, which would have resulted in the aircraft stalling. The aircraft was at 30 feet, engines idle and just starting to spool up, nose high near stalling speed, when the pilots pulled back on the stick to clear the trees and the computer overrode the command. In this instance the pilots regard that as a failure, but the computer responded perfectly in a no-win situation, one it was only in because its “alpha floor” function, which would have prevented the situation from occurring in the first place, had been intentionally disabled. It’s also worth noting that with the model adopted for self-driving cars, the computer would allow the plane to stall if the pilots took command.

That’s a small comfort…. but then you still have ABS, and traction and stability control to contend with (Read, potentially fight against.) Then with this unintended acceleration BS, the time is probably past when you can unbalance the car with left foot braking while maintaining throttle. (Because of extra sensitivity to the brake torque parameter) … I know right, you’re all “Why the hell would you need to do that?” …. it’s a skill I maintain…. mostly for avoiding 4x4s coming at me sideways in winter because the driver hasn’t figured out that 4 contact patches are just 4 contact patches whatever your drivetrain.

The incident is known as Air France Flight 296.

And it is still controversial. The flight data recording indicated that when the aircraft hit the treetops, the engines had spooled up to near full power and the pilots would just have been able to pull out of the stall, or the stall might not have happened at all, but the alpha protection function prevented them from pre-emptively lifting the nose and attempting a recovery.

In such a “no win” situation, the computer should let the pilots decide how they want to die. Maybe the pilots know something the computer doesn’t, maybe the safety margins don’t apply to all cases, or maybe the pilots are just lucky.

The alpha floor function is irrelevant to the case, because it would have prevented the pilots from taking the planned low flyover in the first place. Blaming the pilots for the fact that it was off is like saying “Well you shouldn’t have tried the trick in the first place” – hindsight 20/20.

Sorry, but that is nonsense.

There are actually very, very few aviation accidents that could be attributed to a malfunction of the automation. When the pilot programs the computer to fly into a mountain or the ground, or to attempt to fly in conditions that the machine was not designed to handle, it really isn’t the computer’s (or its manufacturer’s) fault. The same goes if there is some sort of mechanical problem, such as frozen sensors (the Air France crash in the Atlantic), an engine failure or an in-flight fire.

Airplane avionics is designed in such way that it doesn’t replace the human. It is explicitly designed to not do that – not only for legal reasons but also because pilots themselves want to be in control (very understandable, IMO). When something goes just slightly off nominal, the automation will disconnect with a loud warning and hand the plane back to the human – if they didn’t take control themselves already. Pilots are expected to be on the lookout, watch for traffic and monitor the flight even when the plane is on autopilot. There are also two of them in that cockpit. The crews are trained for this and there is usually sufficient time to react – in most cases there is plenty of space and altitude to deal with the problem, unlike in a self-driving car where you may have only a second or two to react when something goes south. When a crash happens in such situation it is mostly because the crew screws up at this point, even though the plane was still perfectly good and flyable (again the AF crash comes to mind).

The machine certainly *can* err, but the system is designed in such way that there is high redundancy – typically 3 systems running in parallel and at least 2 of them have to agree before any value is considered to be valid. And even then if for some reason something bad happens, there is always the wetware in the cockpit to override it and save the day (one reason why the pilots have the circuit breakers in the cockpit …). The aviation automation is there to lighten the (very high) pilot load, not to replace the humans, unlike the self driving cars which are explicitly designed to work without the human in the loop.
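A toy version of the “at least 2 of 3 must agree” rule, assuming each channel delivers one scalar reading (say, airspeed) and that “agree” means “within a fixed tolerance”. The function name and tolerance are hypothetical; real avionics voting and monitoring logic is considerably more involved. Returning the median of the three (a mid-value select) means a single wild channel can never drag the output away from the two that agree.

```python
from typing import Optional, Sequence


def vote_2oo3(readings: Sequence[float], tolerance: float) -> Optional[float]:
    """Hypothetical 2-out-of-3 voter.

    Returns a voted value only if at least two of the three channels agree
    within `tolerance`; otherwise returns None, meaning "no valid value"
    (in the comment's terms: disconnect and hand the problem to the crew).
    """
    if len(readings) != 3:
        raise ValueError("expected exactly three channel readings")
    a, b, c = readings
    any_pair_agrees = any(abs(x - y) <= tolerance for x, y in ((a, b), (a, c), (b, c)))
    if not any_pair_agrees:
        return None
    # The median is always one of, or lies between, two readings that agree.
    return sorted(readings)[1]
```

With readings `[101.2, 101.4, 250.0]` and a tolerance of 1.0 the faulty third channel is outvoted and 101.4 comes back; with `[100.0, 150.0, 250.0]` nothing agrees and the voter returns `None`.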

Also, when a plane crashes (or any accident happens, not only a plane crash), there are always multiple factors at play. The crash is only the consequence of the “holes in the swiss cheese aligning just right”. If even one of those wrong decisions hadn’t been made, the crash wouldn’t have happened either. Unsurprisingly, the humans end up being the weakest link in the majority of cases, because it is they who make those fatal decisions, not the computers.

It really isn’t some sort of “conspiracy” to blame the (now dead) pilots for the failings of the expensive hardware – especially with so many planes flying the same systems all over the world something like that wouldn’t stay secret for long. E.g. the infamous Boeing rudder problems (too hard rudder application could lead to the horizontal stabilizer tab detaching and crash) or the Airbus freezing probes. That’s what airworthiness directives are for and those are regularly issued and the airlines have to apply them or their planes would be forbidden from flying. We can only wish a similar system existed for (autonomous) cars, really.

If I remember correctly, the “program the plane into a mountain” was a case of the designers making a readout display that displayed 3% slope and 3000 ft/min drop in an almost identical way, so the pilots thought they were doing one thing but they had actually programmed the computer to crash.

Possibly. And if the crew had actually cross-checked the values that were entered (as they should; it is a standard procedure), they would have caught the error and nothing would have happened. Another opportunity to prevent it would have been while monitoring the flight and noticing the altitude excursion. Again, something the crew is supposed to do: airspeed, attitude and altitude monitoring is basic flying, regardless of whether you are in a Cessna or an Airbus 380, because your life depends on it.

Heck, even the poor design you are arguing with was a result of a human decision somewhere.

See the “swiss cheese” analogy again? You can’t reduce an accident to a single failure; it is always a chain of events that must line up just right for it to happen. And unfortunately most of those involve humans, not machine errors.

I suggest you read some actual accident investigation reports; they *always* list both the primary and contributory factors, along with every avenue and hypothesis that has been investigated and their findings (if any). As it is, it is clear that you don’t know what you are talking about.

>”Heck, even the poor design you are arguing with was a result of a human decision somewhere.”

Indeed. You can always trace the fault back to the programmer, who can then blame their mother for dropping them as a baby. There’s always a human somewhere in the loop who you can blame instead of the machine.

But the fact remains that the system, as designed and operated, is prone to failure and dangerous to use. Shifting the blame to the pilots or the designers is just a red herring. The actual point is that the system was unsafe and should have gone through more scrutiny before it was put to use, but it wasn’t because, as Elon Musk says, “don’t let concerns get in the way of progress”.

He actually says that.

He gets a friendly request for more information from a regulator and suddenly acts like he never expected that?

He could just send a friendly reply like: “Dear regulator, we are currently nowhere near selling anything, but we will send you the requested information and wait for approval before we start selling our product to customers.” and then let a 3rd party deal with regulators, approvals, lawyers and customers.

Please refer regulatory inquiries to our chief compliance officer Somil Gupta who we just hired off upwork (elance)

Tesla is taking a different approach now: they’re including the AI part of the self-driving software in all cars but not actually giving it control over the vehicle.

They’re apparently going to see where the AI and the actual driver differ and use that to train the AI. In the best case they’ll realize that either the sensor systems or the AI are totally inadequate for the task and put the whole thing back on the drawing board to wait for better AI systems and sensors. In the worst case, and in the most likely case, they’ll find that they can make the AI mimic the driver at, say, 98% accuracy just by teaching it a few “rules of dumb” and then call it “good enough”.

The problem with the latter, and with the argument that Elon Musk is pushing, is the claim that the self-driving car is safer simply because it causes fewer accidents per driven mile on average. That, however, can mean the self-driving Tesla is actually a worse driver than most humans on the road. The majority of accidents are caused by a minority of drivers, whereas the probability of an accident with the self-driving car is spread evenly across the fleet, so for most people engaging the autodrive is riskier than driving manually, despite the fact that there are fewer accidents per mile.
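The averaging argument above can be illustrated with invented numbers. This is a minimal sketch, assuming every driver covers the same mileage and that the autopilot's accident rate is the same for every car; all rates are hypothetical, chosen only to show how a system can beat the human average while being riskier than most individual humans.

```python
from statistics import mean, median

# Hypothetical per-driver accident rates (accidents per million miles),
# assuming every driver covers the same mileage: 90 careful drivers and
# 10 high-risk drivers. These numbers are invented for illustration.
human_rates = [1.0] * 90 + [11.0] * 10

# Hypothetical autopilot rate, assumed identical for every car in the fleet.
autopilot_rate = 1.5

fleet_average = mean(human_rates)
share_safer_manually = sum(r < autopilot_rate for r in human_rates) / len(human_rates)

print(f"human average: {fleet_average}")
print(f"median human:  {median(human_rates)}")
print(f"autopilot:     {autopilot_rate}")
print(f"share of drivers safer driving themselves: {share_safer_manually:.0%}")
```

With these made-up figures the autopilot (1.5) beats the human average (2.0), yet 90% of drivers have a lower personal rate (1.0) than the autopilot. Whether real fleets look like this depends on the actual distribution of driver risk, which this sketch does not claim to know.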

Furthermore, the whole point may be moot anyways because the autopilot will refuse to drive in conditions which are beyond its abilities, such as in the snow or heavy rain/fog, or it may find the road too slippery and simply refuse to go, at which point the human driver takes control and the usual pattern of accidents remain.

And furthermore, it’s completely plausible that self-driving cars like Teslas are infeasible in large numbers because they rely on radars and sonars. Imagine a busy intersection full of such cars: they’re jamming each other’s sensors. Even with complex modulation schemes and coded transmissions, if you blast your transmitter right at the other car’s receiver, it will saturate and blind it, or at the very least severely interfere with the AI, which isn’t intelligent enough to know that it’s being compromised.
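One reason direct interference is worrying is geometry: your own radar's echo travels out and back and falls off roughly as 1/R⁴, while another car's transmitter reaches you over a one-way path that falls off as 1/R². The sketch below compares the two using the textbook monostatic radar equation and the Friis free-space equation. All numbers are assumptions chosen for illustration (identical radars in the 77 GHz automotive band, a 10 m² car-sized radar cross-section, both cars 50 m away, the interferer's antenna pointed straight at you), and it deliberately ignores coding/processing gain and time or frequency mismatch between the radars, which in practice can change the picture a lot.

```python
import math

SPEED_OF_LIGHT = 3.0e8  # m/s


def echo_power(pt_w: float, gain: float, wavelength_m: float, rcs_m2: float, range_m: float) -> float:
    """Monostatic radar equation: power returned from a target (two-way path, 1/R^4)."""
    return pt_w * gain**2 * wavelength_m**2 * rcs_m2 / ((4 * math.pi) ** 3 * range_m**4)


def interference_power(pt_w: float, gain: float, wavelength_m: float, range_m: float) -> float:
    """Friis free-space equation: power received straight from another transmitter (one-way, 1/R^2)."""
    return pt_w * gain**2 * wavelength_m**2 / ((4 * math.pi) ** 2 * range_m**2)


# Assumed, illustrative parameters (not measured values).
wavelength = SPEED_OF_LIGHT / 77e9
pt, gain, rcs = 1.0, 100.0, 10.0  # hypothetical transmit power (W), antenna gain, RCS (m^2)

wanted = echo_power(pt, gain, wavelength, rcs, range_m=50.0)
unwanted = interference_power(pt, gain, wavelength, range_m=50.0)
ratio_db = 10 * math.log10(unwanted / wanted)
print(f"interference exceeds the echo by about {ratio_db:.0f} dB")
```

Under these assumptions the transmit power, gain and wavelength cancel out, and the interfering signal comes in roughly 35 dB above the echo. How much of that a real radar's signal processing can reject is exactly the open question raised further down the thread.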

Very good point, I have had plenty of situations where, not necessarily a bad driver, but a less reactive driver, would have made contact with the wildcard joker.

Dax, you make good arguments that seem believable. The safety is a statistical problem that should be solvable. Perhaps the record of accidents per mile could be compared to insurance company categories, like drivers outside the 16 to 25 year old category. Regarding when the autopilot refuses to drive, can you imagine in a rainstorm handing the wheel over to someone who has been driven around by autopilot for the last three seasons and forgotten how to drive? On the issue of saturating the receiver, your argument makes sense logically but is it true? I am curious if you can reference any data or reports confirming this. Certainly it would be a showstopper for the developers if it was true that there can only be so many lidars or radars or 3d vision systems together at once. If there is not an algorithm to deal with multiple autonomous vehicle vision systems this community would be a good place to discuss a solution to the issue with all the creative minds that read these posts.

>”can you imagine in a rainstorm handing the wheel over to someone who has been driven around by autopilot for the last three seasons and forgotten how to drive?”

What other option do they have? Hand the wheel over to a remote operator over a flaky laggy cellular connection?

>”On the issue of saturating the receiver, your argument makes sense logically but is it true?”

Yes. They’ve made a proof-of-concept attack against a Tesla, pointing a transmitter at it and making it ignore a simulated vehicle right in front of it.

https://nakedsecurity.sophos.com/2016/08/08/tesla-model-ss-autopilot-can-be-blinded-with-off-the-shelf-hardware/

Unfortunately it’s pretty easy to blind a human driver with say a laser.

or hi beams

Yes, but pointing lasers at the eyes of people is not necessary for driving – at least for humans.

The self-driving cars all depend on some sort of “active polling”, whether it’s radar, sonar, or laser. They send out a signal and wait for an echo. It’s conceptually the same thing as a blind man tapping around with a cane. It’s no substitute for actual sight, but it’s the only thing they got since computer vision is still so primitive and notoriously unreliable.

“or hi beams”

Which illustrates the problem exactly, although human eyes have an incredible dynamic range compared to cameras, radio receivers etc., and can mitigate the problem by looking away from the bright light, because it affects only part of the retina.

Humans are adaptable, we can squint, we can move our heads, hold a hand up to partially block the offending light. Cars don’t and won’t do this. Humans have a remarkable way of predicting things much quicker than a computer can because we have pattern recognition like a sonofabitch. Cars are great at driving, in the hands of a human. It’s how they were designed. Now we are trying to design smarts around a traditional model of travel, rather than the other way around. It’s flawed.

A proof of concept attack is not the same as demonstrating that multiple cars will jam each other’s sensors. I just skimmed the paper, and the jamming and spoofing attacks on both ultrasound and radar sensors were carried out within 1-2 meters (if you’re that close to another car, you’ve already got problems) and I didn’t see any mention of how the required power compared to the car’s output power in either case. Those aren’t really deficiencies in the paper, as it’s addressing attacks, and isn’t interested in accidental interference; however, they make it useless to prove (or disprove) the idea that such cars will jam each other under normal driving conditions.

True, I can do a proof-of-concept attack like that on a human-guided car with a can of spray paint, if it’s gonna be assumed you can be only a meter away.

Though if, as mentioned in another comment, we end up getting telemetry between vehicles, I’d like to see a “virtual tow” mode implemented, so a car with messed-up sensors or faults can be linked to another car as a master and just follow it.

>”1-2 meters (if you’re that close to another car, you’ve already got problems)”

That’s a very usual distance for city traffic. 1-2 meters is just the distance you’d be from an oncoming car in the opposing lane – their sideways-facing radars would be sweeping your front and side as they move past. If that’s a problem, then millions of drivers are in deep trouble all the time.

And just the fact that you can have two dozen cars in the same intersection – if every single one of them is blasting their radars away, the whole place is going to be just full of reflected signals and plain incoherent noise – the ultrasound or radio equivalent of everyone honking their horns at the same time. It’s going to drown out the more distant echoes and make the self-driving car unable to see anything across the intersection.

>”The safety is a statistical problem that should be solvable. Perhaps the record of accidents per mile could be compared to insurance company categories,”

The problem with that is you have to let the cars on the roads and driving for trillions of miles before you have the required statistical body to prove that the car performs as claimed and expected – and what if they don’t? In order to prove it you have to let the cars on the market, and once millions and millions of people already own them you can’t really pull them back. It’s like opening a Pandora’s Box.

If they turn out to be marginally unsafe, the manufacturer can always dispute the finding by pointing out that they’ve made multiple models, that the software is upgraded along the way, and that there are outliers and special circumstances. They can shift the blame to the drivers at every opportunity, push out bogus studies that serve as “evidence” to the contrary, etc., and pull off a courtroom theater that would eclipse the tobacco industry trials.

Once you get the ball rolling, it will be difficult to stop, and the likely outcome is that society will kinda sorta accept that self-driving cars are a bit crap, and you can’t really do anything about it because everyone’s already addicted.

All of this reasonable discussion is making me question the feasibility, practicality and possibility of so called “driverless” cars and not just from a safety perspective, but most significantly, making me ask, “So what’s the actual point of AI-driven cars???”

The point is that you can have a bagel and a coffee while driving to work

The more immediate point is for Tesla to pretend they’re further ahead of the technology curve than they really are, because Elon Musk can’t take back his promises without losing face (and investors).

Heh the first snowfall of the year is like that around here anyway…

“OMG I totally forgot snow is slippy!”

I wonder how well a self-driving car can even see the road under the snow.

That’s another issue, because the lanes are not always in the same place between seasons. It depends on where the plow truck has gone, so relying on GPS will get you stuck, and relying on computer vision is a hell of a stretch at the current level of technology.

“If there is not an algorithm to deal with multiple autonomous vehicle vision systems this community would be a good place to discuss a solution to the issue with all the creative minds that read these posts.”

Well, Seymour, as luck would have it, there may already be one, with a lot of mileage at that:

https://en.wikipedia.org/wiki/Carrier_sense_multiple_access_with_collision_detection

“When this collision condition is detected, the station stops transmitting that frame, transmits a jam signal, and then waits for a random time interval before trying to resend the frame.”
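For reference, the “random time interval” in the quote is the truncated binary exponential backoff of classic Ethernet. The minimal Python sketch below uses the 10 Mbit/s slot time and the usual limits (exponent capped at 10, frame dropped after 16 collisions); how, or whether, this would map onto car radars is not something the comment spells out.

```python
import random

# Classic 10 Mbit/s Ethernet: one slot = 512 bit times = 51.2 microseconds.
SLOT_TIME_US = 51.2
BACKOFF_LIMIT = 10   # the waiting window stops growing after 10 collisions
ATTEMPT_LIMIT = 16   # the frame is discarded after 16 collisions


def backoff_slots(collisions: int, rng: random.Random) -> int:
    """Number of slot times to wait after the n-th collision of one frame."""
    if collisions < 1:
        raise ValueError("backoff only applies after at least one collision")
    if collisions >= ATTEMPT_LIMIT:
        raise RuntimeError("too many collisions; frame is dropped")
    window = 2 ** min(collisions, BACKOFF_LIMIT)
    # Uniform choice from 0 .. window - 1 slots.
    return rng.randrange(window)


if __name__ == "__main__":
    rng = random.Random(42)
    for n in range(1, 6):
        k = backoff_slots(n, rng)
        print(f"collision {n}: wait {k} slots = {k * SLOT_TIME_US:.1f} us")
```

Each collision doubles the range of possible waits, which is how contending stations spread themselves out in time. The scheme relies on every station being able to hear the shared medium and detect the collision in the first place.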

That doesn’t sound very useful. Collisions still cause the radars to jam and block, and with multiple transmitters on the channels it will lead to actual collisions.

If 3D vision is passive (cameras) then there should be no limitation.

That makes no sense. An automated driver with higher accuracy and dependability would be 10x better than humans. You’re assuming humans are good drivers; they’re not. It’s the 20th leading cause of death, and number one outside the functions of the human body. Just because people are capable of something doesn’t mean they really do it. Most people, after years of driving, still can’t predict traffic and/or drive aimlessly behind the car in front of them, not actually thinking about the world around them. So if something reduced the number of mistakes on average, then statistically fewer people would be seriously injured, and drive times would be massively shorter.

Take my neighbour for example – he is still driving at 91 and the only qualification he needed to get his first licence was $2 and a signature!

That’s not an argument against people driving – that’s an argument against the licensing policies.

Unfortunately they can’t retroactively change the laws, so he is literally grandfathered in.

Of course the law can be changed – at a minimum he now must pass a vision test every year to renew, something that was added here only a few years ago.

I see nothing wrong with that, he grew up with the cars and the traffic. A modern style road test would have been next to pointless, administered in a rural area back then, because you might not have seen another car while doing it.

Also, standard car controls were still settling down up until the 50s. The Model T had gear-shift pedals on the floor, a hand throttle, and a transmission brake pedal on the right of the floor, and there would have been a bunch of those around as daily drivers (DDs) for years.

What is wrong with it is he could potentially find himself in a situation that he cannot handle simply because his body cannot react fast enough and someone might die.

And we trust that your gramps isn’t trying to drift around the block like he was 17, because he knows his own limits.

Of course the ultimate trouble with elderly drivers is that they tend to die behind the wheel and cause accidents that way.

The problem of radars jamming each other can be solved the same way 2G networks (and a few other wireless standards) put multiple devices on one channel: TDMA (time-division multiple access).
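A minimal sketch of the TDMA idea, assuming (hypothetically) that every radar shares a common clock and has been assigned its own slot in a repeating frame. `TdmaSchedule`, the slot count and the slot length are illustrative only, not taken from any automotive standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TdmaSchedule:
    """Hypothetical shared schedule: a repeating frame split into equal slots."""
    slots_per_frame: int
    slot_ms: float

    def active_slot(self, t_ms: float) -> int:
        """Index of the slot active at t_ms (milliseconds since a shared epoch)."""
        return int(t_ms // self.slot_ms) % self.slots_per_frame

    def may_transmit(self, assigned_slot: int, t_ms: float) -> bool:
        """A radar transmits only while its own slot is active."""
        return self.active_slot(t_ms) == assigned_slot

    @property
    def frame_ms(self) -> float:
        """Time between the start of one radar's turn and its next turn."""
        return self.slots_per_frame * self.slot_ms


if __name__ == "__main__":
    sched = TdmaSchedule(slots_per_frame=8, slot_ms=5.0)
    radar_a, radar_b = 2, 5  # hypothetical slot assignments
    for t in range(0, 40, 5):
        print(t, sched.may_transmit(radar_a, t), sched.may_transmit(radar_b, t))
```

Since exactly one slot is active at any instant, two radars with different slots never transmit together. The cost is that each radar only transmits for 5 ms out of every 40 ms frame; more cars means longer frames or shorter slots, and it only works if every radar agrees on the clock and the slot assignment.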

Alternatively, they can use what the military uses – pseudo-random frequency hopping. It’s not as good as TDMA, but it would be easier to implement and probably more useful, because it doesn’t require compliance from the other radars.
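A rough sketch of uncoordinated pseudo-random hopping, assuming each radar seeds its own generator independently and that hop boundaries line up (a simplification). With n channels, two such radars land on the same channel about 1/n of the time; with k other radars in range, the chance that a given hop is clear of all of them is (1 - 1/n)^k. The seeds and channel counts are hypothetical.

```python
import random


def hop_sequence(seed: int, n_channels: int, n_hops: int) -> list[int]:
    """Pseudo-random channel sequence for one radar (the seed is hypothetical,
    e.g. derived from a serial number)."""
    rng = random.Random(seed)
    return [rng.randrange(n_channels) for _ in range(n_hops)]


def collision_fraction(a: list[int], b: list[int]) -> float:
    """Fraction of (aligned) hops on which two radars share a channel."""
    return sum(x == y for x, y in zip(a, b)) / len(a)


def clear_probability(n_channels: int, other_radars: int) -> float:
    """Chance that one hop is not shared with any of the other radars,
    assuming every radar picks channels independently and uniformly."""
    return (1 - 1 / n_channels) ** other_radars


if __name__ == "__main__":
    a = hop_sequence(seed=1, n_channels=16, n_hops=20_000)
    b = hop_sequence(seed=2, n_channels=16, n_hops=20_000)
    print(f"measured collision rate: {collision_fraction(a, b):.3f} (expected ~{1/16:.3f})")
    print(f"clear hops with 20 other radars: {clear_probability(16, 20):.2f}")
```

So hopping needs no cooperation, but it does not make interference disappear: with 16 channels and 20 other radars nearby, only about 28% of hops would be free of all of them.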

Obviously all this requires a properly designed and built advanced radar sensor and will cost a few $$ more, but it shouldn’t be anything drastic once produced in large numbers…

Increasing the noise in the channel will still decrease what the cars can see, because the signal reflections become weaker and weaker relative to the in-band noise, and directly saturating the receiver will blind it regardless of the modulation scheme.
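The saturation point can be put in numbers with the textbook radar equation: an echo from a target at range R falls off as 1/R^4 (out and back), while a signal received directly from another radar at distance d falls off only as 1/d^2. The sketch below assumes two identical radars pointed straight at each other and a car-sized target with an assumed radar cross-section of 10 m²; all inputs are illustrative, not measurements.

```python
import math


def echo_power_w(pt_w: float, gain: float, wavelength_m: float,
                 rcs_m2: float, range_m: float) -> float:
    """Monostatic radar equation: power returned by a target's echo."""
    return (pt_w * gain**2 * wavelength_m**2 * rcs_m2
            / ((4 * math.pi) ** 3 * range_m**4))


def direct_power_w(pt_w: float, gain: float, wavelength_m: float,
                   distance_m: float) -> float:
    """Friis free-space equation: power received straight from another
    radar whose antenna points at ours with the same gain."""
    return pt_w * gain**2 * wavelength_m**2 / ((4 * math.pi) ** 2 * distance_m**2)


def signal_to_interference_db(rcs_m2: float, target_range_m: float,
                              interferer_m: float) -> float:
    """Echo-to-interference ratio for identical radars: transmit power,
    gain and wavelength cancel, leaving sigma * d^2 / (4 * pi * R^4)."""
    ratio = rcs_m2 * interferer_m**2 / (4 * math.pi * target_range_m**4)
    return 10 * math.log10(ratio)


if __name__ == "__main__":
    # Assumed values: 1 W, gain 100, ~77 GHz (3.9 mm wavelength),
    # a 10 m^2 target at 50 m, and an oncoming radar also 50 m away.
    echo = echo_power_w(1.0, 100.0, 0.0039, 10.0, 50.0)
    direct = direct_power_w(1.0, 100.0, 0.0039, 50.0)
    print(f"echo / direct interference: {10 * math.log10(echo / direct):.1f} dB")
    print(f"closed form:                {signal_to_interference_db(10.0, 50.0, 50.0):.1f} dB")
```

Under these assumptions the echo arrives roughly 35 dB (about 3000 times) weaker than the other radar’s direct signal, even though the interfering radar is no closer than the target. The sketch ignores antenna sidelobes, waveform differences and receiver filtering, any of which changes the real figure.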

There is a heavy burden when it comes to dealing with regulatory bodies in a highly regulated industry. These skill sets are generally not a design engineer’s forte. I know everyone has opinions, but I would not stop work on a project that was my passion because of a regulatory hurdle. There are people and companies out there that may help overcome this obstacle. The issue here is pride: he is unwilling to compromise on his pride to see this project through. He will not sell out, he will not be bought, he has to go it alone to prove something to himself.

This guy has been boned by corporations quite badly in the past. I would think he would rather be hacking than dealing with more of that type of crap. Is that too prideful?

Well, the problem is that big egos don’t work well when it comes to actual engineering.

Having the skillset to do something is one thing, but that is only part of the job. Government regulation is a fact of life and anything transport related is heavily regulated everywhere in the world. This feels more like a software guy suddenly discovering that there are things he cannot “hack around” and that a lot of real world stuff can’t be solved by writing more code.

I think he has realized now that when he bragged about that $1000 AI that could be retrofitted to any car he had no idea whatsoever what he was talking about. Now that NHTSA came asking about his intentions, he got a rude reality check and pulled the plug.

Is “boned” code for “didn’t understand his contracts before he signed them,” or does it mean something else?

You have to be able to deal with regulators and understand contracts to go it on your own; there is just no other way to have any chance without a bunch of expensive staff. If he had the skills required to be doing this sort of project, he would have anticipated the obvious things the NHTSA has said to him. He didn’t get any surprise treatment; this is what “everybody” expected as the likely outcome. He could have planned for it and it wouldn’t even have been a speed bump.

For the same reasons, I don’t build my own power supplies that plug into the wall; I buy generic switching supplies to do the AC-DC part, and I only design the low-voltage DC-DC that I need. Connecting to mains is regulated, and I’m supposed to have safety testing done before selling it. I don’t want to spend that money, and I certainly don’t want to whine about it, since it is foreseeable and my own choice which path to take.

When your mindset is, “Of course I’m going to comply with relevant regulations,” then when you come to these things, the actual rules you encounter are very loose and the solutions can be implemented in a variety of ways. What surprises me is how lax the regulations are, even where things are regulated!

And no, changing the wording isn’t going to appear to regulators as if you found a loophole. It doesn’t occur to people, but when they write a letter saying, “You can’t do Foo unless you do Bar first,” those terms are already narrowly defined in some regulation. Just saying, “Golly, I hate the term Foo, I only call it Baz” isn’t going to impress them at all. That isn’t how words work, or how regulation works. The goal isn’t to find another meaning for the words they used where what you did would sound OK; that is cartoon thinking. The goal is to figure out what they actually meant, because that will lead you to all the work-arounds that will actually work.

You’ve done a great job of trying to prove a socialized government actually does something. However, reality is something much different. If a society actually did what you propose, innovation would be legally dictated by the government. Because people are corrupt and sin is real, only big capital corporations would be able to play, to jump through the hoops necessary, limiting innovation to near nothing and just keeping the status quo. At the same time, the general public would be “stupefied”, and the overall growth of intelligence, understanding, and innovation would come to a standstill, since only someone else can do it.

Welcome to the modern-day United States. Your philosophy has been tried and tested anyway, and didn’t work. It just fed the greedy and wealthy, and destroyed real society and innovation. The biggest innovation leaps took place during the times of least regulation and corruption. History speaks for itself.

Looking at the mosaic illustration: in red – not safe, obstacles and signs to be avoided; in green – safe to drive by.

:o)

I suggest if one does not wish to drive the car, that one takes the frickin’ bus!!!!

I suggest if one doesn’t want to enjoy the output of [chore x] one doesn’t use the help of [automatism y that does chore x].

Or he takes a taxi or an autonomous driving car, as soon as they are available. So you can take the bus if you want, but you shall not dictate what other people want to do.

New York City’s “Ground Zero” is the World Transportation Center (WTC), the point of origin for America’s new driverless-car infrastructure.

When you search “World Transportation Center” on Google Images, Manhattan’s former World Trade Center (WTC) comes up.

So you’re telling me searching for “WTC Ground Zero” turns up the expected results?

Luckily, cars were invented before the NHTSA and their various overseas cousins; otherwise we’d be having this same discussion about whether to allow humans to operate those metal boxes on wheels.

Heh, imagine cars had never been around but planes and ships had been… they’d be like… “Well okay, but the first officer of the vehicle will have to complete a navigation course and maintain 2000ft separation from other vehicles….”

Ha, yep +1

Don’t forget that we actually had that discussion.

Remember when it was required to have a man walk in front of the car holding a red flag?

There was a big concern over whether automobiles would be safe. The difference is that they actually were safer than what they replaced, which was horses that used to run off and trample people all the time.

Bingo +1. A lot of the safety comes from paved roads, though. However, cars were better at nearly every point, most importantly speed.

Self-driving cars DO NOT need to be perfect! They ONLY need to be better than human drivers! It takes foolish arrogance to think that humans are capable of performing any task with consistent perfection. Driving machines mixed with arrogant human drivers can’t either!

The Tesla is not self driving, and it never will be until it has one of those ugly rotating LIDARs on the roof, like the Google self-drivers. Even with the recent fender bender involving a bus, Google self-drivers are ALREADY earning driving records better than typical human drivers.

BTW, the idiot who was killed recently in a Tesla was WATCHING A HARRY POTTER MOVIE when he died. Tesla Autopilot REQUIRES the driver to agree to DRIVE the car before it ever engages. With the airplanes you fly in, “autopilot” controls air speed, heading, and altitude ONLY! If the human pilot is absorbed in his Harry Potter book, the plane is still very capable of flying right into the side of a mountain. The Tesla “autopilot” is no more capable than that. Forget that, and you too can be exterminated promptly!

But your GPS says not to use it when driving either, and who ever takes notice? We’re all numb to “click to agree”.

Actually, they only need to be as good as an average driver to show a significant benefit.

The mean driver is worse than the median driver. Specify what “average” you mean.

Heh, if they’re exactly dead on the mean, there will be no safety benefit. Even if they’re a little bit under it, it would be a stretch to call the benefit “significant”.

Although, some people realise that they suck at driving, they buy huge vehicles in the hope that they’ll live nevertheless and it makes them feel safer in the meantime…. despite the fact that such vehicles may never have to pass the same passenger safety tests as passenger cars. Anyyyway, if the worst 20% bought “good as mean” self driving cars, then there would be a positive effect on overall safety, although the funny thing would be that the mean would move left and leave the selfdrives sitting right of it on the old mean looking like a bad thing!
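The mean-versus-median point and the “worst 20%” scenario can be checked with a toy example. The crash rates below are made up purely for illustration, chosen to be right-skewed so that a couple of very bad drivers pull the mean up; `replace_worst` is a hypothetical helper.

```python
from statistics import mean, median

# Hypothetical crashes per million miles for ten drivers (made-up numbers,
# right-skewed: two very bad drivers drag the mean upward).
DRIVERS = [1, 1, 1, 1, 1, 2, 2, 3, 8, 20]


def replace_worst(rates: list[float], fraction: float, new_rate: float) -> list[float]:
    """Swap the worst `fraction` of drivers for self-driving cars that
    crash at `new_rate`."""
    ranked = sorted(rates)
    n_replaced = round(len(ranked) * fraction)
    return ranked[:len(ranked) - n_replaced] + [new_rate] * n_replaced


if __name__ == "__main__":
    old_mean = mean(DRIVERS)
    print("mean:", old_mean, "median:", median(DRIVERS))
    after = replace_worst(DRIVERS, 0.2, old_mean)
    print("mean after replacing the worst 20%:", mean(after))
```

Here the mean (4) is well above the median (1.5), so a car that is “as good as the mean” is worse than most drivers. Replacing the worst two drivers with such cars still halves the mean to 2, and leaves the self-driving cars sitting above the new mean, as the comment predicts.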

LIDAR is not necessarily required, if sufficient cameras (for surround view) are available and the software works. Plus, there exist LIDAR sensors that can be custom fit to body (requiring more of them as they can only cover what they can see, rather than 360 from on top), so at worst case you’re at least not going to have ugly LIDAR in the future.

Tesla claims that the new AP 2.0 hardware suite will (eventually, once software matures and is allowed to take over) allow full level 5 autonomy. Personally I’m surprised they didn’t add at least another radar in the back, but apparently they’ve decided their vision coverage is sufficient, now that they have multiple cameras providing a 360 view. They’ve already demonstrated it performing L5 capability, but of course the video was edited for time (so we can’t be sure they didn’t edit multiple runs together) and a human was ready to take over if things didn’t work as planned, and it was on a known path that was probably thoroughly tested (though traffic of course would be outside their control and thus shows at least a modicum of capability beyond memorizing a particular route).

“and the software works”

That’s the biggest stumbling block at the moment. The software and the hardware aren’t powerful enough for the task, so the cars use something simpler that they can handle, which gives them unambiguous information directly, such as the distance to the nearest obstacle.

Oh, and Elon Musk is employing the minimum viable product principle. They won’t add anything until it proves necessary, where “necessary” means until someone actually dies again.

“The deadly Tesla accident proved how that flimsy that fiction is.”

However, what that accident did prove is that for every mistake a Tesla car makes, every remaining Tesla car will become a better driver. This is a vast improvement over human drivers. What’s happening is that we are finding the edge cases where the autopilot fails. One death to fix every bug in the system is a bargain compared to the 30K+ deaths per year as the result of human drivers.

The process by which humans improve is just slower.

The process by which humans improve is also not very well shared among them, whereas learning for a fleet of vehicles can be distributed to all via a software update. Essentially, the fleet can 100% learn vicariously through the mistakes of the few, rather than each driver having to learn through their own experience (sometimes given in driving lessons, sometimes learned in real life driving, but never with a 100% distribution of “updates” to all humans)

The “learning transfer” thing assumes that the information can be passed between vehicles.

But, if each car employs a neural network to do the actual driving, how do you combine them? You can’t even extract the exact information that was learned by one car because you can’t tell where it is encoded in the network.

If on the other hand it’s not a neural network but a more classical AI, the program will quickly grow beyond the means of the onboard computer to handle, because you’re essentially programming it to “see” the world and react to it case-by-case.

Even with the neural network, the size of the network starts to determine how much information it can hold and retrieve with any accuracy, and trying to teach it everything is impossible. You simply run out of CPU and memory.

You’re going out on a limb there. As long as you don’t work at Tesla or MobileEye or whatever other tech company exists that does this kind of thing, your rambles are nothing more than hot air.

Time will tell.

“every remaining Tesla car will become a better driver.”

That’s just science fiction. There may not be anything to learn from an accident if the causes and conditions to the accident were beyond the capability of the AI to comprehend.

It’s like the story about Jane Goodall, where she tried to housetrain chimps to not shit indoors, by pushing their nose to the business and then throwing them out the window. The chimp eventually got the point: whenever it shat on the floor, it would nuzzle the poo and jump out the window voluntarily.

A chimp is a tremendously intelligent being. A self-driving car in comparison is a flatworm that was dropped as a baby.

Indeed, watching Harry Potter that day turned out to be an important QA milestone, and convinced Mr Big to change some of the technology to what the Other Guys are using. Don’t crash and die, that is horrible, but at the same time, having it improve the performance and safety in other vehicles is unparalleled in the history of transportation, and turns tragedy into sacrifice.

One other great thing is that eventually new cars will all be required to have short-range vehicle-to-vehicle communication, so they can even start learning to predict human driver behavior before the vehicle even changes position; as soon as the wheel or a pedal is touched, that driver’s car can warn neighboring vehicles and even give vector updates.

Actually, I think that’s the only way it’s going to work. Vehicle to vehicle telemetry.

Until someone spoofs the communications and makes a pileup.

Though there’s several dozen ways to cause a pileup already. Penalties against premeditated murder are quite harsh and generally they tend to stop you getting stabbed the minute you step outside your door.

So you trust other people to tell you the truth all the time?

Really?

Aehm.. “I’m a banking guy in Africa and I found some account with money in it that we could split.. “

Call it an extension of the directional signal indicator, it more or less works, you’d still override it with local sensors. It’s like saying why trust a human with a brake pedal, they could just go in the fast lane and slam it on. Yeah they could, but there’s very little incentive for them to do so.
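One way to read “you’d still override it with local sensors” is as a plausibility check: a neighbour’s broadcast is only believed when the car’s own sensors agree with it. Everything in this sketch is hypothetical (`V2VClaim`, the single gap field, the 2 m tolerance); it is not modelled on any real V2V message format.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Verdict(Enum):
    CONFIRMED = "confirmed"        # local sensors agree with the message
    CONTRADICTED = "contradicted"  # local sensors disagree: ignore the message
    UNVERIFIED = "unverified"      # nothing local to check against


@dataclass(frozen=True)
class V2VClaim:
    """Hypothetical message: a neighbour claims to be `gap_m` metres ahead."""
    sender_id: str
    gap_m: float


def check_claim(claim: V2VClaim, radar_gap_m: Optional[float],
                tolerance_m: float = 2.0) -> Verdict:
    """Compare a broadcast claim with the car's own radar measurement."""
    if radar_gap_m is None:
        return Verdict.UNVERIFIED
    if abs(claim.gap_m - radar_gap_m) <= tolerance_m:
        return Verdict.CONFIRMED
    return Verdict.CONTRADICTED


if __name__ == "__main__":
    claim = V2VClaim("car-1", 30.0)
    for radar in (31.0, 45.0, None):
        print(radar, check_claim(claim, radar).value)
```

The third outcome, `UNVERIFIED`, is the hard case: when the car has nothing of its own to check against, the code still has to decide whether to act on the message or ignore it.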

Humans can second-guess whether to trust a particular signal, such as not taking a turn signal for granted.

The computer on the other hand is programmed to either trust it or not. It doesn’t have the ability to self-reflect and empathise with the other driver to assess from their behaviour whether the signals they’re getting are consistent with their intent.

If the computer is programmed not to trust the signal, then it won’t matter that the signal is there, and if it does trust it then it better be damn reliable or else people will die.

Unless there are more than 30k+ bugs per year?

This is a really good point. Overall growth due to collective learning will have a peak, but humans lack the ability nearly altogether. Almost no one actually studies history, or other people’s posts, to learn from them; they’re too arrogant.

Can anybody stop people from open-sourcing software to which they own all the copyright? I’m not in the US, but I guess this would be an infringement of free speech. Slap on some disclaimers and remove references to how to use it, so “officially” no usage is given at all.

Well the 3D printed gun people didn’t make out too well with that theory.

Oversold? Really? How many deaths per vehicle mile were there while these systems were in control of the vehicle and how does that compare to deaths per vehicle mile under human control? The goal, of course should be 0, but if the requirement is 0 you will quite literally never have self-driving cars.

We just won’t know until there are hundreds of people dead because of self-driving vehicles, to get enough of a statistical body to say anything with any certainty, and that’s going to take a long time. That’s because the deaths per miles figure is very low in general. 1.5 fatalities per 100 million miles traveled.
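How many miles is “a long time”? Assuming fatalities follow a Poisson process, the chance of seeing zero deaths in m miles at rate r is exp(-r·m), which gives the classic zero-failure bound (the “rule of three” at 95% confidence). The sketch uses the 1.5 per 100 million miles figure quoted above; `miles_for_zero_death_bound` is a hypothetical helper name.

```python
import math

# Human fatality rate quoted in the comment: 1.5 per 100 million miles.
HUMAN_RATE_PER_MILE = 1.5 / 100e6


def miles_for_zero_death_bound(rate_per_mile: float, confidence: float = 0.95) -> float:
    """Fatality-free miles needed before one can claim, at `confidence`,
    that the true fatality rate is below `rate_per_mile`.

    Assumes fatalities follow a Poisson process, so the chance of zero
    deaths in m miles at rate r is exp(-r * m)."""
    return -math.log(1 - confidence) / rate_per_mile


if __name__ == "__main__":
    miles = miles_for_zero_death_bound(HUMAN_RATE_PER_MILE)
    print(f"{miles / 1e6:.0f} million fatality-free miles just to match humans")
```

That is roughly 200 million miles with no deaths at all merely to show parity at 95% confidence (about 3 divided by the rate). Showing that a system is better than humans, or doing so after any fatality has occurred, takes more miles still.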

You can’t say much of anything from a handful of accidents, because each individual accident may involve any number of victims – maybe the car was loaded with people, maybe only the driver died, maybe it ran into a bus which veered off the road and killed 50 – that’s a possibility.

Most of the accidents, over half, are due to distracted driving, which is also where self-driving cars have problems, because they’re lacking in the ability to perceive and understand their surroundings, which is virtually the same thing: failure to notice dangerous situations and react to them.

I doubt they’re seeing the driver in the car in the next lane a little ahead, half-glancing over their shoulder and not catching your eye, so you slow up and, yup, they move into your lane. Seeing kids’ feet under a line of parked cars… seeing a ball appear in the road and expecting a kid behind it…

There is no reason for any of those not to be detected.

Yeah, but that’s like saying to the Wright Brothers…”There’s no reason that you can’t slap some kind of gas turbine on this and fly at 600mph”

I submit that none of that matters if the net deaths per passenger mile is 1/100 that of human piloted cars.

So once the next tesla drives about 999.9 million miles without killing someone, we can consider it safe enough.

Yeah, should be pretty safe when it gets out past Jupiter, very few people to hit out there.

Define “without killing someone”.

If a Tesla causes a huge pileup with 100 people dead, does that count as one or many? And how many?

It’s kinda like how Google claims their cars haven’t caused accidents because a rear-ending is always the fault of the person driving behind you – since they’re supposed to keep enough distance to stop – but that’s not what people actually do, and the Google cars are indeed causing accidents by braking erratically and not driving like people would.

“how does that compare to deaths per vehicle mile under human control?”

At the moment, very poorly. Tesla has (totally made-up number) a million miles under its belt with autopilot on, and one dead driver. If an average US driver puts in a million miles in a lifetime (ballpark right), he or she can expect to die at the wheel of a full-time-autopilot Tesla with a probability somewhere between 20% and 80%.
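Under a simple Poisson assumption, the comment’s made-up figure of one death per million autopilot miles turns into a lifetime risk via 1 - exp(-rate × miles). The sketch compares it with the 1.5 per 100 million miles human figure quoted earlier in the thread; both inputs are the commenters’ numbers, not measured data.

```python
import math


def lifetime_death_probability(deaths_per_mile: float, lifetime_miles: float) -> float:
    """P(at least one fatal crash) over a driving lifetime, assuming fatal
    crashes arrive as a Poisson process with a constant rate."""
    return 1 - math.exp(-deaths_per_mile * lifetime_miles)


if __name__ == "__main__":
    lifetime = 1e6          # the comment's ballpark lifetime mileage
    autopilot = 1 / 1e6     # the comment's hypothetical autopilot rate
    human = 1.5 / 100e6     # the human rate quoted earlier in the thread
    print(f"autopilot: {lifetime_death_probability(autopilot, lifetime):.1%}")
    print(f"human:     {lifetime_death_probability(human, lifetime):.1%}")
```

That gives about 63% against about 1.5%, inside the comment’s 20% to 80% range. With only one recorded death, though, the true rate is so uncertain that the point estimate says very little.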

If you told me that driverless cars killed 50% of their passengers over their lifetimes, I wouldn’t call that safe.

Google has gotten luckier so far, but they only have “millions” of miles under their belt. They need hundreds of millions or billions to start to get any reasonable statistics. And tech is changing so fast that there’s no way to get even a million driven under any given regime.

Short answer: nobody knows, but even one death makes it look really bad.

The Tesla number of miles with the autopilot on are apples to oranges, because the autopilot is only on when there’s the least chance of accidents and the highest number of miles: on the highway. It drops offline when the traffic gets any more demanding.

It’s just a lane-keeping assistant, which is why the German courts ordered Tesla to stop using the word “autopilot” in advertising the feature.

We know that Google is approaching it the Thrun way (establish level 5 capability, then let it loose in the wild) while Tesla approaches it the Tesla way (grow capability over time and become self-aware in the future).

Both approaches have merits and both approaches will cost lives.

If you don’t like the Tesla way, don’t buy one or be found in one.

Being killed by one as an innocent bystander in the wild – bad luck – but there are a couple hundred million humans out there with all sorts of soft&hardware versions in all kinds of configurations (under the influence of alcohol, drugs, distracted, etc. pp) and you have to take your chances with those daily as well.

Before autonomous Teslas get so common that your chances of being hit are more than ‘never’ the AI will be at lvl5 anyways.

The trouble is that if the system is generally accepted, then all the manufacturers will quickly have similar features, and they’ll all become dangerous because they will be pushed onto the market before they’re actually capable and ready.

SHOCK AND SURPRISE ECHOES THE WORLD!

As far as I’m concerned most people driving are a danger on the road to me and you and the guy walking on the sidewalk.

All cars should come with full 360° video recording. And we are already at a point where a car can automatically give the driver a ticket for speeding, reckless driving, and being impaired. You can also look at the toll roads; they know how fast you are driving on their roads. Simple math. Ticket the damn car and start removing points, and after so many points, that car should be impounded.
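The “simple math” for toll roads is average-speed (section) enforcement: distance between two gantries divided by the time between reads. A minimal sketch with hypothetical gantry times and limits. The logic only works in one direction: an average above the limit proves the car exceeded it somewhere, while an average below the limit proves nothing about the stretch in between.

```python
from datetime import datetime


def average_speed_mph(distance_miles: float, t_entry: datetime, t_exit: datetime) -> float:
    """Average speed between two toll gantries."""
    hours = (t_exit - t_entry).total_seconds() / 3600
    if hours <= 0:
        raise ValueError("exit read must come after entry read")
    return distance_miles / hours


def definitely_sped(distance_miles: float, t_entry: datetime, t_exit: datetime,
                    limit_mph: float) -> bool:
    """True only when the average alone proves the limit was exceeded."""
    return average_speed_mph(distance_miles, t_entry, t_exit) > limit_mph


if __name__ == "__main__":
    entry = datetime(2016, 12, 1, 8, 0)    # hypothetical gantry reads
    exit_ = datetime(2016, 12, 1, 8, 15)
    print(average_speed_mph(20, entry, exit_))     # 20 miles in 15 minutes -> 80.0
    print(definitely_sped(20, entry, exit_, 65))   # True
```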

And this would be a great way for the government to make a S#$t load of money. Then they can start fixing the roads better or make more jails. Or better yet give themselves more pay raises.

I’m going to Yerkes-Dodson all over your assertion that speeding is necessarily dangerous, for some people it’s safer.

From personal experience, I find that your concentration will lapse regardless of the speed. Driving tired is just as hard whether you’re going 55 or 75, and the latter will result in a worse accident. The adrenaline of speeding will quickly wear off and you begin to nod off just the same, because the driving itself isn’t much of a challenge until you’re going really really fast. Modern cars are too well-behaved and comfortable for that.

The only mitigating factor of driving faster is getting to your destination sooner, but the penalty is tunnel vision and a shorter window for reaction in an already compromised situation.
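The shorter reaction window and the worse accident at 75 mph versus 55 mph can both be put in numbers. The sketch assumes a 1.5 s reaction time (a round illustrative figure, not from the comment) and uses the fact that kinetic energy scales with the square of speed.

```python
FT_PER_MILE = 5280
SECONDS_PER_HOUR = 3600


def reaction_distance_ft(speed_mph: float, reaction_s: float = 1.5) -> float:
    """Distance covered before the driver even touches the brake
    (reaction_s = 1.5 s is an assumed value)."""
    return speed_mph * FT_PER_MILE / SECONDS_PER_HOUR * reaction_s


def energy_ratio(fast_mph: float, slow_mph: float) -> float:
    """Kinetic energy goes with v^2, so this is how much more energy a crash
    at `fast_mph` has to dissipate than one at `slow_mph`."""
    return (fast_mph / slow_mph) ** 2


if __name__ == "__main__":
    for mph in (55, 75):
        print(f"{mph} mph: {reaction_distance_ft(mph):.0f} ft before braking starts")
    print(f"energy at 75 vs 55 mph: {energy_ratio(75, 55):.2f}x")
```

At 75 mph the car covers about 165 ft before braking begins, versus about 121 ft at 55 mph, and a crash carries roughly 1.86 times the energy.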

I was in the room at the four letter car company where the decision was made to take out small businesses and hackers like geohot using the thugs they knew at NHTSA.

“The goal is to convince NHTSA to place enough of a regulatory burden on AVs such that we’ll be the only ones capable of complying.”

“They want more power and authority, as all bureaucrats do, so we’ll provide them an excuse by selling this as protecting the public. Here’s how we’re going to do it…”

Thus, the very existence of NHTSA is what stifles innovation by providing a target for all these big companies to lobby for increased regulation. Big companies LOVE regulations.

I’d try to be surprised but it’s all game theory at this point.

Besides, the last vestige of public interest ethics got pummeled out of them a few years back, when they put forward a proposal to co-develop mandatory safety systems among the big three, which could have resulted in lower consumer cost, and with the application of 3 sets of leading minds and know how, a superior, safer solution…. but .gov threatened to go all antitrust on their collective asses so that was the end of that.

I didn’t realize Tata Motors had a large presence in the US. Either way, the questions posed to GeoHot and Comma were softball at best. Any company or person that was willing to release a platform that actually was ADAS by the end of the year (TWO MONTHS AWAY) should have answers to said questions. In the end, they’re likely using end to end CNNs which are straight up nearly impossible to certify. This gives Comma a convenient way to back out of shipping a subpar product while pointing the finger at regulation — something everyone loves to hate.

The biggest issue is, it’s not just the drivers who are the guinea pigs here. The drivers get to opt-in, but everybody driving or walking nearby is taking the risk of early adoption here. This is why the penalties for drink driving are so high – you’re not just putting yourself in danger.

Other than spawning tech spinoffs that could make people BETTER and SAFER drivers, I have absolutely no use for self-driving cars. Mainly because we need FEWER cars on the road, not more. There’s a 200-year-old technology that has a better safety record than self-driving cars will ever have: railroads. I also think it’s a bit of a techno-wank, but it’s the new hotness and money is being dumped into it, with little consideration for how hard it will be to roll out, how hackable it will inevitably be, and what life will be like with intensified traffic.

Don’t worry, I only have about 20 years of driving left, til some young punk pries my gnarled arthritic hands off the wheel of the future’s equivalent of a Chrysler New Yorker (or more likely, a seriously battered pickup or jeep). It will take at least that long to be mainstream, anyway.

In maximal-benefit mode, they could use the same roads to move more people more efficiently. An experienced fuel-economy enthusiast can double the EPA mileage of many cars with no modifications, so self-drivers programmed to follow the somewhat tedious techniques involved could do that too. Plus, once self-drivers have reaction and perception times way better than humans’, they can “train up” behind each other and lose aero drag in the “draft”. …Then, when all the basics have been solved, and presuming we’ve gone all-electric, there will be possibilities for power pickup and power sharing in rolling “clouds”…

So yeah, there’s potential to improve energy usage and efficiency and to avoid despoiling the environment with new roads. Whether it will go that way or not is debatable.

As for railroads, unfortunately a lot of that infrastructure has been lost since the golden age, and what’s left is probably at rip-it-up-and-start-over status when you think about high-speed, efficient passenger networks. You can say “It’s twice as safe per mile!”, but that forgets that, because of the nodal topology with the nodes mostly in the huge major cities, you may have to travel two or three times the miles if where you need to go is not one of those.

There are traffic infrastructure improvements that are possible independent of self-driving cars. If we do make it to ultra-smart systems that do drafting and such, it’s as if the cars were coupled, and then we’d wake up to the fact that we’ve just reinvented “buses”, but just for those who can afford self-driving cars.

Can you imagine what city and expressway traffic would look like, then? Forget about sharing the road with bicycles; pedestrians would definitely be second-class citizens. A well-aimed cinderblock would cause massive chaos, injuries, and deaths.

If you have enough inter-city traffic that self-driving cars are more efficient, then you have more than enough to make trains cost-effective.

Self-driving cars are like SUVs: many want ’em, few really need ’em, and all told they’re not going to be a net benefit to society, other than some useful spinoffs. But it’s a free market, companies sense an opportunity, and they’re going to concentrate on this rather than on some harder choices about how to design cities and plan for a reduced-carbon future.

Railroads suck in terms of usefulness, especially for passenger transport. There’s no way you’re going to have railroads for “heavy” trains (light trains are limited in speed by law) to more than just a few points, even in a big city, meaning you’ll still need a car or bus at some point, which means you could have used it in the first place and not had to change modes of transport.

Freight trains are a whole different game, as the throughput is much better, but they’re still limited to heavy industry or transport between big nexuses (mostly ports). From nexus to endpoint, you’ll still likely have to use a normal road.

Last but not least, railroads are stupidly expensive to build, as they can’t handle anything close to the maximum slope allowed on normal roads.

Traditional passenger trains are a thing of past, too slow and rigid to cope with modern demands without being overly expensive.

Although I think the idea behind what George and Comma have built is awesome and a leap forward for both autonomous cars and AI, I have to wonder if he was just waiting for a scapegoat. It seems to me he bailed at the first sign of trouble. If he was truly intent on bringing this to market, he would have faced much greater challenges than a strongly worded letter from a government oversight agency.

I hope this doesn’t damage the public perception of DIY autonomous vehicles or of AI too much.

“Comma.ai’s downgrade to driver-assistance system really begs the Tesla question.”

No, it doesn’t: http://begthequestion.info/

Cool. Thx for the info.

“is making people inattentive at the wheel…” Isn’t that the very reason for an auto-driving car? Nobody is paying attention as it is, so why not make a car that will do it for them? It’s either that or make cell phones explode, like in Law Abiding Citizen, when a driver puts a phone to their ear while driving.

I think you’ve found a market for those recalled, then cancelled, Samsung phones.

Much of this fuss is missing out on some critical factors. This article touches on a couple.

1. If someone gets killed, who pays? No ordinary citizen wants to get stuck paying when the real problem was someone else’s software. Nor do they want to see their child run over because, as Mercedes recently announced, the software would favor their rich driver over that poor kid on a bicycle.

2. Assisted driving is nonsense. It is easier and far more enjoyable to drive yourself than it is to sit there, bored to tears, waiting to take over in an instant if the software fails.

There are a host of other factors that matter too, including the fact that many of us not only enjoy driving in most contexts, but we’re quite good at it. The statistics about accidents are mostly about a small slice of the population who drive badly and often DUI.

Much of this madness is driven by corporations enchanted with getting even richer by replacing all driving. That’s why much of the debate hints at later mandates forcing everyone to buy an auto-driving car.

It’d make far more sense to concentrate on specific areas of driving that people hate: either a long daily commute on crowded freeways or long-distance travel on freeways. Controlling traffic in lanes dedicated to auto-driving on limited-access highways is much easier than managing it in residential neighborhoods filled with kids on bicycles.

And it is the failure of the advocates of these cars to recognize that distinction that leaves me suspecting they’re fools whose schemes are doomed to fail.

Whoa. You get it.

Re #1: I suspect that if self-driving cars become mainstream enough, insurance companies would somehow accommodate them. And/or car companies would quietly settle quickly whenever there was an injury or death, the same as they already do now with vehicle defects.

To be clear, I think that self-driving is an interesting technical challenge and worthy of being solved. I just don’t see a near-ubiquitous application as being of net benefit to society.

The self-driving system should start out as just an assistance system.

Something that helps when something happens that can endanger you and others, such as falling asleep behind the wheel, having a heart attack or stroke, or anything else that would make the car lose control.

After a while, we can talk about letting the car drive itself while we read a book.

The self-driving car will change the game we have been playing so far with vehicles.

We are going to be able to synchronize and organize traffic in order to reduce traffic jams.

Even those who don’t own a car will benefit. Just imagine: your app calls a service such as Uber and a car shows up at your door.

Asking “When Robot Cars Kill, Who Gets Sued?” is a dumb question. It is like asking, “If a safety belt might strangle someone one day, should we ban all safety belts?”

Actually, the legal issue is very, very simple: under true capitalism and a free market, you are the sole owner of your car once you’ve bought it, and therefore you are the responsible person if the car kills someone. This way, if self-driving cars are really dangerous and present a high risk, people will not buy them, and if they present a LOWER risk than human-driven cars (which we know to be the case already), they will take over because people will buy them. The free market should be the only one to decide this, rather than government agencies.

Of course, in a free market, insurance companies can offer contracts for self-driving cars, and they would probably be expensive at first because of the new technology. But when these companies realise that self-driving cars are actually less dangerous, the prices will drop below those for human-driven cars and will facilitate the adoption of the new technology, if it’s really a good one.

Their final work was basically improvements to OEM assist, not “self-driving”. Who cares? It was over-hyped.

No wonder. Check this out: spontaneous acceleration of a Model X.

https://investoralmanac.com/2017/01/01/korean-celebrity-sues-tesla-claiming-model-x-sped-up-on-its-own/