The new Raspberry Pi 4 is out, and slowly they’re working their way from Microcenters and Amazon distribution sites to desktops and workbenches around the world. Before you whip out a fancy new USB C cable and plug those Pis in, it’s worthwhile to know what you’re getting into. The newest Raspberry Pi is blazing fast. Not only that, but because of the new System on Chip, it’s now a viable platform for a cheap homebrew NAS, a streaming server, or anything else that requires a massive amount of bandwidth. This is the Pi of the future.

The Raspberry Pi 4 features a BCM2711B0 System on Chip, a quad-core Cortex-A72 processor clocked at up to 1.5GHz, with up to 4GB of RAM (with hints about an upcoming 8GB version). The previous incarnation of the Pi, the Model 3 B+, used a BCM2837B0 SoC, a quad-core Cortex-A53 clocked at 1.4GHz. Compared to the 3 B+, the Pi 4 isn’t using an ‘efficient’ core, we’re deep into ‘performance’ territory with a larger cache. But what do these figures mean in real-world terms? That’s what we’re here to find out.

Pi 4 is Very Different Hardware

The standard for benchmarking a Raspberry Pi and other single board computers is Roy Longbottom’s Raspberry Pi benchmarks. Yes, you have to compile them. When the Raspberry Pi 3 Model B+ was released, we could disregard many of these benchmarks as the memory chip and GPU were identical to the Raspberry Pi 2; there simply would be no meaningful difference apart from clock speed, which wasn’t very significant to begin with. This time, it’s different. The Pi 4 brings an entirely new SoC, a new GPU, new RAM, and new everything. The question is, does this matter? These tests will compare the Raspberry Pi 4 Model B to the Raspberry Pi 3 Model B+.

CPU performance

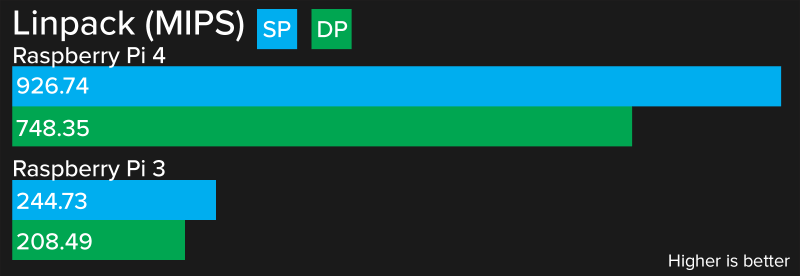

The LINPACK test simply solves linear equations and is a good enough test for raw CPU performance. The test comes in two variants, single and double precision. The huge increase seen in the Linpack benchmarks is a direct result of the change in SoC. The Raspberry Pi 3 featured a quad-core Cortex-A53, the ‘efficient’ core in the family. The Cortex-A72 found in the Raspberry Pi 4 features a larger cache and the Linpack measurement is in part a measurement of cache size.
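For a feel of what a LINPACK-style test actually does, here is a minimal Python sketch: it builds a dense system of linear equations, solves it by Gaussian elimination, and converts the elapsed time into MFLOPS using the conventional LINPACK operation count. This is not Roy Longbottom's code, the function names are made up for illustration, and interpreted Python will report numbers far below a compiled benchmark, so treat it as a way to see the workload rather than to compare boards.

```python
import random
import time


def solve_linear_system(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting.

    Works on copies, so the caller's lists are left untouched.
    """
    n = len(b)
    a = [row[:] for row in a]
    b = b[:]
    for col in range(n):
        # Partial pivoting: move the largest remaining entry onto the diagonal.
        pivot = max(range(col, n), key=lambda r: abs(a[r][col]))
        a[col], a[pivot] = a[pivot], a[col]
        b[col], b[pivot] = b[pivot], b[col]
        for row in range(col + 1, n):
            factor = a[row][col] / a[col][col]
            for k in range(col, n):
                a[row][k] -= factor * a[col][k]
            b[row] -= factor * b[col]
    # Back substitution on the upper-triangular system.
    x = [0.0] * n
    for row in range(n - 1, -1, -1):
        tail = sum(a[row][k] * x[k] for k in range(row + 1, n))
        x[row] = (b[row] - tail) / a[row][row]
    return x


def linpack_style_mflops(n=100, seed=1):
    """Time one n x n solve and convert it to MFLOPS (hypothetical helper)."""
    rng = random.Random(seed)
    a = [[rng.uniform(-1.0, 1.0) for _ in range(n)] for _ in range(n)]
    b = [sum(row) for row in a]  # chosen so the exact solution is all ones
    start = time.perf_counter()
    x = solve_linear_system(a, b)
    elapsed = time.perf_counter() - start
    flops = (2.0 / 3.0) * n ** 3 + 2.0 * n ** 2  # conventional LINPACK count
    return flops / elapsed / 1e6, x
```

Calling `linpack_style_mflops()` on each board gives a relative figure. The inner loops walk whole matrix rows again and again, which is why cache size shows up in the result.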

The results show a significant gain over the Raspberry Pi 3. This is only a test of how fast a computer can multiply, though, and there’s much more that goes into the speed of a system. Memory bandwidth, for example.

Memory Bandwidth

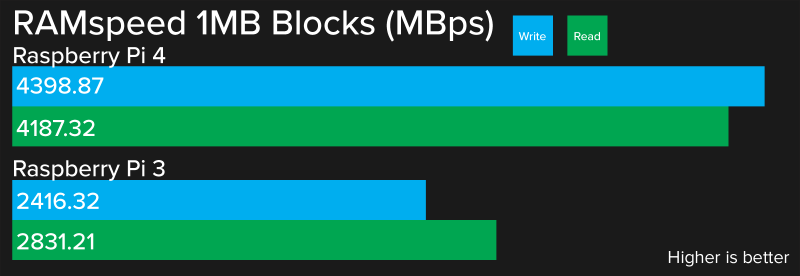

For years, the Pi has had a 32-bit memory bus, although this really didn’t matter because you could only get a Raspberry Pi 3 with 1GB of RAM. Years ago, the RAM was soldered directly onto the SoC, which meant production of that model would stop when production of that RAM chip stopped. RAM has always been the limiting factor for the Pi. This changed with the Pi 4. We now have an SoC with more data and address lines going to the RAM. Oh, and we have more than 1GB of RAM now. How does it perform?

The Pi 4 shows a significant increase in memory bandwidth, but that’s burying the lede. You can get a Pi with 4GB of RAM now, and the 8GB version has been unofficially announced in official spec sheets. If I were to guess I’d say we can expect the 8GB version in a year or so. As for memory bandwidth? Who cares — we have four times the RAM now.
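If you do care about the bandwidth number, a rough figure is easy to get on your own board. The sketch below is not the benchmark used for the chart above; it simply times a large buffer copy in Python, and the function name and default sizes are assumptions chosen for illustration.

```python
import time


def copy_bandwidth_mb_per_s(size_mb=64, repeats=5):
    """Return the best observed copy rate, in MB/s, for a size_mb buffer."""
    # Fill with a non-zero byte so every page is really backed by RAM.
    src = bytearray(b"\xa5") * (size_mb * 1024 * 1024)
    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter()
        dst = bytes(src)  # one full read of src plus one full write of dst
        elapsed = time.perf_counter() - start
        best = min(best, elapsed)
        del dst
    return size_mb / best
```

Keep the buffer much larger than the CPU cache, otherwise you are measuring cache speed rather than RAM. On a 1GB board, 64 MB is a reasonable default.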

Networking far Surpasses Pi 3 in Wired and Wireless Performance

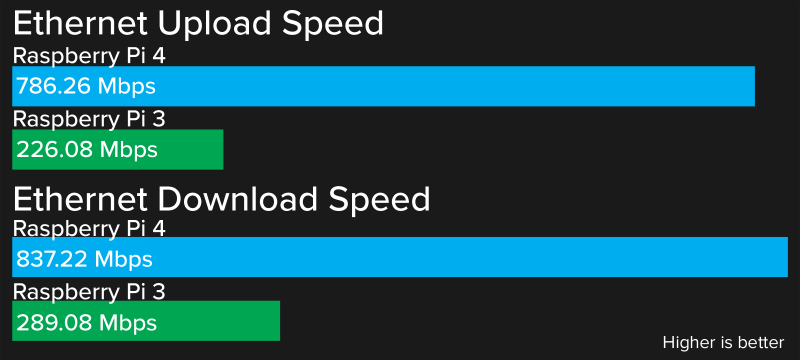

Since 2012, there has been one problem with the architecture of the Raspberry Pi, particularly the popular Model B: the USB ports and the Ethernet are all hanging off a single USB hub. From the first Raspberry Pi Model B to last year’s Raspberry Pi 3 Model B+, the USB ports and Ethernet port were controlled through a LAN7500-series chip. This chip turns a single USB connection (on the SoC) into a few USB ports and an Ethernet controller. While this is a great part to add ports to a System on a Chip, there is a bandwidth limitation: everything must go through a USB 2.0 connection, therefore the maximum combined throughput will be 480 Mbps. Gigabit Ethernet was impossible, no matter what it says in the LAN7500 datasheet, and any use of the USB connections would sap bandwidth from the Ethernet.
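The arithmetic behind that bottleneck fits in a few lines. This sketch uses only the 480 Mbps figure from the paragraph above and the nominal 1000 Mbps of Gigabit Ethernet; the function name is hypothetical, and real throughput will be lower still because of protocol overhead.

```python
USB2_SIGNALLING_MBPS = 480      # shared USB 2.0 link, figure quoted in the text
GIGABIT_ETHERNET_MBPS = 1000    # nominal line rate of Gigabit Ethernet


def ethernet_ceiling_mbps(other_usb_traffic_mbps=0):
    """Upper bound on Ethernet throughput when it shares one USB 2.0 link."""
    shared_budget = max(USB2_SIGNALLING_MBPS - other_usb_traffic_mbps, 0)
    return min(GIGABIT_ETHERNET_MBPS, shared_budget)


print(ethernet_ceiling_mbps())       # 480
print(ethernet_ceiling_mbps(200))    # 280
print(ethernet_ceiling_mbps() / 8)   # 60.0 (megabytes per second)
```

Even with nothing else plugged in, the ceiling is less than half of what the Ethernet jack advertises, and a busy USB drive eats directly into it.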

The good news is that the new SoC in the Raspberry Pi 4 has an Ethernet controller:

The Ethernet connection is saturated by all accounts. This was a test pinging, downloading, and uploading from speedtest.net. I’m lucky enough to have fast (and cheap) fiber, and by every account the Raspberry Pi 4 is pulling down the bits as fast as my router will allow. The Raspberry Pi 3, however, is hindered by the USB to Ethernet controller. The Raspberry Pi 4 is now a competent 4K streaming box, and not just because this is the Raspberry Pi that supports 4K HDMI.

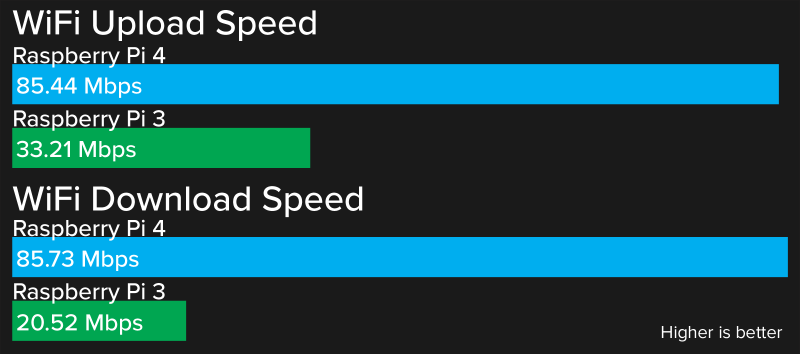

But what about WiFi? The Raspberry Pi 3B+ has on board WiFi and Bluetooth thanks to a CYW43455, the Cypress chipset hidden underneath the lovely RF shield embossed with the Raspberry Pi logo. The Raspberry Pi 4 loses the embossed RF can, but does it perform better than the 3B+?

The Wireless in the Raspberry Pi 4 is better. While wired connections are always better, the Raspberry Pi 4 is no slouch pulling 85 Mbps from the router across the room. The Raspberry Pi 3 could only manage between 20 and 30 Mbps in the same environment.

Conclusion: Responsive Enough to Use as a Daily Driver

The Raspberry Pi was originally designed as a small, cheap Linux computer for education, the idea being that if you gave a kid a computer, they would learn STEM, or something to that effect. Computer education is mostly one of osmosis, though; you can’t tell someone how to code, there must be hands-on time. You can’t teach someone how to use a spreadsheet, you need hands-on time. Until now, the Raspberry Pi platform has lagged behind traditional desktops, laptops, and Chromebooks in terms of power and speed. This lessens the impact of the Pi in education.

Now the Raspberry Pi is on par with any desktop experience. If the Raspberry Pi 3 is a capable computer to shove underneath your workbench when you need to look something up real quick, the Raspberry Pi 4 is a capable daily driver. You could very well use a Raspberry Pi 4 as your main computer. It’s snappy enough, there’s enough memory, networking is great, and it’ll do everything you want it to do with the same responsiveness as a thousand dollar desktop.

You are comparing RPi 4 to RPi 3, but could you please compare RPi 4 to NanoPi M4 ($50), Rock Pi 4 ($64) and Odroid XU4 ($49) in terms of performance?

I currently like the Odroid-N2 ($79) with 4GB RAM. It is about 10-20% faster at number crunching than the XU4, which is still better than an RPi4 at number crunching, and the USB 3.0 port actually supports a 5000Mbps signalling rate, unlike the RPi4 at a maximum of 4000Mbps because it is x1 PCIe. OpenCL is about 25% faster than the XU4. The SoC used is an Amlogic S922X processor (12nm), which has a quad-core A73 (1.8GHz without thermal throttling) and a little dual-core A53 (1.9GHz).

Odroid hardware might be marginally better but it’s also more expensive, and Amlogic software support is miles away from being as good as Raspberry Pi, which is a big factor for a _lot_ of RPi buyers.

> “marginally”

The N2 has roughly two to four times the number crunching performance of an RPi4. And even the older XU4 has roughly two to three times the number crunching performance of an RPi4. So it is not like you are paying more for “marginally” better.

I’ve never had any issue with software, but I have been using UNIX in one form or another for a very long time; I started on DEC Ultrix. When it comes to buying, I would be very atypical. I read everything I can about a product and dig deep into the details to find all the known flaws before I pull the trigger on buying.

It is also double the price.

@Marcello Is it really double where you are, so that a 4GB RPi4 is only $40?

Because where I am the prices are:

RPi4 with 4GB of RAM (excluding vat and shipping) $55

Odroid-N2 with 4GB of RAM (excluding vat and shipping) $79.00

The RPi has a huge community of users. Find me more than 50 forums dedicated to your Odroid. I dare you.

To be clear, I know the RPi 4 is worse on performance compared to the latest Odroid, but the only comparable board in the same price range (here in my place) as the RPi4 is the Odroid C1+ (literally the same lowest price I can find at my local online shop). It’s just stupid to buy anything other than an RPi or Orange Pi here; everything seems to have been jacked up by the distributors or something like that.

N2 suffers from a USB saturation bug that makes raid and NAS operation impossible at least at USB3 high speed.

> You are comparing RPi 4 to RPi 3, but could you please compare RPi 4 to NanoPi M4 ($50), Rock Pi 4 ($64) and Odroid XU4 ($49) in terms of performance?

No proper support for the mainline kernel (except perhaps a very experimental one in the case of NanoPi). No open-source graphics acceleration, and closed-source acceleration only on a specific kernel version, usually eons old. No promise of long-term availability of the hardware.

On the other hand, VC6 (the GPU in the RPi4) has documentation and an open-source graphics driver. Any new RPi feature missing in the mainline kernel is usually just a release away. The RPi4 has long-term availability and will get a Compute Module for integration into other products.

So they are basically incomparable. Odroid and NanoPi are wholly other kind of SBCs than RPi.

Allwinner mainline support is stable except the GPU part. Most embedded applications don’t need that, but if you do then these SBCs are not for you. The VPU has been sufficiently reverse engineered to be usable. The other peripherals are basically completely supported.

You are wrong on almost all counts. FriendlyELEC has been making ARM single board computers since before RPi existed, and they produce LTS boards specifically.

SoC’s that are mainline supported, with images available from projects like Armbian:

RK3399, RK3328, RK3288, S905X, S912, and others.

Closed source graphics drivers are not long for this world, and honestly, compared to closed source firmware that lies about processor frequency and has absolute control of the hardware, they are not such a big deal.

Late 2021: we have open source drivers for many non-potato-4 platforms and they perform excellently. Also, we now understand how lacking the VideoCore 6 is in features; you can’t use dxvk or gallium9 because of that. Also, the rk3399 can run blobless, unlike the potato4, and that should matter if you are a “hacker”. While the rk3399 can’t address 8 GB of RAM, it has two times the RAM bandwidth of your potato 4, and that explains how badly the rpi4 performs on X compared to Wayland. Comparing I/O, the rk3399 still has six times the I/O of your potato4…

Rpi4 is the most overrated sbc ever.

Lol, loved the comment, will the rpi4 emulate/play games as good as the s922x? I’m considering the 922 for emulating.

This is a fairly good improvement.

Though, I already have a hard time making my Raspberry Pi 3 B+ struggle CPU-wise, because the size of its RAM is usually the main bottleneck. After all, most modern applications do not need all that much performance, but tends to eat RAM for breakfast…

“After all, most modern applications do not need all that much performance, but tends to eat RAM for breakfast…”

This is my annoyance with most modern day programs as well. From what i can see, the blame can be pointed to the over reliance of frameworks or the incorporation of legacy code that is only needed for edge cases.

It is annoying that modern day developers are being taught to just use frameworks to make things easier. There isn’t a problem in doing so, but there is a problem in that there is no evaluation of cost/benefit and i have seen a few times where a large and comprehensive framework is being used for just one function. While theoretically this shouldn’t increase the ram foot print of the program, it often does due to inter-dependencies in the frame work that call on other functions or even whole other frameworks to be loaded. This is doubly true for web applications.

The other half is the legacy code that is often included to either deal with edge cases or just because no one has removed it from the code-base. Take a look at modern day cad packages, the solidworks install has jumped in size repeatedly and is now pushing 16GB and while i understand that it is an incredibly complex software package, the feature set change does not mesh with the size change. This always tends to happen with software developers that are more concerned with bolting on new features rather than a proper architectural vision, Microsoft windows is a great example of this with all of the problems plaguing computers with each new update. As every new version comes out bugs are fixed and new features are bolted on but no one goes back to clean up the garbage as cleaning up that garbage is not a sell-able feature and thus a cost center that must be minimized in order to maximize profits. Companies have continually kicked the can down the road because they can usually rely on hardware getting better and better but at some point it will come to a plateau, we are very close to it as can be shown by intel trying to rewrite moore’s law.

Every release of Windows (with the notable exception of Windows 8.0) has been better than the previous version – they better manage available resources, offer better security and usually improve the UI as far as typical users are concerned.

Over time, Windows has dropped certain archaic features, but it can take a decade for those features to leave the code base.

I haven’t seen Windows installations balloon in size over the past few releases.

I find that Windows goes back and forth between good and bad. Windows 8, ME, and even 10 are all bad, and they happened between XP, 7 and 8.1, but the entire argument is more a matter of personal taste, except for the issues that have been cropping up with Windows Update in Windows 10. For example, manually checking for updates used to put you on a test release of Windows. I think they may have changed that, but it caused a lot of headaches for people who have been taught since Win 95 to regularly check for security updates. Windows 10 is an absolute nightmare as Microsoft tries to transition Windows into a subscription service; I have never heard of so many issues with their build updates when compared to the service packs of 8, 7 and XP. The easiest way to get a bead on Windows versions is to have to support the average user in using them, and from my experience 10 is definitely not better than 8 or even 7.

as for your last two statements, how could the installations not balloon if new features are being added every year yet it can take a decade for those features to leave the code base. Balloon may not be the right word to describe it but the sizes have generally increased over time.

Has Windows Vista been removed from the timeline?

Don’t mention Vista please. Or ME for that matter. I’m still in therapy.

“Has Windows Vista been removed from the timeline?”

It would deserve this, but that would be too good to be true :-)

In my opinion Win 7 is still the best Windows up to now. Better than 10 or 8.

I agree Win7 is better than WinH8 or Win10.

(heck, WinXP is probably better than those two!)

Windows ME was worse than 8 or Vista. Windows ME was hard to install and hard to use because it corrupted itself just by running. And one didn’t have to do anything to cause ME to break – simply running it would be enough for it to corrupt its system files. And this was based on legendary Windows 2000 and NT.

Vista was very good at bloating over time to absurd sizes. The WinSxS folder in Vista reached 15-25GB in size in one year. I once ran out of disk space because of that: the Windows folder reached 45GB. Win10 holds WinSxS steadily at 7.2GB in my setup…

To be fair to Microsoft & history in general: ME wasn’t based on Win NT OS. It was truly the end of the line for the original DOS based Windows. XP was the first “consumer” Windows based on the NT line, which then moved to Vista, then 8 and now 10. All of this said doesn’t change the thrust of your post: ME was bad…things got way (IMHO) better with XP and then horrible with Vista…

“Windows ME was worse than 8 or Vista”

That’s really saying something.

One of the reasons ME was so horribly bad was that they originally planned to make a consumer version of Win2000 available with the NT core, like they did with XP later. It was originally called Neptune (internal, same as Chicago was Windows 95). But they changed their minds at the last minute and quickly hacked together a Win98 redesign.

Personally I think that was a mistake, I don’t think putting consumers on the NT core would have caused as many problems as Windows ME did. Win 2000 was actually satisfyingly stable and I preferred it to XP.

do you not remember vista or windows me? those days were awful.

win 10 was the greatest thing since xp.

Those days were fine for me. I decided quickly not to touch them with a barge pole.

For the everyday user 8.1 beats the hell out of 10. Just the experience of opening a .jpg sucks on 10. How can it take that long? And why must the cursor hover over the corner for a while before you let me close the infernal app.

Then you add updates…!

Notable exceptions were also ME and Vista.

About balloon size… I am just looking at the MS site for requirements, and it asks for 16GB for 32-bit and 32GB for 64-bit. That is an insane amount for the base system only.

Did someone say frameworks?

Frameworks make everything easy!

Just use druffle to interface with puffle, which needs duffle to compile luffle, which enables drabble to build babble, which needs dabble to compile dribble, which enables puffle to fluffle the socksifier.

Each framework introduces between s , and mSec of latency, consumes 100’s of MB of memory to do its small trivial task, and has at least 3 severe vulnerabilities.

OH,you’re back at it again…

I almost missed reading your point, because my eyes started to glaze over when I read druffle/puffle…

I haven’t used Ashton-Tate Framework since the early ’90’s.

I once tried to install something with pip and it had a dependency on NumPy. This obviously took forever because NumPy is very large; it’s a powerful full-featured toolkit for scientific computation. This app, however, had nothing to do with scientific computation so I was very curious why it needed the entire NumPy library.

Turns out it was just using that several-hundred-MB library to convert an array of bits to bytes. That’s it. Including a library with probably a million lines of code, to do one thing that you could do in like six lines of code. Such waste.
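For the curious, the handful of lines in question might look something like the sketch below. The comment does not say which NumPy call the app used or which bit order it expected, so the function name, the most-significant-bit-first packing, and the zero padding of a short final byte are all assumptions here.

```python
def bits_to_bytes(bits):
    """Pack an iterable of 0/1 values into bytes, most significant bit first."""
    bits = list(bits)
    bits += [0] * (-len(bits) % 8)  # pad the final byte with zeros
    out = bytearray()
    for i in range(0, len(bits), 8):
        value = 0
        for bit in bits[i:i + 8]:
            value = (value << 1) | (bit & 1)
        out.append(value)
    return bytes(out)


print(bits_to_bytes([1, 0, 0, 0, 0, 0, 0, 1]))  # b'\x81'
```

No dependencies, nothing to compile, and it installs exactly as fast as the rest of the script.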

Yes, improper use of libraries, frameworks, DLL files, etc can lead to some intense RAM utilization before one even realizes what happens.

I myself generally do some work with Node.js, it does have some overhead, especially when a program uses a lot of libraries. But if one uses the tools the libraries provides, it can at a lot of times be a fairly efficient way to do things.

Some people have stated I should use Python, or Lua, but these are practically doing the same thing as Node does with Javascript, why should I have 3 different environments for doing the same thing? And this is the next problem, a lot of programs/systems can contain a bunch of different environments for different languages that does the same thing in the end…

But yes, a lot of larger programs tends to eventually just carry a lot of unneeded luggage and could be in need of a restructuring. But cleaning up an application is costly, mainly since it takes time and doesn’t give much of an improvement to performance. Though, would be nice to see developers clean up their RAM wasting every now and then.

Indeed, it annoys me too that more and more applications are switching to easy-for-the-developer but extremely wasteful frameworks like Electron. Even key Microsoft applications are using it now like MS Teams – at least the Mac version. Just starting it up uses 700MB+. It’s ridiculous.

The biggest Problem is still existing.. no SATA / M2 SSD Port

Actually you can run SSD via USB 3.0 and it flies: https://www.tomshardware.com/news/raspberry-pi-4-ssd-test,39811.html

The full-speed USB 3 ports can, for most applications, take the place of a SATA port. An SSD in a USB 3 carrier can feed lots of fast data to the Pi.

I do not understand why that is always listed as a problem.

Everyone who wants sata or m.2 wants to use this as a storage device, which it is objectively not designed for.

To counter, there are other cheap products that actually are designed specifically for this use-case, so why would rpi spend time creating a product outside their target market, that is already occupied by dedicated competitors?

Look at the Odroid HC2, or even the single bay Synology or Qnap devices. Or if you really want a board, look at the Qboat Sunny.

And if you really must have an rpi as a storage device, wait until the compute module 4 comes out, and hopefully it has pcie and you can build a carrier board that will have m.2 or even a pcie sata controller.

I dunno. At this point, the compute module isn’t really competitive with just using a different SoC and rolling your own. So far as I can tell the only real advantage to the Pi line *FOR EMBEDDED SYSTEM USE* is Raspbian (there are a ton of other uses for the Pi where the Pi line is manifestly well suited – uses that are arguably the foundation’s target market anyway).

For enterprise I would agree because there would be cost savings in volume or even designing an entire board simply to reduce the number of assemblies.

For hobbyist though it is more appealing, as you can build a *something* and then if you wanted to scale out or sell it you could move to a compute module and get some savings in quantity vs using a full solution and hats.

I really want to get something built up for motioneye OS with a camera and POE, as using the compute module would get me POE, SD, and CSI without a whole lot else to keep it pretty small, but not need to use wifi like the pi-zero.

Yes, this is a subject Brian mentioned in his original article about the Pi 4:

https://hackaday.com/2019/06/23/raspberry-pi-4-just-released-faster-cpu-more-memory-dual-hdmi-ports/

The RPi org put together a chart showing that the Pi 4 B + a USB-attached SSD can achieve greater than 325 MB/sec read and write performance.

https://www.raspberrypi.org/magpi/raspberry-pi-4-specs-benchmarks/

RPI needs to wake up and realize that using uSD is just plain silly. If and when they do wake up and put eMMC on there, then it will be worthwhile, as eMMC is much, much faster than any uSD ever will be.

I can go to the corner store and buy a 32 Gig uSD card for under $10 (actually under $5 if I get in my car and drive to Microcenter), eMMC is not nearly as cheap or ubiquitous.

IMHO the Pi needs removable storage, so on-board eMMC is out, and removable SSD M.2 cards are comparatively huge and expensive.

How would you accommodate eMMC on the current Pi board? Where would the chip go? How much would cost increase?

Would a USB3 flash drive like this ( https://www.amazon.com/dp/B07855LJ99/ref=cm_sw_em_r_mt_awdb_cZFjDbJ0B12SW ) be faster than a class 10 uSD card? You can set the Pi to boot off that port if speed is an issue.

Look how the Orange Pi PC Plus does this: both SD and eMMC, and a simple script to copy a bootable system from SD to eMMC.

I guess people are asking for eMMC because it’s generally more reliable than microSD. Many (including me) had problems with remote Pi devices because microSD has failed. With industrial grade microSD and external SATA or USB3 key this issue can mostly be mitigated, but eMMC stays on the wishlist for some new iteration of Pi.

If it really matters, you can use a compute module and put better flash on the host part.

But I suppose at that point the better move is to design a board with a different SoC and go that way.

From what I have read, eMMC, like everything else, can and does fail. Unless the eMMC device uses a socket on the board, any failure would mean purchasing a new board. The way the boards are made, it would be super difficult for most people to change out an eMMC module.

It is important to use eMMC intended for firmware storage. It is much more reliable than bulk data storage, but also more expensive per bit.

PS if you want a bulletproof eMMC look at swissbit. Not so cheap but never seen corruption, not even when intentionally trying to cause it.

Raspberry Pi organization shows an SSD on the USB 3 port achieved in excess of 325 MB/sec – why is faster storage needed?

See: https://www.raspberrypi.org/magpi/raspberry-pi-4-specs-benchmarks/

I guess he’s asking for something like the socketed 8-bit bandwidth eMMC chips Odroid uses

Well when they add the proper NIC firmware (with PXE support), you will be able to network boot (with PoE, you could just plugin a new/replacement RPi4 and boot). And if you have ever network booted a machine, from a RAM disk to a RAM disk across a network, it can be damn fast. And no corruption caused by too many tiny writes to RAM disk.

Then again, the RPi isn’t designed for “reliability” in a sense that this was made as a tool, and not really a desktop replacement. RPi isn’t trying to replace your daily drivers, really, that’s why I’m super confused why a lot of people keep looking for features that aren’t really relevant to learning how to code, or use Linux.

In addition it gives users the ability to switch out storage as quick as you can find a uSD than (1) getting stuck with a fixed eMMC storage, or (2) buying eMMC modules that aren’t as readily available.

I think the RPi4 is the best upgrade since RPi1, and the only thing I hate about it is the removal of the full sized HDMI.

Thanks for the testing and writeup. With all the hype, it is interesting to see what the improvement actually looks like.

Also, what’s with switching up the layout of the charts half way through the article? Who does that?

I also say thanks for this information, I was considering getting a couple of pi3’s in the hope that they may be unwanted and therefore cheaper, but may reconsider and get a pi4 to run the K40 laser.

One gotcha I’ve found is that you can’t boot from USB yet.

You can’t use the same method as the Pi 3.

We have to wait for a future update for booting from an external drive as I wanted to do for my zoneminder pi box.

It should not be too difficult to use the external drive as a root device, even if you don’t boot off of it.

Officially, that’s a typo in the leaflet. https://www.raspberrypi.org/forums/viewtopic.php?f=63&t=243372&start=350#p1485310

Any idea how this compares to a Celeron N2807? This might make a good replacement for my NGINX reverse proxy: https://engineerworkshop.com/2019/01/16/setup-an-nginx-reverse-proxy-on-a-raspberry-pi-or-any-other-debian-os/

Now if only they’d fix the USB-C power non-compliance issue…

Supposedly gonna happen when they order a fresh batch of PCB’s for the next big run.

FYI: https://arstechnica.com/gadgets/2019/07/raspberry-pi-4-uses-incorrect-usb-c-design-wont-work-with-some-chargers/

>Benson Leung, an engineer at Google and one of the Internet’s foremost USB-C implementation experts, has chimed in on the Pi 4’s USB-C design too, with a Medium post titled “How to design a proper USB-C™ power sink (hint, not the way Raspberry Pi 4 did it).”

>We reached out to Raspberry Pi about this issue and were told a board revision with a spec-compliant charging port should be out sometime in the “next few months.”

Ars Technica…

“Raspberry Pi admits to faulty USB-C design on the Pi 4“

“I expect this will be fixed in a future board revision,” says co-creator.

Ron Amadeo – 7/9/2019, 11:20 AM:

“…The Pi 4 was the Raspberry Pi Foundation’s first ever USB-C device, and, well, they screwed it up…”

“…After reports started popping up on the Internet, Raspberry Pi cofounder Eben Upton admitted to TechRepublic that “A smart charger with an e-marked cable will incorrectly identify the Raspberry Pi 4 as an audio adapter accessory and refuse to provide power.” Upton went on to say, “I expect this will be fixed in a future board revision…It’s surprising this didn’t show up in our (quite extensive) field testing program.”

https://arstechnica.com/gadgets/2019/07/raspberry-pi-4-uses-incorrect-usb-c-design-wont-work-with-some-chargers/

****************************************

(from Ars Technica)–

“Pi4 not working with some chargers (or why you need two cc resistors)”

By Tyler | June 28, 2019

https://www.scorpia.co.uk/2019/06/28/pi4-not-working-with-some-chargers-or-why-you-need-two-cc-resistors/

*************************************

(from Ars Technica)–

“…Benson Leung, an engineer at Google and one of the Internet’s foremost USB-C implementation experts, has chimed in on the Pi 4’s USB-C design too, with a Medium post titled “How to design a proper USB-C™ power sink (hint, not the way Raspberry Pi 4 did it).”

“How to design a proper USB-C™ power sink (hint, not the way Raspberry Pi 4 did it)”

Benson Leung

https://medium.com/@leung.benson/how-to-design-a-proper-usb-c-power-sink-hint-not-the-way-raspberry-pi-4-did-it-f470d7a5910

The @RaspberryPi twitter account recently tweeted dashing hopes for an 8GB unit. They said the 8GB reference was a typo.

Uh-huh…

I mean, if they didn’t deny the 8Gb was in their plans, people might hold off buying one NOW! and wait for the 8Gb to arrive.

I once had an email exchange with Horowitz (or was it Hill?), they had mentioned a new edition was in the works (the current edition) but were forbidden to say when it would arrive. (Existing stocks needed to be used up)

I bought the (then) current edition, it turned out to be years before the next edition hit the shelves.

This comment ( https://hackaday.com/2019/06/25/is-4gb-the-limit-for-the-raspberry-pi-4/#comment-6159684 ) which I’m about 95% sure is from one of the core RPi people, says that parts do not exist for it to be supported (yet). But it does not say that a 8GB and/or 16GB version are not going to happen at some future date when parts eventually exist.

A few days ago I received an RPI4-1G and tested it (empirically) as a data server. I have a 2TB WD Passport USB 3.0 drive attached to the RPI4, shared using NFS. I am using the wired Ethernet port for the home network and the WiFi for internet access. It seems very ‘acceptable’ as a small server. I was impressed. I did an rsync session to bring the drive up to date with my ‘real’ data server and, as far as I could tell, the speed was consistent with the same drive on a desktop PC. The only thing that irritated me was the ‘sleep mode’ of the drive, i.e. no ‘instant’ access to your data when using it as a ‘server’. Maximum memory used was only 100M, so even with 1G I have plenty of memory for this activity. The OS was Raspbian ‘Buster’ Lite. I don’t need a GUI for using this as a server; I just accessed the RPI4 via SSH. The RPI4 does run warm. With a heat sink it is running 54C idle. When I was transferring files it jumped to 65C. No fan. My Ryzen powered desktops and server run in the 30s idle.

I need to explore the GPIO more now that my ‘server’ curiosity is satisfied.

use hdparm to adjust the sleep mode timings of the drive. small heatsink and fan should do nicely.

may want to use pi-hole to surf the internet.
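On the hdparm suggestion: the standby timer is set with `hdparm -S`, and the value it takes is an encoded number rather than plain minutes. The sketch below turns a desired timeout into that value, following the encoding described in the hdparm man page (0 disables the timer, 1 to 240 are 5-second units, 241 to 251 are 30-minute units). The helper name is made up, and some USB enclosures may ignore the setting entirely, so consider this a starting point.

```python
def hdparm_standby_value(minutes):
    """Return the value to pass to `hdparm -S` for a timeout of about `minutes`.

    0 disables the standby timer. Values 1..240 count 5-second units
    (up to 20 minutes); values 241..251 count 30-minute units (up to 5.5 hours).
    """
    if minutes <= 0:
        return 0
    if minutes <= 20:
        return max(1, round(minutes * 60 / 5))
    units = min(11, max(1, round(minutes / 30)))
    return 240 + units


print(hdparm_standby_value(10))  # 120
print(hdparm_standby_value(60))  # 242
```

With the value in hand, something like `sudo hdparm -S 242 /dev/sda` would ask for a one-hour timeout, assuming the drive shows up as `/dev/sda` on your system.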

When I found an Xbox 360 in the garbage a few years ago, I got excited; I’d read Linux could run on it. It had the potential of being better than the desktop I was using at the time.

But it had only 512MB of RAM, with no easy way to expand. I wanted more RAM.

I have a full-blown system now, but I’ll get a 4 when I can. They are smaller than the laptops I have, even the $20 netbook I got last summer. Dual core desktops can be had for about fifty dollars, but the 4 uses less power and space, unless you need the expansion.

Michael

Yeah, my thought was to build a cheap small server (lunch box) that just needed two external connections — power and ethernet cable. This could possibly replace my mid-tower size server. Set in corner anywhere and be done with it as no need for screens/keyboards. I tried an ODROID XU4 quite a while back but wasn’t satisfied with it (reason escapes me).

“Roy Longbottom”

I’m sorry, but that last name either reminds me of a lesser known (and less successful) pirate, or the red guy from Cow & Chicken.

The more reviews I read, the more I am convinced that this Raspberry Pi 4 is a very bad board for makers.

It looks like it is targeting DIY media players with very bad storage management, or cheap desktops.

The Pi 3 is powerful enough for 99% of uses but is already too power hungry.

The Pi 4 has computing power that nobody needs unless you want a desktop computer with terrible storage management and bad connectivity, and you will NEVER be able to run it on a battery or a solar panel.

I really don’t understand what the point of this board is.

Low power home server.

Digital signage system (two HDMI ports)

Minimalistic sbc tinkering system (deploy to appropriately specced hardware once you know your exact needs)

For a small stand alone SBC or embedded computer, it is okay for the price. There are better ones out there too.

I wouldn’t call it a “Real Desktop Contender”. I’m pretty sure that one could find a 7-8 year old used computer in that price range that is easily a few times faster and can do most of what I need (minus AAA games). I can live with a slower processor as a trade-off for power, but even the 4GB RPi4 is simply not enough for my desktop needs. The PC route also offers an upgrade path for memory, GPU and networking.

I do really wish tech reporters would quit encouraging that silly crowd who thinks the Pi is meant to be your main computer or something. People get really unreasonable expectations about this $35 naked board replacing their desktop and then they go online and whiiiiine and whiiiiiiinnneee. It’s really unfair towards the Pi. It’s an educational/utilitarian dev board with amazing support and a fair price, not a consumer desktop. It kind of single-handedly started its own product niche (in the popular market at least) and I think that’s enough. It keeps getting loads better, which is just a bonus at this point.

It’s cool that it’s capable enough to be a decent web browser and stream video, but it’s not exactly the intended use.

To be fair to the RPi4 the 28 nm process 1.5GHz quad core BCM2711B0 is almost (in terms of integer and floating point operations per second) equivalent to a 65nm process 1.83GHz Intel Core 2 Duo (T5600) from 2006.

I can’t wait to get my hands on one.

Wait for the 2nd batch with the corrected USB-C power delivery resistors.

FWIW, compared to my daily driver:

########################################################

Linpack Double Precision Unrolled Benchmark n @ 100

Optimisation Opt 3 32 Bit, Wed Jul 10 18:04:14 2019

Speed 2789.83 MFLOPS

Numeric results were as expected

########################################################

SYSTEM INFORMATION

From File /proc/cpuinfo

processor : 0

vendor_id : GenuineIntel

cpu family : 6

model : 61

model name : Intel(R) Core(TM) i5-5300U CPU @ 2.30GHz

stepping : 4

microcode : 0x21

cpu MHz : 2300.359

cache size : 3072 KB

physical id : 0

siblings : 4

core id : 0

cpu cores : 2

apicid : 0

initial apicid : 0

fpu : yes

fpu_exception : yes

cpuid level : 20

wp : yes

flags : fpu vme de pse tsc msr pae mce cx8 apic sep mtrr pge mca cmov pat pse36 clflush dts acpi mmx fxsr sse sse2 ss ht tm pbe syscall nx pdpe1gb rdtscp lm constant_tsc arch_perfmon pebs bts rep_good nopl xtopology nonstop_tsc aperfmperf pni pclmulqdq dtes64 monitor ds_cpl vmx smx est tm2 ssse3 sdbg fma cx16 xtpr pdcm pcid sse4_1 sse4_2 x2apic movbe popcnt tsc_deadline_timer aes xsave avx f16c rdrand lahf_lm abm 3dnowprefetch epb invpcid_single intel_pt kaiser tpr_shadow vnmi flexpriority ept vpid fsgsbase tsc_adjust bmi1 hle avx2 smep bmi2 erms invpcid rtm rdseed adx smap xsaveopt dtherm ida arat pln pts

bugs : cpu_meltdown spectre_v1 spectre_v2 spec_store_bypass l1tf

bogomipLinux version 4.4.172 (root@hive64.slackware.lan) (gcc version 5.5.0 (GCC) ) #2 SMP Wed Jan 30 17:11:07 CST 2019

From File /proc/version

Linux version 4.4.172 (root@hive64.slackware.lan) (gcc version 5.5.0 (GCC) ) #2 SMP Wed Jan 30 17:11:07 CST 2019

So my laptop is only ~3x as fast

Hi, I wonder how is the new pi wrt browsing, with a few tabs open. Cheers.

How have the graphics improved? The show-stopper for me using a Pi as a desktop has always been how slow the graphics are. With no proper graphics driver for X, and having to use the crappy framebuffer driver, it’s really really sluggish and unresponsive. Are there proper drivers now for this new GPU, or do we still have to suffer a really unresponsive desktop?

> cheap homebrew NAS

The USB 3 and GBE make it nearly *perfect* for replacing the dead backup server. Considering that the dead machine was a single-core Athlon64 3800+ with 2GB of PC3200 and lived in an ATX case, things are looking pretty good.

Could you compare the Pi 4 to the Intel Celeron J3160, please?

Raspberry Pi Benchmark Test

Author: AikonCWD

Version: 3.0

temp=44.0’C

arm_freq=1750

core_freq=550

core_freq_min=275

gpu_freq=600

gpu_freq_min=500

sd_clock=50.000 MHz

Running InternetSpeed test…

Ping: 131.915 ms

Download: 11.74 Mbit/s

Upload: 1.84 Mbit/s

Running CPU test…

total time: 7.4176s

min: 2.89ms

avg: 2.97ms

max: 10.65ms

temp=52.0’C

Running THREADS test…

total time: 12.7010s

min: 4.70ms

avg: 5.08ms

max: 38.35ms

temp=55.0’C

Running MEMORY test…

Operations performed: 3145728 (1501495.43 ops/sec)

3072.00 MB transferred (1466.30 MB/sec)

total time: 2.0951s

min: 0.00ms

avg: 0.00ms

max: 9.40ms

temp=55.0’C

Running HDPARM test…

HDIO_DRIVE_CMD(identify) failed: Invalid argument

Timing buffered disk reads: 130 MB in 3.02 seconds = 43.04 MB/sec

temp=46.0’C

Running DD WRITE test…

536870912 bytes (537 MB, 512 MiB) copied, 39.2605 s, 13.7 MB/s

temp=44.0’C

Running DD READ test…

536870912 bytes (537 MB, 512 MiB) copied, 11.8643 s, 45.3 MB/s

temp=43.0’C

AikonCWD’s rpi-benchmark completed!

This is my Pi 4, lightly overclocked and stable, running off a Pi 3B power supply with an adaptor. I’m pretty sure I can get 2.0GHz with a genuine PS.
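As a sanity check on the DD WRITE and DD READ figures in the log above, the throughput can be recomputed from the byte count and elapsed time that dd prints. This is only a small arithmetic sketch; the function name is made up for illustration, and it assumes dd's MB/s means decimal megabytes (10^6 bytes) per second.

```python
def throughput_mb_per_s(num_bytes: int, seconds: float) -> float:
    """Return decimal megabytes per second (assumed to match dd's MB/s)."""
    return num_bytes / seconds / 1_000_000


SIZE_BYTES = 536_870_912  # 512 MiB, as reported in the log above

write_rate = throughput_mb_per_s(SIZE_BYTES, 39.2605)  # DD WRITE elapsed time
read_rate = throughput_mb_per_s(SIZE_BYTES, 11.8643)   # DD READ elapsed time

print(f"write: {write_rate:.1f} MB/s")
print(f"read:  {read_rate:.1f} MB/s")
print(f"read/write ratio: {read_rate / write_rate:.1f}x")
```

The results round to the 13.7 MB/s and 45.3 MB/s shown in the log, so reads in this run were a little over three times faster than writes.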