The computer security vulnerabilities Meltdown and Spectre can infer protected information based on subtle differences in hardware behavior. It takes less time to access data that has been cached versus data that needs to be retrieved from memory, and precisely measuring time difference is a critical part of these attacks.

Our web browsers present a huge potential surface for attack as JavaScript is ubiquitous on the modern web. Executing JavaScript code will definitely involve the processor cache and a high-resolution timer is accessible via browser performance API.

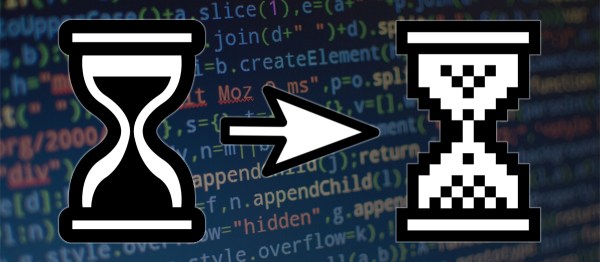

Web browsers can’t change processor cache behavior, but they could take away malicious code’s ability to exploit them. Browser makers are intentionally degrading time measurement capability in the API to make attacks more difficult. These changes are being rolled out for Google Chrome, Mozilla Firefox, Microsoft Edge and Internet Explorer. Apple has announced Safari updates in the near future that is likely to follow suit.

After these changes, the time stamp returned by performance.now will be less precise due to lower resolution. Some browsers are going a step further and degrade the accuracy by adding a random jitter. There will also be degradation or outright disabling of other features that can be used to infer data, such as SharedArrayBuffer.

These changes will have no impact for vast majority of users. The performance API are used by developers to debug sluggish code, the actual run speed is unaffected. Other features like SharedArrayBuffer are relatively new and their absence would go largely unnoticed. Unfortunately, web developers will have a harder time tracking down slow code under these changes.

Browser makers are calling this a temporary measure for now, but we won’t be surprised if they become permanent. It is a relatively simple change that blunts the immediate impact of Meltdown/Spectre and it would also mitigate yet-to-be-discovered timing attacks of the future. If browser makers offer a “debug mode” to restore high precision timers, developers could activate it just for their performance tuning work and everyone should be happy.

This is just one part of the shock wave Meltdown/Spectre has sent through the computer industry. We have broader coverage of the issue here.