Getting exact statistics on one’s physical activities at the gym is not an easy feat. While most people these days are familiar with or even regularly use one of those motion-based trackers on their wrist, there’s a big question as to their accuracy. After all, it’s all based on the motions of just one’s wrist, which as we know leads to amusing results in the tracker app when one does things like waving or clapping one’s hands, and means it cannot track leg exercises at the gym.

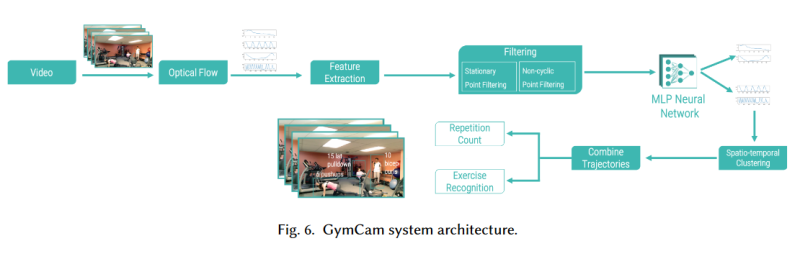

To get around the issue of limited sensor data, researchers at Carnegie Mellon University (Pittsburgh, USA) developed a system based around a camera and machine vision algorithms. While other camera-based solutions that attempt this suffer from occlusion as they try to track individual people as accurately as possible, this new system doesn’t try to track people’s joints at all. Instead, it merely tracks motion at specific exercise machines by looking for repetitive motion in the scene.

The basic concept is that repetitive motion usually indicates forms of exercise, and that no two people at the same type of machine will ever be fully in sync with their motions, so that merely a handful of pixels suffice to track motion at that machine by a single person. This also negates many privacy issues, as the resolution doesn’t have to be high enough to see faces or track joints with any degree of accuracy.
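To make the idea concrete, here is a minimal sketch of how repetitive motion could be picked out of the brightness of a small pixel patch over time. This is not the researchers’ actual method: the FFT-based approach, the function names `dominant_period` and `estimate_reps`, and the `min_score` threshold are all assumptions chosen for illustration.

```python
import numpy as np

def dominant_period(signal, fps):
    """Return (frequency_hz, score) for a 1-D pixel-intensity trace.

    score is the fraction of non-DC spectral power in the strongest
    frequency bin; values near 1 suggest strongly periodic motion.
    """
    x = np.asarray(signal, dtype=float)
    x = x - x.mean()                      # remove constant brightness
    spectrum = np.abs(np.fft.rfft(x)) ** 2
    freqs = np.fft.rfftfreq(len(x), d=1.0 / fps)
    spectrum[0] = 0.0                     # ignore any residual DC term
    total = spectrum.sum()
    if total == 0:
        return 0.0, 0.0                   # nothing moved at all
    peak = int(np.argmax(spectrum))
    return float(freqs[peak]), float(spectrum[peak] / total)

def estimate_reps(signal, fps, min_score=0.5):
    """Estimate repetitions; min_score is an illustrative, untuned threshold."""
    freq, score = dominant_period(signal, fps)
    if score < min_score:
        return 0                          # motion is not repetitive enough
    duration_s = len(signal) / fps
    return int(round(freq * duration_s))

# Hypothetical usage: 20 seconds of a patch at 10 fps, one rep every 2 seconds.
t = np.arange(200) / 10.0
patch_brightness = np.sin(2 * np.pi * 0.5 * t)
reps = estimate_reps(patch_brightness, fps=10)
```

A real system would presumably have to cope with changing tempo, pauses between sets, and several people in view, none of which this sketch attempts.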

In experiments at the university’s gym, the researchers evaluated the accuracy of their system over 5 days and 42 hours of video. Exercise activities in the scene were detected with 99.6% accuracy, disambiguating between simultaneous activities was 84.6% accurate, and recognizing exercise types was 93.6% accurate. Ultimately, repetition counts for specific exercises were within 1.7 counts.

Maybe an extended version of this would be a flying drone capturing one’s outside activities, finally giving one that 100% accurate exercise account while jogging?

Thanks to [Qes] for sending this one in!

So does it capture gym activity like paying for a membership and then never going?

That’s already being captured by the CRM of the gym :) They’re their favorite type of customer.

Maybe you can have members wear QR codes or similar on their shirts to identify them and show them stats at the end of the day. Pretty cool.

“Detecting exercise activities in the scene was with a 99.6% accuracy, disambiguating between simultaneous activities was 84.6% accurate, while recognizing exercise types was 93.6% accurate. Ultimately repetition counts for specific exercises were within 1.7 counts”.

Are these stats a comparison to the wrist-based fitness trackers? If so, it would seem reasonably close for most users, so why bother? Maybe a professional athlete might need more precise stats, but not the average gym rat. Guess it’s more of a marketing scheme, giving the gym a new feature to attract and keep more members. To the people on the lens-end of a camera, resolution doesn’t matter: it’s still a camera, and there could be some pervert on the other side of the ‘curtain’, streaming every bounce or jiggle. If an AI can be trained to track movements, couldn’t a higher resolution camera and different AI training be used to focus on pervert aspects just as well? Cell phones are frowned upon in many gyms for a reason, not just that they are a distraction….

someone make an AI to fix the smell in gyms ..

I farted at the gym all the time

I just write down what I do. Seems to work. How does the camera know how much weight I’m lifting at any one time? Sorry, but this seems like a waste of time.

That’s my way of doing things. Some people poke fun at the log book, but there’s something to be said for being able to look plainly at a written record when you feel like you’re struggling.

Seeing your own writing telling you that you should be able to do that last set, or that extra plate just seems to give you a little… extra.

It’s entirely psychological though, so maybe doesn’t work for everyone.

I could see this system being adapted for form check; that would be a huge benefit for a lot of people. Throw a projector onto a semi-mirrored surface, and you’ve then got augmented reality feedback. It could show you in real time where you’re maybe overstretching or such.

Throw in QR codes (or otherwise) to track plates / equipment, or even skeletal joints, and it suddenly opens up a lot of potential.

That would be /very cool/.

If you have no notion about your repetitive motions, no clue about your get fit-lotions, this will make you snigger about the gym-jock-jigger and do it in double digits, whilst the algorithm fidgets, to sort out the mitt from the other bit that sorts out angular velocity with some ferocity, whilst the dangly bits get plenty of hits, at the cheap end of town where everyone is let down… by the antics of a few….

I will keep taking the pills.

Seems it doesn’t work.