Amidst the ongoing RAM and storage apocalypses, Mad Max-esque scenes are unsurprisingly developing, and [Chase Fournier]’s project to recycle the eMMC chips from a pair of Xbox One S (‘XBone’) mainboards is just one more example. Each of these mainboards comes with a 5 GB eMMC chip installed, alongside 8 GB of DDR3.

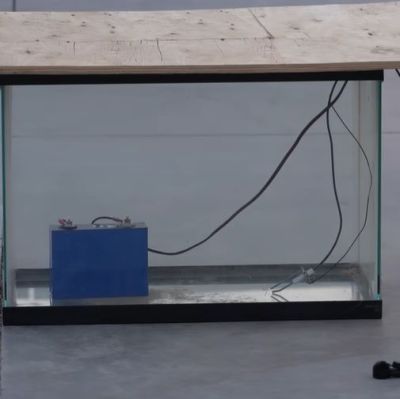

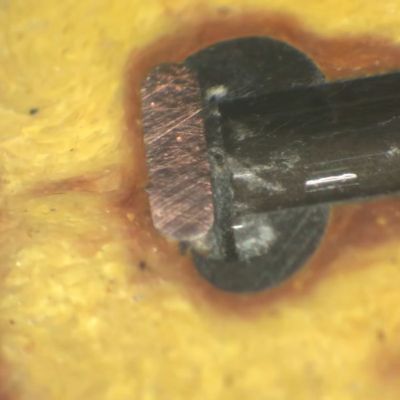

Removing the eMMC chips isn’t that complicated, and after some reballing fun both chips were installed on a carrier board with a Norelsys NS1081 controller IC. This controller provides a USB 3.0 interface and can connect up to four SD or eMMC devices, of which only two channels are used here.

Although the eMMC tester didn’t seem too happy with either chip, once they were mounted on the PCB the controller could be programmed and detected both eMMC packages, for a grand total of 10 GB of storage.

In CrystalDiskMark, sequential read performance came in at about 140 MB/s and write performance at about 64 MB/s, which is zippy enough for smaller files. Not that you can store more than 10 GB on this USB drive anyway.
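For a rough sense of what those speeds mean in practice, here is a short Python sketch that estimates how long it would take to fill or read back the entire 10 GB drive. It assumes decimal units (1 GB = 1000 MB) and that the measured speeds hold constant for the whole transfer, which real drives rarely manage; the constant and function names are illustrative only.

```python
# Back-of-the-envelope transfer times for the recycled 10 GB drive.
# Assumptions (not from the original measurements): decimal units
# (1 GB = 1000 MB) and constant throughput for the entire transfer.

CAPACITY_GB = 10   # two 5 GB eMMC chips
READ_MB_S = 140    # approx. sequential read in CrystalDiskMark
WRITE_MB_S = 64    # approx. write in CrystalDiskMark


def transfer_seconds(size_gb: float, speed_mb_s: float) -> float:
    """Return the time in seconds to move size_gb at a constant speed_mb_s."""
    return size_gb * 1000 / speed_mb_s


fill = transfer_seconds(CAPACITY_GB, WRITE_MB_S)
dump = transfer_seconds(CAPACITY_GB, READ_MB_S)
print(f"Fill whole drive: {fill:.0f} s (~{fill / 60:.1f} min)")
print(f"Read whole drive: {dump:.0f} s (~{dump / 60:.1f} min)")
```

Under these assumptions, filling the drive takes about 156 s (roughly 2.6 minutes) and reading it all back about 71 s.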

Turning the DDR3 ICs on the mainboard into proper DIMM or SODIMM sticks could be another option, as demand keeps ramping up even for older memory tech like this. The XBone X variant with its 12 GB of GDDR5 is probably a harder proposition to repurpose, but recycling old consoles has suddenly become a lot more exciting.
