We love the idea of [Amos]’s Tangible Programming project. It reminds us of those great old RadioShack electronics labs, where circuitry concepts took on a physical aspect that made them way easier to digest than abstractions in an engineering textbook.

MIT Scratch teaches many programming concepts in an easy-to-understand visual way. Fundamentally, however, people are tactile creatures, and being able to literally feel and see the code laid out in front of them could be groundbreaking for many young learners, especially those whose brains favor physical touch and interaction, such as learners with ADHD or Asperger’s.

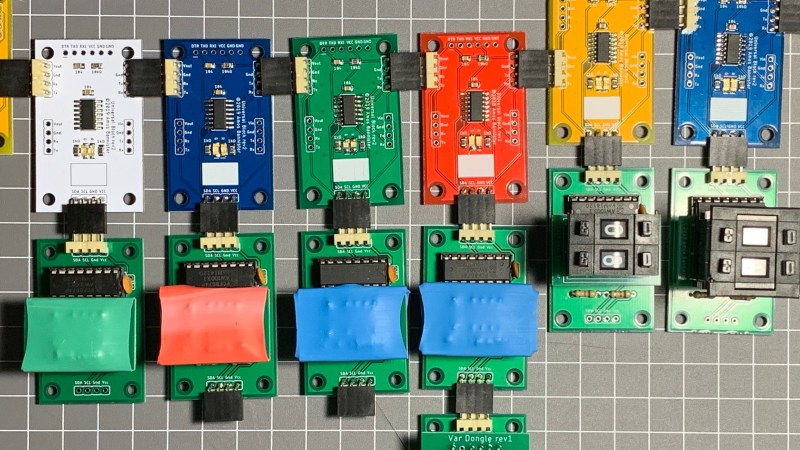

The boards are color-coded and communicate via an I2C bus. Each board’s logic and communication are handled by an ATtiny or ATmega. The current state of processing is shown on LEDs or even an OLED display. Numbers are input through either thumbwheel switches or jumpers.

The code concepts will, of course, be simple and focused due to the physical nature of the blocks: integer arithmetic, simple loops, and if/else conditionals. Quite a lot can be built around these, and the blocks could be a natural diving board into the aforementioned Scratch and eventually an easy-to-learn language like Python.
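To give a feel for how far integer arithmetic, simple loops, and if/else can go, here is a minimal Python sketch of a chain of blocks being evaluated in order. Everything in it is an assumption made for illustration: the `Block` class, the block kinds (`set`, `add`, `mul`, `repeat`, `if_greater`), and the single running value are hypothetical and are not taken from [Amos]’s design, and the sketch does not model the I2C bus or the hardware at all.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Block:
    """One hypothetical physical block: a kind plus a dialed-in number."""
    kind: str                     # "set", "add", "mul", "repeat", "if_greater"
    value: int = 0                # e.g. the number set on a thumbwheel
    body: List["Block"] = field(default_factory=list)    # blocks inside a loop / "if" branch
    orelse: List["Block"] = field(default_factory=list)  # blocks in the "else" branch


def run(blocks: List[Block], acc: int = 0) -> int:
    """Walk the chain left to right, updating a single integer value."""
    for b in blocks:
        if b.kind == "set":
            acc = b.value
        elif b.kind == "add":
            acc += b.value
        elif b.kind == "mul":
            acc *= b.value
        elif b.kind == "repeat":
            # Simple counted loop: run the body `value` times.
            for _ in range(b.value):
                acc = run(b.body, acc)
        elif b.kind == "if_greater":
            # if/else: pick a branch by comparing the running value to `value`.
            acc = run(b.body if acc > b.value else b.orelse, acc)
        else:
            raise ValueError(f"unknown block kind: {b.kind}")
    return acc


# Start at 1, double it three times, then branch on whether the result exceeds 5.
program = [
    Block("set", 1),
    Block("repeat", 3, body=[Block("mul", 2)]),
    Block("if_greater", 5, body=[Block("add", 100)], orelse=[Block("add", -1)]),
]
result = run(program)
print(result)
```

Even this toy version covers sequencing, repetition, and branching, which is roughly the territory a learner would meet again in Scratch.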

Code I can smell, that is what I am looking for. And frankly I have run across some that smells pretty bad.

Not sure the world is ready for scratch-and-sniff programming. OOP (Outgoing Orificial Programming) was hard enough.

And quite odorous as well…(olfactory offensive)

I dunno how useful this will be. The physicality limits it to simple concepts and processes.

Rather than actual circuitry in the blocks and a plug-in bus (which is itself an advanced concept that distracts from the basics), I believe the best implementation would be some sort of physical gridded board with input and output points, on which students place physical blocks representing logic gates, decision branches, common functionality, etc. It would look like a physical representation of a UML diagram. Blocks would be symbolically connected with wires. The physical diagram would then be converted to software, either through the plug-ins to the grid or by optical scanning.

At its heart, programming is pretty abstract. If someone can’t quickly make the leap from such a physical representation to an on-screen logic diagram (and then finally to written code), I don’t think they are likely to get much out of this.

Very basic, and let’s face it, a very expensive implementation for what it does. It will join a long list of other ‘plug pieces together and make things!’ products lying in clearance bins in short order, assuming it makes it that far.

Reminded me of LogicBots when I first saw it.

Does seem as though a Lego-style brick would be more in line with Scratch, with minimal electronics in each brick to determine type and placement, not necessarily an actual working component system.

As well as a ‘send/execute’ button not so close to thick thumbs, so you can complete a thought before committing. Grid-pattern cube placement with the same idea of representation would be my second thought.

Scratch that. Make it hexagons and triangles. Cubes overdone.

There was bitching about AR/VR support being dropped in phoneland recently. Printing out a bunch of hex cubes with the associated functional quality represented by a QR code, and processing them as elements of a programming language… Nah, too complex. Never mind.

Oooh, I bet Brainfuck is easy to implement as a physical programming sort of thing. However, each device should have a knob for how many times you want to repeat that symbol, otherwise you’ll run out of pieces soon… eh, no. Never mind.

yes very correct

Very forward-thinking, and more in line with what Bret Victor is doing at Dynamicland. Also reminds me of micro:bits.