Ready for more speculative execution news? Hope so, because both Intel and AMD are in the news this week.

The first story is Load Value Injection (LVI), a different approach to reading arbitrary memory. Rather than trying to read protected memory directly, LVI turns the problem on its head by injecting attacker-controlled values into the data a victim loads. The processor speculatively executes using that bad data, eventually discovers the fault, and unwinds the execution. As with other similar attacks, that transient execution still changes the under-the-hood state of the processor in ways that an attacker can detect.

What’s the actual attack vector where LVI could be a problem? Imagine a scenario where a single server hosts multiple virtual machines, and uses Intel’s Software Guard Extensions (SGX) enclaves to keep the VMs secure. The low-level nature of the attack means that not even SGX is safe.

The upside here is that the attack is quite difficult to pull off, and isn’t considered much of a threat to home users. On the other hand, the performance penalty of the suggested fixes can be pretty severe. It’s still early in the lifetime of this particular vulnerability, so keep an eye out for further updates.

AMD’s Takeaway Bug

AMD also found itself on the receiving end of a speculative execution attack (the original paper is available as a PDF). Collide+Probe and Load+Reload are the two specific attacks discovered by an international team of academics. The attacks are based on reverse-engineering a hash function used to speed up cache access. While this doesn’t leak protected data quite like Spectre and Meltdown, it still reveals internal data from the CPU. Time will tell where exactly this technique will lead in the future.

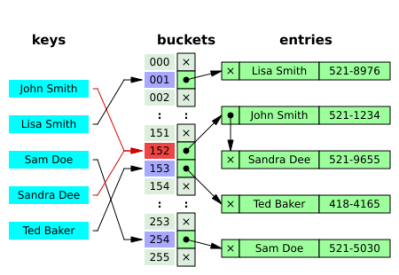

To really understand what’s going on here, we have to start with the concept of a hash table. This idea is a useful code paradigm that shows up all over the place. Python dictionaries? Hash tables under the hood.

Imagine you have a set of a thousand values, and need to check whether a specific value is part of that set. Iterating over that entire set of values is a computationally expensive proposition. The alternative is to build a hash table. Create an array of a fixed length, let’s say 256. The trick is to use a hash function to sort the values into this array, using the first eight bits of the hash output to determine which array location each value is stored in.

When you need to check whether a value is present in your set, simply run that value through the hash function, and then check the array cell that corresponds to the hash output. You may be ahead of me on the math: yes, that works out to about four different values per array cell. These hash collisions are entirely normal for a hash table. The lookup function simply checks all the values held in the appropriate cell. It’s still far faster than searching the whole set.
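To make the bucket idea concrete, here’s a minimal Python sketch of the scheme described above. It assumes SHA-256 (from the standard `hashlib` module) as the hash function and takes the first byte of the digest as the eight index bits; real hash tables, Python’s own dict included, use faster hashes and different collision strategies.

```python
import hashlib

NUM_BUCKETS = 256  # fixed-length array, as in the text


def bucket_index(value: str) -> int:
    """Use the first eight bits (the first byte) of a SHA-256 digest as the index."""
    digest = hashlib.sha256(value.encode("utf-8")).digest()
    return digest[0]  # one byte -> 0..255


def build_table(values):
    """Sort every value into one of NUM_BUCKETS lists; collisions just share a list."""
    table = [[] for _ in range(NUM_BUCKETS)]
    for v in values:
        table[bucket_index(v)].append(v)
    return table


def contains(table, value: str) -> bool:
    """Hash the value, then scan only the single bucket it could live in."""
    return value in table[bucket_index(value)]


if __name__ == "__main__":
    values = [f"item-{i}" for i in range(1000)]
    table = build_table(values)
    print(contains(table, "item-42"))    # True
    print(contains(table, "item-5000"))  # False
    print(sum(len(b) for b in table) / NUM_BUCKETS)  # average bucket size, about 3.9
```

The lookup cost is one hash plus a scan of roughly four entries, instead of a scan of all thousand.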

AMD processors use a hash function to speed up checking whether memory requests are present in the L1 cache. The Takeaway researchers figured out that hash function, and can use hash collisions to leak information. When the hash values collide, the L1 cache has two separate chunks of memory that need to occupy the same cache line. It handles this by simply discarding the older data when loading the colliding memory. An attacker can abuse this by measuring the latency of memory lookups.

If an attacker knows the memory location of the target data, he can allocate memory at a different location whose hash collides with it, so that both compete for the same cache line. Then, by repeatedly loading his own allocation and timing each load, he can tell whether the target location has been accessed since his last check. What real-world attack does that enable? One of the more interesting ones is breaking ASLR/KASLR by mapping out the randomized memory layout. It was also suggested that Takeaway could be combined with the Spectre attack.
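The collide-then-probe loop above can be modeled in a few lines. This is a toy simulation, not an exploit: `toy_utag()` is a hypothetical XOR-based hash (not AMD’s real function), the cache geometry is an assumption, and the latencies are made-up numbers standing in for the timing measurements a real attack depends on.

```python
FAST, SLOW = 4, 40  # made-up latencies in "cycles", for illustration only


def toy_utag(addr: int) -> int:
    """HYPOTHETICAL 8-bit hash: XOR of address bits 12-19 with bits 20-27.
    This is NOT AMD's real function."""
    return ((addr >> 12) ^ (addr >> 20)) & 0xFF


def set_index(addr: int) -> int:
    """Assume 64 sets of 64-byte lines, so address bits 6-11 select the set."""
    return (addr >> 6) & 0x3F


class ToyL1:
    """Each (set, hash) slot remembers which cache line last used it."""

    def __init__(self) -> None:
        self.owner = {}  # (set index, hash) -> cache line number

    def load(self, addr: int) -> int:
        slot = (set_index(addr), toy_utag(addr))
        line = addr >> 6
        if self.owner.get(slot) == line:
            return FAST          # this line still owns the slot
        self.owner[slot] = line  # cold miss or collision: older entry is discarded
        return SLOW


def find_colliding_address(victim: int) -> int:
    """Flipping bits 12 and 20 together leaves toy_utag() and set_index() unchanged."""
    return victim ^ (1 << 12) ^ (1 << 20)


def collide_probe(cache: ToyL1, attacker_addr: int, victim_step) -> bool:
    """Return True if the victim appears to have touched the colliding address."""
    cache.load(attacker_addr)                 # collide: claim the shared slot
    victim_step()                             # victim runs (or doesn't)
    return cache.load(attacker_addr) == SLOW  # probe: slow means we were evicted


if __name__ == "__main__":
    victim = 0x12345040
    attacker = find_colliding_address(victim)
    cache = ToyL1()
    print(collide_probe(cache, attacker, lambda: cache.load(victim)))  # True
    print(collide_probe(cache, attacker, lambda: None))                # False
```

The attacker never reads the victim’s data; the only signal is whether his own load got slower.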

There are two interesting wrinkles to this story. First, some have pointed out the presence of a thank-you to Intel in the paper’s acknowledgements. “Additional funding was provided by generous gifts from Intel.” This makes it sound like Intel has been funding security research into AMD processors, though it’s not clear what exactly this refers to.

Second, AMD’s response has been underwhelming. At the time of writing, their official statement is that “AMD believes these are not new speculation-based attacks.” Now that the paper has been publicly released, that statement will quickly be proven to be either accurate or misinformed.

Closed Source Privacy?

The Google Play Store and the iOS App Store are full of apps that offer privacy, whether it be a VPN, an adblocker, or some other amazing-sounding application. The vast majority of those apps, however, are closed source, meaning that you have little more than trust in the app publisher to ensure that your privacy is really being protected. In the case of Sensor Tower, it seems that faith is woefully misplaced.

A typical shell game is played, with paper companies appearing to provide apps like Luna VPN and Adblock Focus. While technically providing the services they claim to provide, the real aim of both apps is to send data back to Sensor Tower. When it’s possible, open source is the way to go, but even an open source app can’t protect you against a malicious VPN provider.

Huawei Back Doors

We haven’t talked much about it, but there has been a feud of sorts bubbling between the US government and Huawei. An article was published a few weeks back in the Wall Street Journal accusing Huawei of intentionally embedding backdoors in their network equipment. Huawei posted a response on Twitter, claiming that the backdoors in their equipment are actually for lawful access only. This official denial reminds me a bit of a certain Swiss company…

Does the word “#backdoor” seem frightening? That’s because it’s often used incorrectly – sometimes to deliberately create fear. Watch to learn the truth about backdoors and other types of network access. #cybersecurity pic.twitter.com/NEUXbZbcqw

— Huawei (@Huawei) March 4, 2020

[Robert Graham] thought the whole story was fishy, and decided to write about it. He makes two important points. First, the Wall Street Journal article cites anonymous US officials. In his opinion, this is a huge red flag, and means that the information is either entirely false, or an intentional spin, and is being fed to journalists in order to shape the news. His second point is that Huawei’s redefinition of government-mandated backdoors as “front doors” takes the line of the FBI, and the Chinese Communist Party, that governments should be able to listen in on your communications at their discretion.

Graham shares a story from a few years back, when his company was working on Huawei brand mobile telephony equipment in a given country. While they were working, there was an unspecified international incident, and Graham watched the logs as a Huawei service tech remoted into the cell tower nearest the site of the incident. After the information was gathered, the logs were scrubbed, and the tech logged out as if nothing had happened.

Did this tech also work for the Chinese government? The NSA? The world will never know, but the fact is that a government-mandated “front door” is still a back door from the users’ perspective: they are potentially being snooped on without their knowledge or consent. The capability for abuse is built-in, whether it’s mandated by law or done in secret. “Front doors” are back doors. Huawei’s gear may not be dirtier than anyone else’s in this respect, but that’s different from saying it’s clean.

Abusing Regex to Fool Google

[xdavidhu] was poking at Google’s Gmail API, and found a widget that caught him by surprise: a button embedded on the page automatically generated an API key. Diving into the JavaScript running on that page, as well as an iframe that gets loaded, he arrived at an ugly regex that was key to keeping the entire process secure. He recommends www.debuggex.com, a regex visualizer, which he used to find a bug in Google’s JS code. The essence of the bug is that part of the URL path is interpreted as part of the domain name. A browser considers “www.example.com\.corp.google.com” to be a valid URL pointing at www.example.com, because it treats the backslash as the start of the path, but Google’s JS code sees the whole string as a domain, and thinks it must be a Google domain.
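The mismatch between the checker and the browser is easy to reproduce in miniature. Both regexes below are hypothetical simplifications, not Google’s actual expression, and the `https://` scheme is added as an assumption. The second one models the browser rule that, for http(s) URLs, a backslash ends the host just like a forward slash does.

```python
import re

# HYPOTHETICAL allowlist check, NOT Google's real regex: everything after the
# scheme up to the first "/", "?" or "#" is treated as the host.
NAIVE_HOST = re.compile(r"^https?://([^/?#]*)")

# Simplified browser behaviour: for http(s) URLs a backslash is treated like
# a forward slash, so "\" also terminates the host.
BROWSER_HOST = re.compile(r"^https?://([^/\\?#]*)")


def naive_is_google(url: str) -> bool:
    m = NAIVE_HOST.match(url)
    return bool(m) and m.group(1).endswith(".google.com")


def browser_host(url: str) -> str:
    m = BROWSER_HOST.match(url)
    return m.group(1) if m else ""


if __name__ == "__main__":
    url = "https://www.example.com\\.corp.google.com"  # a single backslash
    print(naive_is_google(url))  # True: the checker thinks it's a Google host
    print(browser_host(url))     # www.example.com: where the browser really goes
```

The lesson generalizes: a security check has to parse URLs exactly the way the consumer of the URL will, which is hard to guarantee with a hand-rolled regex.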

For his work, [xdavidhu] was awarded $6,000 because this bit of ugly regex is actually used in quite a few places throughout Google’s infrastructure.

SMBv3 Wormable Flaw

Microsoft’s SMBv3 implementation in Windows 10 and Server 2019 has a vulnerability in how it handles on-the-fly compression, CVE-2020-0796. A malicious packet using compression is enough to trigger a buffer overflow and remote code execution. It’s important to note that this vulnerability doesn’t require an authenticated user. Any unpatched, Internet-accessible server can be compromised. The flaw exists in both server and client code, so an unpatched Windows 10 client can be compromised by connecting to a malicious server.
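Public write-ups of CVE-2020-0796 trace the overflow to an integer overflow when a buffer size is computed from fields in the compression header. The sketch below is a toy model of that bug class, not Microsoft’s code: the two-field header layout and all function names are hypothetical, and Python’s unbounded integers are masked to mimic 32-bit C arithmetic.

```python
import struct

UINT32_MAX = 0xFFFFFFFF


def parse_toy_header(packet: bytes):
    """HYPOTHETICAL 8-byte header: two little-endian uint32 fields,
    original_size and offset. Not the real SMB2 layout."""
    return struct.unpack_from("<II", packet, 0)


def alloc_size_unchecked(original_size: int, offset: int) -> int:
    # Mimics C code adding two uint32 values: a huge attacker-chosen
    # original_size wraps the sum around to a tiny number.
    return (original_size + offset) & UINT32_MAX


def alloc_size_checked(original_size: int, offset: int) -> int:
    # Defensive version: refuse any size that doesn't fit in 32 bits.
    total = original_size + offset
    if total > UINT32_MAX:
        raise ValueError("size calculation overflows 32 bits; dropping packet")
    return total


if __name__ == "__main__":
    packet = struct.pack("<II", 0xFFFFFFFF, 0x10)  # attacker-chosen values
    original_size, offset = parse_toy_header(packet)
    print(alloc_size_unchecked(original_size, offset))  # 15: a tiny buffer...
    # ...while the decompressor still believes ~4 GiB of output is coming.
    try:
        alloc_size_checked(original_size, offset)
    except ValueError as err:
        print("rejected:", err)
```

Because the header is parsed before any authentication happens, a bug like this is reachable by anyone who can send a packet, which is what makes it wormable.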

There seems to have been a planned, coordinated announcement of this bug, coinciding with Microsoft’s regular Patch Tuesday, as both Fortinet and Cisco briefly had pages discussing it on their sites. Apparently the patch was planned for that day, but was pulled from the release at the last moment. Two days later, on Thursday the 12th, a fix was pushed via Windows Update. If you’re responsible for any Windows 10 machines or Server 2019 installs, go make sure they have this update, as proof-of-concept code is already being developed.

Ah yes, I often secure one of my ground floor window openings with only a sheet of tissue paper, secure in the knowledge that it’s against the law to break through it.

Because criminals would never dream of breaking the law.

Tissue paper, on the other hand, can be broken by a lot of creatures, or by the weather. Replacements come in bulk packs. Traditional Japanese house window, anyone? :P

A regular window can be broken by someone more determined. If you are in a bad neighborhood, reinforced windows with steel bars.

Right now tissue paper is more likely to be stolen than what’s inside your house (in the UK).

Well yeah, we can go right up to, “I like to store my ATM behind a solid block wall, secure in the knowledge that it’s illegal to reverse a truck through it, lasso it with a chain and drag it away.”

Funny story: in the town I live in, there used to be a little store. The little store had an ATM in it, and taped on the ATM there was a card that said “Bolted for” and three initials. We knew the owner, so we asked what the heck the card was for. It turned out that they came into the store one morning and realized something was wrong; it took them a bit to realize the ATM was missing. Gone. Poof. Even funnier, it was later found in a local’s garage. He was one of the local troublemakers, but there were no fingerprints on the ATM, and no proof that he put it there or knew it was there. To be honest, if someone had put it in my barn in the winter, I might not notice it until the spring. Anyway, the store got the ATM back, bolted it down very securely this time, and immortalized it with the suspected thief’s initials. Gotta love small towns.

Huawei effectively IS the Chinese government.

Same as Hikvision, the CCTV monster.

Both were funded by the PLA to start up. Directed, if you will, with a mandate to take over their respective markets through domination with technological advances (starting from copying others) AND by destroying competition through undercutting and dumping product on the market.

And both are complicit in the torture and rendition of the Uyghur minority, but we don’t care because, meh, Muslims.

We can’t go around killing thousands of them in the Middle East, then stand by and watch Syria do the same, and then have the gall to call China out about it.

So we ignore it.

All companies in China know what they must do if they get that knock at the door.

Their CEOs know who really owns the company. They are allowed to be rich as long as they do what they are told.

It’s part of the game.

Using Huawei in your critical infrastructure means you’re happy at some point in the future to be broken to China’s will.

I don’t understand why anyone is concerned about governments spying on citizens when these same citizens are completely unbothered by private companies like google, micro$oft, amazon and Facebook, who are way more invasive in stealing your information, monetizing you and selling all your info for profit. And hiding behind fake user agreements that change unilaterally with your automatic consent.

Are there any processors where the hash algorithm for cache would be actually secret?

Of course it is not necessarily documented, but it seems unlikely that any processor would waste time using a cryptographically secure hash algorithm in caches. And anything non-cryptographic can always be reverse engineered with a modest level of effort. I guess it could be randomized at runtime with some key, but I’m not aware of any processor doing that either.
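To illustrate why a simple hash offers no secrecy: if every output bit is just the XOR of some subset of address bits, the function is linear, so probing one address bit at a time recovers it completely. This is an idealized sketch with a randomly generated, hypothetical hash; on real hardware the attacker only observes collisions through timing, not the hash output itself, so the actual reverse engineering takes more work.

```python
import random

ADDR_BITS = 28
OUT_BITS = 8


def make_secret_linear_hash(seed: int):
    """HYPOTHETICAL black-box hash: each output bit is the parity (XOR) of a
    secret subset of address bits. Not any real CPU's function."""
    rng = random.Random(seed)
    masks = [rng.getrandbits(ADDR_BITS) for _ in range(OUT_BITS)]

    def h(addr: int) -> int:
        out = 0
        for i, m in enumerate(masks):
            out |= (bin(addr & m).count("1") & 1) << i
        return out

    return h, masks


def recover_masks(h):
    """h is linear over GF(2): h(a ^ b) == h(a) ^ h(b). Feeding it one-hot
    addresses shows exactly which output bits each address bit feeds into."""
    masks = [0] * OUT_BITS
    for bit in range(ADDR_BITS):
        out = h(1 << bit)
        for i in range(OUT_BITS):
            if (out >> i) & 1:
                masks[i] |= 1 << bit
    return masks


if __name__ == "__main__":
    h, secret = make_secret_linear_hash(seed=1)
    print(recover_masks(h) == secret)  # True
```

Twenty-eight queries are enough to recover all eight secret masks; a keyed, non-linear function would be needed to resist this, at the cost of latency the cache can’t really afford.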

Then anything that ppl are unable to reverse engineer is an NSA black blob, can’t win really.

Some things never change, like the continuous stream of SMB bugs.

https://securitytracker.com/id/1007154

Microsoft’s finest programmers are incapable of writing secure C++ code, as usual. No surprise because nobody can do it.

https://web.archive.org/web/20021006074221/http://afr.com/it/2002/10/01/FFXDF43AP6D.html

from 2002:

“A lot of the really technical people who really understood the protocol appear to have left Microsoft. Certainly (the Samba team) knows a lot more about the Microsoft protocol than the people who Microsoft sends to the (annual) CIFS conferences. The people they’ve sent along haven’t had a clue, but I don’t know if they were just people who happened to be walking up the corridor when the manager decided he needed someone to go along.”

Nobody writes articles about the well-written, secure C++ code out there.

Closed Source Privacy?

Not only do we not have access to the source code, but with iOS devices, for example, we don’t even have access to the binary code, since every app you download is encrypted with a key unique to your device. The only solution is to have access to a jailbroken device.

And if, in addition, the app encrypts its communication with the outside world, either with proprietary encryption or with TLS and certificate pinning, a MITM can’t be used to inspect the content of the exchanged data.
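The reason pinning defeats a MITM proxy can be shown with a minimal sketch: the app ships a fingerprint of the server’s certificate and refuses any other certificate, even one the operating system trusts. The function names and the placeholder certificate bytes are hypothetical; in a real Python client the DER bytes could come from `SSLSocket.getpeercert(binary_form=True)`, and many apps pin the public key rather than the whole certificate.

```python
import hashlib
import hmac


def cert_fingerprint(der_cert: bytes) -> str:
    """SHA-256 over the DER-encoded certificate, as a lowercase hex string."""
    return hashlib.sha256(der_cert).hexdigest()


def matches_pin(der_cert: bytes, pinned_sha256_hex: str) -> bool:
    """Accept only the pinned certificate. An interception proxy presents its
    own certificate, whose hash differs, so the connection is refused."""
    return hmac.compare_digest(cert_fingerprint(der_cert), pinned_sha256_hex.lower())


if __name__ == "__main__":
    server_cert = b"placeholder DER bytes for the real server"  # hypothetical
    pin = cert_fingerprint(server_cert)  # would be shipped inside the app
    print(matches_pin(server_cert, pin))                # True
    print(matches_pin(b"mitm proxy certificate", pin))  # False
```

This is also why inspecting such an app’s traffic usually requires patching the pin check out of the binary, which brings us back to needing access to the code on your own device.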

Yes, privacy and security are important, but one should have the right and the means to decrypt every binary and all incoming/outgoing data on one’s own device.

For regex, I find https://regex101.com/ is a little bit better.

Another online regex tester: https://extendsclass.com/regex-tester.html