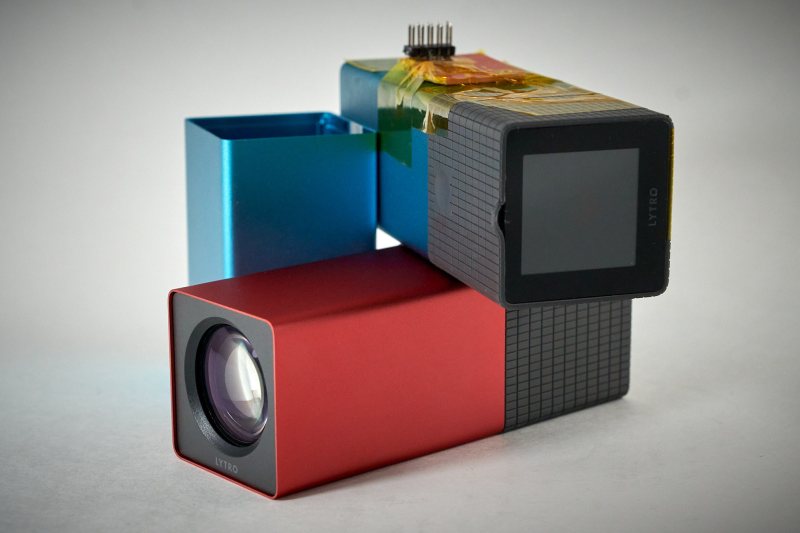

Back in 2012, technology websites were abuzz with news of the Lytro: a camera that was going to revolutionize photography thanks to its innovative light field technology. An array of microlenses in front of the sensor let it capture a 3D image of a scene from one point, allowing the user to extract depth information and to change the focus of an image even after capturing it.

The technology turned out to be a commercial failure, however, and the company faded into obscurity. Lytro cameras can now be had for as little as $20 on the second-hand market, as [ea] found out when he started to investigate light field photography. They still work just as well as they ever did, but since the accompanying PC software is definitely starting to show its age, [ea] decided to reverse-engineer the camera’s firmware so he could write his own application.

[ea] started by examining the camera’s hardware. The main CPU turned out to be a MIPS processor similar to those used in various cheap camera gadgets, next to what looked like an unpopulated socket for a serial port and a set of JTAG test points. The serial port was sending out a bootup sequence and a command prompt, but didn’t seem to respond to any inputs.

Digging deeper, [ea] began to disassemble the camera’s firmware. He managed to find a list of commands like “take photo”, “delete”, “reboot” and so on, which neatly mapped to known camera functions, as well as a few undocumented ones. The command interpreter also seemed to check for a certain input string, generated by passing the camera’s serial number together with the word “please” through an SHA-1 hash function. This turned out to be the keyword to unlock the serial interface.
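To make the unlock mechanism concrete, here is a minimal Python sketch. It assumes the serial number and the literal word “please” are concatenated as ASCII with no separator, and that the SHA-1 digest is sent as lowercase hex; the article doesn’t spell out the exact byte layout, so check [ea]’s write-up for the real format. `unlock_token` and `LockedShell` are hypothetical names, and the shell is only a toy model of a console that stays silent until it sees the right string.

```python
import hashlib
from typing import Optional


def unlock_token(serial: str, word: str = "please") -> str:
    """Derive a per-device unlock string from the camera's serial number.

    Assumption: serial and word are concatenated as ASCII with no separator,
    and the SHA-1 digest is written as lowercase hex.
    """
    return hashlib.sha1((serial + word).encode("ascii")).hexdigest()


class LockedShell:
    """Toy model of a serial console that ignores input until it is unlocked.

    Hypothetical: the replies are illustrative, not the real firmware's.
    """

    def __init__(self, serial: str) -> None:
        self._expected = unlock_token(serial)
        self.unlocked = False

    def handle(self, line: str) -> Optional[str]:
        line = line.strip()
        if not self.unlocked:
            if line == self._expected:
                self.unlocked = True
                return "unlocked"
            return None  # stay silent, like the prompt that ignored all input
        return f"ok: {line}"


shell = LockedShell("SN0000")                # hypothetical serial number
print(shell.handle("reboot"))                # None: ignored while locked
print(shell.handle(unlock_token("SN0000")))  # unlocked
print(shell.handle("reboot"))                # ok: reboot
```

Because the token depends on the serial number, each camera has its own unlock string, which is why a single hard-coded password would not have turned up in the firmware.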

Now able to send commands directly to the camera’s CPU, [ea] wrote a Python library and a set of tools to operate the camera remotely, and enabled several new features. The Lytro can now function as a webcam, for instance, or be operated remotely with full control over its zoom and focus mechanisms. All those functions can be accessed through the built-in WiFi interface, so there’s no need to solder wires to the CPU’s serial port.
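One way to structure a library like this is to keep the camera commands separate from the link they travel over, so the same calls work on the serial console or over WiFi. The sketch below is hypothetical: the command strings (`take_photo`, `focus`, `zoom`) and the one-line-in, one-line-out protocol are placeholders, not [ea]’s actual API, and `FakeTransport` just records what would have been sent.

```python
from typing import List, Protocol


class Transport(Protocol):
    """Anything that can send one command line and return the reply."""

    def send_line(self, line: str) -> str: ...


class FakeTransport:
    """In-memory stand-in for a serial port or WiFi socket, handy for testing."""

    def __init__(self) -> None:
        self.sent: List[str] = []

    def send_line(self, line: str) -> str:
        self.sent.append(line)
        return "ok"


class Camera:
    """Thin command wrapper; the command names below are hypothetical."""

    def __init__(self, transport: Transport) -> None:
        self.transport = transport

    def command(self, name: str, *args: object) -> str:
        # Build a space-separated command line, e.g. "focus 120".
        line = " ".join([name, *(str(a) for a in args)])
        return self.transport.send_line(line)

    def take_photo(self) -> str:
        return self.command("take_photo")

    def set_focus(self, position: int) -> str:
        return self.command("focus", position)

    def set_zoom(self, position: int) -> str:
        return self.command("zoom", position)
```

Swapping `FakeTransport` for a real serial or socket class would leave the `Camera` methods untouched, which is the point of the split.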

With the low-level functions now out in the open, we’re curious to see what hidden potential there still is in Lytro’s technology. Perhaps these cameras can be repurposed to make more advanced 3D capture systems, similar to research Google presented in 2018. If you need a primer on light field technology, check out Alex Hornstein’s presentation from the 2018 Supercon.

The HAD effect strikes again. Lytro light field cameras immediately shot to $100 each.

Out of curiosity, how much were they before?

They were not $20 on ebay even before this article. I’ve tried to buy them but they go for $150 to $250 usually.

Yeah, I have been hunting for one, but I kinda want the Lytro Illum (but it’s too expensive). But I never saw one for $20.

The original ones are sometimes available for cheap because they won’t charge, and the owner doesn’t know that there’s a sequence of buttons you have to hold to instruct the camera to try and charge its battery if it is empty. I didn’t see an illum for cheap though.

Expensive: $400 basic, $600 with 16 GB of memory, and later $1500 for the 2nd gen.

Still cheaper than a raytrix.

https://raytrix.de/

@Ostracus says: “Still cheaper than a raytrix.”

Raytrix came after Lytro: On March 27, 2018, Lytro announced that it was shutting down operations. In November, 2018, the original Lytro website lytro.com was redirecting to Raytrix, a German manufacturer of scientific light field cameras.[1]

Raytrix is a highly refined professional 3D-capable light-field camera.[2] So yeah, understandably lots more fun means lots more money.

1. https://en.wikipedia.org/wiki/Lytro#History

2. https://raytrix.de/

$20??? Look on eBay, it’s 10 times that. Check your sources; it’s not fair to quote a lucky buy as the market value.

“Get quote” business model? Sorry, but: NO! :-D

If you have to ask you can’t afford it.

Barry is a wise man….

Sorry, it’s far too early to reply at this hour; “report” looks like “reply”… Apologies. (Need more coffee!)

What I wanted to say was that this isn’t always true, so if you know what the price should be and know you should be able to afford it, get the quote. But yeah, more often than not, what you say is true.

My biggest counterexample is Fry Steel. They may have put prices up on their website now, but only for a limited set of what they offer! They sell raw metal stock, and for about the best prices around. An order from them for maraging 300 steel was $400. The same order from onlinemetals was $1200! Even with a 20% “pro” discount, Fry Steel blows them out of the water. For anything unusual, though, like maraging 300, you’re going to have to call for a quote :-/ It drives me nuts, but sometimes, just sometimes, it’s worth calling for the quote :)

Lol I was wondering

Was wondering where the $20 ones went to…

I was super-obsessed with these when they came out but didn’t have any money since I was in college. Might have to keep an eye out for when/if the price drops again.

Same, but divorce.

I was poor, but thought the tech sounded cool, so I scraped the money together. I have one somewhere. The results were underwhelming, though, and a DSLR with focus stacking did a far better job. (But I was far too poor then for that! Nowadays I do have a mirrorless, with the Arsenal Pro 2, which can do focus stacking without the need for a computer!) I may have to find mine and see if these “upgrades” might make it worth something, especially if paired with a modern CPU to do some additional processing on raw data (if available) in real time. I think I somehow acquired a second one as well, but it’s been far too long… going to have to dig through boxes!

I got mine for $75 (on Woot???) a million years ago.

And then the big one on firesale for $350.

The cool thing is, the software can export a depth map for the picture, which I can then convert to

Probably the design was too advanced for its time. The concept may be directly applicable to any resurrected designs for dual (or triple) lens stereoscopic cameras which are useful for 3D virtual reality.

Focus stacking is not new, but it’s neat to see this finally reverse engineered.

The idea of taking multiple refocused photos at once and automatically changing or fixing the focus actually does have merit and can make some really neat photos. You just needed to work around the physical limits of what an average camera can do. That’s what that was doing.

Or take a bunch of photos and then do it all in software as that works too. Most people just want to take a quick high resolution photo and don’t care though.

https://en.wikipedia.org/wiki/Focus_stacking or just search for focus stacking here too.
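For comparison, the software half of focus stacking can be sketched in a few lines: given several shots of the same scene focused at different distances, keep each pixel from whichever shot is locally sharpest. This minimal Python version scores sharpness with the absolute value of a 4-neighbour Laplacian on grayscale images stored as nested lists; real tools also align the frames and smooth the selection map, which is left out here.

```python
from typing import List

Image = List[List[float]]


def laplacian_energy(img: Image) -> Image:
    """Absolute 4-neighbour Laplacian; neighbour indices are clamped at the border."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            up = img[max(y - 1, 0)][x]
            down = img[min(y + 1, h - 1)][x]
            left = img[y][max(x - 1, 0)]
            right = img[y][min(x + 1, w - 1)]
            out[y][x] = abs(up + down + left + right - 4 * img[y][x])
    return out


def focus_stack(frames: List[Image]) -> Image:
    """Per pixel, keep the value from the frame that is locally sharpest there."""
    if not frames:
        raise ValueError("need at least one frame")
    energies = [laplacian_energy(f) for f in frames]
    h, w = len(frames[0]), len(frames[0][0])
    result = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            # Ties go to the earliest frame, because max() keeps the first maximum.
            best = max(range(len(frames)), key=lambda i: energies[i][y][x])
            result[y][x] = frames[best][y][x]
    return result
```

Note that this needs one exposure per focus distance, which is exactly what a light field camera avoids.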

Lytro isn’t doing focus stacking. It is literally recording the light field and then simulating a lens system in software to reconstruct a focussed image.
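Simulating a lens in software is commonly done by shift-and-add refocusing: treat the light field as a grid of sub-aperture images, shift each one in proportion to its offset from the centre of the aperture, and average. Points whose parallax matches the chosen shift line up and come out sharp; everything else blurs. The sketch below assumes the views have already been extracted into a hypothetical `views[v][u]` layout, uses whole-pixel shifts with zero fill, and is not Lytro’s actual pipeline.

```python
from typing import List

Image = List[List[float]]


def shift(img: Image, dx: int, dy: int) -> Image:
    """Translate an image by (dx, dy) pixels; uncovered pixels become 0."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            sy, sx = y - dy, x - dx
            if 0 <= sy < h and 0 <= sx < w:
                out[y][x] = img[sy][sx]
    return out


def refocus(views: List[List[Image]], alpha: float) -> Image:
    """Shift-and-add refocusing over a grid of sub-aperture views.

    views[v][u] is the image seen through aperture position (u, v).
    alpha is the pixel shift per unit of aperture offset; points whose
    parallax matches alpha (in this sign convention) come out in focus.
    """
    nv, nu = len(views), len(views[0])
    cv, cu = (nv - 1) / 2, (nu - 1) / 2
    h, w = len(views[0][0]), len(views[0][0][0])
    acc = [[0.0] * w for _ in range(h)]
    for v in range(nv):
        for u in range(nu):
            s = shift(views[v][u], round(alpha * (u - cu)), round(alpha * (v - cv)))
            for y in range(h):
                for x in range(w):
                    acc[y][x] += s[y][x]
    n = nv * nu
    return [[val / n for val in row] for row in acc]
```

Sweeping `alpha` over a range of values moves the synthetic focal plane through the scene, which is the “refocus after the fact” effect.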

How is it “literally recording the light field”? My understanding at the time was that they use a micro-lens array in front of the image sensor, so different pixels are recording the light rays coming from different directions. In essence, trading off resolution for field data.
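The resolution trade-off is easy to see if you split a raw plenoptic frame into sub-aperture views. Assuming an idealised square microlens grid where every lens covers exactly `k × k` sensor pixels with no rotation or offset, pixel `(u, v)` under each lens belongs to view `(u, v)`, so an `H × W` sensor yields `k²` views of only `(H/k) × (W/k)` pixels each. Real plenoptic cameras (the Lytro included, as far as we know) use a hexagonal lenslet layout that needs calibration, so treat this as a toy model; `raw_to_views` is a hypothetical name.

```python
from typing import List

Image = List[List[float]]


def raw_to_views(raw: Image, k: int) -> List[List[Image]]:
    """Split a plenoptic raw frame into k*k sub-aperture views, indexed views[v][u].

    Assumes an idealised square microlens grid: each lens covers exactly
    k x k sensor pixels, aligned with the sensor and starting at (0, 0).
    """
    h, w = len(raw), len(raw[0])
    if h % k or w % k:
        raise ValueError("sensor size must be a multiple of the lenslet pitch")
    return [
        [
            # View (u, v) takes pixel (u, v) from under every lenslet (lx, ly).
            [[raw[ly * k + v][lx * k + u] for lx in range(w // k)] for ly in range(h // k)]
            for u in range(k)
        ]
        for v in range(k)
    ]
```

For a 4 × 4 test frame and `k = 2`, that gives four 2 × 2 views: 16 sensor pixels, but only 4 pixels per output image.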

Yes. That is literally recording the light field data, not focus stacking.

Check out the wikipedia on light field cameras: https://en.wikipedia.org/wiki/Light_field_camera

Also check out this video from an undelivered Kickstarter: https://youtu.be/Hpar1-oHg8o

There are versions of light field cameras that work on the focus stacking principle.

One of the papers referenced in the Wikipedia article is also pretty interesting. https://opg.optica.org/oe/fulltext.cfm?uri=oe-24-19-21521&id=349880

I’ve only just skimmed it, but it looks like it describes the same sort of operation as the Lytro cameras.

The two methods look to be roughly equivalent.

As the other guy said, that’s not what Lytro did, and if raw data is available, it might be able to do some neat things…

However, if you want automatic focus stacking, check out https://witharsenal.com/. It’s a great way to simulate the effect if you have a halfway modern DSLR (or mirrorless).

I have one of these. It’s not that it was all that advanced. The resolution is horrible, because you are giving up pixels to collect the light field information, and you need special software and support to get the enhanced viewing experience, which no one else ever supported. Without a viewer being able to manipulate the image a bit, you just have a low-res photo. Another issue is that you really need to think a lot about composing shots and getting just the right setup, or there’s not much of an effect. In my experience, macro was always the best use case.

That aligns with my experience. I had hoped I could do more with the depth field info the software could produce but I never tried that hard. I still have my camera lying around and I may dust it off to see what I can do with this hack though.

Thinking back on this camera gives me some ideas to try that may not need a huge amount of resolution.

I believe the “dual-pixel” features on various cameras make a tiny use of the same concept, but you need a lot more pixels per microlens to do what these do. It may be time to revisit these, since we’ve gotten to the point of needing to combine many of the ridiculous numbers of pixels we have anyhow. It’d be great for smartphone users: simulate the DOF of a much larger aperture by turning their processors to light field compute instead of AI fakery. Plus, the refocus effects could be very interesting, especially if they could manage to do it at a high enough frame rate for video. Could make for very cool things in VR/AR with some work.
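A dual-pixel sensor splits the photodiode under each microlens in two, so it records a left and a right view with a very small baseline, and the horizontal shift between those views grows with defocus. Estimating that shift is a one-dimensional matching problem. The sketch below picks, for one pixel, the shift that minimises the sum of absolute differences (SAD) between small windows in the two rows; `best_disparity` and its parameters are hypothetical, and a real pipeline would add subpixel refinement and far more robust matching.

```python
import math
from typing import List


def sad(a: List[float], b: List[float]) -> float:
    """Sum of absolute differences between two equal-length windows."""
    return sum(abs(p - q) for p, q in zip(a, b))


def best_disparity(left: List[float], right: List[float], x: int, radius: int, max_d: int) -> int:
    """Return the shift d in [-max_d, max_d] for which the window around right[x + d]
    best matches the window around left[x] (lowest SAD)."""
    if x - radius < 0 or x + radius >= len(left):
        raise ValueError("reference window must lie inside the left row")
    window = left[x - radius : x + radius + 1]
    best_d, best_cost = 0, math.inf
    for d in range(-max_d, max_d + 1):
        lo, hi = x + d - radius, x + d + radius + 1
        if lo < 0 or hi > len(right):
            continue  # candidate window would fall off the edge of the row
        cost = sad(window, right[lo:hi])
        if cost < best_cost:
            best_d, best_cost = d, cost
    return best_d
```

With `left = [0, 0, 5, 9, 5, 0, 0, 0, 0]` and `right` holding the same profile shifted two samples to the right, `best_disparity(left, right, 3, 1, 3)` returns 2.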

It looks to me like you only need three infinitely sharp pictures from three pinhole cameras to construct a reasonable 3D model of the field. Stereoscopic viewers of the 1960s needed only two sharp pictures, taken from left and right positions.

The number of pixels needed may be only three times that of a single pinhole camera.

I don’t know where you’re finding this for $20. I always wanted one, but didn’t want to pay that high price tag for one. I’d love to get my hands on one for $20, but over on eBay, the lowest current bid is $40 and goes up from there all the way to $200!

I’d snap someone’s hand off for one of these at $20, have never seen them at that price.

I’m curious to know how they figured out it was waiting for the SHA-1 of the serial number + “please” – that seems like inspired guesswork!

Found an ex-lytro engineer and offered them favors

Quite a lucky guess

“please” x3

Found an ex-Lytro engineer and hit them with a wrench.

They reverse engineered the firmware and used the serial interface… seems pretty logical to me. It’s all documented on his GitHub: https://github.com/ea/lytro_unlock#secret-unlock-command

No guesswork at all: they reverse engineered the firmware, as the linked write-up explains.

By dissecting the firmware with Ghidra. The readme in the linked GitHub tells the story.

* Now the Lytro Light Field Camera has jumped to $299.00 at the time of posting:

https://www.amazon.com/dp/B0099QUUBU

* Here is a comprehensive review of the Lytro Light Field Camera from Feb 29, 2012:

https://www.dpreview.com/reviews/lytro

* On March 27, 2018, Lytro announced that it was shutting down operations:

https://en.wikipedia.org/wiki/Lytro

Apple stole this tech from Lytro and baked it into the iPhone. Apple had a meeting with them to discuss a purchase. About a year later, Apple introduced “portrait mode”; they lifted the tech from Lytro.

Correct.

Sounds about right for Apple. Bad actors all around.

Yup, they always have been. Ever since the first 128K Macs, they will snow you under with litigation if you dare step a toe into their walled garden.

I was under the impression they just used a bunch of processing informed partly by the depth map from face id? I don’t see anything about light field mentioned in a brief search.

No, portrait mode is mostly just software, there is no lightfield tech in iphones currently.

Faking it is good enough, only technophiles care if it’s actual lightfield tech.

Always wanted one, and $20 would be worth it on a lark, but oh well.🤗

A shame there’s no place to host the live images that users can manipulate. You can still record animations from the shots you take with them, but the beauty was taking a shot and letting viewers control what they wanted to see and do with that shot. Lytro had a proprietary website to host those photos, which went away a long time ago. I was hoping something like Flickr or Instagram would offer the option to host them, but it never panned out. It’ll be fun to play with some more, as I have a perfectly good Lytro Illum, the fixed-lens camera that looked more like a DSLR, which they released after the little box cameras.

You can host them yourself! After I restored many of the Wayback Machine’s archived living pictures[1], I played around with the archived JavaScript and got a bare minimum self-hosted version working[2]. This leans on their “v2” WebGL-based viewer and surfaces the minimum HTML, JS, and JSON to get it working, including monkeypatching a WebGL whitelist. View source, download the three JS and two CSS files.

To try it yourself, manually export a Lytro image from Desktop 5 to “editable LFP,” which gave me a stack of seven TIFF images + the depth map, and a stack.lfp file, which has some JSON embedded in it. Convert the TIFFs to JPEG, manually extract some of the values from the JSON, and guess at the aspect ratio in the HTML (I don’t know where the aspect ratio value comes from, if it changes per-image, or per-camera, or differs based on orientation, or what). And, uh, it should work.
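Pulling the JSON out of a file like `stack.lfp` doesn’t require understanding the whole container. The sketch below makes only one assumption, that the metadata is stored as plain JSON text somewhere in the binary: it decodes the bytes as Latin-1 (one character per byte, so nothing fails to decode) and tries `json.JSONDecoder.raw_decode` at every `{`. It does not parse the actual LFP section structure, may pick up stray `{}`-like byte sequences, and any non-ASCII characters inside the JSON strings will come out mangled by the Latin-1 decode. `embedded_json` is a hypothetical helper name.

```python
import json
from typing import Any, List


def embedded_json(blob: bytes) -> List[Any]:
    """Return every top-level JSON object found in a binary blob, in file order."""
    # Latin-1 maps each byte to exactly one character, so decoding never fails
    # and string offsets line up with byte offsets.
    text = blob.decode("latin-1")
    decoder = json.JSONDecoder()
    found: List[Any] = []
    i = text.find("{")
    while i != -1:
        try:
            obj, end = decoder.raw_decode(text, i)
        except json.JSONDecodeError:
            i = text.find("{", i + 1)  # not JSON here; try the next brace
            continue
        found.append(obj)
        i = text.find("{", end)  # skip past the object we just decoded
    return found
```

Something like `embedded_json(pathlib.Path("stack.lfp").read_bytes())` would then list the candidate objects to pick the viewer’s values from.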

A few caveats: It requires WebGL, because I couldn’t figure out how to get the original 37-image still stack out of LPT with the optimal UV values. The JSON there is not all of the possible JSON, just the minimum to get the WebGL viewer working; I don’t know if quality improves in some way with all the data. The aspect ratio (which does appear to be 1.0 for the Gen1 camera) doesn’t seem to match the dimensions, which are all over the place in archived images, so idk. There’s no good WebGL fallback in this; it’d be neat for someone to monkeypatch that further to show a static preview image without the play button or instructional text.

Reply if you self-host anything!

[1] https://wiki.archiveteam.org/index.php/Lytro

[2] https://s3.amazonaws.com/vitorio/lytro-webgl-export-test-01/lytro-webgl-viewer-fix.html

Saying please didn’t help Bowman… https://www.youtube.com/watch?v=dSIKBliboIo

Maybe because his Lytro looks slightly different from the regular ones? Probably a different processor and firmware altogether.

The price hike was just the futures market. Some AI reading the internet saw “Litro” and decided “buy Litro futures”.

Dystopia is already here.

(Only somewhat) tongue-in-cheek ;-P

nice

This is such great news! I hope we can achieve something with the Lytro Illum. Its operating system is Android! So it just might be possible to pack the original Lytro OS into an app and use the camera with a stock Android ROM, with or without the Google Play Store.

Combined with the built-in WiFi module, you could use apps like Instagram, Snapseed or Lightroom LR to directly post your images online.

The possibilities could be endless!

During a robotics course I’d done a little discussion on the Lytro.

What I proposed was to get two of them, a known distance apart, mounted on gimbals. From there, a very simple algorithm compares the scene from both cameras. With that, you’ve got all the angles to compare across the baseline between the cameras.

Now you’ve got an entirely passive sensor that can determine the size and/or distance of an object, or even across a scene.
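The ranging idea is ordinary stereo triangulation. Assuming the two cameras have been rectified so they point the same way (the gimbals would have to be aligned or calibrated out), a point that shifts by `d` pixels between the views lies at depth Z = f * B / d, where `f` is the focal length in pixels and `B` the baseline; once Z is known, an object spanning `s` pixels is about s * Z / f wide. The function names below are illustrative, not from any particular library.

```python
def depth_from_disparity(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth Z = f * B / d for a rectified, parallel stereo pair (same units as B)."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a point in front of the cameras")
    return focal_px * baseline_m / disparity_px


def object_size(size_px: float, depth_m: float, focal_px: float) -> float:
    """Width of an object spanning size_px pixels at depth_m (similar triangles)."""
    return size_px * depth_m / focal_px
```

For example, with f = 1000 px, B = 0.1 m and d = 20 px, the point is 5 m away, and a 50-pixel-wide object at that distance is 0.25 m across. Depth resolution drops as d gets small, which is why a wider baseline helps for distant objects.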

Wonder if setting up two side by side or four of them in a grid would let you overcome the pixel count / resolution complaints people had.

On another note…

In all seriousness, how difficult would it be to homebrew or reverse engineer the hardware side of this? I mean, Canon, Nikon, and Panasonic all made “binocular” 3D lens hardware that fit their standard cameras. Between those big three and the Lytro’s microlens array, this is a proven concept.

Could a similar effect be rendered with multiple sensors, each with its own micro array lens, or would a grid of 16 much smaller sensors, with every four sensors equipped with its own fixed focal length be better?

Like this:

1 2 3 4

4 1 2 3

3 4 1 2

2 3 4 1

Similar to smartphones with multiple camera sensors, only with quite a few more.
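The 4 × 4 layout above is a cyclic Latin square: each row is the previous one rotated right by one place, so every row and every column contains each of the four focal lengths exactly once, and each focal length is used by four sensors. A short sketch that generates and checks such a layout for any size (the function names are just for illustration):

```python
from typing import List


def cyclic_layout(n: int) -> List[List[int]]:
    """Row r is the labels 1..n rotated right by r places."""
    return [[(c - r) % n + 1 for c in range(n)] for r in range(n)]


def is_latin_square(grid: List[List[int]]) -> bool:
    """True if every row and every column holds each label 1..n exactly once."""
    n = len(grid)
    labels = set(range(1, n + 1))
    rows_ok = all(len(row) == n and set(row) == labels for row in grid)
    cols_ok = all({grid[r][c] for r in range(n)} == labels for c in range(n))
    return rows_ok and cols_ok
```

`cyclic_layout(4)` reproduces the grid in the comment row for row.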

Are there custom lens grinders or jewelers out there that could cut a better engineered micro array lens for a modern single sensor to allow for a similar form factor? I don’t know enough about lenses and image processing software to know what to ask here, but I think using cameras like this might be applicable to giving remote systems better “human vision”.

It looks like a company called Pelican Imaging did something along the lines of what you are suggesting.

It also looks like a hobbyist has taken a crack at making a light field camera: http://cameramaker.se/Lightfield.htm

Holy crap. My Google-Fu is weak.

I dug around for an hour and didn’t find Wernersson’s site. This is awesome.

Cool, I bought mine back when they were about a year old or so for $150. It wasn’t my best purchase at the time, but I’m glad I hung on to it. I’ll be trying this out as soon as I get a chance.

“The command interpreter also seemed to check for a certain input string, generated by passing the camera’s serial number together with the word “please” through an SHA-1 hash function – this turned out to be the keyword to unlock the serial interface.”

Curious how the ‘please’ was figured out.

I bought one when they came out. It’s living in a drawer somewhere around here.

nice article

I just got one for 90 bucks, making sure it was “tested”, and praying it would work when it got here. It does. When these sit discharged for years, you can’t always get the battery to recharge, no matter what secret button presses you know. So never buy an untested one.

The other challenge was that the camera would not connect to my modern 2019 Mac running macOS 12.x. The Lytro Desktop 4 software would run, but some helper extension would not load to connect to the camera. My solution was to run the software on my 2012 MacBook running 10.14 Mojave. Once the pictures are transferred to the Lytro photo file on my iCloud, I can edit on the higher-powered computer or the laptop.

That’s great! I actually purchased mine when it was around a year old for $150. Initially, I wasn’t too thrilled with the purchase, but I’m glad I held onto it. I’ll definitely give this a try as soon as I have the opportunity.