If you’ve ever played around with macro photography, you’ve likely noticed that the higher the lens magnification, the shallower the depth of field. One way around this issue is to take several slices at different focus points and then stitch the photos together digitally. As [Curious Scientist] demonstrates, this is a relatively simple motion control project and well within the reach of a garden-variety Arduino.

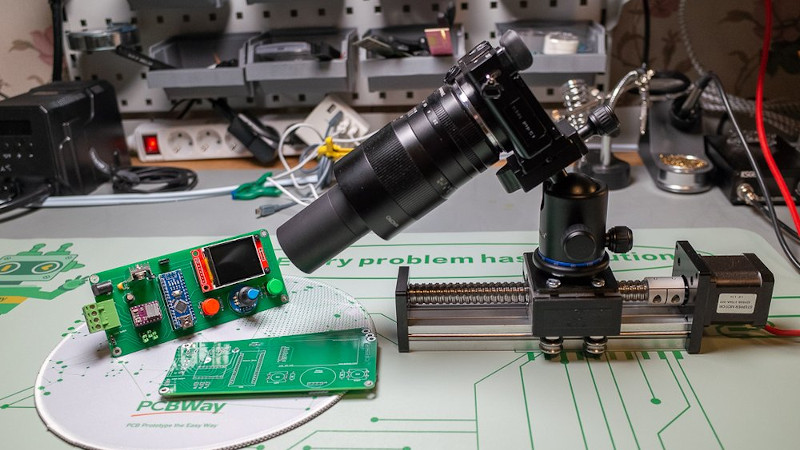

You can move the camera or move the subject. Either way, you really only need one axis of motion, which makes it quite simple. This build relies on a solid-looking lead screw to move a carriage up or down. An Arduino Nano acts as the brains, a stepper motor drives the lead screw, and a small display shows stats such as current progress and total distance to move.
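For a single-axis lead-screw rail, the main arithmetic is converting a focus step in millimetres into step pulses for the driver. The article does not give the screw lead or microstepping setting, so the 8 mm lead, 200-step motor and 1/16 microstepping below are assumptions, and the function names are hypothetical. This is a minimal sketch, not the project’s firmware:

```python
import math

def mm_per_microstep(lead_mm=8.0, full_steps_per_rev=200, microsteps=16):
    # Carriage travel for one step pulse sent to the driver stick.
    # All three defaults are assumptions, not values from the video.
    return lead_mm / (full_steps_per_rev * microsteps)

def slice_targets(total_mm, step_mm, **screw):
    """Absolute microstep position of every slice, starting at 0.

    Rounding each absolute target (rather than each individual move)
    keeps rounding error from accumulating over hundreds of slices.
    """
    res = mm_per_microstep(**screw)
    n_slices = math.floor(total_mm / step_mm) + 1
    return [round(i * step_mm / res) for i in range(n_slices)]

# 2 mm of travel in 0.25 mm steps with the assumed screw:
# 2.5 um per microstep, so targets 0, 100, 200, ... 800 microsteps.
print(slice_targets(2.0, 0.25))
```

The firmware would then move by the difference between consecutive targets, pausing to let the carriage settle before each exposure.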

The stepper motor uses a conventional stepper driver “stick” like those found in many 3D printers. In fact, we wondered whether you could just grab a 3D printer board and repurpose it for this job instead of spinning a custom PCB. Fittingly, the example subject is another Arduino Nano. Skip ahead to 32:22 in the video below to see the final result.

We’ve seen similar projects, of course. You can build for tiny subjects. You can also adapt an existing motion control device like a CNC machine.

Had to watch the video on silent.

How do you merge the photos? Do you somehow build a mask from the focus (perhaps based on an FFT)?

Stacking software is used to combine the images. Using it is a bit of an art, as it can produce strange, surreal results if you get the settings wrong.

Alan Hadley’s CombineZP is popular (and free), while Picolay (also free for non-commercial use) has its admirers.

Google “free image stacking software” for a more complete list, downloads and manuals.

Be warned, much of this software is Windows only.

Stitching scanned images also relies on software, which corrects for corner distortion when a large object is photographed in sections. As I have never used it (I go deep rather than wide), I can only suggest that Google might be your friend.

Flatbed scanners used to come with software such as Scan ’n’ Stitch, but it had no correction for the field curvature found in cameras.

Photoshop does exactly that: each image in the stack is evaluated automatically, and a mask is applied so the sharpest area shows. The process takes only three steps: 1) load all images into a stack, 2) auto-align the stack (in case any slight movement changed the alignment), and 3) auto-blend the stack.
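To illustrate the mask idea, here is a minimal NumPy sketch of one common approach. It is not Photoshop’s actual algorithm, and it uses a spatial Laplacian instead of the FFT suggested above. Each frame gets a per-pixel sharpness score (absolute Laplacian, averaged over a small window), and each output pixel is taken from whichever frame scores highest there. It assumes grayscale frames that are already aligned and the same size; the function names and the `radius` parameter are hypothetical:

```python
import numpy as np

def laplacian_abs(img):
    """Absolute 4-neighbour Laplacian of a 2-D image (edges replicated)."""
    p = np.pad(img.astype(float), 1, mode="edge")
    lap = (p[:-2, 1:-1] + p[2:, 1:-1] + p[1:-1, :-2] + p[1:-1, 2:]
           - 4.0 * p[1:-1, 1:-1])
    return np.abs(lap)

def box_blur(img, radius=2):
    """Mean over a (2r+1) x (2r+1) window so the sharpness map is less noisy."""
    k = 2 * radius + 1
    p = np.pad(img, radius, mode="edge")
    out = np.zeros(img.shape, dtype=float)
    for dy in range(k):
        for dx in range(k):
            out += p[dy:dy + img.shape[0], dx:dx + img.shape[1]]
    return out / (k * k)

def focus_stack(frames, radius=2):
    """Hard-mask merge: every pixel comes from its sharpest frame."""
    stack = np.stack([np.asarray(f, dtype=float) for f in frames])  # (n, H, W)
    sharpness = np.stack([box_blur(laplacian_abs(f), radius) for f in stack])
    best = np.argmax(sharpness, axis=0)  # index of the sharpest frame per pixel
    return np.take_along_axis(stack, best[None], axis=0)[0]
```

Real tools add refinements such as soft blending at mask edges and handling colour channels, but the winner-takes-all mask is the core of it.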

There is free automatic stacking software. Astrophotographers use it a lot.

I use Zerene Stacker which works really well.

A popular free option is enfuse, which is packaged with Hugin, so you can use Hugin to do focus stacking too.
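As a sketch of driving Hugin’s command-line tools from a script, assuming `align_image_stack` and `enfuse` are installed and on your PATH: `align_image_stack -m` also optimizes field of view, which helps when magnification shifts slightly between frames, and the enfuse flags below are the contrast-only, hard-mask settings commonly recommended for focus stacks. Check both against your installed version’s manual; the wrapper functions and file names are hypothetical:

```python
import shutil
import subprocess
from pathlib import Path

def align_cmd(frames, prefix="aligned_"):
    # -m: optimize field of view too (compensates small magnification changes)
    # -a: write the aligned images as <prefix>NNNN.tif
    return ["align_image_stack", "-m", "-a", prefix, *[str(f) for f in frames]]

def enfuse_cmd(aligned, output="stacked.tif"):
    # Weight only by local contrast and take each pixel from a single frame.
    return ["enfuse", "--exposure-weight=0", "--saturation-weight=0",
            "--contrast-weight=1", "--hard-mask", f"--output={output}",
            *[str(a) for a in aligned]]

def stack(frames, workdir=".", prefix="aligned_", output="stacked.tif"):
    for tool in ("align_image_stack", "enfuse"):
        if shutil.which(tool) is None:
            raise FileNotFoundError(f"{tool} not found on PATH")
    frames = [Path(f).resolve() for f in frames]  # tools run inside workdir
    subprocess.run(align_cmd(frames, prefix), cwd=workdir, check=True)
    # Assumes workdir holds no leftover files matching the prefix.
    aligned = sorted(Path(workdir).glob(f"{prefix}*.tif"))
    subprocess.run(enfuse_cmd([a.name for a in aligned], output),
                   cwd=workdir, check=True)
    return Path(workdir) / output
```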

I do a lot of focus stacked macro photography. Photoshop can do it but it’s slow and not very accurate. Helicon Focus is my preferred tool. It’s not free but it’s the fastest that I’ve found and is really easy to use. The latest version supports the M1/M2 chips on Macs.

It would have been nice to see how he merged the individual images. Does it require dozens of images or just a handful? How automated is the merging process? Then you could assess whether the choke point is in the image acquisition or the post-processing.

See my previous comment for image stacking software.

Mileage varies with the number of frames to stack, the size of those frames and your choice of software and settings. The software tends to run faster than image acquisition if you allow settling time when moving the sample/camera.
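A back-of-envelope timing model shows why settling can dominate acquisition. All timings here are user-supplied guesses, not measurements from the video, and the function is hypothetical:

```python
def acquisition_time_s(n_frames, move_s, settle_s, exposure_s, save_s=0.0):
    """Rough wall-clock time to shoot one stack."""
    if n_frames < 1:
        return 0.0
    moves = n_frames - 1  # the rail moves between frames, not before the first
    return moves * (move_s + settle_s) + n_frames * (exposure_s + save_s)

# 100 frames, 0.2 s per move, 1 s settling, 0.1 s exposure:
# 99 * 1.2 + 100 * 0.1 = 128.8 s, of which 99 s is just waiting for vibration to die down.
print(acquisition_time_s(100, 0.2, 1.0, 0.1))
```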

In Photoshop the process is fairly automated: all frames are loaded as a stack, and auto-blend with auto-align pretty much takes care of the heavy lifting (a layer mask is created on each image in the stack so the sharpest area remains visible).

The video was focusing (pun intended) on the rig and not on the stitching. You can find the answer on Google in like 2 seconds by searching “how to merge images by focus stacking”… The number of images depends on many parameters, such as the magnification, the aperture, and the depth that you want to cover, as others have said. There will be another video on this rig (or actually a new one) relatively soon, and I will include the stacking process in it as well.
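To make the dependence on magnification and aperture concrete, a common close-up approximation for total depth of field is DOF ≈ 2·N·c·(m+1)/m², where N is the marked f-number, c the circle of confusion and m the magnification. The 0.03 mm circle of confusion (a typical full-frame figure) and the 20% overlap between slices are assumptions, and the helpers are hypothetical:

```python
import math

def dof_mm(magnification, f_number, coc_mm=0.03):
    """Approximate total depth of field at close focus: 2*N*c*(m+1)/m^2."""
    m = magnification
    return 2 * f_number * coc_mm * (m + 1) / m**2

def frames_needed(depth_mm, magnification, f_number, coc_mm=0.03, overlap=0.2):
    # Step by a fraction of the DOF so neighbouring slices overlap a little.
    step = dof_mm(magnification, f_number, coc_mm) * (1 - overlap)
    # n slices span (n - 1) steps, so add one for the first slice.
    return math.ceil(depth_mm / step) + 1

print(dof_mm(1, 8))              # about 0.96 mm at 1:1 and f/8
print(frames_needed(2.0, 5, 4))  # 45 frames for 2 mm of depth at 5x and f/4
```

Because DOF shrinks roughly with 1/m², going from 1:1 to 5x turns a handful of frames into dozens.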

You can also keep the camera and subject still and change the lens focus instead; an Arduino can talk to a Canon over V-USB, though a real USB host would probably be better.

This is what I did with a Sony camera and a homebrew app called FocusBracket that runs on the camera. Ended up working better for my purposes than a macro rail.

The idea is good, but not if you go towards extreme macro photography, where you mount a microscope objective on your camera using extension tubes and/or a bellows. In that case, you will almost certainly need a rig like the one I presented in the video.

True; however, many lenses change their actual magnification when the focus point changes. This is called “breathing”. Plus, moving the camera allows very precise control of the focus point when stacking hundreds of images with a very thin depth of focus. Also, that works with any camera.

If shooting indoors under constant light, why not just stop down the lens to get more depth of field? Who cares if it takes 2 seconds instead of 1/100th?

That’s not how optics work. Once you stop down past a certain point (roughly f/8 to f/11), diffraction plays an increasingly large role and your image starts to soften. Also, even at narrower apertures, you won’t get a deep enough depth of field, so you would need to do stacking anyway.
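The diffraction point gets worse at high magnification because the effective f-number grows as N·(1+m), and the Airy disk diameter is about 2.44·λ·N_eff. The sketch below assumes 550 nm (green) light and a lens with a pupil magnification of 1; the function names are hypothetical:

```python
def effective_f_number(f_number, magnification):
    # At close focus the light cone is narrower than the marked f-number implies
    # (assumes pupil magnification of 1).
    return f_number * (1 + magnification)

def airy_diameter_um(f_number, magnification, wavelength_nm=550.0):
    """Airy disk diameter to the first dark ring: 2.44 * lambda * N_eff."""
    return 2.44 * (wavelength_nm / 1000.0) * effective_f_number(f_number, magnification)

print(airy_diameter_um(11, 1))  # about 29.5 um at f/11 and 1:1
print(airy_diameter_um(4, 5))   # about 32.2 um at f/4 and 5x
```

With sensor pixels typically a few micrometres across, either blur spot covers many pixels, which is why stopping down further is not a substitute for stacking.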