Over the history of this business, a lot of people have foreseen limits that look rather silly in hindsight. In 1943, IBM President Thomas Watson declared that “I think there is a world market for maybe five computers.” That was more than a little wrong. Depending on the definition of computers, particularly if you include microcontrollers, there are probably trillions of the things.

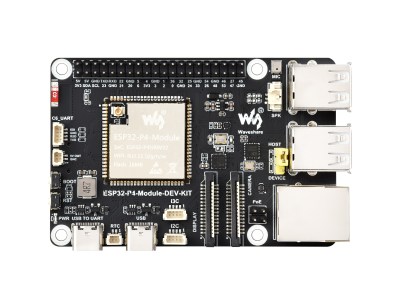

We might as well include microcontrollers, considering how often we see projects replicating retrocomputers on them. The RP2350 can do a Mac 128k, and the ESP32-P4 gets you into the Quadra era. Which, honestly, covers the majority of daily tasks most people use computers for.

The RP2350 and ESP32-P4 both have more than 640kB of RAM, so that famous Bill Gates quote obviously didn’t age any better than Thomas Watson’s prediction. As Yogi Berra once said: predictions are hard, especially about the future.

Still, there must be limits. We ran an article recently pointing out that new iPhones can perform three orders of magnitude faster than a Cray 2 supercomputer from the 80s. The Cray could barely manage 2 Gigaflops (that is, two billion floating point operations per second); the iPhone can handle more than two Teraflops. Even if you take the position that it’s apples and oranges if it isn’t on the same benchmark, the comparison probably isn’t off by more than an order of magnitude. Do we really need even 100x a Cray in our pockets, never mind 1000x?
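For anyone who wants to check the arithmetic, here is a minimal sketch in Python. It uses only the two round figures quoted above; the constant and function names are made up for illustration, and the iPhone number is treated as a lower bound rather than a benchmark result.

```python
import math

# Round figures quoted in the text (floating point operations per second).
CRAY_2_FLOPS = 2e9    # "barely 2 Gigaflops"
IPHONE_FLOPS = 2e12   # "more than two Teraflops", used here as a lower bound


def speed_ratio(faster: float, slower: float) -> float:
    """How many times faster one machine is than the other."""
    return faster / slower


def orders_of_magnitude(faster: float, slower: float) -> float:
    """Base-10 logarithm of the speed ratio."""
    return math.log10(speed_ratio(faster, slower))


if __name__ == "__main__":
    ratio = speed_ratio(IPHONE_FLOPS, CRAY_2_FLOPS)
    oom = orders_of_magnitude(IPHONE_FLOPS, CRAY_2_FLOPS)
    print(f"{ratio:.0f}x faster, about {oom:.1f} orders of magnitude")
```

Even if the real-world comparison were off by a full order of magnitude, the same calculation would still leave the phone at roughly 100x the Cray.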

Image: ASCI Red by Sandia National Labs, public domain.

Going forward in time, the Teraflop Barrier was first broken in 1997 by Intel’s ASCI Red, produced for the US Department of Energy for physics simulations. By 1999, it had bumped up to 3 Teraflops. I don’t know about you, but my phone doesn’t simulate nuclear detonations very often.

According to Steam’s latest hardware survey, NVIDIA’s RTX 5070 has become the single most common GPU, at around 9% of total users. When it comes to 32-bit floating point operations, that card is good for 30.87 Teraflops. That’s close to NEC’s Earth Simulator, which was the fastest supercomputer from 2002 to 2004; NEC claimed 35.86 Teraflops there.

Is that enough? Is it ever enough? The fact is that software engineers will find a way to spend any amount of computing power you throw at them. The question is whether we’re really gaining much of anything.

At some point, you have to wonder when enough is enough. The fastest piece of hardware in this author’s house is a 2011 MacBook Pro. I don’t stress it out very much these days. For me, personally, it’s more than enough compute. If I weren’t using YouTube I could probably drop back a couple generations to PPC days, if not all the way to the ESP32-P4 mentioned above.

Image: ESP32-P4 Dev Board, by Waveshare.

The 3D models for my projects really aren’t more complex than what I was rendering in the 90s. Routing circuit diagrams hasn’t gotten more complicated, either, even if KiCad uses a lot more resources. For what I’m working on these days, “enough compute” is very modest, and wouldn’t come close to taxing the 2 Teraflop iPhone. That’s probably why Apple was confident they could use its guts in a laptop.

How about you? The bleeding edge has always been driven by edge cases, and it’s left me behind. Are you surfing that edge? If so, what are you doing with it? Training LLMs? Simulating nuclear explosions? Playing AAA games? Inquiring minds want to know! Furthermore, for those of you who are still at that bleeding edge that’s left me so far behind– how far do you think it will keep going? If you’re using teraflops today, will you be using petaflops tomorrow? Are you brave enough to make a prediction?

I’ll start: 640 gigaflops ought to be enough for anybody.

Not a lot of the stuff I have is any newer than about 2012-2014, with my daily driver being a T430 from 2012. (I’d like more RAM, but don’t really need more speed.) I have maybe something like a slightly better low-end AMD GPU from 2016 here or there. The biggest exception is a fairly new machine with an NVIDIA 30-series GPU whose only purpose was to train small vision models in PyTorch. The oldest machine is from 2006 and that’s really the only place there’s an “every day” struggle since its CPUs aren’t quite enough to watch video streaming from Twitch, though its 32GB of RAM is quite luxurious for anyone who keeps a lot of tabs open during a project.

For any given task, there’s some minimum amount of compute required to accomplish it comfortably, and really the compute that is “enough” is the maximum of that set.

If you only need word processing, the floor is quite low. Add web browsing and it goes up a fair bit (adblocking helps), add video streaming and it goes up a bit more, and then training AI models is probably the worst offender (though perhaps a fast GPU could still be coupled to a fairly old system). Gaming straddles a wide spectrum, with some games being very light and some not too far below model training.
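The “maximum of the set” idea can be sketched in a few lines of Python. Everything here is hypothetical: the table name, the function name, and especially the numbers, which are arbitrary relative units chosen only to preserve the ordering described above. They are not measurements of anything.

```python
# Hypothetical per-task compute floors in arbitrary relative units.
# Only the ordering is taken from the discussion; the values are invented.
TASK_FLOOR = {
    "word processing": 1,
    "web browsing": 4,
    "video streaming": 8,
    "model training": 100,
}


def enough_compute(tasks):
    """Return the largest floor among the tasks a person actually does.

    An empty task list needs no compute at all, hence default=0.
    """
    return max((TASK_FLOOR[task] for task in tasks), default=0)


if __name__ == "__main__":
    print(enough_compute(["word processing", "web browsing"]))
    print(enough_compute(["word processing", "video streaming", "model training"]))
```

The point of using `max` rather than a sum is that the lighter tasks come along for free once the heaviest one is covered.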

In terms of the absolute minimum requirements, I typically want anything that isn’t a single-purpose device to have an MMU, since debugging system crashes from errant applications is no fun. I’d also probably want it to have support in the latest NetBSD to have useful libraries and applications available, though they still support the VAX-11/780 from 1977, so e.g. the specific Arm boards they support tend to be more limiting than age.

This could become an issue of real import if Asia explodes into a war over Taiwan. Thankfully, there are TONS of e-waste computers from the last 1.5 decades that are perfectly serviceable for a lot of everyday needs.

I haven’t replaced my main PC for a decade or so, and as long as it’s supported by Linux I’ve no particular reason to do so.

Finding that sweet spot in compute is tricky. I’ve been wrestling with this while optimizing some embedded display modules for a project. Efficiency always beats raw power.

i bought a low-end CPU+MB in 2019, and in 2024 i bought a new CPU for the same MB…got it used…the high-end 2019 CPU, the fastest that was compatible with the MB at the time. ryzen 3 2200G -> ryzen 7 3700X. first time i upgraded the CPU halfway through the lifespan of a motherboard (about a decade imo).

and i actually enjoyed the upgrade! 8 cores! “make -j” is so fast…i remember when i started at my job in 2001, i tried to avoid re-compiling the world because it took 10 minutes but now i can do it in about 10 seconds.

can’t imagine wanting anything faster :)

IBM CEO Thomas Watson, Sr. never said, “I think there is a world market for maybe five computers.” Unfortunately, this spurious quotation has been repeated so often, in so many places, that it has taken on the patina of truth.

According to IBM, this is a misquote of a statement by his son, Thomas Watson, Jr. from IBM’s stockholder meeting in 1953:

“We believe the statement that you attribute to Thomas Watson is a misunderstanding of remarks made at IBM’s annual stockholders meeting on April 28, 1953. In referring specifically and only to the IBM 701 Electronic Data Processing Machine – which had been introduced the year before as the company’s first production computer designed for scientific calculations – Thomas Watson, Jr., told stockholders that ‘IBM had developed a paper plan for such a machine and took this paper plan across the country to some 20 concerns that we thought could use such a machine. I would like to tell you that the machine rents for between $12,000 and $18,000 a month, so it was not the type of thing that could be sold from place to place. But, as a result of our trip, on which we expected to get orders for five machines, we came home with orders for 18.'”

That’s a lovely quote. Can you cite a source? It would be great to have that the next time someone misquotes Tom Senior.

The quotation comes from the FAQ section of IBM’s history website circa 2007. The website has since been replaced, but the FAQ can still be found in this PDF on page 26 (thank you Wayback Machine): https://web.archive.org/web/20071010071202/http://www-03.ibm.com/ibm/history/documents/pdf/faq.pdf

I’ve always heard the quote attributed to IBM’s giving away rights to the PC’s DOS to Micro$oft because IBM didn’t see value in PCs. I always thought it to be ironic that the prediction of “I think there is a world market for maybe five computers” was just ahead of its time if you think about companies like Goggle and Fakebook and Amazone pushing all our devices to omnipotent “cloud” based computing. AI is the pinnacle ode to cloud computing.