[Michael] from Nootropic Design wrote in to share an interesting and fun project he put together using one of the products his company sells. The gadget in question is their “Video Experimenter” shield, which was designed for the Arduino. It is typically used to manipulate composite video streams via overlays and the like, but it can also serve as a video analyzer.

When used for video analysis, the board lets you decode closed captioning data, which is exactly what [Michael] did here. He decided it would be fun to scrape the closed captioning information from various shows and commercials to do a little bit of content analysis.

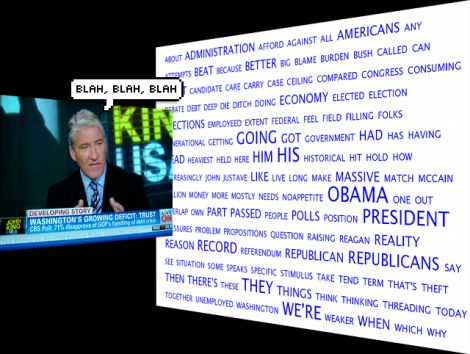

Using his Arduino and a Processing sketch, he reads the closed captioning feed from his cable box, keeping a count of every word mentioned in the broadcast. As the show progresses, the sketch dynamically constructs a word cloud that shows the most commonly used words in the video feed.
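The counting step is simple enough to sketch in a few lines. The Python below is not [Michael]'s code, only a minimal illustration of tallying words from caption text; the stop-word list, the `update_counts` helper, and the two sample caption lines are made up for the example.

```python
import re
from collections import Counter

# Hypothetical stop-word list; a real word cloud would likely filter many more.
STOP_WORDS = {"the", "a", "an", "and", "of", "to", "in", "is", "it", "that"}

def update_counts(counts, caption_line):
    """Add the words from one line of caption text to a running tally."""
    words = re.findall(r"[a-z']+", caption_line.lower())
    counts.update(w for w in words if w not in STOP_WORDS)
    return counts

counts = Counter()
# Made-up caption lines standing in for the decoded feed.
for line in ["THE DEBT CEILING TALKS CONTINUE", "DEBT TALKS STALL IN WASHINGTON"]:
    update_counts(counts, line)

# The largest counts would be drawn as the largest words in the cloud.
print(counts.most_common(5))
```

Each word's count would then set its font size in the cloud, with the tally updated as new caption lines arrive.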

The results he gets are quite interesting, especially when he watches the nightly news, or some other broadcast with a specific target audience. We think it would be cool to run this application during a political debate or perhaps during a Hollywood awards ceremony to discover which set of speakers is the most vapid.

If you’re interested in learning more about the decoding process, [Michael] has put together a detailed explanation of how the closed captioning data can be pulled from a video stream. For those of you who just want to see the decoder in action, keep reading to see a quick video demonstration.

[youtube=http://www.youtube.com/watch?v=s_2zWhPJvW8&w=470]

Well, I’m quite impressed. I expected some meaningless new media BS, but this is interesting, useful, and effective.

Now you can keep track of the sensationalist words most used by newscasters.

keywords:

obama

debt ceiling

palin

casey anthony

space shuttle

libya

heat wave

I don’t know, but perhaps anyone who needs to use an Arduino to determine which set of speakers at a Hollywood awards show is the most vapid isn’t really in a position to judge. ;)

I’d like to use this for the next Apple keynote (or any other company’s), provided they use closed captioning.

It would be fun to write this into a piece of software to use this while recording/analyzing a broadcast and at the same time grab relative timecode positions for each logged word. Then select all the words you would like to export in a video clip. For now it would probably require you to adjust the in and out points for a shot list, but it would be much easier to create a clip like the following:

http://www.youtube.com/watch?v=Nx7v815bYUw

@mess_maker

Record the program.

Scan through for all the words, with a separate data field for the time of each.

Just take the top 20 words or so, go to the times listed for those words, and re-record those times only. Tada, you have what you see up there.
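The steps above can be sketched roughly as follows. This is only an illustration under some assumptions: captions arrive as `(seconds, text)` pairs, the `index_words` and `shot_list` helpers are hypothetical names, and the `pad` around each word is a guess that would still need hand adjustment, since captions rarely line up exactly with the audio.

```python
from collections import defaultdict

def index_words(timed_captions):
    """Map each word to the list of times (in seconds) at which it appeared."""
    index = defaultdict(list)
    for seconds, text in timed_captions:
        for raw in text.lower().split():
            word = raw.strip(".,!?\"")
            if word:
                index[word].append(seconds)
    return index

def shot_list(index, top_n=20, pad=1.0):
    """Return (word, in_point, out_point) tuples for the top_n most frequent words."""
    top = sorted(index, key=lambda w: len(index[w]), reverse=True)[:top_n]
    shots = [(w, max(0.0, t - pad), t + pad) for w in top for t in index[w]]
    return sorted(shots, key=lambda s: s[1])  # chronological order

# Made-up sample data: (time in seconds, caption text).
captions = [(12.0, "Debt ceiling talks"), (47.5, "The debt debate"), (90.0, "Ceiling raised")]
index = index_words(captions)
shots = shot_list(index, top_n=1)
print(shots)
```

The resulting in and out points could then be handed to a video editor as a rough shot list.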

@Drake, yes, that is pretty much what I meant, though I got tripped up in my description. *blush*

This could be made into a Bullshit Bingo game machine.

http://en.wikipedia.org/wiki/Buzzword_bingo

If your computer has a TV Tuner in it, it shouldn’t be too difficult to pull the CC data from it as an alternative.

Anyone else notice that “Republican” and “Republicans” are pretty big, but nowhere in the list does “Democrat” show up? I’m going from the photo at the top, rather than the video.

I also like some of the hidden messages, like “We’re Sorry” and “Against All Americans”, “More Mostly Needs No Appetite” and “Propositions Question Raising Reagan”, “That’s Theft! Then There’s These”

and the winner is (drumroll) “Today, Together… Unemployed Washington.”

Since the list is alphabetically sorted I am pretty sure that just happened to work out that way.

I really like coming here because most everywhere else on the internet is so politically charged that I enjoy a small escape. I’d love it if HAD stayed that way.

I just think they’re funny, that’s all. I’d rather avoid politics altogether, myself.

@mess_maker

Ah, if it were that simple…

I work at a TV station, and all of our monitoring feeds have CC turned on, so I see QUITE a bit of it. I can’t say this applies to all stations, but I would guess it would be similar everywhere.

First, closed captioning almost never corresponds exactly with the spoken word. Sometimes it can be as much as a minute behind. When that happens, expect to see a dropped sentence or two. This problem is worst for live events, but is better if the closed captioner has a script available.

Second, misspellings are not uncommon. Some programs are better than others, and I would assume that it depends largely on the individual captioner (closed captioning is done by someone at a keyboard watching the program). I have seen some truly horrible CC, and I do well to understand what was meant even when I hear what was said.

Finally, sometimes the captions get garbled. If that happens, nothing is going to help.

Wouldn’t it be nicer if we could capture hundreds of news programs and provide real-time statistics on the words mentioned in the last 24 hours? I guess that means we’d need more than 100 Arduinos too… :(

Any thoughts?
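On the software side, a "last 24 hours" tally is a sliding-window count. The sketch below is one possible way to do it, assuming words arrive with timestamps in non-decreasing order; the `RollingWordCounts` class and the sample data are hypothetical.

```python
from collections import Counter, deque

class RollingWordCounts:
    """Word counts over a sliding time window (default: the last 24 hours)."""

    def __init__(self, window_seconds=24 * 3600):
        self.window = window_seconds
        self.events = deque()    # (timestamp, word) pairs, oldest first
        self.counts = Counter()

    def add(self, timestamp, word):
        """Record one word and drop anything that has aged out of the window."""
        self.events.append((timestamp, word))
        self.counts[word] += 1
        self._expire(timestamp)

    def _expire(self, now):
        # Assumes timestamps were added in non-decreasing order.
        while self.events and self.events[0][0] <= now - self.window:
            _, old = self.events.popleft()
            self.counts[old] -= 1
            if self.counts[old] == 0:
                del self.counts[old]

    def top(self, n=10):
        return self.counts.most_common(n)

# Made-up example with a 60-second window so the expiry is easy to see.
stats = RollingWordCounts(window_seconds=60)
stats.add(0, "debt")
stats.add(30, "debt")
stats.add(70, "libya")   # the mention at t=0 is now outside the window
print(stats.top())
```

One such counter per channel, fed by each decoder, would be enough to drive a live page.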

@jack:

The real limiting factor for me is the access to multiple cable TV sources, not the electronics equipment, which is relatively cheap.

Personally, I would only be interested in providing real time stats for CNN, Fox, and CNBC; that way you could show the real-time media topics. You could add in comparison with Twitter and Google News, and see how certain phrases started trending after showing up on the news.

So that would only require 4 Arduinos and 4 Video Experimenters, so ~$50 for each unit would make $200, plus a cheap laptop and a VPS to host a website. And 4 cable TV sources. A VPS is ~$20 a month, and I don’t even want to think about how much cable for 4 TVs is in my area… probably around $50+ a month, if I went for analog TV, and not including the install price for cable…

I almost want to start up a quickstart project on it right now…

Something else that comes to mind with a project like this is that sometimes it is more important what is not being said. For instance, there was a recent article indicating that FOX was not reporting about the UK tabloid scandal for some reason. Why? What are they hiding? Pot calling the kettle black? Media watchdog organizations could put something like this to work quickly and cheaply.
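Spotting what is not being said comes down to comparing per-channel word counts. Here is a minimal sketch, assuming each channel's counts are already available as a dictionary; the `missing_topics` helper, the `min_mentions` threshold, and the channel names and numbers are all invented for illustration.

```python
def missing_topics(counts_by_channel, channel, min_mentions=5):
    """Words the other channels mention at least min_mentions times in total
    but that never appear in `channel`'s captions."""
    totals = {}
    for name, counts in counts_by_channel.items():
        if name == channel:
            continue
        for word, n in counts.items():
            totals[word] = totals.get(word, 0) + n
    own = counts_by_channel.get(channel, {})
    return sorted(w for w, n in totals.items()
                  if n >= min_mentions and own.get(w, 0) == 0)

# Invented counts for three placeholder channels.
counts = {
    "A": {"scandal": 4, "weather": 3},
    "B": {"scandal": 3, "weather": 2},
    "C": {"weather": 6},
}
gaps = missing_topics(counts, "C")
print(gaps)
```

Given the caption lag and misspellings mentioned earlier in the thread, a result like this would be a prompt for a closer look rather than proof of anything.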