Everything is online these days, creating the perfect storm for cyber shenanigans. Sadly, even industrial robotic equipment is easily compromised in our increasingly connected world. A new report by Trend Micro demonstrates a set of attacks on robot arms and other industrial automation hardware.

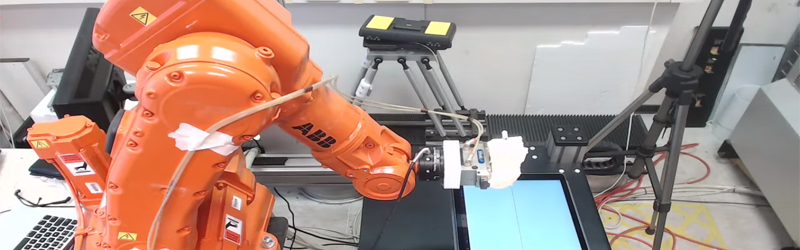

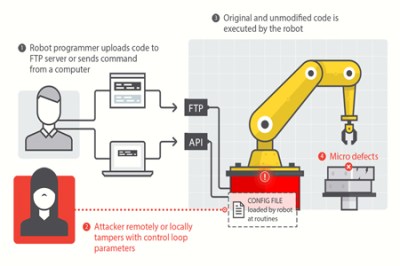

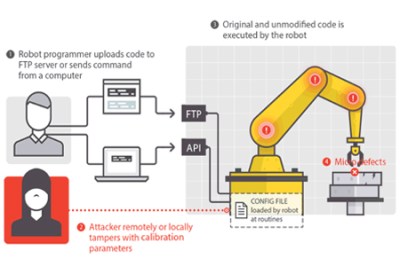

This may not seem like a big deal, but imagine a scenario where an attacker intentionally builds invisible defects into thousands of cars without the manufacturer even knowing. Just about everything in a car these days is built using robotic arms. The chassis could be built too weak, or the engine could be built with weaknesses that cause it to fail far before the expected lifespan. Even your brake disks could have manufacturing defects introduced by a computer hacker, causing them to shatter under heavy braking. The Forward-looking Threat Research (FTR) team decided to check the feasibility of such attacks, and what they found was shocking. Tests were performed in a laboratory with a real, working robot. They managed to come up with five different attack methods.

Why are these robots even connected? As automated factories become more complex it becomes a much larger task to maintain all of the systems. The industry is moving toward more connectivity to monitor the performance of all machines on the factory floor, tracking their service lifetime and alerting when preventive maintenance is necessary. This sounds great for its intended use, but as with all connected devices there are vulnerabilities introduced because of this connectivity. This becomes especially concerning when you consider the reality that often equipment that goes into service simply doesn’t get crucial security updates for any number of reasons (ignorance, constant use, etc.).

For the rest of the attack vectors and more detailed info you should refer to the report (PDF), which is quite an interesting read. The video below also gives insight into how these types of attacks might affect the manufacturing process.

So don’t people use routers with firewalls for industrial robots? Starting to sound like the IoT. Every report I read about IoT seems to indicate that the devices are just plugged directly into the net without a firewall.

Maybe it’s a grand conspiracy to undermine the industrial might of the western world by making the bean-counters think a firewall is a cost-center?

Well here’s the thing: Communication is bidirectional, and not all connections are initiated from the outside (few, if any, really). You need to firewall the machine’s outgoing connections as well to only allow them to connect to known good hosts, or not at all. Otherwise it’s possible to give them a bad update package when they ask, or other foolery. Whenever I make this point people always squawk about cryptosigned update packages, but I’d argue there are ways to be malicious during the download phase long before the validation phase. Robot arm runs out of free space on / and then what happens? One of many possibilities.
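To make the two points above concrete, here is a minimal Python sketch, not anyone's actual controller code. It assumes a default-deny egress policy and a size cap applied while the download is still in flight, before any signature validation runs. The host names, ports, and function names (`is_egress_allowed`, `read_capped`) are all hypothetical and chosen only for illustration.

```python
import io

# Hypothetical allowlist of (host, port) pairs the robot controller may contact.
ALLOWED_EGRESS = {
    ("updates.vendor.example", 443),
    ("historian.plant.example", 8883),
}


def is_egress_allowed(host, port, allowlist=ALLOWED_EGRESS):
    """Default deny: permit an outgoing connection only to a known-good host and port."""
    return (host.lower(), port) in allowlist


class DownloadTooLarge(Exception):
    """Raised when a download exceeds the configured size cap."""


def read_capped(stream, max_bytes, chunk_size=4096):
    """Read a download into memory, aborting once it would exceed max_bytes.

    This bounds what an oversized payload can do (for example filling the
    disk) before a signature check has had a chance to run.
    """
    received = bytearray()
    while True:
        chunk = stream.read(chunk_size)
        if not chunk:
            return bytes(received)
        if len(received) + len(chunk) > max_bytes:
            raise DownloadTooLarge(f"payload exceeds {max_bytes} bytes")
        received.extend(chunk)


if __name__ == "__main__":
    print(is_egress_allowed("updates.vendor.example", 443))
    print(is_egress_allowed("somewhere.else.example", 443))
    print(len(read_capped(io.BytesIO(b"x" * 1000), max_bytes=4096)))
```

In a real plant the allowlist would live in the firewall rather than in application code; the sketch only shows the default-deny logic and why the size check has to happen before validation.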

Updates are rarely/never done automatically in industrial robotics. You have to get the firmware directly from the manufacturer to perform an update, then go through the rigmarole of applying and verifying it on the controller and associated hardware before it gets anywhere near being left to run alone on a production system.

Kids, remember the Natanz[1] hack? Yeah, that one with Stuxnet[2]?

Remember that the vector typically was a USB drive which most probably infected… some engineer’s Windows workstation?

It’s a while ago since I last saw a Siemens S7, but at that time, the programming and config software tended to run on an old-ish Windows version *and nothing else*.

One more: look at those little diagrams above. See the little pod labelled FTP? Guess there’s a default password no one bothers to change?

Firewalls we have. Too many. What we don’t have is people who know how that shit works.

“Firewalls we have. Too many. What we don’t have is people who know how that shit works.”

In most cases they’re just interpreted as security.

That is, in the end, the false sense of security.

If one doesn’t understand the working principles, then one may not know the threats. So how could one feel secure then?

Lol, I wish I had one of these so I could just set it up and let hackers play with it. I must say it would be fun going to sleep at night and waking up to some creative and unique things being done by hackers :P

But as @Dave has said, it is kind of amazing that some of these companies are not buffering themselves with a first line of defense such as a firewall.

Industrial version of Battlebots.

Putting robots online for the public to play with is starting to become common. There’s a guy with a small army of them running around in his apartment, and I’ve certainly allowed a few folks to drive around my place. :)

In the real world, in real factories, the network where the robot lives is often completely disjoint from a company LAN, like airgap separated. Let alone attached to the WWW without a firewall. Usually there are even heavy security measures between the industrial control network and the company LAN.

With all that IoT and “industry 4.0” hype that’s gonna change. Everything should get connected if possible… So it is very important to point out the dangers of this and try to prevent the worst errors.

Firewalls are worth nothing if you have a stupid user finding a USB drive and sticking it into his computer. Or if you have someone with physical access to your network.

Of course, the latter is somewhat easy to circumvent, put the robots in their own network and firewall that from the rest of the company. Or have it running autonomously without any connection to the company network. But there is no way to circumvent human stupidity. Especially if you remember that there always will be some really old piece of hardware in a manufacturing line that is operated by a completely unpatched WinXP or even older machine.

Here’s my guess as to why they’re connected to the internet: if you’re assembling John Deere tractors in the home of the free but you’re having castings made in the home of the not so free (China), then you would probably want to keep an eye on progress, or possibly make changes, while still being in the home of the free.

“home of the free”, where said JD tractor is so DRM’d, that you can be sued if you want to repair some things yourself :P

Also, aren’t castings for JD made in India?

Should be changed to “home of the not so free” (or “Country of the DMCA”) and “home of the completely unfree” although the latter could be misinterpreted as e.g. Turkey.

I’ll have to read the PDF later, but wouldn’t QC catch some of these intentional defects?

They are on a microscopic scale a few mm off here and few mm extra here.

If you consider “a few millimetres” minuscule, you clearly have no idea of the scales of QC acceptance in manufacturing.

Hehe, I had to surface grind a connector for an exhaust port and was 0.008mm out! all 50 of them were rejected. :(

it was just one pass too many :(

Was that for a military space project? :-) Sure not for the average car.

B.S. No one has a tolerance that tight, let alone a human being able to grind away that minuscule amount.

0.00031496 in, ya I don’t think so.

Out of spec is out of spec even if the tolerance is +/- 0.100mm. 8um out of spec is not the same as 8 microns away from the mean. You have to draw a line somewhere.
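The distinction being argued here is easy to show with numbers. Below is a small Python sketch, assuming a hypothetical part with a 10.000 mm nominal dimension and the +/-0.100 mm tolerance mentioned above; the function names are made up for illustration. It also does the mm-to-inch conversion for the 0.008 mm figure.

```python
MM_PER_INCH = 25.4


def mm_to_inch(mm):
    """Convert millimetres to inches."""
    return mm / MM_PER_INCH


def amount_out_of_spec(measured, nominal, tol):
    """Return how far a measurement lies beyond the tolerance band.

    Returns 0.0 when the part is inside the band, however far it sits
    from nominal.
    """
    deviation = abs(measured - nominal)
    return max(0.0, deviation - tol)


if __name__ == "__main__":
    nominal, tol = 10.000, 0.100  # hypothetical dimension and tolerance, in mm

    # 8 microns away from nominal: comfortably inside the band, accepted.
    print(amount_out_of_spec(10.008, nominal, tol))

    # 8 microns beyond the edge of the band: rejected.
    print(round(amount_out_of_spec(10.108, nominal, tol), 6))

    # 0.008 mm expressed in inches.
    print(round(mm_to_inch(0.008), 8))
```

A part 0.008 mm from nominal passes, while a part 0.108 mm from nominal is 0.008 mm past the line and gets scrapped, which is the point about having to draw the line somewhere.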

Introducing a latent defect into a product that is bad enough to cause real problems but not bad enough to be caught in end of line testing requires a detailed knowledge of the product and the specific process step being performed. That may be theoretically possible, but is so far fetched that the risk is pretty small.

More likely is if hackers could cause a large number of industrial robots to go out of control or damage themselves, which would bring the economy to a screeching halt. Nearly all factories would shut down for at least a day, and if many of the same type of robots are damaged some would be stopped for a long time as replacements would be in short supply. That could easily trigger a recession and a huge reaction by industry and government. Of course the over-reaction might be just what we need to finally get some security in our industrial networks, and the subsequent spending might make the recession short, so maybe it is just what we need.

In other words, war is good for business.

I want to agree but then I think about the massive airbag recall & lithium battery trouble Boeing, Samsung et al have run into.

You’d need to be a savant to design flaws like the aforementioned shotgun airbags that pass muster, but given a war scenario (or even industrial espionage) there’s certainly enough funding to find the right people. Or just have the robot only follow the flawed plans enough to stay below 6 sigma or Fight Club math standards.

When they catch on to your nefarious scheme have it stall the servos or overload the control board for every ‘bot in the factory to make retooling expensive.

I really don’t see it as far-fetched, industrial espionage goes on every day and some countries are more shameless about it than others. Paying some hacker in a far-away land to knobble your competitor’s production line (and/or steal their process data) would seem well within scope.

Also, some stuff could be easily guessed – a spot-welding robot for example you could just cut the power to every 2nd weld operation. No knowledge of the part/process detail required, but you can be pretty sure quality will go down. Likewise, painting robots – just apply a little less paint, it will fail faster / rust sooner. Machining, just introduce a bit of variation into tool speed or path to throw tolerances out.

Huh.. hey bob why did we already receive this paint shipment we still have a lot of paint in storage…

not to worry bill it’s not like hackers could have adjusted the amount of paint going on cars so they will rust out prematurely and ruin our reputation thus causing our ultimate demise..,

Oh.. ha.. ha.. that’s good bob… maybe Larry picked up a few extra gallons from the box store when he was painting his house and brought us the left overs… surely that would account for our 2000 gallons we have extra…

Everything is accounted for, people… when you are manufacturing engineered parts at large scale, the volumes and amounts required are very easy to account for, and any deviation is easy to see… it’s math, people.
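The kind of bookkeeping described above can be sketched in a few lines of Python. This is only an illustration under assumed numbers: the per-unit paint figure, the 2% alarm threshold, and the function names are all hypothetical, not taken from any real plant.

```python
def consumption_deviation(units_built, spec_per_unit, actual_used):
    """Return the fractional deviation of actual material use from the expected use."""
    expected = units_built * spec_per_unit
    if expected <= 0:
        raise ValueError("expected consumption must be positive")
    return (actual_used - expected) / expected


def is_suspicious(units_built, spec_per_unit, actual_used, threshold=0.02):
    """Flag a production run whose material use drifts more than threshold from plan."""
    return abs(consumption_deviation(units_built, spec_per_unit, actual_used)) > threshold


if __name__ == "__main__":
    # Hypothetical run: 10,000 cars at 1.0 gallon each, but only 8,000 gallons
    # used, which leaves 2,000 gallons sitting in storage.
    print(consumption_deviation(10_000, 1.0, 8_000.0))
    print(is_suspicious(10_000, 1.0, 8_000.0))
```

A shortfall that size stands out immediately; an attacker would have to stay inside whatever the normal run-to-run variation is to avoid this sort of check.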

Delta critical processes have 100% NDT & first off / sampled teardown inspection, so a repetitive fault (which requires process knowledge to make happen) will be discovered. This is how you empirically find the best process parameters. If sabotage / malware then the source can be found & sacked (or shot in China).

Airgaps between eqt & www are common. Incompatible automation hardware & the airgap means PCs cannot be updated unless the hardware is also upgraded. This also goes for maint laptop virus checker upgrades.

Flashdrives are the risk factor. If they get past the virus checker it will get lively for all the wrong reasons. To me the most likely vector is a stuxnet type virus transferred from a maintenance laptop which resets the motherboard real-time axis encoder values & drains the backup lithium battery. All axis positions are lost & there are only a handful of techs in the country to re-datum the axes. Spare motherboards are likely obsolete & rarer than rocking horse poo.

This is complete horseshit. Rule 101 of industrial robots. Small, managed and very secure networks for industrial control. Then secure gateways to company LAN if absolutely necessary, even then perhaps with a firewall online, if not, definitely firewall between company LAN and WWW. 9/10 absolutely no link with the company LAN, let alone the internet. And DEFINITELY not via FTP. What a joke.

Source: 3 years working in industrial robotics designing and installing production robotics systems.

*firewall inline between industrial control network and company LAN

Agreed all that is common sense in any production system.

Most of the time there is no need for a connection to the Internet for industrial robots, CNC machines, or PLCs as you need techs to manage the systems anyway.

Not everyone actually cares as much as you do though. Some people (accountants, hassled IT interns, workers under pressure to get shit done) just see “yada yada expensive yada yada complicated yada yada time consuming”.

People WILL bypass all that stuff in the name of just getting something done. I’ve seen it (and far worse) hundreds of times at hundreds of places, 95% of which definitely knew better. Source: 8 years with a major telco, fixing the big tubes.

Not to mention the industrial safety systems used to ensure safe positioning of all joints… anyone who thinks this shit is feasible should really look into ProfiSafe and other realtime industrial safety systems employed in control systems, let alone the performance levels of safety systems used in industrial robotics.

So Trend Micro just made it up?

Look at some real life robotics systems deployed in factories and you’ll see. I’m not saying they made it up, but their lab setup is entirely unrepresentative of what is actually deployed by system integrators in real factories. I’ve designed enough systems to know that this is the case, and the ones in car factories are far better secured than those in the applications I have worked on.

So attack the safety systems.

Yeah good luck with that. Come back when you’ve actually tried to modify or attack a real industrial safety system…

The best and usual safety systems can’t be attacked without someone physically there. No computer wizardry will do anything about a dead man’s switch or a hard power cut switch attached to your sensing device.

They are probably the hardest point to attack.

I understand you have a lot of knowledge in this field but there have got to be a few companies out there doing things wrong. Trend Micro are a reputable company. Yes I agree it’s not an easy hack to pull off but I do believe with the right circumstances it could be done (just not on any system though). If something is connected it’s hackable. Firewalls, routers etc. are great but there is no bulletproof vest out there. I think anyone trying to pull this off would be a government agency or a corrupt corporation trying to stifle competition, as funding, research etc. are musts. You can’t just randomly hack a machine; you must have some sort of knowledge about its setup in the first place.

1 word: Stuxnet.

I think, the question is different. Let’s assume, the safety systems are not easy to compromise, as they are for human safety. But if you are able to just change some parameters, which don’t compromise workers safety, then the safety systems will not be triggered. But you could reduce product quality.

I don’t know if this would be easy or even possible, but I think these safety systems alone will not necessarily offer protection against such an attack.

Get some concrete evidence backed by real subject matter experts from manufacturers like KUKA, Yaskawa and ABB, or those involved in real system deployment and maybe I’ll consider this a credible threat.

I think they made some bad assumptions – specifically that an attacker would have ready access to whatever LAN the robot was on and that the company owning the robot had an IT team of orange monkeys.

Reality is – firewalling. Lots of firewalling. Plus, in many cases, these robots are used as part of an integrated system (such as a SICK safety controller and safety-rated sensors which will yank power to the robot if any sensor detects a dangerous condition) and Trend was working with a standalone robot.

Thankyou! Finally someone with some common sense and half an idea of the real technology used.

True, but it is also true, that Stuxnet worked. Tampering with manufacturing quality need not trigger the safety system, as long as no dangerous condition of the robots occurs. If it e.g. makes every second (or randomly) spot weld with only 1/2 of the power – you see a weld spot, but it does not hold – then this safety system will not trigger.

QC would likely catch any defects on precision parts like engine, drive-train, and brake components. The tolerances these parts are machined to would typically be around +/-0.005″ and probably much tighter; internal engine components would be +/-0.001″ at most. It would be unlikely that a part out of tolerance would skate by, if it’s out of tolerance it’s scrap.

The odds of a safety-critical part (brakes) getting by out of tolerance are minuscule.

If anything it would just cause the manufacturer to lose money due to a high scrap rate; they would investigate and solve the problem.

If you had a copy of the acceptance criteria I’m sure you could come up with something outside of its scope.

Probably, but the real question is this: If the feature you modify isn’t being inspected by QC or has a high tolerance, could you modify it sufficiently to induce a failure? I think it would be a crap shoot, I mean if a non-critical tolerance like say +/-0.015″ is out by an additional 0.015″ will it matter?

If for example a brake caliper casting was to be “sabotaged”, critical machined areas would be measured but non-machined areas would probably just be visually inspected (human or machine vision). So even if you sabotaged an area that would normally be “non-critical”, could you do enough damage to cause it to fail without a visual inspection detecting it?

Remember that video of an electric generator tested by being attacked remotely to see how much damage could be done?

Having worked in a company selling and supporting niche software and hardware in the food manufacturing sector, it was shocking how readily plant personnel gave remote access to the factory networks, and how little attention was paid to how this infrastructure was deployed in the first place.

We had several instances where huge network latency caused issues with SCADA and live connected hardware, traced back to employee overuse of downloads on the break room PC.

As usual, people are the weak link, especially where they’re being tasked with oversight of the hardware designed to usurp them

Having spent decades working with food/packaging/CPG, the level of technical understanding in the physical operations is astoundingly variable to say the least – there were still plants working on paper with typewriters in the early 2000s and trying to get systems integration can be frustrating at best. Security is more variable than that.

Also a lot of the software systems advertise “Access… from any browser-based device with richer functionality for an improved user experience…” in order to convince management to adopt them. You can fill in the security/access problems that are likely to follow…

A lot of IoT stuff broadcasts UPnP, asking your firewall to open some ports for it. Worst. Thing. Ever.

Now everyone finds their IoT devices sitting directly on the internet waiting for abuse.

Although perhaps outside the intent of this post, one of the more interesting attacks is to simply watch what the robots themselves are doing and how they’re going about it in order to elucidate the nature and capacity of their products. Knowing how Boeing lays out its rivet patterns or how GE mills an engine bearing race may be able to tell you a great deal about the design.

Unintentional open source hardware? :-)

Not a hack, and stop giving beer to robots!

AlexB and others (me included) obviously know how this *should* be done. However, the real world is a messy compromise and there are challenges to establishing and maintaining an effective cyber security programme in a production environment that cannot be ignored. For example…

1) Many companies do not see cyber security as a priority. My experience has been that the executive and the engineers get it, but middle management frequently see it as a cost with no tangible benefits. Where programmes do exist, they are usually underfunded and under-resourced. Richard Clarke put it like this: “If you spend more on coffee than IT security, you will be hacked. What’s more, you deserve to be hacked.” Until we see companies go under due to cyber security incidents, this will continue to be the case.

2) There is not a lot of cyber security expertise in the engineering community. Similarly, there’s not a lot of manufacturing and safety engineering in the IT community. This fuels misunderstanding and conflict, especially between information assurance practitioners and safety engineers who use similar language to mean different things. Often a security practitioner can be in the position of wanting to make a change to a production system, but is unable to gain the support of the safety engineer. In my experience of working in a conservative industry, cyber security measures are often perceived as risks to safety, yet perversely a lack of security is not seen as a safety risk as malicious acts are excluded from safety assessments. This has to change.

3) Perimeters are always being eroded for a variety of reasons. For example, it is common to have production networks attached in some way to corporate operating environments – there are business benefits to this, so it is a practice that is highly likely to continue. A more worrying trend is increasing off-site connectivity, typically for remote management of assets under maintenance contract – these connections can be by VPN, or “out of band” devices like 3G modems that may be undeclared. This is how Target got done over. Control systems may also include wireless links that extend beyond the physical site perimeter. Because these links are on the factory side of the firewall, they are often ignored, however they can still be used for mischief e.g. the Dallas EWS hack.

4) Air gaps are not a panacea. Even in networks that use air gaps, there’s usually information being transferred across the gap in both directions i.e. production reports and software updates. At best, air gaps are a barrier to productivity. At worst, they create a false sense of security that fosters the belief that no further security measures are necessary. Most of the infections I see on air gapped systems are opportunistic infections of untargeted malware that have bridged the gap on removable media. The moral of this is that systems with hard perimeters and soft insides are fragile, and that a degree of defence in depth is needed.

5) The message from the security industry about APTs is, at best, distracting businesses from spending time and money on basic security. Vast amounts of money are being spent on the latest and greatest network security appliances that add little to the overall security of the business, often at the expense of the basics. When your production system goes down at 2am, it’s much more likely to be Conficker clogging the tubes than an APT trying to undermine the world’s supply of toilet brushes. At worst, the security industry’s fetish for APTs creates a climate of fatalistic hopelessness in organisations: why bother if we’re going to get hacked anyway?

What are the answers? A lot of it comes down to being a good communicator. Unless we, as security professionals, can put the message in a way that is both understandable and relevant to our stakeholders, things won’t change. Executives care about risk and return, safety engineers care about hazards and harm, manufacturing engineers care about productivity and quality, middle management care about targets, and the security industry cares about selling you boxes.

Thanks for the write-up, Tryst. There’s a gap between the writing and the doing, and I think it’s more than just one of language. It comes down to the fact that security costs money, and sometimes a lot of it.

You write well, and I’m not in disagreement with you at all. Readers need to be told that what you’ve given is a very, very brief description of the complications within organizations and only hints at the magnitude of the technical exposures. I do wish you had gone farther, and hope you will with this prodding. It is a much larger and more deeply troubling situation than told. Even an air gap can be overcome. I’ve been a CNE since the mid 80’s, and I’d like to hear more from you.

Hackers? The scenarios in that first paragraph just sound like standard run of the mill planned obsolescence.

Heavens next???

IoT Nuclear warheads????

I thought this was called planned obsolescence?