If you have about 10 hours to kill, you can use [Edje Electronics’s] instructions to install TensorFlow on a Raspberry Pi 3. In all fairness, you’ll only have to babysit it for about an hour. The rest of the time is spent building things, and you don’t need to watch that happen. You can see a video on the steps required below.

You need the Pi with at least a 16 GB SD card and a USB drive with at least 1 GB of free space. This not only holds the software, but allows you to create a swap file so the Pi will have enough virtual memory to build everything required.

The build step not only has to create TensorFlow but also Bazel, which is Google’s Java-based build system. There are dependencies between the version of TensorFlow and the version of Bazel so you have to make sure the versions match as explained in the video.

The video is based on some older instructions on GitHub, but those instructions are not up to date and the changes required are covered in the video.

Given how long it takes to compile, we wondered if cross compiling might have been a better option. If you need models that work with this setup, you can always work in your browser.

Pro tip: on recent Ubuntu versions (16.04 and up) you can enable zram (compressed swap in RAM, or ‘compressed RAM’) with a single command, `apt-get install zram-config`. I don’t know whether that works on Debian too, but probably?

The point is that this gives your RAM-limited computers a bit more to play with when compiling big packages. It can’t work miracles though, I’ve got Ceph compiling at home on a board with 2GB of RAM but it’s demanding 6GB. That’s when USB HDDs and giant swapfiles come in.
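The arithmetic in that example (a board with 2GB of RAM, a build that wants 6GB) can be sketched in Python. This is only a rough illustration: the function names are made up, the sample text is invented to match the 2GB figure rather than copied from a real board, and it treats "GB" as GiB. The `MemTotal` and `SwapTotal` fields are the ones Linux reports in `/proc/meminfo`.

```python
GIB_KB = 1024 * 1024  # kB in one GiB


def parse_meminfo_kb(text):
    """Return {field: value_in_kB} from /proc/meminfo-style text."""
    values = {}
    for line in text.splitlines():
        name, _, rest = line.partition(":")
        parts = rest.split()
        if parts and parts[0].isdigit():
            values[name.strip()] = int(parts[0])
    return values


def extra_swap_needed_kb(meminfo_text, required_kb):
    """How much more swap (in kB) is needed to reach required_kb in total."""
    info = parse_meminfo_kb(meminfo_text)
    available = info.get("MemTotal", 0) + info.get("SwapTotal", 0)
    return max(0, required_kb - available)


# Invented sample: 2 GiB of RAM and no swap configured yet.
sample = "MemTotal:        2097152 kB\nSwapTotal:             0 kB\n"

shortfall_kb = extra_swap_needed_kb(sample, 6 * GIB_KB)
print(shortfall_kb / GIB_KB)  # 4.0
```

On a real board you would pass in the contents of `/proc/meminfo` instead of the sample string. The result is only a lower bound on the swap file size, since it ignores whatever else is running.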

Thanks for letting me know this exists. It looks like it would be really good for SSD swap as well, to slow down the failure rate.

(ref: https://en.wikipedia.org/wiki/Zram )

“A compressed swap space with zram/zswap also offers advantages for low-end hardware devices such as embedded devices and netbooks. Such devices usually use flash-based storage, which has limited lifespan due to write amplification, and also use it to provide swap space. The reduction in swap usage as a result of using zram effectively reduces the amount of wear placed on such flash-based storage, resulting in prolonging its usable life. Also, using zram results in a significantly reduced I/O for Linux systems that require swapping”

Does add extra load to the CPU, but that’s preferable to a build failing due to not enough memory.

I put ARM Raspbian in a directory on my main PC, configured binfmt to run ARM binaries under QEMU user emulation, then switched to the Raspbian install as a chroot jail. Compiling in that environment SCREAMS along, especially since I can take advantage of all my cores and RAM. Even though GCC is being emulated! (it helps that everything on the kernel side is still running native.)

CustomPiOS does this to build OctoPi images. It’s great. I’ve been experimenting with using Docker to do this too. So many possibilities.

Yup – it’s there in Debian too. I just happened to enable it last night on Debian stretch on a Pi 3 running Nextcloud.

I love these software packages that glom together layers and layers of “stuff”. Really, you have to create a Java-based build system first? Whatever happened to “make”? Oh, I forgot. This is Google we’re talking about. Good grief. And swapping to a USB thumb drive? Sigh.

Think you saw too many layers of crap? Try Yocto.

I’ve done this and it’s fine for a tutorial, but it wasn’t nearly fast enough to be useful. I’m going to try again with one of the accelerator USB sticks.

How did you check performance? Can you share a little bit of your experience?

When I saw this I thought it was a joke. I don’t think the RPi has enough computational power to do anything useful with TF on it. Hardware acceleration is more or less required (but accelerators support inference only).

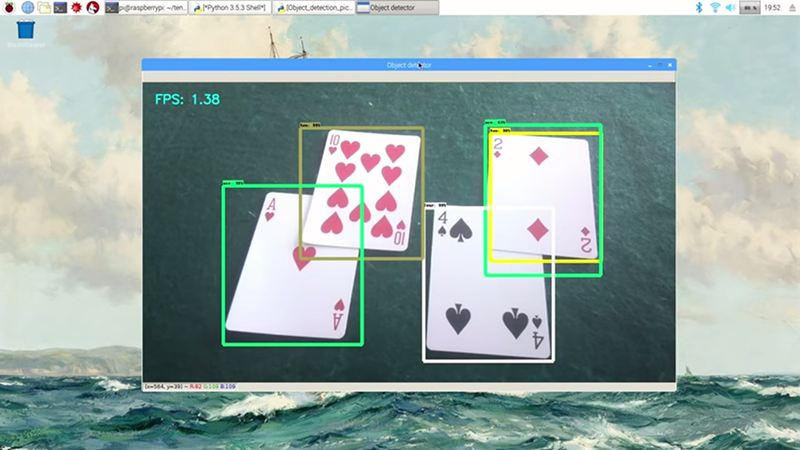

For those interested, this is part of my project to make a blackjack-playing, Raspberry Pi-powered robot that can detect cards that are dealt in front of it and make hit or stand decisions, all while counting cards and implementing card counting strategies!

https://hackaday.io/project/27639-rainman-20-blackjack-robot

What about using tensor flow lite?

See https://medium.com/@haraldfernengel/compiling-tensorflow-lite-for-a-raspberry-pi-786b1b98e646 for some more recent instructions

TensorFlow developer here… I’m working on a TensorFlow Lite runtime pi whl that will be small and easy to install (about 400k). It will also be buildable from Make. I have some examples of it in action here:

https://youtu.be/ByJnpbDd-zc?t=28m35s

We are also working on making a whl all of TensorFlow and having it built nightly so that people don’t have to build it themselves.

That’s awesome to hear! Thanks for letting us know. I’m using TensorFlow’s object detection API on the Pi, and I also had to build Protobuf from scratch because it was not easily available through apt-get or elsewhere. Is there any chance you’ll be making an easy way to get Protobuf on the Pi?

Yes, we know that is currently difficult. We’ll also be making more of this self-contained in Lite models (and also be providing a quantized model for object detection, which is faster).

I’m making a sequel to this video on how to set up the TensorFlow Object Detection API on the Pi, but based on your comments, I’m interested in using TensorFlow Lite instead. However, looking at the GitHub repository, I’m not sure how or where to get started with object detection (aka multibox_detector?) on TF Lite. Is there a good way for me to contact you about it, if you have time to answer a dumb hardware engineer’s questions?

Hi Andy,

When you mention the whl for full tensorflow, aren’t these the packages that you are talking about?

Python 2.7 – http://ci.tensorflow.org/view/Nightly/job/nightly-pi/

Python 3.4 – http://ci.tensorflow.org/view/Nightly/job/nightly-pi-python3/

The Python 2 version worked for me, but the Python 3 one didn’t: it is compiled for Python 3.4, while the Python 3 version that comes with Raspbian is 3.5. Is there any ETA for generating a 3.5 version? Having that version available would have a great impact on the Raspberry Pi community.

Thanks,

Eric
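The 3.4 versus 3.5 mismatch described above can be checked before trying to install a wheel, because the wheel file name carries a Python tag such as `cp34`. Here is a minimal Python sketch of that check. The file name is hypothetical (it is not taken from the nightly build pages), the function names are made up, and it only handles plain CPython tags of the form `cpXY`.

```python
import sys


def wheel_python_tag(filename):
    """Return the Python tag (e.g. 'cp34') from a wheel file name.

    Wheel names look like: name-version[-build]-python-abi-platform.whl
    so the Python tag is always the third field from the end.
    """
    stem = filename[:-len(".whl")] if filename.endswith(".whl") else filename
    parts = stem.split("-")
    if len(parts) < 5:
        raise ValueError("not a wheel file name: " + filename)
    return parts[-3]


def matches_interpreter(filename, version_info=sys.version_info):
    """True if the wheel's CPython tag matches the given interpreter version."""
    expected = "cp{}{}".format(version_info[0], version_info[1])
    return wheel_python_tag(filename) == expected


# Hypothetical file name, for illustration only.
wheel = "tensorflow-1.8.0-cp34-none-linux_armv7l.whl"

print(wheel_python_tag(wheel))             # cp34
print(matches_interpreter(wheel, (3, 5)))  # False
print(matches_interpreter(wheel, (3, 4)))  # True
```

Calling `matches_interpreter(wheel)` with no second argument compares against whichever Python is running the script.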

A Java build system? Whatever happened to just “make”? It seems everyone needs their own custom system to compile even the simplest or most trivial of projects. 100s of MBs of crapware.

X needs Crambulizer to compile Brooble, which is used by Drooble to make snozzler to fluffle to socksifier.

This is why VMs or containers are AWESOME. I don’t pollute my system with MBs to GBs of crapware that I might only use once, for 10 mins, and then spend days uninstalling all that crap.

Build systems should be considered malware.

This is great and all but why this approach? Just use tensorflow on your own machine to build out models and then embed those models into applications that run on the Pi. Save that time for something useful…

I don’t know how to run a TensorFlow-trained model on the Pi without having TensorFlow itself installed. Can you (or anyone else) point me to where I could learn more about how to use TensorFlow models in standalone applications on the Pi?

Google already have a RPi disk image for this, no build required.

Egad! I was feeling intimidated about asking a dumb question:

“Can’t you just download a pre-compiled version, ready to run?”

If I had to compile MS Office / Libre Office, Photoshop / Gimp, Autocad, Blender etc. from source code I doubt I’d ever use them.

My tesk is complete. What is my next tesk…

I have installed TensorFlow on a Raspberry Pi 3 using your instructions and it worked perfectly. After that I started installing a number of libraries that I needed for loading a Keras model trained on my PC (numpy, scipy, etc.), and I now see the following error when importing TensorFlow:

RuntimeError: module compiled against API version 0xc but this version of numpy is 0xa

Segmentation fault

I have updated all the necessary libraries, and importing any of them doesn’t result in an error in a Python 3 script. Also, the error is not Keras-related, because the statement `import tensorflow` raises the error no matter what follows it. Should I uninstall TensorFlow and start all over again?
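The two hexadecimal numbers in that message are version numbers for numpy's C API, and decoding them shows which side is older. The Python sketch below does only that. The function name is made up, and the conclusion it prints is an assumption to verify on your own setup, not a confirmed diagnosis.

```python
import re


def parse_api_mismatch(message):
    """Return (built_against, installed) as integers, or None if no match."""
    pattern = (r"API version (0x[0-9a-fA-F]+) "
               r"but this version of numpy is (0x[0-9a-fA-F]+)")
    match = re.search(pattern, message)
    if match is None:
        return None
    return int(match.group(1), 16), int(match.group(2), 16)


message = ("module compiled against API version 0xc "
           "but this version of numpy is 0xa")

built_against, installed = parse_api_mismatch(message)
print(built_against, installed)  # 12 10

if built_against > installed:
    # Assumption: the compiled module expects a newer numpy than the one
    # Python is actually importing.
    print("installed numpy looks older than the one the module was built against")
```

If the installed numpy really is the older side, upgrading numpy and retrying the import is a cheaper first step than rebuilding TensorFlow. It is also worth checking that the numpy you upgraded is the same copy your Python 3 interpreter loads.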