It’s a concern for Europeans as it is for people elsewhere in the world: there have been suggestions among governments to either outlaw, curtail, or backdoor strong end-to-end encryption. There are many arguments against ruining encryption, but the strongest among them is that encryption can be simple enough to implement that a high-school student can understand its operation, and almost any coder can write something that does it in some form, so to ban it will have no effect on restricting its use among anyone who wants it badly enough to put in the effort to roll their own.

With that in mind, we’re going to have a look at the most basic ciphers, the kind you could put together yourself on paper if you need to.

There have no doubt been cryptologists and codebreakers at work as long as there have been humans capable of repeating messages, and the strong public-key cyphers we use today were created by mathematicians who stood on the shoulders of those before them in an unbroken line that goes back thousands of years. It’s the public-key ciphers that are in the sights of the lawmakers, but perhaps surprisingly they are not the only strong encryption scheme that remains functionally unbreakable. A much older and simpler cypher also holds that property, and it’s this that we’re presenting as the paper-based answer to strong encryption legislation. The so-called one-time pad was a staple in tales of Cold War espionage for exactly the properties we’re looking for.

To explain a one-time pad it’s necessary to first travel back to ancient Rome, for the simple alphabetic substitution cypher. In its most basic form an alphabet from A to Z is either shifted or randomised, and the resulting list of letters is used to encrypt the message into the cyphertext by direct substitution. The cyphertext is encrypted, but its flaw comes in that it preserves the frequency distribution of the letters in the message text. Frequency analysis was a technique developed and refined by the mathematicians of the Islamic caliphates, in which the frequency of individual letters in a cyphertext could be compared with that of letters in the language as a whole. By this technique a codebreaker could identify enough of the letters in the message to reconstruct it by guessing those which remained.
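As a rough illustration of why the simple substitution cypher leaks, here is a minimal Python sketch of the shifted-alphabet version. The function names and the sample message are made up for this example. It shows that the letter counts of the cyphertext are exactly those of the message, merely attached to different letters, which is what frequency analysis exploits.

```python
from collections import Counter
import string

ALPHABET = string.ascii_uppercase

def shift_encrypt(plaintext: str, shift: int) -> str:
    """Replace each letter with the one `shift` places further along the alphabet."""
    out = []
    for ch in plaintext.upper():
        if ch in ALPHABET:
            out.append(ALPHABET[(ALPHABET.index(ch) + shift) % 26])
        else:
            out.append(ch)  # spaces and punctuation pass through unchanged
    return "".join(out)

def letter_counts(text: str) -> list[int]:
    """Return the letter counts of `text`, most common first, ignoring which letter is which."""
    counts = Counter(ch for ch in text if ch in ALPHABET)
    return sorted(counts.values(), reverse=True)

message = "MEET ME AT THE USUAL PLACE AT TEN"
ciphertext = shift_encrypt(message, 3)
print(ciphertext)  # PHHW PH DW WKH XVXDO SODFH DW WHQ
# The shape of the frequency distribution survives encryption untouched.
print(letter_counts(message) == letter_counts(ciphertext))  # True
```

A randomised alphabet instead of a shifted one would give a different cyphertext, but the same comparison would still print `True`.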

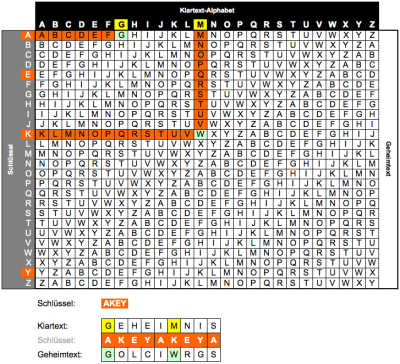

Addressing this flaw in the substitution cypher leads us to 16th century Italy and the polyalphabetic cypher, which instead of using a single substitution alphabet uses a number of them, switching from one to the next in sequence. The Vigenère cipher uses a table of alphabets each shifted by one letter with respect to the previous one, and switches from one to the next with each successive letter. By the use of a keyword to determine the sequence of which shifted alphabets in the table would be used for the substitution it created a cypher which was considered unbreakable until the 19th century, when mathematicians including Charles Babbage succeeded in breaking it by spotting the repeating patterns of its keyword.

So the Vigenère cipher is compromised, but its weakness lies not with its method but in the use of a repeating keyword to implement it. If a short keyword is used, such as “hackaday”, then it becomes in effect a series of eight sequentially repeating substitution cyphers; alphabet shifts h, a, c, k, a, d, a, and y in order over and over again, and a more complex but still achievable set of calculations will reveal its secret. These calculations become more complex as the length of the keyword increases, to the point at which it is the same length as the cyphertext and the possibility for spotting its repeats no longer exists.
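The keyword mechanism is easier to see in code than in a table. The sketch below is one possible way to write it, assuming the usual convention that keyword letter A means a shift of zero, B a shift of one, and so on. The function name is our own, and the keyword is assumed to contain letters only.

```python
import string

ALPHABET = string.ascii_uppercase

def vigenere(text: str, keyword: str, decrypt: bool = False) -> str:
    """Shift each letter of `text` by the matching keyword letter (A=0, B=1, ...)."""
    out = []
    key_pos = 0  # only advances on letters, so spaces do not consume key
    for ch in text.upper():
        if ch not in ALPHABET:
            out.append(ch)
            continue
        shift = ALPHABET.index(keyword[key_pos % len(keyword)].upper())
        if decrypt:
            shift = -shift
        out.append(ALPHABET[(ALPHABET.index(ch) + shift) % 26])
        key_pos += 1
    return "".join(out)

secret = vigenere("THE STABLE DOOR IS OPEN", "HACKADAY")
print(secret)
print(vigenere(secret, "HACKADAY", decrypt=True))
```

The `key_pos % len(keyword)` expression is the weakness described above in a single line: with an eight-letter keyword, every eighth letter is encrypted with the same shifted alphabet.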

A One-Time Pad

A Vigenère cypher whose key is the same length as its text has no repeating keyword for a codebreaker to spot, so the attack that broke the short-keyword version no longer works. But if the key itself contains a recognisable pattern, such as a passage from a book or even a pseudo-random sequence, there is still a chance that it can be compromised, particularly if it is re-used. Hence we come to the idea of the one-time pad.

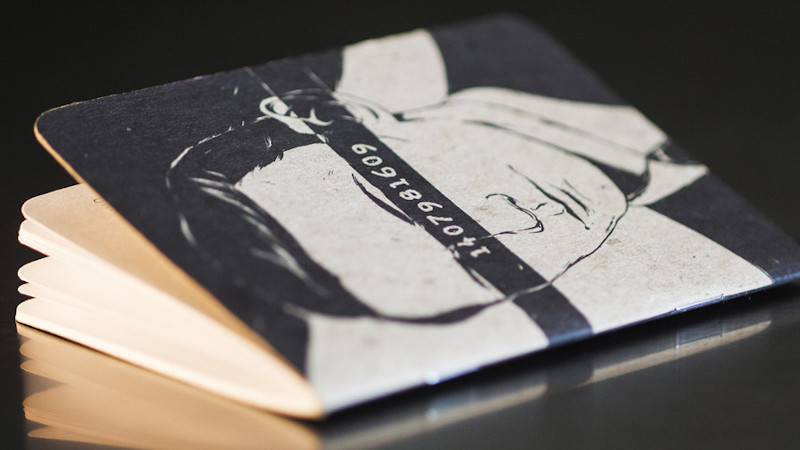

If every message uses a fresh encryption key composed of random characters that is the same length as its text then it contains no clues to help a code-breaker, because the entropy of the cyphertext is the same as that of the random key. Those cold-war spies achieved this in a low-tech manner using a pad whose pages contained random key text and from which each page could be torn and discarded once it had been used, hence the term “one-time pad”.
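For anyone who would rather see it than tear pages from a notebook, here is a minimal sketch of a letter-based one-time pad in Python. It assumes messages made only of the letters A to Z (spaces stripped beforehand), uses the standard library's `secrets` module as the source of random key letters, and the function names are our own invention.

```python
import secrets
import string

ALPHABET = string.ascii_uppercase

def make_pad(length: int) -> str:
    """Generate `length` random key letters from the operating system's secure RNG."""
    return "".join(secrets.choice(ALPHABET) for _ in range(length))

def otp_encrypt(plaintext: str, pad: str) -> str:
    """Add each key letter to the matching message letter, modulo 26."""
    if len(pad) < len(plaintext):
        raise ValueError("the pad must be at least as long as the message")
    return "".join(
        ALPHABET[(ALPHABET.index(p) + ALPHABET.index(k)) % 26]
        for p, k in zip(plaintext, pad)
    )

def otp_decrypt(ciphertext: str, pad: str) -> str:
    """Subtract each key letter from the matching cyphertext letter, modulo 26."""
    if len(pad) < len(ciphertext):
        raise ValueError("the pad must be at least as long as the message")
    return "".join(
        ALPHABET[(ALPHABET.index(c) - ALPHABET.index(k)) % 26]
        for c, k in zip(ciphertext, pad)
    )

message = "ATTACKATDAWN"
pad = make_pad(len(message))      # use once, then destroy
ciphertext = otp_encrypt(message, pad)
print(pad, ciphertext, otp_decrypt(ciphertext, pad))
```

This is the same arithmetic as the Vigenère square. The only differences are where the key comes from and the discipline of never using it twice.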

One-time pad encryption then. It’s unwieldy because both parties have to have a copy of the same pad, and even though it’s simple enough to do with a paper and pencil (or even a set of XOR gates) it’s probably not a sensible alternative to more modern forms of encryption. But it is genuinely strong end-to-end encryption, which would make it subject to any attempts to curtail encryption. So the point of this article hasn’t been to persuade you all to switch to using a Vigenère square and a notebook full of random digits; instead it’s been to write down just how simple secure end-to-end encryption can be. If a child can do it with a pen and paper then the most novice of coders can implement it in a script with nothing more than a text editor, so any proposal to censor it seems like closing the stable door after the horse has bolted.

Great, instructions so that you too can be a Zodiac Killer. ;)

If such a person did away with all the annoying astrologers such as Russell Grant, I would not object! ;^)

One-time pad is also future-proof, in the sense that no quantum computer makes it any easier to break.

It’s still vulnerable to rubber hose cryptography.

Not if you have a fake tooth ampule.

Or Clarissa Mao’s endocrine implant.

Or Alzheimer’s.

There’s an answer to that: https://youtu.be/QRa_zzQOEe8?t=571

Retain your message with a custom convoluted key, to alter the cypher text meaning after the fact. The message becomes “deniable” when the provenance of the OTP cannot be proven.

The biggest problem with a one time pad is that the key must be as long as the message being sent in order to be secure. Also, the “one-time” aspect makes it unwieldy for high speed communications. Perfect security for short, infrequent messages though. About the only way to compromise it is loss of the secret key.

Or loss of synchronization. Suppose you inject a false message that consumes one pad. Now you have no way of knowing whether the message you received got garbled along the way, or whether your decryption key is offset by an unknown amount.

That is why often in secure communication there’s a plain or common easy encryption giving that sync info, for instance the ‘file created/modified’ time in its metadata can be used, and even if you give out the information for how to interpret that time they still need the pad to make any use of it.

What you are describing is an implementation administration/training issue. Others have suggested methods to overcome this, the most common in practice being key expansion. If you are aware of AES counter mode, in the simplest implementation one could essentially use your OTP as the key for AES (128/256-bits), and then “encrypt” the 128-bit counter “plain text”, incrementing it for each “block”. A 128-bit counter allows 2^128 blocks of 128 bits each, which gives a key-stream of about 4.36e+40 bits from a single key… One page from any agreed upon book could in theory hold enough OTP data to expand out to decades of continuous data transmission, if the expansion method was designed to do so.

For synchronization issues, there is one constant that happens at a steady pace for all people on Earth… time. If one receives two messages in the same time frame, that limits the OTP key search space from the pad, one will decrypt another will not. This also gets around the Endor problem: “It’s an older code, but it checks out… I was about to clear them…” i.e. deterministic OTP key life time. This also increases the difficulty in transmitting a message that will be properly decrypted, as time is a factor.

But this can be improved with: message authentication/attribution. The hazard here is that one would be intentionally adding information that is identifiable to the message – this can make deniability harder, as someone who had compromised the authentication scheme could validate the OTP seed for decryption, and then the rubber hoses come back out when they find out you lied.

An important thing to understand is having a huge, loud, continuous payload of binary or otherwise obvious encrypted cyphertext may be a problem in itself, certainly in various countries. The practice and art of steganography is equally important, as is brevity. Especially when lives are held in the balance, transport of an encrypted message in a way to avoid detection, is just as important in reducing the attack surface as giving as little cypher text to cryptanalyze out in the open.

For a goof, more than a proof of concept, I once “transmitted” a cypher text by setting 4x power windows on a 4-door vehicle to one of 4x states (for example: open = 3, ~2/3 = 2, ~1/3 = 1, closed = 0), on an outbound and return trip once a day to a coffee shop. It’s 32-bits of data per round trip, that never needed direct contact with the receiver (CCTV cameras along the route remotely accessed for example could “read” the payload). In a work-week it was enough time to pass-along a 128-bit message for the meet time that Saturday… Of course I would never use this method again now that I’ve posted it – loose lips sink ships.

But along the line of brevity and a recognizable example “Execute Order 66” – was a rather mundane message with huge implications and meaning (kill all Jedi, your life allegiance to the Emperor, and all orders come from him now), but it could be distilled down to putting on a baseball cap, or turning it around. It could even show how messages could be inadvertently transmitted by unwitting parties – someone who received the message but didn’t understand it might verbalize it to a room of Stormtroopers who were without communicators, who now had received the message.

For example – what if Joe Biden tripping on the steps of Air Force One was a signal (not suggesting it was but bear with me)? What if tripping once was one signal, and twice was another? The international news broadcast it far and wide, and it was picked up by the meme community who spread it even farther – you’d essentially have to live under a rock not to know that it happened and how many times he tripped… how’s that grab you for message transmission robustness? And unless you were waiting for an old guy to fall over in what everyone would assume is an embarrassing gaffe, no one would see meaning there. Biases are a huge help for steganography BTW.

There is essentially no way to stop data exfiltration/transfer when the parties are genuinely committed to the effort, everything is on the table as a transportation medium if you’re okay with it being a lower data-rate! ;-)

It’s also nearly perfect even if you reuse the key a few times, and gets better if the key is reused in a secret pattern “x” (forwards, backwards, skip to middle and backwards, forwards from the nth z, read vertically for instance).

As long as the key isn’t stupidly stupidly short that is going to be nearly as unbreakable, unless the attacker also knows some of the secrets, to better guess which letters correspond.

Just using a tiny 100 symbol key, and repeating it forwards every time it ends would still mean you need a message something like 50x that length in properly spelled normal language to really get any hope at frequency analysis, and realistically to make it crackable in a reasonable time probably more like 5000x (assuming they don’t actually know the key’s length as that will help enormously – as it means they will know in the first example 50 characters that have been encoded for each one in the key, a big enough sample to make some educated guesses from the statistical analysis and cut the cracking time massively).

So it can be used for high speed reasonably well without needing a stupendously massive key, just be aware that key reuse does reduce the security factor. And if you ever fall into a pattern, transmitting predictable data those chunks of your key used are almost given away, and as you will reuse it later so will be parts of future messages.

I still have my one time pad from the party!

Me too!

ObXKCD: https://xkcd.com/538/

Thanks for the chuckle.

Considering we’re all geeks here, I’m sure we could throw together some encryption based upon a 555 timer. :-D

ROT555? Going into Unicode above 127? :)

Let’s hear it for ROT-13, the training wheels of encryption that is only slightly more secure than pig latin but still felt like a fantastic discovery to me when I was like 9 :D

Easy to crack though. You should upgrade to 2 rounds of ROT-13 for extra security.

Four is better.

That helps significantly with one major problem of ROT13. Every character in a message can be separately decrypted, so cracking can be done in parallel. Large core-count processors make that easy. With two rounds each character takes two sequential steps to decrypt, so that’s a good defense. I’m thinking that with the recent doubling of cores in desktop Ryzen processors that I need to double the complexity of the encryption, so I’m thinking ROT52. Maybe I’ll future-proof with ROT104.

It’s the decoder ring from “A Christmas Story”.

The only time I saw it used was for spoilers, and it’s been a long time since that happened. It was a masking so you could avoid it, rather than keep something secret.

Before I had a utility, I just wrote down 13 letters, and the next 13 of the alphabet below. Easy.
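The two-row table described above translates almost directly into Python. This is a small sketch using only the standard library, and the variable names are ours. Each letter in the top row swaps with the one beneath it, so applying the function twice returns the original text.

```python
import string

top = string.ascii_lowercase[:13]      # a..m
bottom = string.ascii_lowercase[13:]   # n..z

# Each letter maps to the one written above or below it in the two-row table.
table = str.maketrans(
    top + bottom + top.upper() + bottom.upper(),
    bottom + top + bottom.upper() + top.upper(),
)

def rot13(text: str) -> str:
    """Swap every letter with its partner in the other row; leave everything else alone."""
    return text.translate(table)

print(rot13("Hello"))          # Uryyb
print(rot13(rot13("Hello")))   # Hello
```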

Also, there’s the Solitaire Cypher from Cryptonomicon. Bruce Schneier developed it for the book. It’s not perfectly secure, but it’s a solid cypher for being a hand-cypher: https://en.wikipedia.org/wiki/Solitaire_(cipher)

I tried this over and over and couldn’t get it to work. Obviously I messed it up or misunderstood the instructions from the book. Will maybe give it 1 or 2 attempts based on the wikipedia article and try again.

Cryptonomicon was my favorite Stephenson book, until Seveneves came out.

One of my favorite books as well. According to the Wikipedia article linked above, there is a flaw that causes it to not always be reversible. (See the Cryptanalysis paragraph at the end.)

Go to the horse’s mouth: https://www.schneier.com/academic/solitaire/

Thank you for that link. Very cool insight into what goes into developing a system like this.

I do not think I will ever be able to successfully implement this, especially considering “this system has the unfortunate feature of never recovering from a mistake”

Coupled with “takes an evening to do” or undo, then later on recommends “go through the encryption process twice to make sure they agree” it seems unbearable.

I’m interested if anyone here has successfully encoded and decoded a message using this. Although admittedly last time I asked a similar question about something else in this forum, I got blasted for my ignorance.

Bonus points for the included reference to “A Canticle for Leibowitz”.

Loved the book. Read it at least twice. But I don’t get it. What’s the reference?

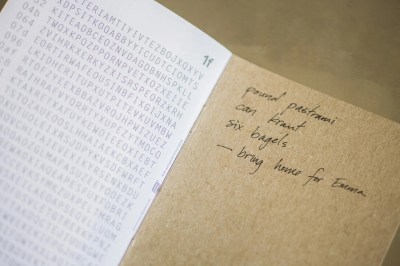

Look at the photo above the caption “Who remembers the Hackaday one-time pad?” Does the shopping list on the inside back cover ring any bells?

The photo of the notebook, it has a shopping list starting with pastrami.

There’s a list in the book that includes pastrami, and the meaning has been lost over the centuries. So it’s seen as important, when it’s trivial and shouldn’t have been saved.

I didn’t see this immediately, so I went back to reread the post. I thought maybe the text had a hidden code, until I looked at the photo, and remembered a list in the book

Hmmph. That’s what I get for just reading it for the articles.

Thanks guys.

A large microSD card would provide a lifetime supply of random digits, even for a very verbose correspondent and be easy to conceal. However, if the *use* of encryption is illegal then de facto only criminals will use it. Just as if guns are illegal only criminals will have guns.

Yup. Rather than draw unwanted attention, the encrypted message should be transmitted using some sort of steganography so it looks innocent. Anyone have any thoughts about what that would look like these days? Twiddling least significant bits in image data probably won’t suffice any more.

Hide in a digital billboard.

I suspect such bit twiddling can be safe as long as it is your image. If the image already exists and an original can be found the comparison of their hashes reveals a difference and someone might look for twiddled bits. If the image was created by you and an untwiddled copy is never shared then I don’t see how anyone could ever know that bits had been twiddled.

The twiddling could in theory be detected by looking for patterns in the LSBs. To properly hide the message, you’d need to encrypt it in a way that the output looks random.
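For readers who have not met LSB twiddling before, here is a minimal sketch of the idea under some simplifying assumptions. The cover is treated as a plain sequence of sample bytes (for example raw pixel values, not a compressed file), the payload is assumed to be already encrypted so that its bits look random, and the function names are hypothetical.

```python
def embed_lsb(cover: bytes, payload: bytes) -> bytes:
    """Hide `payload` in the least significant bit of successive cover bytes."""
    bits = [(byte >> i) & 1 for byte in payload for i in range(7, -1, -1)]
    if len(bits) > len(cover):
        raise ValueError("cover is too small for this payload")
    stego = bytearray(cover)
    for pos, bit in enumerate(bits):
        stego[pos] = (stego[pos] & 0xFE) | bit  # clear the LSB, then set it to the payload bit
    return bytes(stego)

def extract_lsb(stego: bytes, n_bytes: int) -> bytes:
    """Rebuild `n_bytes` of payload from the least significant bits of `stego`."""
    out = bytearray()
    for i in range(n_bytes):
        value = 0
        for sample in stego[i * 8:(i + 1) * 8]:
            value = (value << 1) | (sample & 1)
        out.append(value)
    return bytes(out)

cover = bytes(range(256))            # stand-in for real image samples
stego = embed_lsb(cover, b"hi")
print(extract_lsb(stego, 2))         # b'hi'
```

Each cover byte changes by at most one, which is why the result looks unchanged to the eye. The statistical detection mentioned in this thread works on the pattern of those low bits, not on visible differences.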

If your ciphertext *doesn’t* look random, You’re Doin’ It Wrong.

You can still see it by analyzing statistical patterns. Of course, when you get to the point of SD cards with steganography, you can probably afford to use real encryption.

For UK residents – good programme on BBC4 iPlayer about Bill Tutte cracking Tunny – the Lorenz cypher.

First cracked by hand at Bletchley Park, starting in 1941, and later with the help of the Colossus designed by Tommy Flowers, which went into service there in 1944.

https://www.bbc.co.uk/iplayer/episode/b016ltm0/timewatch-codebreakers-bletchley-parks-lost-heroes

Just one problem with this isn’t there, sharing the one time pad beforehand. There’s a reason that asymmetric encryption is popular. Symmetric encryption is only useful if the participants in a conversation can meet in meatspace before beginning communication, not much good for participants continents apart or who don’t know each other yet, and you have to rely on both parties keeping a large amount of key material and keeping it highly private. If there’s any chance you might ever need to transfer gigabyte files this way you need to pre-share gigabytes of key material (yes that can be a USB stick, but you’ll have to meet again once you’ve had a USB’s worth of chat).

If you need to share gigs, you can use a symmetric encryption like AES and share the key via the OTP.

Or share the URL to a new one-time-pad, or pick a multi-gig public download to use as a OTP.

Even better. Distribute a shuffling program that uses a large file as a source then shuffles and balances the values in a deterministic way. Users download the agreed-upon material and shuffle it with the secret program. Instant one time pad for both.

That’s just regular encryption with more steps.

How would you attack it ?

yep that’s the problem – and the article should have mentioned it. With a one time pad you need another secure channel to share it, and when it runs out you need to do it again…

Yes, if it’s just text you are emailing, and to just one of your friends, you can swap a usb key full of a one time pad.. But if you are talking to multiple people, or doing larger amounts of data, nope.

Of course, you could use a symmetric algorithm with parts of the one time pad (move along each time) as key, and that gets around the data size problem. However, that is not too different from the current public/private progs..

The big problem with all this is if it is made illegal that stops a lot of people (minus us tech savvy people) not using the same program etc. That’s a problem.

But yes, banning it will be about as effective as legislating to stop mp3 downloads, or copying dvds. Not going to work against exactly the people they – sort of – want it to work against..

The cipher only needs to be as long as the encoded text if you’re trying to beat decryption through frequency analysis. Letter frequency really only occurs in natural languages. I don’t think the data in files occur at any really noticeable frequency unless you already know what you’re looking for. A simple substitution cypher will likely be sufficient for any large data file, no? Clearly you’d need to substitute the ASCII, HEX, or some other encoding characters and not the base binary data. Seeing a bunch of As and Bs instead of 1s and 0s would be pretty obvious, but seeing one ASCII character instead of another might not be so obvious.

Am I aiming too low here?

“Just one problem”

Well two actually, but it applies to all types of encryption.

If you have sent encrypted traffic, I may or may not be able to read what you sent. But I can produce a key that will decrypt it to whatever nefarious thing I want.

Maybe the jury won’t fall for it…
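For a one-time pad at least, the "produce a key that decrypts to anything" trick is a one-liner. Because the cyphertext is just message XOR key, anyone holding the cyphertext can XOR it with a message of their choosing to manufacture a matching "key". The sketch below uses made-up messages of equal length and a hypothetical `xor_bytes` helper. It does not apply to ciphers with short fixed-size keys in the same way.

```python
import secrets

def xor_bytes(a: bytes, b: bytes) -> bytes:
    """XOR two equal-length byte strings together."""
    if len(a) != len(b):
        raise ValueError("inputs must be the same length")
    return bytes(x ^ y for x, y in zip(a, b))

real_message = b"MEET AT NOON"
real_key = secrets.token_bytes(len(real_message))
ciphertext = xor_bytes(real_message, real_key)

# Someone who only has the cyphertext picks the text they want it to "say"...
decoy_message = b"BUY MORE TEA"
# ...and derives the key that makes it so.
fake_key = xor_bytes(ciphertext, decoy_message)

print(xor_bytes(ciphertext, real_key))   # b'MEET AT NOON'
print(xor_bytes(ciphertext, fake_key))   # b'BUY MORE TEA'
```

Both keys are equally random-looking, which is the same property that makes the deniability idea earlier in the thread work.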

Also, the solitaire/pontifex cipher, which can be done with playing cards: https://en.m.wikipedia.org/wiki/Solitaire_(cipher)

Silly Rabbit, encryption is for kids! https://www.pri.org/stories/2017-01-17/barbie-typewriter-toys-had-secret-ability-encrypt-messages-they-didnt-think-girls

For anybody interested in both programming and encryption:

https://cryptopals.com/

As a junior (and absolutely untalented, and quite ignorant) embedded software engineer, even the starting problems look insane to me, fun!

zvbxrpl

^ this

If the subject matter was copyright material, two people could publish the two files. Both could claim that their file was the encryption key, not the original material and no-one could prove which was which.

I was at a talk about the Threema messaging system, and the presenter quoted his professor as saying “Cryptography reduces the problem of secrecy to the problem of key distribution.”

This is very deep, and I wish I could attribute it better.

The problem with OTPs is not just delivering gigs of secret material to one friend, it’s delivering _different_ gigs of material to everyone you’re going to want to communicate with _in the future_.

More sophisticated encryption methods reduce the amount of key material, asymmetric encryption partly handles the “in the future” bit, etc. And that’s where the tradeoffs lie. But thinking about the on-the-surface less glamorous problem of getting the secrets to everyone is where the action is at.

https://www.technologyreview.com/2018/01/30/3454/chinese-satellite-uses-quantum-cryptography-for-secure-video-conference-between-continents/

“This problem is neatly solved by sending the key using quantum particles such as photons, since it is always possible to tell whether a quantum particle has been previously observed. If it has, the key is abandoned and another sent until both parties are sure they are in possession of an unobserved one-time pad.

That’s quantum key distribution—the crucial process at the heart of quantum cryptography. After both parties have the key—the one-time pad—they can communicate over ordinary classic channels with perfect security.”

That’s a very interesting paper that the article links to. It has a lot more information on the process of observing and the effects of that observation that are the telltale signs they look for. While we’re currently not really even trying to break this type of encryption without being observed, I believe it will eventually happen. Once you can remove the observer from the immediate vicinity of the particles being observed, we may no longer be interfering with the interference patterns.

The act of observing quantum particles is currently a “hands on” approach. We get all up in their junk and make sure they bump into our tools so we can measure that effect. Once we start looking from a distance, whatever that ends up looking like, I think the impact may be harder to notice if not impossible. That sounds like a very exciting field to be working in.

I wonder if this could be solved by using pseudo random number generators and seeds. The same seeds will produce the same OTPs, in fact like they do for those One Time Password tokens. Each message advances the seed, so the pads are always unique. If sender and receiver are out of sync, the receiver can try a couple of next sequences of the seed (same like One Time Password tokens).
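A rough sketch of that seed-and-counter idea, using only Python's standard library, might look like the following. Everything here is an assumption made for illustration: the pad bytes come from HMAC-SHA256 over a message counter, the `looks_valid` check is a hypothetical stand-in for whatever lets the receiver recognise a correct decryption, and the resync window of three is arbitrary. Note that this is no longer a true one-time pad. Its security rests on the shared seed and the hash function, not on a pad of truly random digits.

```python
import hashlib
import hmac

def pad_for_message(seed: bytes, counter: int, length: int) -> bytes:
    """Derive `length` pad bytes for message number `counter` from a shared seed."""
    out = bytearray()
    block = 0
    while len(out) < length:
        data = counter.to_bytes(8, "big") + block.to_bytes(4, "big")
        out += hmac.new(seed, data, hashlib.sha256).digest()
        block += 1
    return bytes(out[:length])

def xor_bytes(a: bytes, b: bytes) -> bytes:
    return bytes(x ^ y for x, y in zip(a, b))

def encrypt(seed: bytes, counter: int, plaintext: bytes) -> bytes:
    return xor_bytes(plaintext, pad_for_message(seed, counter, len(plaintext)))

def try_decrypt(seed, expected_counter, ciphertext, looks_valid, window=3):
    """Try the expected counter and the next few, as one-time password tokens do."""
    for counter in range(expected_counter, expected_counter + window + 1):
        pad = pad_for_message(seed, counter, len(ciphertext))
        candidate = xor_bytes(ciphertext, pad)
        if looks_valid(candidate):
            return counter, candidate
    return None  # too far out of sync, or not meant for us

seed = b"shared secret"
ciphertext = encrypt(seed, 5, b"MSG:hello")
# The receiver thinks the next message is number 3, but still recovers number 5.
print(try_decrypt(seed, 3, ciphertext, lambda m: m.startswith(b"MSG:")))
```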

The guy who did the Threema talk must have referenced Auguste Kerckhoffs’s principle (https://en.wikipedia.org/wiki/Kerckhoffs%27s_principle)? Is the talk available online somewhere?

Hmmm… actually now that you mention it, probably. Hold on…

https://media.ccc.de/v/33c3-8062-a_look_into_the_mobile_messaging_black_box

That was easy. It’s a good talk about messaging security in general, if memory serves.

“Cryptography reduces the problem of secrecy to the problem of key distribution.”

Unfortunately most mathematically clever schemes fall to one of several weak points: Corrupt/disgruntled/crusading personnel, capture/rubber hose decryption or, worst of all, the same thing that causes so many other problems – unauthorized/redundant resources in the bureaucratic mire that wander off.

“Hey, Vinnie, do ya have an extra copy of my OTP? Mine went through the wash and the Station Chief is gonna be pissed!”

“Sure, Dave…I keep a coupla extras rightchear. Hm….funny, I thought there were three extras and I only see two….guess I’ll have to run off a couple more….”

“Bah! Just re-use the old one. It’s just as random.”

Book ciphers don’t require “meeting in meatspace”. In fact one-time pads don’t either. Some folks are less clever than they think they are.

If encryption is outlawed, then only outlaws fqknb zlrmp rlwyv qcikm

Anyone else think those notes are in a hefty fine handwriting? My own is quite shabby, so I’m always in awe when I see words written by people with good handwriting…

oooo i still have that paper hackaday otp somewhere

An article about almost antique methods of cryptography is very significant for the quality of scientific work in today’s time. Unfortunately, mass instead of class overrides the real value of concrete scientific work. In any case, it is harmful to the sciences if the number of publications is used as a pure evaluation criterion for their own relevance.

For your own picture I recommend the search for the core competencies of the author.

Yeah, yeah. The point Jenny really wanted to make was about the encryption genie being _entirely_ out of the bottle. And that I think came through, no?

If you’re ahead of her on cryptography, well, good for you! :)

Why are encryption schemes character-based? Serialize the whole message, then scramble the order of the bits. Decoding then requires knowing the scrambling order. Embellish the technique with random number padding and pre-encoding the message with a conventional technique.
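What is being described is a bit-level transposition cipher, and it can be sketched in a few lines. The version below is only an illustration under loud assumptions. The "scrambling order" comes from Python's `random.Random`, which is deterministic for a given seed but not cryptographically secure, and all the names are invented for this example.

```python
import random

def to_bits(data: bytes) -> list[int]:
    return [(byte >> i) & 1 for byte in data for i in range(7, -1, -1)]

def from_bits(bits: list[int]) -> bytes:
    out = bytearray()
    for i in range(0, len(bits), 8):
        value = 0
        for bit in bits[i:i + 8]:
            value = (value << 1) | bit
        out.append(value)
    return bytes(out)

def bit_order(n_bits: int, seed: int) -> list[int]:
    """The secret scrambling order: a seed-determined permutation of bit positions."""
    order = list(range(n_bits))
    random.Random(seed).shuffle(order)  # NOT a secure RNG; illustration only
    return order

def scramble(data: bytes, seed: int) -> bytes:
    bits = to_bits(data)
    order = bit_order(len(bits), seed)
    return from_bits([bits[src] for src in order])

def unscramble(data: bytes, seed: int) -> bytes:
    bits = to_bits(data)
    order = bit_order(len(bits), seed)
    original = [0] * len(bits)
    for dst, src in enumerate(order):
        original[src] = bits[dst]  # put each bit back where it came from
    return from_bits(original)

scrambled = scramble(b"attack at dawn", seed=1234)
print(unscramble(scrambled, seed=1234))  # b'attack at dawn'
```

One thing the sketch makes visible is that a pure shuffle never changes how many 1 bits the message contains, so it leaks information unless combined with something else, such as the padding and pre-encoding suggested above.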

You left out the Playfair cypher. Easy to do by hand, very hard to break unless you have a large corpus of text to work with.

Since people mentioned rot13, here is a fun way to use rot13 to create fake OTP messages:

https://github.com/sac001/fakeOTP