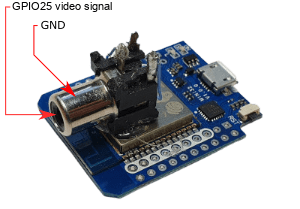

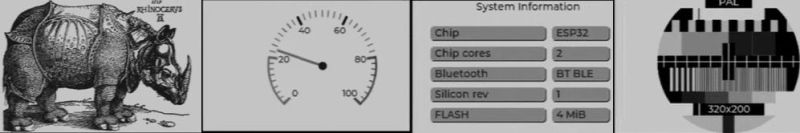

Just because a microcontroller doesn’t have a dedicated video peripheral doesn’t mean it cannot output a video signal. This is demonstrated once again, this time on the ESP32 by [aquaticus] with a library that generates PAL/SECAM and NTSC composite signals. As a finishing touch on the hardware side, [aquaticus] added an RCA jack as an optional extra. The composite signal itself is generated on GPIO 25, with a wide selection of PAL and NTSC resolutions to choose from.
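To make the idea of generating video on a DAC pin concrete, here is a minimal sketch of one monochrome PAL scanline as a list of 8-bit DAC codes. The timing and voltage figures are the nominal PAL values, not numbers taken from the library, and the function names, the 3.3 V full-scale DAC and the sample rate are assumptions for illustration only.

```python
# Nominal PAL line timing in microseconds (standard figures, not read from the library).
LINE_US = 64.0
HSYNC_US = 4.7
BACK_PORCH_US = 5.7
FRONT_PORCH_US = 1.65

# Nominal levels in volts for a 1 V peak-to-peak video signal.
SYNC_V, BLANK_V, WHITE_V = 0.0, 0.3, 1.0

DAC_FULL_SCALE_V = 3.3  # assumption: 8-bit DAC spanning 0..3.3 V


def volts_to_dac(volts):
    """Map a voltage to an 8-bit DAC code, clamped to 0..255."""
    code = round(volts / DAC_FULL_SCALE_V * 255)
    return max(0, min(255, code))


def build_scanline(luma, sample_rate_mhz):
    """Return DAC codes for one line: hsync, back porch, active video, front porch.

    luma holds brightness values in 0.0..1.0 and is stretched over the active part.
    """
    def samples(duration_us):
        return round(duration_us * sample_rate_mhz)

    total = samples(LINE_US)
    n_sync = samples(HSYNC_US)
    n_back = samples(BACK_PORCH_US)
    n_front = samples(FRONT_PORCH_US)
    n_active = total - n_sync - n_back - n_front

    line = [volts_to_dac(SYNC_V)] * n_sync
    line += [volts_to_dac(BLANK_V)] * n_back
    for i in range(n_active):
        y = luma[i * len(luma) // n_active]  # nearest-neighbour stretch
        line.append(volts_to_dac(BLANK_V + y * (WHITE_V - BLANK_V)))
    line += [volts_to_dac(BLANK_V)] * n_front
    return line


# Half black, half white line at a hypothetical 10 MHz sample rate.
scanline = build_scanline([0.0, 1.0], sample_rate_mhz=10)
```

A real implementation would also need vertical sync and a way to stream the samples out at a steady rate; this only shows how sync, blanking and picture share one analog level.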

In addition, LVGL support is integrated: this is an open-source library that offers a cross-platform way to build graphical UIs for embedded platforms. Using this combination, any ESP32 can generate a fully graphical UI on a monochrome or color display to add some extra flair and functionality to an ESP32 project.

Currently, this library does not support color output, but hopefully this will be added in the future. Even so, together with simple VGA output using a DAC, this library provides yet another way to add analog video output to ubiquitous MCUs like the ESP32. Even if these MCUs are not going to be decoding any video formats at a reasonable speed, adding a UI that’s more user-friendly than an HD44780-based display and a few buttons can really elevate the user experience.

That’s really cool! Kudos! 😃 Just a minor complaint here: Composite really is CVBS (Color VBS) rather than VBS (monochrome video)!

S-Video (which isn’t considered composite), by comparison, carries color information (chroma) and a pure VBS signal (luma = intensity; mono video with sync/blanking) on separate pins.

PAL/NTSC/SECAM are color standards based on the original b/w standards. Sure, we can use their specs for generating a “monochrome NTSC/PAL/SECAM” signal that works on mono/color monitors and TVs. But to be precise, something like RS-170 in the US or CCIR were the true matching monochrome standards.

https://www.epanorama.net/documents/video/rs170.html

A pure monochrome video can be of very high quality and resolution (up to 1000 lines in practice), unlike composite video which people often associate with really bad graphics quality.

For example, France once even had an HD TV broadcast in monochrome with 819 lines.

https://en.wikipedia.org/wiki/Analog_high-definition_television_system
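To put the line counts in perspective, the horizontal scan rate is simply total lines per frame times frames per second. The sketch below works this out for the monochrome systems mentioned here; the frame rates (30 for RS-170, 25 for the European systems) are the nominal figures, and the function names are made up for illustration.

```python
def line_rate_hz(total_lines, frames_per_second):
    """Horizontal scan rate: every line of every frame is drawn once."""
    return total_lines * frames_per_second


def line_period_us(total_lines, frames_per_second):
    """Time available for one scanline, in microseconds."""
    return 1e6 / line_rate_hz(total_lines, frames_per_second)


# Nominal monochrome systems: (total lines, frames per second).
systems = {
    "RS-170 (525 lines)": (525, 30),
    "CCIR (625 lines)": (625, 25),
    "French 819-line": (819, 25),
}

for name, (lines, fps) in systems.items():
    rate = line_rate_hz(lines, fps)
    period = line_period_us(lines, fps)
    print(f"{name}: {rate} Hz, {period:.2f} us per line")
```

The 819-line system has noticeably less time per line than the 625-line one, so the same horizontal detail needs proportionally more bandwidth.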

Or in other words, VBS is/was the RGB of the black and white world. A b/w TV set used VBS natively, too. Its frontend, the RF tuner, merely demodulated the signal and separated audio/video.

The single CRT was pretty much fed with the unaltered VBS signal.

Anyway, no offense. It’s just a little, but important detail I want to mention. I don’t mean to say the article is wrong, whatsoever. It’s a common misunderstanding that people see monochrome video as “composite video”. Which isn’t exactly wrong, even. It’s just that “composite video” has a bad reputation and doesn’t do justice to the otherwise fine monochrome video.

I tried explaining this once and some guy got mad and said, “Quit calling it component, the god damned cables are RGB, call it RGB like everyone else!”

Well, a monochrome composite signal is actually the luma portion of a component video signal, YCbCr style.

It is definitely not correct to say RGB in this particular context.

TV sets have RGB inputs, component inputs, or both.

A component input will accept this signal on the “Y” channel and the quality will be great!

An RGB input will not accept this signal.

In addition to component inputs being able to handle a monochrome composite signal, component inputs do allow for some easy control over things like saturation. Simply change the other two signal levels. Making them different from each other will work a lot like a tint control, too.
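The saturation trick is easy to see in numbers. Below is a minimal sketch that uses the BT.601 luma weights to split RGB into Y, Pb and Pr, then scales only the two color-difference values. The function names are hypothetical, and the choice of BT.601 coefficients is an assumption about which flavour of component video is in play.

```python
def rgb_to_ypbpr(r, g, b):
    """Split RGB (each 0..1) into luma and two color-difference signals (BT.601)."""
    y = 0.299 * r + 0.587 * g + 0.114 * b
    pb = 0.5 * (b - y) / (1 - 0.114)
    pr = 0.5 * (r - y) / (1 - 0.299)
    return y, pb, pr


def ypbpr_to_rgb(y, pb, pr):
    """Inverse of rgb_to_ypbpr."""
    r = y + 1.402 * pr
    b = y + 1.772 * pb
    g = (y - 0.299 * r - 0.114 * b) / 0.587
    return r, g, b


def adjust_saturation(y, pb, pr, saturation):
    """Scale only the color-difference levels; luma is left untouched."""
    return y, pb * saturation, pr * saturation


# Pure red with the color-difference signals turned all the way down.
y, pb, pr = rgb_to_ypbpr(1.0, 0.0, 0.0)
grey = ypbpr_to_rgb(*adjust_saturation(y, pb, pr, 0.0))
```

With the saturation at zero only Y remains, which is exactly the monochrome signal a “Y” input would accept on its own.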

I am not knocking RGB here. It’s great and I use it.

Just saying component is a thing and it isn’t RGB.

If you want to be *super* pedantic, SVideo, YPbPr, and RGB are all technically component video formats, since they separate the signal into its constituent components. The first two each have a “Y” (Luminance) component, but the latter does not. This is also why composite video is called that: it’s a composite made of the separate components (Luma and Chroma).

Although I may be wrong, last time I did some reading on this it appeared possible to move the video output to pin 2, which would mean one could make use of the esp-cam board’s SD card slot.

^ but you had to use esp-idf, and I am a lot better at researching things than doing things (and am also lazy).

^ I mention this as the only time I had seen it done before was with Arduino, which didn’t allow the pin to be moved. This project does use esp-idf, I read (after the fact), so just ignore me :-)

“Currently, this library does not support color output.” Perhaps I misunderstand, but according to the GitHub page, LVGL mode supports (apart from monochrome):

RGB332 – color mode 1 byte per pixel

RGB565 – color mode 2 bytes per pixel
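For a sense of what those bytes-per-pixel figures mean for RAM, here is a rough sketch that computes frame buffer size at 1, 8 and 16 bits per pixel and packs an 8-bit-per-channel color into RGB565. The 320×240 resolution is a hypothetical example rather than a mode confirmed for the library, and rows are assumed to be padded to whole bytes.

```python
def framebuffer_bytes(width, height, bits_per_pixel):
    """Bytes needed for one frame, assuming each row is padded to a whole byte."""
    bytes_per_line = (width * bits_per_pixel + 7) // 8
    return bytes_per_line * height


def pack_rgb565(r, g, b):
    """Pack 8-bit R, G, B into 16 bits: 5 bits red, 6 bits green, 5 bits blue."""
    return ((r >> 3) << 11) | ((g >> 2) << 5) | (b >> 3)


# Hypothetical 320x240 frame at the three pixel depths.
for bpp in (1, 8, 16):
    print(bpp, "bpp:", framebuffer_bytes(320, 240, bpp), "bytes")
```

At 16 bits per pixel even this modest resolution takes 150 KiB, which is a large slice of an ESP32’s internal RAM.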

Yeah, but second sentence in readme: “For now, color is not supported.”

I have a couple of thoughts.

Those monochrome displays for, say, an Apple 2, or for security cams, and old amber screens will often display NTSC or PAL and will resolve 600 to 800 lines horizontally and, when interlaced, 480-plus lines vertically.

640×480 will work great on most any display.

A super easy way to get color would be to simply add a color burst and make sure the phase relationship between it, and the porch signal leading into the active area is constant, and one can do artifact color Apple 2 style.

640 pixels at 1bpp will yield a 160 pixel, 16 color display. Vertical resolution depends on what one does with the frame buffer info.

2bpp will yield a 160 pixel x 256 color display, given one can get black, two greys and a white. This looks a lot better than you might think.
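The arithmetic behind those numbers can be sketched as follows, assuming four pixels per colorburst cycle (which is what 640 pixels turning into 160 color pixels implies). Each group of four pixels becomes one color, and the number of distinct groups is the number of colors. The function names are made up for illustration.

```python
def artifact_mode(h_pixels, bits_per_pixel, pixels_per_color_cycle=4):
    """Return (color pixels per line, number of distinct colors)."""
    color_pixels = h_pixels // pixels_per_color_cycle
    colors = 2 ** (bits_per_pixel * pixels_per_color_cycle)
    return color_pixels, colors


def color_indices(pixels, bits_per_pixel, pixels_per_color_cycle=4):
    """Group raw pixel values into one pattern index per color pixel."""
    indices = []
    last_start = len(pixels) - pixels_per_color_cycle
    for start in range(0, last_start + 1, pixels_per_color_cycle):
        index = 0
        for value in pixels[start:start + pixels_per_color_cycle]:
            index = (index << bits_per_pixel) | value
        indices.append(index)
    return indices


print(artifact_mode(640, 1))  # 1bpp case
print(artifact_mode(640, 2))  # 2bpp case
```

Which hue each pattern index actually produces depends on the phase relationship to the colorburst, so this only counts the patterns; it does not predict the colors.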

As long as all the scanlines fall on an even colorburst cycle, and the phase between it and the pixel clock is constant, the colors will be stable.

If the system can generate 4 luma levels, the colorburst can be done in software too. And that method takes care of the phase issues. Just make sure the pixel clock is fast enough to yield 2 samples per colorburst wave period and encode the burst as pixels.
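Encoding the burst as pixels can be sketched like this. The NTSC subcarrier frequency (315/88 MHz) is the standard value; the burst length of nine cycles is the nominal figure, and the two level values are placeholders for whichever luma levels the hardware offers. All names are hypothetical.

```python
NTSC_SUBCARRIER_HZ = 315_000_000 / 88  # about 3.579545 MHz


def min_pixel_clock_hz(samples_per_cycle=2):
    """Slowest pixel clock that still gives the requested samples per burst cycle."""
    return NTSC_SUBCARRIER_HZ * samples_per_cycle


def burst_as_pixels(cycles, samples_per_cycle, low_level, high_level):
    """Return the burst as a square wave of pixel values, high half first."""
    if samples_per_cycle < 2:
        raise ValueError("need at least 2 samples per colorburst cycle")
    high_samples = samples_per_cycle // 2
    one_cycle = [high_level] * high_samples
    one_cycle += [low_level] * (samples_per_cycle - high_samples)
    return one_cycle * cycles


# Nine cycles at two samples each, using placeholder luma levels 1 and 2.
burst = burst_as_pixels(cycles=9, samples_per_cycle=2, low_level=1, high_level=2)
```

Because the burst comes out of the same pixel clock as the picture, its phase relative to the pixels is fixed by construction, which is the point made above.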

Here is an example done with Propeller. This is a software color burst, and artifact color as described above.

https://youtu.be/-gyO2lRXLyg

Sounds are done with software synthesis and info about all that can be had in the Parallax forums.

There are reasons for such compatibility among displays.

The first and most obvious is that they are effectively all using the same CRT units. Any actual differences are with respect to the drive circuitry and the inputs it accepts.

An important difference between a video monitor and a television set is the latter has an analog tuner that demodulates the transmitted signal received by the antenna.

However, a crucial distinction is that Color TV is just a B&W (luminance or intensity) TV signal with an overlaid signal encoding the color data. So a color transmission shows up just fine on a B&W set.

OK.. Anybody got thoughts on how to go the other way? Take a monochrome RS-170 video signal and save it to memory on command?